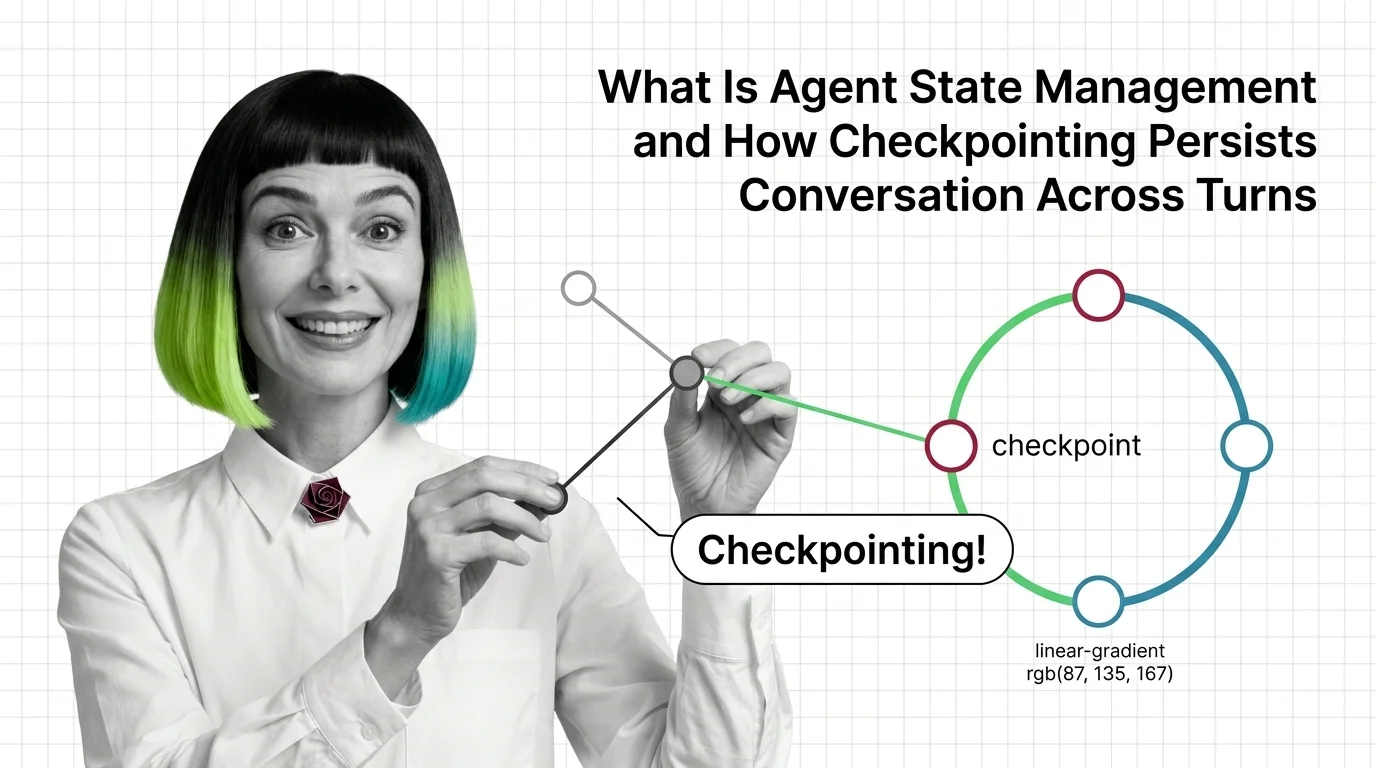

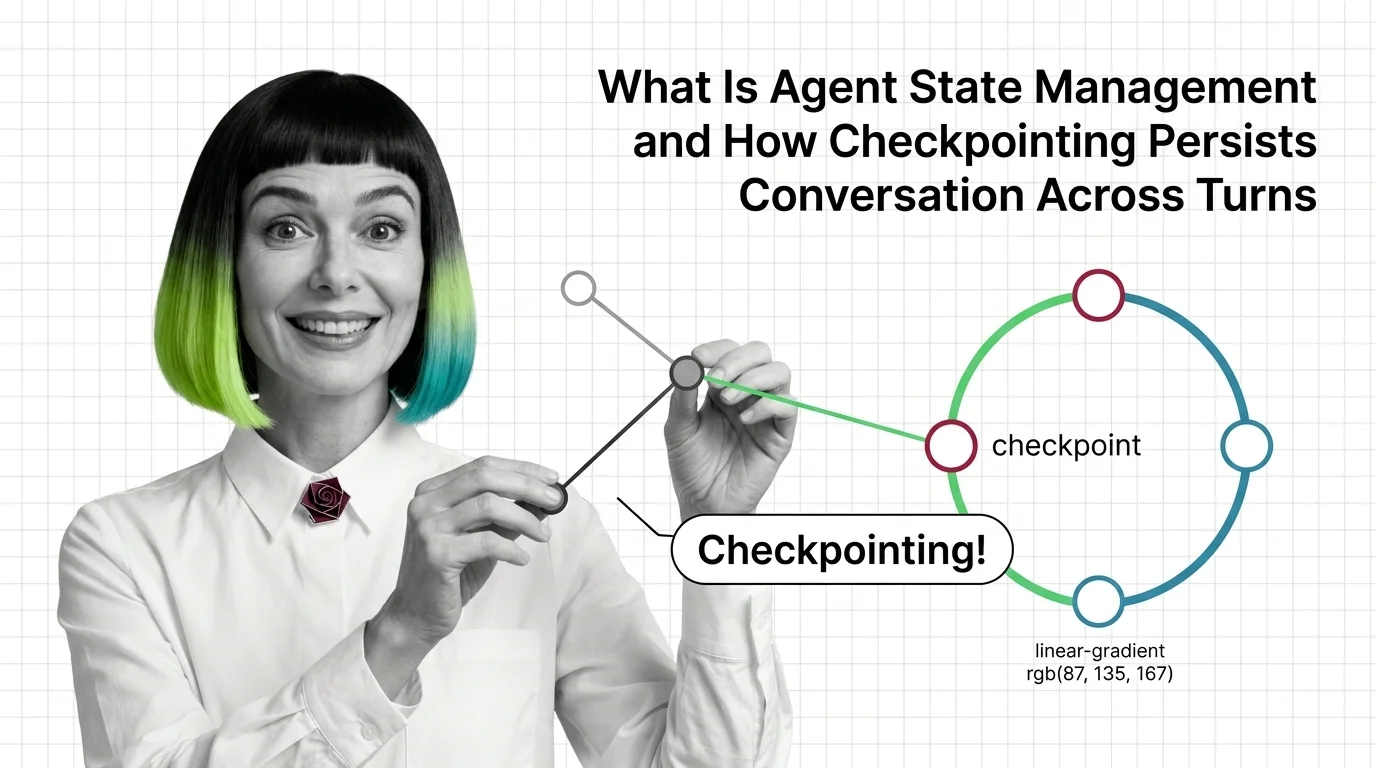

Agent State Management: How Checkpointing Persists Memory Across Turns

Agent state management decides whether your agent remembers. See how LangGraph checkpointers, threads, and reducers persist conversation across turns.

Agent state management is how an AI agent remembers what it has done, said, and decided across multiple turns or sessions.

It covers checkpointing intermediate steps, threading conversations by user, and persisting variables in a database so a long-running agent can resume after a crash, restart, or human handoff without losing context.
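To make the three ideas concrete, here is a minimal sketch using only Python's standard library. It is not LangGraph's API: `SqliteCheckpointStore`, `append_messages`, and the `checkpoints` table are hypothetical names chosen for illustration. The sketch assumes state is a JSON-serializable dict, that each conversation is keyed by a `thread_id`, and that a reducer decides how an update merges into the saved state.

```python
import json
import sqlite3
from typing import Any, Callable, Dict, Tuple


def append_messages(old: list, new: list) -> list:
    """Hypothetical reducer: append new messages instead of overwriting."""
    return old + new


# Keys without a reducer are simply overwritten by the latest update.
REDUCERS: Dict[str, Callable[[Any, Any], Any]] = {"messages": append_messages}


class SqliteCheckpointStore:
    """Illustrative checkpoint store: one row per (thread_id, step)."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "thread_id TEXT, step INTEGER, state TEXT, "
            "PRIMARY KEY (thread_id, step))"
        )

    def load(self, thread_id: str) -> Tuple[int, Dict[str, Any]]:
        """Return (step, state) of the latest checkpoint for a thread."""
        row = self.conn.execute(
            "SELECT step, state FROM checkpoints "
            "WHERE thread_id = ? ORDER BY step DESC LIMIT 1",
            (thread_id,),
        ).fetchone()
        if row is None:
            return 0, {}  # unknown thread: start from empty state
        return row[0], json.loads(row[1])

    def save(self, thread_id: str, update: Dict[str, Any]) -> Dict[str, Any]:
        """Merge an update into the latest state and write a new checkpoint."""
        step, state = self.load(thread_id)
        for key, value in update.items():
            reducer = REDUCERS.get(key)
            state[key] = reducer(state.get(key, []), value) if reducer else value
        self.conn.execute(
            "INSERT INTO checkpoints VALUES (?, ?, ?)",
            (thread_id, step + 1, json.dumps(state)),
        )
        self.conn.commit()
        return state


store = SqliteCheckpointStore()  # pass a file path to survive restarts
store.save("user-1", {"messages": ["hi"], "stage": "greeting"})
store.save("user-1", {"messages": ["hello"], "stage": "chat"})
step, state = store.load("user-1")  # resume from the latest snapshot
```

Because every step is written as its own row, a process that reopens the same database file can call `load` with the same `thread_id` and continue from the last saved snapshot. A production checkpointer would also need to handle concurrent writers and schema changes, which this sketch leaves out.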

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

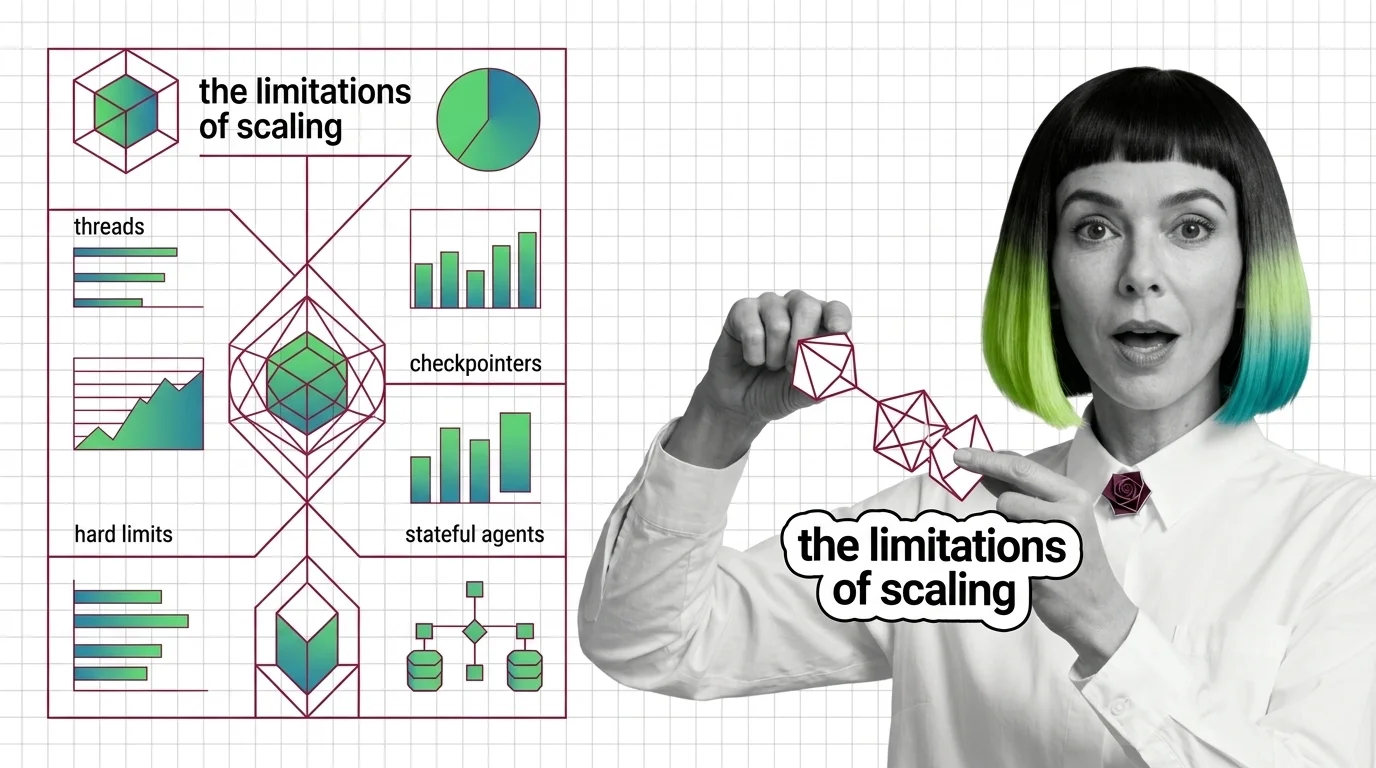

Agent state is not memory; it is plumbing that replays snapshots between steps. MONA explains threads, checkpointers, and where stateful agents break.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

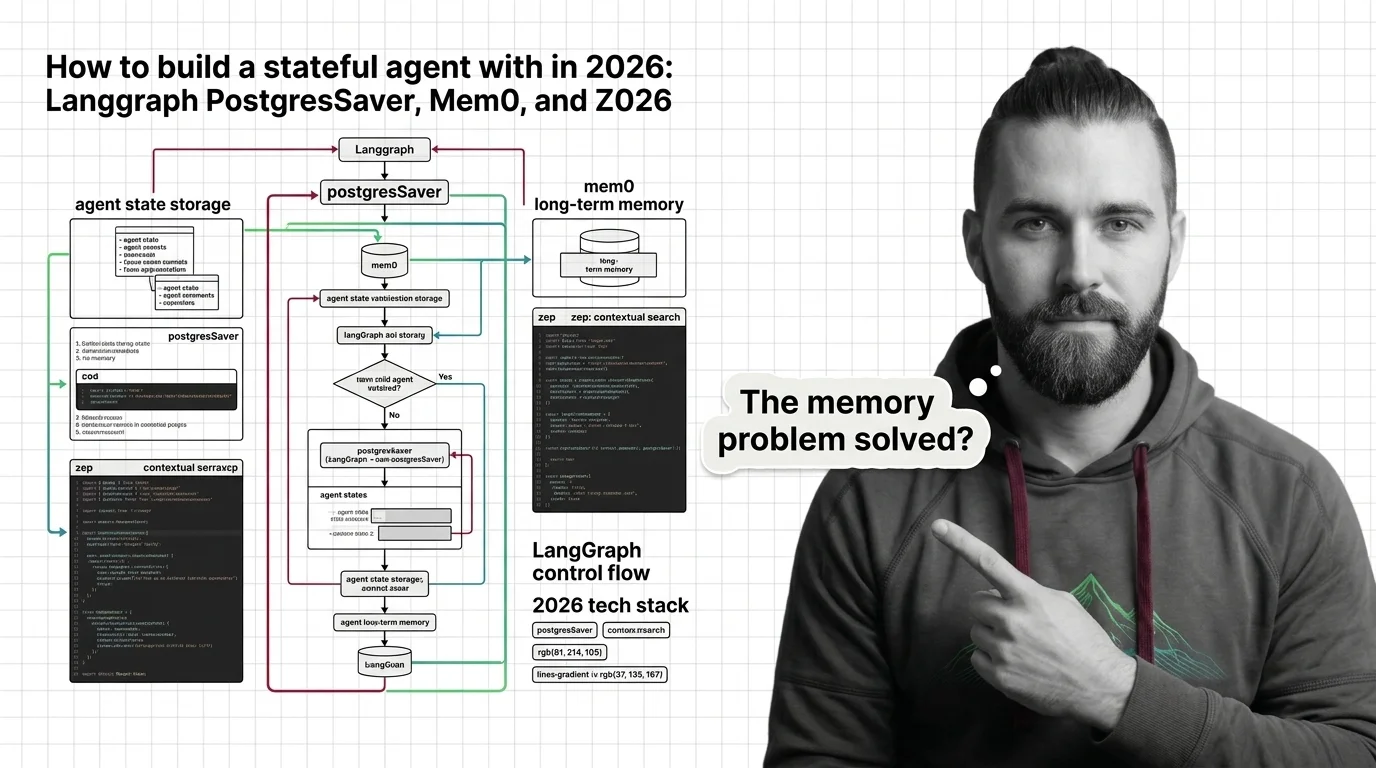

Stateful agents need three storage layers, not one. Wire LangGraph, Mem0, and Zep into an agent that survives restarts and remembers users.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated May 2026

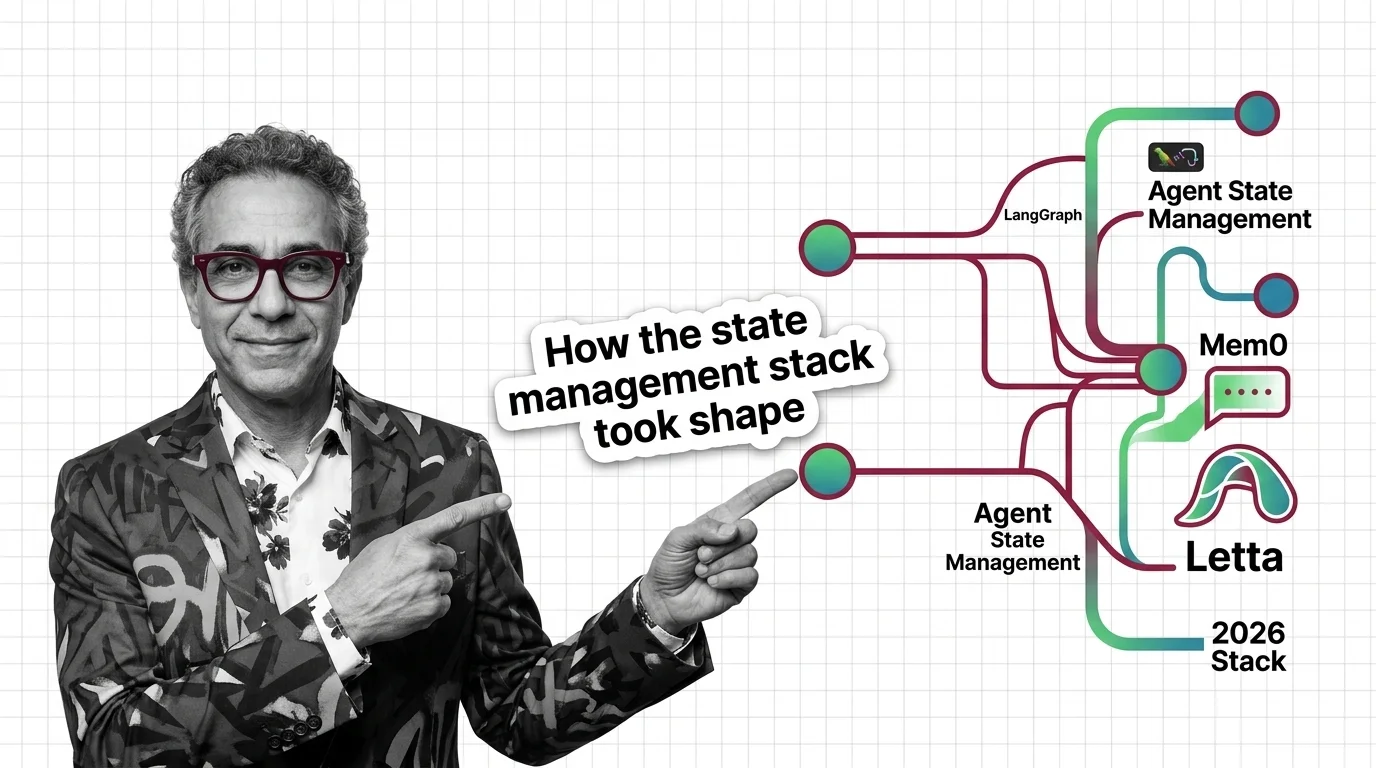

In 2026, agent state management split into two layers: LangGraph checkpointing for thread state, and Mem0 or Letta for cross-session memory. Here's the new stack.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

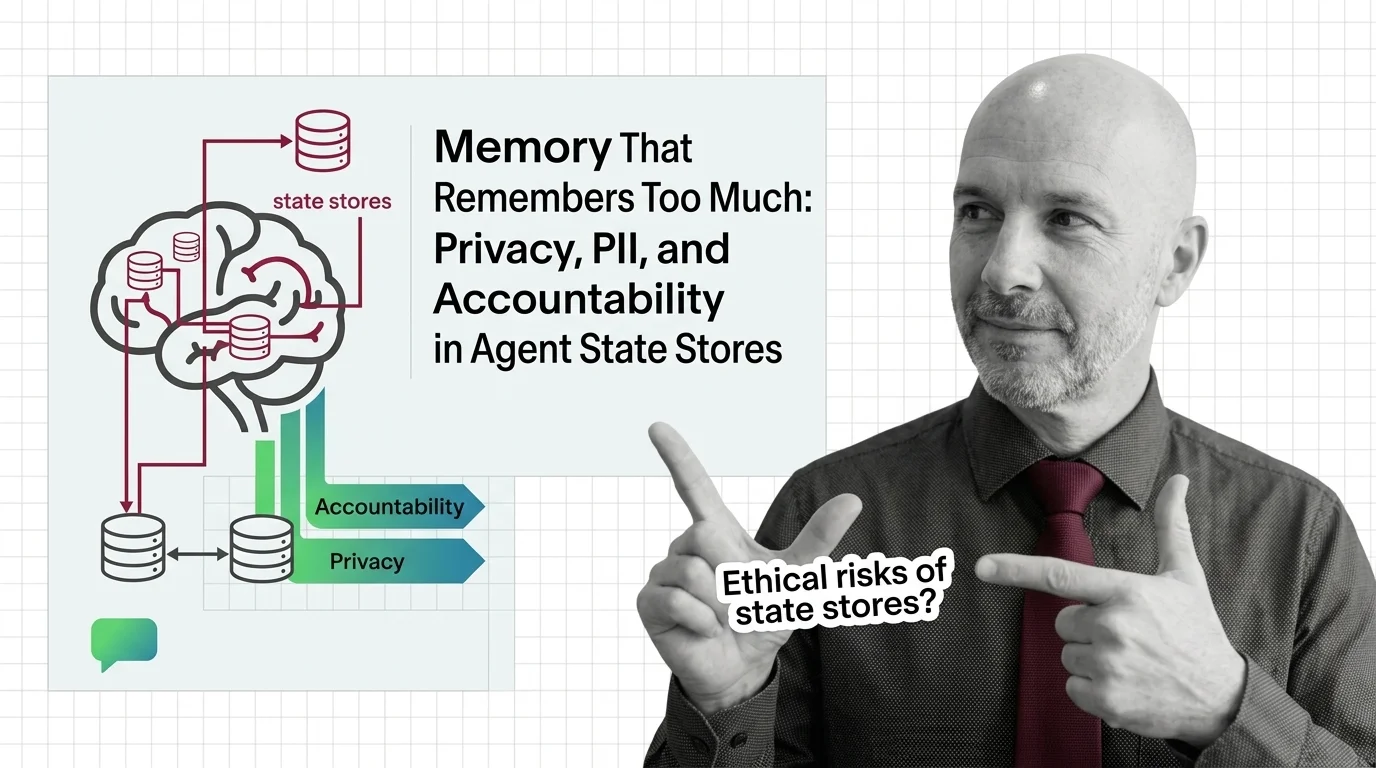

Persistent agent memory turns interactions into records. As courts, regulators, and red teams collide, accountability becomes the question we keep avoiding.