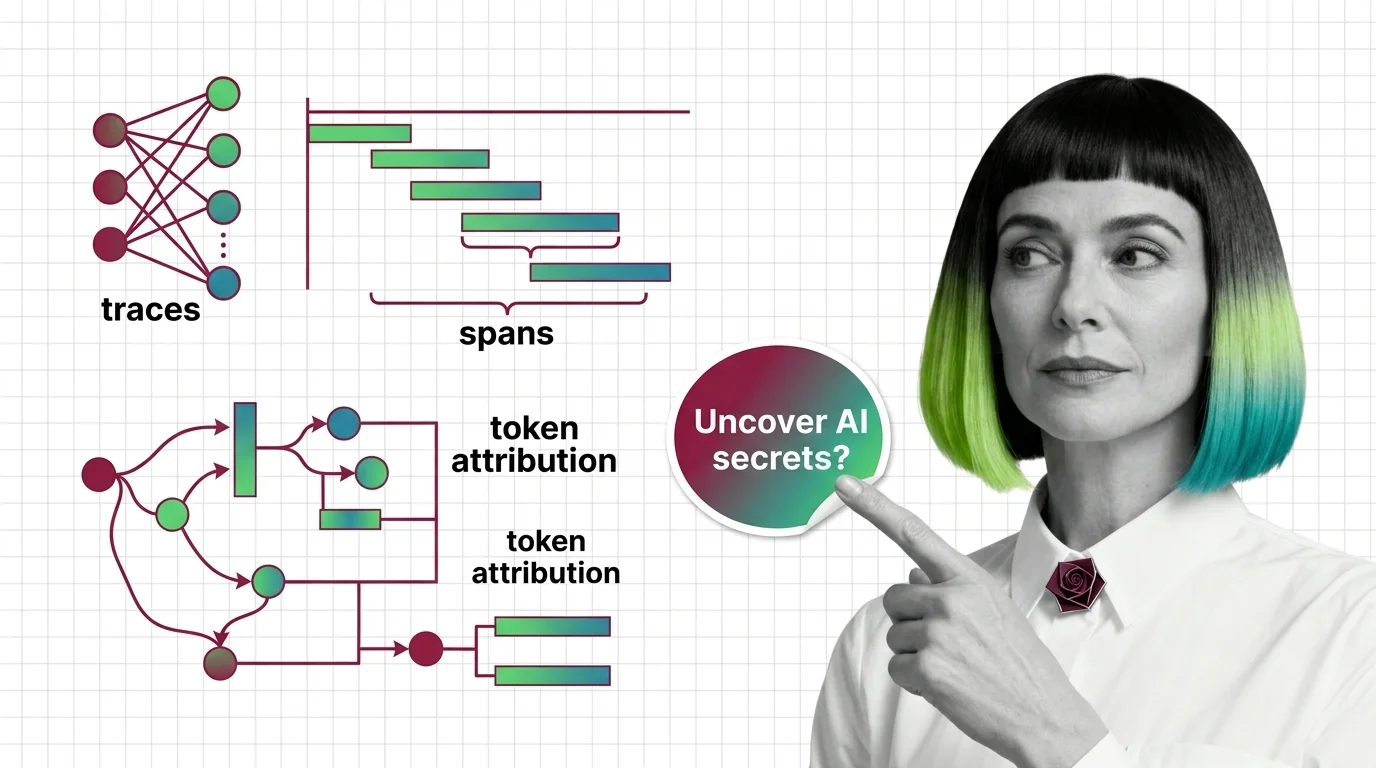

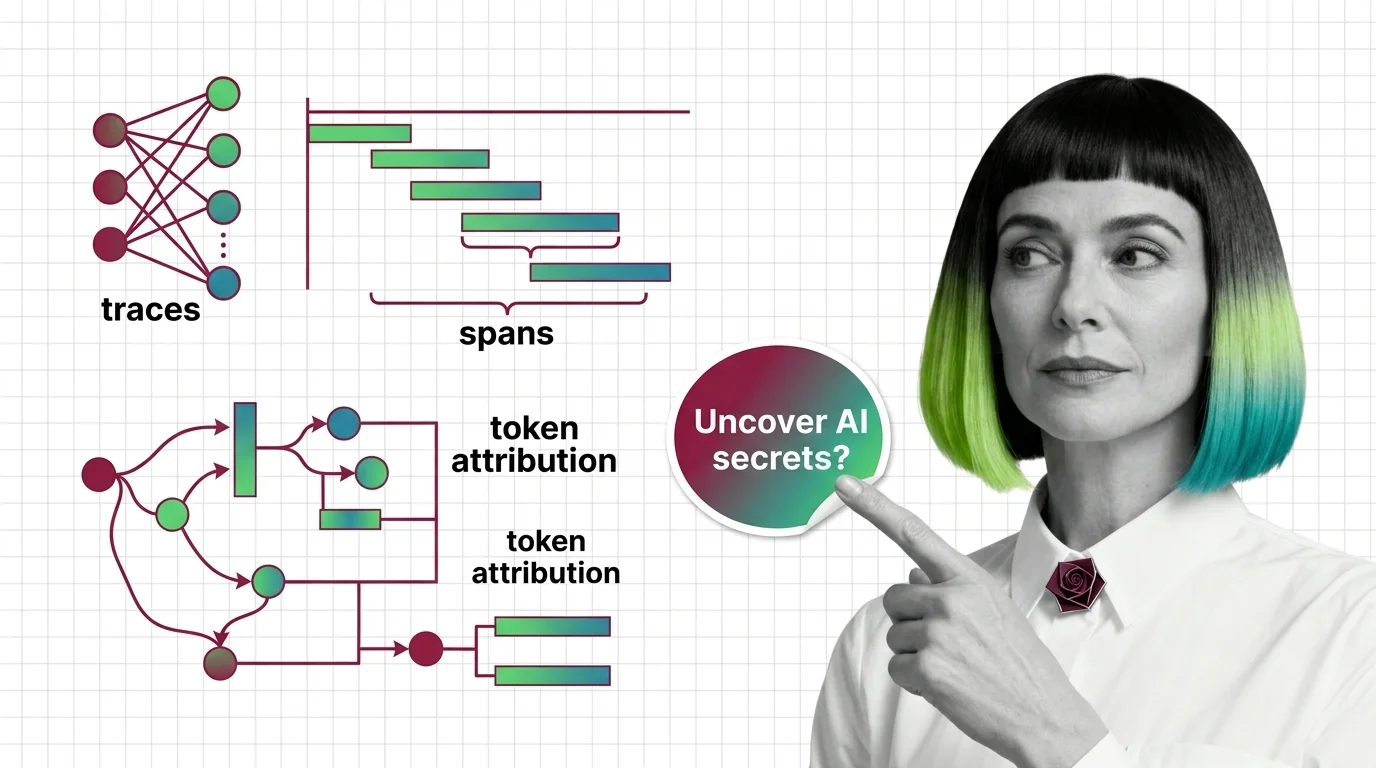

What Is Agent Observability? Traces, Spans, and Token Attribution

Agent observability records every step an AI agent takes. Learn how traces, spans, and token attribution reveal what your agent actually did at runtime.

Agent observability is the practice of tracing, logging, and monitoring AI agent systems so engineers can see what an agent did, why it chose each step, and where it failed.

It captures token usage, latency per step, tool call success rates, and full execution traces, turning opaque multi-step LLM behavior into something teams can debug, measure, and improve in production.
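To make these signals concrete, here is a minimal sketch of a trace made of nested spans, using only the Python standard library. It is an illustration, not any vendor's SDK: the `Trace` and `Span` classes, the `kind` labels, and the step names are all hypothetical, and the token counts are hard-coded stand-ins for what a model response would report.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass
class Span:
    """One step of an agent run (hypothetical schema, for illustration)."""
    name: str
    kind: str                  # "agent", "llm", or "tool" (assumed labels)
    span_id: str
    parent_id: Optional[str]   # None for the root span
    start: float = 0.0
    end: float = 0.0
    input_tokens: int = 0
    output_tokens: int = 0
    ok: bool = True

    @property
    def latency_s(self) -> float:
        return self.end - self.start


@dataclass
class Trace:
    """Collects the spans of one agent run and derives simple metrics."""
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    spans: list[Span] = field(default_factory=list)
    _stack: list[str] = field(default_factory=list)

    @contextmanager
    def span(self, name: str, kind: str) -> Iterator[Span]:
        # The span currently open (if any) becomes the parent of the new one.
        parent = self._stack[-1] if self._stack else None
        s = Span(name, kind, uuid.uuid4().hex, parent, start=time.perf_counter())
        self.spans.append(s)
        self._stack.append(s.span_id)
        try:
            yield s
        except Exception:
            s.ok = False       # record the failure, then let it propagate
            raise
        finally:
            s.end = time.perf_counter()
            self._stack.pop()

    def tokens_by_step(self) -> dict[str, int]:
        # Token attribution: total tokens charged to each LLM step.
        totals: dict[str, int] = {}
        for s in self.spans:
            if s.kind == "llm":
                totals[s.name] = totals.get(s.name, 0) + s.input_tokens + s.output_tokens
        return totals

    def tool_success_rate(self) -> float:
        tools = [s for s in self.spans if s.kind == "tool"]
        return sum(s.ok for s in tools) / len(tools) if tools else 1.0


# Simulated agent run: two LLM steps and two tool calls, one of which fails.
trace = Trace()
with trace.span("agent_run", "agent") as root:
    with trace.span("plan", "llm") as s:
        s.input_tokens, s.output_tokens = 120, 30
    try:
        with trace.span("search", "tool"):
            raise TimeoutError("simulated tool failure")
    except TimeoutError:
        pass                   # the agent recovers and carries on
    with trace.span("lookup", "tool"):
        pass
    with trace.span("answer", "llm") as s:
        s.input_tokens, s.output_tokens = 200, 50

print(trace.tokens_by_step())     # {'plan': 150, 'answer': 250}
print(trace.tool_success_rate())  # 0.5
```

Each span keeps its own latency, token counts, and success flag, and the `parent_id` links let a viewer rebuild the execution tree. A production setup would typically export spans to a tracing backend rather than hold them in memory.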

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

The OpenTelemetry GenAI semantic conventions (semconv) are still in Development status. What you need to know about tracing prerequisites and the hard limits of observing non-deterministic agents.

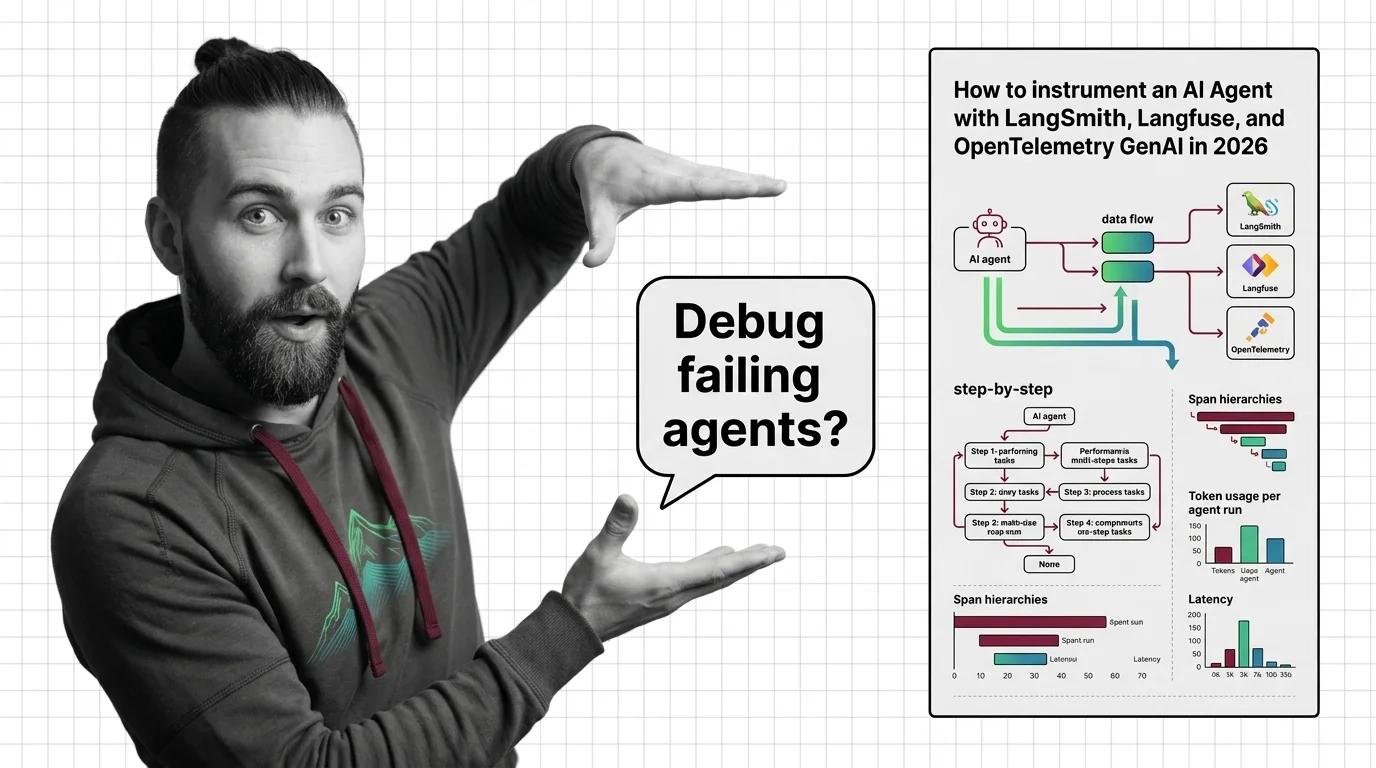

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

Instrument a production AI agent with LangSmith, Langfuse, and OpenTelemetry GenAI semconv in 2026 — span design, SDK choice, debug-readiness.

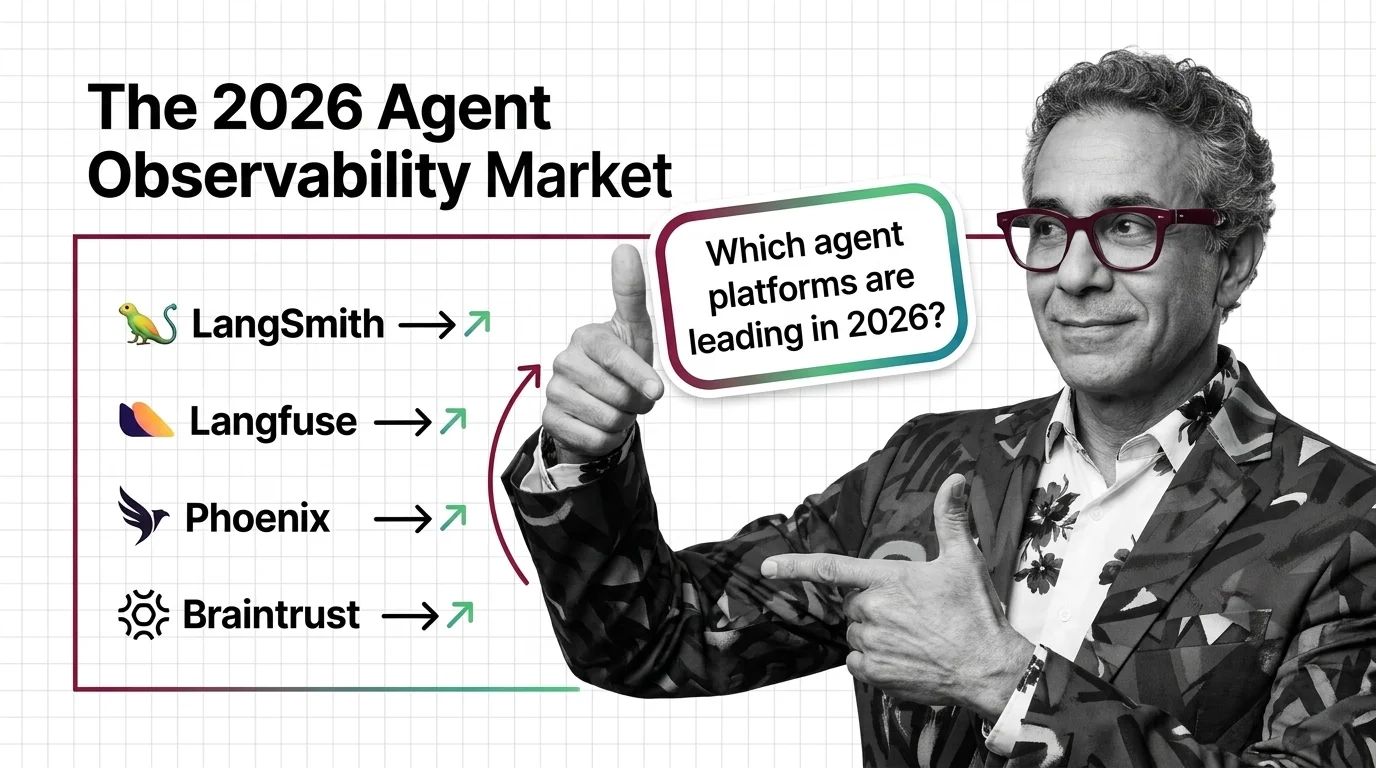

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated May 2026

ClickHouse bought Langfuse. Braintrust raised $80M at an $800M valuation. Datadog folded agents into APM. What the 2026 agent observability split means for AI teams.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Agent observability captures every prompt, tool call, and screenshot. The privacy cost stays invisible — until the panopticon turns visible.