Agent Memory Systems: How LLMs Get Persistent Recall Across Sessions

Agent memory systems give LLMs persistent recall across sessions. Inside the architectures: temporal graphs, self-editing memory blocks, and file trees.

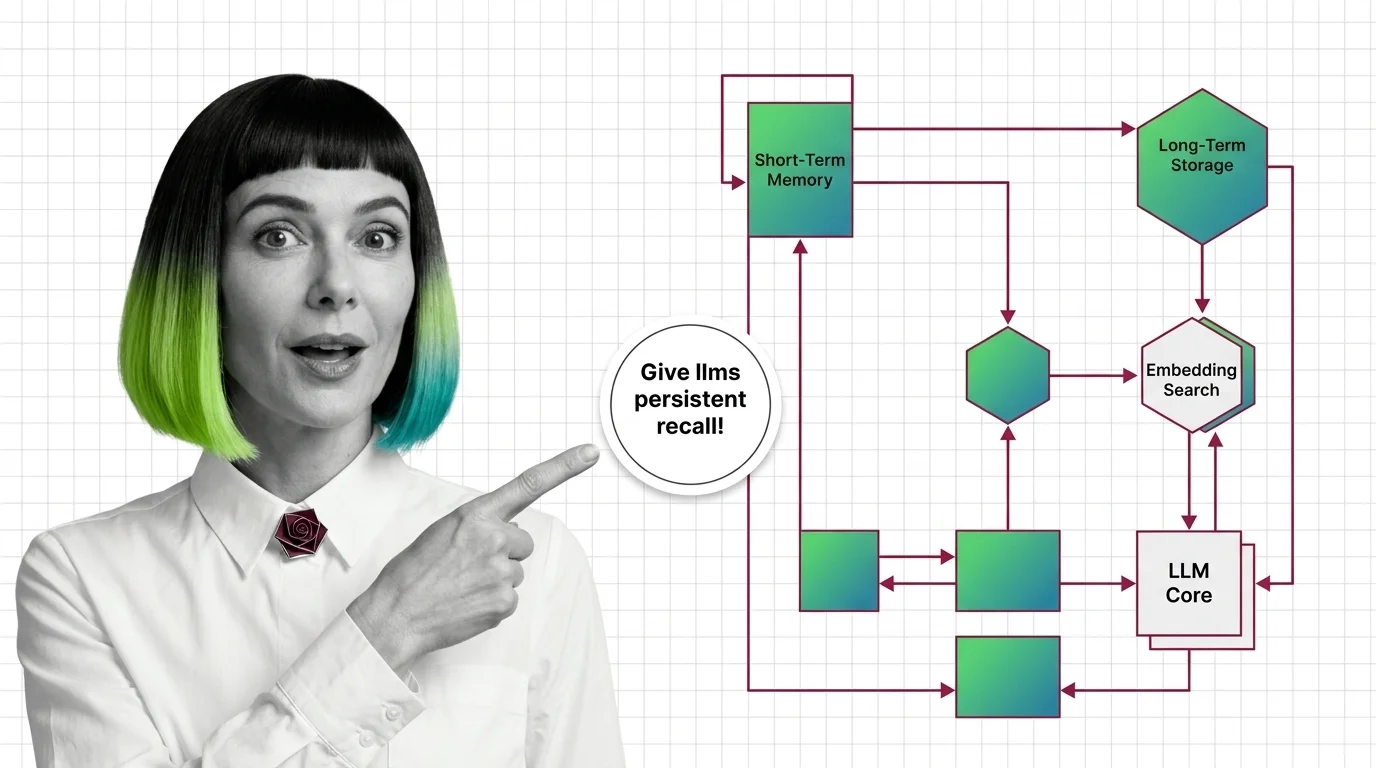

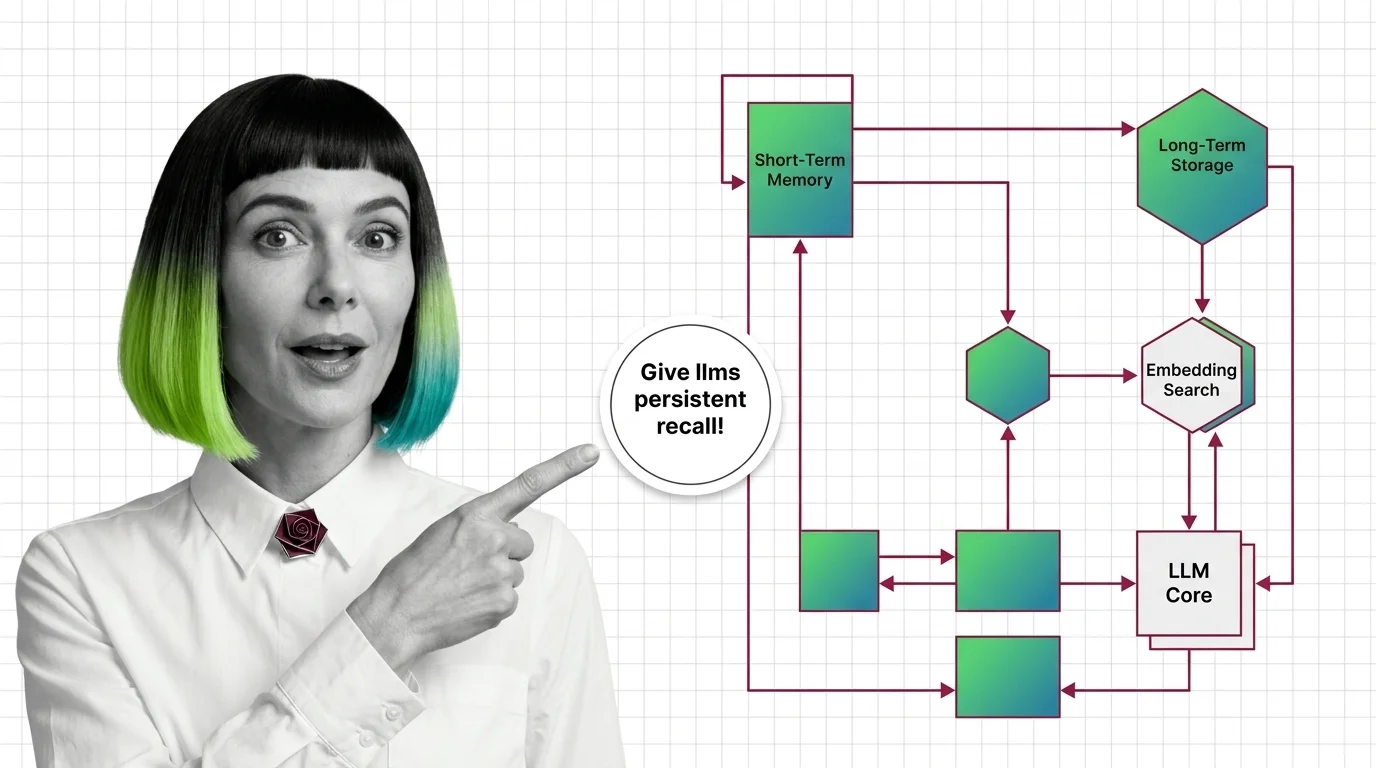

Agent memory systems are the architectures that let AI agents remember things beyond a single prompt.

They combine short-term context windows, summarised conversation history, vector-backed recall of past interactions, and episodic stores that capture what the agent did and why. Together these layers let an agent carry context across tasks, sessions, and users instead of starting from zero on each call.

Also known as: Agent Memory.
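The four layers described above can be sketched in a few lines of Python. This is a minimal illustration, not the design of any particular product: the names `LayeredMemory`, `observe`, `record`, `recall`, and `build_context` are hypothetical, the "summary" is a simple truncation standing in for an LLM-written summary, and recall uses bag-of-words cosine similarity as a stand-in for a real embedding model and vector store.

```python
import math
import re
from collections import Counter, deque
from dataclasses import dataclass, field


def _bow(text: str) -> Counter:
    """Bag-of-words vector; a stand-in for a real embedding (assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class Episode:
    action: str  # what the agent did
    reason: str  # why it did it


@dataclass
class LayeredMemory:
    window_size: int = 4                               # short-term capacity
    short_term: deque = field(default_factory=deque)   # recent messages
    summary: list = field(default_factory=list)        # condensed history
    archive: list = field(default_factory=list)        # searchable past messages
    episodes: list = field(default_factory=list)       # actions and reasons

    def observe(self, message: str) -> None:
        """Store a message; evict the oldest into the summary when full."""
        self.short_term.append(message)
        self.archive.append(message)
        while len(self.short_term) > self.window_size:
            evicted = self.short_term.popleft()
            # Placeholder: a real system would ask an LLM to summarise.
            self.summary.append(evicted[:40])

    def record(self, action: str, reason: str) -> None:
        """Append an entry to the episodic store."""
        self.episodes.append(Episode(action, reason))

    def recall(self, query: str, k: int = 2) -> list:
        """Return up to k archived messages most similar to the query."""
        q = _bow(query)
        scored = [(_cosine(q, _bow(m)), m) for m in self.archive]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [m for _, m in scored[:k]]

    def build_context(self, query: str) -> dict:
        """Assemble what would be placed in the prompt for the next call."""
        return {
            "summary": list(self.summary),
            "recalled": self.recall(query),
            "recent": list(self.short_term),
            "episodes": [(e.action, e.reason) for e in self.episodes],
        }


memory = LayeredMemory(window_size=2)
memory.observe("User prefers dark mode in the editor")
memory.observe("User is deploying a Flask app to AWS")
memory.observe("User asked about database migrations")
memory.record("opened migration docs", "user asked about database migrations")
context = memory.build_context("which editor theme does the user prefer")
```

In this sketch the oldest message falls out of the short-term window but stays reachable through both the summary and similarity-based recall, which is the basic idea behind layering. Persisting `summary`, `archive`, and `episodes` to disk or a database between runs is what would carry them across sessions; that step is omitted here.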

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

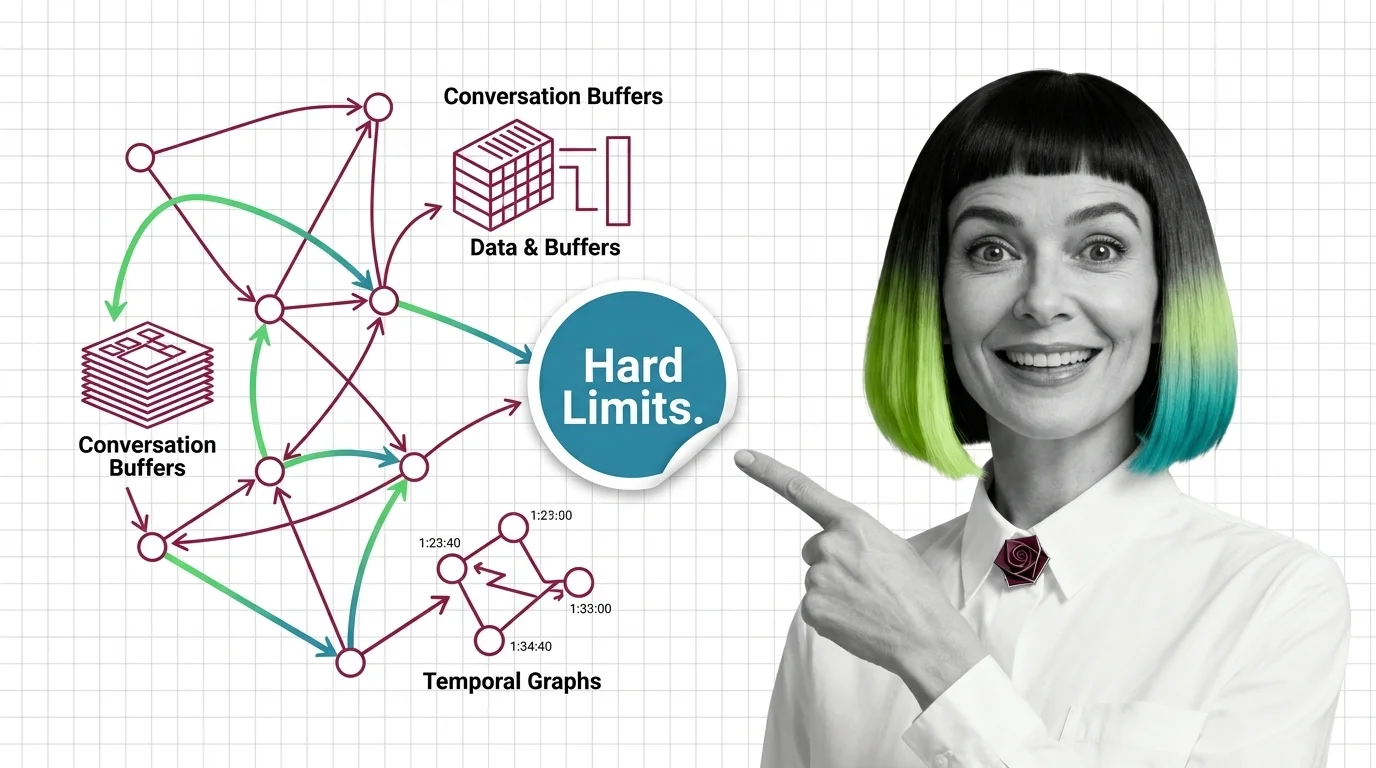

Agent memory isn't a bigger context window. Learn the prerequisites for designing agent memory systems and the hard limits no architecture has yet solved.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

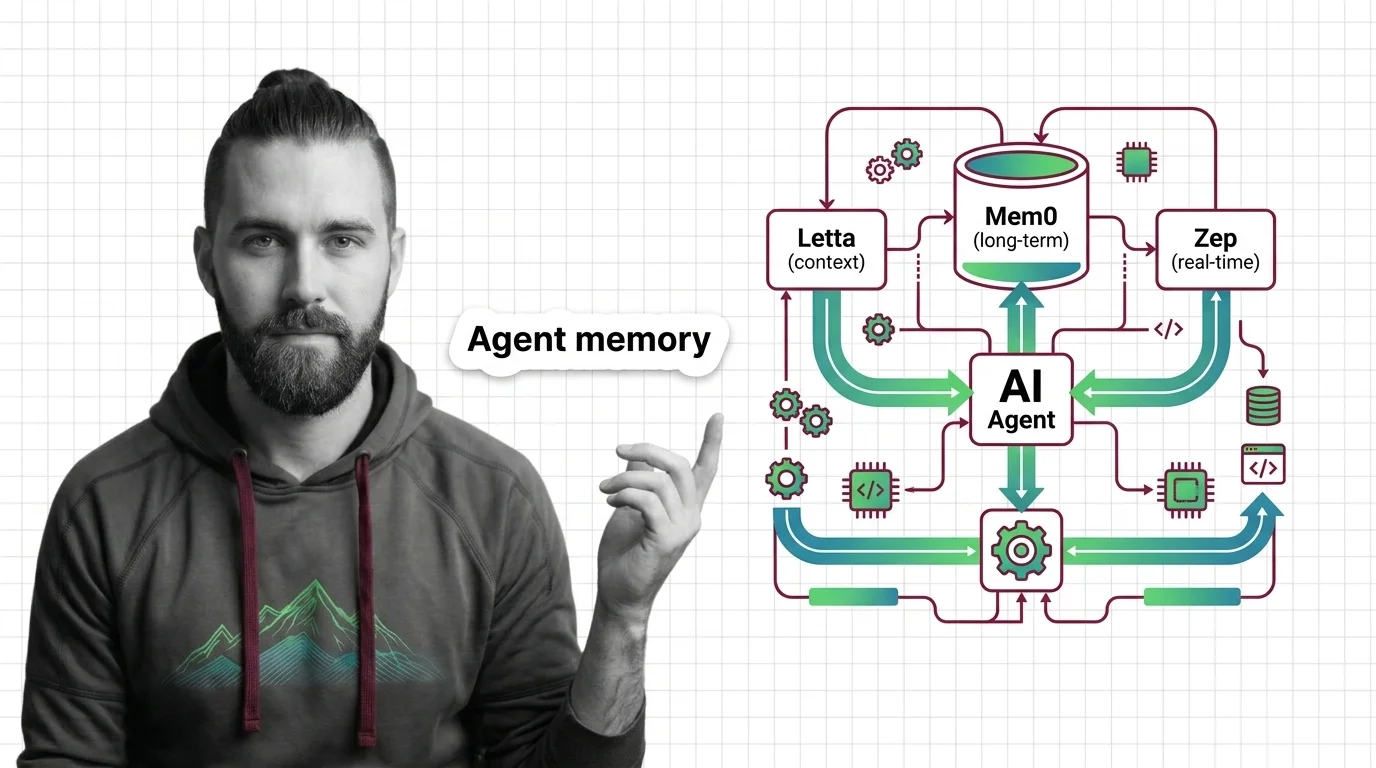

Spec a persistent memory layer for AI agents with Mem0, Letta, or Zep. A four-step decomposition for choosing the stack and wiring it correctly in 2026.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated May 2026

Production agent memory engines like ByteRover and Supermemory cleared 90% on LoCoMo while Mem0 and OpenAI Memory stalled. Here's the 2026 split.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

AI agents with persistent memory promise convenience but build a permanent record of you. The ethical tension between recall, consent, and erasure, examined.