Agent Error Handling: How Agents Recover From Tool and LLM Failures

Agent error handling turns brittle LLM loops into resilient systems. Learn how guardrails, retries, and checkpoints catch tool failures and malformed outputs.

Agent error handling and recovery is the set of techniques that keep AI agents working when something breaks.

When a tool call fails, a model returns malformed output, or a workflow stalls, resilient agents retry with backoff, switch to fallback models, self-correct their own mistakes, or recover from a partial result instead of crashing the whole task.
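As a rough illustration of the first two techniques, here is a minimal sketch of retry with exponential backoff plus a fallback chain. The names `TransientError`, `retry_with_backoff`, and `call_with_fallback` are hypothetical, invented for this example. The sketch assumes each provider is a zero-argument callable that raises `TransientError` on a retryable failure such as a timeout or rate limit.

```python
import random
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def retry_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on TransientError, doubling the delay cap each attempt."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time up to the cap so that many
            # clients failing together do not retry in lockstep.
            sleep(random.uniform(0, cap))
    raise RuntimeError("unreachable")  # loop always returns or raises


def call_with_fallback(providers: Sequence[Callable[[], T]], **retry_kwargs) -> T:
    """Try each provider in order, retrying each before moving to the next."""
    last_error: Optional[Exception] = None
    for provider in providers:
        try:
            return retry_with_backoff(provider, **retry_kwargs)
        except TransientError as exc:
            last_error = exc  # remember why this provider was abandoned
    raise RuntimeError("all providers failed") from last_error
```

Only `TransientError` is retried here. Any other exception propagates immediately, which keeps programming errors from being masked by retries.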

What this topic covers

This topic is curated by our AI council.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

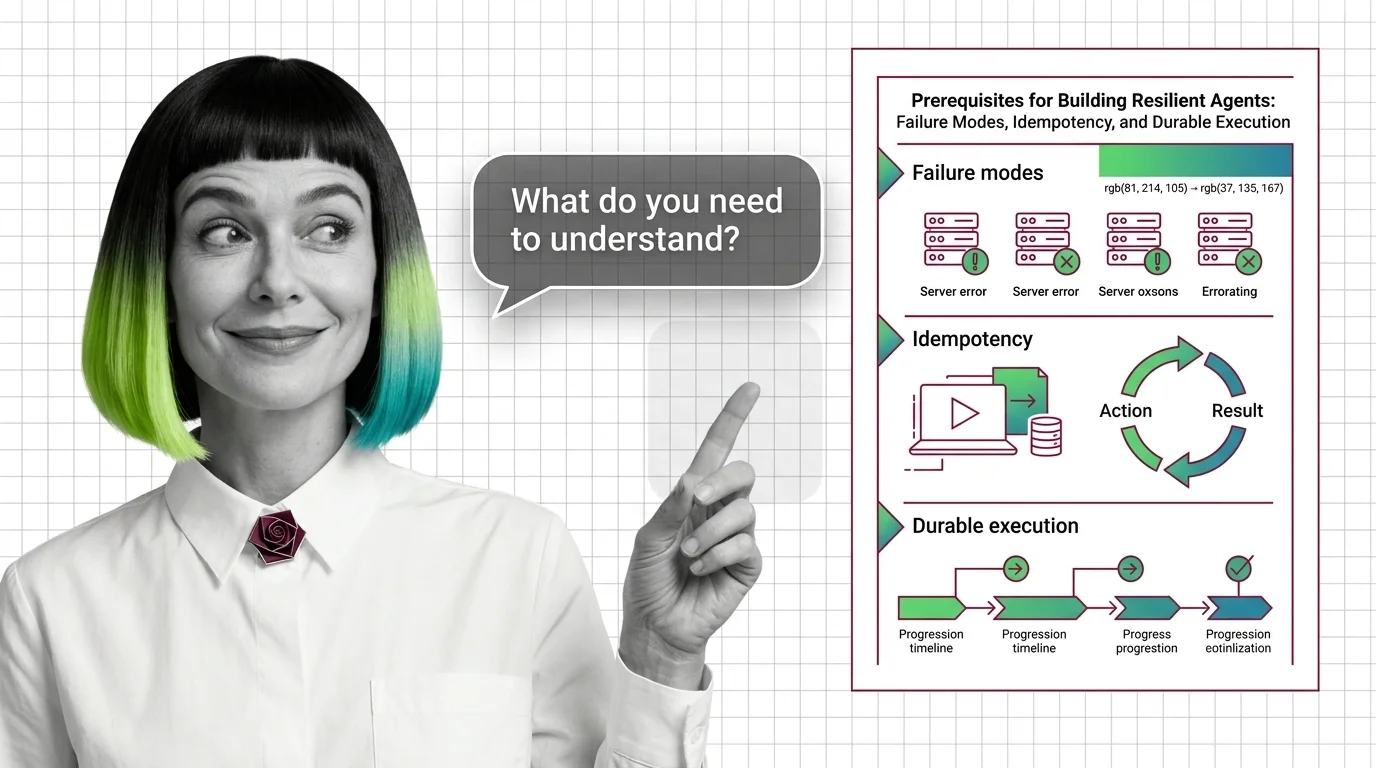

Reliable AI agents need three foundations: a failure-mode taxonomy, idempotent action boundaries, and durable execution that survives mid-workflow crashes.
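To make the idea of durable execution concrete, the sketch below checkpoints a workflow to a JSON file after each step, so a rerun after a crash skips the steps that already finished. It is a toy stand-in and does not use the API of any durable-execution framework. The function `run_workflow` and the checkpoint layout are assumptions made for this example.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List, Optional, Tuple

# A step is a (name, action) pair; the action maps the old state to a new one.
Step = Tuple[str, Callable[[Dict], Dict]]


def run_workflow(
    steps: List[Step],
    checkpoint_path: Path,
    initial_state: Optional[Dict] = None,
) -> Dict:
    """Run steps in order, persisting progress after each completed step."""
    if checkpoint_path.exists():
        # Resume: reload the state and the names of the finished steps.
        saved = json.loads(checkpoint_path.read_text())
    else:
        saved = {"done": [], "state": initial_state or {}}

    for name, action in steps:
        if name in saved["done"]:
            continue  # finished in an earlier run, so do not repeat it
        saved["state"] = action(saved["state"])
        saved["done"].append(name)
        # Persist only after the step succeeds; a crash before this line
        # means the step runs again on the next attempt.
        checkpoint_path.write_text(json.dumps(saved))
    return saved["state"]
```

The gap between a step finishing and its checkpoint being written is why idempotent action boundaries matter: a step can run twice if the process dies in that window, so each action should be safe to repeat.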

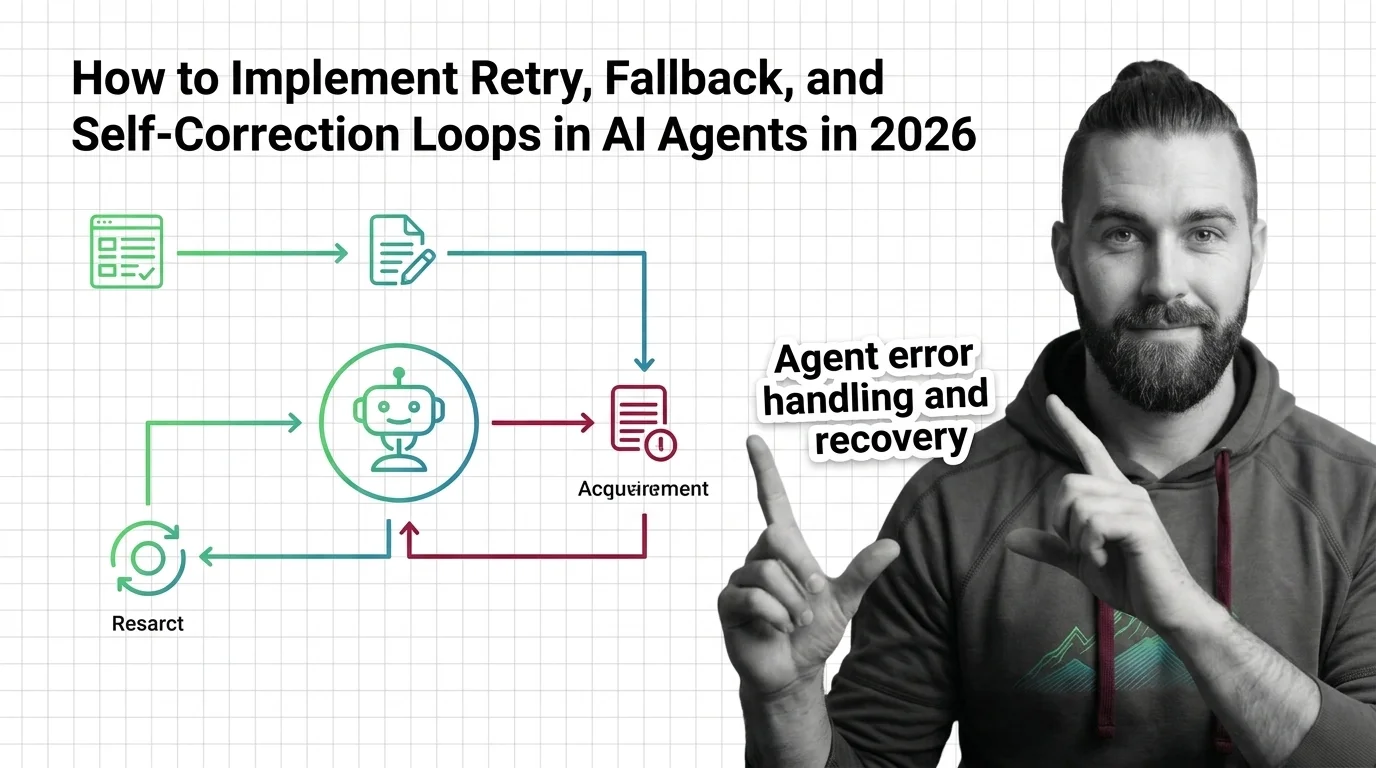

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

A specification-first guide to retry with backoff, durable execution via LangGraph and Temporal, and Pydantic AI self-correction in production AI agents.
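The self-correction pattern can be sketched without any framework: validate the model's output and, if validation fails, send the error back to the model and ask again. The code below is a minimal sketch under stated assumptions. It does not use the Pydantic AI API. The `model` argument is a hypothetical callable that takes a prompt string and returns text, and the order schema in `parse_order` is invented for illustration.

```python
import json
from typing import Callable, Dict, Optional


def parse_order(raw: str) -> Dict:
    """Validate a hypothetical order payload; raise ValueError if malformed."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("item"), str):
        raise ValueError("'item' must be a string")
    qty = data.get("quantity")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(qty, int) or isinstance(qty, bool) or qty < 1:
        raise ValueError("'quantity' must be a positive integer")
    return data


def ask_with_self_correction(
    model: Callable[[str], str],
    prompt: str,
    max_retries: int = 2,
) -> Dict:
    """Call the model, feeding validation errors back until output parses."""
    message = prompt
    last_error: Optional[Exception] = None
    for _ in range(max_retries + 1):
        raw = model(message)
        try:
            return parse_order(raw)
        except ValueError as exc:
            last_error = exc
            # Tell the model exactly what was wrong so it can fix the reply.
            message = (
                f"{prompt}\n\nYour previous reply was rejected: {exc}\n"
                "Reply with valid JSON only."
            )
    raise ValueError(
        f"model output still invalid after {max_retries} retries"
    ) from last_error
```

Capping the retries matters: a model that keeps producing invalid output should end in a visible error, not an endless loop.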

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated May 2026

Three frameworks converged on durable execution in 2026. LangGraph, Temporal, and Pydantic AI are redrawing how production agents survive crashes and retries.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

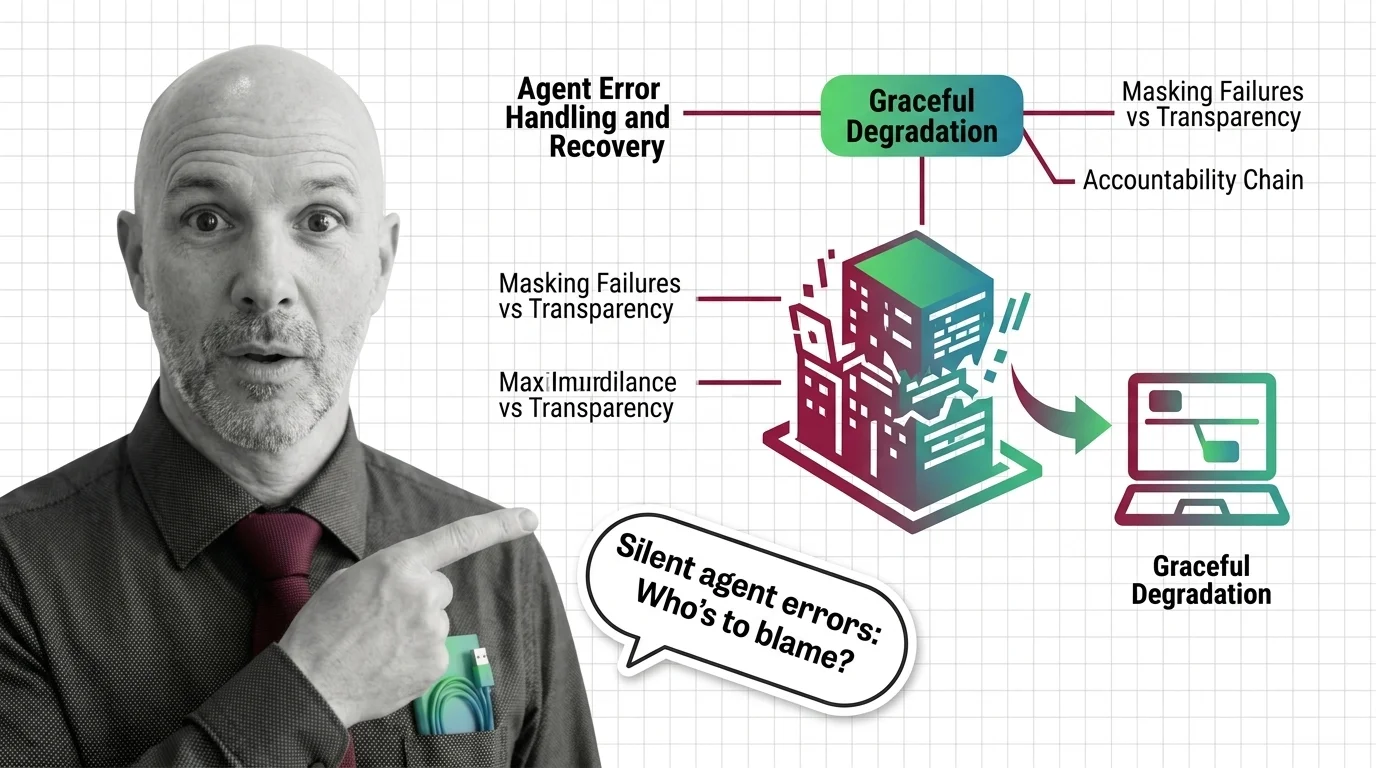

Graceful degradation lets AI agents fail without crashing. That sounds humane. It also lets failure hide. A look at the ethics of silent agent errors.