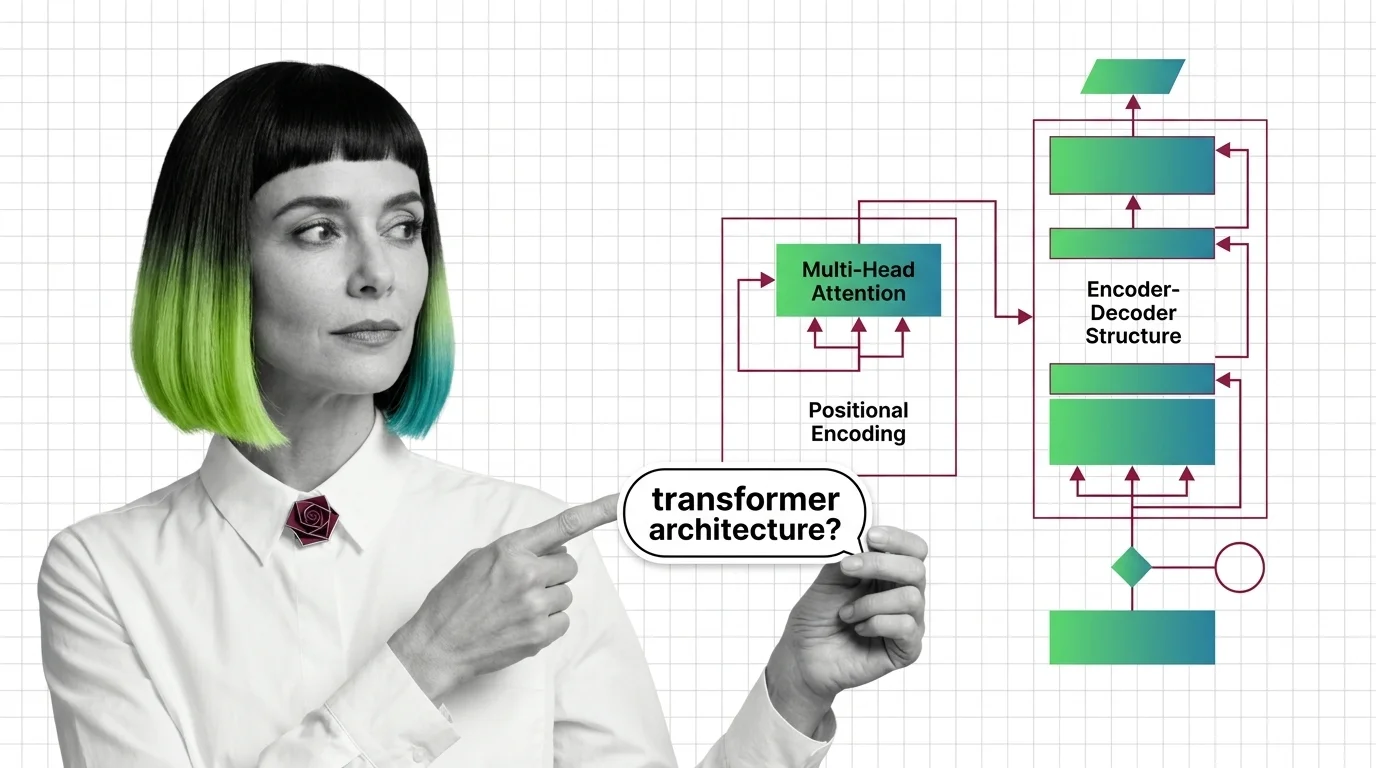

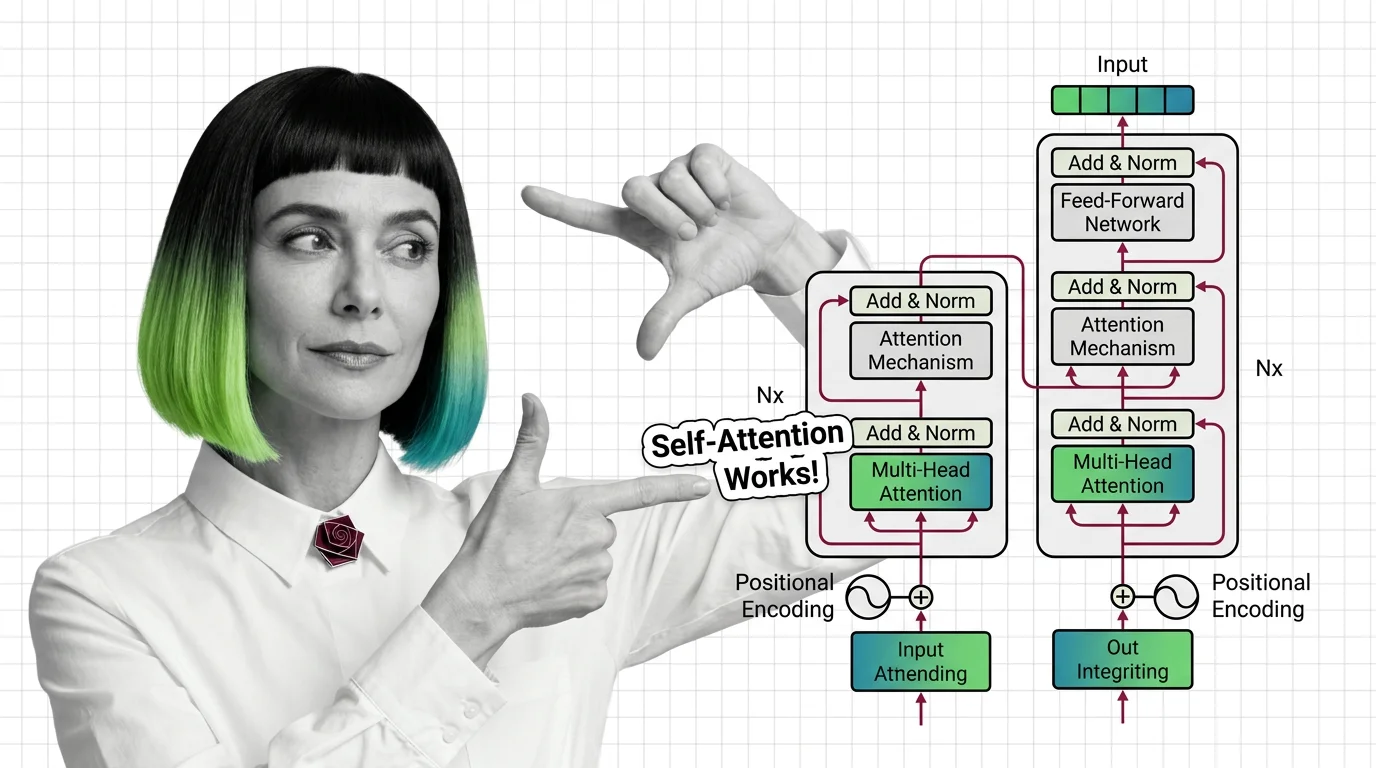

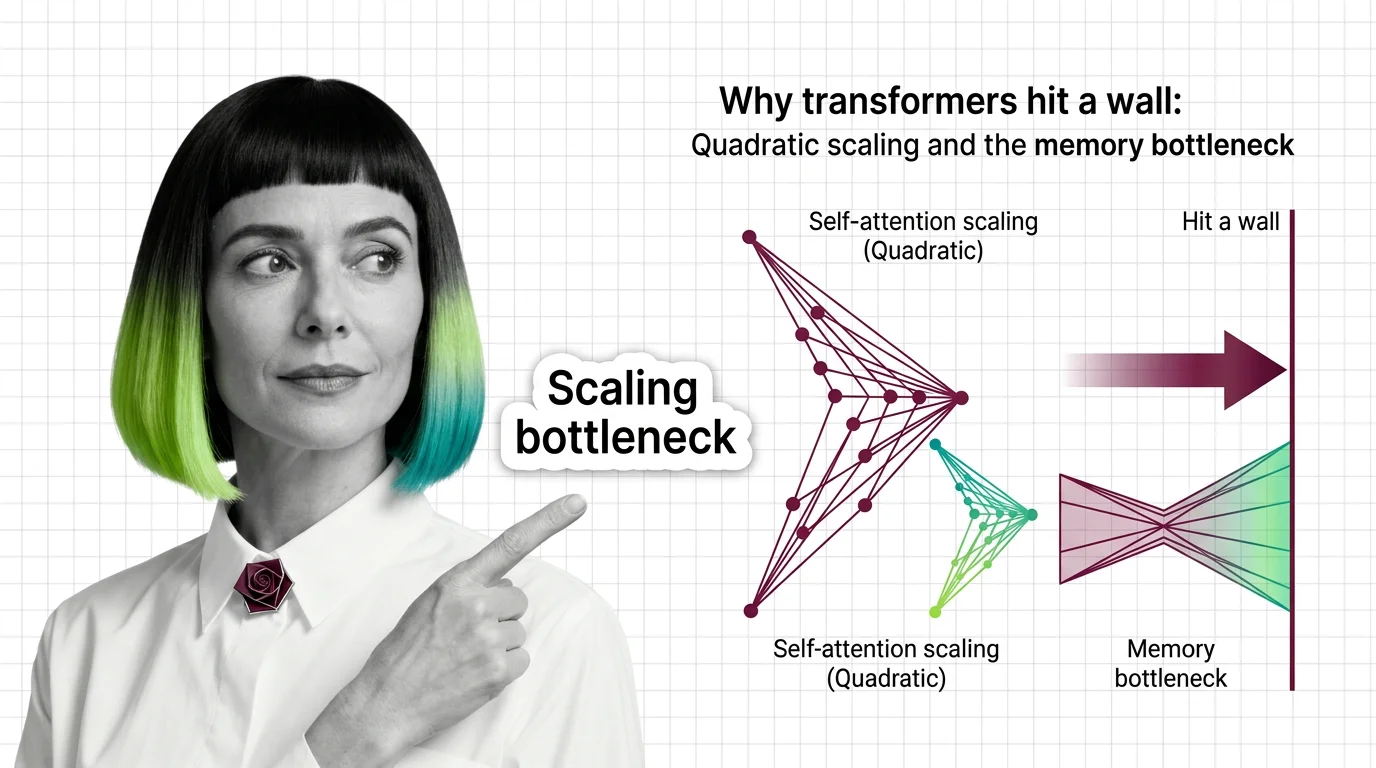

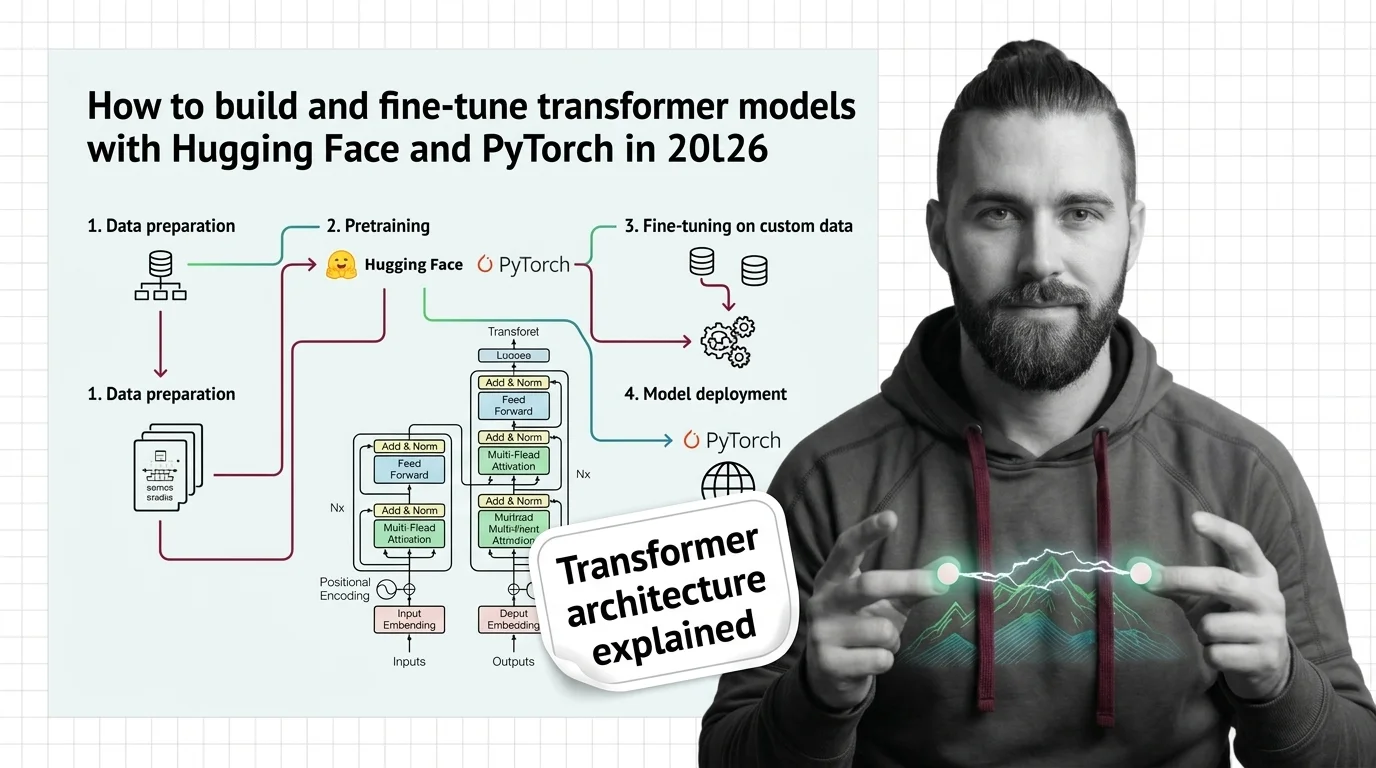

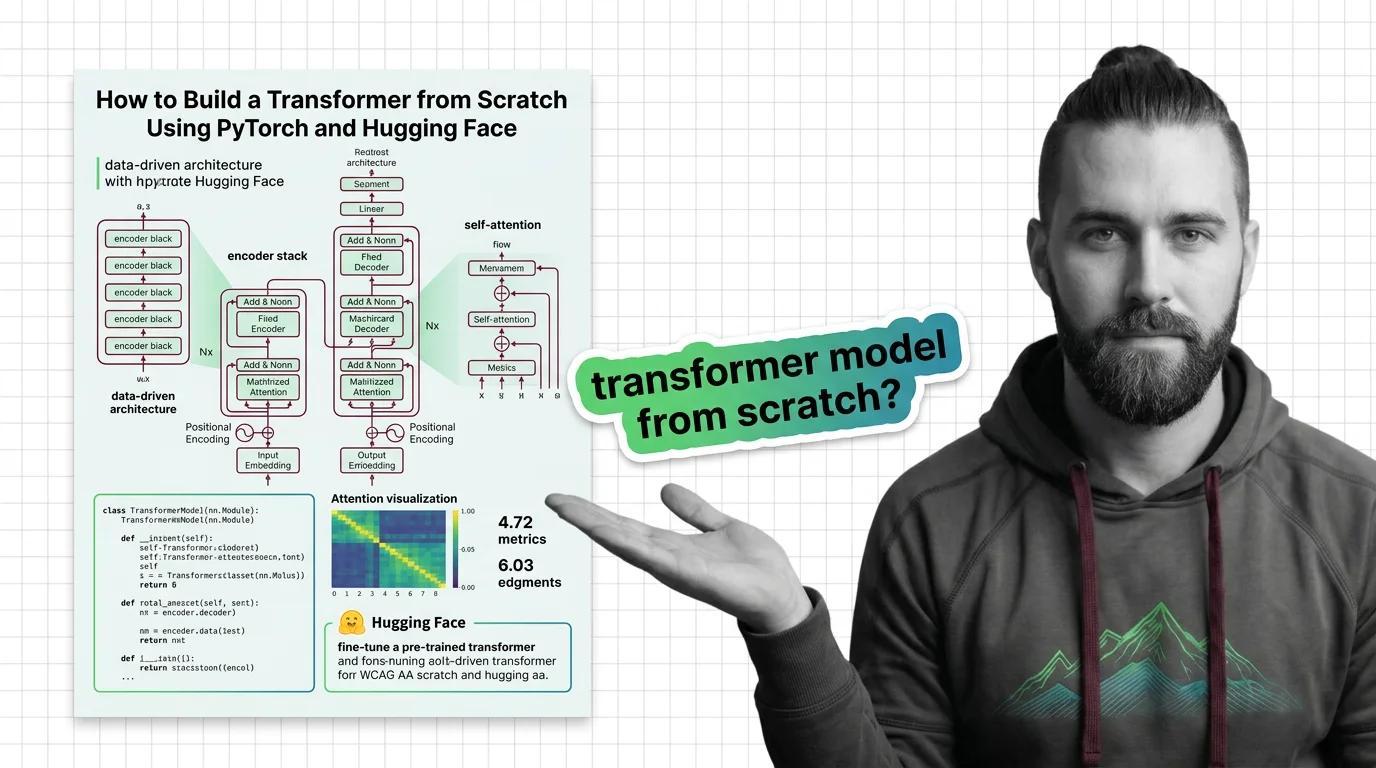

Transformer & Attention Internals

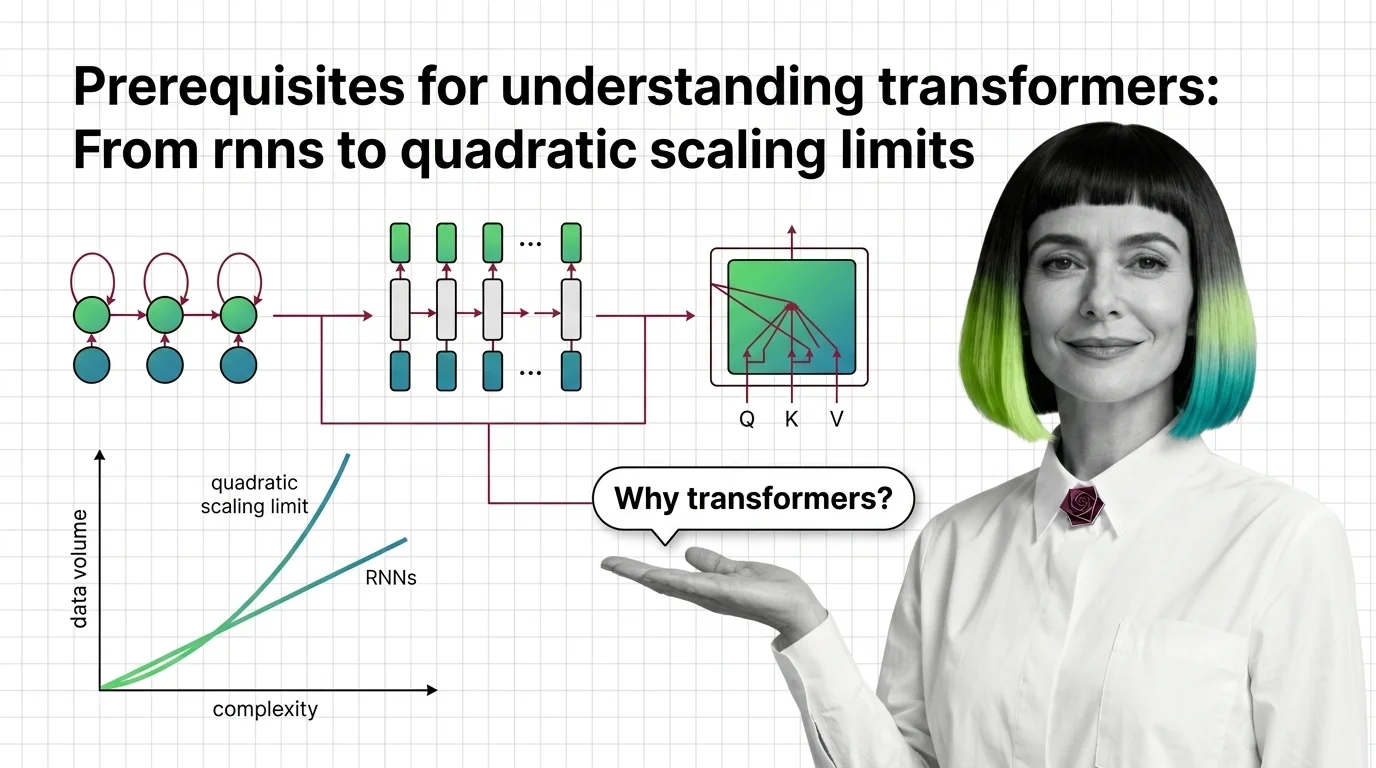

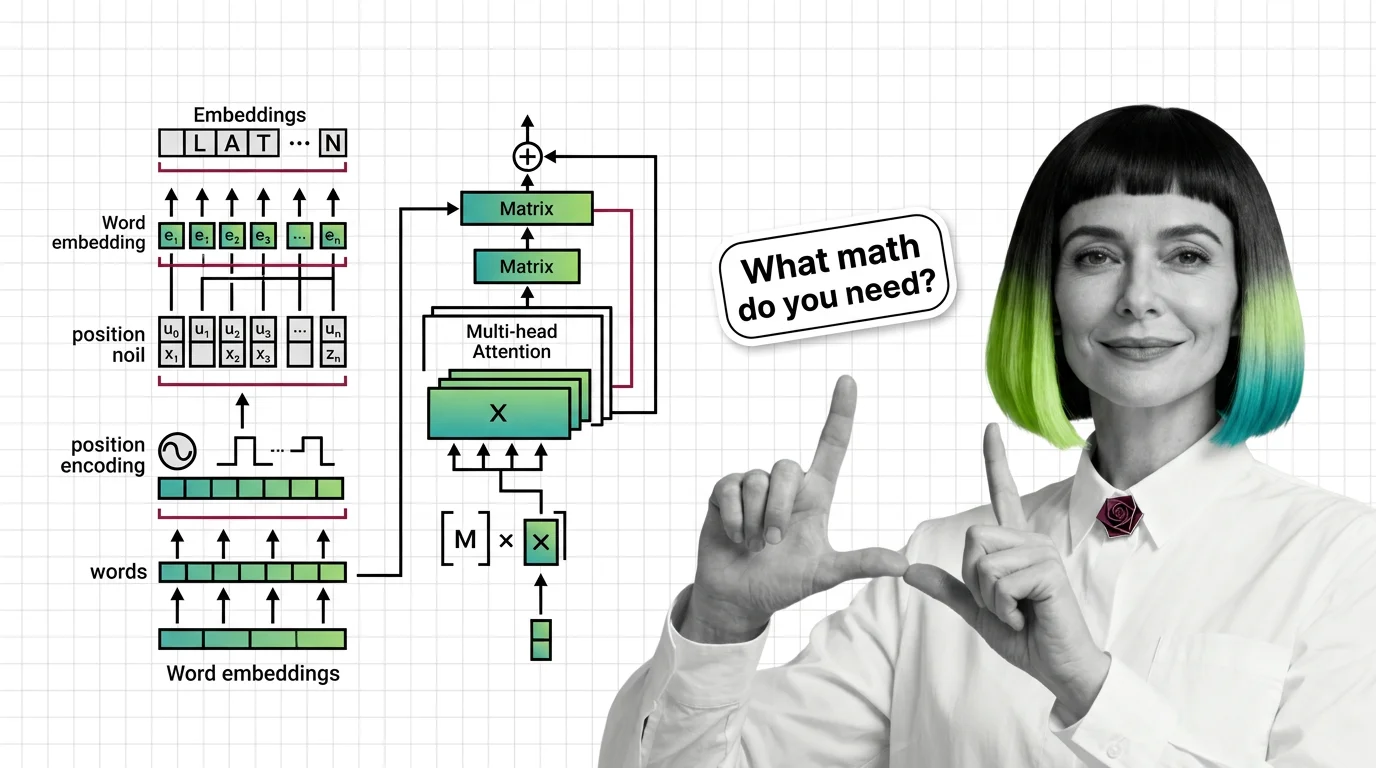

How the transformer architecture works internally, from attention mechanisms to positional encoding and encoder-decoder designs.

Where to Start

This cluster covers 1 topic. Here's a suggested reading order from fundamentals to advanced.