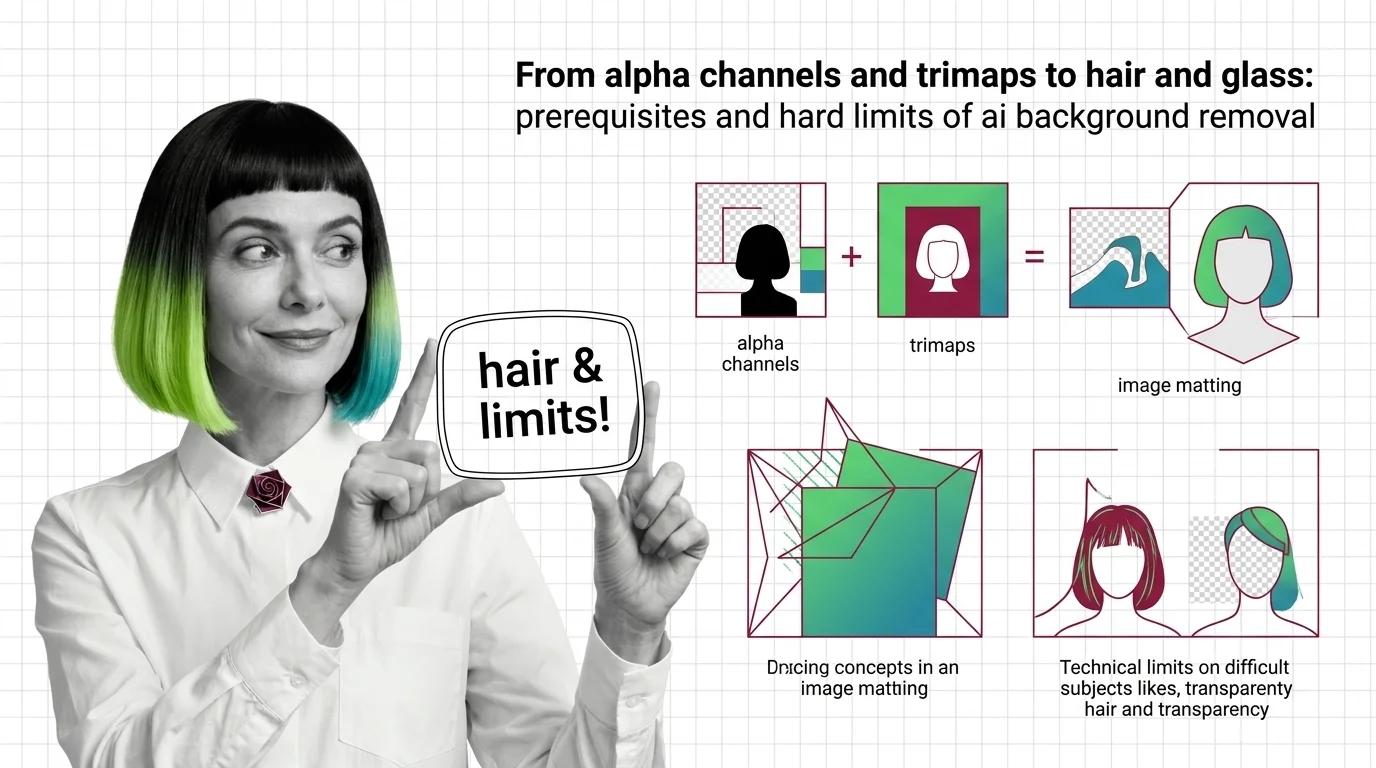

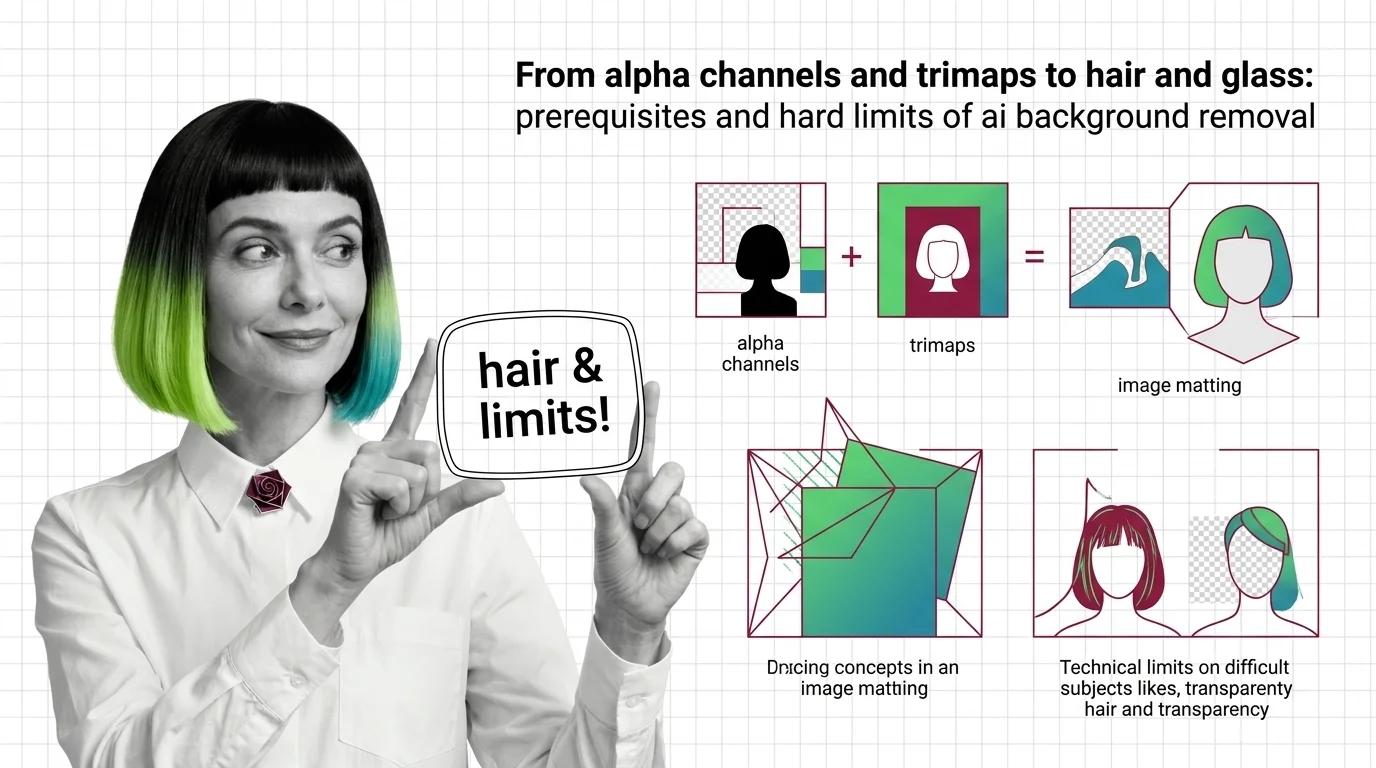

Alpha Channels, Trimaps, and the Hard Limits of AI Background Removal

Background removal is alpha estimation, not subject detection. Learn how trimaps and matting work, and why they break down on hair, glass, and motion blur.

Diffusion model architectures, LoRA fine-tuning, prompt engineering for images, and AI-powered image editing workflows.


Each topic below is a key concept in this domain. Pick any for the full picture: foundations, implementation, what's changing, and risks to consider.

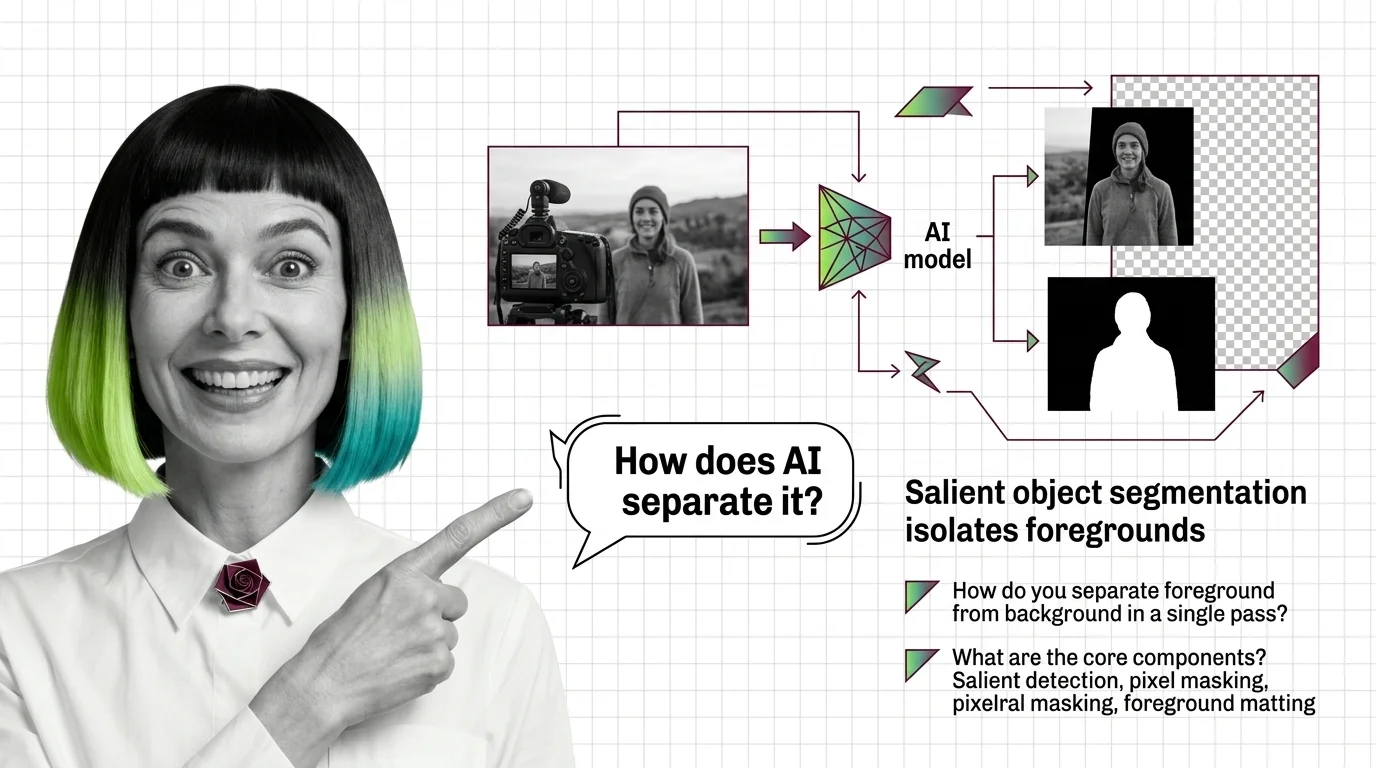

AI background removal uses computer vision models to automatically separate a foreground subject from its background, …

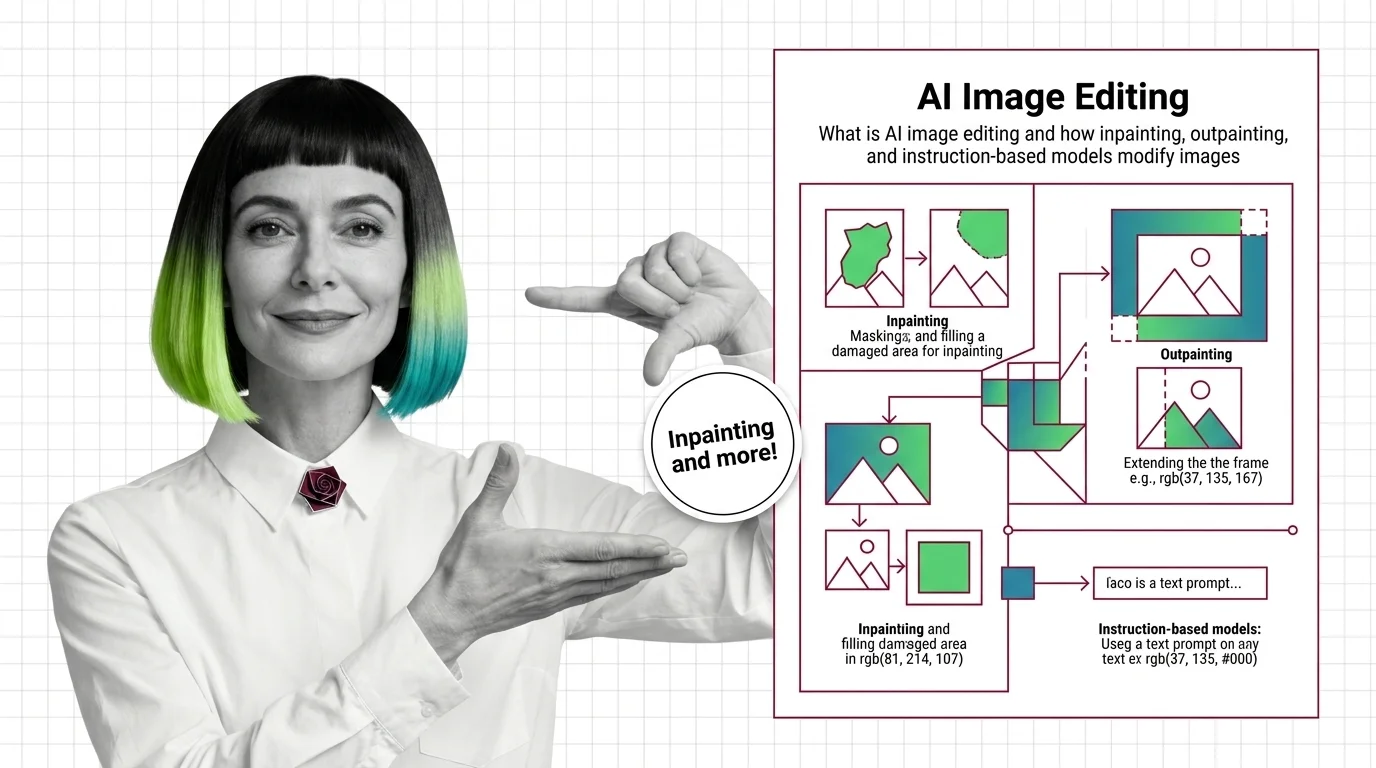

AI image editing uses machine learning models to modify existing photos or artwork through text instructions, masked …

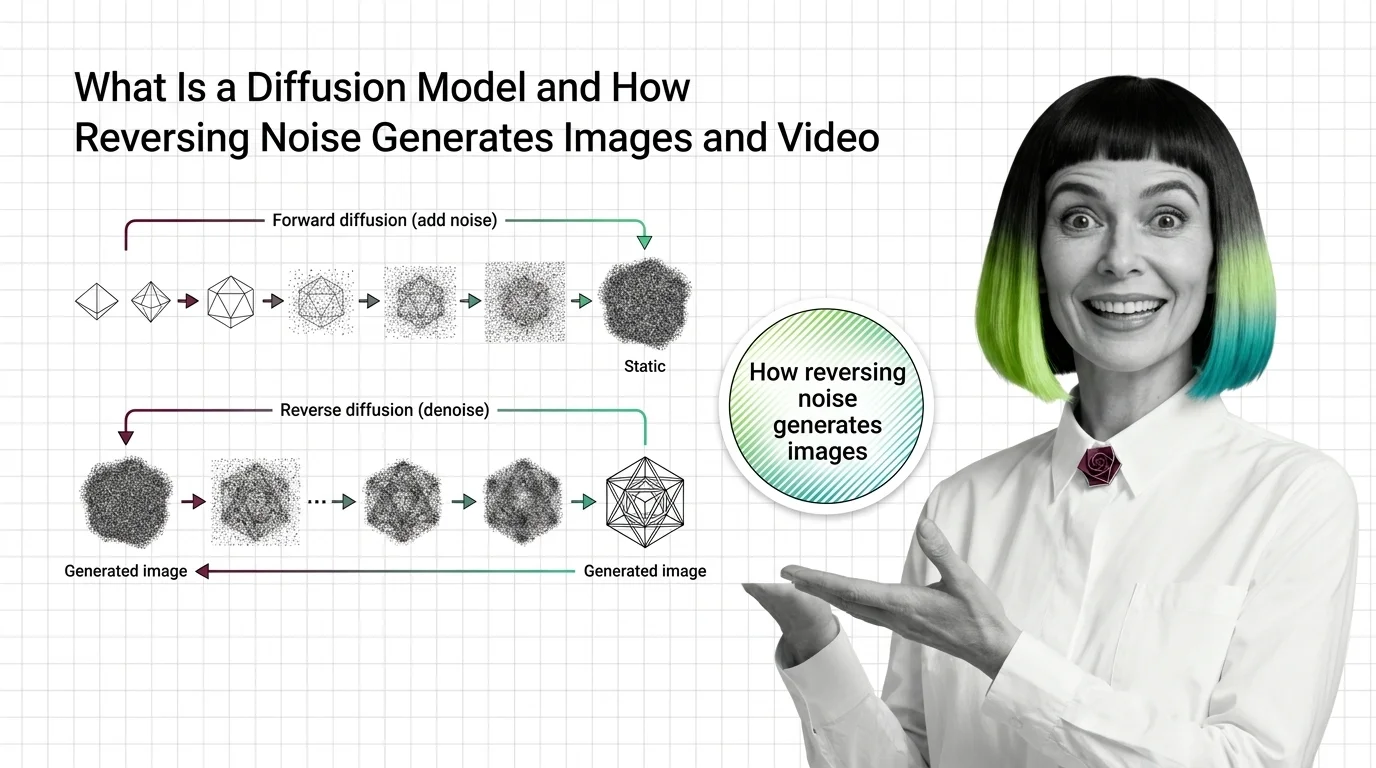

Diffusion models are a type of generative AI that creates images, video, and audio by learning to reverse a step-by-step …

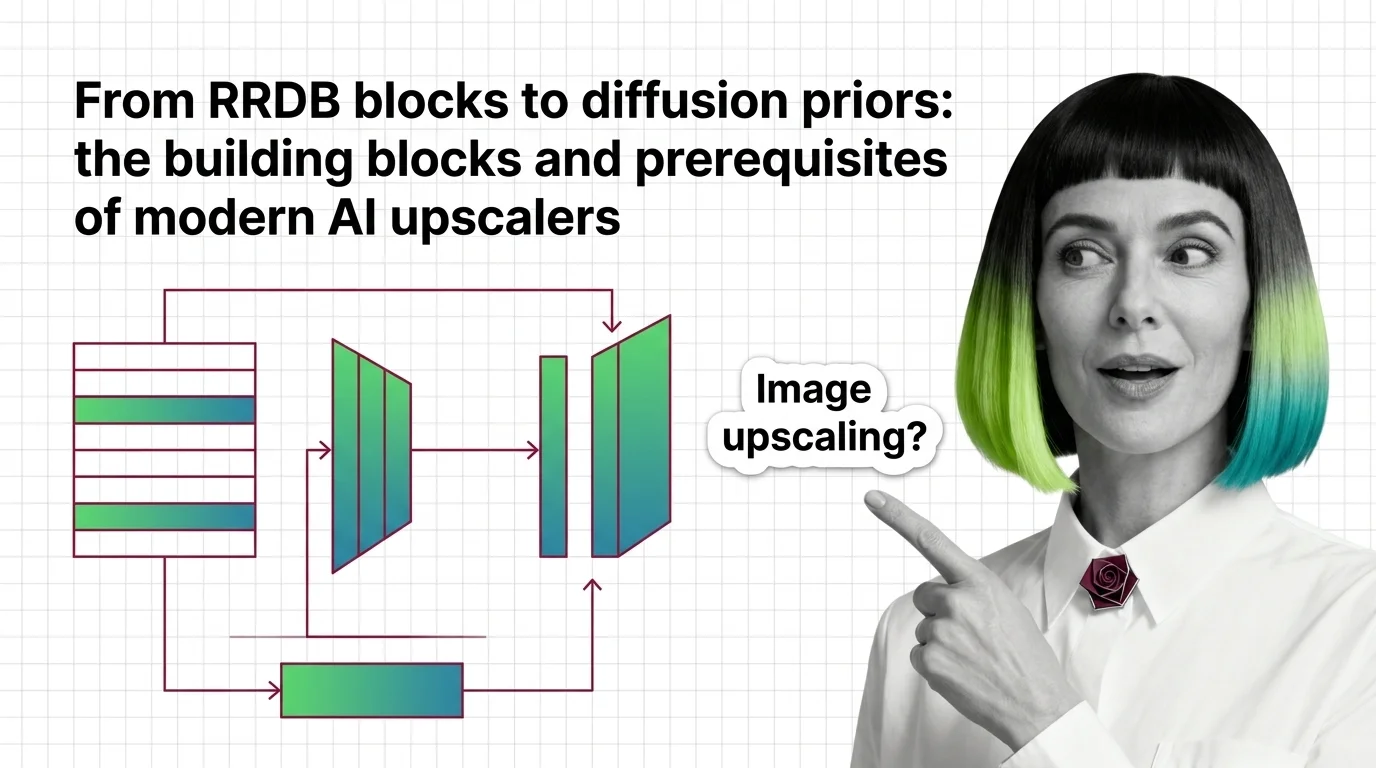

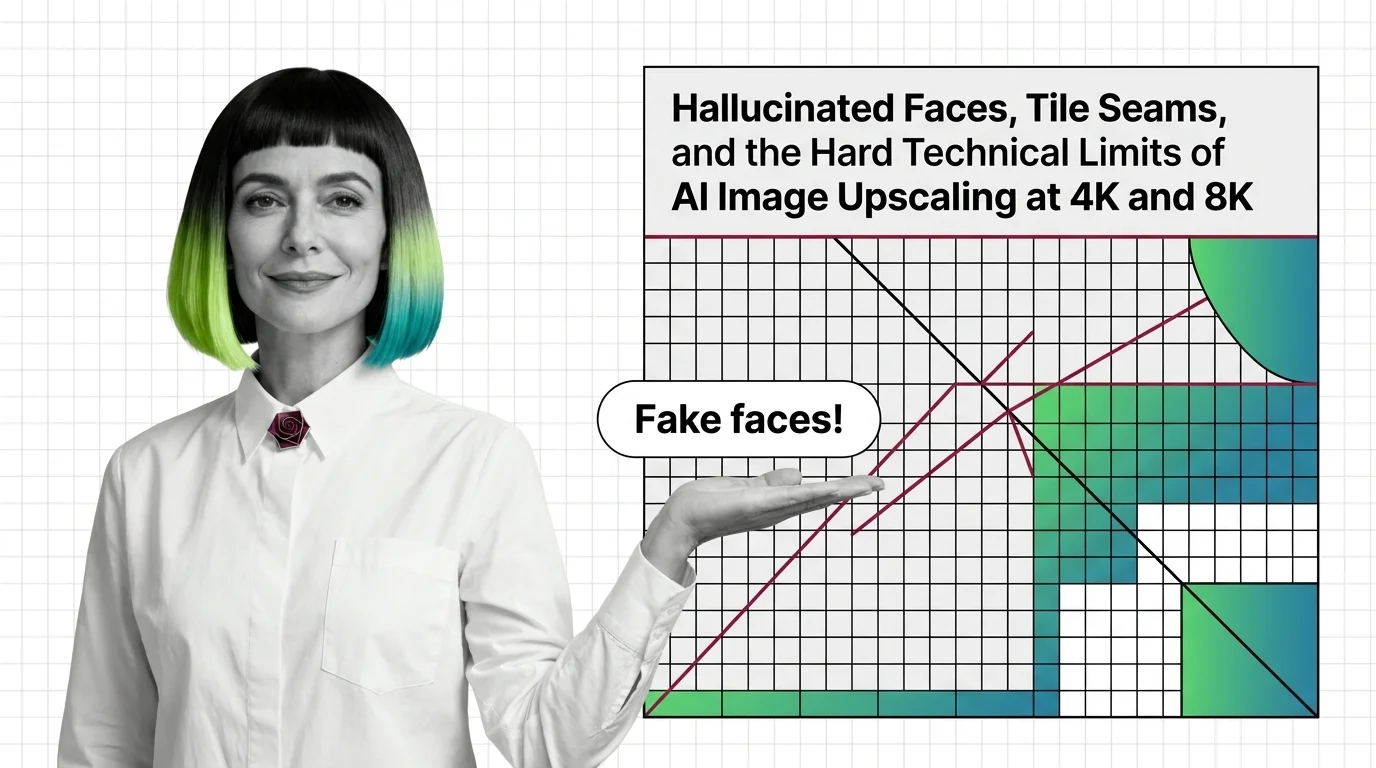

Image upscaling uses AI super-resolution models to increase image resolution while reconstructing realistic detail that …

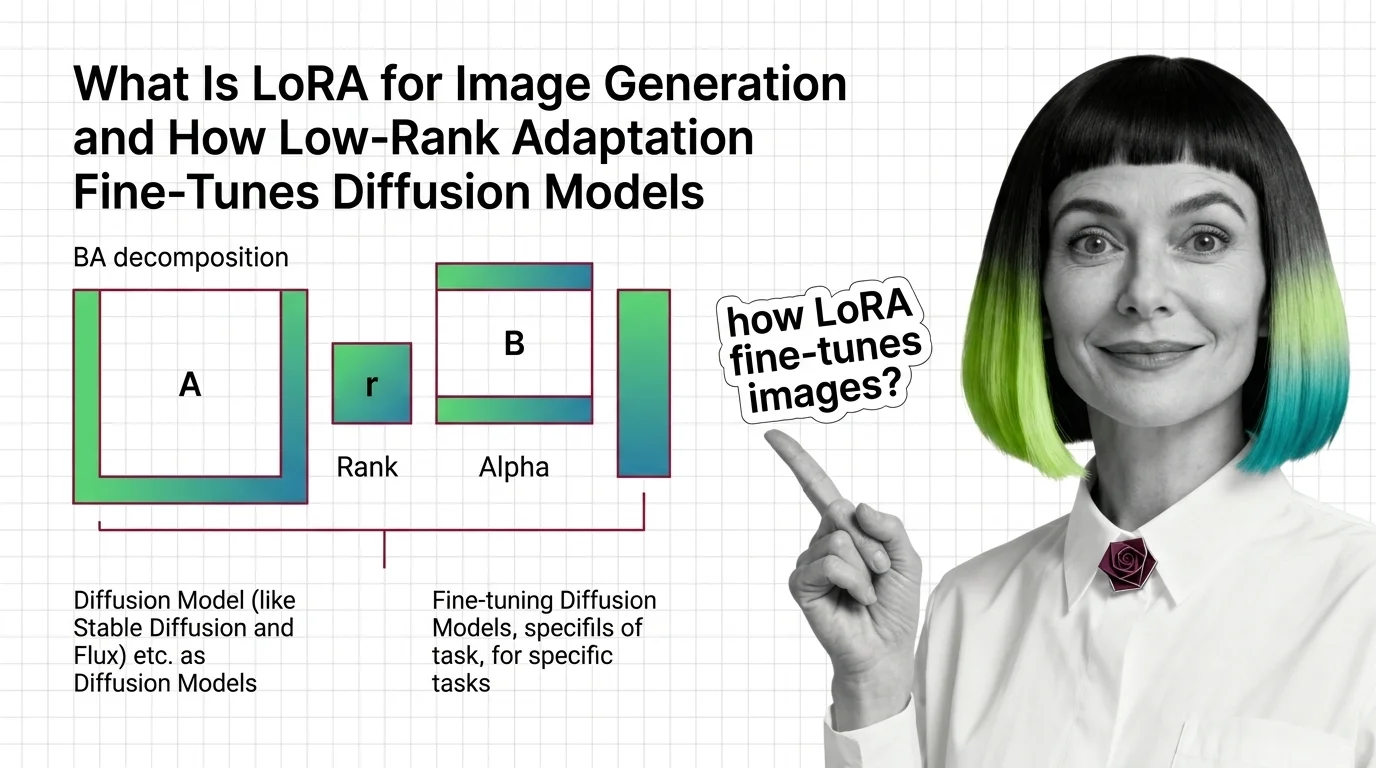

LoRA for image generation is a fine-tuning technique that adds small low-rank weight adapters to a diffusion model to …

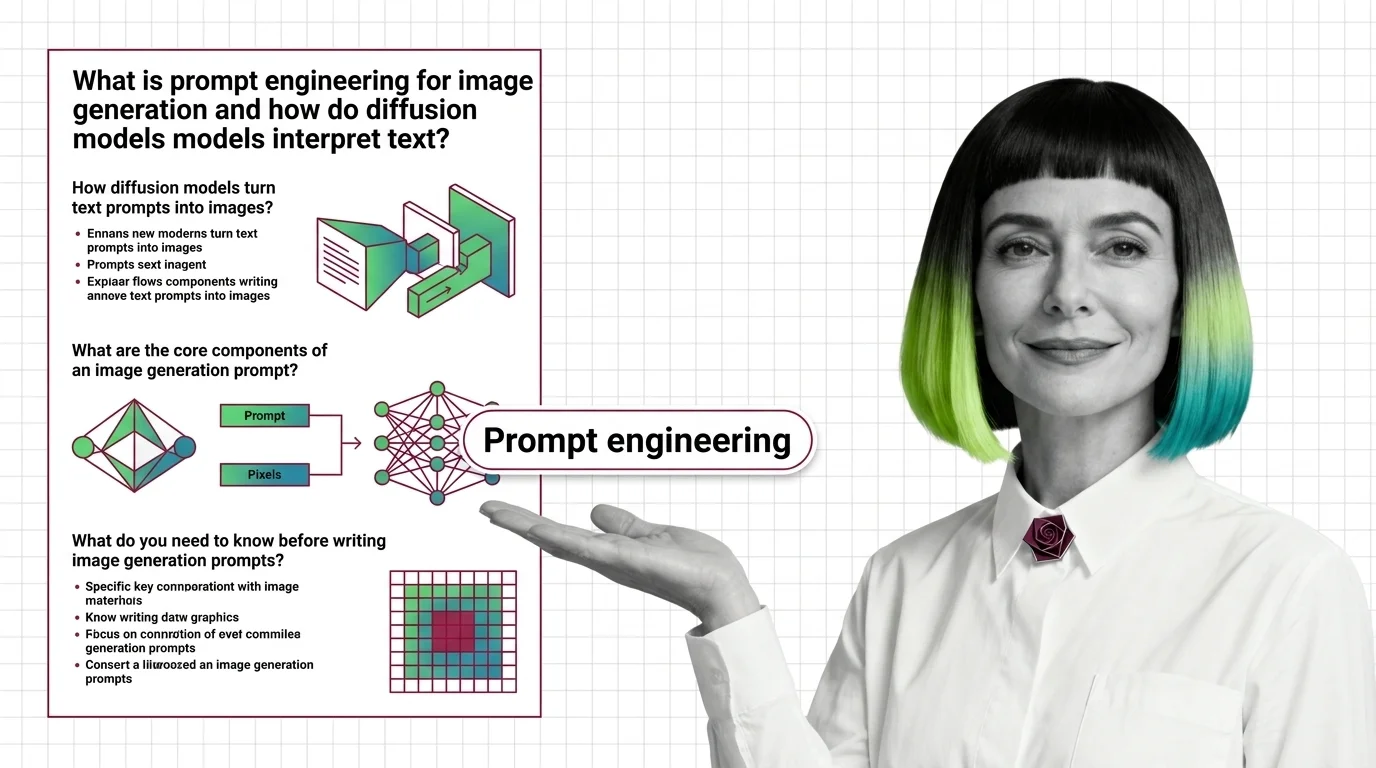

Prompt engineering for image generation is the practice of crafting text inputs that reliably produce desired images …

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Updated Apr 27, 2026


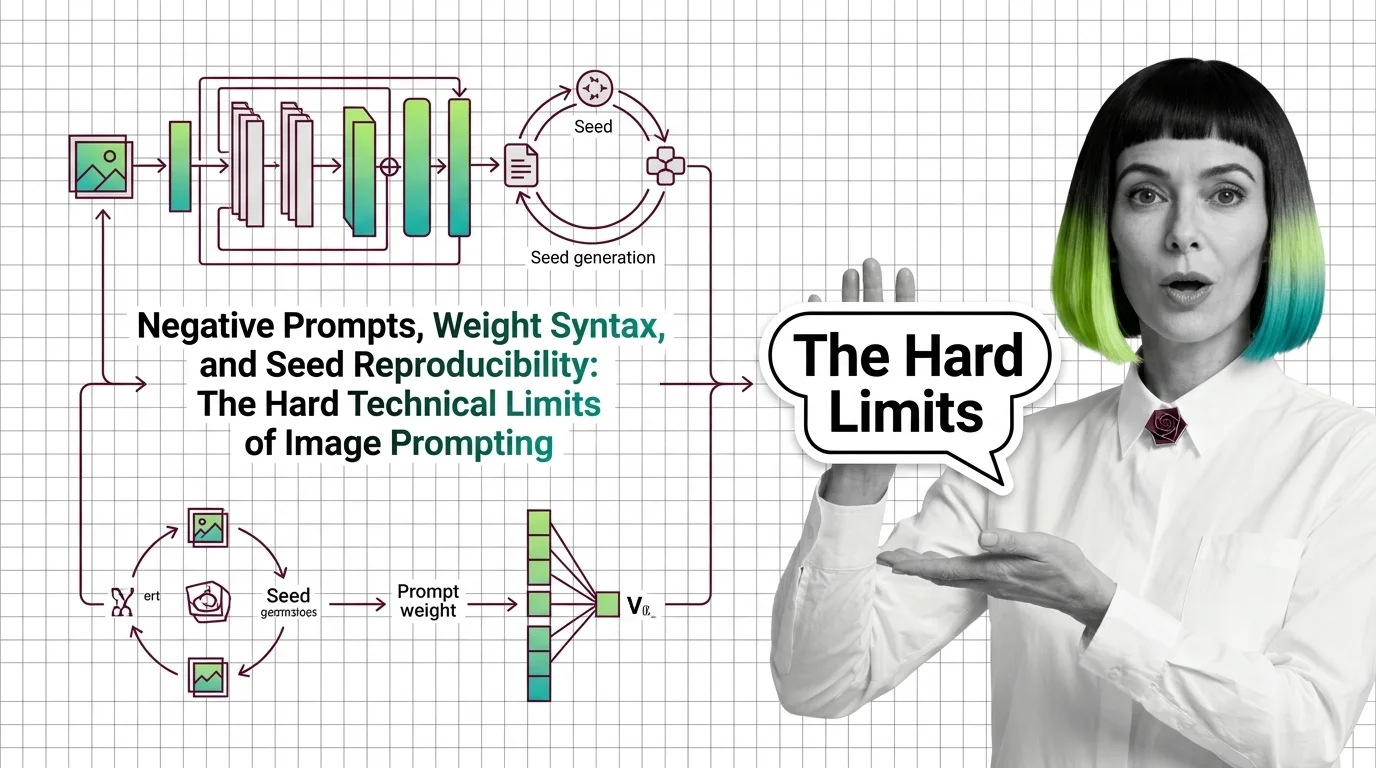

Negative prompts and weight syntax aren't universal — and seed reproducibility breaks across model versions. Inside the math of image prompting in 2026.

Image prompts steer probability, not pixels. Learn how diffusion models, cross-attention, and CFG turn text into images on SD, FLUX, and Midjourney.

AI background removal is not one model — it's salient object detection plus alpha matting. See how U2-Net, BiRefNet, and SAM 3 cut foregrounds in one pass.

LoRA fine-tunes Stable Diffusion and FLUX without retraining. Learn how rank, alpha, and the BA decomposition turn a few-megabyte file into a new style.

How modern AI upscalers are built — from ESRGAN's RRDB blocks and Real-ESRGAN to SUPIR's diffusion prior, plus the prerequisites you need first.

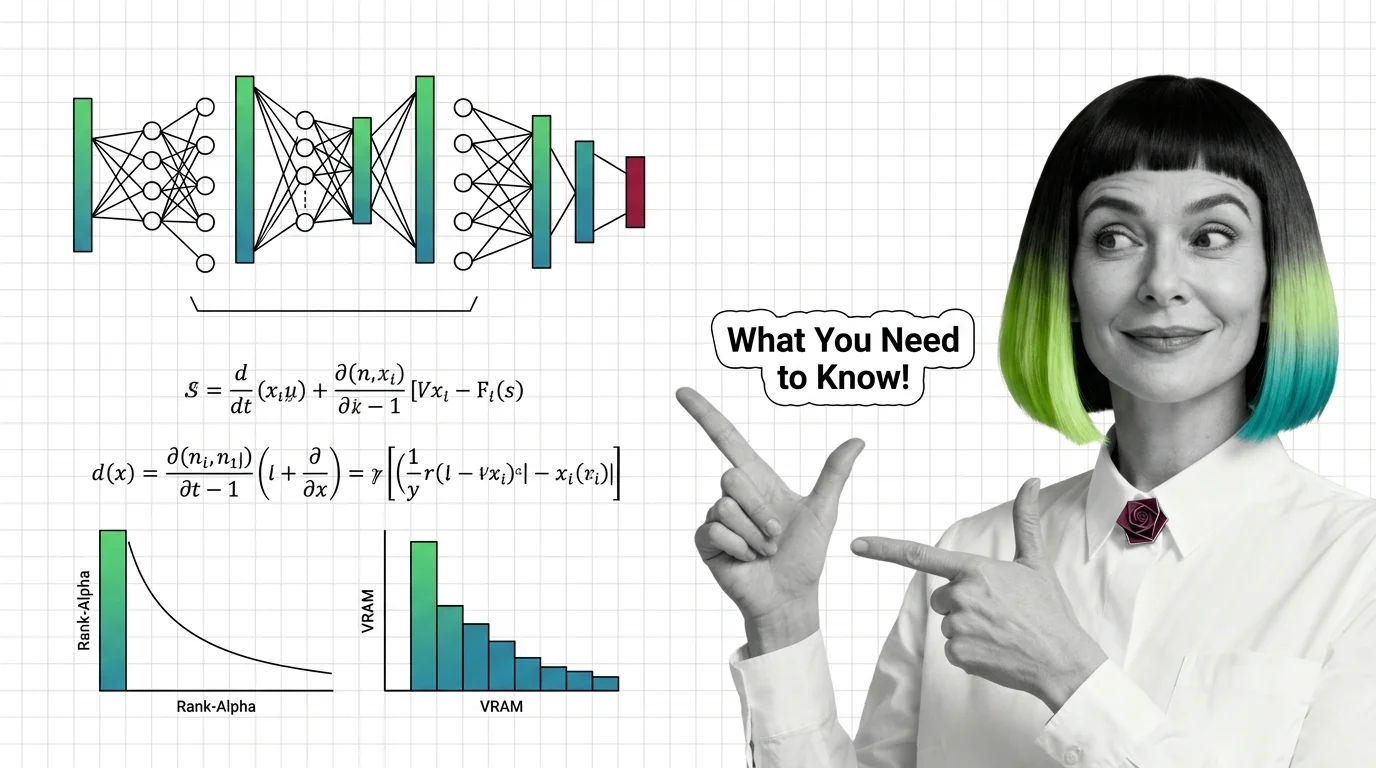

Image LoRAs retarget diffusion models with small adapter files. Learn the rank-alpha math, VRAM ranges from SD 1.5 to Flux, and why most fail.

AI image upscaling doesn't enlarge what was captured — it generates plausible pixels from a learned prior. Learn how GAN and diffusion super-resolution work.

When AI upscalers break at 4K and 8K, weak hardware is not the cause. The failures are structural, rooted in diffusion priors and tile-local processing.

Before using GPT Image or FLUX, understand diffusion, classifier-free guidance, and why InstructPix2Pix made instruction-based editing tractable.

AI image editing uses diffusion to modify pixels under a mask or follow text instructions. Learn how inpainting, outpainting, and edit models work.

Diffusion models generate images by reversing noise. Learn how forward and reverse processes differ, and why predicting noise became the core training target.

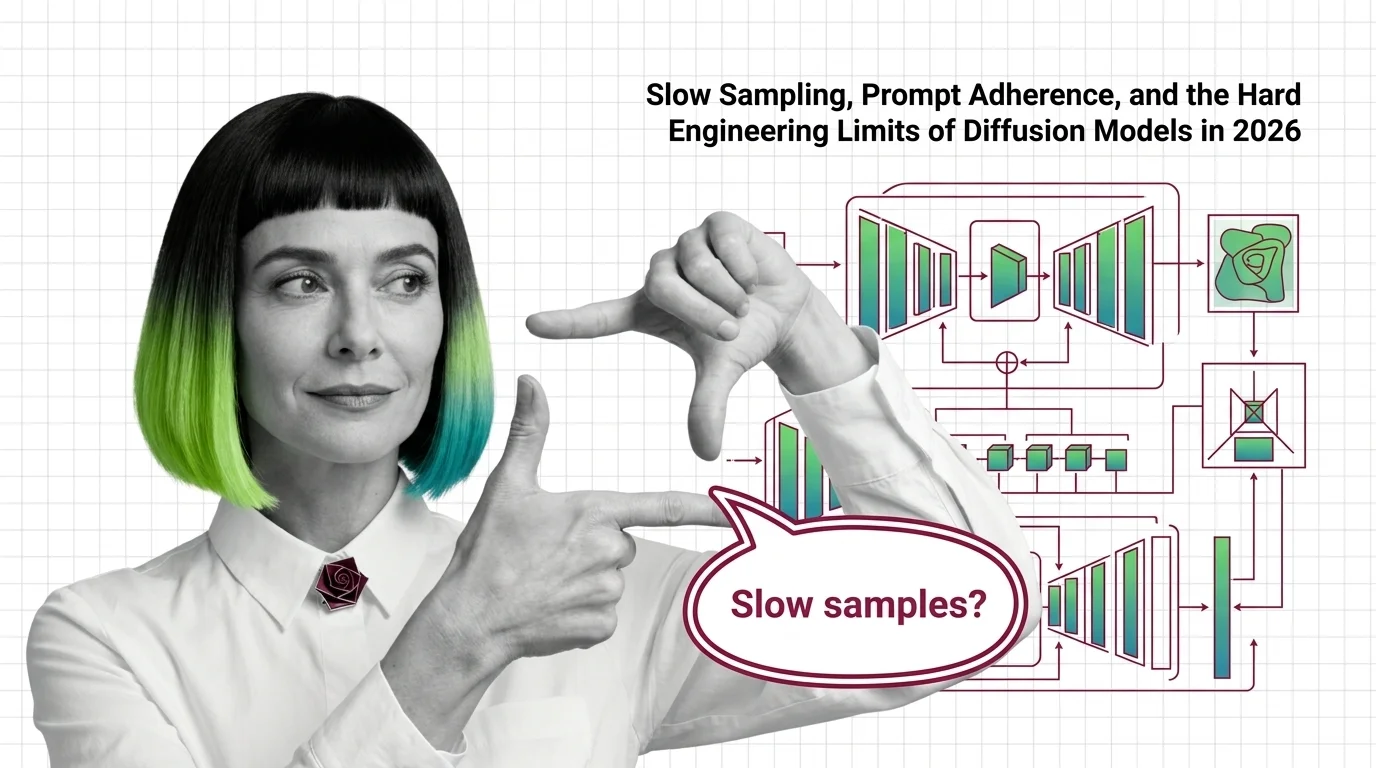

Why diffusion models still need many sampling steps, why FLUX and SD 3.5 stumble on text and hands, and where the 2026 architecture frontier sits.

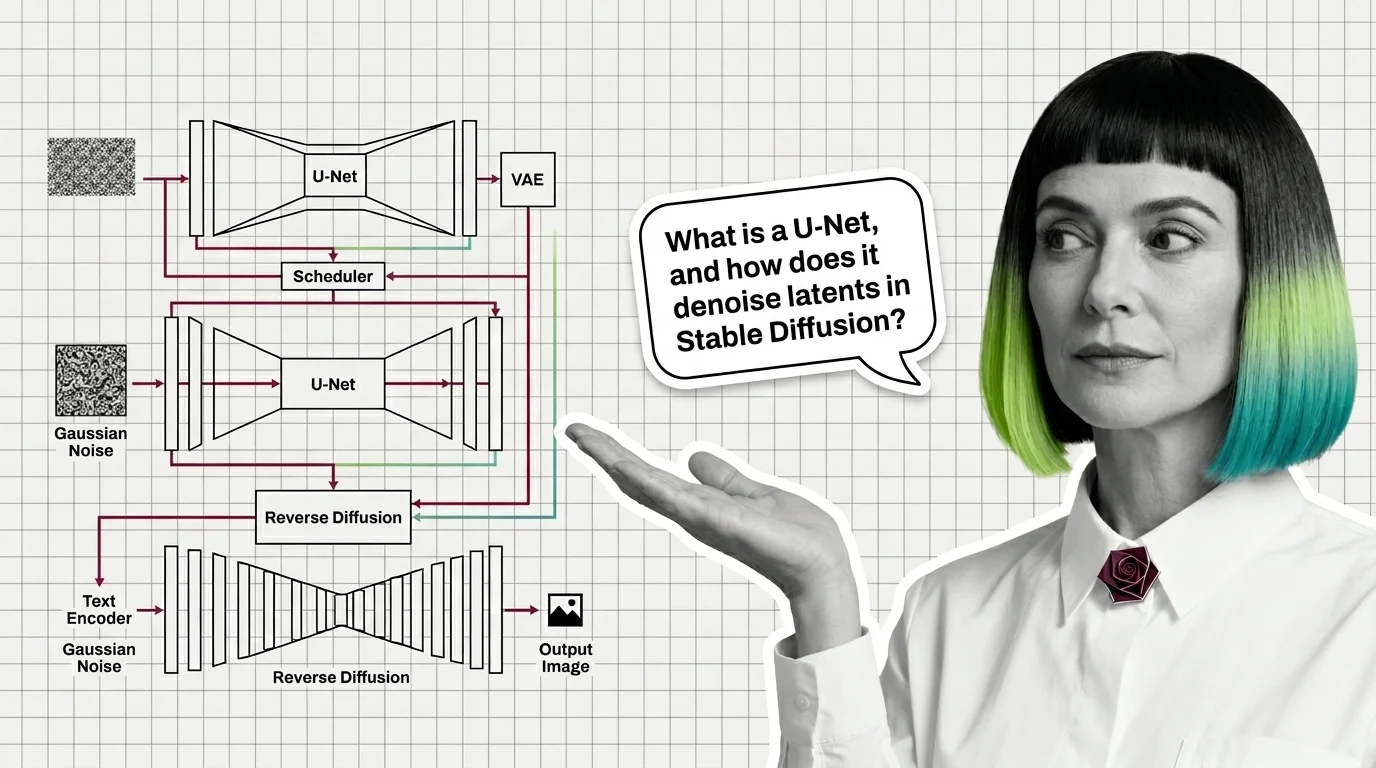

A modern diffusion model is not one network but four parts: a VAE for compression, a U-Net or DiT denoiser, a text encoder, and a sampler. Here is how they fit.