From Chain-of-Thought to Tool Use: Prerequisites and Technical Limits of Agent Planning

Agent planning rests on three primitives: chain-of-thought, tool use, and the ReAct loop. Learn the prerequisites and where each named pattern reaches its limits.

Design patterns for building autonomous AI agents, covering memory, planning, state management, and multi-agent collaboration.



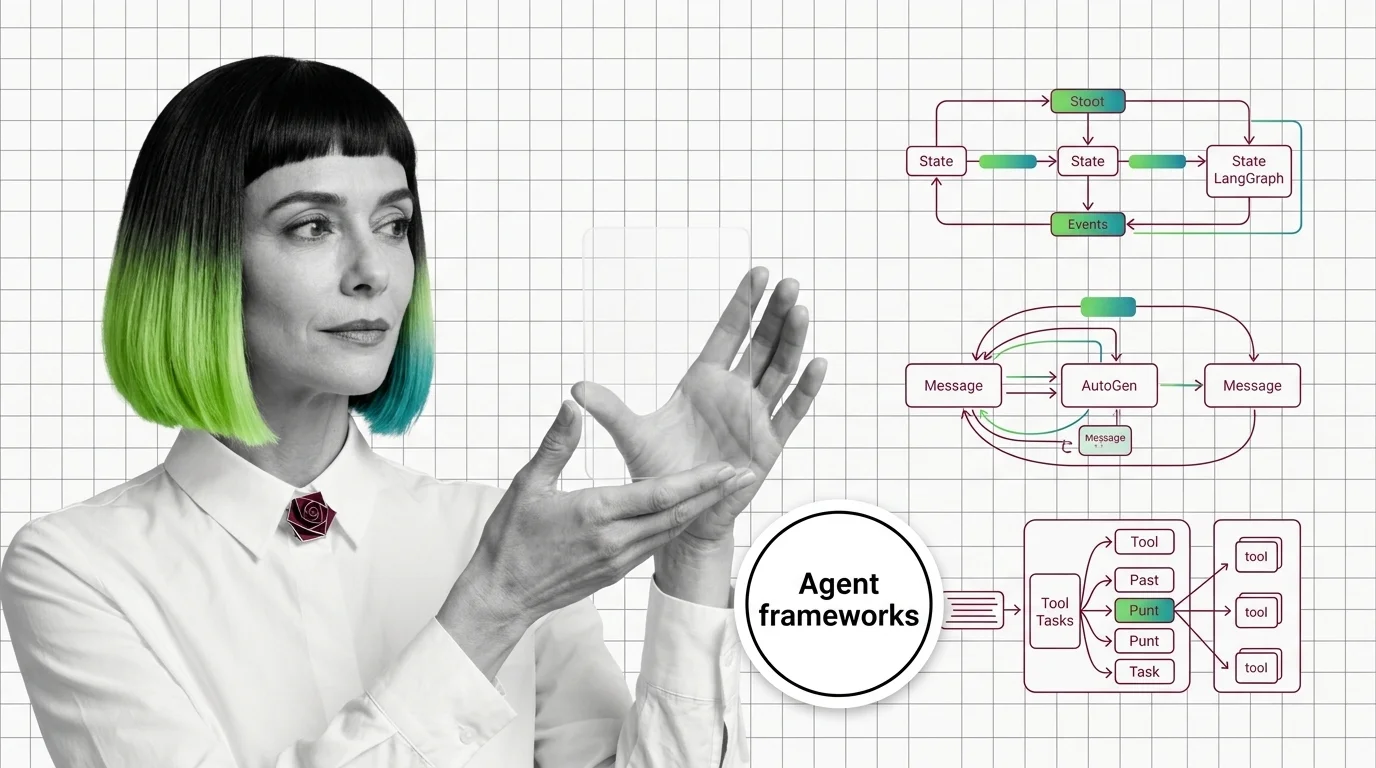

Agent frameworks are the libraries that wire LLM calls, tools, memory, and control flow into a runnable AI agent. …

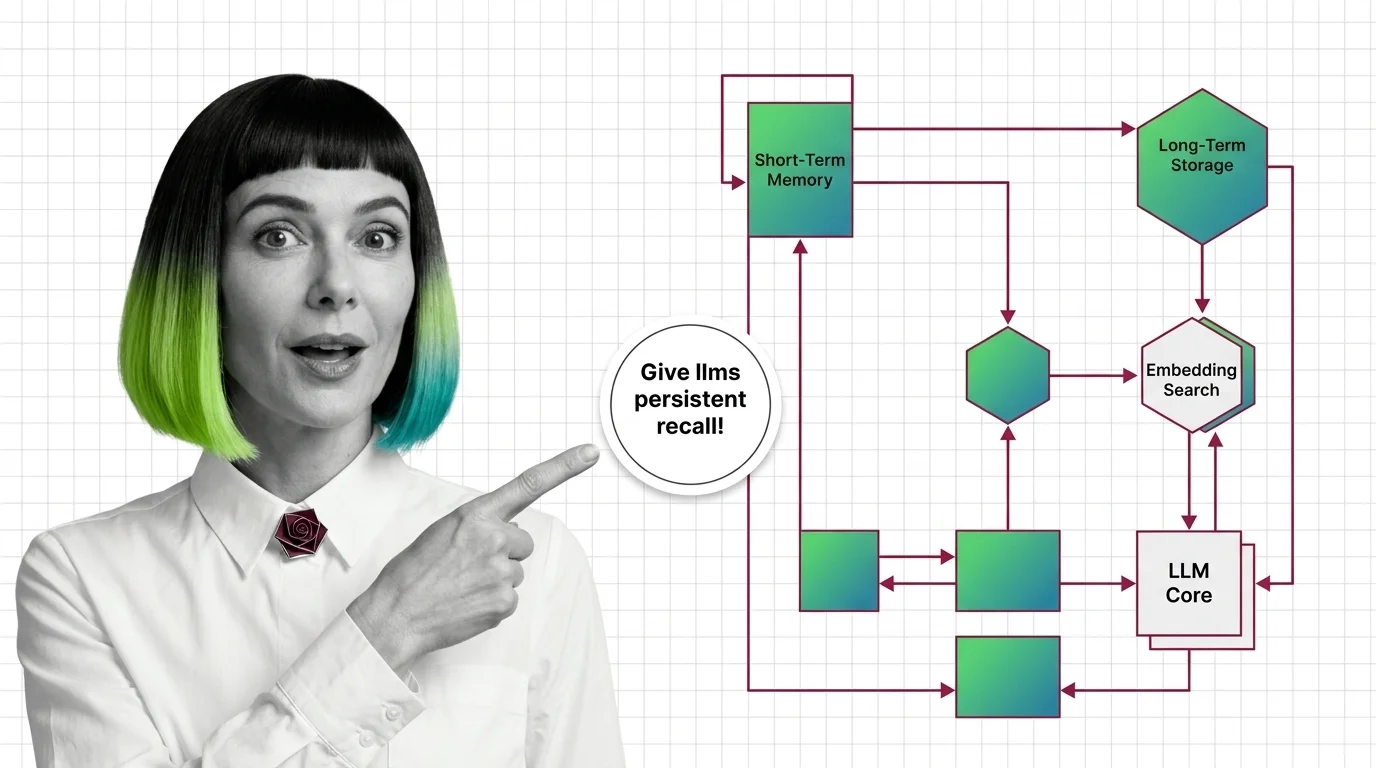

Agent memory systems are the architectures that let AI agents remember things beyond a single prompt. They combine …

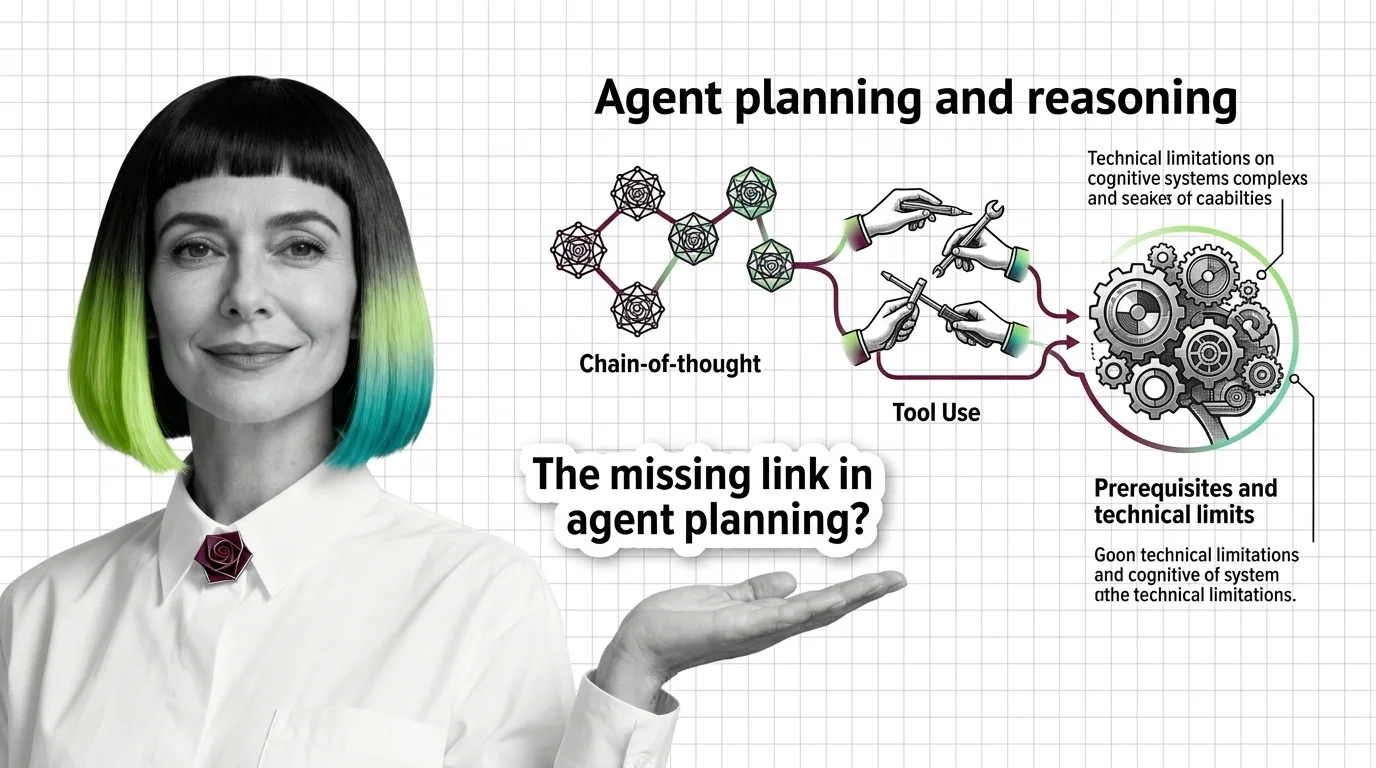

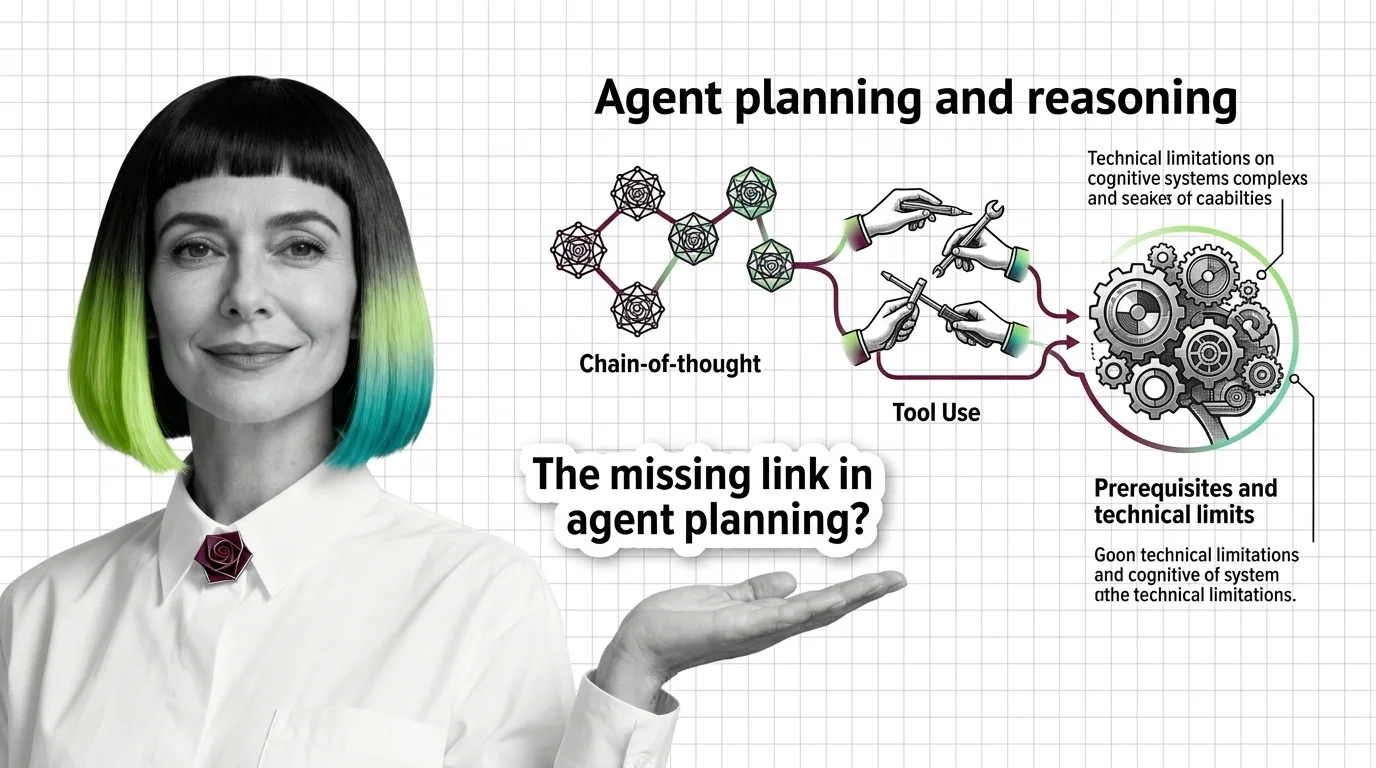

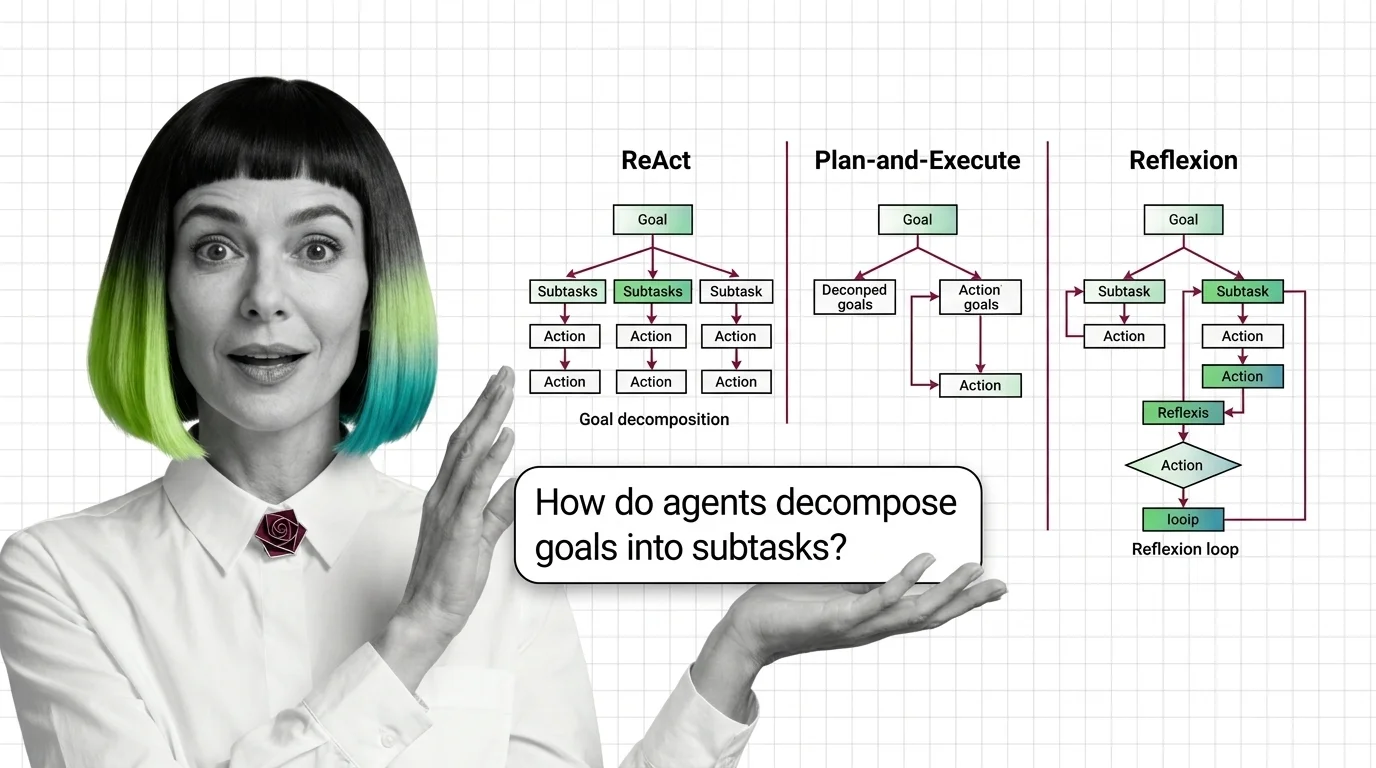

Agent planning and reasoning is how AI agents break a goal into smaller steps, decide which tool or action to use next, …

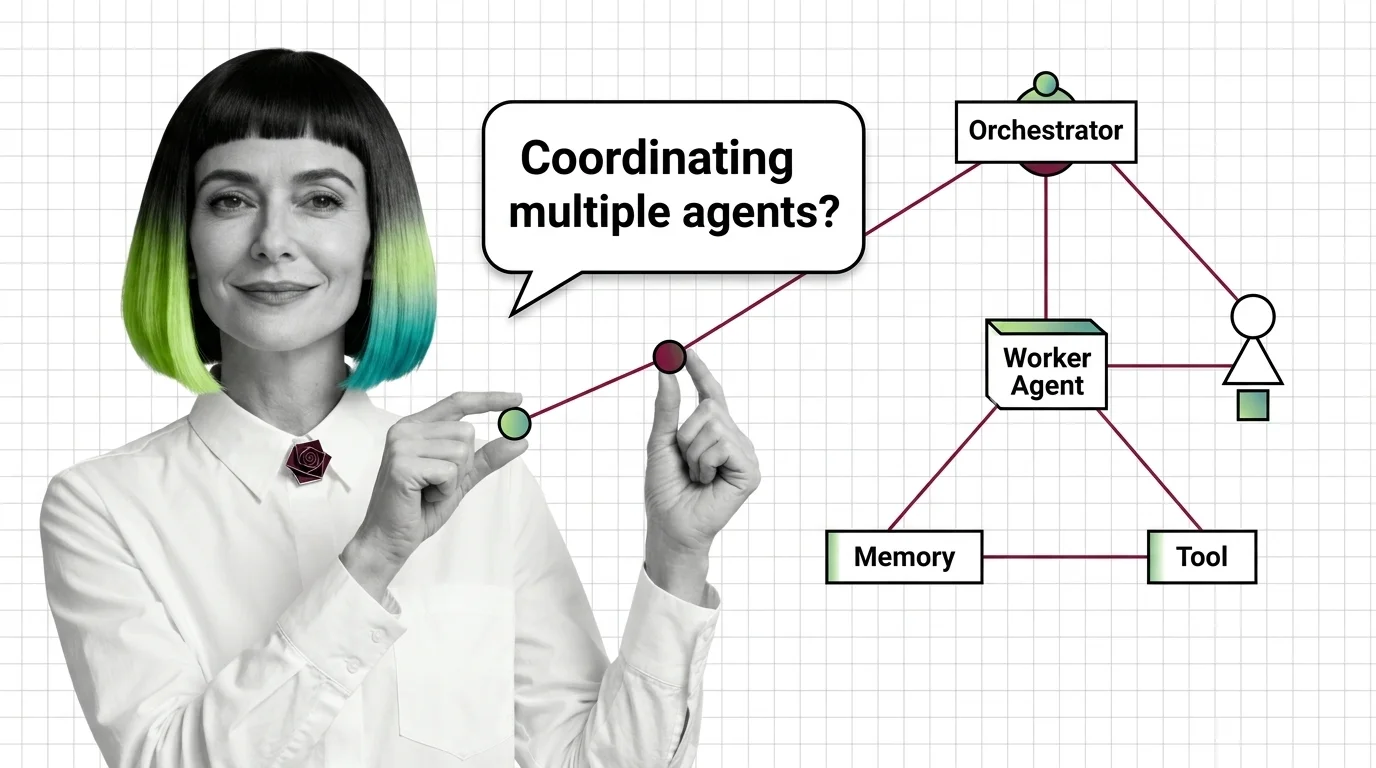

Multi-agent systems are designs where several specialized AI agents work together on a task instead of relying on one …


Updated May 7, 2026

Concepts covered


Before building multi-agent systems, master tool use, the ReAct loop, and memory. Then confront the limits: context blow-up, error compounding, and coordination overhead.
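Two of these limits are easy to put rough numbers on. The sketch below assumes, purely for illustration, that every step succeeds independently with the same probability and that each step appends a fixed number of tokens to a history that is resent in full. Real agents violate both assumptions, and the function names are hypothetical.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that every step of an n-step chain succeeds, assuming
    independent steps with identical success probability p_step."""
    return p_step ** n_steps


def cumulative_prompt_tokens(base: int, per_step: int, n_steps: int) -> int:
    """Total tokens read over a run when the full history is resent each step.

    Step k (0-indexed) sends base + k * per_step tokens, so each prompt grows
    linearly and the running total grows quadratically in n_steps.
    """
    return sum(base + k * per_step for k in range(n_steps))


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(f"{n:>2} steps at 95% each -> {chain_success(0.95, n):.3f} end-to-end")
    print(cumulative_prompt_tokens(base=2_000, per_step=500, n_steps=20))
```

With 95% per-step reliability, end-to-end success falls to about 0.774 at 5 steps, 0.599 at 10, and 0.358 at 20. The 20-step run reads 135,000 prompt tokens in total even though no single prompt exceeds 11,500.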

Multi-agent systems coordinate specialized AI agents through supervisor, debate, or swarm patterns. Here is how each architecture works under the hood.
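The supervisor pattern can be sketched as a router that assigns each subtask to a specialist and collects the results. In this minimal sketch the workers and the routing rule are hypothetical plain functions; in a real system each would be an LLM call with its own prompt and tools.

```python
from typing import Callable, Dict, List


# Hypothetical specialist agents. Each would normally wrap an LLM call.
def research_worker(task: str) -> str:
    return f"[research notes for: {task}]"


def code_worker(task: str) -> str:
    return f"[code draft for: {task}]"


WORKERS: Dict[str, Callable[[str], str]] = {
    "research": research_worker,
    "code": code_worker,
}


def route(task: str) -> str:
    """Stand-in for the supervisor's routing decision (normally an LLM call)."""
    return "code" if "implement" in task.lower() else "research"


def supervisor(subtasks: List[str]) -> List[str]:
    """Send each subtask to the chosen worker and gather every result centrally."""
    results = []
    for task in subtasks:
        worker = WORKERS[route(task)]
        results.append(worker(task))
    return results


if __name__ == "__main__":
    for line in supervisor(["Implement a CSV parser", "Survey existing parsers"]):
        print(line)
```

A debate setup would instead send the same task to several agents and have them critique one another's answers, while a swarm drops the central router and lets agents hand control to each other directly.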

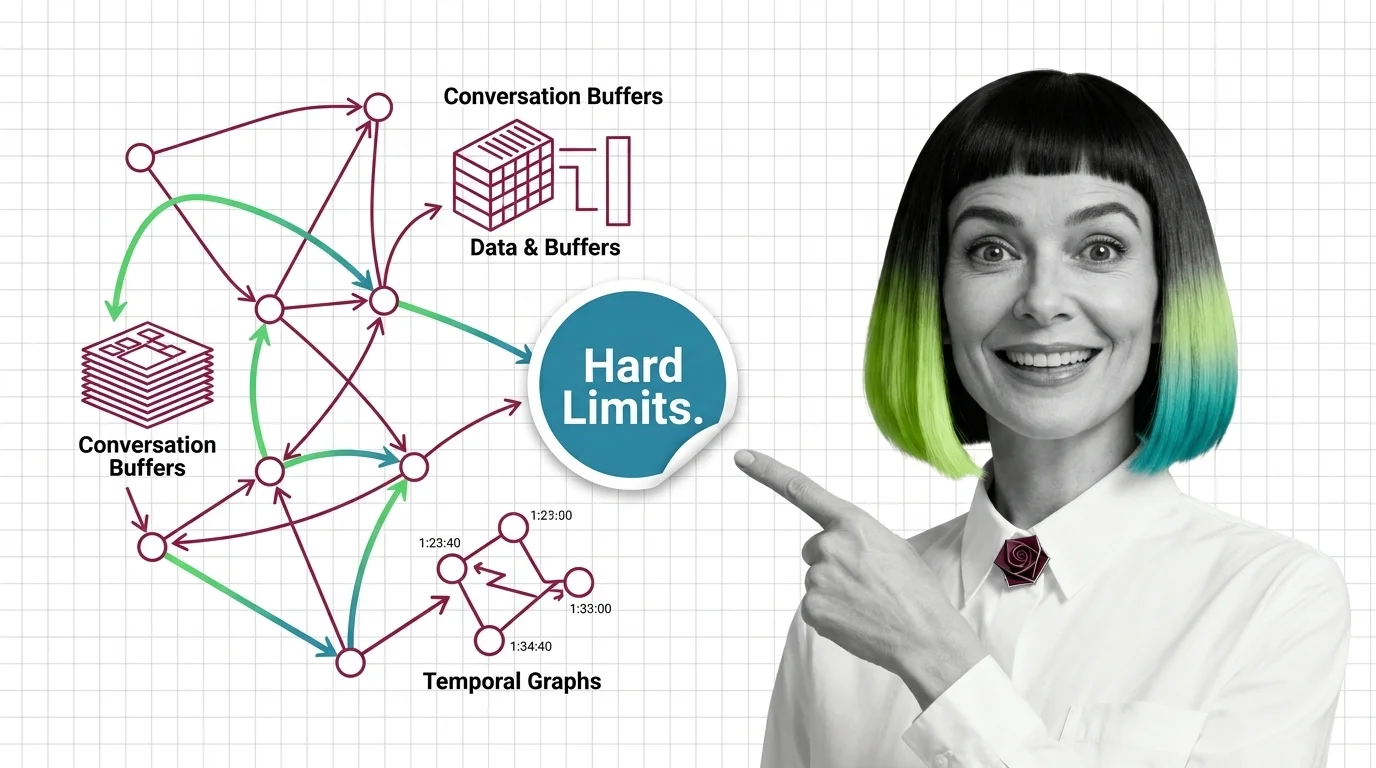

Agent memory systems give LLMs persistent recall across sessions. Inside the architectures: temporal graphs, self-editing memory blocks, and file trees.
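To illustrate the self-editing idea, here is a minimal sketch of a memory block that lives in the prompt, has a hard size cap, and changes only when the agent calls an edit tool. The class and method names are hypothetical, not any specific library's API. The cap is the point: it forces the agent to choose what to keep instead of relying on an ever-growing context.

```python
from dataclasses import dataclass


@dataclass
class MemoryBlock:
    """A labeled, size-capped text block kept in the agent's prompt.

    The agent changes it only through explicit edit tools (append/replace),
    so deciding what to remember becomes one of the agent's own actions.
    All names here are hypothetical.
    """

    label: str
    value: str = ""
    limit: int = 200  # character cap; edits that would exceed it are rejected

    def _commit(self, candidate: str) -> None:
        if len(candidate) > self.limit:
            raise ValueError(f"block '{self.label}' would exceed {self.limit} chars")
        self.value = candidate

    def append(self, text: str) -> None:
        """Tool: add a line to the block."""
        self._commit((self.value + "\n" + text).strip())

    def replace(self, old: str, new: str) -> None:
        """Tool: overwrite an existing fact in place (first occurrence only)."""
        if old not in self.value:
            raise KeyError(f"{old!r} not found in block '{self.label}'")
        self._commit(self.value.replace(old, new, 1))

    def render(self) -> str:
        """Text spliced into the prompt on every turn."""
        return f"<{self.label}>\n{self.value}\n</{self.label}>"


if __name__ == "__main__":
    user = MemoryBlock(label="user")
    user.append("Name: Ada")
    user.append("Prefers Python")
    user.replace("Python", "Rust")  # a newer preference supersedes the old one
    print(user.render())
```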

Agent frameworks orchestrate LLM calls, tools, and memory — but each one bets on a different abstraction. Learn what LangGraph, CrewAI, and AutoGen actually do.
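LangGraph, for example, models an agent as a graph of nodes that update shared state, with conditional edges selecting the next node. The sketch below imitates that abstraction in plain Python without using LangGraph's API; the node names, the routing function, and the step budget are all hypothetical.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]
Node = Callable[[State], State]


def plan(state: State) -> State:
    # Hypothetical planner: in practice an LLM call that writes a step list.
    state["todo"] = ["search", "summarize"]
    state["done"] = []
    return state


def act(state: State) -> State:
    # Execute one pending step and record it as done.
    state["done"].append(state["todo"].pop(0))
    return state


NODES: Dict[str, Node] = {"plan": plan, "act": act}


def next_node(current: str, state: State) -> Optional[str]:
    """Conditional edges: loop on 'act' until the to-do list is empty."""
    if current == "plan":
        return "act"
    if current == "act" and state["todo"]:
        return "act"
    return None  # terminal


def run(entry: str, state: State, max_steps: int = 10) -> State:
    node: Optional[str] = entry
    steps = 0
    while node is not None:
        if steps >= max_steps:
            raise RuntimeError("step budget exhausted")
        state = NODES[node](state)
        node = next_node(node, state)
        steps += 1
    return state


if __name__ == "__main__":
    print(run("plan", {})["done"])
```

The explicit step budget matters because a conditional edge that never reaches a terminal node would otherwise loop forever.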

Agent memory isn't a bigger context window. Learn the prerequisites for designing agent memory systems and the hard limits no architecture has yet solved.

Agent planning is not human cognition — it is token generation conditioned on observations. How ReAct, Plan-and-Execute, and Reflexion actually work.
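The ReAct pattern can be sketched as a loop: the model emits a thought and an action as text, the runtime executes the named tool, and the result is appended as an observation that conditions the next generation. The sketch assumes a plain-text "Thought / Action / Observation / Final Answer" format and uses a scripted `fake_llm` in place of a real model; the tool, the fact table, and all function names are hypothetical.

```python
import re
from typing import Callable, Dict

# Hypothetical knowledge source and tool registry.
FACTS: Dict[str, str] = {"capital_of_france": "Paris"}
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup": lambda key: FACTS.get(key, "unknown"),
}

ACTION_RE = re.compile(r"Action: (\w+)\[(.*?)\]")


def fake_llm(transcript: str) -> str:
    """Scripted stand-in for a model: its output depends only on the text so far."""
    if "Observation:" not in transcript:
        return "Thought: I need a fact.\nAction: lookup[capital_of_france]"
    return "Thought: The observation answers the question.\nFinal Answer: Paris"


def react(question: str, llm: Callable[[str], str], max_turns: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_turns):
        output = llm(transcript)  # generate the next thought and action
        transcript += output + "\n"
        if "Final Answer:" in output:
            return output.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(output)
        if match is None:
            transcript += "Observation: no parsable action.\n"
            continue
        tool, arg = match.groups()
        tool_fn = TOOLS.get(tool)
        result = tool_fn(arg) if tool_fn else f"unknown tool '{tool}'"
        transcript += f"Observation: {result}\n"  # conditions the next generation
    raise RuntimeError("no final answer within the turn budget")


if __name__ == "__main__":
    print(react("What is the capital of France?", fake_llm))
```

Plan-and-Execute moves planning out of this loop: one call produces the full step list and an executor works through it, replanning only when needed. Reflexion wraps attempts like this one with a verbal self-critique that is stored and fed into the next try.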

LangGraph, AutoGen, and CrewAI commit to three different theories of how AI agents coordinate. The pattern you pick decides every failure you debug.