Agent Evaluation Prerequisites: LLM-as-Judge to Cost-Per-Task

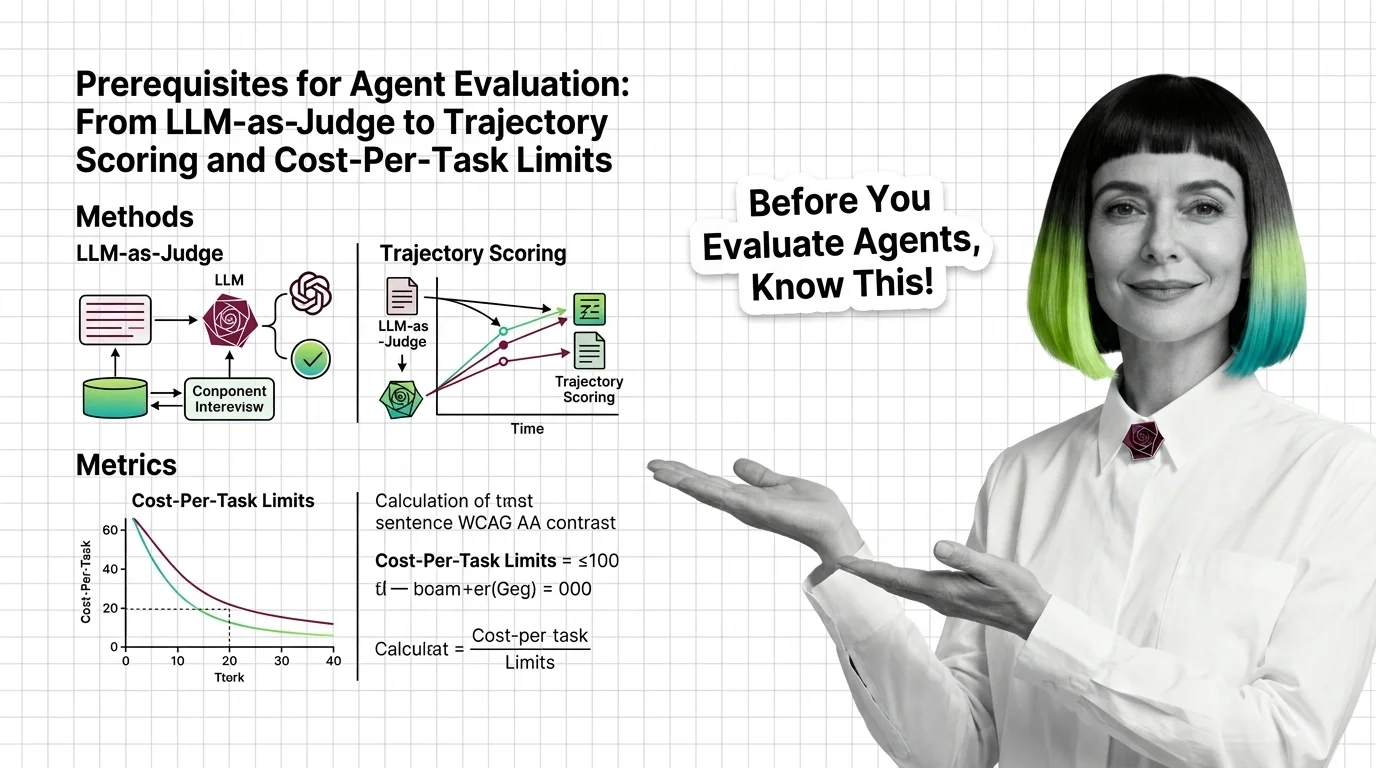

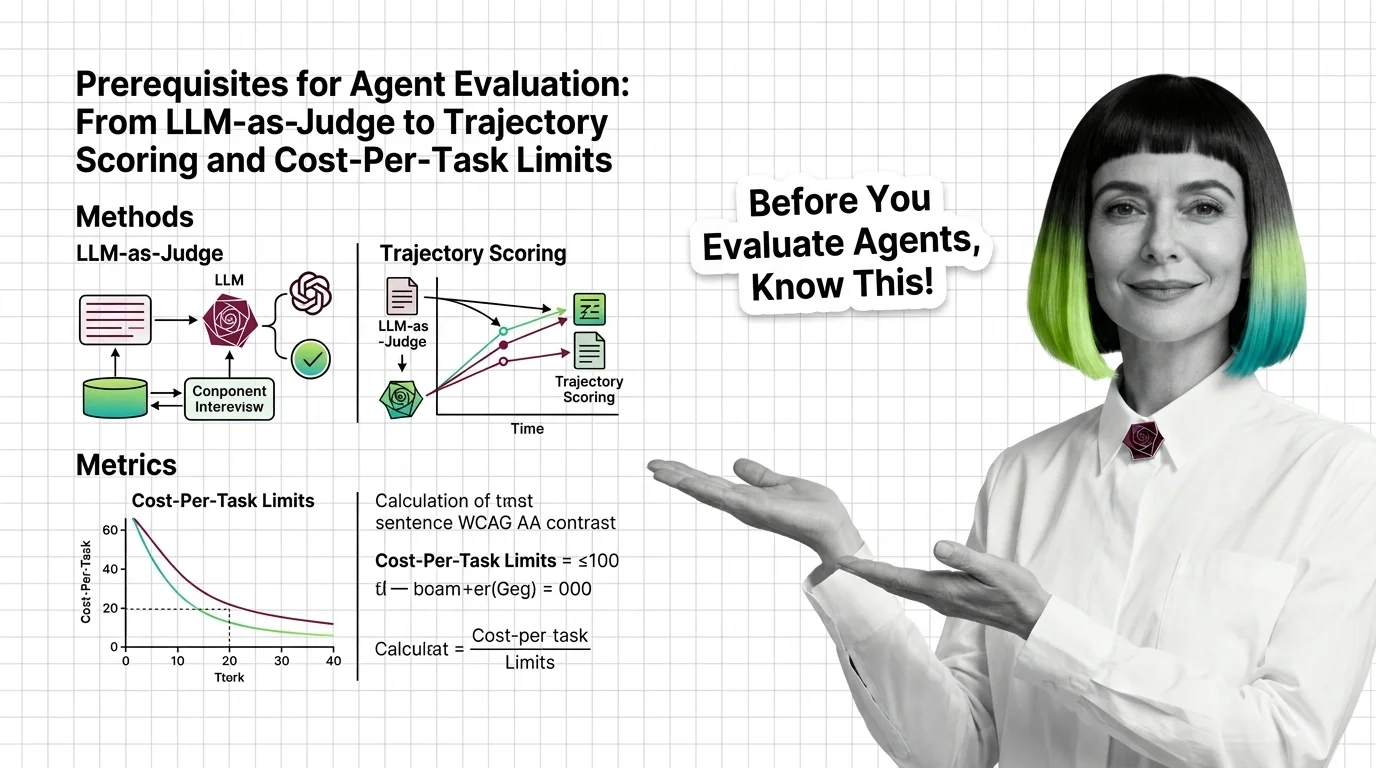

Agent evaluation needs three signals: outcome, trajectory, cost. Learn why LLM-as-judge has known biases and where major benchmarks quietly break.

This theme covers production concerns for AI agents, including guardrails, error handling, observability, cost optimization, and human oversight.

Each topic below is a key concept in this domain. Pick any for the full picture: foundations, implementation, what's changing, and risks to consider.

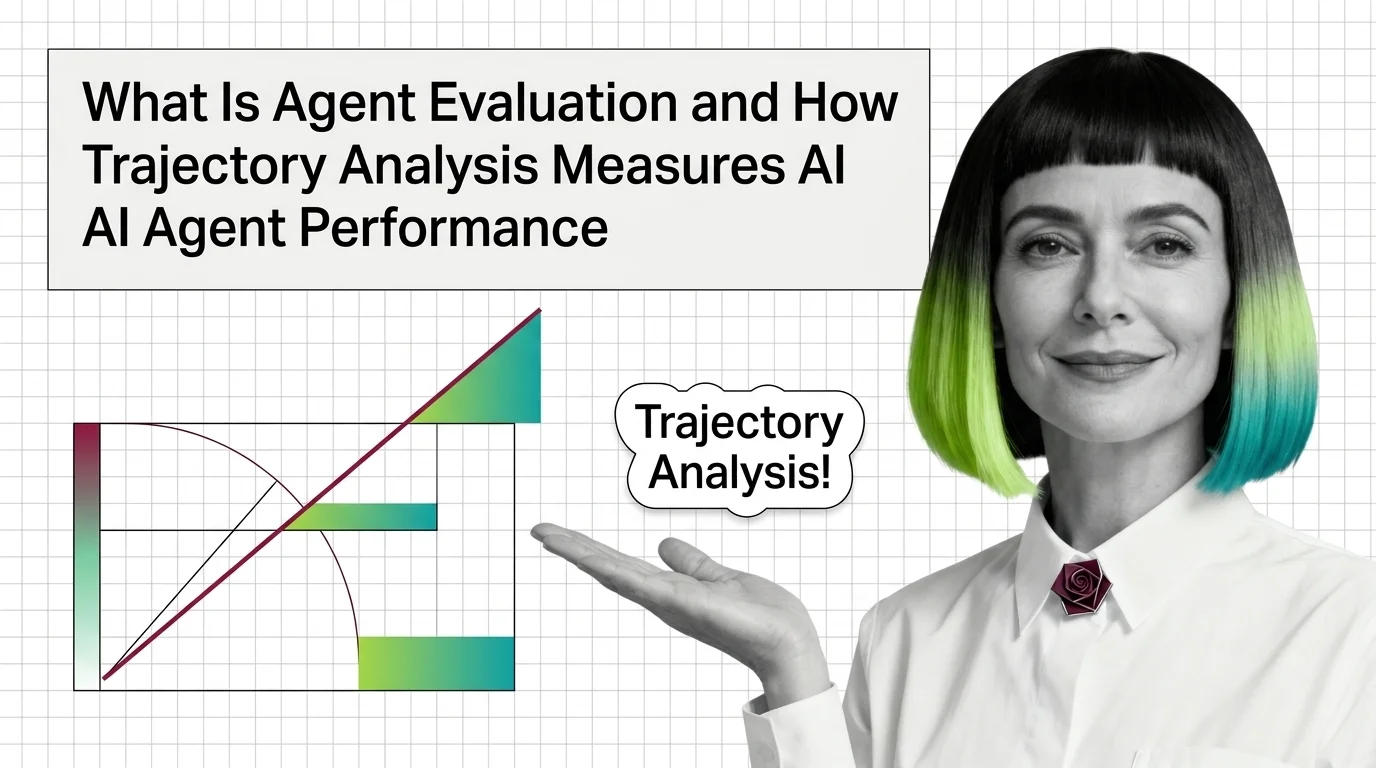

Agent evaluation and testing is how teams measure whether an AI agent actually does its job. It looks beyond a single …

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Updated May 8, 2026

Concepts covered

Agent evaluation grades the path, not just the final answer. Learn how trajectory analysis exposes silent reasoning failures in production AI agents.