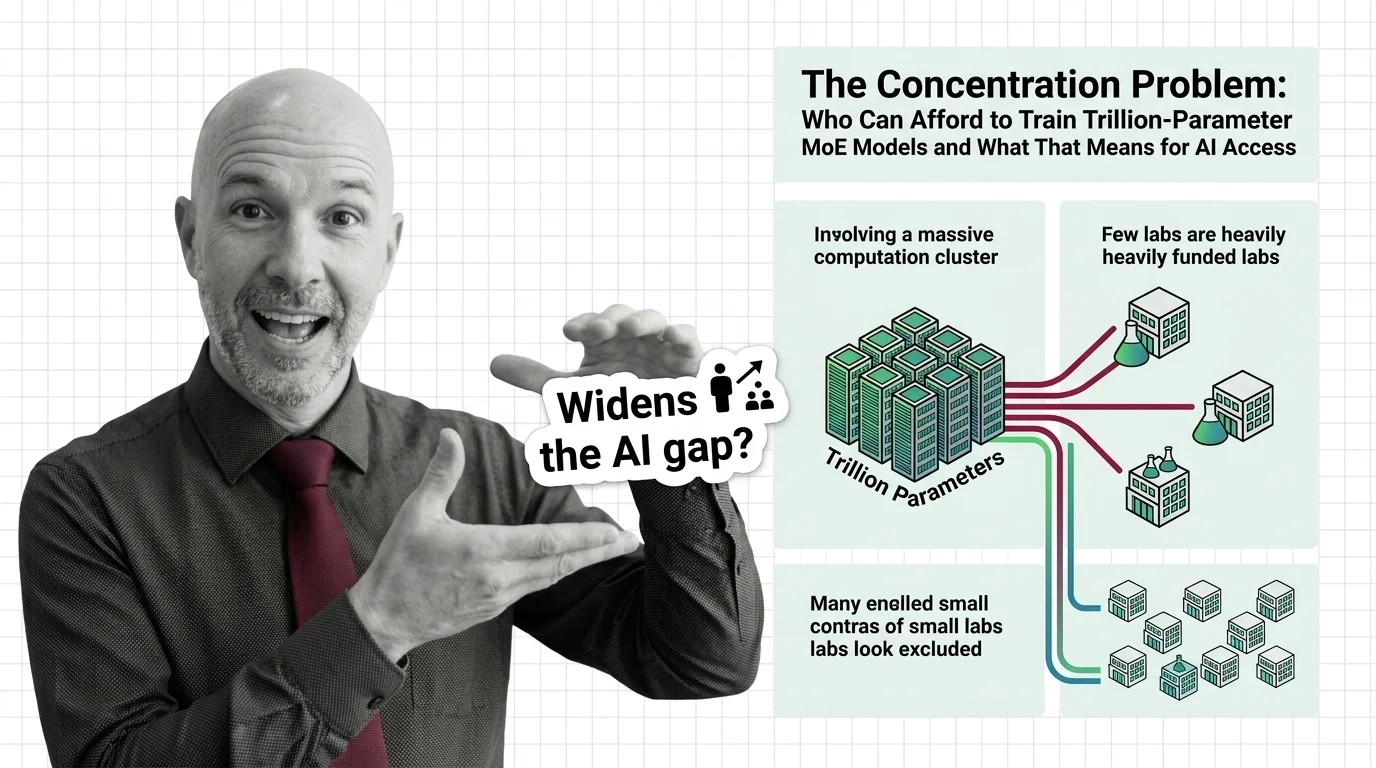

The Concentration Problem: Who Can Afford to Train Trillion-Parameter MoE Models and What That Means for AI Access

The Hard Truth

What if the architecture designed to make AI more efficient is simultaneously making it less accessible? Sparse activation saves compute at inference, but the training bill has never been higher. The question is not whether MoE works. The question is who gets to build it.

There is a peculiar irony at the heart of the Mixture of Experts (MoE) architecture. The entire premise is efficiency: activate only the experts you need, leave the rest idle, save compute at scale. But building the system that makes this possible requires resources that most organizations on Earth cannot assemble. The efficiency arrives after the gate has already closed. And the gate, it turns out, is measured in billions of dollars, millions of GPU hours, and energy budgets that rival those of small nations.

The Price Tag Nobody Publishes

The numbers that do surface tell a story of acceleration. DeepSeek-V3 (671 billion total parameters, 37 billion active per token, 256 routed experts) cost $5.576 million for its final training run (DeepSeek Technical Report). That figure covers only the final run: the report explicitly excludes prior research and ablation experiments, so the full development cost, including failed experiments, is necessarily higher. Moonshot AI’s Kimi K2, a trillion-parameter model with 32 billion active parameters, has a reported training cost of approximately $4.6 million, though this figure remains independently unverified (HPCwire). OpenAI has confirmed an MoE architecture for GPT-5 but has not disclosed parameter counts or training budgets (OpenAI).
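To make the $5.576 million figure concrete, here is a minimal sketch of the arithmetic behind it. The GPU-hour breakdown and the $2 per GPU-hour rental price are taken from the DeepSeek-V3 technical report rather than from this article, and the function name is purely illustrative. The point is that the headline number is a rental-price estimate for one run, not an accounting of hardware, staff, or earlier experiments.

```python
# GPU-hour figures as stated in the DeepSeek-V3 technical report
# (an outside source; these numbers do not appear in this article).
GPU_HOURS = {
    "pre_training": 2_664_000,
    "context_extension": 119_000,
    "post_training": 5_000,
}

# The report's assumed rental price for one H800 GPU-hour, in USD.
RENTAL_USD_PER_GPU_HOUR = 2.0


def training_run_cost(gpu_hours, usd_per_gpu_hour):
    """Rental-price estimate for a single run: total GPU-hours times hourly price."""
    return sum(gpu_hours.values()) * usd_per_gpu_hour


total_hours = sum(GPU_HOURS.values())
cost = training_run_cost(GPU_HOURS, RENTAL_USD_PER_GPU_HOUR)
print(f"{total_hours:,} GPU-hours -> ${cost:,.0f}")
```

Anything not priced per GPU-hour (the cluster itself, data, salaries, abandoned runs) sits outside this calculation, which is why the figure understates the cost of being able to run it at all.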

These are the numbers that get released. The deeper question, what the infrastructure itself cost, rarely appears in the same announcement. The xAI Colossus cluster in Memphis, which trained Grok-3, cost approximately $4 billion (Epoch AI). Training costs have grown roughly 2.4 times per year since 2016, and projections suggest individual training runs will exceed $1 billion by 2027 (Epoch AI). Follow this curve far enough and a pattern emerges: the ability to train a frontier model is becoming a privilege held by fewer organizations each year.
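A short sketch shows what a 2.4x annual growth rate implies. The growth factor is the one cited above; the $100 million starting cost is a hypothetical placeholder chosen only to illustrate the compounding, not a reported figure for any model.

```python
import math

ANNUAL_GROWTH = 2.4  # growth factor per year, as cited in the article (Epoch AI)


def projected_cost(cost_now, years, growth=ANNUAL_GROWTH):
    """Cost after `years` of compounding at `growth` per year."""
    return cost_now * growth ** years


def years_to_reach(cost_now, target, growth=ANNUAL_GROWTH):
    """Years of compounding needed for cost_now to reach target."""
    return math.log(target / cost_now) / math.log(growth)


# Hypothetical starting point, for illustration only.
HYPOTHETICAL_START_USD = 100e6

years = years_to_reach(HYPOTHETICAL_START_USD, 1e9)
print(f"Years for a hypothetical $100M run to reach $1B: {years:.2f}")
print(f"Same run after 5 years: ${projected_cost(HYPOTHETICAL_START_USD, 5):,.0f}")
```

At this rate a tenfold increase takes well under three years, so any organization that cannot grow its budget at a comparable pace falls behind the frontier quickly.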

The cost figures are not just large. They are growing faster than the revenue models that are supposed to justify them. And the organizations absorbing these costs are not doing it out of curiosity.

The Efficiency Counterargument

The conventional defense deserves its strongest form. Sparse activation is genuinely elegant: a gating mechanism routes each input token to a small subset of experts via top-k routing, and inference compute scales with active parameters rather than the total count. A 671-billion-parameter model activating only 37 billion per token delivers frontier-quality responses at a fraction of the per-token compute a dense equivalent would demand. Meta’s Llama 4 Maverick (400 billion total parameters, 17 billion active, 128 experts) is open-weight (Hugging Face Blog). So is DeepSeek-V3. And Kimi K2.
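The routing idea can be sketched in a few lines of plain Python. This is one common variant (pick the k highest router scores, then renormalize with a softmax over just those k), not the routing scheme of any specific model named here; production systems differ in how they score and normalize. The toy experts, logits, and function names are all assumptions made for illustration.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    chosen = order[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, experts, router_logits, k):
    """Run only the k selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy experts: each just scales its input. Only 2 of the 4 are ever called.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, -1.0, 2.0]

print(top_k_route(logits, k=2))
print(moe_forward(1.0, experts, logits, k=2))

# Share of parameters active per token for the 671B / 37B model in the text.
print(f"active fraction: {37 / 671:.1%}")
```

The unselected experts are never evaluated, which is where the inference saving comes from. Note that all experts still have to exist, and be trained, for the router to have something to choose between.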

This matters. Open weights mean that research labs, universities, and smaller companies can fine-tune and run these models without retraining from scratch. The inference efficiency lowers the compute barrier at the point of use. Access to the finished product has genuinely expanded.

Thoughtful defenders of the current trajectory would argue that this is how technology has always worked. The first computers filled rooms and cost millions. Now they sit in pockets. The concentration of training capability is temporary, the reasoning goes, and the benefits cascade downward through open releases, APIs, and distillation techniques. Give it time. The market will sort it out.

The Hidden Denominator

The flaw in this reasoning is not in what it claims but in what it assumes. It assumes that access to a pre-trained model is equivalent to access to the capability of building one. These are not the same kind of power, and conflating them obscures the structural dynamic at work.

Load-balancing loss, the auxiliary objective that prevents expert collapse during training, reveals something important about the nature of this problem. Training an MoE model requires solving a coordination problem across thousands of accelerators and managing expert parallelism at scales where communication overhead alone can consume a significant fraction of the budget. This is not merely expensive in the way that buying a larger GPU cluster is expensive. It is a qualitatively different engineering challenge, one that demands institutional knowledge, custom infrastructure, and the kind of iterative experimentation that only sustained capital investment can support.
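For readers unfamiliar with the term, the following is a minimal sketch of one well-known form of load-balancing loss, the auxiliary term from the Switch Transformer paper: the number of experts times the sum, over experts, of the fraction of tokens routed to each expert multiplied by the mean router probability for that expert. The article does not say which formulation any particular model uses, so treat this as an illustrative assumption; the `alpha` coefficient and the toy inputs are likewise placeholders.

```python
def load_balancing_loss(router_probs, alpha=0.01):
    """Switch-Transformer-style auxiliary loss (illustrative sketch).

    router_probs: one list per token, each a probability distribution over experts.
    alpha: scaling coefficient for the auxiliary term (illustrative default).
    """
    num_tokens = len(router_probs)
    num_experts = len(router_probs[0])

    # f[i]: fraction of tokens whose top-1 expert is i.
    counts = [0] * num_experts
    for probs in router_probs:
        top_expert = max(range(num_experts), key=lambda i: probs[i])
        counts[top_expert] += 1
    f = [c / num_tokens for c in counts]

    # p[i]: mean router probability assigned to expert i across tokens.
    p = [sum(probs[i] for probs in router_probs) / num_tokens
         for i in range(num_experts)]

    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p))


balanced = [[0.9, 0.1], [0.1, 0.9]]   # tokens split evenly across two experts
collapsed = [[0.9, 0.1], [0.9, 0.1]]  # every token goes to expert 0

print(load_balancing_loss(balanced))
print(load_balancing_loss(collapsed))
```

The loss is lowest when tokens spread evenly and rises as routing collapses onto a few experts. Writing the formula is the easy part; tuning it so that thousands of accelerators stay evenly loaded without hurting model quality is where the experimentation budget goes.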

The assumption that open weights dissolve the concentration problem confuses the ability to use a tool with the power to shape it. When you fine-tune a pre-trained model, you work within the expert boundaries, routing logic, and data representation that someone else chose. You inherit architectural decisions you cannot inspect, let alone alter. The base model determines the structure of knowledge that every downstream application encodes.

Everyone downstream is rearranging furniture in a house they did not build.

When Carbon Becomes a Question of Justice

The environmental dimension makes the concentration problem harder to set aside. Training GPT-3, now modest by current standards, consumed 1,287 MWh of electricity and produced approximately 552 tons of CO2 (MIT News). Current frontier MoE models are orders of magnitude larger. By 2026, data center electricity consumption is projected to reach approximately 1,050 TWh, enough to rank data centers fifth globally if they were a nation, between Japan and Russia (MIT News). Cooling alone demands roughly 2 liters of water per kilowatt-hour. The majority of new data center electricity demand is met by fossil fuels, adding hundreds of millions of tons of CO2 each year.
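The GPT-3 figures above can be combined into per-unit numbers with some simple arithmetic. This is a back-of-the-envelope sketch: it assumes "tons" means metric tons, and it applies the article's rough 2 liters per kilowatt-hour cooling figure to the GPT-3 training energy purely as an illustration, not as a measured water footprint for that run.

```python
# Figures cited in the article (MIT News).
GPT3_ENERGY_MWH = 1_287
GPT3_CO2_TONS = 552            # assumed here to be metric tons
WATER_LITERS_PER_KWH = 2.0     # the article's rough cooling figure

KWH_PER_MWH = 1_000
KG_PER_TON = 1_000

energy_kwh = GPT3_ENERGY_MWH * KWH_PER_MWH

# Implied carbon intensity of the electricity used, in kg CO2 per kWh.
carbon_intensity = GPT3_CO2_TONS * KG_PER_TON / energy_kwh

# Illustrative cooling water if the 2 L/kWh figure applied to this run.
cooling_water_liters = energy_kwh * WATER_LITERS_PER_KWH

print(f"implied carbon intensity: {carbon_intensity:.3f} kg CO2/kWh")
print(f"illustrative cooling water: {cooling_water_liters:,.0f} liters")
```

Both outputs scale linearly with energy use, so the local burden grows in step with whatever multiple of GPT-3's energy a larger training run consumes.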

These costs are not distributed equally. The organizations that train the models capture the economic value. The communities near data centers absorb the water stress, the grid strain, the heat and noise and emissions. The environmental burden is externalized by design — because the economic logic treats local impact as someone else’s problem, an externality to be managed rather than a cost to be borne.

The question of who can afford to train trillion-parameter MoE models is inseparable from the question of who bears the cost when they do.

Access Is Architecture

The concentration of MoE training capability is not a temporary market condition but a structural feature of an architecture that separates those who define AI systems from those who merely consume them.

This distinction matters because it determines authorship over the assumptions embedded in models that increasingly mediate how we work, learn, and make decisions. When a handful of organizations control the base models that everyone else fine-tunes, the diversity of perspective in AI systems is bounded by the diversity of perspective within those organizations. That is not a conspiracy. It is a consequence of architecture meeting economics, of technical design producing political outcomes that nobody explicitly chose.

Open-weight releases are valuable. They are also insufficient. They distribute the output of concentration without disrupting the concentration itself. The decisions that matter most — which expert structures to use, what data to include, what to exclude, how to weight competing objectives — remain with those who can afford the next training run.

The Questions We Owe Ourselves

The path forward is not to oppose MoE architectures or the principle of sparse activation. These are real engineering advances that make inference more accessible and more efficient. But we owe ourselves honesty about what they do not solve.

Does efficiency at inference justify concentration at training? Does access to a model’s outputs constitute meaningful access to the technology itself? Who audits the architectural choices embedded in base models that downstream users cannot see or change?

These questions do not have clean answers. But refusing to ask them — celebrating the efficiency gains while looking away from the power dynamics they enable — amounts to choosing comfort over clarity.

Where This Argument Is Weakest

This thesis is most vulnerable to the state space model trajectory. If architectures like Mamba and its successors achieve frontier performance at substantially lower training costs, the concentration dynamic could weaken or reverse. The argument also underestimates the possibility that distributed training techniques, such as federated approaches and collaborative pre-training across institutions, may mature faster than current trends suggest.

If training costs plateau or decline, the structural advantage of incumbents erodes. The thesis depends on costs continuing to rise. If they don’t, the concentration becomes temporary — and the conventional wisdom turns out to be right.

The Question That Remains

We built an architecture that makes AI inference efficient by design and AI training exclusive by consequence. The models are open. The power to shape them is not. Whether that changes depends on choices we have not yet made — and on whether we recognize them as choices at all.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.