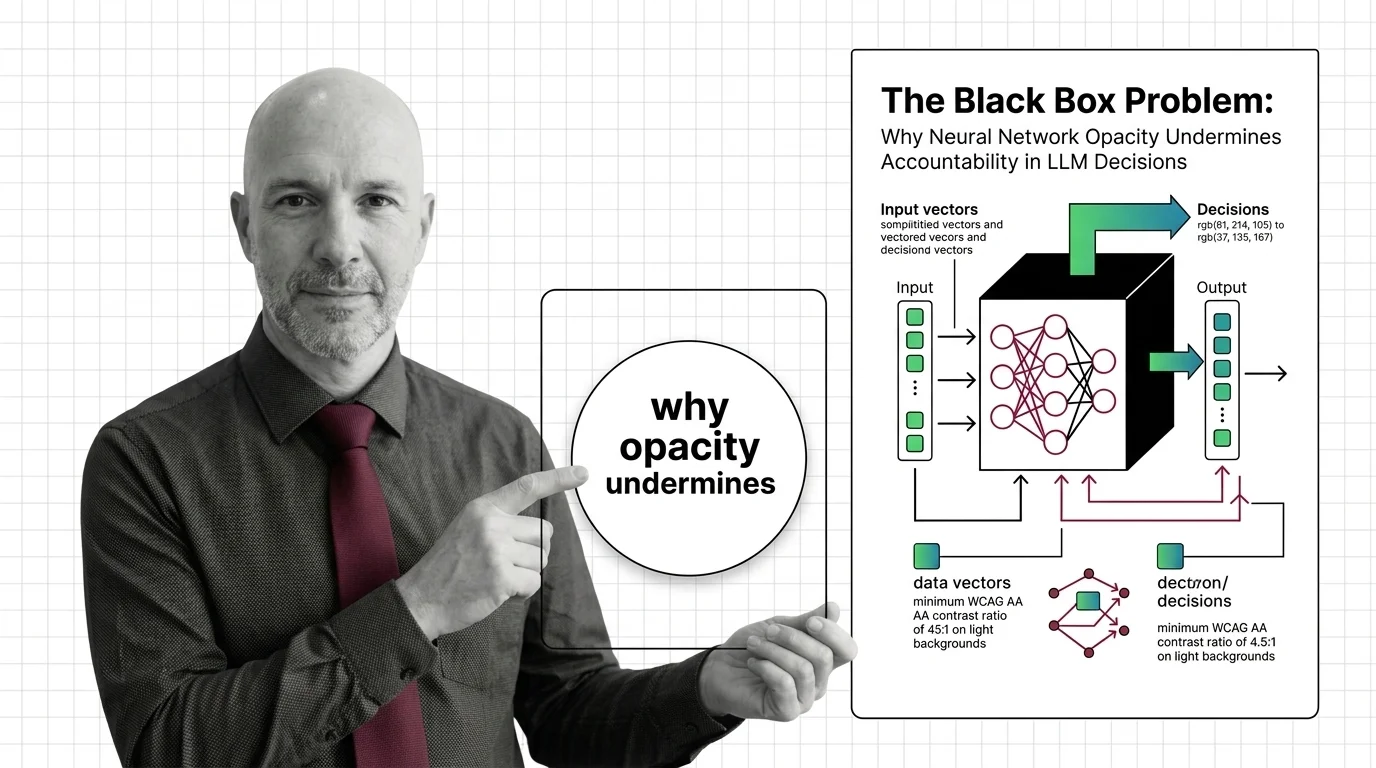

The Black Box Problem: Why Neural Network Opacity Undermines Accountability in LLM Decisions


The Hard Truth

A patient is triaged. A loan is denied. A fraud flag is triggered. The system performed well on every benchmark. It cannot tell you why it made any of these decisions — and neither can the people who built it. At what point does “it works” stop being a sufficient answer?

We have grown remarkably comfortable with systems that make consequential decisions while offering no account of their reasoning. This is not because the explanation is withheld, but because the architecture does not produce one. The neural networks driving today’s LLMs are opaque by structure, not by policy. And the distance between “accurate” and “accountable” is wider than most institutions admit.

The Silence at the Center of the Decision

When a neural network denies a mortgage application or flags a patient’s scan as low-priority, the output is a number. A probability. A ranked list. What it is not, and structurally cannot be, is a reason. Billions of parameters were tuned through backpropagation and gradient descent to minimize a loss function such as cross-entropy loss. Human legibility was never part of the objective. Nobody designed the network to hide its reasoning. The reasoning was never legible to begin with.
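To make the point concrete, here is a minimal sketch, assuming a toy one-layer logistic model with two input features and made-up training data (all names and values are hypothetical, not drawn from any real lending system). It trains with plain stochastic gradient descent on cross-entropy loss. With a single layer, backpropagation reduces to one chain-rule step. The only thing the trained model can hand back is a probability.

```python
import math

def sigmoid(z: float) -> float:
    """Squash a raw score (logit) into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """The model's entire output: one probability, with no reason attached."""
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)

def cross_entropy(p: float, y: int) -> float:
    """Binary cross-entropy for one example; eps guards against log(0)."""
    eps = 1e-12
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))

def mean_loss(w, b, data):
    """Average cross-entropy over a dataset."""
    return sum(cross_entropy(predict(w, b, x), y) for x, y in data) / len(data)

def train(data, lr=0.5, epochs=500):
    """Plain stochastic gradient descent on cross-entropy loss.

    With a single layer, backpropagation is one chain-rule step:
    the gradient of the loss with respect to the logit is (p - y).
    """
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            g = predict(w, b, x) - y
            w[0] -= lr * g * x[0]
            w[1] -= lr * g * x[1]
            b -= lr * g
    return w, b

# Hypothetical toy data: (feature_1, feature_2) -> label (1 = approve).
data = [((0.2, 0.9), 0), ((0.4, 0.8), 0), ((0.8, 0.3), 1), ((0.9, 0.1), 1)]

print("loss before training:", round(mean_loss([0.0, 0.0], 0.0, data), 4))
w, b = train(data)
print("loss after training: ", round(mean_loss(w, b, data), 4))

applicant = (0.7, 0.4)  # a new, hypothetical case
score = predict(w, b, applicant)
print(f"approval probability: {score:.3f}")
print("learned parameters:", [round(v, 3) for v in w], round(b, 3))
```

Every quantity this model exposes is a float. With two weights, a reader can still guess what each one does. The same procedure applied to billions of parameters across many nonlinear layers leaves no such foothold.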

This matters most where the stakes are highest. In healthcare, responsibility for the failures of opaque AI systems is shared among clinicians, developers, and regulators, yet no consensus exists on how to attribute it (PMC Study). In finance, the lack of transparency complicates regulatory compliance in risk assessment, fraud detection, and investment decisions (Finance Watch). The people affected have no mechanism to contest what they cannot see, and the institutions making these decisions often cannot reconstruct the logic themselves.

The uncomfortable truth is not that neural networks are too complex for explanation. It is that we built accountability out of the architecture before we considered whether we could afford to lose it.

The Honest Case for Opacity

A fair examination of this problem must start with why neural networks are opaque in the first place, and the answer is not negligence. The distributed representation that makes these systems powerful is the same property that makes them illegible. Each activation function in each layer transforms its inputs in a way that is mathematically precise but semantically opaque. An optimizer such as Adam, navigating a loss surface with billions of dimensions, finds solutions no human would design, precisely because it follows the gradient of the loss rather than human-readable logic.

The capability IS the opacity. Engineers working in frameworks like PyTorch know this implicitly: the power of deep learning comes from representational freedom, from allowing the model to find structure humans would never anticipate. Problems like the vanishing gradient were addressed not by making networks more transparent, but by techniques such as residual connections and normalization layers. Those techniques let networks grow deeper and learn richer distributed features.

Anyone who dismisses this trade-off is not being honest about the engineering. But anyone who accepts it without examination is not being honest about the consequences.

The Assumption We Embedded and Forgot

Here is where the conventional wisdom fractures. The implicit bargain — accuracy in exchange for opacity — rested on an assumption so obvious that it disappeared from view: that consequential decisions do not require explanation, only correctness. As long as the system performs well on aggregate metrics, the absence of per-decision reasoning is acceptable.

That assumption works where the cost of individual error is low. Recommending a song. Ranking a search result. But the EU AI Act, whose transparency rules for high-risk systems apply from August 2026, encodes a different principle entirely. Article 13 requires high-risk AI systems to be “sufficiently transparent to enable deployers to interpret a system’s output and use it appropriately.” Article 27 requires certain deployers, including public bodies and those using AI for credit scoring, to carry out a fundamental rights impact assessment before putting such systems into use (EU AI Act). The NIST AI Risk Management Framework identifies explainability, interpretability, and accountability as core characteristics of trustworthy AI (NIST).

These are not technical guidelines. They are political statements about what societies believe people are owed when a machine makes a decision about their life. The assumption that accuracy is enough is being formally rejected — but the technology was designed under that assumption, and closing the gap is not a software update.

What Institutional Opacity Taught Us Before

The tension between power and legibility is not new. Administrative law spent centuries wrestling with the same problem in bureaucratic institutions — organizations that made consequential decisions through processes too complex for any individual to fully reconstruct. Kafka’s The Trial endures as literature because it captures something real: the experience of being subject to a system that has authority over your life but cannot explain its reasoning.

The response, over generations, was not to simplify institutions but to demand that they produce accounts. Due process, freedom of information, judicial review — these mechanisms forced institutional power to become legible. The principle was not that every decision must be simple, but that every consequential decision must be contestable.

Neural networks have no analogue to due process. A person affected by an LLM’s output cannot demand an account of the reasoning — not because the institution refuses, but because the architecture cannot produce one. We are building systems with more authority over individual lives than most bureaucracies, and fewer accountability mechanisms than a local planning office.

Accountability Without Explanation Is an Institutional Contradiction

Thesis: The black box problem is not a temporary engineering limitation — it is a structural incompatibility between how neural networks represent knowledge and what democratic accountability requires.

This is not an argument against neural networks but against institutional frameworks that treat opacity as an acceptable externality. When a hospital uses an LLM to triage patients, the relevant question is not whether the model is accurate on average. It is whether any specific patient, denied timely care, has a path to understanding why — and whether any specific clinician can reconstruct the reasoning well enough to take responsibility for it. The answer, today, is no. And the institutions adopting these systems are absorbing that “no” into their operations without fully reckoning with what it means for the people on the other side of the decision.

Questions Worth Sitting With

Suppose accountability requires explanation, explanation requires interpretability, and interpretability is structurally absent from the architectures we are scaling. Then the question is not whether to regulate, but what regulation can demand from systems that were not designed to comply.

Should high-stakes decisions be restricted to architectures that can produce per-decision explanations? Should we accept post-hoc approximations, such as attribution maps and feature importance scores, as “sufficient” transparency when they do not reconstruct the model’s actual reasoning? Should we acknowledge that some decisions are too consequential for any system that cannot explain itself?
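Attribution scores show the gap between approximation and reasoning. The minimal sketch below uses gradient × input, one common attribution technique. It is chosen here as an assumption, since the article names no specific method. The sketch applies it to a tiny hypothetical two-layer network with made-up weights. The scores describe the local slope of the output around one input. For a nonlinear model they need not add up to the change in output relative to a zero baseline, and they say nothing about which internal computation produced the score.

```python
import math

# Hypothetical fixed weights for a tiny 2-input, 2-hidden-unit network.
W1 = [[1.5, -2.0], [-1.0, 2.5]]  # one row per hidden unit
B1 = [0.1, -0.3]
W2 = [2.0, -1.5]
B2 = 0.2

def model(x):
    """tanh hidden layer followed by a sigmoid output probability."""
    hidden = [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(W1, B1)
    ]
    z = sum(w * h for w, h in zip(W2, hidden)) + B2
    return 1.0 / (1.0 + math.exp(-z))

def grad_times_input(f, x, h=1e-5):
    """Gradient x input attribution, with gradients from central differences."""
    attributions = []
    for i in range(len(x)):
        up = list(x)
        up[i] += h
        down = list(x)
        down[i] -= h
        grad_i = (f(up) - f(down)) / (2 * h)
        attributions.append(grad_i * x[i])
    return attributions

x = [0.8, 0.3]
baseline = [0.0, 0.0]
attr = grad_times_input(model, x)

print("attributions:", [round(a, 4) for a in attr])
print("sum of attributions:", round(sum(attr), 4))
print("actual change from baseline:", round(model(x) - model(baseline), 4))
```

The signs show which input pushed the score up or down at this one point. They do not show which hidden units mattered or why, and their sum does not match the real change in output. This is the sense in which such scores are approximations rather than reconstructions.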

These are not technical questions. They are questions about what we believe people deserve when power is exercised over their lives.

Where This Argument Fractures

Interpretability research is making real progress, and intellectual honesty demands acknowledging it. Anthropic’s circuit tracing work applied attribution graphs and cross-layer transcoders to Claude 3.5 Haiku, surfacing mechanisms behind hallucination and jailbreak resistance (Anthropic Circuits). Sparse autoencoder experiments on smaller models have extracted thousands of features, with a significant majority mapping to single human-interpretable concepts.

But these results come from smaller models, and scaling them to frontier systems remains unproven. A collaborative paper by twenty-nine researchers across eighteen organizations found that core concepts like “feature” still lack rigorous definitions in the field (MI Community Paper). The science of making neural networks legible is promising but not ready. The decisions being made by opaque systems are happening now, not in a future where interpretability has matured.

If mechanistic interpretability succeeds at frontier scale, this argument weakens. That possibility is real. But building institutional policy around a hope is different from building it around a capability.

The Question That Remains

We spent centuries insisting that institutional power must explain itself — not because institutions are simple, but because the alternative is authority without recourse. Neural networks are now exercising that kind of authority in healthcare, finance, and criminal justice. The question is not whether they are too opaque for high-stakes decisions. The question is whether we are willing to demand from our most powerful tools what we have always demanded from our most powerful institutions: an account of their reasoning, offered to the people whose lives depend on it.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.