T5Gemma 2 and the Encoder-Decoder Revival: Why Google Doubled Down While Others Went Decoder-Only


TL;DR

- The shift: Google shipped two encoder-decoder model families in five months while every other major lab stayed decoder-only — a deliberate architectural divergence.

- Why it matters: Encoder-decoder models show measurable advantages in latency, throughput, and long-context performance that decoder-only cannot match at equivalent size.

- What’s next: Production workloads — not research papers — will decide which topology owns which market segment.

Everyone agreed the architecture war was over. Decoder-only won. GPT-4, Claude, Gemini, Llama — the entire frontier stack runs on the same topology.

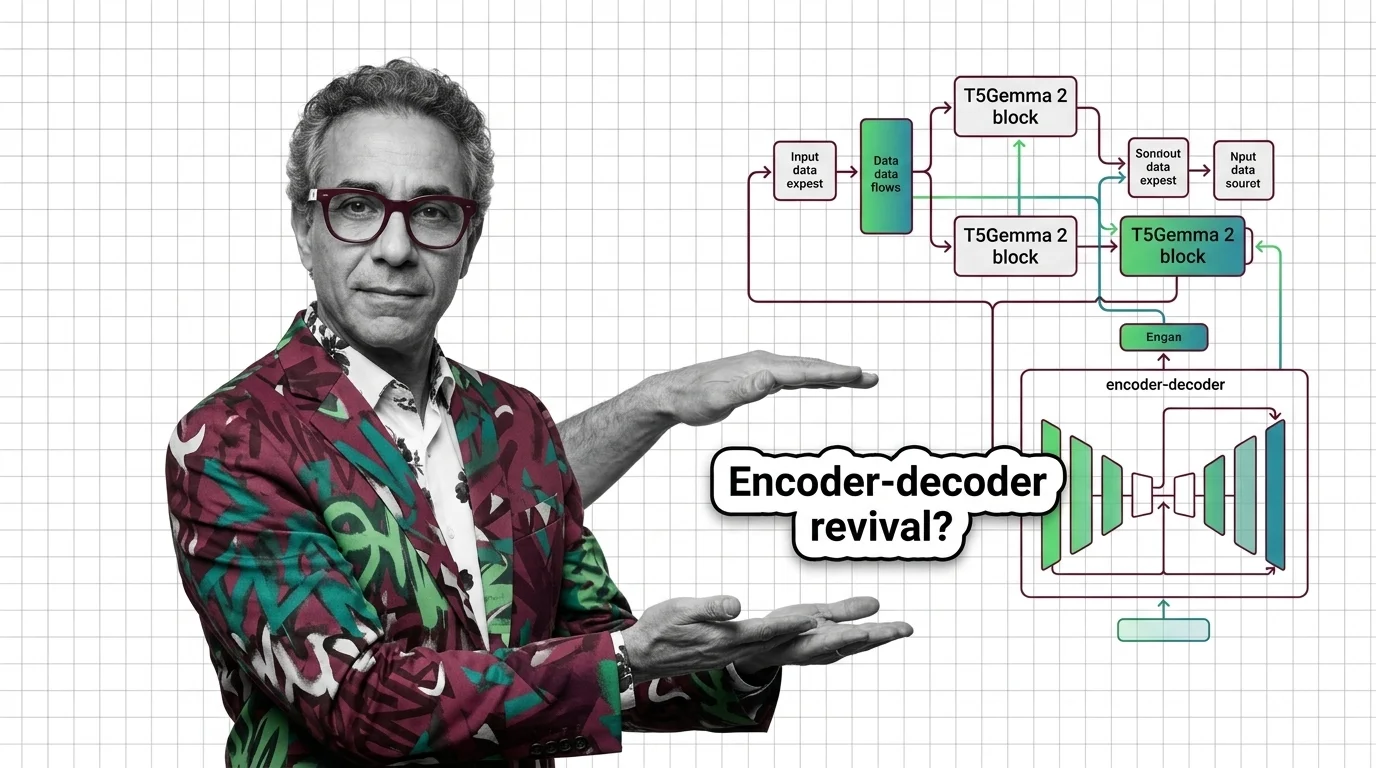

Then Google shipped two encoder-decoder model families in five months and made the rest of the industry look like it had forgotten half of the Transformer architecture.

The Architecture Race Just Split in Two

Thesis: Google is making a calculated bet that encoder-decoder models own the efficiency frontier — and the data is starting to back them up.

The T5 lineage was supposed to be a closed chapter. By 2024 every major lab had converged on decoder-only designs. Simpler to scale. Easier to train. One stack, one direction.

Google broke that consensus. T5Gemma launched in July 2025, converting Gemma 2 decoder-only weights into an encoder-decoder format (Google Developers Blog). Five months later, on December 18, 2025, T5Gemma 2 arrived, now built on Gemma 3, adding multimodal input and a 128K-token context window with up to 32K-token output (Google Blog).

Two releases in rapid succession. That’s not experimentation. That’s a roadmap.

The Efficiency Gap Nobody Expected

T5Gemma 2’s 1B-1B variant — roughly 1.7 billion parameters total — trails Gemma 3 4B by only 8.7 points on multimodal tasks and 6.9 points on long-context benchmarks (Zhang et al.). Less than half the size, single-digit gaps. On coding, reasoning, and multilingual tasks, encoder-decoder surpasses decoder-only at equivalent parameter counts.

The long-context result is the real signal. T5Gemma 2 was pretrained on 16K-token sequences but performs well up to 128K — the encoder-decoder split gives the architecture a structural advantage for long inputs (Zhang et al.).
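To make the "split" concrete, here is a minimal sketch of the attention patterns in a textbook encoder-decoder Transformer next to a standard causal decoder-only model. It is an illustration only: the function names are made up for this example, and T5Gemma 2's actual attention layout may differ from the textbook version shown here.

```python
def causal_mask(n):
    """Lower-triangular mask: query position i may attend to keys 0..i only."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def full_mask(rows, cols):
    """All-ones mask: every query position may attend to every key position."""
    return [[1] * cols for _ in range(rows)]


def decoder_only_masks(n_in, n_out):
    # A standard decoder-only model runs one causal self-attention
    # over the concatenated input + output sequence.
    return {"self": causal_mask(n_in + n_out)}


def encoder_decoder_masks(n_in, n_out):
    # Textbook encoder-decoder layout (an assumption, not T5Gemma 2's code).
    return {
        "encoder_self": full_mask(n_in, n_in),  # bidirectional over the input
        "decoder_self": causal_mask(n_out),     # causal over the output only
        "cross": full_mask(n_out, n_in),        # each output token sees all input
    }


# Tiny example: 3 input tokens, 2 output tokens.
for name, mask in encoder_decoder_masks(3, 2).items():
    print(name, mask)
print("decoder_only_self", decoder_only_masks(3, 2)["self"])
```

In the decoder-only layout the first input token cannot attend to anything that follows it, while in the encoder every input token sees the whole input. Whether that difference accounts for the long-context result is the paper's claim, not something this sketch demonstrates.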

The RedLLM study from Google DeepMind sharpens the efficiency case: encoder-decoder delivers 47% lower first-token latency and 4.7x throughput on edge hardware compared to decoder-only at equivalent quality (Zhang et al., RedLLM).
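For readers who want to check figures like these on their own hardware, the two metrics are easy to pin down. The sketch below is a hypothetical harness, not the methodology of the RedLLM study: `measure_stream` and `compare` are names invented for this example, and the stream can be any iterator that yields generated tokens one at a time.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_stream(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float]:
    """Return (first_token_latency_s, tokens_per_second) for one token stream."""
    start = clock()
    first_latency = None
    count = 0
    for _ in stream:
        if first_latency is None:
            # Time from request start until the first token arrives.
            first_latency = clock() - start
        count += 1
    total = clock() - start
    if count == 0:
        raise ValueError("stream produced no tokens")
    throughput = count / total if total > 0 else float("inf")
    return first_latency, throughput


def compare(baseline: Tuple[float, float], candidate: Tuple[float, float]):
    """Return (latency_reduction_fraction, throughput_ratio) vs. the baseline."""
    base_latency, base_tps = baseline
    cand_latency, cand_tps = candidate
    return 1.0 - cand_latency / base_latency, cand_tps / base_tps


def fake_model(n_tokens: int, prefill_s: float, per_token_s: float):
    """Hypothetical stand-in for a real model: sleeps instead of computing."""
    time.sleep(prefill_s)
    for i in range(n_tokens):
        if i:
            time.sleep(per_token_s)
        yield f"tok{i}"


latency, tps = measure_stream(fake_model(5, prefill_s=0.02, per_token_s=0.005))
print(f"first-token latency: {latency:.3f}s, throughput: {tps:.1f} tok/s")
```

A "47% lower first-token latency" claim corresponds to the first value returned by `compare` being about 0.47, and "4.7x throughput" to the second being about 4.7. Swapping `fake_model` for a real streaming generator, and holding prompt, output length, and hardware fixed, is what a third-party replication would need to do.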

One caveat: T5Gemma 2’s post-training used lightweight supervised fine-tuning and distillation — not the full reinforcement learning pipeline applied to Gemma 3. Benchmark comparisons should account for this.

Who Moves Up

Teams building for constrained environments. If you deploy models on mobile or edge hardware where latency defines the user experience, encoder-decoder just became the architecture to benchmark against. That throughput gap is a product category.

Google’s model ecosystem. T5Gemma 2 ships in three sizes — roughly 0.8B to 7B total parameters — trained on 2 trillion tokens, supporting 140+ languages with multimodal input (HuggingFace). A full product line, not a research curiosity.

Speech and translation workloads. OpenAI’s Whisper — the most widely deployed encoder-decoder in production — proved the architecture’s staying power. The large-v3-turbo variant runs 6x faster with accuracy within 1-2% (HuggingFace). Over 4.1 million monthly downloads as of late 2025 (About Chromebooks). Encoder-decoder never left these domains. Now it’s pushing back into general language tasks.

Who Gets Left Behind

Anyone who assumed decoder-only was the only topology worth optimizing for. If your tooling is built exclusively around decoder-only, you have a blind spot. Blind spots compound.

BART and legacy encoder-decoder models. BART has seen no significant updates from Meta. Google’s investment shifted entirely to T5Gemma. If you’re running BART-based pipelines, the upgrade path now leads to a different lab’s ecosystem.

The “one architecture fits all” narrative. Decoder-only’s simplicity argument assumed you’d never need architectural specialization. Google just showed that assumption has a cost — measured in latency, throughput, and parameter efficiency. The monoculture thesis has a crack in it.

What Happens Next

Base case (most likely): Encoder-decoder carves out high-value niches — edge deployment, speech, translation, long-document processing — while decoder-only remains dominant for general-purpose chat and reasoning. The market fragments by workload, not by ideology. Signal to watch: Third-party benchmarks confirming T5Gemma 2’s efficiency claims on non-Google hardware. Timeline: Mid-2026.

Bull case: Other labs release their own encoder-decoder variants, creating a two-topology ecosystem with tooling on both sides. Signal: Meta or Anthropic announcing encoder-decoder research or model releases. Timeline: Late 2026 to early 2027.

Bear case: T5Gemma 2 remains a Google-only bet. Decoder-only scaling closes the efficiency gap through distillation and quantization, and the revival stays confined to one lab. Signal: No major encoder-decoder releases from non-Google labs within twelve months. Timeline: Q1 2027.

Frequently Asked Questions

Q: What is T5Gemma 2 and why did Google release a new encoder-decoder model in late 2025? A: T5Gemma 2 is Google’s second-generation encoder-decoder family, adapted from Gemma 3 decoder-only weights. It adds multimodal input and 128K context, targeting efficiency advantages — lower latency, higher throughput — that decoder-only models struggle to match at equivalent size.

Q: How does OpenAI Whisper use encoder-decoder architecture for speech-to-text transcription? A: Whisper’s encoder processes audio spectrograms into dense representations. The decoder then generates text transcriptions token by token, using beam search at inference and teacher forcing during training. The architecture fits tasks where input and output modalities differ.

Q: Are encoder-decoder models making a comeback against decoder-only architectures in 2026? A: Within Google DeepMind, the revival is real — two model families in five months plus published research showing efficiency gains. Industry-wide, it remains a single-lab bet. Whether it broadens depends on independent validation from other labs.
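The Whisper answer above mentions teacher forcing and beam search without showing either. The following is a toy sketch of both ideas in plain Python. The "model" is a hand-written probability table (`TABLE` and `toy_step` are invented for this example) and has nothing to do with Whisper's real decoder. It only shows the mechanics: teacher forcing scores each target token given the gold prefix, and beam search keeps several partial outputs alive instead of committing to the single best next token.

```python
import math

EOS = "<eos>"

# Hypothetical next-token probabilities, keyed by the prefix generated so far.
TABLE = {
    (): {"a": 0.6, "b": 0.4},
    ("a",): {"x": 0.55, "y": 0.45},
    ("b",): {"z": 0.9, "x": 0.1},
}


def toy_step(prefix):
    """Return log-probabilities of the next token given a prefix."""
    probs = TABLE.get(tuple(prefix), {EOS: 1.0})  # unknown prefixes just end
    return {tok: math.log(p) for tok, p in probs.items()}


def teacher_forced_nll(step, target):
    """Training-style loss: the model is always fed the gold prefix."""
    return -sum(step(tuple(target[:i]))[target[i]] for i in range(len(target)))


def beam_search(step, beam_width, max_len):
    """Keep the beam_width highest-scoring partial sequences at each step."""
    beams = [((), 0.0)]  # (tokens, cumulative log-probability)
    for _ in range(max_len):
        candidates = []
        for tokens, score in beams:
            if tokens and tokens[-1] == EOS:
                candidates.append((tokens, score))  # finished: carry forward
                continue
            for tok, logp in step(tokens).items():
                candidates.append((tokens + (tok,), score + logp))
        beams = sorted(candidates, key=lambda b: b[1], reverse=True)[:beam_width]
        if all(t and t[-1] == EOS for t, _ in beams):
            break
    return beams[0]


greedy_tokens, greedy_score = beam_search(toy_step, beam_width=1, max_len=3)
beam_tokens, beam_score = beam_search(toy_step, beam_width=2, max_len=3)
print("greedy:", greedy_tokens, round(math.exp(greedy_score), 2))
print("beam=2:", beam_tokens, round(math.exp(beam_score), 2))
print("teacher-forced NLL:", teacher_forced_nll(toy_step, ["b", "z", EOS]))
```

With this table, greedy decoding (beam width 1) commits to "a" because it is the likelier first token and ends up with a lower-probability sequence overall, while a beam of 2 keeps "b" alive long enough to find the better continuation.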

The Bottom Line

Google isn’t dabbling. Two encoder-decoder releases in five months, a research paper backing the efficiency thesis, a full product line from sub-billion to 7B parameters. That’s conviction. The attention mechanism underneath hasn’t changed, but how you split the work between encoder and decoder matters more than anyone assumed. You’re either watching this closely or explaining later why you missed it.


AI-assisted content, human-reviewed. Images AI-generated.