Synthetic Faces and Learned Distributions: The Ethical Risks When VAEs Recreate Private Data

The Hard Truth

A hospital trains a generative model on patient records to produce “synthetic” data for research — the outputs look new, but a small fraction are near-identical to real patients. When does “generated” become “remembered,” and who answers for the difference?

We talk about synthetic data as though the word itself confers safety. Synthetic, we assume, means invented — disconnected from the people whose information made the model possible. But a Variational Autoencoder does not invent from nothing. It learns a compressed representation of its training data and samples from that representation to produce outputs that are statistically plausible. The distance between “statistically plausible” and “recognizably real” is not as wide as we would like to believe.

What a Model Learns About a Person

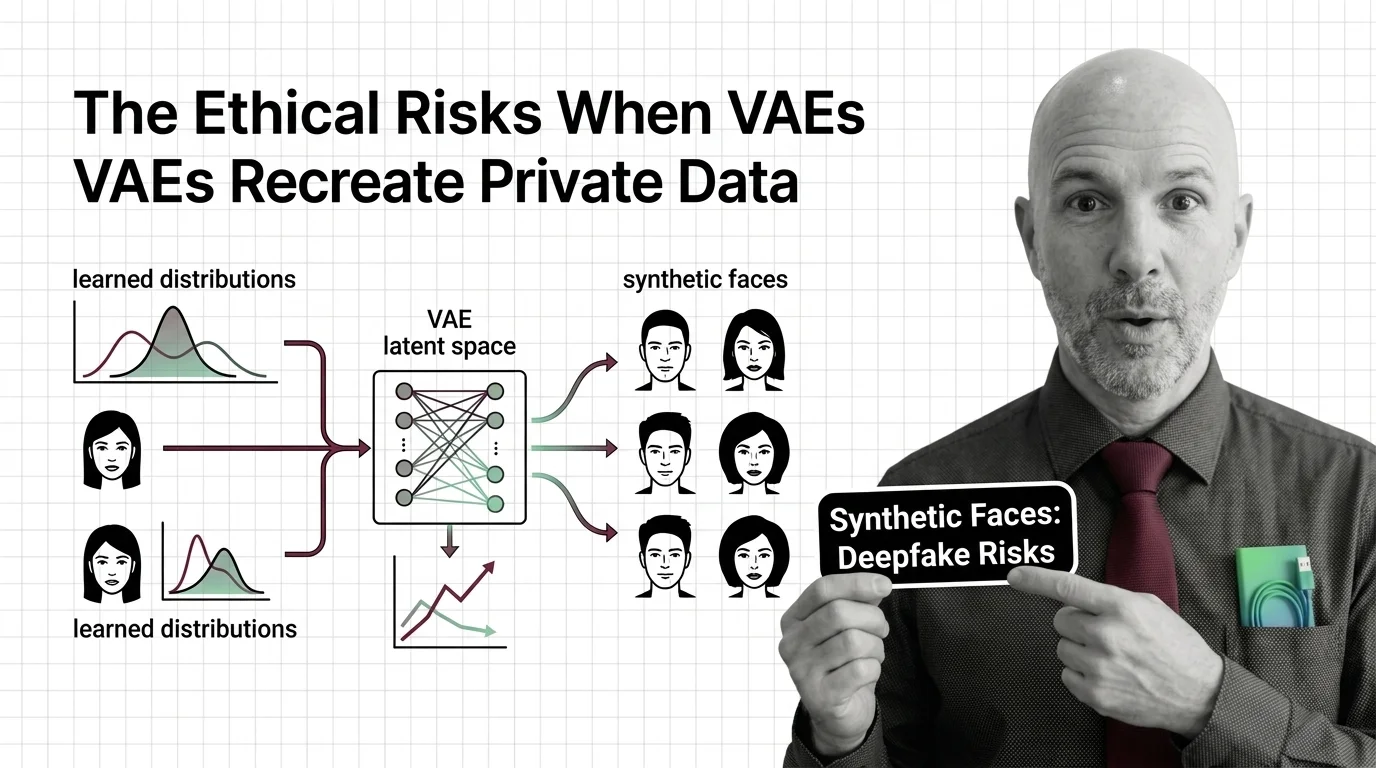

The architecture is elegant in a way that conceals the ethical problem it creates. A VAE encodes input (faces, medical histories, financial transactions) through convolutional neural network layers into a compressed latent representation, then decodes it back into something resembling the original. The reparameterization trick makes the sampling step differentiable; the training objective, the evidence lower bound (ELBO), pushes the model to balance reconstruction fidelity against the regularity of the latent space. That balance is where the trouble begins.
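To make that balance concrete, here is a minimal NumPy sketch of the two pieces named above: the reparameterized sample and a per-example negative evidence lower bound. It is an illustration under stated assumptions, not any particular system's implementation. It assumes a diagonal Gaussian encoder, a standard normal prior, and a squared-error reconstruction term (a Gaussian decoder with fixed variance, up to constants). The function names and the `beta` weight are hypothetical choices made for this example.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I), so gradients can flow through mu and sigma."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=-1)

def negative_elbo(x, x_recon, mu, log_var, beta=1.0):
    """Reconstruction error plus beta-weighted KL term, one value per example.

    Assumption: squared error stands in for the reconstruction log-likelihood.
    A small beta favors faithful reconstruction; a large beta favors a regular latent space.
    """
    recon = np.sum((x - x_recon) ** 2, axis=-1)
    return recon + beta * kl_to_standard_normal(mu, log_var)
```

Lowering `beta` in this sketch is the "push toward faithful reconstruction" described here: the reconstruction term dominates, and nothing in the objective penalizes the model for reproducing individual training examples.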

Push too hard toward faithful reconstruction, and the model memorizes. Reconstruction attacks on VAEs are “extremely effective” — the models are considerably susceptible to overfitting and memorization of training data (DeepAI). This is not a hypothetical vulnerability. It is a documented property of the architecture. VAEs provide the encoder-decoder backbone for face-swapping systems, compressing facial features into representations reconstructed onto different bodies and contexts (ISACA Journal). Research on variational graph autoencoders generating synthetic patient trajectories found that small fractions of outputs showed near-identical similarity to training data — similarity scores approaching maximum, meaning effective re-identification (Nikolentzos et al., 2023). The question is not whether the model remembers you, but how much.
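One common way to look for this kind of leakage is to measure how close each synthetic record sits to its nearest training record. The sketch below assumes records are already numeric vectors on comparable scales and uses Euclidean distance. Both the metric and the `threshold` parameter are assumptions for illustration; the cited studies use their own similarity measures.

```python
import numpy as np

def distance_to_closest_record(synthetic, training):
    """For each synthetic row, the Euclidean distance to its nearest training row."""
    diffs = synthetic[:, None, :] - training[None, :, :]   # shape (n_syn, n_train, d)
    dists = np.sqrt(np.sum(diffs ** 2, axis=-1))           # shape (n_syn, n_train)
    return dists.min(axis=1)

def flag_near_copies(synthetic, training, threshold):
    """Indices of synthetic rows that fall within `threshold` of some training row."""
    closest = distance_to_closest_record(synthetic, training)
    return np.flatnonzero(closest < threshold)
```

A check like this can only reveal near-copies; an empty result is not evidence of privacy, since a record can be re-identifiable without being close under the chosen metric.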

Data from 2019 indicated that the vast majority of deepfakes contained pornography, with most victims being women, though current proportions may have shifted as deepfake adoption broadened. The people whose faces trained these models did not consent to becoming raw material for someone else’s synthesis.

The Promise That Brought Us Here

The case for synthetic data deserves to be stated at its strongest before we examine what it conceals. Medical researchers need data to save lives but cannot ethically share patient records. Financial institutions need fraud detection training sets that do not expose real customers. The theory behind neural networks suggests that generative models can learn patterns without retaining individual instances, at least in principle.

VAE variants demonstrate this promise. VQ-VAE architectures discretize the compressed representation, and latent diffusion models build on VAE encoders to produce high-fidelity outputs for medical imaging and drug discovery. The demand is real, the intent is often good, and the alternative of not sharing data at all carries its own cost in slower research and worse models.

This is not a strawman. But the question is whether the case is complete.

The Assumption That Synthetic Means Anonymous

The hidden assumption is simple and almost universally held: if data is generated by a model rather than copied from a database, it is private by default. This treats the generation process as a sufficient anonymization step. But the NIST AI 600-1 risk framework identifies data privacy leakage and memorization as key risks for generative models (NIST). The risk is catalogued by the institution responsible for AI risk management standards — not by skeptics on the margins.

Synthetic generation is not anonymization. It is transformation — and transformation preserves information, sometimes more than intended. The fact that an output was produced by a model says nothing about how much of the training data it carries. HIPAA’s position on VAE-generated synthetic data is not explicitly codified; what exists is regulatory ambiguity, not regulatory protection. The gap between “synthetic data may be exempt from privacy regulations” and “synthetic data is exempt” is the gap where real people’s privacy disappears.

When Anonymization Promised More Than It Delivered

This pattern has a history. In the 1990s, researchers demonstrated that most Americans could be uniquely identified by just three data points — zip code, birth date, and sex. Generation after generation of “anonymized” data has been re-identified by someone with sufficient motivation and a complementary dataset.
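The mechanics of that kind of re-identification are simple enough to sketch. The example below counts how many records are the only one with their combination of quasi-identifiers. The field names and the four toy records are invented for illustration and carry no real data.

```python
from collections import Counter

def unique_fraction(records, quasi_identifiers):
    """Fraction of records whose quasi-identifier combination appears exactly once."""
    keys = [tuple(record[field] for field in quasi_identifiers) for record in records]
    counts = Counter(keys)
    return sum(1 for key in keys if counts[key] == 1) / len(keys)

# Invented toy records; the field names are hypothetical.
toy_records = [
    {"zip": "00001", "birth_date": "1980-01-01", "sex": "F"},
    {"zip": "00001", "birth_date": "1980-01-01", "sex": "F"},
    {"zip": "00001", "birth_date": "1980-01-02", "sex": "F"},
    {"zip": "00002", "birth_date": "1975-06-15", "sex": "M"},
]

# Half of these toy records are unique on the three fields combined.
print(unique_fraction(toy_records, ("zip", "birth_date", "sex")))  # 0.5
```

Each field on its own looks harmless here; it is the combination that isolates a person, which is why removing names alone has repeatedly failed as anonymization.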

VAE-generated synthetic data sits on the same trajectory. The model compresses information through convolutional or recurrent neural network layers, applies a mathematical transformation, and produces outputs that are new in form but potentially old in substance. The volume of synthetic records creates a false sense of distance from the source: millions of generated samples, each carrying some probabilistic echo of the training data, look like safety in aggregate. Until someone matches one to a real person.

Who is harmed when re-identification happens? Not the institution that trained the model — they have plausible deniability built into the architecture. The person whose medical history or facial features were memorized has no mechanism to know, no standing to object, and no recourse once the damage is done.

Privacy Is Not a Byproduct of Novelty

Thesis: The ethical failure of VAE-generated synthetic data is not that the technology is flawed — it is that we have confused statistical novelty with genuine privacy protection, and built institutional practices on that confusion.

The reparameterization trick ensures smooth gradients. The evidence lower bound ensures a well-structured compressed representation. Neither ensures that the person in the training set is protected. Technical elegance and ethical adequacy are separate properties, and the field has treated them as though they were the same.

Differential privacy offers a partial correction — research on DP-RVAE demonstrates that adding calibrated noise to compressed codes can achieve complete novelty in generated outputs (Springer, DP-RVAE). But it comes with trade-offs in utility, and clinical use remains an active research challenge. The regulatory framework is catching up: EU AI Act Article 50 will require synthetic content to carry machine-readable AI-generation markers from August 2, 2026 (EU AI Act). A Code of Practice draft appeared in December 2025, with the final version expected by June 2026.
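What "calibrated noise" means can be shown with the classical Gaussian mechanism applied to a single latent code. This is a generic textbook construction, not the DP-RVAE method itself, whose details are not given here. The sketch assumes each code is clipped to an L2 norm of at most `max_norm`, so any two clipped codes differ by at most `2 * max_norm`, and it uses the standard noise scale that holds for epsilon strictly between 0 and 1. All function and parameter names are hypothetical.

```python
import numpy as np

def clip_l2(z, max_norm):
    """Scale z down so its L2 norm is at most max_norm."""
    norm = np.linalg.norm(z)
    return z if norm <= max_norm else z * (max_norm / norm)

def gaussian_sigma(sensitivity, epsilon, delta):
    """Noise scale for the classical Gaussian mechanism (valid for 0 < epsilon < 1)."""
    if not (0 < epsilon < 1) or not (0 < delta < 1):
        raise ValueError("this bound assumes 0 < epsilon < 1 and 0 < delta < 1")
    return sensitivity * np.sqrt(2.0 * np.log(1.25 / delta)) / epsilon

def privatize_code(z, max_norm, epsilon, delta, rng):
    """Clip one latent code, then add Gaussian noise calibrated to the clipping bound."""
    clipped = clip_l2(z, max_norm)
    sigma = gaussian_sigma(2.0 * max_norm, epsilon, delta)  # sensitivity assumed to be 2 * max_norm
    return clipped + rng.normal(0.0, sigma, size=clipped.shape)
```

Two caveats follow from the sketch. The noise scale grows as epsilon shrinks, which is the utility trade-off in concrete form. And noise on a code protects only that released code; it says nothing about what decoder weights trained without such protection may already have memorized.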

These are steps. They are not solutions. Labeling synthetic content does not prevent memorization. Transparency about generation does not equal privacy for the people in the training data.

Questions We Owe the People in the Training Set

The discomfort here is not about whether VAEs are useful — they are. It is about who bears the cost of that usefulness, and whether they were asked. A patient whose records trained a medical VAE did not consent to having their health trajectory become raw material for a generative model. A person whose face appeared in a training dataset did not agree to become a component in a synthesis pipeline.

Consent architectures for generative AI barely exist. Opt-out mechanisms assume you know your data was used. Regulatory frameworks assume synthetic data is categorically different from real data. What would meaningful protection look like — not in principle, but in practice?

Where This Argument Is Weakest

This argument is most vulnerable to the progress of differential privacy. If DP-VAE techniques mature to the point where memorization is provably eliminated without destroying utility, the core concern — that synthetic does not mean anonymous — becomes a historical footnote rather than a structural critique. The field is actively working toward this.

It is also vulnerable to the possibility that regulation closes the gap faster than expected. If the EU AI Act’s transparency obligations prove enforceable and HIPAA explicitly addresses synthetic data, the institutional vacuum that gives this essay its urgency could fill sooner than assumed.

I would welcome both developments. The argument being wrong would mean the problem was solved.

The Question That Remains

A variational autoencoder learns a compressed version of its training data and generates new outputs that look original. We have called this “synthesis” and treated its outputs as safe by default. But the people in the training data did not become less real because a model learned to approximate them.

If synthetic data carries enough of a person to re-identify them, at what point does generating it become an act that requires their permission — and what does it mean that we never thought to ask?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.