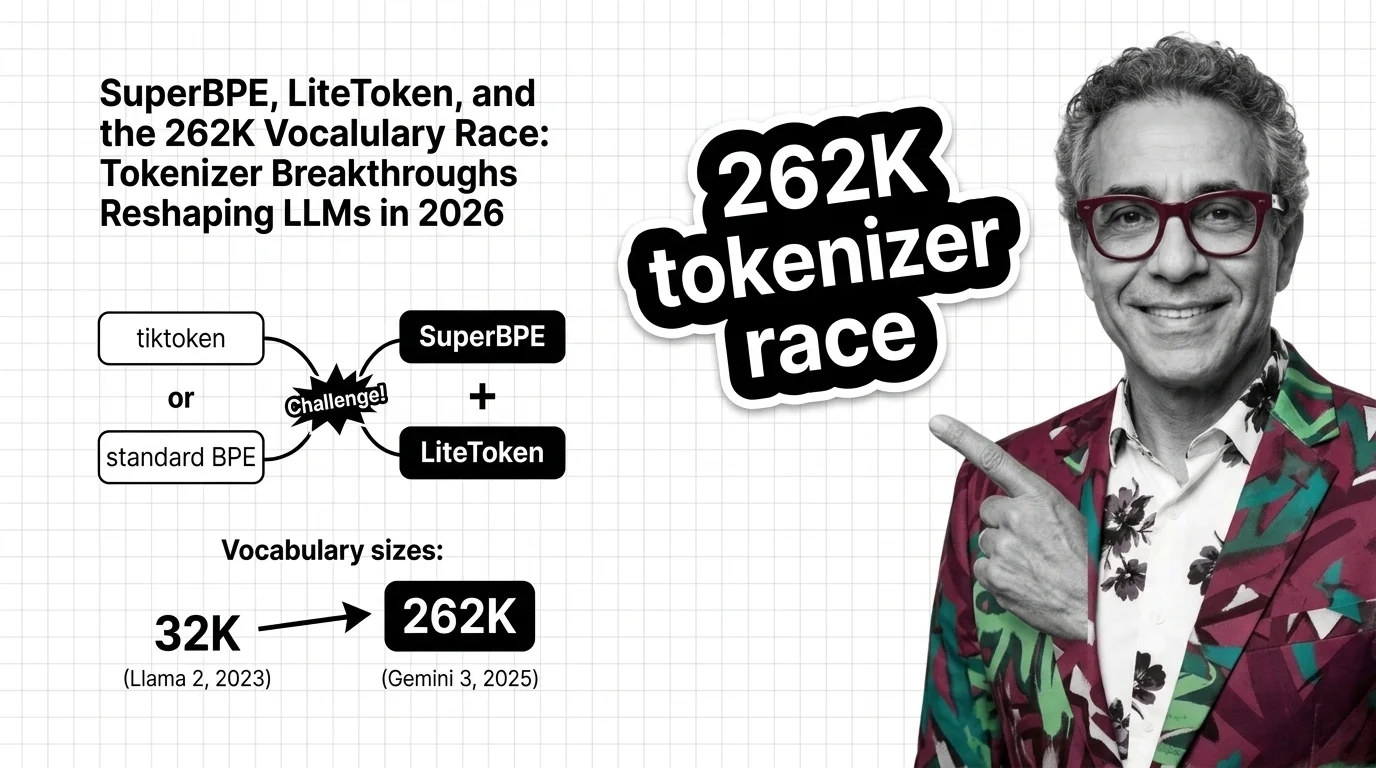

SuperBPE, LiteToken, and the 262K Vocabulary Race: Tokenizer Breakthroughs Reshaping LLMs in 2026

TL;DR

- The shift: Tokenizer vocabulary design moved from implementation detail to competitive differentiator. Flagship vocabulary sizes grew roughly eightfold in two years, from 32K to 262K tokens, and new research reports inference compute savings of over a quarter.

- Why it matters: Every token saved is compute saved. At scale, tokenizer efficiency determines who serves models profitably.

- What’s next: Production adoption of SuperBPE or LiteToken-style optimizations will separate cost leaders from those burning margin on brute-force compute.

For eighteen months, the industry argued about model architecture, training data, and alignment. Almost nobody argued about the tokenizer. That was a strategic blind spot. The tokenization layer, the first and last thing every language model touches, just became the most active front in the efficiency war.

The Layer Nobody Argued About Just Became the Argument

Thesis: Tokenizer design has graduated from solved problem to strategic variable, and labs treating it as legacy plumbing are about to pay a compute tax their competitors will not.

Two years ago, tokenizer architecture was a settled question. Llama 2 shipped with 32,000 tokens built on SentencePiece (Meta Blog). Standard. Sufficient.

No longer. GPT-4o runs o200k_base, a vocabulary of roughly 200K tokens served through tiktoken (OpenAI Cookbook). Llama 3 jumped to 128,256 tokens, cutting token counts by up to 15% relative to Llama 2 (Meta Blog). Mistral Tekken pushed to roughly 131K using BPE in the same tiktoken-style paradigm. And Google’s Gemma 3, introduced in 2025 and reusing the Gemini family tokenizer, took the vocabulary to 262,144 tokens (Google Developers Blog).

From 32K to 262K in two years. That is not iteration. That is an arms race.

The logic behind this scaling is mathematical. Research presented at NeurIPS 2024 demonstrated a log-linear relationship between optimal vocabulary size and model parameters — 32K was right-sized for a 7B-parameter model, but a 70B variant needed roughly 216K (NeurIPS 2024 Paper). Bigger models demand bigger vocabularies. The industry built bigger models. The vocabulary followed.
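To see what those two data points imply, here is a small back-of-the-envelope sketch. It assumes "log-linear" means a straight line in log-log space (a power law) and fits that line exactly through the 7B and 70B figures quoted above. The function name `implied_vocab` and the 13B query are illustrative, not values from the paper.

```python
import math

# Two data points quoted in the text: (model parameters, optimal vocabulary size).
small = (7e9, 32_000)
large = (70e9, 216_000)

# Assumption: the relationship is a power law V = c * N**gamma,
# i.e. a straight line when both axes are on a log scale.
gamma = math.log(large[1] / small[1]) / math.log(large[0] / small[0])
c = small[1] / small[0] ** gamma


def implied_vocab(params: float) -> float:
    """Vocabulary size implied by the two-point fit (illustrative only)."""
    return c * params ** gamma


print(f"fitted exponent: {gamma:.2f}")
# 13e9 is a hypothetical model size used to show interpolation,
# not a figure reported in the paper.
for n in (7e9, 13e9, 70e9):
    print(f"{n / 1e9:>4.0f}B params -> ~{implied_vocab(n) / 1e3:.0f}K tokens")
```

The fitted exponent comes out at about 0.83. Under this reading, the optimal vocabulary grows with model size but more slowly than the parameter count does: ten times the parameters calls for a bit under seven times the vocabulary.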

Two Papers, One Direction

Vocabulary expansion is only half the story. Two research papers are attacking subword tokenization itself, from different angles, toward the same conclusion.

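SuperBPE, published at COLM 2025, changes what BPE is allowed to merge: after an initial phase of ordinary subword learning, it lets merges cross whitespace so that common multi-word sequences become single “superword” tokens. The reported results across 30 benchmark tasks: a 4.0% average improvement, 8.2% on MMLU, up to 33% fewer tokens, and 27% less inference compute (SuperBPE Paper). A 27% compute reduction reprices the entire cost structure of inference at scale.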
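A toy sketch makes the mechanism concrete. The SuperBPE paper describes a two-stage curriculum: learn ordinary subwords with whitespace pretokenization, then drop that restriction so merges can span word boundaries. The code below is not the authors' implementation. It is a minimal character-level BPE on a made-up corpus, and the merge budgets (40, 30, 10) are arbitrary choices for illustration.

```python
import re
from collections import Counter


def merge_pair(seq, pair):
    """Replace every adjacent occurrence of `pair` in `seq` with the joined token."""
    out, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            out.append(seq[i] + seq[i + 1])
            i += 2
        else:
            out.append(seq[i])
            i += 1
    return out


def train(chunks, num_merges):
    """Greedy BPE over token sequences; merges never cross chunk boundaries."""
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for seq in chunks:
            pairs.update(zip(seq, seq[1:]))
        if not pairs:
            break
        best, count = pairs.most_common(1)[0]
        if count < 2:  # stop once no adjacent pair repeats
            break
        merges.append(best)
        chunks = [merge_pair(seq, best) for seq in chunks]
    return chunks, merges


# Made-up corpus for illustration only.
sentence = "by the way the cat sat on the mat by the way the dog sat on the log"
text = " ".join([sentence] * 4)

# Whitespace pretokenization: each word, with its leading space, is its own chunk.
words = [list(w) for w in re.findall(r" ?[^ ]+", text)]

# Baseline BPE: the whole merge budget must stay inside word chunks.
base_chunks, base_merges = train(words, 40)
base_count = sum(len(chunk) for chunk in base_chunks)

# Two-stage variant: merges inside words first, then merges on the joined sequence.
stage1_chunks, stage1_merges = train(words, 30)
flat = [tok for chunk in stage1_chunks for tok in chunk]
(super_seq,), stage2_merges = train([flat], 10)
super_count = len(super_seq)

print("baseline tokens: ", base_count)
print("two-stage tokens:", super_count)
print("superword merges:", ["".join(pair) for pair in stage2_merges])
```

On this corpus the baseline runs out of useful merges once every word is a single token, so the extra budget goes unused. The second stage keeps compressing by fusing frequent word sequences, which is the effect behind the token savings reported in the paper.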

LiteToken, published in February 2026, takes a different path. It removes what the authors call “intermediate merge residues” — leftover artifacts from how standard BPE constructs vocabularies. The key difference: LiteToken requires no retraining. It is a plug-and-play optimization applicable to existing models (LiteToken Paper).
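The article does not detail LiteToken's procedure, so the following is not its algorithm. It only illustrates one plausible reading of how "merge residues" arise: BPE builds long tokens step by step, and some intermediate steps stay in the vocabulary even though the final tokenization never emits them. The corpus and helper names are made up for the example.

```python
from collections import Counter


def merge_pair(seq, pair):
    """Replace every adjacent occurrence of `pair` in `seq` with the joined token."""
    out, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            out.append(seq[i] + seq[i + 1])
            i += 2
        else:
            out.append(seq[i])
            i += 1
    return out


def train_bpe(chunks):
    """Greedy BPE that keeps merging while some adjacent pair occurs at least twice."""
    merges = []
    while True:
        pairs = Counter()
        for seq in chunks:
            pairs.update(zip(seq, seq[1:]))
        if not pairs:
            break
        best, count = pairs.most_common(1)[0]
        if count < 2:
            break
        merges.append(best)
        chunks = [merge_pair(seq, best) for seq in chunks]
    return chunks, merges


# Made-up word list; each word is pretokenized into its own chunk of characters.
corpus = ["the"] * 6 + ["then"] * 3 + ["they"] * 2
final_chunks, merges = train_bpe([list(word) for word in corpus])

# Tokens added to the vocabulary by merging, in the order they were learned.
merged_tokens = [a + b for a, b in merges]

# How often each token is actually emitted when the corpus is tokenized.
usage = Counter(tok for chunk in final_chunks for tok in chunk)

# Hypothetical definition of a "residue": a learned token that is never emitted
# because a later merge always absorbs it.
residues = [tok for tok in merged_tokens if usage[tok] == 0]

print("learned tokens:", merged_tokens)
print("never emitted: ", residues)
```

Here `th` is learned only as a stepping stone to `the`, then never appears in any output. Entries like that occupy vocabulary slots and embedding rows without carrying any of the text, which is the kind of inefficiency the paragraph above describes.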

Neither has been adopted in a production LLM yet. Both remain peer-reviewed research. But the direction is unmistakable: standard BPE has measurable inefficiencies, and the tools to fix them now exist.

Who Gains Ground

Google sits in the strongest position. The 262K vocabulary in the Gemma/Gemini family already reflects the scaling logic NeurIPS research validated.

OpenAI’s tiktoken defined the BPE-over-bytes approach that Llama 3 and Mistral Tekken adopted. That is a standards moat — when three major model families converge on your tokenization philosophy, you have shaped the field.

Research teams focused on transformer inference efficiency, meaning anyone whose cost model depends on tokens per forward pass through the attention mechanism, gain from SuperBPE-class advances. A quarter less compute per inference call, at cloud scale, is a P&L line item. Act accordingly.

Who Falls Behind

Teams still running 32K-class vocabularies on large models pay an invisible tax on every inference call. Undersized vocabularies force the model to spend capacity decomposing tokens that a properly sized vocabulary handles natively. They also produce more glitch tokens: malformed entries that cause unpredictable behavior.

Anyone treating the WordPiece or unigram tokenization layer as a locked dependency faces a structural disadvantage. Tokenization assumptions inherited from older encoder-decoder architectures do not hold for decoder-only models processing 100K-token contexts.

Model providers whose tokenizer is tightly coupled to training infrastructure will find themselves retraining where competitors simply swap a component. You are either retooling this layer or falling behind.

What Happens Next

Base case (most likely): At least one major lab ships SuperBPE-derived or LiteToken-style optimizations in a production model within a year. Vocabularies stabilize in the 128K-262K range. Signal to watch: A next-generation Llama or Mistral announcement referencing optimized BPE. Timeline: Q3 2026 through Q1 2027.

Bull case: LiteToken’s plug-and-play design enables rapid adoption, cutting inference costs without retraining. Signal: Inference pricing drops tied to tokenizer improvements. Timeline: Late 2026.

Bear case: Benchmark gains do not replicate at production scale. Integration complexity exceeds what the papers suggest. Signal: No major model release cites either paper within 18 months. Timeline: Mid-2027 without movement.

Frequently Asked Questions

Q: How did tiktoken become the dominant tokenizer powering GPT-4o, Llama 3, and Mistral Tekken? A: OpenAI’s tiktoken popularized a fast BPE-over-bytes encoding that proved efficient at scale. Llama 3 and Mistral Tekken adopted the same paradigm with their own vocabularies rather than OpenAI’s encodings, because it handled multilingual text and large vocabularies more efficiently.

Q: How are SuperBPE and LiteToken challenging standard BPE tokenization in 2026? A: SuperBPE redesigns merge strategies, cutting tokens by a third and inference compute by over a quarter. LiteToken removes residual artifacts from existing vocabularies without retraining. Both are peer-reviewed research, not yet deployed in production models.

Q: How did LLM vocabulary sizes grow from 32K in Llama 2 to 262K in Gemma 3 between 2023 and 2025? A: Llama 2 shipped with 32K tokens on SentencePiece. Llama 3 jumped to 128K using tiktoken-based BPE. Google’s Gemma 3, introduced in 2025, pushed to 262K, among the largest production vocabularies, using a SentencePiece tokenizer shared with the Gemini family.

The Bottom Line

The tokenizer layer is no longer plumbing. It is a competitive variable with direct impact on inference cost, multilingual reach, and model efficiency. Labs treating vocabulary design as a strategic lever are setting the pace. You are either optimizing this layer or subsidizing someone else’s advantage.
