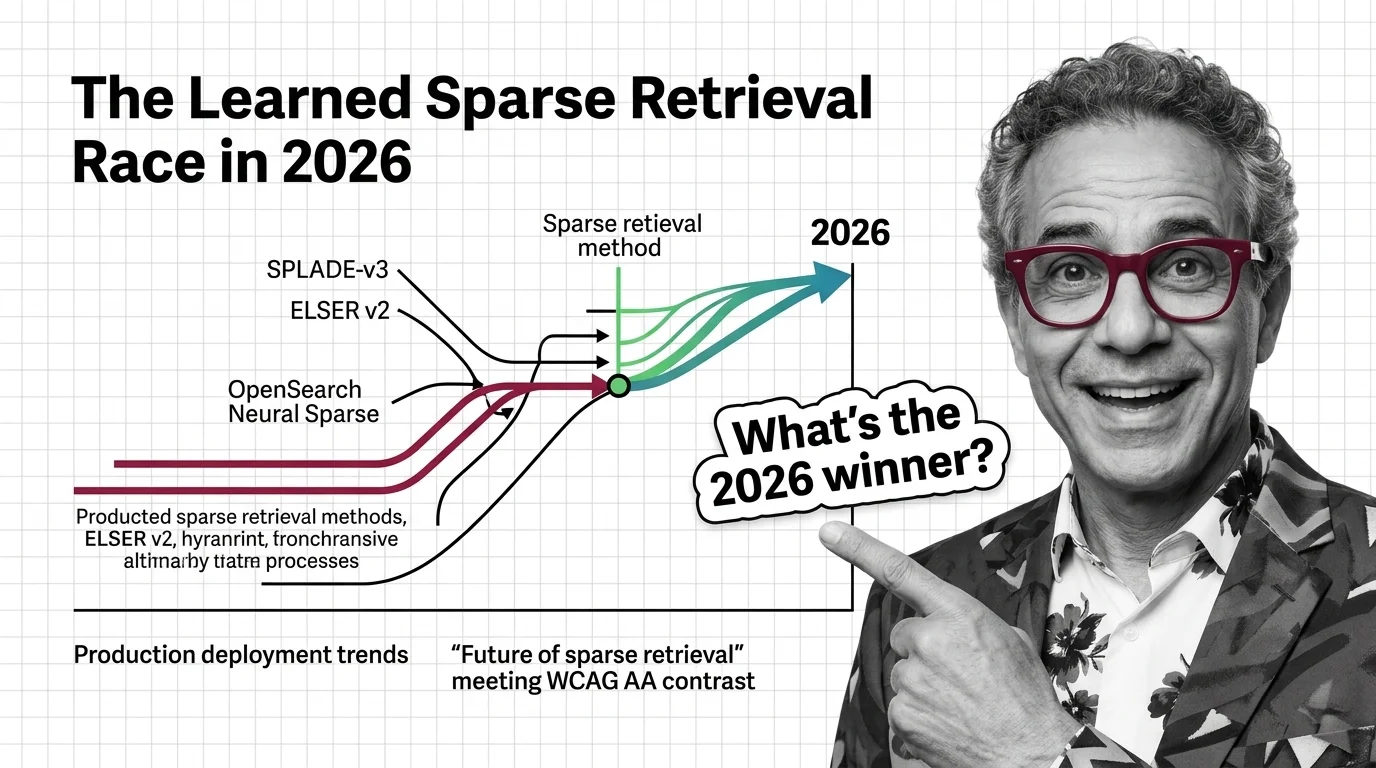

SPLADE-v3, ELSER v2, and OpenSearch Neural Sparse: The Learned Sparse Retrieval Race in 2026

TL;DR

- The shift: Three independent sparse retrieval lines (SPLADE, ELSER, and OpenSearch Neural Sparse) all reached production-grade releases while every major vector engine standardized on hybrid as the default.

- Why it matters: The “dense vs sparse” debate is over. Hybrid retrieval is the new floor for production RAG, and the platforms that shipped sparse in-cluster just won the operational fight.

- What’s next: Latency parity with BM25 has arrived, multilingual sparse has arrived, and the teams still running pure-dense pipelines are about to find out why their RAG evaluation numbers aren’t moving.

For two years, the conventional read on sparse retrieval was that dense embeddings would absorb it. That read is now wrong. Three separate research and platform lines have all shipped production-grade sparse encoders, latency has collapsed, and every major vector engine quietly made hybrid the default. The teams still arguing about dense versus sparse are arguing about a fight that ended.

The Sparse Stack Just Closed the Gap

Thesis: Learned sparse retrieval has stopped competing with dense embeddings — it has joined them as the second mandatory leg of every serious production RAG system.

The signal is structural. Naver shipped SPLADE-v3 with the strongest single-model BEIR-13 average in the SPLADE line: 51.7 nDCG@10, up from 50.7, with MS MARCO dev MRR@10 at 40.2 (SPLADE-v3 paper). Elastic kept ELSER v2 as the production default and acquired Jina AI in October 2025 to fill the multilingual gap (Elastic press release). OpenSearch released two new sparse models in September 2025 plus the first multilingual sparse encoder in the category, then shipped SEISMIC, an ANN algorithm purpose-built for sparse vectors.
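For readers less familiar with the two metrics quoted above, here is a minimal per-query sketch of MRR@10 and nDCG@10. It assumes linear gain for nDCG (some implementations use an exponential gain instead), and the document IDs and relevance labels are made up for illustration. Published benchmark figures are averages over all queries, and the SPLADE numbers above appear to be reported on a 0 to 100 scale.

```python
import math


def mrr_at_k(ranked_ids, relevant_ids, k=10):
    """Reciprocal rank of the first relevant document in the top k, else 0."""
    for rank, doc_id in enumerate(ranked_ids[:k], start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked_ids, gains, k=10):
    """nDCG@k with linear gain; `gains` maps doc id -> graded relevance."""
    dcg = sum(
        gains.get(doc_id, 0.0) / math.log2(rank + 1)
        for rank, doc_id in enumerate(ranked_ids[:k], start=1)
    )
    # Ideal ordering: the best possible gains sorted from highest to lowest.
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(rank + 1) for rank, g in enumerate(ideal, start=1))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical query: the only relevant document is returned at rank 2.
ranked = ["d7", "d2", "d9"]
print(mrr_at_k(ranked, {"d2"}))
print(ndcg_at_k(ranked, {"d2": 1.0}))
```

Both functions score a single query; a benchmark number is the mean of these values across the query set.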

Three labs. Three independent bets. One direction.

That’s not a coincidence. That’s a market converging on an architecture.

Three Lines, One Direction

Group the evidence by what it proves, not when it shipped.

Single-model effectiveness keeps rising. SPLADE-v3 retains the academic crown on the BEIR benchmark and MS MARCO, and Pyserini regressions still anchor the BM25 baselines underneath the comparison. But there is a catch: SPLADE-v3 ships under CC-BY-NC-SA-4.0, a non-commercial license. Teams that want SPLADE-grade quality in production take a downstream variant or license it from Naver (SPLADE-v3 model card).

Platform ergonomics is where the real fight moved. ELSER v2 delivers roughly a 90% throughput improvement over v1 — 26 docs per second versus 14 on the optimized variant — and ships in-cluster with no GPU dependency, English-only, 512-token window (Elastic Search Labs). That’s not a benchmark win. That’s an ops win.

OpenSearch closed the multilingual gap. Doc-v3-gte lifts the BEIR average to 0.546 versus 0.517 for doc-v3-distill, and multilingual-v1 hits a MIRACL average of 0.629 across 16 languages against BM25’s 0.305 (OpenSearch Blog). That is a category-redefining number for a sparse encoder.

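Latency parity arrived in OpenSearch 3.3, and then some. SEISMIC pulls average query latency down to 11.77 ms, versus 125.12 ms for standard neural sparse and 41.52 ms for BM25 (OpenSearch Blog). On those figures SEISMIC is roughly 10.6x faster than standard neural sparse and roughly 3.5x faster than BM25. Sparse is no longer the slow leg of the stack.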

The claim that every production vector DB now ships hybrid (Elasticsearch with RRF since 8.9, Redis 8.4 with FT.HYBRID) is documented in the Pureinsights and Superlinked write-ups rather than asserted here as bare fact (Pureinsights).
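Reciprocal rank fusion (RRF) is simple enough to sketch in a few lines. The version below is a client-side illustration of the general technique, not Elasticsearch's or Redis's implementation; the function name, the document IDs, and the three input rankings are hypothetical, and k=60 is a commonly used smoothing constant that engines may expose under a different name or default.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc IDs into one list.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with ranks starting at 1. Ties are broken by doc ID for determinism.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical top-3 results from three retrievers for the same query.
bm25_hits = ["d1", "d2", "d3"]
dense_hits = ["d3", "d1", "d4"]
sparse_hits = ["d1", "d3", "d2"]

fused = reciprocal_rank_fusion([bm25_hits, dense_hits, sparse_hits])
print(fused)
```

Because RRF uses only ranks, it needs no score normalization across BM25, dense, and learned sparse retrievers, which is a large part of why it is a convenient default for hybrid search.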

Who Wins the Hybrid Default

Platform vendors that shipped sparse in-cluster won the operational fight before the market noticed. Elastic owns the English production default with ELSER v2 and now owns multilingual via Jina models on the Elastic Inference Service after the October 2025 acquisition. EIS pricing starts at $0.08 per million tokens, with self-managed inference at $0.07 per VCU per hour (Elastic pricing) — boring numbers, but the ones production teams will quote at procurement.
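To make those two price points concrete, here is a back-of-the-envelope sketch. The rates are the ones quoted above; the workload (2 billion tokens, or 4 VCUs running for 24 hours) is purely hypothetical, and the function names are illustrative. The two billing models measure different things, so this is not a like-for-like comparison.

```python
def eis_token_cost(total_tokens, usd_per_million_tokens=0.08):
    """Cost in USD under per-token billing at the quoted EIS starting rate."""
    return total_tokens / 1_000_000 * usd_per_million_tokens


def vcu_hour_cost(vcu_count, hours, usd_per_vcu_hour=0.07):
    """Cost in USD under per-VCU-hour billing at the quoted self-managed rate."""
    return vcu_count * hours * usd_per_vcu_hour


# Hypothetical workload sizes, chosen only to show the arithmetic.
print(f"2B tokens via EIS: ${eis_token_cost(2_000_000_000):.2f}")
print(f"4 VCUs for 24 hours: ${vcu_hour_cost(4, 24):.2f}")
```

Which model is cheaper depends on how many tokens a given VCU actually processes per hour, which the quoted figures do not tell us.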

OpenSearch wins the open-stack story. Doc-v3-gte is the new accuracy pick, doc-v3-distill the latency-and-footprint pick, multilingual-v1 the cross-lingual pick, SEISMIC the billion-scale pick. Three shipped models and one ANN algorithm, one platform.

Teams running mid-sized RAG without a GPU budget win twice: on cost, and on the fact that hybrid search is no longer an integration project. They flip a feature flag.

You’re either deploying hybrid retrieval now, or you’re paying the RAG evaluation debt next quarter.

Who Gets Squeezed

Pure dense-only RAG stacks just lost their structural argument. The “we don’t need sparse, our embeddings handle it” line worked when sparse meant TF-IDF and term-frequency counting. It does not work when sparse means a learned encoder that materially beats BM25 on out-of-domain BEIR queries.
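The difference from term-frequency counting is easier to see in code. In a learned sparse model, both query and document become maps from vocabulary terms to learned weights, often including expansion terms that never appeared in the text, and relevance is the dot product over shared terms. The sketch below assumes that general scheme; the terms and weights are invented for illustration and do not come from any real encoder.

```python
def sparse_dot(query_weights, doc_weights):
    """Dot product of two sparse term-weight maps (term -> weight)."""
    # Iterate over the smaller map so the cost tracks the shorter vector.
    if len(query_weights) > len(doc_weights):
        query_weights, doc_weights = doc_weights, query_weights
    return sum(w * doc_weights.get(term, 0.0) for term, w in query_weights.items())


# Hypothetical encoder output: the query says "car", and the encoder also
# assigns weight to the expansion term "automobile".
query = {"car": 1.0, "automobile": 0.5}

# Hypothetical document that never uses the word "car".
doc = {"automobile": 2.0, "engine": 1.0}

print(sparse_dot(query, doc))
```

Exact-match term counting would score this pair at zero because the literal query word is missing from the document; the learned expansion term is what produces a match. Since the representation is still term-to-weight, it can be served from an inverted index like BM25.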

Teams that built production roadmaps around SPLADE-v3 without reading the license get squeezed harder. CC-BY-NC-SA-4.0 is a research and evaluation license, not a drop-in production one. Commercial use runs through a Naver license or a downstream model with permissive terms.

BM25-only legacy stacks are surviving on inertia. That works until your architect sees the regression numbers on out-of-domain, ambiguous, multilingual queries.

Vector DB vendors that haven’t shipped first-class hybrid are about to discover what late means.

What Happens Next

Base case (most likely): Hybrid BM25 + dense + sparse becomes the universal RAG retrieval default by end of 2026. Pure dense and pure BM25 stacks remain as legacy. Signal to watch: Major RAG framework defaults (LangChain, LlamaIndex, Haystack) shipping hybrid as the out-of-the-box retriever instead of dense-only. Timeline: Six to nine months.

Bull case: SEISMIC-class ANN over sparse spreads from OpenSearch to Elasticsearch and the open vector DBs, sparse becomes faster than BM25 at scale, and a permissively-licensed SPLADE-grade open model lands. Signal: A second engine ships a sparse-native ANN algorithm; a Tier-1 lab releases an Apache-2.0 sparse encoder above ELSER v2 quality. Timeline: Twelve months.

Bear case: Long-context LLMs and full-corpus prompting eat the bottom of the RAG stack faster than expected, compressing the addressable surface for retrieval altogether. Signal: Frontier labs ship million-token models with cost curves that beat retrieval on common workloads. Timeline: Twelve to twenty-four months.

Frequently Asked Questions

Q: How are Elastic ELSER and OpenSearch Neural Sparse being deployed in production in 2026?

A: ELSER v2 ships in-cluster on Elasticsearch with no GPU dependency, English-only, 512-token window, billed via EIS or self-managed VCUs. OpenSearch deploys doc-v3-gte for accuracy, doc-v3-distill for latency, multilingual-v1 for cross-lingual, SEISMIC for billion-scale workloads.

Q: Where is sparse retrieval heading in 2026 as hybrid search becomes the default?

A: Toward latency parity with BM25, native multilingual coverage, and tight integration into engines as a first-class retrieval mode. Sparse stops being a separate decision and becomes one leg of the default hybrid stack alongside dense embeddings and BM25.

The Bottom Line

The dense-versus-sparse debate is over because the platforms ended it. Three independent sparse lines hit production-grade in the same window, the latency story flipped with SEISMIC, and every serious vector engine shipped hybrid by default. Watch for hybrid becoming the out-of-the-box retriever in major RAG frameworks — that’s the signal the shift has finished.
