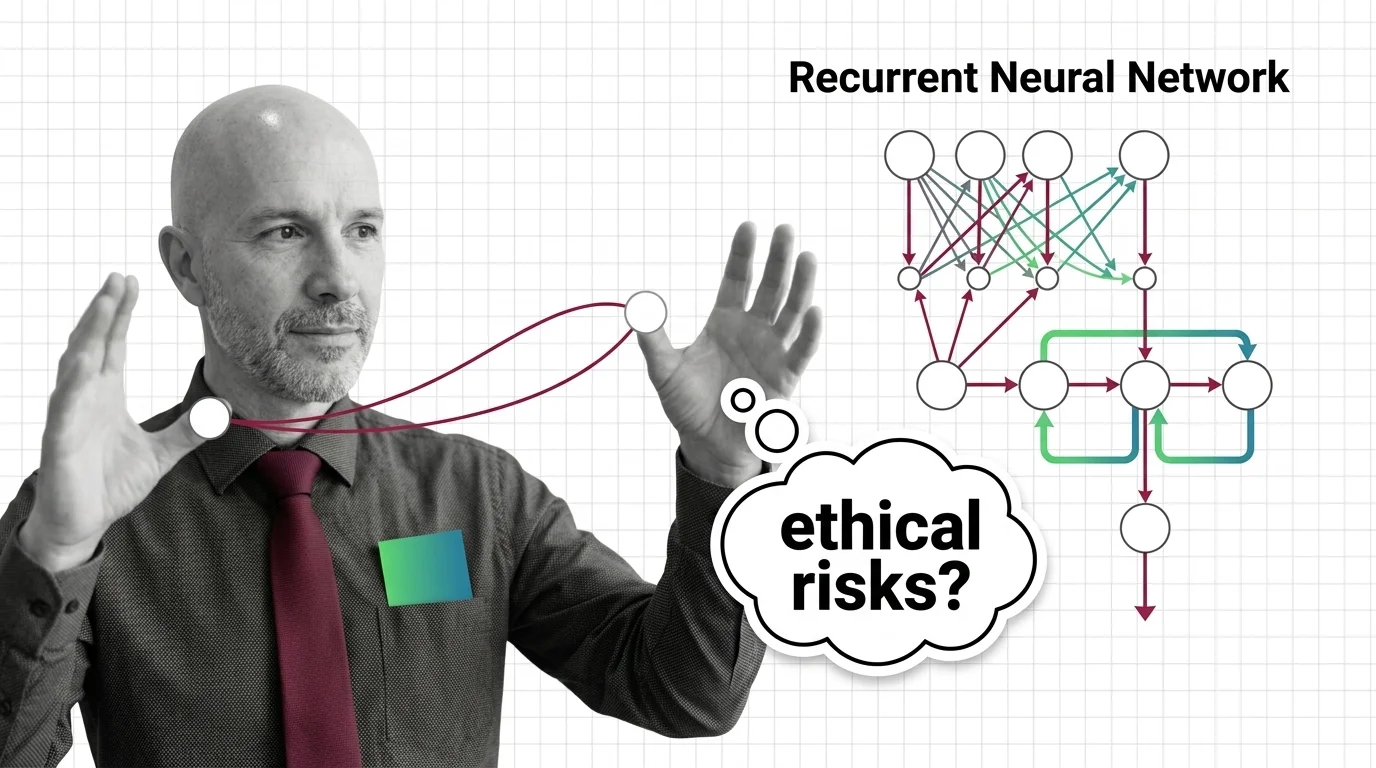

Sequential Bias and Opaque Memory: The Ethical Risks of Recurrent Networks in High-Stakes Decisions

The Hard Truth

What happens when the system that decides whether you receive parole, qualify for a loan, or get flagged for medical intervention carries a memory you cannot inspect — a memory that accumulates influence with every step in the sequence, and that no regulator, no auditor, and no appeals court can read?

We have spent years debating the ethics of black-box AI. But most of that debate centers on models that process inputs in a single pass — an image classified, a document scored, a prompt answered. Recurrent neural networks do something different and, in many ways, more troubling: they remember. And the ethical weight of a system that carries forward hidden information from one decision to the next remains largely unexamined.

The Memory Nobody Audits

A recurrent neural network (RNN) processes data sequentially: each input is interpreted in light of what came before, which is encoded in a hidden state that persists across time steps. In natural language processing or time-series forecasting, that temporal awareness is a feature. In high-stakes automated decision-making, it becomes something else entirely: an opaque accumulation of contextual influence that no human can trace back to its origin.
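To make the idea of a persistent hidden state concrete, here is a minimal sketch of the simplest (Elman-style) recurrent update, h_t = tanh(W_h h_{t-1} + W_x x_t + b). The weights, the two-unit hidden size, and the toy input sequences are all hypothetical values chosen for illustration; no production system is this small. The point is only that two sequences differing in their first input end in different hidden states, even though their later inputs are identical.

```python
import math

def rnn_step(h_prev, x, W_h, W_x, b):
    """One Elman-style update: h_t = tanh(W_h @ h_prev + W_x @ x + b)."""
    h_new = []
    for i in range(len(b)):
        total = b[i]
        total += sum(W_h[i][j] * h_prev[j] for j in range(len(h_prev)))
        total += sum(W_x[i][k] * x[k] for k in range(len(x)))
        h_new.append(math.tanh(total))
    return h_new

def run_sequence(xs, W_h, W_x, b):
    """Feed a whole sequence through the cell, starting from a zero state."""
    h = [0.0] * len(b)
    for x in xs:
        h = rnn_step(h, x, W_h, W_x, b)
    return h

# Hypothetical toy weights: 2 hidden units, 1 input feature.
W_H = [[0.5, -0.3], [0.2, 0.4]]
W_X = [[1.0], [-1.0]]
B = [0.0, 0.0]

# The sequences differ only at the first step; the later inputs are identical.
seq_a = [[1.0], [0.0], [0.0]]
seq_b = [[-1.0], [0.0], [0.0]]

final_a = run_sequence(seq_a, W_H, W_X, B)
final_b = run_sequence(seq_b, W_H, W_X, B)

# The early input still shapes the final state two steps later.
print(final_a, final_b)
```

Nothing in the final vectors says which earlier input produced them. That is the property the rest of this argument turns on.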

Consider a parole recommendation system trained on historical case data. Each case the model processes leaves a trace in its hidden state — a residue of patterns from prior decisions that shapes how it weighs the next one. If those historical decisions were biased — and decades of research confirm they often were — the hidden state does not simply reflect that bias. It compounds it, silently, across the sequence.

The ProPublica analysis of the COMPAS recidivism tool revealed predictions systematically biased against African American defendants (ProPublica via Springer). COMPAS was not itself a recurrent network, but the lesson it taught applies with sharper force to sequential models: when an algorithm absorbs the patterns of a discriminatory history, it does not merely replicate past injustice. It operationalizes it — embedding it in a computational substrate that moves faster and at larger scale than any human institution ever could.

The question is not whether recurrent models can encode bias. The question is whether anyone can tell you precisely where it lives.

The Case for Sequential Intelligence

The defense is not unreasonable. Neural networks underpin much of modern AI, and recurrent architectures specifically excel at tasks where temporal context genuinely matters: fraud detection, medical monitoring, predictive maintenance. A model that processes each input in isolation, like a standard feedforward network or a convolutional neural network, cannot capture the dependencies that make sequential data meaningful.

And the field is evolving. The xLSTM architecture — presented at NeurIPS 2024 — introduces exponential gating and matrix memory that expand what recurrent models can retain and process (Beck et al.). The infrastructure supporting these models remains stable and actively maintained. The technical argument for using recurrent networks in consequential systems is straightforward: temporal data requires temporal models, and recurrent architectures remain among the most natural fits for tasks where order matters.

That argument, taken on its own terms, is reasonable. What it leaves unexamined is whether temporal modeling can be separated from temporal accountability — and whether the architecture’s memory creates obligations that its opacity cannot fulfill.

The Assumption Inside the Architecture

The hidden assumption is this: that sequential processing is ethically neutral — that a model’s ability to remember is merely a technical property, no different from any other architectural choice.

But memory is never neutral. What a system remembers, how long it remembers, and how that memory shapes downstream decisions are governance questions, not engineering questions. Research on RNN hidden states describes production systems with “hundreds of thousands of hidden state units” that exhibit “black-box behavior” — and this opacity, Garcia et al. note, “has halted the use of deep learning in applications such as medicine, robotics, and finance.”

Explainability techniques exist. SHAP, LIME, gradient-based attribution, and attention visualization have all been applied to LSTM architectures (Springer XAI LSTM). But SHAP computation alone takes roughly half a second to a full second per instance, which makes real-time explanation impractical at the speed these systems operate. The tools run at a different speed than the decisions they are meant to explain.
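A back-of-the-envelope calculation shows what that latency means in practice. The sketch below takes the half-second to one-second range quoted above and asks how many explanations a single worker could produce per hour, and how many parallel workers would be needed to keep pace with a given decision rate. The figure of 200 decisions per second is a hypothetical load chosen for illustration, not a measurement of any real system.

```python
import math

SHAP_SECONDS_LOW = 0.5   # lower bound of the per-instance range quoted in the text
SHAP_SECONDS_HIGH = 1.0  # upper bound of the per-instance range quoted in the text

def explanations_per_hour(seconds_per_explanation):
    """Throughput of one worker that explains instances back to back."""
    return 3600.0 / seconds_per_explanation

def workers_needed(decisions_per_second, seconds_per_explanation):
    """Parallel workers required so explanations keep pace with decisions."""
    return math.ceil(decisions_per_second * seconds_per_explanation)

# Hypothetical load, chosen only to illustrate the arithmetic.
HYPOTHETICAL_DECISIONS_PER_SECOND = 200

best_case_rate = explanations_per_hour(SHAP_SECONDS_LOW)
worst_case_rate = explanations_per_hour(SHAP_SECONDS_HIGH)
best_case_workers = workers_needed(HYPOTHETICAL_DECISIONS_PER_SECOND, SHAP_SECONDS_LOW)
worst_case_workers = workers_needed(HYPOTHETICAL_DECISIONS_PER_SECOND, SHAP_SECONDS_HIGH)

print(best_case_rate, worst_case_rate)        # explanations per hour, one worker
print(best_case_workers, worst_case_workers)  # workers needed at the assumed load
```

Under these assumptions a single worker explains between 3,600 and 7,200 decisions an hour, and matching the assumed load takes 100 to 200 workers running in parallel. The gap is a matter of cost and engineering priority as much as of method.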

And there is a subtler problem. Recent preprint research suggests that task-trained RNNs exhibit a strong simplicity bias — they tend to collapse toward the same solutions via fixed-point attractors regardless of the complexity of the task. If that finding holds under further scrutiny, it implies that recurrent models may not be learning the nuanced temporal patterns their defenders assume. They may be learning shortcuts — and in high-stakes domains, those shortcuts may serve as proxies for protected characteristics that no explainability method is designed to detect.

The Bureaucratic Parallel

There is a useful analogy outside computer science. In the mid-twentieth century, sociologists studying institutional discrimination discovered that the most persistent forms of bias were not the product of individual prejudice. They were embedded in procedures — in the sequence of forms, reviews, and checkpoints that constituted bureaucratic process. The bias was structural, distributed across steps, and invisible to anyone examining a single decision in isolation.

Hidden states in recurrent networks operate on the same principle. No single hidden state vector is “the biased one.” The bias is distributed across the temporal sequence: accumulated through backpropagation through time and shaped by training data that reflects decades of institutional decision-making. To audit such a system would require not just inspecting a single output, but reconstructing the entire chain of hidden state transitions that produced it. That is not technically impossible. It is practically unaffordable and institutionally unprecedented.
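What "reconstructing the chain" would involve can be sketched in a few lines. The toy below records every intermediate hidden state alongside the final one, then estimates how large such a trace becomes. Everything here is an assumption made for illustration: the cell uses a simplified per-unit (diagonal) recurrence rather than a full weight matrix, the weights are invented, and the storage estimate assumes 4-byte values, 100,000 hidden units (the low end of the "hundreds of thousands" cited earlier), and a hypothetical 1,000-step sequence.

```python
import math

def step(h, x, w_h, w_x):
    """Simplified toy cell: each unit sees only its own previous value."""
    return [math.tanh(w_h[i] * h[i] + w_x[i] * x) for i in range(len(h))]

def run_with_trace(xs, w_h, w_x):
    """Run a sequence and keep every intermediate hidden state."""
    h = [0.0] * len(w_h)
    trace = [list(h)]  # initial state, then one entry per time step
    for x in xs:
        h = step(h, x, w_h, w_x)
        trace.append(list(h))
    return h, trace

def trace_size_bytes(num_steps, hidden_units, bytes_per_value=4):
    """Raw storage for one decision's full hidden-state trace."""
    return (num_steps + 1) * hidden_units * bytes_per_value

# Hypothetical toy weights and inputs.
final_state, trace = run_with_trace([0.2, -0.1, 0.4], [0.9, -0.5], [1.0, 0.5])

# Hypothetical scale: 1,000 steps, 100,000 hidden units, 4-byte floats.
audit_bytes = trace_size_bytes(1000, 100_000)

print(len(trace), audit_bytes)
```

Under those assumptions, the raw trace for a single decision comes to roughly 400 MB. Storing it is the easy part. The trace is still a list of unlabeled vectors, and interpreting it is where the cost of an audit lies.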

This is why regulation, however well-intentioned, struggles with these architectures. The EU AI Act requires high-risk AI systems to be “sufficiently transparent to enable deployers to interpret a system’s output,” with full applicability beginning August 2, 2026 and substantial financial penalties for non-compliance (EU AI Act). These provisions are model-agnostic — they do not target recurrent networks specifically. But the opacity of recurrent hidden states makes compliance uniquely difficult: transparency requirements assume the system’s reasoning can be surfaced, and for recurrent architectures, that assumption may not hold.

The Governance Gap Inside the Hidden State

Thesis: The ethical risk of recurrent neural networks in high-stakes decisions is not primarily a problem of biased training data — it is a problem of unauditable memory operating at institutional scale.

Every automated decision system carries the risk of encoded bias. What makes recurrent architectures distinctly dangerous is not that they are more biased than other models, but that their bias is harder to locate. It lives in the hidden state — a compressed, nonlinear representation of everything the model has processed up to that point. That representation is not a record. It is not a transcript. It is not something a court can subpoena or an auditor can review line by line. It is a mathematical object that encodes influence without offering explanation.

When such a system operates in criminal justice, healthcare, or financial services, the people affected by its decisions have no mechanism to understand what prior information shaped their outcome. They cannot appeal to the hidden state. They cannot ask why the model’s memory of a thousand prior cases — cases they had nothing to do with — influenced their own.

That is not a technical shortcoming awaiting a better explainability tool. That is a structural incompatibility between how these systems work and what accountability demands.

The Questions We Owe the Affected

If the hidden state cannot be meaningfully audited in real time, should recurrent architectures be permitted in domains where individuals have a right to explanation? Not prohibited as a political gesture, but restricted as a matter of institutional design — the way certain forms of evidence are excluded from courtrooms, not because they are useless, but because their reliability cannot be adequately assessed.

Who should bear the burden of proof? Should the organization operating a recurrent model demonstrate that its sequential memory does not encode discriminatory patterns? Or should the affected individual bear the impossible task of proving that a mathematical object they cannot inspect caused them harm?

These are not hypothetical questions. They are design decisions that will determine whether the next generation of sequential AI systems operates within or beyond the reach of democratic accountability.

Where This Argument Breaks

This argument is strongest when explainability tools remain slow and approximate. If future research produces real-time, faithful interpretations of hidden state dynamics — not post-hoc approximations, but genuine mechanistic transparency — the case for restricting recurrent models weakens considerably. If the simplicity bias finding proves robust and researchers develop methods to detect and correct proxy-variable shortcuts before they reach consequential systems, the opacity problem may become manageable.

The argument also assumes that alternative architectures — transformers, state-space models — are meaningfully more interpretable. If they prove equally opaque in practice, the problem is not unique to recurrent networks, and the institutional response must be broader than architecture-specific restriction.

The Question That Remains

We built systems that remember, and we did not ask what they would remember, or who would be harmed by memories no one can read. The technical question — can we make hidden states transparent? — matters. But the prior question matters more: should systems with unreadable memory be allowed to make decisions about human lives at all?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.