ScaNN, DiskANN, and Glass: The 2026 ANN-Benchmarks Race and Where Vector Indexing Is Heading

TL;DR

- The shift: Quantization is no longer optional — every top ANN algorithm now depends on it, collapsing the graph-vs-clustering debate into a single engineering question.

- Why it matters: Production vector search is splitting between teams that scale beyond RAM and teams stuck at the HNSW ceiling.

- What’s next: Google and Microsoft are embedding benchmark-winning algorithms into managed cloud services — the algorithm race is becoming a platform war.

The vector indexing leaderboard used to be a clean fight. Graph methods on one side, clustering on the other, HNSW winning most arguments by default. That split just collapsed. The latest benchmark results point to a single engineering choice underneath every top performer, and teams still debating graph versus clustering are already behind.

Quantization Is the Price of Admission

Thesis: The ANN algorithm race is no longer graph versus clustering — it is quantization-first versus everything else.

The VIBE benchmark delivered the signal (VIBE Paper). It is the most recent large-scale ANN evaluation, covering 12 in-distribution and 6 out-of-distribution datasets with modern RAG and multimodal embedding workloads. SymphonyQG, a SIGMOD 2025 entrant integrating RaBitQ quantization with graph indexing, topped 5 of 12 datasets at 95% recall. Glass, built by Zilliz on HNSW and NSG with SQ quantization, took 4 of 12.
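For readers new to the metric, the "95% recall" figures can be read as recall@k: the share of the true k nearest neighbors that the index actually returns, typically averaged over all queries. A minimal sketch, assuming integer IDs and a ground truth produced by exact brute-force search; the function name and sample IDs are illustrative and not taken from the VIBE code:

```python
def recall_at_k(retrieved, ground_truth, k):
    """Fraction of the true top-k neighbors present in the retrieved top-k."""
    if k <= 0:
        raise ValueError("k must be positive")
    true_ids = set(ground_truth[:k])
    found = set(retrieved[:k]) & true_ids
    return len(found) / k


# Hypothetical IDs for one query: 9 of the 10 true neighbors were found.
exact = [3, 7, 1, 9, 4, 8, 2, 6, 0, 5]
approx = [3, 7, 1, 9, 4, 8, 2, 6, 0, 11]
print(recall_at_k(approx, exact, k=10))  # 0.9
```

A result "at 95% recall" then means the index was tuned until this value, averaged across queries, reached 0.95, and throughput was compared at that operating point.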

Every top graph-based and clustering-based method uses quantization in at least some computations (VIBE Paper).

Not a coincidence. Convergent evolution with one shared trait. The algorithms ignoring quantization are falling off the leaderboard. The debate over index topology is now secondary to the compression strategy underneath it.
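To make "quantization" concrete, here is a minimal sketch of 8-bit scalar quantization, the general family the "SQ" label refers to. The per-vector min/max range and the helper names are assumptions for illustration; the exact schemes used by Glass, RaBitQ or ScaNN differ and are not reproduced here:

```python
def sq8_encode(vec):
    """Map each float to an 8-bit code using the vector's own min/max range."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant vectors
    codes = bytes(min(255, max(0, round((x - lo) / scale))) for x in vec)
    return codes, lo, scale


def sq8_decode(codes, lo, scale):
    """Reconstruct approximate floats from the 8-bit codes."""
    return [lo + c * scale for c in codes]


vec = [0.12, -0.53, 0.98, 0.04]
codes, lo, scale = sq8_encode(vec)
approx = sq8_decode(codes, lo, scale)

# One byte per dimension instead of four for float32.
max_err = max(abs(a - b) for a, b in zip(vec, approx))
print(len(codes), "bytes, max reconstruction error:", max_err)
```

The trade is a 4x smaller vector in exchange for a reconstruction error of at most about half a quantization step per dimension, which is why these methods usually rerank a shortlist with more precise distances.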

Three Moves, One Direction

Google shipped ScaNN into two production services within weeks of each other. Vertex AI Vector Search 2.0 launched in January 2026 on the same engine that powers Google Search and YouTube, with sub-10 ms latency at billion scale (Google Cloud). AlloyDB followed with ScaNN-backed inline filtering and recall observability.

The SOAR algorithm underneath — Spilling with Orthogonality-Amplified Residuals — already held state-of-the-art in the Big-ANN out-of-distribution and streaming tracks at NeurIPS 2023 (Google Research Blog). Google didn’t just win benchmarks. It productized them.

Microsoft made the same structural bet from a different angle. DiskANN v0.49.1 shipped in March 2026 with a full Rust rewrite (DiskANN GitHub). Scale numbers: 1 billion vectors on 64 GB of RAM, 95% recall@1 at under 5 ms (DiskANN Overview). That is 5 to 10 times more points per node than HNSW at comparable accuracy.
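A back-of-the-envelope check shows why that RAM figure implies compression. The 128-dimension float32 layout below is an assumption chosen for illustration, not a figure from the DiskANN documentation:

```python
NUM_VECTORS = 1_000_000_000
RAM_BYTES = 64 * 10**9   # 64 GB, using decimal gigabytes
DIM = 128                # assumed dimensionality
FLOAT32_BYTES = 4

budget_per_vector = RAM_BYTES / NUM_VECTORS
raw_per_vector = DIM * FLOAT32_BYTES
raw_total_gb = NUM_VECTORS * raw_per_vector / 10**9
reduction = raw_per_vector / budget_per_vector

print(f"RAM budget per vector: {budget_per_vector:.0f} bytes")  # 64 bytes
print(f"Uncompressed float32 vector: {raw_per_vector} bytes")    # 512 bytes
print(f"Uncompressed dataset: {raw_total_gb:.0f} GB")            # 512 GB
print(f"Required reduction: {reduction:.0f}x")                   # 8x
```

Under these assumptions, whatever stays in memory has to be at least 8 times smaller than the raw vectors, before counting any graph or cache overhead.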

DiskANN runs Bing, Ads, Microsoft 365, Azure Cosmos DB, and SQL Server 2025. A thousand-machine deployment manages 50 billion points — 6 times more efficient than sharded indices (DiskANN Overview).

SymphonyQG arrived from academia with the most robust accuracy profile in the VIBE study — the least sensitive to query difficulty across all tested datasets.

Three independent efforts. Same conclusion: the memory wall is real, and the teams breaking through it are winning.

Compatibility note:

- DiskANN Rust rewrite: The C++ codebase on cpp_main is unmaintained as of v0.49.1 (March 2026). New deployments should target the Rust branch.

Who Is Building on the Right Foundation

Google and Microsoft are converting algorithm advantages into platform gravity. ScaNN inside Vertex AI means Google Cloud customers get benchmark-winning search without managing infrastructure. DiskANN inside Cosmos DB and SQL Server does the same for Azure.

Milvus adopted DiskANN alongside IVF (Inverted File Index) and HNSW — supporting billions of vectors at 10 to 20 thousand queries per second (TensorBlue). Qdrant shipped ACORN filtered HNSW last year with named vectors for dense-plus-sparse hybrid search (TensorBlue).

The open-source projects that embraced quantization and disk-based access early now have production momentum. That momentum compounds.

The HNSW Ceiling and Who’s Under It

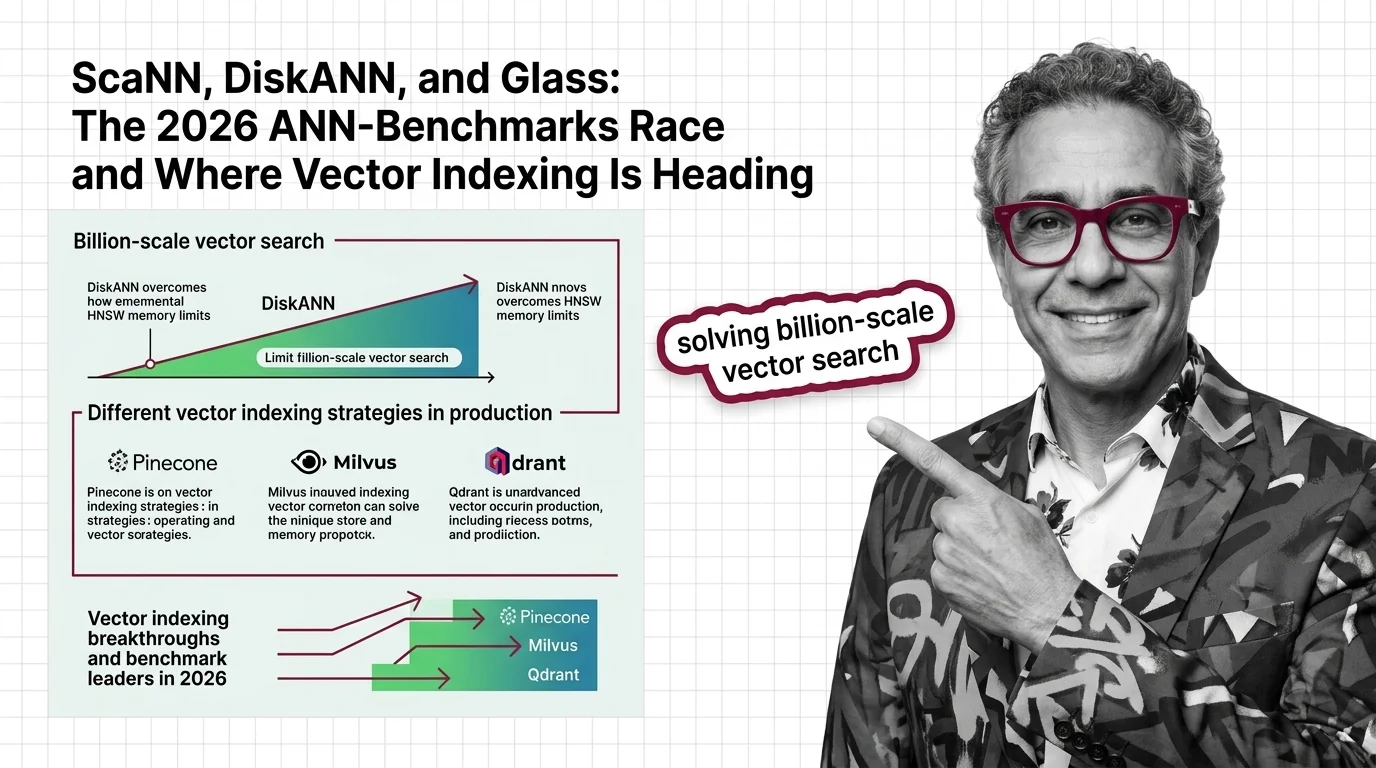

Pure HNSW peaks at 100 to 200 million vectors before memory costs make it impractical at comparable accuracy and latency. Any team building on unmodified HNSW is approaching that wall — or already hitting it.
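A rough estimate shows where that memory cost comes from. The 768 dimensions, the graph degree M = 32 and the simplified layout (uncompressed float32 vectors plus about 2·M four-byte neighbor IDs per vector at the base layer, upper layers ignored) are illustrative assumptions, not measurements of any specific library:

```python
def hnsw_ram_gb(num_vectors, dim=768, m=32):
    """Rough RAM estimate: float32 vectors plus base-layer neighbor lists."""
    vector_bytes = dim * 4   # uncompressed float32 vector
    link_bytes = 2 * m * 4   # assumed 2*M neighbor IDs, 4 bytes each
    return num_vectors * (vector_bytes + link_bytes) / 10**9


for n in (100_000_000, 200_000_000):
    print(f"{n:,} vectors: ~{hnsw_ram_gb(n):.0f} GB")
```

With these inputs the estimate lands in the hundreds of gigabytes, and the vectors themselves account for most of it, which is the part quantization and disk-based layouts attack.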

Pinecone runs proprietary indexing that does not appear in public ANN-Benchmarks results. Sub-50 ms p99 latency at up to roughly 100 million vectors is the published claim. Without independent benchmarks, that stays a marketing number.

Glass won 4 of 12 VIBE datasets. But its GitHub repository has roughly 140 stars against Faiss’s 30,000-plus. Benchmark wins without community adoption are academic trophies. And recall drops to roughly 70% on the hardest out-of-distribution queries.

You’re either scaling past the HNSW ceiling or you’re rebuilding later.

What Happens Next

Base case (most likely): Quantization-augmented graph methods and disk-based indices become the default for new deployments above 100 million vectors. Cloud-managed vector search absorbs most of the market. Signal to watch: AWS adding DiskANN or SymphonyQG to a managed similarity search service. Timeline: Next 12 months.

Bull case: A successor algorithm unifies graph and quantization so effectively that a single library dominates both million-scale and billion-scale workloads. The index choice becomes trivial. Signal: One library matching DiskANN at billion scale and SymphonyQG at million scale on the same benchmark. Timeline: 18 to 24 months.

Bear case: Fragmentation. No algorithm wins across scales, and teams maintain separate indices for different workload sizes — increasing operational cost. Signal: Big-ANN and VIBE winners continue diverging with no crossover. Timeline: Ongoing.

Frequently Asked Questions

Q: How do Pinecone, Milvus, and Qdrant use different vector indexing strategies in production? A: Milvus supports IVF, HNSW, and DiskANN for scale flexibility. Qdrant runs HNSW with quantization and added ACORN filtered HNSW for hybrid search. Pinecone uses proprietary managed indexing unavailable on public benchmarks.

Q: How did DiskANN solve the billion-scale vector search problem that HNSW couldn’t handle in memory? A: DiskANN keeps the graph and full-precision vectors on SSD and holds only compressed vectors in RAM, which is how a billion vectors fit into 64 GB of memory. That is 5 to 10 times more points per node than HNSW at comparable accuracy.

Q: What are the biggest vector indexing breakthroughs and benchmark leaders in 2026? A: SymphonyQG leads 5 of 12 VIBE datasets with RaBitQ quantization. ScaNN’s SOAR dominates clustering. DiskANN v0.49.1 owns billion-scale SSD-based search. All top methods share quantization as a core strategy.

The Bottom Line

The ANN algorithm race converged on one answer: quantization is mandatory and RAM-only architectures have a ceiling. Google and Microsoft already embedded their winners into cloud platforms. The window to choose your indexing strategy before the platform chooses for you is closing.
