Scaling Walls, Data Exhaustion, and the Technical Limits of Pre-Training in 2026

Table of Contents

ELI5

Pre-training teaches a model language by predicting tokens across massive datasets. But the compute, data, and budgets it demands are hitting physical limits — forcing the field to find new ways to improve AI.

Six frontier models sit within 0.8 points of each other on SWE-bench Verified as of March 2026 — GPT-5.4, Claude Opus 4.6, Gemini 3 Pro, Llama 4 Behemoth, DeepSeek V3. Different architectures, wildly different training budgets, nearly identical benchmark scores. The obvious question is why they converged. The less obvious question — the one that reshapes the economics of the entire field — is what that convergence reveals about the limits of Pre Training.

The Exponential That Stopped Paying Off

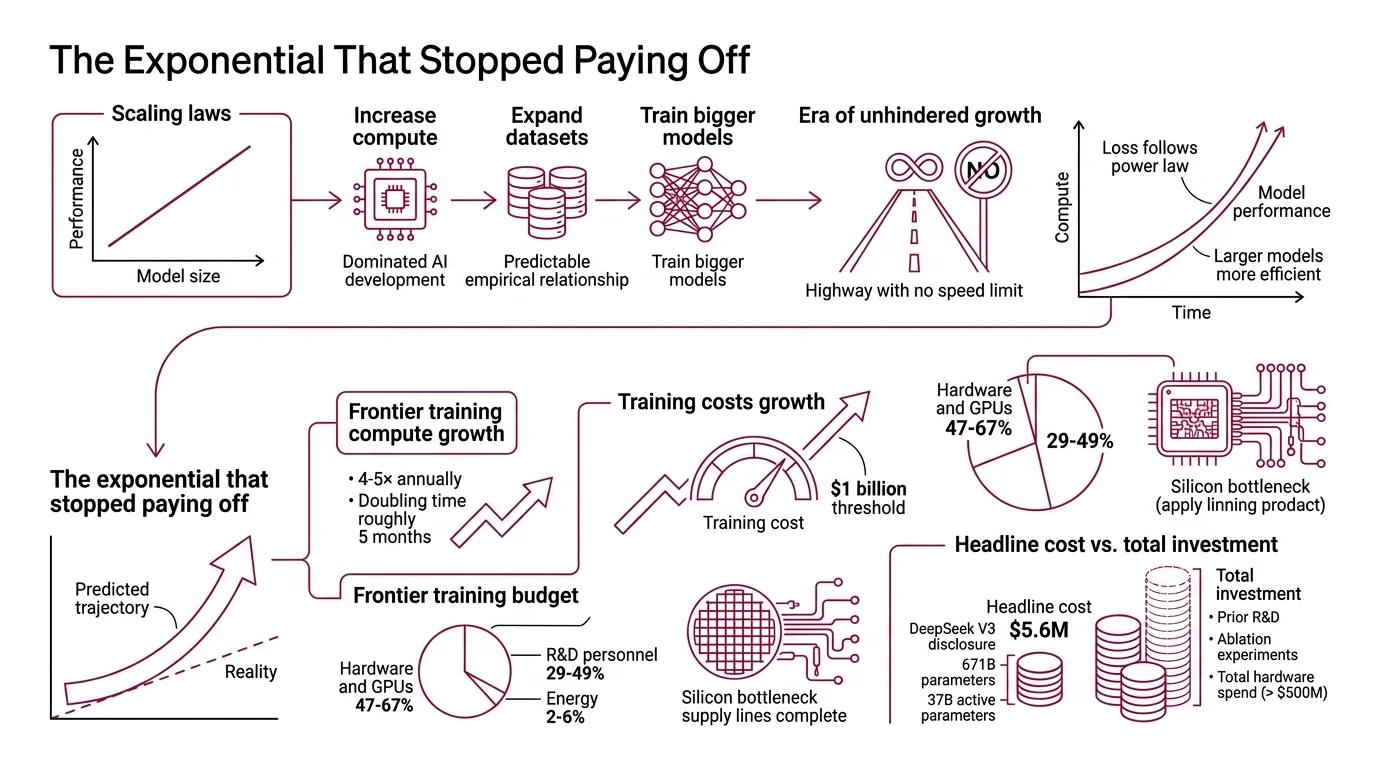

For five years, a single strategy dominated AI development: scale the model, feed it more data, spend more compute. The strategy worked because Scaling Laws said it would — and the empirical relationship was almost suspiciously clean.

Kaplan et al. formalized this in 2020: loss follows a power law with model size, dataset size, and compute budget, spanning seven or more orders of magnitude (Kaplan et al.). Larger models proved more sample-efficient, extracting more learning per token seen. That regularity became the industry’s operating thesis — a mathematical argument for building ever-larger clusters.

For a while, it looked like a highway with no speed limit.

Why are LLM pre-training compute costs still growing exponentially in 2026?

Because the power law demands it — and the penalty for stopping is falling behind.

Frontier training compute has grown at 4–5× per year since 2010, with a point estimate of 4.1× annually and a 90% confidence interval of 3.7–4.6×, translating to a doubling time of roughly five months (Epoch AI). Training costs have tracked at 2.4× per year since 2016, and projections cross the $1 billion threshold per training run by 2027 (Epoch AI).

The cost structure reveals where the pressure concentrates. Hardware and GPUs absorb 47–67% of frontier training budgets, R&D personnel 29–49%, and energy a surprisingly modest 2–6% (Epoch AI). The bottleneck is silicon, not electricity — a detail that explains why GPU allocation has become a geopolitical variable rather than merely a procurement problem.

DeepSeek V3 illustrates how the headline numbers distort the picture. Its disclosed training cost was $5.6 million — a 671-billion-parameter mixture-of-experts model with 37 billion active parameters, trained on 2,048 H800 GPUs over 55 days (Interconnects). That figure is real, but it excludes prior R&D, ablation experiments, and a total hardware spend exceeding $500 million. The sticker price is not the actual price.

The exponential continues because the power law still holds for loss reduction. But loss reduction and downstream task improvement are not the same curve. The gap between optimizing a loss function and producing a model that is measurably better at real tasks is an active research question — not a settled one. The money keeps flowing. The returns keep thinning.

Three Hundred Trillion Tokens and a Shrinking Pool

Compute can be scaled with capital. Data cannot be scaled with anything, because the total stock of high-quality human-generated text is finite — and we are closing in on a measured boundary.

What happens when large language models run out of high-quality training data?

Epoch AI estimates the global stock of high-quality text at approximately 300 trillion tokens, with a 90% confidence interval spanning 100 trillion to one quadrillion (Epoch AI). That range sounds enormous until you place it alongside the appetite of frontier training runs.

The timeline is tighter than most projections suggest. High-quality text data may already be effectively exhausted, or may last until 2028. The full data stock — including lower-quality sources — falls within an 80% confidence interval of 2026–2032 (Epoch AI). With aggressive overtraining at 5× compute-optimal ratios, the wall arrives around 2027; at compute-optimal rates, around 2028. These estimates were revised upward by approximately four years from Epoch AI’s 2022 projections — a revision that reveals the uncertainty itself is the signal.

One option when the wall arrives is recycling: train on synthetic data generated by other models. The result, documented in Nature by Shumailov et al., is model collapse — recursive training on AI-generated data causes irreversible loss of distribution tails. The rare patterns, the unusual phrasings, the long-tail knowledge that makes a model genuinely useful for edge cases erode within a few generations of synthetic recursion.

Synthetic data is not uniformly toxic. Mixed with real human text, it can accelerate training to a target performance level significantly faster than either source alone. But pure synthetic data does not outperform even CommonCrawl. The human signal remains the irreplaceable anchor.

Data Deduplication helps stretch the existing pool by removing redundant training examples, but it cannot manufacture new knowledge. The reservoir has a measurable floor, and the frontier is closer to that floor than to the surface.

The Geometry of Diminishing Returns

The third wall is subtler than cost or data scarcity — and arguably more consequential. The returns on pre-training compute are not merely expensive. They are shrinking in a way that no budget can remedy, because the math itself imposes a ceiling.

What are the diminishing returns of scaling pre-training compute and parameters?

Hoffmann et al. demonstrated the core principle in 2022. A 70-billion-parameter model trained on approximately 1.4 trillion tokens — the Chinchilla-optimal ratio of roughly 20 tokens per parameter — matched the 280-billion-parameter Gopher using the same compute budget, reaching 67.5% on MMLU, seven points above Gopher (Hoffmann et al.). The implication was precise: most models at the time were too large for their data budget.

In practice, the 20:1 ratio has become a floor, not a ceiling. Llama 3 trained at roughly 40 tokens per parameter, deliberately overtraining a smaller architecture for inference efficiency. The Chinchilla ratio describes a starting point for compute allocation, not a hard constraint.

But even with optimal allocation, the power law has a geometric property that works against you. Each successive halving of loss demands roughly the same multiplicative increase in compute. Early gains are dramatic; late gains are microscopic — and the cost of each marginal improvement grows without bound. This is not a failure of engineering. It is the shape of the curve.

The field has responded by shifting its weight. Post-training techniques — supervised Fine Tuning, preference optimization, reinforcement learning with verifiable rewards — now account for the majority of usable capability in frontier models (LLM Stats). Pre-training builds the representational foundation; post-training sculpts it into something a user can rely on. RLHF was an early version of this sculpting; newer methods like GRPO and DAPO push the alignment frontier further.

Test-time compute — spending additional inference cycles on deliberation, the approach pioneered by o1/o3-style reasoning — has become the primary performance lever for 2025–2026, offsetting the pre-training scaling plateau. The economics shifted from training to inference.

Distributed training frameworks like Megatron-LM and Deepspeed, and techniques like Masked Language Modeling, remain foundational infrastructure. They made the pre-training era possible. But the era when more pre-training compute was the default path to a better model has ended.

What the Scaling Curves Predict Next

If you are training a foundation model in 2026, the arithmetic looks different from 2022.

If your training budget sits below the frontier, the Chinchilla ratio still provides a reasonable allocation baseline — but expect to overtrain deliberately for inference efficiency. If your budget approaches the frontier, the marginal return per dollar on pre-training is almost certainly lower than the same dollar spent on post-training alignment or test-time inference infrastructure.

If you are relying on web-scraped data without aggressive deduplication and quality filtering, your model’s distribution tails are already degraded. The proportion of synthetic content in common crawl datasets grows every month, and model collapse is a measured phenomenon — not a theoretical concern.

If you are building for a domain where rare-event coverage matters — medical reasoning, legal analysis, scientific edge cases — the data wall arrives earlier and harder than the aggregate numbers suggest. Your effective training distribution is a thin slice of the total pool.

Rule of thumb: In 2026, a dollar spent on post-training or inference-time reasoning yields more measurable capability improvement than a dollar spent on additional pre-training compute — for models that have already reached frontier scale.

When it breaks: The shift to post-training and test-time compute assumes a strong pre-trained foundation. Models that skip or under-invest in pre-training lack the representational depth for later techniques to refine. The wall is real, but the foundation still needs to be built before anything gets stacked on top of it.

The Data Says

Pre-training built the modern AI industry on a single bet — that scale would keep working. The bet paid off until three walls converged: compute costs growing at 4–5× per year, a finite data stock measured in the hundreds of trillions of tokens, and a power law that demands exponentially more for each incremental gain. The field has already shifted its weight to post-training and test-time compute. The age of pre-training as the dominant lever for progress is not over, but its dominance is.

AI-assisted content, human-reviewed. Images AI-generated. Editorial Standards · Our Editors