Rubber-Stamp Approvals: The Ethical Cost of Human-in-the-Loop Theater

The Hard Truth

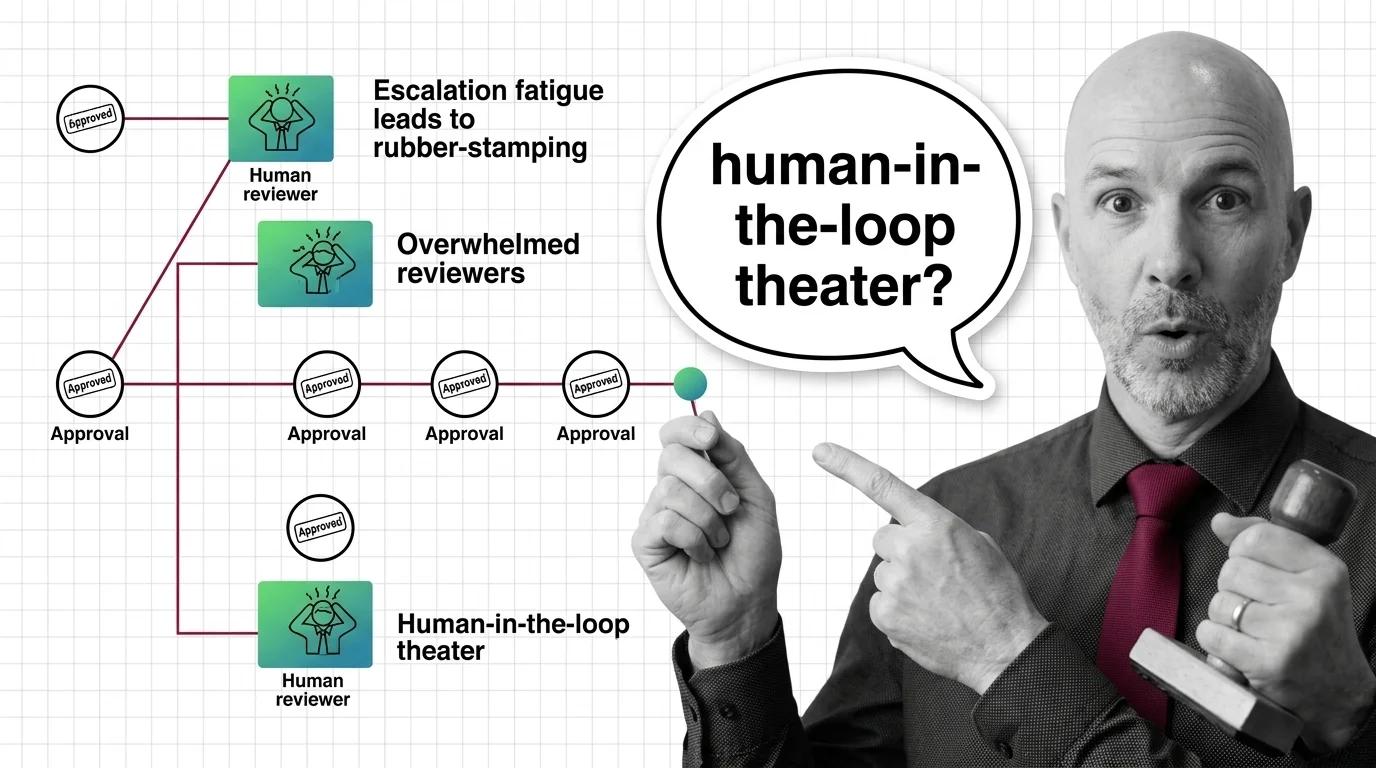

A reviewer sits in front of a queue that adds new items faster than she can read them. Each approval is one click. The system is designed to require her judgment, but the architecture has already decided what she will do. So when one of those approvals causes harm, who exactly authorized it — the system that produced the click, or the human who could not realistically have done anything else?

This is what human-in-the-loop for agents looks like in the kind of production deployment nobody photographs for the case study. The diagram still shows a human in the box. The org chart still names a reviewer. The audit log still records a sign-off. And yet the only thing the human can meaningfully add to the loop, at the volume she is handed, is a signature, which is precisely the part of oversight that has the least to do with whether the decision is right.

When the Queue Beats the Reviewer

There is a question the field keeps avoiding because the answer is uncomfortable: what is the ethical status of an approval that a human did not have the time, context, or authority to refuse? The standard framing treats human-in-the-loop as a binary — either there is a reviewer in place or there is not. But oversight is not a switch. It is a budget. Reviewers have a finite attention budget, a finite knowledge budget, and a finite institutional budget for saying “no.” When agent throughput exceeds any of those, the loop is still nominally closed. It is just no longer doing what it was built to do.

The pattern is not new; it is just newly automated. Security operations centres have been living with this shape of failure for a decade. A 2025 synthesis of industry data on alert fatigue found that, across surveyed environments, large fractions of generated alerts are ignored, unhandled, or never investigated at all (ACM Computing Surveys, 2025). The authors themselves caution that these figures should be read as an illustrative range rather than a precise statistic. That is not a story about lazy analysts. It is a story about what happens when alerting volume is engineered without a corresponding budget for human attention. The same dynamic now installs itself, one agent integration at a time, into approval queues that were never designed with reviewer capacity as a constraint.

So the uncomfortable question is not whether oversight exists. It is whether oversight, at the volume the system produces, can do what it claims to do.
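One way to make the budget framing concrete is a back-of-the-envelope capacity check. The sketch below is illustrative only: the function names, the 80% focus fraction, and the example arrival rates are assumptions chosen for the arithmetic, not figures from any cited source.

```python
def seconds_per_item(arrivals_per_hour: float, reviewers: int,
                     focus_fraction: float = 0.8) -> float:
    """Average attention, in seconds, available for each queued item.

    focus_fraction is an assumed share of each hour a reviewer can
    actually spend reading items (the rest goes to breaks, context
    switches, escalations).
    """
    if arrivals_per_hour <= 0 or reviewers <= 0:
        raise ValueError("arrivals_per_hour and reviewers must be positive")
    if not 0 < focus_fraction <= 1:
        raise ValueError("focus_fraction must be in (0, 1]")
    return reviewers * 3600 * focus_fraction / arrivals_per_hour


def is_reviewable(arrivals_per_hour: float, reviewers: int,
                  seconds_needed: float, focus_fraction: float = 0.8) -> bool:
    """True if the time available per item covers the time a real review needs."""
    available = seconds_per_item(arrivals_per_hour, reviewers, focus_fraction)
    return available >= seconds_needed


if __name__ == "__main__":
    # Hypothetical arrival rates for a single reviewer who needs about
    # 90 seconds to read an item with enough context to refuse it.
    for rate in (30, 120, 600):
        available = seconds_per_item(rate, reviewers=1)
        verdict = "reviewable" if is_reviewable(rate, 1, 90) else "signature only"
        print(f"{rate:>4} items/hour -> {available:6.1f} s per item ({verdict})")
```

The point of the arithmetic is modest: once the seconds available per item drop below the seconds a refusal would require, the loop is closed on paper and open in practice.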

What Makes the Loop Defensible

The strongest case for human-in-the-loop is not naive. It is that for genuinely high-stakes actions — financial transactions, irreversible deletions, communications sent under a person’s name, medical or legal recommendations — even imperfect human review is usually better than no review. A reviewer who catches one error in twenty has still prevented one harm. The European Union’s AI Act, in Article 14, makes effective human oversight a mandatory design property of high-risk systems and explicitly notes that oversight persons must “remain aware of the possible tendency of automatically relying or over-relying on the output” (EU AI Act Article 14). The legislators clearly understood that a reviewer can become a rubber stamp; the answer in the regulation is that the system itself must be designed so that does not happen.

There is also a real engineering practice growing up around this. Mature agent guardrails architectures now distinguish between high-risk actions that always require approval and low-risk actions that can be auto-executed but sampled: for example, requiring 100% review of high-risk approvals while randomly sampling 5–20% of low-risk ones to detect drift over time, a pattern documented in vendor write-ups on agent approval design (Prefactor). Pair this with agent evaluation and testing that monitors approval-acceptance rates as a signal, and the loop becomes harder to fake. None of this is theatrical. It is the version of oversight that takes its own job seriously.

This is the case to take at full strength before disagreeing with it. Inside its own frame, it is coherent.
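The tiered pattern described above (always review high-risk actions, sample low-risk ones, watch the acceptance rate) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: the action names, the 10% default sample rate, and the 99% acceptance threshold are all hypothetical placeholders.

```python
import random
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical set of action types treated as high-risk.
HIGH_RISK_ACTIONS = {"payment", "irreversible_delete", "send_as_user"}


@dataclass
class Decision:
    needs_review: bool
    reason: str


def route(action_type: str, sample_rate: float = 0.10,
          rng: Optional[random.Random] = None) -> Decision:
    """Send every high-risk action to review; sample low-risk ones."""
    rng = rng or random.Random()
    if action_type in HIGH_RISK_ACTIONS:
        return Decision(True, "high-risk: always reviewed")
    if rng.random() < sample_rate:
        return Decision(True, "low-risk: sampled to detect drift")
    return Decision(False, "low-risk: auto-executed")


def acceptance_rate(outcomes: List[bool]) -> float:
    """Share of reviewed items that were approved (True means approved)."""
    if not outcomes:
        raise ValueError("no review outcomes recorded")
    return sum(outcomes) / len(outcomes)


def looks_like_rubber_stamping(outcomes: List[bool], threshold: float = 0.99,
                               min_reviews: int = 200) -> bool:
    """Flag a suspiciously high approval rate once enough reviews exist.

    The threshold and minimum sample size are assumptions; a flag is a
    prompt to look at reviewer conditions, not proof of anything.
    """
    return len(outcomes) >= min_reviews and acceptance_rate(outcomes) >= threshold
```

A near-total acceptance rate can also mean the agent is simply good at its job, so a check like this is best read as a reason to go and watch the queue, not as a verdict on the reviewer.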

The Assumption That Volume Stays Reviewable

The defense rests on a quiet assumption: that the volume of decisions an agent produces will stay inside the range a human reviewer can meaningfully process. That assumption is empirically fragile, and decision science suggests it is fragile in a structural way. A 2010 review in Human Factors by Parasuraman and Manzey, still treated as the canonical synthesis on automation complacency, concluded that automation bias “cannot be prevented by training or instructions” and affects naive and expert users alike (Parasuraman & Manzey, Human Factors 2010). A more recent legal-philosophy analysis in the European Journal of Risk Regulation reads the AI Act’s automation-bias clause and notes the same hard truth: you can require oversight by statute, but you cannot legislate away the cognitive tendency that makes high-volume oversight collapse into rubber-stamping.

So the assumption is that we can put a human in the loop and somehow exempt that human from the cognitive limits we have measured for fifty years. We cannot. The Amazon recruiting model that systematically downgraded women’s resumes is the classic case study in what happens when the named reviewer has neither the technical knowledge to detect the pattern nor the institutional authority to halt the system; commentators have read it as a failure not of human review but of the conditions that would have made human review meaningful (Barnard-Bahn). Scaling agent throughput while leaving reviewer capacity flat does not produce more oversight. It produces faster signatures.

The Crumple Zone of Approval

There is a useful concept from the wider study of automation that has not yet fully crossed into the agent conversation. In a 2019 paper in Engaging Science, Technology, and Society, the researcher Madeleine Clare Elish described what she called the “moral crumple zone”: a structural pattern, originally observed in self-driving cars and aviation autopilot, in which legal and reputational responsibility is “deflected off automated parts” of a system and onto the nearest human operator, even when that human had limited control over the outcome (Elish, Engaging STS 2019). The concept was developed for vehicles and cockpits. Applying it to enterprise agent stacks is an extension, not a direct quote, but the structural shape is uncomfortably similar.

Read the standard agent-approval architecture through that lens. The model produces the recommendation. The orchestration layer delivers it to a queue. The reviewer clicks. When a regulator, an auditor, or an injured user later asks who decided, the model vendor points to the deployer, the deployer points to the integration team, the integration team points to the reviewer, and the reviewer points to a queue she could not have read in the time she had. The crumple zone is not at the model. It is not at the platform. It is the chair the human sat in.

Uri Maoz, writing in MIT Technology Review about military human-in-the-loop in April 2026, named the underlying asymmetry in a phrase worth borrowing past its original context: “the human cannot know the AI’s intention before it acts” (MIT Technology Review). His piece is specifically about military uses, and the generalisation to enterprise agents is editorial. But the asymmetry he describes is the same one a reviewer faces in a benefits-eligibility queue, a content-moderation pipeline, or an autonomous code-deployment system: the approval is being asked of a human who can see the surface of the decision but not the reasoning that produced it.

Where the Cost Actually Lands

When approval volume outstrips reviewer capacity, human-in-the-loop degenerates from oversight into compliance theater, and the ethical cost lands on the human reviewer placed in the crumple zone, not on the system designer who built the queue.

That cost is not always abstract. In 2021–2022, OpenAI contracted Sama to use Kenyan workers to label deeply traumatic content for safety training, paying the workers a take-home rate of roughly $1.32 per hour while OpenAI paid Sama about $12.50 per hour — and the project was cancelled in February 2022, eight months early, because the human cost of the work proved untenable (TIME). The case is emblematic of one period and one contract, not of how all reinforcement-learning labelling is paid in 2026, but it makes one thing concrete. Whenever a system needs human judgment at industrial scale, somebody is the human, and the conditions of their work are an ethical fact about the system. Approval queues for autonomous agents are now becoming the next version of this arrangement, only with the labour distributed across regulated employees inside the buyer’s own organisation rather than contractors offshore.

The thesis grows harder, not softer, as architectures improve. A faster, smarter agent generates a longer queue, which makes the temporal gap between recommendation and review wider, which makes meaningful refusal less practical, which pushes the crumple zone deeper into the reviewer’s chair.

Questions to Ask Before You Wire the Approval Queue

There are no clean prescriptions, only better starting questions. What is the maximum queue depth at which the human reviewer in your design can still read each item with the context required to refuse it — and what happens, by design, when that depth is exceeded? Has the team that built the integration ever sat with the reviewer for a full shift to watch what oversight actually looks like at production volume? When the model and the reviewer disagree, whose conclusion is recorded as the system’s decision, and whose name is on the audit log when it is wrong? And in domains touched by the EU AI Act’s high-risk obligations, which become enforceable on August 2, 2026 (EU AI Act Service Desk), what would your team be able to tell a regulator about the conditions under which a specific approval was given?

These are not engineering questions. They are governance questions wearing engineering clothes — and answering them is one of the few interventions that converts oversight from theatre back into oversight.
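The first of those questions, what happens by design when queue depth is exceeded, does have an engineering shadow. The sketch below shows one possible answer: a queue that fails closed, holding overflow items instead of letting them through or piling them onto the reviewer. The class name, the `deferred` list, and the choice to hold items are assumptions for illustration; what the right overflow behaviour is remains a governance decision.

```python
from collections import deque
from typing import Any, Deque, List, Optional


class BoundedReviewQueue:
    """Review queue with a hard depth limit that fails closed.

    max_depth stands in for the largest backlog a reviewer can still
    read with enough context to refuse an item. Anything beyond it is
    held in `deferred`: not approved, not executed, and visible to
    whoever owns the capacity problem.
    """

    def __init__(self, max_depth: int) -> None:
        if max_depth <= 0:
            raise ValueError("max_depth must be positive")
        self.max_depth = max_depth
        self._items: Deque[Any] = deque()
        self.deferred: List[Any] = []

    def submit(self, item: Any) -> bool:
        """Return True if the item was queued for review, False if held."""
        if len(self._items) >= self.max_depth:
            self.deferred.append(item)  # held back, never auto-approved
            return False
        self._items.append(item)
        return True

    def next_for_review(self) -> Optional[Any]:
        """Hand the reviewer the oldest queued item, or None if empty."""
        return self._items.popleft() if self._items else None

    def depth(self) -> int:
        return len(self._items)
```

A design like this moves the overflow from the reviewer's chair to a place the organisation has to look at, which is the substance of the question even if the code itself is trivial.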

Where This Argument Is Weakest

This argument depends on a claim that approval-queue capacity is being set by throughput and not by reviewer reality. That could be wrong. NIST is preparing an AI Agent Interoperability Profile, planned for release in late 2026, which may begin to standardise the metrics under which agentic oversight is measured. Maturing audit tooling for agent traces, paired with realistic queue-depth budgeting and role-level accountability, could make oversight load a first-class design constraint rather than a residual one. If reviewer capacity becomes a hard parameter that engineering teams plan around — the way they plan around latency or cost — the argument here collapses into a transitional observation about an immature field. The honest position is that the institutions that would enforce that change are still being built.

The Question That Remains

If a system you do not control routes a decision to a human you do not employ, at a volume she cannot meaningfully review, in a tempo she cannot meaningfully refuse — and the audit log records her name on the outcome — whose conscience is the system actually borrowing? Until the field can answer that without flinching, every approval queue is a quiet wager that nobody on the next shift will be the one who clicks the wrong thing on a day when nobody could have done otherwise.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

Ethically, Alan.

AI-assisted content, human-reviewed. Images AI-generated.