Quadratic Attention, Concentrated Power: Who Wins and Who Loses as Attention Models Scale

The Hard Truth

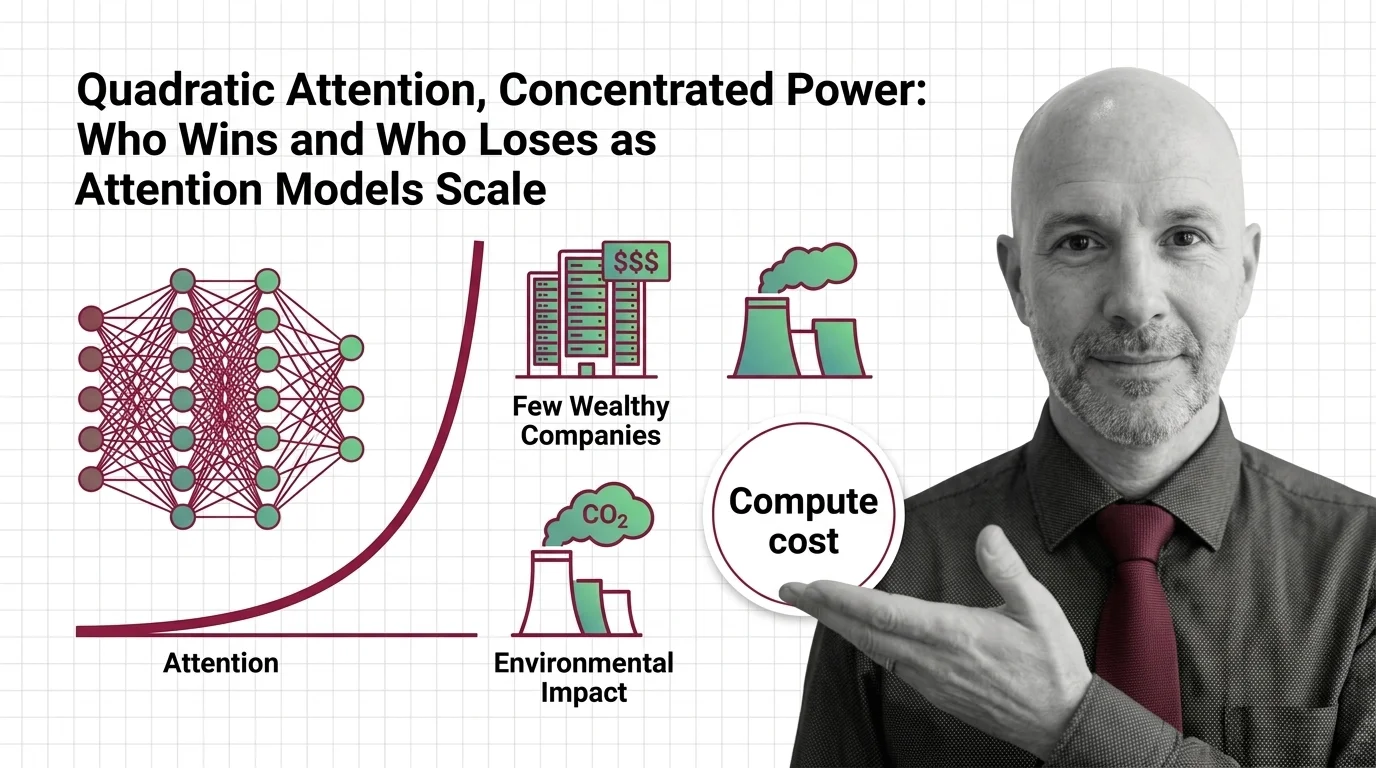

What if the most consequential decision in modern AI was not which model to build, but which exponent to accept? The attention mechanism scales quadratically. Every doubling of context does not double the cost — it quadruples it. Who can afford to keep doubling?

We talk about attention as if it were a neutral mathematical operation — a way for models to decide what matters. But hidden inside that operation is an economic filter, a gate that determines not just which tokens relate to which, but which organizations get to participate in the frontier at all. The exponent is quiet. Its consequences are not.

The Exponent Everyone Accepts

The attention mechanism sits at the heart of every frontier language model produced in the last four years. GPT-4o, Claude, Gemini, Llama: all of them run on the same fundamental design, the Transformer architecture, and all of them carry the same mathematical burden, O(n²) in memory and O(n²·d) in time, where n is the sequence length and d is the model dimension (Fichtl et al.). That superscript is easy to gloss over in a research paper. It is much harder to gloss over when it arrives as a procurement decision.
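To make the exponent concrete, here is a minimal sketch that counts the work in a single attention head. It assumes only the two n-by-n matrix products are counted (queries against keys, then weights against values); projections, multiple heads, and constant factors are ignored, and the function names are illustrative rather than taken from any library.

```python
def attention_cost(n_tokens: int, d_model: int) -> dict:
    """Order-of-magnitude cost of full self-attention for one head.

    Assumption: only the two n x n matrix products are counted, so the
    numbers are rough estimates, not a hardware-accurate model.
    """
    score_entries = n_tokens * n_tokens                 # O(n^2) memory for the score matrix
    multiply_adds = 2 * n_tokens * n_tokens * d_model   # O(n^2 * d) time
    return {"score_entries": score_entries, "multiply_adds": multiply_adds}


def growth_when_doubling(n_tokens: int, d_model: int) -> float:
    """Ratio of attention compute after the context length is doubled."""
    before = attention_cost(n_tokens, d_model)["multiply_adds"]
    after = attention_cost(2 * n_tokens, d_model)["multiply_adds"]
    return after / before


# Illustrative context lengths; d_model=128 is an arbitrary example value.
for n in (4_096, 8_192, 16_384):
    cost = attention_cost(n, d_model=128)
    print(n, cost["score_entries"], cost["multiply_adds"])

print(growth_when_doubling(4_096, 128))
```

Whatever the starting length, the ratio returned by `growth_when_doubling` is 4: doubling the context quadruples both the score matrix and the arithmetic.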

Training costs for frontier models have been growing at roughly 2.4x per year, and if that trend holds, the billion-dollar training run will arrive by 2027 (Epoch AI). Not the billion-dollar company. The billion-dollar single experiment. Within that cost structure, hardware alone accounts for the majority — somewhere between half and two-thirds of total spend — with the rest split between the researchers who design these runs and the energy that sustains them.
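The compounding behind that projection can be sketched in a few lines. The 2.4x annual growth rate is the one quoted above; the $100 million starting cost is a hypothetical placeholder chosen purely for illustration, not a figure from Epoch AI, and `years_to_reach` is an illustrative helper name.

```python
import math


def years_to_reach(start_cost: float, target_cost: float, growth_per_year: float) -> float:
    """Years of compound growth needed for start_cost to reach target_cost."""
    if start_cost <= 0 or target_cost <= 0:
        raise ValueError("costs must be positive")
    if growth_per_year <= 1:
        raise ValueError("growth_per_year must be greater than 1")
    return math.log(target_cost / start_cost) / math.log(growth_per_year)


# Hypothetical starting point (an assumption, not a sourced number).
hypothetical_start = 100e6   # USD
target = 1e9                 # USD, the "billion-dollar training run"
growth = 2.4                 # per year, as cited in the text

print(round(years_to_reach(hypothetical_start, target, growth), 2))
```

Under those assumptions, a tenfold increase in cost takes a little over two and a half years. The point is less the exact date than how quickly a 2.4x multiplier closes any gap.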

Who can write that check? As of early 2026, the four largest hyperscalers — Amazon, Alphabet, Microsoft, and Meta — have committed a combined total approaching $700 billion in infrastructure spending, though not all of it is dedicated to AI alone (CNBC). The exponent is a membership fee, and the number of institutions that can pay it is shrinking.

The Reasonable Defense

Before diagnosing the problem, it is worth understanding why thoughtful engineers defend the status quo. Scaled dot-product attention works. It captures long-range dependencies that earlier architectures could not reach. It enabled the reasoning capabilities that turned language models from curiosities into infrastructure. Innovations like grouped-query attention and FlashAttention have made the quadratic cost more manageable, using shared key-value heads and clever memory access patterns to win meaningful throughput gains without changing the exponent itself.

The argument, stated at its strongest, goes like this: quadratic attention is expensive, but it produces the best results. A 2025 survey found that no sub-quadratic model ranks in the top ten on the LMSys leaderboard (Fichtl et al.). State-space models offer linear scaling and faster inference, but at the frontier, where the hardest reasoning happens, the quadratic architecture remains unmatched. Why replace what nothing else can yet equal?

That is a reasonable position. It is also the position of those who can afford the electricity.

The Assumption Inside the Architecture

The conventional defense assumes that compute abundance is a neutral condition — that the cost of attention is merely a technical parameter, to be optimized over time, and that optimization will eventually make it accessible to everyone. But there is a quieter assumption underneath: that the concentration of resources during the optimization period does not permanently reshape who gets to build, who gets to compete, and whose values get encoded into the systems that govern public life.

Consider what the quadratic exponent does in practice. It means that the cost of extending a model’s context, the amount of information it can reason about simultaneously, does not grow linearly with the length of that context. Every step forward costs disproportionately more. This is not a problem if you are sitting on a two-hundred-billion-dollar infrastructure budget. It is an extinction event if you are a university research lab, a startup in Nairobi, or a public institution trying to build sovereign AI capacity.

The softmax function, which normalizes attention scores so the model can weight relevant tokens, is itself a bottleneck that hardware manufacturers are racing to optimize; NVIDIA’s Blackwell Ultra architecture specifically targets this operation. But hardware optimization reinforces the dependency rather than dissolving it. Faster attention on proprietary silicon does not distribute the ability to compute attention. It concentrates capability further among those who can afford the latest chips.
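For readers who want to see where softmax sits in the computation, here is a minimal pure-Python sketch of scaled dot-product attention. It is a teaching example under simplifying assumptions (a single head, no masking, no batching, plain lists instead of tensors), not how any production system implements the operation.

```python
import math


def softmax(scores):
    """Numerically stable softmax: turns raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Single-head attention over lists of equal-length float vectors."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # One row of the n x n score matrix: this query against every key.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs


# Two identical keys receive equal weight, so the output is the mean of the values.
print(scaled_dot_product_attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 1.0], [1.0, 1.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
))
```

The nested loop is the exponent made visible: every query is scored against every key, and softmax runs once per row of that n-by-n grid.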

Epoch AI summarized the dynamic plainly: only a few large organizations can keep up with these expenses, potentially limiting innovation and concentrating influence over frontier AI development.

The Planet in the Denominator

The cost of quadratic attention is not only financial. It is planetary.

Data centers consumed roughly 415 TWh of electricity globally in 2024 — about 1.5% of global electricity demand. The International Energy Agency projects that figure will reach approximately 945 TWh by 2030, with AI servers growing at roughly 30% per year (IEA). Training GPT-3 alone required 1,287 MWh of energy and produced 552 tons of CO2 (MIT News). It also evaporated an estimated 700,000 liters of freshwater — a figure specific to Microsoft’s US data centers, not a global measure (Brookings).
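The figures above imply two rates that are easy to check by hand. The sketch below uses only the numbers quoted in this section; the helper name is illustrative, and the calculation assumes smooth compound growth between 2024 and 2030, which real demand will not follow exactly.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Constant yearly growth rate that carries start_value to end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Data center electricity: 415 TWh (2024) to a projected 945 TWh (2030).
data_center_growth = implied_annual_growth(415.0, 945.0, 2030 - 2024)

# GPT-3 training: 552 tons of CO2 over 1,287 MWh.
gpt3_tons_co2_per_mwh = 552.0 / 1287.0

print(f"{data_center_growth:.1%} per year")
print(f"{gpt3_tons_co2_per_mwh:.2f} tons CO2 per MWh")
```

The overall projection works out to a little under 15% per year, about half the rate cited for AI servers, and the GPT-3 figures imply roughly 0.43 tons of CO2 for every megawatt-hour consumed.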

These are not abstract numbers. They represent rivers drawn down, grids strained, and carbon budgets spent — disproportionately in regions that did not choose to host this infrastructure and will not benefit equally from its outputs. A significant share of new data center electricity demand is reportedly being met by fossil fuels. The quadratic exponent is not just an algorithmic property. It is an environmental commitment, enacted without a vote.

The question is not whether AI should consume energy — all computation does. The question is whether an architecture that scales by squaring is the one we should be scaling, given what it demands from a planet already under pressure.

The Exponent as Governance

Here is where the argument arrives at its uncomfortable center. The quadratic cost of attention is not merely an engineering constraint waiting to be optimized. It is, in practice, a governance mechanism — one that determines who builds frontier AI, who profits from it, who bears its environmental cost, and whose values it encodes.

The exponent is a policy decision disguised as a design choice.

Regulation is beginning to take notice. The EU AI Act establishes a systemic risk threshold at 10^25 FLOP, a line that only quadratic-scale models are likely to cross. Obligations for general-purpose AI providers took effect in August 2025, with enforcement powers, including fines, expected to apply from August 2026. But regulation that addresses only the outputs of concentrated power does not address the concentration itself. It manages symptoms while the underlying architecture continues to select for scale, for capital, for consolidation.
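To get a feel for where that line falls, a common rule of thumb estimates the training compute of a dense transformer as roughly six floating-point operations per parameter per training token. The sketch below applies that approximation; it is not the Act's own accounting method, it ignores the attention score computation, and both example models are hypothetical rather than descriptions of any real system.

```python
EU_SYSTEMIC_RISK_THRESHOLD_FLOP = 1e25  # threshold cited in the text


def estimated_training_flop(parameters: float, training_tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 FLOP per parameter per training token.

    Assumption: dense transformer; the quadratic attention term is ignored.
    """
    return 6.0 * parameters * training_tokens


def crosses_threshold(parameters: float, training_tokens: float) -> bool:
    """True if the estimate exceeds the systemic risk threshold."""
    return estimated_training_flop(parameters, training_tokens) > EU_SYSTEMIC_RISK_THRESHOLD_FLOP


# Hypothetical models, for illustration only.
print(crosses_threshold(parameters=70e9, training_tokens=15e12))
print(crosses_threshold(parameters=400e9, training_tokens=15e12))
```

Under this estimate the smaller hypothetical model stays below the threshold and the larger one crosses it, which suggests how few training runs the rule is likely to reach.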

Sub-quadratic alternatives exist. State-space models and hybrid architectures are entering production. But they are entering it at the margins, not at the frontier. And the frontier is where the defaults are set — the defaults that shape which questions AI systems treat as valid, which languages they speak fluently, and whose knowledge they encode as ground truth.

The Questions We Cannot Outsource

This is not a call to abandon attention or to romanticize efficiency over capability. It is a call to stop treating a mathematical exponent as if it were ideologically inert.

If the quadratic cost of attention concentrates the ability to build frontier AI among a handful of corporations, then the architecture of attention is also an architecture of power. If scaling that architecture consumes electricity and water at rates that strain planetary boundaries, then choosing to scale is not a neutral engineering decision — it is an ethical one.

The hard question is not whether we should build smaller models. It is whether we are willing to accept that the most capable architecture might also be the most extractive, and whether capability alone is sufficient justification for the extraction.

Where This Argument Breaks Down

If sub-quadratic architectures reach frontier-quality reasoning within the next few years, this entire analysis collapses into a temporary growing pain. If hardware efficiency gains outpace the scaling of attention costs — if the exponent’s practical impact shrinks faster than models grow — then concentration may prove self-correcting. History offers examples of technologies that began as monopoly infrastructure and became commodity utilities. Electricity itself followed that arc.

The argument is also vulnerable to the charge that it conflates training with access. Open-weight models demonstrate that even expensive architectures can be distributed after the initial investment. If open release norms hold, the exponent may constrain who trains but not who deploys — and deployment is where most of the value eventually accumulates.

The Question That Remains

We designed the most powerful reasoning architecture in the history of computation, and we built it on an exponent that only the wealthiest institutions can afford to scale. That was not inevitable. It was a choice — made incrementally, in research labs, in procurement decisions, in infrastructure budgets that nobody voted on.

What happens when the cost of thinking well becomes a privilege rather than a right?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.