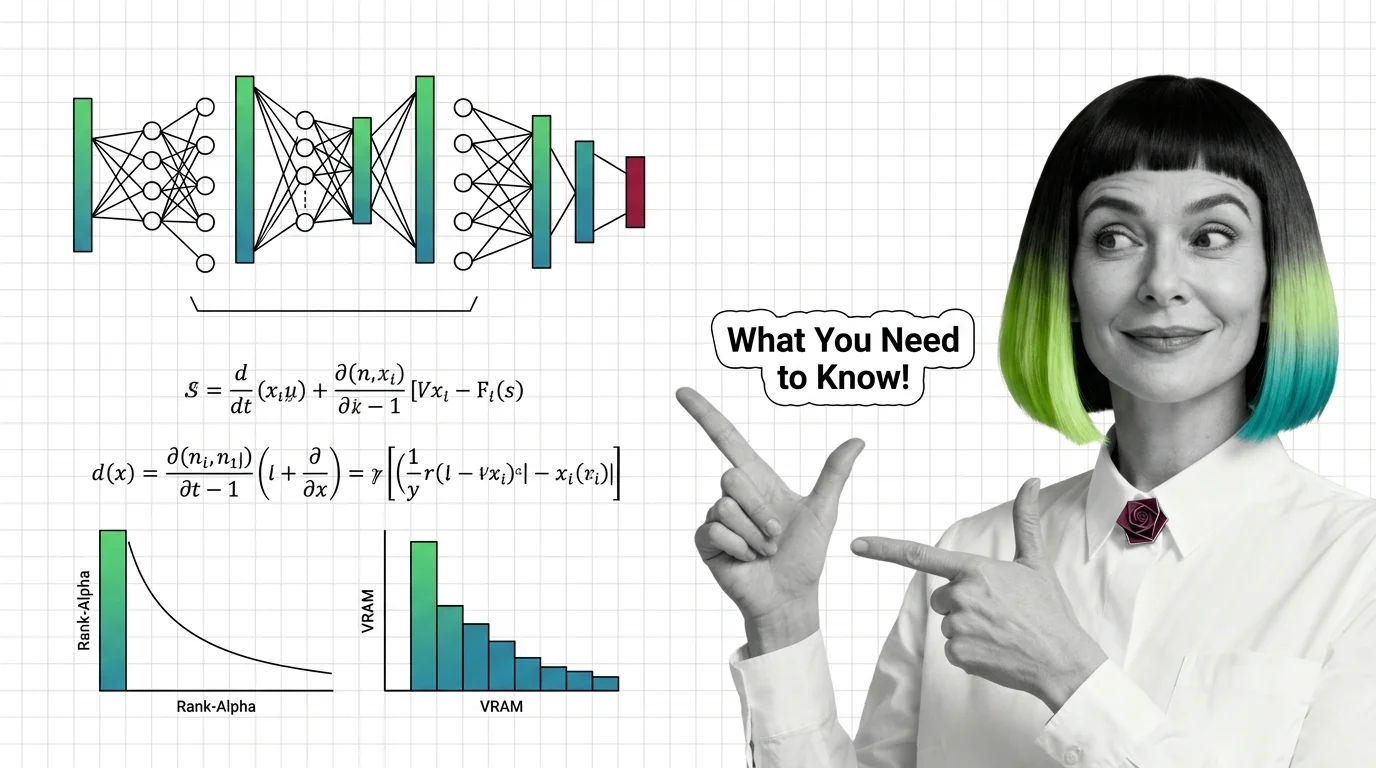

Training Image LoRAs: Diffusion Math, Rank-Alpha, and VRAM Limits


ELI5

A LoRA is a small set of low-rank matrices that ride alongside a frozen image model and nudge its attention layers toward a new subject or style without retraining. The math is small. The training mistakes are not.

A character LoRA can be a small adapter file that reshapes a multi-gigabyte diffusion model and makes it draw your dog from any angle. It can also bake the kitchen lighting into every output, refuse to respond to its own trigger word, and silently leak its training style into prompts that have nothing to do with it.

The same architecture produces both outcomes. The difference is whether the trainer understood what was actually happening underneath.

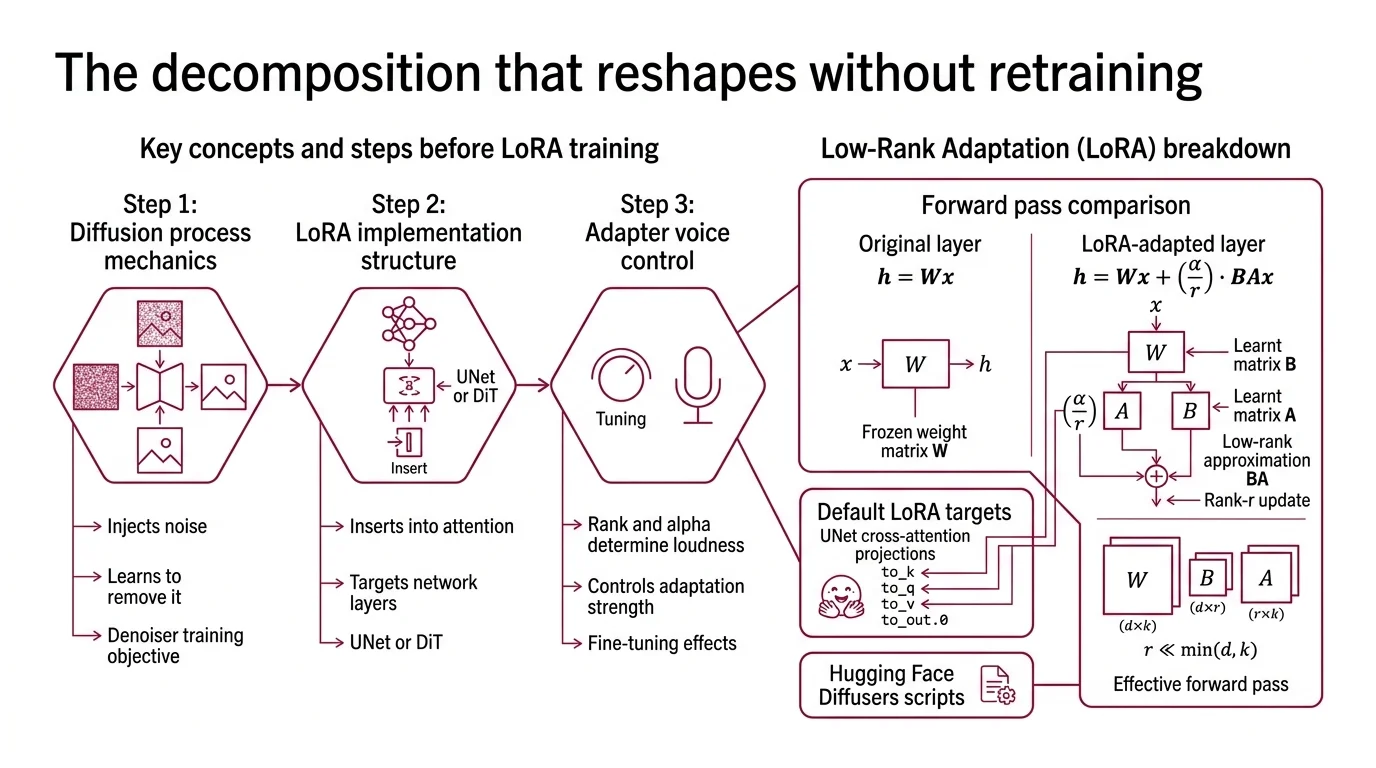

The Decomposition That Reshapes Without Retraining

LoRA stands for Low-Rank Adaptation, introduced by Hu et al. in 2021 (arXiv) for fine-tuning Transformer LLMs. Its application to diffusion models for image generation and editing is community-driven (first the cloneofsimo repo, then kohya-ss, then Hugging Face PEFT), but the core math is unchanged.

What do you need to know before training a LoRA for image generation?

Three things, in this order: how the diffusion process injects noise and learns to remove it, how LoRA inserts itself into the denoiser’s attention layers, and how rank and alpha together determine how loud the adapter’s voice gets.

The diffusion training objective itself predates LoRA. Ho, Jain, and Abbeel formalized denoising diffusion probabilistic models in 2020 (arXiv); the loss teaches a network (a UNet in older architectures, a DiT in modern ones) to predict the noise that was added to a clean image at a random timestep. Train long enough on enough images, and the network can iteratively denoise pure noise into something coherent. Flow matching, proposed by Lipman et al. (arXiv) and used by Flux and SD3, replaces the noise-prediction objective with a velocity field. The mechanics shift; the training shape doesn’t. You still have a heavy denoising network, and you still want to teach it about a new concept without rewriting all of its weights.
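To make the two objectives concrete, here is a minimal NumPy sketch of how one training example is built under each. It assumes the linear β schedule from the DDPM paper and, for flow matching, the straight-line (rectified-flow) path x_t = (1 - t)·x0 + t·ε described for SD3. The function names are hypothetical; a real trainer would feed x_t and t to the denoiser and take the MSE between its prediction and the returned target.

```python
import numpy as np

def ddpm_training_pair(x0, t, alpha_bar, rng):
    """Build one epsilon-prediction example (Ho et al., 2020).

    x0: clean latent; t: integer timestep; alpha_bar: cumulative
    product of (1 - beta) over the noise schedule.
    """
    eps = rng.standard_normal(x0.shape)
    a = alpha_bar[t]
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps  # forward noising
    return x_t, eps  # the network sees (x_t, t) and must predict eps

def flow_matching_pair(x0, t, rng):
    """Build one velocity-prediction example on a straight noise path.

    Assumes x_t = (1 - t) * x0 + t * eps with t in [0, 1]; the target
    is the constant velocity d x_t / d t = eps - x0.
    """
    eps = rng.standard_normal(x0.shape)
    x_t = (1.0 - t) * x0 + t * eps
    return x_t, eps - x0

def mse(pred, target):
    return float(np.mean((pred - target) ** 2))

rng = np.random.default_rng(0)
x0 = rng.standard_normal((4, 8, 8))      # stand-in for a VAE latent
betas = np.linspace(1e-4, 0.02, 1000)    # linear schedule from the DDPM paper
alpha_bar = np.cumprod(1.0 - betas)

x_t, eps = ddpm_training_pair(x0, t=500, alpha_bar=alpha_bar, rng=rng)
print(mse(eps, eps))                     # a perfect noise predictor scores 0.0
x_t, v = flow_matching_pair(x0, t=0.3, rng=rng)
print(v.shape)                           # the target has the latent's shape
```

Either way, the network only ever learns a regression target derived from a clean image plus noise, which is why the same LoRA machinery applies to both.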

That is what LoRA for image generation does. For each chosen weight matrix W in the network, LoRA freezes W and learns two smaller matrices B and A such that the effective forward pass becomes:

h = Wx + (α / r) · BAx

where B ∈ R^{d×r}, A ∈ R^{r×k}, and r ≪ min(d, k) (arXiv). The product BA is the rank-r update, a low-rank approximation of how the layer needs to change. In Hugging Face’s Diffusers training scripts, the default targets are the UNet’s attention projections: to_k, to_q, to_v, to_out.0 (Hugging Face Diffusers Docs). Those names match both the self-attention and the cross-attention blocks, and in the cross-attention blocks they are precisely where text conditioning meets the image latent. A LoRA does not learn to draw. It learns where the model should listen differently when a particular caption arrives.
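A minimal NumPy sketch of the decomposition, not the Diffusers or PEFT implementation. It assumes the initialization described in the LoRA paper (A random, B zero) and hypothetical 768×768 layer sizes, and it checks three things that follow from the formula: at step zero the adapter changes nothing, the update can later be folded into W so inference needs no extra matmul, and the adapter trains r(d + k) parameters instead of d·k.

```python
import numpy as np

class LoRALinear:
    """Frozen weight W plus a trainable rank-r update scaled by alpha / r.

    Computes h = W x + (alpha / r) * B A x.
    """

    def __init__(self, W, r, alpha, rng):
        d, k = W.shape
        self.W = W                                   # frozen, d x k
        self.A = rng.standard_normal((r, k)) * 0.01  # trainable, r x k (small random init)
        self.B = np.zeros((d, r))                    # trainable, d x r (zero init)
        self.scale = alpha / r

    def __call__(self, x):
        return self.W @ x + self.scale * (self.B @ (self.A @ x))

    def merged_weight(self):
        # Folding the update into W yields an ordinary dense layer.
        return self.W + self.scale * (self.B @ self.A)

    def trainable_params(self):
        return self.A.size + self.B.size

rng = np.random.default_rng(0)
d, k, r = 768, 768, 32                 # hypothetical projection size and rank
layer = LoRALinear(rng.standard_normal((d, k)), r=r, alpha=32, rng=rng)
x = rng.standard_normal(k)

print(np.allclose(layer(x), layer.W @ x))   # True: B is zero at init
print(layer.trainable_params(), d * k)      # 49152 589824
```

Because B starts at zero, training begins exactly at the base model’s behavior, and only the small A and B matrices receive gradients.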

Not a new model. A re-aimed one.

The rank-alpha relationship most tutorials get backwards

Rank r controls capacity: how many independent directions the adapter can move the original weights in. Alpha α controls strength through the scaling factor α/r, which multiplies BA in every forward pass, during training and at inference. Diffusers’ default in image-LoRA scripts sets lora_alpha = args.rank, which means α/r = 1 regardless of which rank you choose, so the volume knob is locked. The moment you override alpha with an absolute number (say, alpha = 16), changing rank also changes the effective scale α/r and therefore how loud the adapter speaks (Hugging Face Diffusers Docs).

That detail is widely missed. Practitioners report “raising the rank made it weaker” because they doubled r while alpha stayed at an earlier fixed value, and the effective scale α/r halved.
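The trap is plain arithmetic. This sketch compares the Diffusers-style default, where alpha tracks rank, against a fixed alpha of 16 (an arbitrary example value) as rank doubles.

```python
def effective_scale(rank, alpha=None):
    """Return alpha / rank; alpha defaults to rank, as in the Diffusers scripts."""
    if alpha is None:
        alpha = rank
    return alpha / rank

for rank in (16, 32, 64):
    print(rank, effective_scale(rank), effective_scale(rank, alpha=16))
# 16 1.0 1.0
# 32 1.0 0.5
# 64 1.0 0.25
```

With alpha pinned at 16, each doubling of rank halves the multiplier on BA, which is the “raising the rank made it weaker” report in numeric form.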

The Kohya_ss wiki documents three conventions in active use — α = r, α = r/2, and α = 2r — without claiming any is optimal (Kohya_ss Wiki). Brenndoerfer’s hyperparameter analysis observes that changing α/r is mathematically equivalent to scaling the learning rate; many “rank/alpha tuning” sessions are really learning-rate tuning in disguise (Brenndoerfer LoRA Hyperparameters). Treat α and r as a pair, not as independent dials. Common image-LoRA defaults sit at rank 32, alpha 32, with the Diffusers learning rate of 1e-4 — already higher than full fine-tune rates because only the adapter trains.

Where the Training Pipeline Fails

LoRA’s elegance hides a sharp asymmetry. The math is small; the dataset and the captions are not. Most practical failures in image LoRAs trace to choices made before the optimizer ever runs — and they fail in patterns that look like model bugs but are actually data bugs.

Why do image LoRAs overfit, leak style, and break trigger words?

Three failure modes show up repeatedly across community trainers, and each has a mechanical explanation grounded in how the adapter conditions on input.

Overfitting on late epochs. A character LoRA trained on photographs of one dog in one apartment will start reproducing the apartment. The adapter has rank to spare; given enough steps, it will use that capacity to memorize co-occurring details — pose, background, lighting — and bind them to the trigger token. Civitai trainers note that intermediate checkpoints are often more flexible than the “final” one and recommend saving every N steps so the trainer can pick the epoch where the subject is captured but the background is still optional (Civitai overfitting article).
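Picking a save interval is easier once the run length is known. This sketch assumes kohya-style dataset repeats (each epoch shows every image a fixed number of times); the dataset size, repeats, batch size, and interval below are hypothetical, and the helper names are illustrative only.

```python
import math

def total_steps(num_images, repeats, epochs, batch_size):
    """Optimizer steps, assuming each epoch shows every image `repeats` times."""
    steps_per_epoch = math.ceil(num_images * repeats / batch_size)
    return steps_per_epoch * epochs

def checkpoint_steps(total, every_n):
    """Steps at which to save a checkpoint; the final step is always included."""
    steps = list(range(every_n, total + 1, every_n))
    if not steps or steps[-1] != total:
        steps.append(total)
    return steps

total = total_steps(num_images=24, repeats=10, epochs=10, batch_size=2)
print(total)                         # 1200
print(checkpoint_steps(total, 250))  # [250, 500, 750, 1000, 1200]
```

Sampling a fixed set of prompts at each saved step then lets you pick the checkpoint where the subject holds but the background is still free.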

Style leakage from caption templates. If every training caption begins with the same trigger ("txcl, a portrait of..."), the adapter learns that the trigger covaries with portrait composition, lighting, and color grading — not just the subject. At inference, summoning the trigger drags the entire training-set aesthetic along with it. Diffusion Doodles documents this as the most common reason a “character” LoRA also visibly carries a “style” — the fix is caption variety: same trigger, different surrounding language (Diffusion Doodles).
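Caption variety can be checked before training. The helper below is hypothetical: it drops the trigger, takes the next few words of each caption, and reports any opening shared by more than half the dataset, the template pattern described above. The word count and threshold are arbitrary choices.

```python
from collections import Counter

def shared_openings(captions, trigger, n_words=3, threshold=0.5):
    """Return caption openings (the n words after the trigger) that recur.

    An opening is reported if more than `threshold` of all captions use it.
    """
    openings = []
    for cap in captions:
        words = cap.replace(",", " ").split()
        if words and words[0] == trigger:
            words = words[1:]
        openings.append(" ".join(words[:n_words]).lower())
    counts = Counter(openings)
    limit = threshold * len(captions)
    return {o: c for o, c in counts.items() if c > limit}

captions = [
    "txcl, a portrait of a dog on a sofa, warm light",
    "txcl, a portrait of a dog in a kitchen",
    "txcl, a portrait of a dog by a window",
    "txcl running across a park, overcast",
]
print(shared_openings(captions, "txcl"))   # {'a portrait of': 3}
```

An empty result does not prove the captions are varied enough, but a non-empty one is a cheap warning that the trigger may absorb composition and lighting.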

Trigger words that don’t trigger on Flux. Flux conditions mostly on a T5 text encoder (alongside a CLIP encoder), and T5 reads natural language rather than treating the prompt as a bag of tokens. Practitioners report that isolated nonsense triggers, the kind that worked on SD 1.5, get swallowed by T5 as noise. The community recommendation, per the RunDiffusion Flux LoRA guide, is a short unique token paired with a descriptive caption: txcl painting of a fox rather than just txcl (RunDiffusion Flux LoRA Guide). Treat that as a practitioner observation, not a published result.

These three failures share a structural cause: the adapter learns whatever co-varies with what you ask it to learn. Caption hygiene is not a stylistic preference. It is a way of telling the gradient where the signal ends.

VRAM Math: What Actually Fits on Your Card

LoRA’s trainable parameter count is small relative to the base model, but the base model itself still has to fit in memory. The denoiser, the text encoders, the VAE, activations, gradients, and the optimizer state all share VRAM with the adapter. That is why “minimum VRAM” numbers in tutorials disagree by a factor of three.

The honest version is a range, not a number.

| Base model | Resolution | Realistic VRAM | Notes |
|---|---|---|---|
| SD 1.5 | 512×512 | ~8 GB | With gradient checkpointing (SynpixCloud) |
| SDXL | 1024×1024 | 16–24 GB | Drops near 10 GB with bf16 + fused backward (Puget Systems) |
| Flux.1 [dev] | native | >40 GB | All components, rank 16, no quantization (Diffusers Flux LoRA README) |
| Flux.1 [dev] via QLoRA | native | 16–24 GB | 4-bit NF4 base + FP16/BF16 adapters (Hugging Face Blog) |

Each row depends on batch size, the optimizer (AdamW keeps two state tensors per trainable parameter; 8-bit Adam shrinks them, while Prodigy adds extra state of its own), whether the text encoder is also being trained, and whether gradient checkpointing trades compute for memory. Treat the figures as starting points, not guarantees.
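One reason LoRA fits where full fine-tuning does not is that optimizer state scales only with trainable parameters. A rough sketch, assuming fp32 Adam moments (two per parameter, 4 bytes each), roughly one byte per moment for 8-bit Adam, an SDXL-sized UNet of about 2.6B parameters, and a hypothetical 50M-parameter adapter. Gradients, activations, and the frozen weights are not counted.

```python
def adam_state_gb(trainable_params, bytes_per_state=4, n_states=2):
    """Approximate Adam-family optimizer state in GB (1 GB = 1e9 bytes).

    Two moment tensors per trainable parameter; 8-bit variants are modeled
    as 1 byte per moment, ignoring their small quantization statistics.
    """
    return trainable_params * bytes_per_state * n_states / 1e9

full = 2.6e9    # every weight of an SDXL-sized UNet trains (assumed size)
lora = 50e6     # hypothetical adapter size
print(adam_state_gb(full))                     # 20.8
print(adam_state_gb(lora))                     # 0.4
print(adam_state_gb(lora, bytes_per_state=1))  # 0.1
```

With an adapter this size, the optimizer choice moves memory by fractions of a gigabyte; the frozen base model is what dominates the table.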

QLoRA, introduced by Dettmers et al. in 2023 (arXiv) for LLMs, makes Flux training tractable on consumer cards by quantizing the frozen base model to 4-bit NF4 while keeping the adapter in higher precision. The base model’s contribution to memory drops sharply; the adapter still trains in BF16 or FP16. Quantizing the frozen base this way is currently the practical route to fitting a Flux.1 [dev] LoRA on a 24 GB card without aggressive component offloading (Hugging Face Blog). For frontier work in 2026, the field is moving toward Flux.2 and SD 3.5 as base models: both are larger than SDXL, and both expect QLoRA-class techniques as a default rather than as an optimization.
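The weight arithmetic shows why 4-bit quantization matters. This sketch assumes the Flux.1 [dev] transformer has about 12B parameters and counts only its weights, ignoring the text encoders, the VAE, activations, and the quantization constants QLoRA stores alongside each NF4 block (a fraction of a bit per parameter).

```python
def weights_gb(params, bits_per_param):
    """Memory for a model's weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9

flux_transformer = 12e9   # assumed parameter count of the Flux.1 [dev] transformer
print(weights_gb(flux_transformer, 16))   # BF16: 24.0
print(weights_gb(flux_transformer, 4))    # NF4:  6.0
```

In BF16 the transformer weights alone would fill a 24 GB card before any activation or adapter state is allocated; at 4 bits they leave most of the card free for everything else.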

What the Math Predicts Before You Press Train

The mechanics above translate into a small number of observable consequences. Use them as a checklist before launching a run.

- If your dataset is below the lower end of the character-LoRA range, expect identity to be unstable; SeaArt’s character LoRA guide cites 20–25 images as the workable range, with quality and diversity outweighing volume (SeaArt Guide).

- If every caption shares the same opening, expect the LoRA to bind style to the trigger. Dataset size also depends on the goal: Shakker AI’s tutorial cites roughly 200 images as a typical style-LoRA target, a scale meant for style, not character (Shakker AI Wiki).

- If you raise rank without raising alpha, expect the adapter to feel weaker, not stronger; the effective scale α/r shrinks.

- If you save only the final epoch, expect background memorization; intermediate checkpoints are usually more flexible.

- If you train Flux without QLoRA on a 24 GB card, expect OOM; the math doesn’t bend.
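The checklist can be turned into a pre-launch script. This is a hypothetical sketch: the dictionary keys and function name are made up, the 20-image floor is the SeaArt figure above, the caption check looks only at the first four words, and the 24 GB Flux rule mirrors the last bullet. Treat every threshold as a heuristic.

```python
def preflight(run, previous=None):
    """Hypothetical pre-launch check mirroring the checklist above.

    `run` and `previous` are plain dicts with illustrative keys.
    Returns a list of human-readable warnings (empty if none fire).
    """
    warnings = []
    if run["kind"] == "character" and run["num_images"] < 20:
        warnings.append("dataset below ~20 images: identity may be unstable")
    openings = {" ".join(c.split()[:4]).lower() for c in run["captions"]}
    if len(run["captions"]) > 1 and len(openings) == 1:
        warnings.append("every caption opens the same way: style may bind to the trigger")
    scale = run["alpha"] / run["rank"]
    if previous and scale < previous["alpha"] / previous["rank"]:
        warnings.append(f"effective scale alpha/r fell to {scale:g}")
    if not run.get("save_every_n_steps"):
        warnings.append("only the final checkpoint will be saved")
    if run["base"].startswith("flux") and not run["quantized_4bit"] and run["vram_gb"] <= 24:
        warnings.append("Flux without 4-bit quantization on <= 24 GB: expect OOM")
    return warnings

run = {
    "kind": "character", "num_images": 15, "rank": 64, "alpha": 16,
    "captions": ["txcl, a portrait of a dog", "txcl, a portrait of a dog outdoors"],
    "save_every_n_steps": None, "base": "flux.1-dev",
    "quantized_4bit": False, "vram_gb": 24,
}
for w in preflight(run, previous={"rank": 32, "alpha": 16}):
    print("-", w)
```

Every rule in the example run fires, so the loop prints five warnings; fixing the data and settings before launch is cheaper than diagnosing them from samples afterward.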

Rule of thumb: train the smallest rank that still captures the subject, save checkpoints often, and read the loss curve as one signal among several — eyeball intermediate samples too.

When it breaks: the most common practical limit is not the optimizer. It is the dataset. A LoRA trained on a homogeneous set of images cannot generalize past those images, regardless of rank, alpha, or learning rate; the architecture has nowhere to learn diversity from.

The Data Says

Image LoRAs work because the diffusion denoiser already knows how to draw — the adapter only retargets attention. The math is a low-rank approximation of how that targeting needs to change, with α/r as the volume knob. Most “training problems” are dataset and caption problems wearing optimizer clothes; the geometry of the gradient cannot fix what the captions failed to disambiguate.

Compatibility & gotchas:

- Diffusers Flax schedulers: Deprecated in v0.37.0 — slated for removal. Migrate to PyTorch schedulers if your pipeline imports Flax.

- Flux.1 [dev] gate: Requires accepting the gate form on the model’s Hugging Face page before from_pretrained works; otherwise the download fails with an authentication error.

- Diffusers LoRA training docs: Marked “experimental — API may change” by Hugging Face. Pin your diffusers version per run.

AI-assisted content, human-reviewed. Images AI-generated.