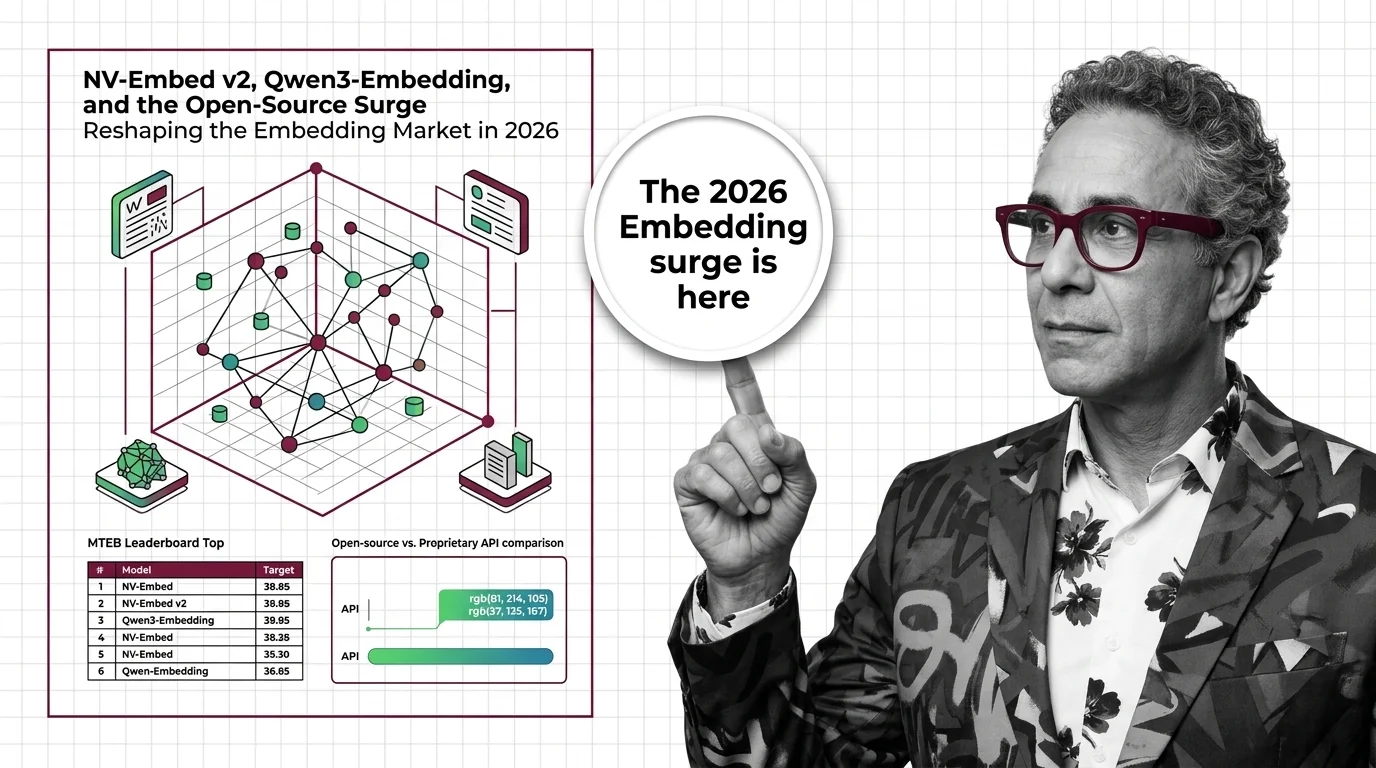

NV-Embed v2, Qwen3-Embedding, and the Open-Source Surge Reshaping the Embedding Market in 2026

TL;DR

- The shift: Open-weight embedding models from NVIDIA, Alibaba, and BAAI now match or exceed proprietary APIs on legacy and multilingual benchmarks, at a fraction of the cost.

- Why it matters: The infrastructure layer powering semantic search and retrieval is decoupling from proprietary lock-in.

- What’s next: MongoDB’s $220M acquisition of Voyage AI signals the battleground is moving from standalone APIs to database-native embedding.

The proprietary pricing wall that held since the GPT-3 era is cracking from both sides — performance parity from above, cost collapse from below. Three open-weight models now sit at or near the top of the MTEB embedding leaderboard. And if your retrieval stack still runs on a per-token API bill, the window for recalculating is shorter than you think.

The Embedding Moat Just Disappeared

Thesis: Open-weight embedding models have reached benchmark parity with proprietary APIs, and the resulting price collapse is restructuring how teams build retrieval infrastructure.

For years, proprietary APIs from OpenAI, Cohere, and Voyage AI owned the quality tier. Open-weight models were experiments. That split no longer holds.

NV-Embed v2, a roughly 8-billion-parameter model built on Mistral-7B, scored 72.31 on the legacy MTEB leaderboard across 56 tasks when it debuted in August 2024 (Hugging Face). Qwen3-Embedding, released mid-2025 in sizes from 0.6B to 8B, took the #1 multilingual MTEB slot at 70.58 across 100+ languages under Apache 2.0 (Qwen Blog). BGE-M3 from BAAI combined dense, sparse, and multi-vector retrieval in a single model.

One caveat that changes the ranking conversation: MTEB migrated from a legacy 56-task benchmark to MMTEB v2 with 131 tasks and Borda-count scoring. Direct cross-version comparisons are misleading.
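A minimal sketch of why the two scoring schemes cannot be compared directly, assuming the legacy figure is a plain average across tasks and that Borda points are awarded per task by rank. The model names, task names, scores, and helper functions are all made up for illustration; the leaderboard's real implementation may treat ties and missing tasks differently.

```python
from collections import defaultdict
from statistics import mean

# Made-up scores for three hypothetical models on three hypothetical tasks.
TASK_SCORES = {
    "task_a": {"model_x": 90.0, "model_y": 60.0, "model_z": 55.0},
    "task_b": {"model_x": 50.0, "model_y": 52.0, "model_z": 51.0},
    "task_c": {"model_x": 50.0, "model_y": 53.0, "model_z": 52.0},
}


def mean_scores(task_scores):
    """Average each model's score across tasks (a plain-average summary)."""
    per_model = defaultdict(list)
    for scores in task_scores.values():
        for model, score in scores.items():
            per_model[model].append(score)
    return {model: mean(values) for model, values in per_model.items()}


def borda_points(task_scores):
    """On every task, give a model one point per model it strictly beats."""
    points = defaultdict(int)
    for scores in task_scores.values():
        for model, score in scores.items():
            points[model] += sum(1 for other in scores.values() if score > other)
    return dict(points)


def winner(totals):
    """Return the model with the highest total."""
    return max(totals, key=totals.get)


if __name__ == "__main__":
    print("average winner:", winner(mean_scores(TASK_SCORES)))
    print("Borda winner:  ", winner(borda_points(TASK_SCORES)))
```

With these made-up numbers, model_x wins on the average because of one outlier task, while model_y wins on Borda points because it places first on two of the three tasks. The same set of per-task results can therefore produce different leaders under the two schemes.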

On the refreshed English MTEB, as of March 2026, Google’s Gemini Embedding 001 leads at 68.32 with the top retrieval score of 67.71 (Awesome Agents). NV-Embed v2’s retrieval score of 62.65 trails by about five points. Open-weight models dominate older benchmarks and multilingual tasks, while proprietary leaders hold the newest English evaluations.

Compatibility notes:

- Google text-embedding-004: Shut down January 14, 2026. Replaced by gemini-embedding-001 — dimension change from 768 to 3072 requires full re-indexing for existing deployments.

- NV-Embed-v2 license: CC-BY-NC-4.0 blocks direct commercial use. Production deployment requires NVIDIA NeMo NIMs licensing.
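The re-indexing note above can be made concrete with a rough sketch. It assumes vectors are stored as 32-bit floats and uses a hypothetical corpus of 10 million vectors; `raw_index_bytes` and `dot` are illustrative helpers, not part of any vendor SDK, and real indexes add overhead that is not modeled here.

```python
def raw_index_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw vector storage, ignoring index structures and metadata."""
    return num_vectors * dim * bytes_per_value


def dot(a, b):
    """Dot product that refuses to compare vectors of different sizes."""
    if len(a) != len(b):
        raise ValueError(f"dimension mismatch: {len(a)} vs {len(b)}")
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    corpus = 10_000_000  # hypothetical corpus size
    old = raw_index_bytes(corpus, 768)
    new = raw_index_bytes(corpus, 3072)
    print(f"768-dim index:  {old / 1e9:.2f} GB")
    print(f"3072-dim index: {new / 1e9:.2f} GB ({new // old}x)")

    try:
        dot([0.0] * 768, [0.0] * 3072)  # old stored vector vs new query vector
    except ValueError as err:
        print("cannot score old vectors against new queries:", err)
```

Even when dimensions happen to match, vectors produced by two different models live in different spaces, so existing documents have to be embedded again rather than converted.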

The Price Gap Is Now a Canyon

The benchmark convergence matters. The price delta is where strategy actually shifts.

Self-hosted open-weight models run at roughly $0.001 per million tokens on cloud GPUs, which the source puts at 10 to 100 times cheaper than API alternatives (Awesome Agents). OpenAI’s text-embedding-3-large sits at $0.13 per million tokens. Voyage 4 large and Cohere Embed 4 both charge $0.12 per million tokens. Against those list prices, the gap works out to roughly 120 to 130 times.
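The price gap can be checked with a few lines of arithmetic. The per-million-token prices are the ones quoted above; the 5-billion-token corpus and the helper names are hypothetical, and the self-hosted figure is the article's rough estimate, so treat the output as an order-of-magnitude sketch.

```python
# Prices in USD per million tokens, as quoted in the article.
PRICES = {
    "self-hosted open-weight": 0.001,
    "OpenAI text-embedding-3-large": 0.13,
    "Voyage 4 large": 0.12,
    "Cohere Embed 4": 0.12,
}


def embedding_cost(total_tokens, price_per_million):
    """Cost in USD to embed total_tokens at a per-million-token price."""
    return total_tokens / 1_000_000 * price_per_million


def price_ratio(api_price, self_hosted_price):
    """How many times more expensive the API price is."""
    return api_price / self_hosted_price


if __name__ == "__main__":
    corpus_tokens = 5_000_000_000  # hypothetical corpus size
    baseline = PRICES["self-hosted open-weight"]
    for name, price in PRICES.items():
        cost = embedding_cost(corpus_tokens, price)
        ratio = price_ratio(price, baseline)
        print(f"{name:32s} ${cost:10,.2f}  ({ratio:.0f}x self-hosted)")
```

The comparison covers token pricing only. Idle GPU time and the staff needed to run a self-hosted service are not modeled.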

Voyage 4, released in January 2026, introduced the first MoE-architecture embedding model with a shared embedding space (Voyage AI Blog). The architecture is novel. But its retrieval benchmarks are self-reported across 29 proprietary datasets — no independent MTEB v2 scores exist yet.

The decoder-based architecture that NV-Embed v2 and Qwen3-Embedding both adopted, which repurposes language models as embedding encoders, has become the default pattern. The era of custom-built encoders trained purely for cosine similarity matching is closing. That convergence isn’t accidental. It’s architectural.
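As a rough illustration of what "repurposing a language model as an encoder" involves: the decoder produces one hidden vector per token, and a pooling step collapses them into a single embedding that can be compared by cosine similarity. The sketch below uses toy numbers in place of real hidden states and shows two common pooling choices. The article does not say which pooling each model uses, so none of this should be read as either model's actual method.

```python
import math


def mean_pool(hidden_states):
    """Average the per-token vectors, dimension by dimension."""
    count = len(hidden_states)
    return [sum(column) / count for column in zip(*hidden_states)]


def last_token_pool(hidden_states):
    """Use the final token's vector as the embedding."""
    return list(hidden_states[-1])


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Toy stand-ins for decoder hidden states: one row per token.
    query_states = [[1.0, 3.0], [3.0, 5.0]]
    doc_states = [[2.0, 2.0], [2.0, 6.0], [2.0, 4.0]]

    for pool in (mean_pool, last_token_pool):
        query_vec = pool(query_states)
        doc_vec = pool(doc_states)
        print(pool.__name__, round(cosine_similarity(query_vec, doc_vec), 4))
```

The pooling choice changes the resulting vector, which is one reason embeddings from different models are not interchangeable even when they share a base architecture.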

Who Moves Up

Teams running retrieval at scale. If you’re processing millions of documents through a Matryoshka embedding pipeline, the difference between $0.13 and $0.001 per million tokens is the difference between a budget line and a rounding error.
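For readers unfamiliar with the Matryoshka idea, here is a minimal sketch of the usual consumption pattern: keep only the leading dimensions of an embedding and re-normalize. It assumes the model was trained so that prefixes remain useful, which the article does not confirm for any specific model. `truncate_embedding` is a hypothetical helper and the vector is toy data.

```python
import math


def truncate_embedding(vector, dim):
    """Keep the first `dim` values and rescale to unit length."""
    if not 0 < dim <= len(vector):
        raise ValueError("dim must be between 1 and len(vector)")
    head = vector[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize an all-zero prefix")
    return [x / norm for x in head]


if __name__ == "__main__":
    full = [3.0, 4.0, 12.0]  # toy 3-dimensional embedding
    short = truncate_embedding(full, 2)
    print(len(full), "->", len(short), short)
```

Shorter vectors cut storage and search cost roughly in proportion to the dimensions dropped, usually at some cost in retrieval quality that should be measured on your own data.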

Multilingual operations. Qwen3-Embedding’s 32K context window and 100+ language support under Apache 2.0 eliminate API keys, vendor dependency, and per-token billing in one move.

Database vendors building embedding natively. MongoDB paid $220M for Voyage AI in early 2025 (MongoDB Blog). That’s a platform play — embedding as a database primitive, not an external API call.

Who Gets Left Behind

Standalone embedding API providers charging premium rates without differentiated quality. OpenAI’s text-embedding-3-large scores 64.60 on MTEB, below the scores cited above for the open-weight leaders, although those figures do not all come from the same benchmark version. At $0.13 per million tokens, that pricing needs a performance story. The story is getting harder to tell.

Teams still anchored to keyword matching. If your search infrastructure relies on Word2vec-era approaches while competitors deploy embedding-powered retrieval, the relevance gap compounds daily. You’re either retooling or you’re falling behind.

What Happens Next

Base case (most likely): Open-weight models become the default embedding layer for new projects. Proprietary APIs retain enterprise contracts through inertia and integration depth. Price pressure forces API vendors to cut rates or bundle embedding into platforms. Signal to watch: A major cloud provider ships a managed open-weight embedding service. Timeline: Six to twelve months.

Bull case: MongoDB’s Voyage AI integration triggers a wave of database-native embedding, collapsing the embedding-as-a-service category. Every major database ships built-in vector encoding. Signal: Two or more database vendors announce native embedding within six months. Timeline: Twelve to eighteen months.

Bear case: Licensing restrictions — like NV-Embed v2’s non-commercial CC-BY-NC-4.0 — create legal uncertainty that slows enterprise adoption. Proprietary vendors regain differentiation through multimodal embedding. Signal: Enterprise legal teams flag open-weight licensing as a procurement blocker. Timeline: Ongoing.

Frequently Asked Questions

Q: How did NVIDIA NV-Embed v2 and BAAI BGE reach the top of the MTEB embedding leaderboard? A: Both adopted decoder-based architectures that repurpose large language models as embedding encoders. NV-Embed v2 scored 72.31 on the legacy 56-task MTEB in August 2024. BGE-en-ICL scored 71.24 on the same benchmark. Both trail Gemini Embedding 001 on the current refreshed leaderboard.

Q: Will open-source embedding models replace proprietary APIs from Voyage AI, OpenAI, and Cohere? A: Not entirely, but the gap is closing fast. Open-weight models match proprietary APIs on legacy and multilingual benchmarks at a fraction of the API cost. Proprietary vendors will retain customers through managed services and multimodal capabilities, but standalone embedding APIs face sustained margin compression.

Q: How are enterprise teams switching from keyword search to embedding-powered semantic retrieval in 2026? A: Teams deploy open-weight models like Qwen3-Embedding and BGE-M3 for self-hosted retrieval at roughly $0.001 per million tokens. Database-native embedding, led by MongoDB’s Voyage AI acquisition, eliminates the external API dependency that slowed earlier adoption.

The Bottom Line

The embedding market split along a new fault line. Open-weight models own cost and multilingual coverage. Proprietary APIs still lead the newest English benchmarks but face relentless price pressure. The infrastructure powering retrieval is decoupling from the vendors who controlled it. Act accordingly.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.