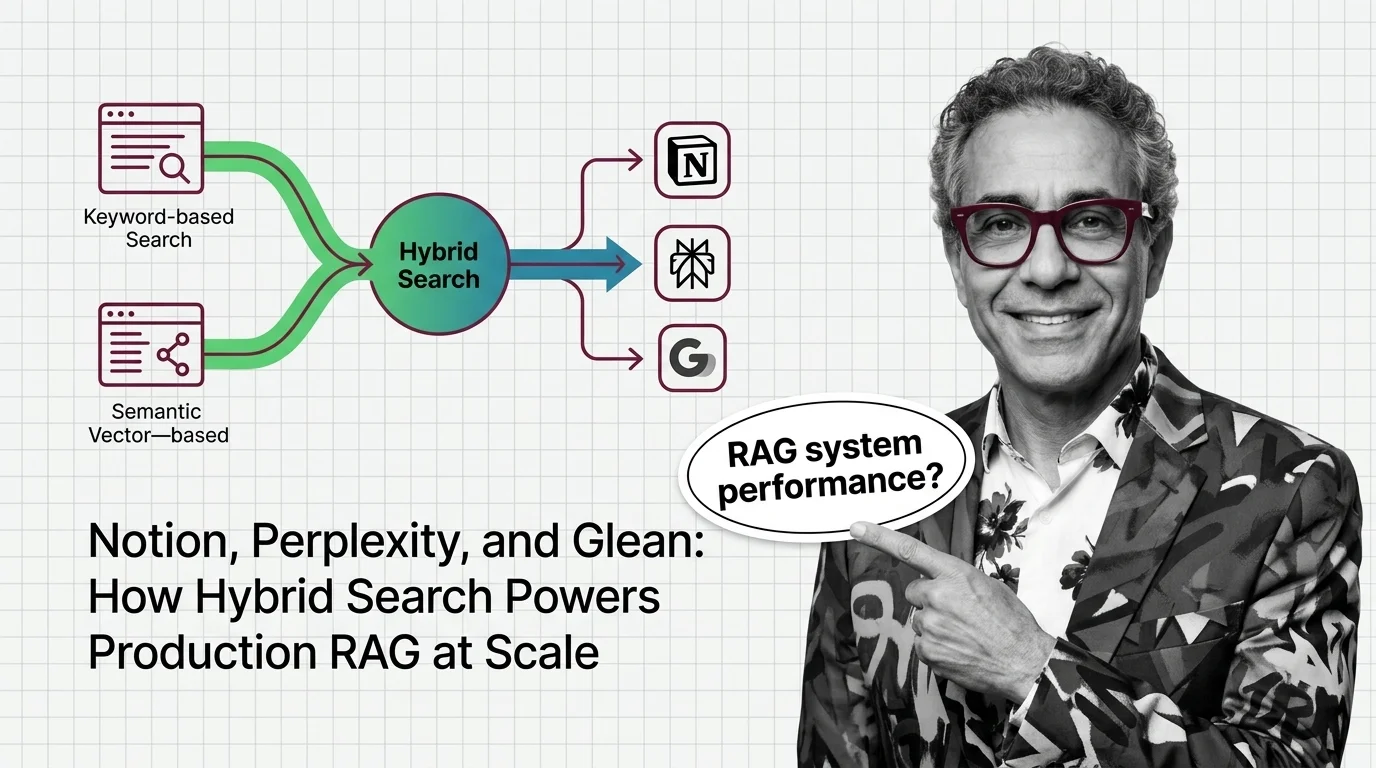

Notion, Perplexity, and Glean: How Hybrid Search Powers Production RAG at Scale

Table of Contents

TL;DR

- The shift: Production-scale RAG has standardized on hybrid retrieval — vector-only systems are now treated as a starting point, not a finish line.

- Why it matters: Three independent leaders, in three different domains, converged on multi-signal architectures that combine lexical and semantic retrieval with multi-stage ranking.

- What’s next: The retrieval layer is becoming infrastructure. Teams still running pure-vector setups will pay for it in answer quality, latency, or both.

For two years, the RAG playbook had a single default: embed everything, query a vector database, return the top-k. Cheap to build. Easy to demo. Brutal in production. Now the largest deployments in the field have moved on — and the pattern they all converged on is the same.

The Vector-Only Era Is Already Behind Us

Thesis: Hybrid Search has become the production default for Retrieval Augmented Generation at scale, and the leaders in three different domains — web search, enterprise search, and workspace AI — have all moved past pure-vector retrieval.

This isn’t an opinion. It’s a convergence.

Perplexity runs hybrid retrieval on its own Vespa.ai-based index, fusing lexical and embedding-based scorers with multi-stage ranking that ends in cross-encoder rerankers (Perplexity Research). Glean ships enterprise search built on lexical retrieval plus vector embeddings, layered over a proprietary knowledge graph (Glean Blog). Notion runs vector search at production scale on Turbopuffer while feeding a parallel keyword index from inside the same data lake (Notion Blog).

Three companies. Three domains. The same direction.

That’s not a coincidence. That’s a market signal.

Three Architectures, One Pattern

The evidence doesn’t organize by who shipped first. It organizes by what each system proves.

Signal 1 — Perplexity at web scale. The retrieval engine handles 200 million queries per day across an index of over 200 billion unique URLs (Perplexity Research). The architecture queries the search index via both modalities and merges results into a hybrid candidate set, then runs multi-stage ranking ending in cross-encoder rerankers. Median ranking latency lands at 358ms; p95 at 763ms. At that volume, a vector-only setup is not viable. The lexical signal isn’t an addition — it’s load-bearing.

Signal 2 — Glean inside the enterprise. Glean’s hybrid pipeline combines BM25-style lexical retrieval with vector embeddings, fused with Reciprocal Rank Fusion, layered on top of a self-learning model and a proprietary knowledge graph (Glean Blog). The company reports up to 30% reduction in irrelevant results compared to traditional methods (Glean perspectives). Note the framing: not “vector search wins” — the combination wins.

Signal 3 — Notion at workspace scale. Notion AI’s vector pipeline migrated from a dedicated-pod cluster to Turbopuffer, cutting query latency from 70-100ms to 50-70ms while delivering roughly 10x capacity at 90% lower cost over two years (Notion Blog). Inside the same data lake architecture, an Inverted Index-style keyword search runs on ElasticSearch, fed from the identical Hudi-on-S3 source pipeline (Notion Blog). The vector index and keyword index are physically separate but architecturally siblings.

The structural lesson lands the same way each time: at production scale, no single retrieval mode handles the full query distribution. Lexical and semantic are complementary, not competitive.

The Winners Are Already Built In

The companies positioned to capture this shift fall into three groups.

Search infrastructure providers. Vespa.ai is now the production substrate behind Perplexity’s traffic — cited as the only platform Perplexity evaluated as production-proven for real-time hybrid RAG (Vespa case study). Open-source vector databases like Weaviate and Qdrant face the same buyer pressure: customers stopped asking for pure-vector products.

Enterprise platforms with knowledge graphs. Glean’s combination of lexical, vector, and graph layers gives it a moat that pure embedding shops cannot match. Retrieval quality compounds with usage data, not with model upgrades alone.

Vector DB vendors that pivoted fast. Turbopuffer’s object-storage-native model is the architecture that let Notion 10x its scale at a fraction of the cost. Notion runs OpenAI’s zero-retention embedding API for vector generation, then keeps retrieval on dedicated infrastructure. The split is the future: third-party model APIs for embeddings, dedicated infrastructure for serving.

You’re either shipping hybrid retrieval or you’re shipping last year’s stack.

Pure-Vector RAG Just Became a Liability

Vendors that built their pitch around “embed everything, ship fast” now face a buyer with a different question: what do you do with rare keywords, exact-match queries, and acronyms your embedding model never saw?

Teams running embedding-only retrieval inside production applications are quietly leaking quality. Lexical-precise queries — product SKUs, error codes, compliance terminology — are exactly where vector similarity underperforms. Users notice. Engineering teams blame the model. The retrieval layer is the actual bottleneck.

And anyone treating retrieval as a static layer, separate from generation, is about to be flanked. Agentic RAG systems are absorbing retrieval into the orchestration loop — agents call retrieval as one tool among many, with reranking and query rewriting handled inside the reasoning step. Pure-vector backends without a hybrid path forward become legacy components inside that stack.

The vector-only retrieval pitch hasn’t died. It just got demoted.

What Happens Next

Base case (most likely): Hybrid retrieval becomes table stakes for production RAG within the next two quarters. Vector-only systems remain in prototypes, internal tools, and low-stakes use cases. Cross-encoder rerankers move from “advanced optimization” to default architectural assumption. Signal to watch: New vector DB vendors shipping native lexical-plus-vector fusion in their core product, not as an enterprise add-on. Timeline: Through end of 2026.

Bull case: Hybrid retrieval gets absorbed into agent frameworks as a primitive. Agents call a unified retrieval tool that handles lexical, semantic, and graph fusion automatically. Quality improvements compound with multi-stage ranking and learned rerankers tuned per domain. Signal: Major agent frameworks shipping built-in hybrid retrieval modules with cross-encoder rerankers as defaults. Timeline: Mid-to-late 2026.

Bear case: Hybrid retrieval pipelines prove harder to operate at scale than vendor marketing suggests. Multi-stage ranking introduces latency and tuning complexity that smaller teams cannot absorb. A wave of teams quietly retreats to vector-only setups for simplicity, accepting the quality trade-off. Signal: Public postmortems describing rerank-stage outages or hybrid pipeline rollbacks. Timeline: Could surface within six months for early adopters.

Security & compatibility notes:

- Langflow RCE (CVE-2026-33017): Unauthenticated remote code execution in the flow-build endpoint, exploited within 20 hours of disclosure (Sysdig). Not a hybrid-search component, but a reminder that adjacent RAG-stack tooling is now an active attack surface. Patch promptly and audit exposed endpoints.

Frequently Asked Questions

Q: Which companies use hybrid search in production RAG systems? A: Perplexity runs hybrid retrieval on its Vespa.ai-based index at 200 million queries per day. Glean ships hybrid (lexical + vector + knowledge graph) for enterprise search. Notion runs production vector search on Turbopuffer alongside an ElasticSearch keyword index in the same data lake.

Q: How does Perplexity use hybrid search to improve answer quality? A: Perplexity queries its index via both lexical and embedding-based scorers, merges results into a hybrid candidate set, then runs multi-stage ranking ending in cross-encoder rerankers. Median ranking latency is 358ms across an index of over 200 billion unique URLs (Perplexity Research).

The Bottom Line

The hybrid retrieval debate is over. Three production leaders in three different domains all settled it the same way. Teams still running pure-vector RAG aren’t behind on a paper — they’re behind on the production architecture the rest of the field already validated.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated. Editorial Standards · Our Editors