Mamba-3, Jamba 1.5, and Nemotron-H: How State Space Models Are Rewiring Long-Context AI in 2026

TL;DR

- The shift: Five labs converged on hybrid SSM-attention backbones — the pure-transformer era for long context is over.

- Why it matters: Hybrids match transformer quality while reaching 256K-to-1M tokens at a fraction of the compute cost.

- What’s next: Production stacks built on pure attention will price themselves out of the long-context market within twelve months.

For years, long context meant paying the quadratic tax. More tokens, more compute, more cost. The fix was always “bigger GPUs, smarter kernels.” Then NVIDIA, AI21, TII, and Alibaba all shipped hybrid architectures that sidestep the problem — and Together AI dropped Mamba-3 at ICLR 2026 as the next research milestone.

The Pure-Transformer Bet No Longer Fits Long Context

Thesis: Hybrid state space model (SSM) backbones have replaced pure transformers as the practical path to 256K-to-1M-token context at transformer-matching quality, and every major lab’s 2026 roadmap confirms it.

Five independent teams made the same call inside eighteen months. That is not coincidence. That is consensus priced in before the benchmarks caught up.

NVIDIA shipped Nemotron-H in March 2025 (NVIDIA ADLR). AI21 followed with Jamba 1.6 the same month (AI21 Blog). TII released Falcon-H1. Alibaba rolled out Qwen3-Next. Mamba-3 landed at ICLR 2026 as the architectural frontier paper (Mamba-3 paper).

The pure-transformer monoculture for long context just ended.

Three Labs, One Architecture Pattern

The evidence does not organize by release date. It organizes by what it proves.

Signal 1 — NVIDIA bet the stack on hybrids. Nemotron-H-56B layers 54 Mamba-2 blocks, 54 MLP blocks, and just 10 self-attention blocks, pretrained on 20 trillion tokens in FP8 (NVIDIA ADLR). The 47B variant demonstrates roughly 1M-token inference in FP4 on a single RTX 5090 and posts a 2.9x speedup over Qwen-2.5-72B and Llama-3.1-70B at long context on H100. In March 2026, NVIDIA followed up with Nemotron 3 Super, an open hybrid Mamba-Transformer-MoE built for agentic reasoning (NVIDIA Developer Blog). Two generations of hybrids in a year.
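The block counts above make the point on their own, and a few lines of arithmetic show how small the attention share is. This is a minimal sketch that uses only the counts quoted in this article; the dictionary keys and the `block_shares` helper are illustrative names, not anything from NVIDIA's code.

```python
# Block counts for Nemotron-H-56B as quoted in this article.
# The key names are illustrative labels, not NVIDIA identifiers.
BLOCKS = {"mamba": 54, "mlp": 54, "self_attention": 10}


def block_shares(blocks: dict[str, int]) -> dict[str, float]:
    """Return each block type's share of the total block count."""
    total = sum(blocks.values())
    return {name: count / total for name, count in blocks.items()}


shares = block_shares(BLOCKS)
total_blocks = sum(BLOCKS.values())

# Among the sequence-mixing blocks only (Mamba + attention),
# how many are attention?
mixers = BLOCKS["mamba"] + BLOCKS["self_attention"]
attention_among_mixers = BLOCKS["self_attention"] / mixers

print(f"total blocks: {total_blocks}")
print(f"attention share of all blocks: {shares['self_attention']:.1%}")
print(f"attention share of sequence mixers: {attention_among_mixers:.1%}")
```

On these counts, fewer than one block in ten is self-attention, and fewer than one sequence mixer in six.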

Signal 2 — AI21 took hybrids into enterprise production first. Jamba 1.5’s hybrid architecture runs one attention block per eight total, which works out to seven selective-scan Mamba blocks for every attention block, plus 16 mixture-of-experts (MoE) experts with top-2 routing (AI21 Blog). The Mini variant fits a 140K-token context on a single GPU. Jamba 1.6 kept the recipe and shipped its Large variant to regulated enterprise buyers.
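The one-in-eight interleave and top-2 routing can be sketched in plain Python. This is an illustration under stated assumptions, not AI21's implementation: the function names are hypothetical, the position of the attention block inside each group of eight is assumed, and renormalizing the router weights over the two selected experts is one common convention that the source does not confirm.

```python
import math


def jamba_style_layout(num_blocks: int, period: int = 8,
                       attention_index: int = 0) -> list[str]:
    """One attention block per `period` blocks, Mamba everywhere else.

    `attention_index` (where attention sits inside each group) is an
    assumption made for illustration.
    """
    return ["attention" if i % period == attention_index else "mamba"
            for i in range(num_blocks)]


def top2_route(router_logits: list[float]) -> list[tuple[int, float]]:
    """Pick the two highest-scoring experts and softmax over just those two.

    Renormalizing over the selected pair is an assumed convention.
    """
    top2 = sorted(range(len(router_logits)),
                  key=lambda i: router_logits[i], reverse=True)[:2]
    peak = max(router_logits[i] for i in top2)
    exps = [math.exp(router_logits[i] - peak) for i in top2]
    norm = sum(exps)
    return [(i, e / norm) for i, e in zip(top2, exps)]


layout = jamba_style_layout(32)

# Hypothetical router scores for one token across 16 experts.
logits = [0.0] * 16
logits[3], logits[11] = 2.0, 1.0
routes = top2_route(logits)

print(layout[:8])
print(routes)
```

With 16 experts and top-2 routing, each token touches only two expert MLPs per MoE layer, which is why total parameter count and per-token compute diverge in this design.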

Signal 3 — TII validated the pattern with a different mix. Falcon-H1 places transformer attention heads in parallel with Mamba-2 SSM heads inside the same layer — not stacked, not alternating. Sizes run from 0.5B to 34B, context to 256K, coverage across 18 languages, and throughput up to 4x on input and 8x on output versus a pure transformer at long context (Falcon LLM Blog).
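The difference between parallel and stacked mixing is easiest to see on a toy sequence. The sketch below uses deliberately simple stand-ins: a causal running mean in place of attention and a fixed-decay scan in place of an SSM head. Both stand-ins, all function names, and the choice to merge the two branches by summing are assumptions for illustration; the source does not describe how Falcon-H1 merges its heads.

```python
from typing import List

Seq = List[float]  # toy sequence: one scalar feature per token


def causal_mean_mixer(x: Seq) -> Seq:
    """Attention stand-in: each token averages itself and all earlier tokens."""
    out, running = [], 0.0
    for t, value in enumerate(x, start=1):
        running += value
        out.append(running / t)
    return out


def decay_scan_mixer(x: Seq, decay: float = 0.5) -> Seq:
    """SSM stand-in: fixed-size state, h_t = decay * h_{t-1} + x_t."""
    out, state = [], 0.0
    for value in x:
        state = decay * state + value
        out.append(state)
    return out


def parallel_layer(x: Seq) -> Seq:
    """Both mixers read the same input; outputs are summed (assumed merge)."""
    attn_out, ssm_out = causal_mean_mixer(x), decay_scan_mixer(x)
    return [a + s for a, s in zip(attn_out, ssm_out)]


def stacked_layers(x: Seq) -> Seq:
    """Sequential alternative: SSM stand-in first, attention stand-in second."""
    return causal_mean_mixer(decay_scan_mixer(x))


tokens = [1.0, 2.0, 3.0]
parallel_out = parallel_layer(tokens)
stacked_out = stacked_layers(tokens)
print(parallel_out, stacked_out)
```

In the parallel form, both branches see the raw layer input; in the stacked form, the second mixer only ever sees what the first one produced.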

Three teams. Three hybridization recipes. One conclusion: pure attention does not scale to million-token context at acceptable cost.

Who Wins

Infrastructure labs with early hybrid bets. NVIDIA owns the stack. Nemotron-H ships as open weights, and Nemotron 3 Super extends the family into agentic reasoning territory. The company making the silicon is also shipping the architecture those chips run best.

Enterprise-deploy vendors. AI21’s Jamba put hybrid SSM in front of regulated industries before anyone else. Falcon-H1 gives sovereign-AI buyers a non-US option with full open weights. Both win the moment a procurement team asks for long context without the pure-transformer price tag.

Research groups pushing the SSM frontier. Mamba-3 introduces a more expressive discretization, complex-valued state updates, and a MIMO formulation. At 1.5B parameters, it beats Gated DeltaNet by roughly half a point on the average downstream-task score, and the MIMO variant widens the gap while matching Mamba-2 perplexity at half the state size (Mamba-3 paper).

You are either evaluating hybrid SSM backbones for your long-context roadmap or you are writing a bigger inference bill.

Who Loses

Pure-transformer inference stacks at frontier scale. Quadratic cost was manageable at 32K tokens. At 256K it is a margin killer. At 1M it is a cliff. Any production system whose roadmap assumes dense attention on long sequences is about to meet a hybrid competitor on price.
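The cliff is plain arithmetic. Dense attention compares every token with every other token, so the score computation grows with the square of sequence length, while a recurrent scan grows linearly. A rough sketch, assuming powers-of-two token counts for 32K, 256K, and 1M and ignoring constants, kernels, and everything outside the sequence mixer; `relative_cost` is a hypothetical helper:

```python
def relative_cost(seq_len: int, baseline_len: int, exponent: int) -> float:
    """Cost relative to the baseline length, for cost proportional to n**exponent."""
    return (seq_len / baseline_len) ** exponent


BASELINE = 32 * 1024  # 32K tokens
LENGTHS = {"32K": 32 * 1024, "256K": 256 * 1024, "1M": 1024 * 1024}

# exponent=2 models dense attention scores; exponent=1 models a linear scan.
table = {
    label: {
        "quadratic": relative_cost(n, BASELINE, 2),
        "linear": relative_cost(n, BASELINE, 1),
    }
    for label, n in LENGTHS.items()
}

for label, row in table.items():
    print(f"{label:>5}: attention x{row['quadratic']:.0f}, scan x{row['linear']:.0f}")
```

Going from 32K to 1M is a 32x increase in tokens but a 1,024x increase in pairwise attention work under this simple model. Real systems soften that with optimized kernels and sparsity, so treat the ratios as a scaling intuition rather than a measured bill.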

Nominal-context marketing. Hybrids and linear-attention families changed the architecture. They also changed how context gets measured. NVIDIA’s RULER benchmark shows most models degrade sharply past 32K despite advertised windows of 128K or more (NVIDIA RULER repo). Vendors still quoting nominal max-context numbers without effective-context evaluation are playing last year’s marketing game in a market that just got instrumentation.
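The gap between nominal and effective context is simple to compute once per-length scores exist. The sketch below is one plausible way to do it, not RULER's own code: the `effective_context` helper, the threshold value, and the scores are all hypothetical placeholders, and a real evaluation should use the benchmark's published threshold and measured results.

```python
def effective_context(scores_by_length: dict[int, float], threshold: float) -> int:
    """Largest tested length where it and every shorter tested length
    meet the threshold. Returns 0 if the shortest length already fails."""
    effective = 0
    for length in sorted(scores_by_length):
        if scores_by_length[length] < threshold:
            break
        effective = length
    return effective


# Hypothetical accuracy (%) by context length for an imaginary model.
# These numbers are made up for illustration, not benchmark results.
scores = {
    4_096: 95.0,
    8_192: 93.0,
    16_384: 90.0,
    32_768: 86.0,
    65_536: 78.0,
    131_072: 60.0,
}

THRESHOLD = 85.0  # assumed pass mark for this example only

nominal = max(scores)
effective = effective_context(scores, THRESHOLD)
print(f"nominal: {nominal} tokens, effective: {effective} tokens")
```

For this imaginary model, a 128K nominal window shrinks to a 32K effective one, which is the kind of gap a spec sheet never shows.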

Teams that held out for a pure-SSM winner. Mamba-3 remains a research paper, not a deployed product. Every production winner blended SSM with attention. Betting on pure-SSM frontier models got the direction half right and the architecture fully wrong.

What Happens Next

Base case (most likely): By end of 2026, every serious long-context foundation model on the market is a hybrid. Pure-transformer frontier models continue for short-context workloads but cede the >128K segment entirely. Signal to watch: Independent RULER-style evaluations of Nemotron-H and Jamba 1.6 at full advertised context. Timeline: Next two quarters.

Bull case: Mamba-3’s MIMO formulation lands in production hybrids within a year, pushing effective context past 2M tokens on commodity GPUs. RWKV-style RNN-linear families carve out edge-deployment niches. Signal: A production release citing Mamba-3-style complex state updates shipped by a major lab. Timeline: Late 2026 through 2027.

Bear case: RULER-style evaluations expose that hybrid SSM backbones also degrade well past 200K, resetting expectations. The architecture story survives, but the “1M usable context” marketing collapses. Signal: Multiple independent benchmarks showing hybrid effective context plateauing well below nominal windows. Timeline: Could surface by mid-2026 as models hit wider production use.

Frequently Asked Questions

Q: What is the future of state space models and hybrid architectures in 2026? A: The near-term future is hybrid, not pure. Every major long-context model covered here (Nemotron-H, Jamba 1.6, Falcon-H1) combines SSM blocks with a minority of attention layers. Pure SSMs remain a research track. Hybrids are the production path.

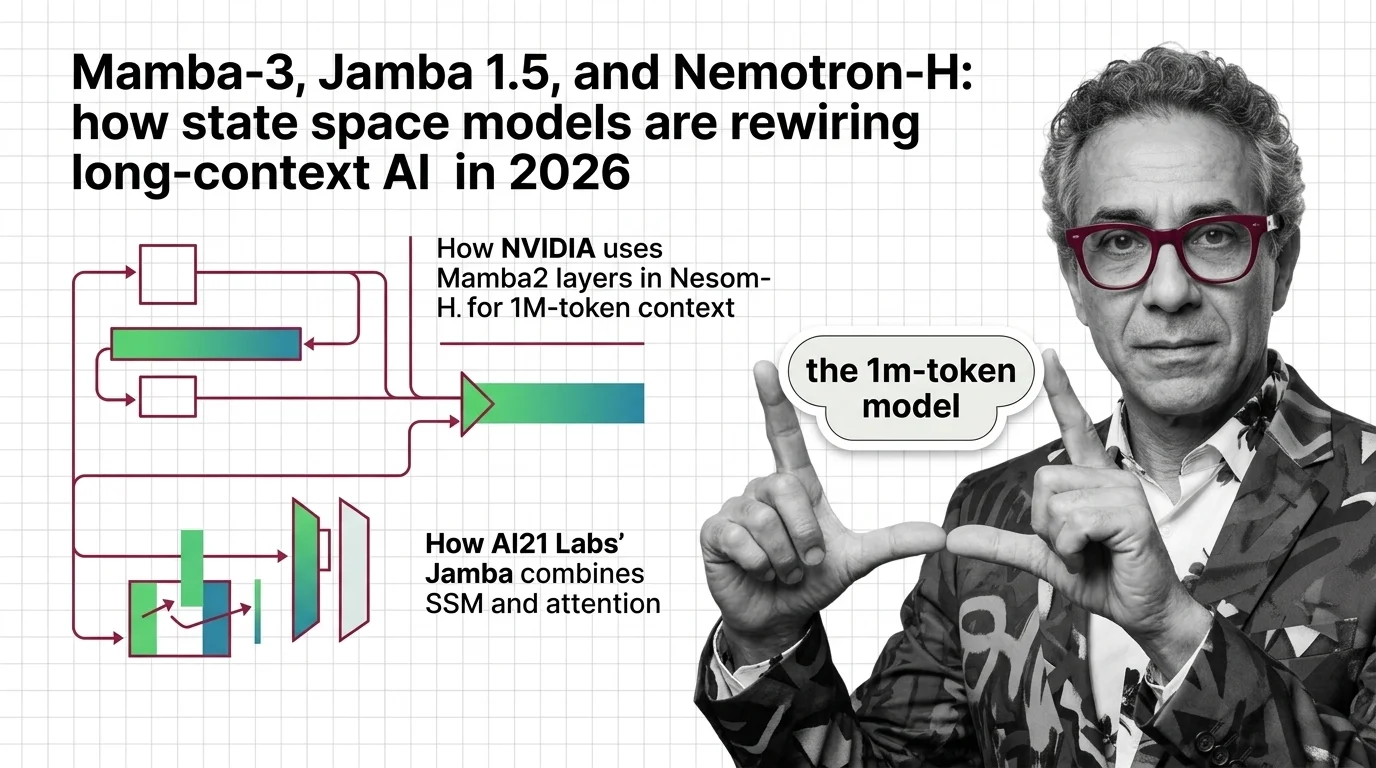

Q: How is NVIDIA using Mamba-2 layers inside Nemotron-H for 1M-token context? A: Nemotron-H-56B stacks 54 Mamba-2 blocks, 54 MLP blocks, and 10 self-attention blocks, trained on 20 trillion tokens in FP8. The 47B variant runs roughly 1M-token inference in FP4 on a single RTX 5090 — an inference-time claim, not a full-precision benchmark.

Q: How does AI21 Labs’ Jamba combine SSM and attention in production? A: Jamba 1.5 runs one attention block per eight total, a 1:7 attention-to-Mamba ratio, plus 16 mixture-of-experts (MoE) experts with top-2 routing, delivering a 256K-token context. Jamba 1.6 kept the same recipe and shipped its Large variant for regulated enterprise deployment.

The Bottom Line

Pure transformers still dominate short-context workloads. At long context, the monoculture is done — hybrid SSM backbones are the default for 256K-token-and-up systems. The next fight is not architecture. It is which hybrid recipe scales cleanest under production load.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.