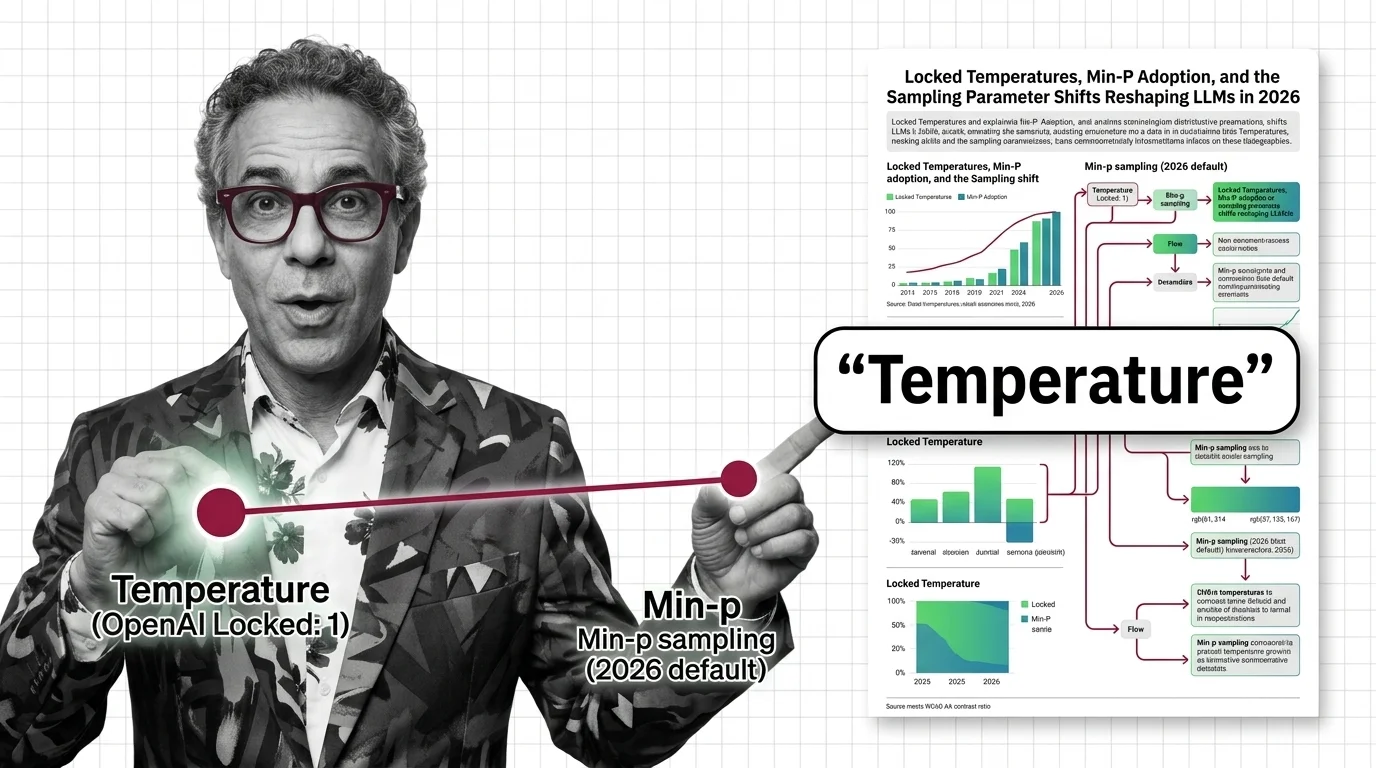

Locked Temperatures, Min-P Adoption, and the Sampling Parameter Shifts Reshaping LLMs in 2026

TL;DR

- The shift: Proprietary labs are locking and removing sampling parameters while open-source stacks adopt min-p as a smarter replacement for top-k.

- Why it matters: The knobs developers relied on for two years are vanishing — the new defaults ship silently.

- What’s next: Structured output engines will absorb most sampling decisions, making manual parameter tuning a shrinking concern.

The temperature and sampling knobs that developers spent two years fine-tuning are disappearing, not because they failed, but because the models outgrew them. OpenAI locked temperature on its reasoning lineup while open-source stacks such as llama.cpp promoted min-p into their default sampler chain. The parameter surface is being rewritten from both ends. You’re either tracking the changes or running configs that no longer match reality.

The Control Surface Just Split in Two

Thesis: The sampling stack is fracturing into two incompatible paradigms — proprietary lockdown versus open-source expansion — and the gap widens every quarter.

OpenAI’s reasoning models (GPT-5.4, GPT-5.4-pro, GPT-5-mini, GPT-5-nano, o4-mini) ship with top_p fixed at 1, temperature locked at 1, and frequency penalty fixed at 0 (OpenAI Docs). Developers cannot adjust any of them.

The replacement: a reasoning_effort parameter with six levels from none to xhigh. GPT-5.4 defaults to none for low-latency responses (OpenAI Docs). The control moved from how tokens are sampled to how deeply the model reasons.

That’s not a parameter adjustment. That’s a different interface entirely.
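A minimal sketch of what that interface change means for client code, assuming your application assembles request payloads as plain dicts before handing them to an SDK. The model names and locked values come from the paragraphs above; `build_reasoning_request`, the `input` key, and the intermediate effort names (`minimal`, `low`, `medium`, `high`) are assumptions for illustration, not a documented OpenAI schema.

```python
# Hypothetical client-side guard. The model list and locked values follow the
# article; the payload keys and the helper name are illustrative assumptions.
LOCKED_MODELS = {"gpt-5.4", "gpt-5.4-pro", "gpt-5-mini", "gpt-5-nano", "o4-mini"}

# Values the article reports as fixed on those models.
LOCKED_VALUES = {"temperature": 1.0, "top_p": 1.0, "frequency_penalty": 0.0}

# Assumed names for the six effort levels, ordered from "none" to "xhigh".
EFFORT_LEVELS = ("none", "minimal", "low", "medium", "high", "xhigh")


def build_reasoning_request(model, prompt, effort="none", **sampling):
    """Return (payload, dropped); dropped lists knobs that asked for a non-locked value."""
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown reasoning_effort: {effort!r}")
    payload = {"model": model, "input": prompt}
    if model not in LOCKED_MODELS:
        # Non-reasoning models: pass sampling knobs through unchanged.
        payload.update(sampling)
        return payload, []
    # Reasoning models: strip every locked knob, but report the ones that
    # requested something other than the fixed value, since that intent is lost.
    dropped = sorted(
        name for name, value in sampling.items()
        if name in LOCKED_VALUES and value != LOCKED_VALUES[name]
    )
    payload.update({k: v for k, v in sampling.items() if k not in LOCKED_VALUES})
    payload["reasoning_effort"] = effort
    return payload, dropped


payload, dropped = build_reasoning_request(
    "gpt-5.4", "Summarize this ticket.", effort="low", temperature=0.9, top_p=1.0
)
print(dropped)  # ['temperature']
```

Reporting dropped knobs instead of silently discarding them is the point: a temperature of 0.9 that quietly vanishes is exactly the kind of config drift described here.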

Anthropic made the two knobs mutually exclusive. Claude Sonnet 4.5 and Haiku 4.5 force a choice: temperature or top_p, never both. Google went further: top-k is not available via the Gemini API at all.
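These restrictions are easy to encode as a pre-flight check so a rejected request never leaves your service. The sketch below is hypothetical: it encodes only the two rules stated above, and the provider keys and model identifiers are illustrative, not exact API model IDs.

```python
# Hypothetical pre-flight check for the provider restrictions described above.
# Model identifiers are illustrative, not exact API model IDs.
EXCLUSIVE_TEMP_TOP_P = {"claude-sonnet-4.5", "claude-haiku-4.5"}


def check_sampling(provider, model, params):
    """Return a list of problems; an empty list means no known restriction is hit."""
    problems = []
    if provider == "anthropic" and model in EXCLUSIVE_TEMP_TOP_P:
        # These models accept temperature or top_p, never both.
        if "temperature" in params and "top_p" in params:
            problems.append("set temperature or top_p, not both")
    if provider == "google" and "top_k" in params:
        # Per the article, top-k is not exposed through the Gemini API.
        problems.append("top_k is not available via the Gemini API")
    return problems


print(check_sampling("anthropic", "claude-sonnet-4.5", {"temperature": 0.7, "top_p": 0.9}))
```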

Three providers. Three restrictions. One direction: fewer knobs, less developer control over token selection at inference time. The open-source stack took the opposite bet.

One Algorithm, Contested Results

Min-p sampling, a dynamic truncation method that scales its cutoff relative to the highest-probability token, was accepted as an oral presentation at ICLR 2025 as the 18th highest-scoring submission. The algorithm: multiply a base threshold by the maximum token probability, then sample only from tokens whose probability clears that line (Min-p paper).
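The two-step rule can be sketched in plain Python. This is a minimal illustration of the thresholding described above, not the reference implementation from the paper or any framework's sampler; the helper names are hypothetical.

```python
import math
import random


def softmax(logits):
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def min_p_filter(probs, min_p):
    """Zero out tokens below min_p * max(probs), then renormalize the survivors."""
    threshold = min_p * max(probs)
    kept = [p if p >= threshold else 0.0 for p in probs]
    total = sum(kept)
    return [p / total for p in kept]


def min_p_sample(logits, min_p=0.1, rng=random):
    """Draw one token index from the min-p-truncated distribution."""
    probs = min_p_filter(softmax(logits), min_p)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


probs = [0.6, 0.25, 0.1, 0.05]
filtered = min_p_filter(probs, min_p=0.1)  # cutoff = 0.1 * 0.6 = 0.06
print([round(p, 3) for p in filtered])     # [0.632, 0.263, 0.105, 0.0]
```

Because the cutoff is a fraction of the top probability, a confident model (large maximum) prunes aggressively while an uncertain one keeps more candidates.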

Adoption was fast. HuggingFace Transformers, vLLM, SGLang, llama.cpp, Ollama, ExLlamaV2, KoboldCpp, and text-generation-webui all support it — frameworks with 667,000+ combined GitHub stars (Min-p paper). llama.cpp now defaults min-p to 0.05 in its server, baking it into the sampler chain ahead of temperature (llama.cpp GitHub).
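Why the position of min-p in the chain matters can be shown with a toy two-stage pipeline. The sketch assumes only what the paragraph states (min-p at 0.05, applied ahead of temperature); it is not llama.cpp's sampler code, the real chain includes other stages, and the helper names are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kept_by_min_p(probs, min_p):
    """Indices whose probability is at least min_p times the top probability."""
    threshold = min_p * max(probs)
    return [i for i, p in enumerate(probs) if p >= threshold]


def min_p_then_temperature(logits, min_p, temperature):
    # Truncate on the untempered distribution, then apply temperature to the survivors.
    keep = kept_by_min_p(softmax(logits), min_p)
    return keep, softmax([logits[i] for i in keep], temperature)


def temperature_then_min_p(logits, min_p, temperature):
    # Reshape first, then truncate: the candidate set now depends on temperature.
    return kept_by_min_p(softmax(logits, temperature), min_p)


logits = [4.0, 2.5, 0.5, 0.0, -1.0]
for t in (1.0, 2.0):
    keep, _ = min_p_then_temperature(logits, 0.05, t)
    print(t, keep, temperature_then_min_p(logits, 0.05, t))
# 1.0 [0, 1] [0, 1]
# 2.0 [0, 1] [0, 1, 2, 3, 4]
```

With min-p first, raising temperature only reshapes probabilities among the same two candidates; with temperature first, the same min-p value admits the whole tail.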

The picture is messier than the star count suggests. vLLM defaults min-p to 0.0 — disabled — requiring explicit opt-in (vLLM Docs). A critical reanalysis by Schaeffer et al. found min-p did not outperform baselines in quality or diversity when controlling for hyperparameter count (Schaeffer et al.). The academic case is contested even as the engineering community ships it by default.

Then there’s the layer underneath. Structured output engines are absorbing what manual sampling used to handle. XGrammar generates constraint masks in under 40 microseconds per token and runs as vLLM’s default structured output backend (XGrammar paper). llguidance, a Rust-based engine credited by OpenAI as the foundation for their structured output system, operates at roughly 50 microseconds per token.

When the grammar engine guarantees valid JSON, the sampling layer matters less. The constraint does the work that temperature used to do by accident.
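The division of labor between grammar and sampler fits in a few lines. The sketch below is a toy prefix check over a hand-written vocabulary, assuming a schema with exactly two valid outputs; it is not how XGrammar or llguidance compile grammars, and every name in it is hypothetical.

```python
import math

# Toy "schema": the only outputs the grammar accepts.
ALLOWED_OUTPUTS = ['{"ok": true}', '{"ok": false}']
# Toy vocabulary; real tokenizers have tens of thousands of entries.
VOCAB = ['{"ok": ', "true", "false", "}", "maybe", "yes"]


def allowed_next(prefix):
    """Token ids that keep `prefix` on the way to some accepted output."""
    return [
        i for i, tok in enumerate(VOCAB)
        if any(out.startswith(prefix + tok) for out in ALLOWED_OUTPUTS)
    ]


def mask_logits(logits, allowed):
    """Set every disallowed token's logit to -inf so it can never be chosen."""
    allowed = set(allowed)
    return [x if i in allowed else -math.inf for i, x in enumerate(logits)]


def constrained_greedy(score_fn, max_steps=10):
    """Greedy decoding where each step is restricted by the grammar mask."""
    text = ""
    for _ in range(max_steps):
        if text in ALLOWED_OUTPUTS:
            break
        allowed = allowed_next(text)
        if not allowed:
            raise RuntimeError("grammar dead end")
        masked = mask_logits(score_fn(text), allowed)
        best = max(range(len(masked)), key=masked.__getitem__)
        text += VOCAB[best]
    return text


def stubborn_model(text):
    # Stand-in for a model that always ranks the invalid token "maybe" highest.
    return [0.0, 1.0, 2.0, 0.5, 5.0, 4.0]


print(constrained_greedy(stubborn_model))  # {"ok": false}
```

Even though the stand-in model always prefers `maybe`, the mask leaves it no path to invalid JSON; the model's scores only choose among tokens the grammar already permits.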

Who Gains Ground

Open-source operators running llama.cpp or self-hosted vLLM with continuous batching. They get min-p plus structured output at no added cost. Teams running quantization pipelines for local deployment inherit min-p support when they update their inference server.

Structured output maintainers. XGrammar and llguidance are becoming infrastructure that every serving framework depends on.

Developers who already run greedy decoding or schema-constrained pipelines. Nothing broke; they are ahead by default.

Who Gets Left Behind

Teams that built creative generation around temperature on proprietary APIs. OpenAI’s reasoning models don’t offer that knob. If your product depended on temperature 0.9 for variation, that lever is gone.

Anyone treating top-k as a primary truncation strategy. Min-p’s dynamic threshold adapts to each token’s distribution. Top-k’s fixed cutoff does not.
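A small comparison makes the difference concrete. The two distributions below are made-up examples, and k = 3 and min_p = 0.1 are arbitrary illustrative settings; the helpers are hypothetical, not any framework's API.

```python
def top_k_keep(probs, k):
    """Indices of the k most probable tokens, regardless of how probable they are."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return sorted(order[:k])


def min_p_keep(probs, min_p):
    """Indices at or above min_p times the top probability."""
    threshold = min_p * max(probs)
    return [i for i, p in enumerate(probs) if p >= threshold]


peaked = [0.90, 0.05, 0.02, 0.01, 0.01, 0.01]  # model is confident
flat = [0.20, 0.18, 0.17, 0.16, 0.15, 0.14]    # many plausible continuations

for name, probs in (("peaked", peaked), ("flat", flat)):
    print(name, "top-k:", top_k_keep(probs, 3), "min-p:", min_p_keep(probs, 0.1))
# peaked top-k: [0, 1, 2] min-p: [0]
# flat top-k: [0, 1, 2] min-p: [0, 1, 2, 3, 4, 5]
```

Top-k keeps three tokens in both cases: two low-probability tail tokens when the model is confident, and only half the reasonable options when it is not. Min-p keeps one token in the first case and all six in the second.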

Developers who haven’t checked their sampling configs since 2024. llama.cpp activates min-p by default. vLLM does not. Assuming either way without verifying is how you ship bugs.
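One way to stop assuming is to make the default explicit in your own tooling. The sketch below is a hypothetical audit helper; the two default values are the ones cited in this article, and the framework keys and function name are illustrative.

```python
# min-p defaults as reported in this article; confirm them against the
# versions you actually run, since defaults can change between releases.
MIN_P_DEFAULTS = {"llama.cpp": 0.05, "vllm": 0.0}


def effective_min_p(framework, request_params):
    """Return (min_p, from_default) for a request sent to the given framework."""
    if "min_p" in request_params:
        return request_params["min_p"], False
    if framework not in MIN_P_DEFAULTS:
        raise ValueError(f"no recorded min-p default for {framework!r}")
    return MIN_P_DEFAULTS[framework], True


params = {"temperature": 0.8}  # no explicit min_p
for fw in ("llama.cpp", "vllm"):
    print(fw, effective_min_p(fw, params))
# llama.cpp (0.05, True)
# vllm (0.0, True)
```

The same request config yields min-p 0.05 on one server and no min-p at all on the other, which is why pinning `min_p` explicitly in every request is the safer habit.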

What Happens Next

Base case (most likely): Min-p becomes the standard secondary knob in open-source stacks within the year, displacing top-k in new projects while top-k persists in legacy configs. Proprietary APIs continue removing exposed parameters. Signal to watch: vLLM changing its min-p default from 0.0 to a nonzero value. Timeline: Late 2026.

Bull case: Structured output engines absorb enough of the sampling workload that manual parameter tuning becomes a niche concern. A shared config standard emerges across frameworks. Signal: Major cloud providers deprecating temperature in favor of intent-level controls like reasoning_effort. Timeline: Mid-2027.

Bear case: The Schaeffer et al. critique gains traction. Min-p adoption stalls. Open-source stacks fragment around competing methods. Signal: llama.cpp reverting its min-p default or major frameworks shipping alternative dynamic truncation. Timeline: Early 2027.

Frequently Asked Questions

Q: How did OpenAI locking reasoning model temperature to 1 change developer workflows in practice?

A: Teams lost direct control over output diversity and creativity for reasoning tasks. The shift forced migration to reasoning_effort as the primary control lever, moving prompt engineering from token-level tuning to intent-level configuration.

Q: How is min-p sampling replacing top-k as the default in open-source LLM inference stacks in 2026?

A: llama.cpp defaults min-p to 0.05 in its sampler chain. Other frameworks like vLLM support it but leave it disabled by default. Adoption is real but uneven, not a universal replacement.

Q: What sampling innovations are emerging as LLMs shift toward structured and constrained output generation?

A: Grammar-based constraint engines like XGrammar and llguidance generate token-level masks in microseconds, guaranteeing schema-valid output directly at the decoding layer. The direction is clear: control is shifting from probabilistic tuning to deterministic enforcement.

The Bottom Line

The sampling stack split. Proprietary APIs are removing knobs. Open-source stacks are adding better ones. Structured output engines will make most of this debate irrelevant within two years. You’re either auditing your sampling configs this quarter or finding out they changed in production.

AI-assisted content, human-reviewed. Images AI-generated.