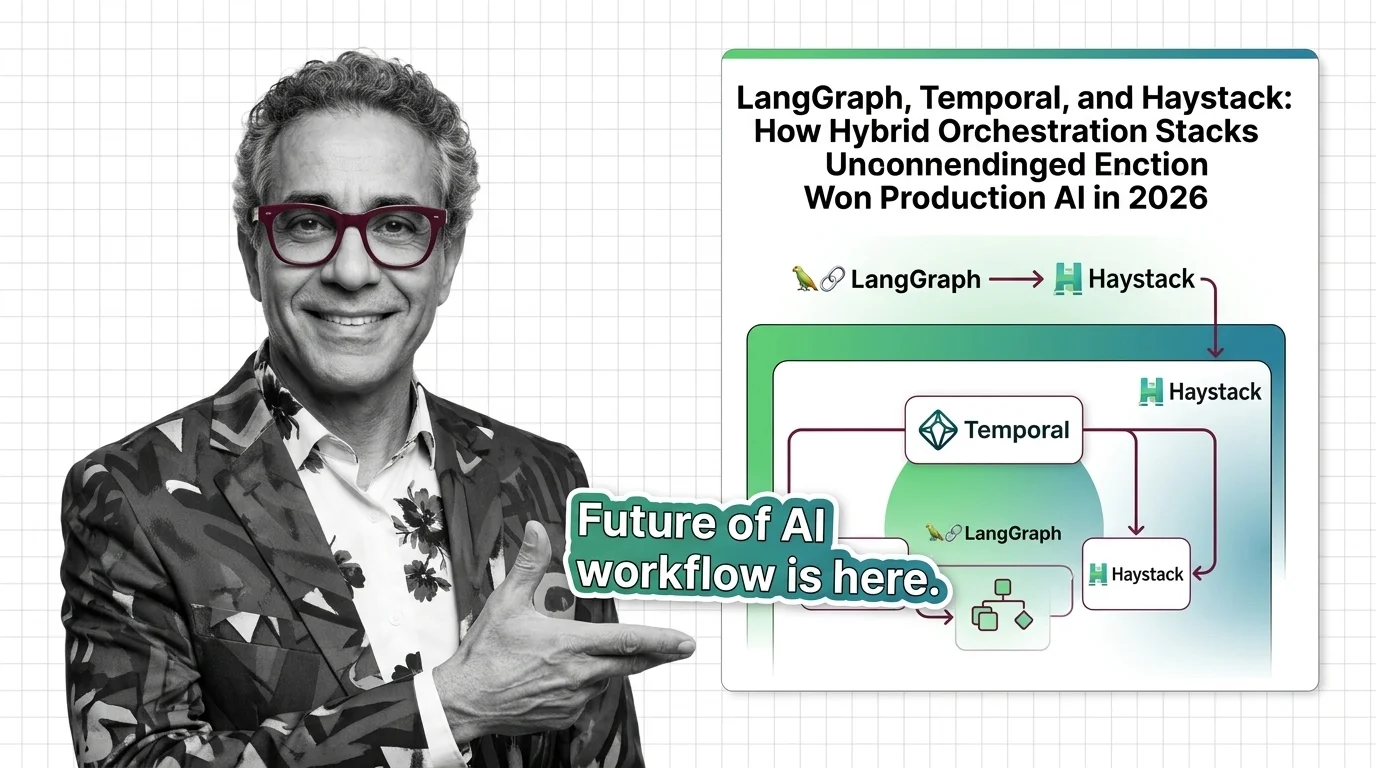

LangGraph, Temporal, and Haystack: How Hybrid Orchestration Stacks Won Production AI in 2026

TL;DR

- The shift: Production AI moved off single-framework agents and onto two-layer hybrid stacks — reasoning frameworks running inside durable execution engines.

- Why it matters: Teams that paired LangGraph or Haystack with Temporal got agents that survive long-running work; teams that didn’t are still firefighting state corruption and retry storms.

- What’s next: The reasoning layer stays competitive. The durable outer layer is consolidating. Pick the outer layer with care.

The interesting fight in workflow orchestration for AI stopped being “which framework wins.” It moved one floor up, to how teams stack two layers to keep agents alive across hours, retries, and partial failures. That stack now has a clear shape, and deployments that ignored it are running out of road.

The Single-Framework Bet Already Lost

The two-layer hybrid stack is the new production standard. An LLM reasoning framework wrapped in a durable execution engine — that is the pattern enterprises are converging on for any AI workload that touches real systems.

For most of 2024, the conversation was tribal. CrewAI versus AutoGen versus LangGraph. Pick a side, write demos, ship. That framing collapsed the moment the first wave of agents hit production and started doing real work — calling APIs, charging cards, mutating databases — across runs that lasted hours or days.

Single-framework agents could not survive that. Restarts lost state. Retries fired twice. Failures cascaded.

The teams that solved it didn’t switch reasoning frameworks. They wrapped the one they had in something built to remember.
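To make the "retries fired twice" failure concrete, here is a minimal Python sketch of an idempotency key guarding a side effect. Everything in it is hypothetical and for illustration only: `IdempotentExecutor`, `idempotency_key`, and `charge_card` are made-up names, and the in-memory dict stands in for whatever durable store a real system would use. It does not reflect how any framework named in this article implements deduplication.

```python
import hashlib
import json


class IdempotentExecutor:
    """Hypothetical helper: runs a side effect at most once per key.

    The dict below is an in-memory stand-in for a durable store.
    """

    def __init__(self):
        self._results = {}  # idempotency key -> recorded result

    def run(self, key, side_effect, *args):
        # A retry with the same key returns the recorded result
        # instead of firing the side effect a second time.
        if key in self._results:
            return self._results[key]
        result = side_effect(*args)
        self._results[key] = result
        return result


def idempotency_key(run_id, step, payload):
    """Derive a stable key from the run, the step name, and the input."""
    raw = json.dumps({"run": run_id, "step": step, "payload": payload}, sort_keys=True)
    return hashlib.sha256(raw.encode()).hexdigest()


charges = []  # records every time the "card" is actually charged


def charge_card(amount_cents):
    # Hypothetical side effect standing in for a real payment call.
    charges.append(amount_cents)
    return {"charged": amount_cents}


executor = IdempotentExecutor()
key = idempotency_key("run-1", "charge", {"amount_cents": 500})
first = executor.run(key, charge_card, 500)
second = executor.run(key, charge_card, 500)  # simulated retry of the same step
```

The retry returns the recorded result and the card is charged once. The design choice that matters is deriving the key from the run and step rather than from the wall clock, so a replayed step maps to the same key.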

Three Stacks, One Pattern

The evidence is convergent. LangGraph hit 1.0 GA on October 22, 2025 — the first stable major release in the durable agent framework space, according to LangChain’s changelog. By early 2026 the package was pulling 46.1M monthly PyPI downloads, with a documented production roster of Uber, LinkedIn, Klarna, BlackRock, and JPMorgan (LangChain Blog).

Haystack shipped v2.25.2 on March 5, 2026, on a near-weekly release cadence — modular pipelines, semantic reranking, enterprise tiers (Haystack release notes). CrewAI sits at 31,200 GitHub stars and 5M monthly downloads (Pooya Blog), claiming the role-based middle tier for faster prototypes.

Three frameworks. Three different bets on what reasoning should feel like.

All three are now being deployed inside Temporal.

Temporal Nexus reached GA at Replay 2025, stitching workflows across isolated namespaces. Multi-Region Replication landed with a 99.99% SLA and a 20-minute RTO. The multi-cloud extension hit GA at Replay 2026 (Temporal Blog). The split is clean: deterministic durable workflows on the outside, non-deterministic LLM calls on the inside.

That is not a coincidence. That is a stack consolidating in front of you.

The Numbers Behind the Pivot

According to Gartner figures cited secondhand by Pooya Blog, approximately 61% of large enterprises now run at least one production AI agent system, up from roughly 18% in 2024. Gartner also projects that 40% of enterprise applications will include task-specific agents by the end of 2026, up from under 5% a year earlier (Temporal Blog).

Treat the precise percentages as approximate. The direction is not.

The reason hybrid stacks won is operational, not architectural. A reasoning framework gives you a graph of LLM calls. A durable execution layer gives you state that survives a pod eviction at 3 a.m. You need both, or you spend your engineering budget building a worse version of the layer you skipped.
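The division of labor can be pictured with a toy sketch in plain Python. It is not Temporal's or LangGraph's API: `Journal`, `workflow`, `fake_llm_plan`, and `apply_refund` are hypothetical names, and a JSON file stands in for a real durable store. The idea it illustrates is that the outer layer records each completed step, so a restarted run replays recorded results instead of calling the non-deterministic step again.

```python
import json
import os
import tempfile


class Journal:
    """Hypothetical outer layer: persists the result of each completed step."""

    def __init__(self, path):
        self.path = path
        self.done = {}
        if os.path.exists(path):
            # A restarted process reloads whatever the previous one recorded.
            with open(path) as f:
                self.done = json.load(f)

    def step(self, name, fn):
        # Replay: a step that already completed is never executed again.
        if name in self.done:
            return self.done[name]
        result = fn()
        self.done[name] = result
        with open(self.path, "w") as f:
            json.dump(self.done, f)
        return result


calls = []  # tracks which steps actually executed


def fake_llm_plan():
    # Stand-in for a non-deterministic LLM call (inner layer).
    calls.append("plan")
    return "refund order 42"


def apply_refund():
    # Stand-in for a side effect against a real system.
    calls.append("refund")
    return "refunded"


def workflow(journal, crash_after_plan=False):
    plan = journal.step("plan", fake_llm_plan)
    if crash_after_plan:
        raise RuntimeError("simulated pod eviction")
    outcome = journal.step("refund", apply_refund)
    return plan, outcome


path = os.path.join(tempfile.mkdtemp(), "journal.json")
try:
    workflow(Journal(path), crash_after_plan=True)  # first attempt dies mid-run
except RuntimeError:
    pass
result = workflow(Journal(path))  # a fresh Journal reloads the recorded plan
```

After the simulated crash, the second run reuses the recorded plan and only executes the refund step. A production engine adds what this sketch leaves out, such as atomic writes, retries, timeouts, and concurrency control.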

Who Captured the Pivot

LangChain captured the reasoning layer. Production users, enterprise citation share, and the May 2026 release — DeltaChannel beta, per-node timeouts, Streaming v3 (LangChain Docs) — show a team executing on the durable-state direction faster than its category peers.

Temporal captured the outer layer. Multi-region, multi-cloud, AI-specific positioning — they got there before the agent companies needed them to.

deepset positioned Haystack for teams that want a unified open-source pipeline. The weekly release cadence reads like a company that knows the window is still open.

Enterprise platform teams that bet early on this two-layer pattern are shipping agents that survive contact with production. The teams still on a single framework are paying the difference in incidents.

Who Optimized for a Race That Ended

Anyone who built their AI roadmap around “pick one framework and standardize” is now standardizing on the wrong unit. The unit that matters is the two-layer stack, not the framework inside it.

The split inside AutoGen tells the story. Microsoft rewrote AutoGen as v0.4+ with a different API. The original v0.2 lineage continues as the community fork AG2, branched on November 11, 2024 (DEV Community). Teams that wrote “AutoGen” on a roadmap eighteen months ago are now filing migration tickets to figure out which AutoGen they actually have.

Vendors selling closed orchestration suites against an open hybrid stack are losing the architecture conversation. Procurement still buys them. Engineering quietly builds around them.

You’re either running reasoning inside durable execution, or you’re rebuilding durable execution from scratch on top of a framework that wasn’t designed for it.

What Happens Next

Base case (most likely): The hybrid pattern hardens. LangGraph plus Temporal becomes the reference deployment for enterprise agents, with Haystack and CrewAI claiming the open-source and prototyping tiers respectively. Signal to watch: Major cloud providers shipping first-class agent runtimes that bundle a reasoning framework with a durable executor. Timeline: Through late 2026.

Bull case: A neutral standards body or a CNCF-style survey quantifies the pattern, accelerating procurement. The reasoning-layer race stays competitive while the durable layer consolidates around one or two engines. Signal to watch: A vendor-neutral benchmark or open spec for the reasoning-plus-durable contract. Timeline: 2027.

Bear case: Frontier context windows grow large enough that lightweight orchestration covers most workloads, hollowing out the reasoning-framework layer. Durable execution still wins; framework differentiation shrinks. Signal to watch: Major labs shipping inference-time agentic primitives that reduce reliance on external orchestration. Timeline: 2027–2028.

Frequently Asked Questions

Q: How are companies running multi-step LLM pipelines in production in 2026?

A: They pair a reasoning framework (LangGraph, Haystack, or CrewAI) with a durable execution engine, almost always Temporal. The reasoning layer handles tool calls and branching. The outer layer handles retries, state, and long-running workflows that span hours or days.

Q: What is the future of workflow orchestration for AI in 2026 and beyond?

A: Consolidation on the two-layer pattern. The reasoning-framework tier stays competitive and fast-moving. The durable-execution tier consolidates around fewer engines with stronger multi-region guarantees. Single-framework agents survive in prototypes and stateless inference paths, not in long-running production work.

Q: How is the orchestration market shifting between LangGraph, CrewAI, AutoGen, and Temporal in 2026?

A: LangGraph leads the enterprise reasoning tier post-1.0. CrewAI owns rapid prototyping. AutoGen split into Microsoft’s v0.4+ rewrite and the AG2 community fork, fragmenting that ecosystem. Temporal is positioned as the durable executor most of them deploy inside.

The Bottom Line

The orchestration race didn’t pick a single winner — it picked a structure. Teams that wrap a reasoning framework inside a durable executor are shipping reliable agents. Everyone else is rebuilding the outer layer in private.

AI-assisted content, human-reviewed. Images AI-generated.