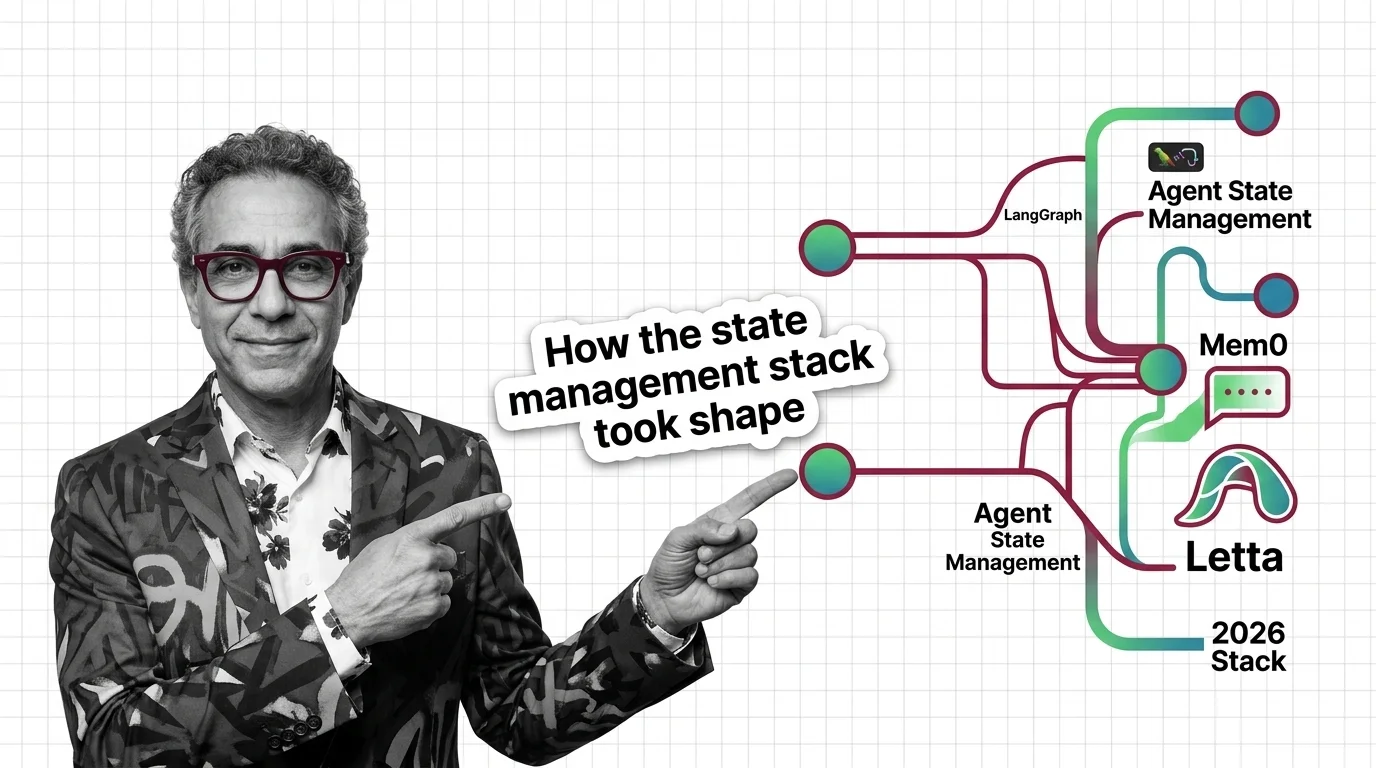

LangGraph, Mem0, Letta: The Agent State Stack in 2026


TL;DR

- The shift: Agent state management has split into two layers — thread-scoped checkpointing on the bottom and cross-session memory on top.

- Why it matters: Teams still treating memory as one component are paying for it in latency, cost, and rebuilds.

- What’s next: A standard memory protocol is forming. The window to pick the right primitives is open now.

For two years, builders treated agent memory as a single design choice. Pick a vector store. Write a clever prompt. Hope it remembers what the user said yesterday. That model just collapsed. The 2026 production stack is two layers — and the teams that figured out which one goes where are shipping while everyone else is still scrolling framework docs.

The Stack Just Split in Two

Thesis: Agent state management is no longer one decision but a layered architecture, with thread-scoped checkpointing at the bottom and cross-session memory on top.

LangGraph 1.0 went generally available in October 2025 and became the production default for one specific job: keeping a single agent task alive through crashes, retries, and human-in-the-loop interrupts.

That job is not the same as remembering a user.

A LangGraph checkpointer saves the agent’s state inside one thread. When the process dies, you replay from the last checkpoint. When a tool call needs review, you pause and resume. It does not persist what the user told you yesterday.

For that, you need a different layer entirely. Mem0, Letta, and Zep are answering a separate question: how does an agent build a model of a user — facts, preferences, ongoing context — that survives across sessions, threads, and even the agent’s own restarts?

Until now, teams smashed the two together. They wrote everything to a vector store and called it memory. The result was bloated context windows, unpredictable recall, and multi-agent systems that couldn’t tell the difference between “this is what we just decided” and “this is who the user is.”

The split is the new default.
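To make the split concrete, here is a minimal sketch of the two layers as plain Python. It is a toy model, not the LangGraph or Mem0 API: `ThreadCheckpointer`, `UserMemory`, and the IDs are hypothetical names chosen for illustration. The point is only the keying: task state hangs off a thread ID, user knowledge hangs off a user ID.

```python
from copy import deepcopy
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ThreadCheckpointer:
    """Bottom layer (hypothetical): ordered snapshots of one task, keyed by thread ID."""
    _snapshots: dict = field(default_factory=dict)

    def save(self, thread_id: str, state: dict) -> None:
        # Copy so later mutations of the live state cannot alter history.
        self._snapshots.setdefault(thread_id, []).append(deepcopy(state))

    def latest(self, thread_id: str) -> Optional[dict]:
        # After a crash or an interrupt, execution resumes from this snapshot.
        history = self._snapshots.get(thread_id)
        return deepcopy(history[-1]) if history else None


@dataclass
class UserMemory:
    """Top layer (hypothetical): durable facts about a user, keyed by user ID."""
    _facts: dict = field(default_factory=dict)

    def remember(self, user_id: str, key: str, value: str) -> None:
        self._facts.setdefault(user_id, {})[key] = value

    def recall(self, user_id: str) -> dict:
        return dict(self._facts.get(user_id, {}))


checkpoints = ThreadCheckpointer()
memory = UserMemory()

# Session 1: the task advances two steps and the agent learns a preference.
checkpoints.save("thread-1", {"step": 1, "pending_tool": None})
checkpoints.save("thread-1", {"step": 2, "pending_tool": "send_email"})
memory.remember("user-42", "preferred_language", "German")

# Simulated crash: the restarted process picks up at step 2.
resumed = checkpoints.latest("thread-1")

# Session 2, a new thread: no task state carries over, but the user fact does.
fresh_thread = checkpoints.latest("thread-2")
known_facts = memory.recall("user-42")
```

A new thread starts with no checkpoint, yet the user's preference is still there. That asymmetry is the whole argument for keeping the layers apart.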

Three Releases, One Pattern

Three releases over the past year tell the same story from different angles.

LangChain shipped LangGraph 1.0 with PostgresSaver and AsyncPostgresSaver as the production-grade checkpointers, the same ones LangSmith uses internally to run agents for Uber, LinkedIn, and Klarna (LangChain’s changelog). LangChain’s own State of Agent Engineering report claims a 96% error-recovery rate for agents on this stack (LangChain’s report). That’s a single-vendor figure, not an independent benchmark. But it’s a number LangChain is willing to put in customer-facing material, which suggests the checkpointer is now table stakes for shipping agents at scale.

Mem0 published its 2026 algorithm update in April. On the LOCOMO benchmark, Mem0’s self-reported accuracy moved from 71.4 under the prior algorithm to 91.6. On LongMemEval, from 67.8 to 93.4 (Mem0 Blog). Mem0’s own comparison also reports roughly 91% lower p95 latency than dumping full chat history into context (about 1.44 seconds versus 17.12 seconds) while using a small fraction of the tokens. All of these figures come from Mem0’s own blog and have not yet been independently reproduced. Treat them as a vendor signal, not a peer-reviewed result.
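The quoted figures are internally consistent, which is easy to check. The short calculation below uses only the numbers cited above; the variable names are ours, and the inputs remain Mem0's self-reported values.

```python
# Figures as quoted from Mem0's blog (self-reported, not independently reproduced).
full_context_p95_s = 17.12
mem0_p95_s = 1.44

# Relative reduction in p95 latency, and the equivalent speedup factor.
reduction_pct = (full_context_p95_s - mem0_p95_s) / full_context_p95_s * 100
speedup = full_context_p95_s / mem0_p95_s

# Benchmark movement in score points (new algorithm minus prior algorithm).
locomo_gain = 91.6 - 71.4
longmemeval_gain = 93.4 - 67.8

print(f"p95 latency reduction: {reduction_pct:.1f}%")  # 91.6%
print(f"speedup factor: {speedup:.1f}x")               # 11.9x
print(f"LOCOMO gain: {locomo_gain:.1f} points")        # 20.2
print(f"LongMemEval gain: {longmemeval_gain:.1f} points")  # 25.6
```

So "roughly 91% lower" corresponds to about a 12x difference in p95 latency, if the underlying measurements hold up.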

Letta, the framework formerly known as MemGPT, shipped v0.16.7 in March 2026 (Letta’s GitHub repository). Its core architectural commitment is the OS-style tiered memory model: core memory always in context, recall memory retrievable from history, archival memory indexed externally (Letta Docs). Letta is now built around this pattern, and Letta Code shipped shortly after as a coding agent that uses agent memory to remember a developer’s repo conventions across sessions.
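The three tiers can be pictured with a toy data structure. This is a sketch of the idea only, not Letta's API: `TieredMemory`, its field names, and the naive word matching are all assumptions made for illustration. Real systems would use search over history and an external index where this uses substring and key checks.

```python
from dataclasses import dataclass, field


@dataclass
class TieredMemory:
    """Hypothetical model of OS-style tiers; not Letta's implementation."""
    core: dict = field(default_factory=dict)      # always placed in context
    recall: list = field(default_factory=list)    # past messages, searched on demand
    archival: dict = field(default_factory=dict)  # external documents, looked up by key

    def build_context(self, query: str) -> str:
        words = query.lower().split()
        # Core memory is included unconditionally.
        lines = [f"[core] {k}: {v}" for k, v in self.core.items()]
        # Recall memory is included only when a query word appears in the message.
        lines += [f"[recall] {m}" for m in self.recall
                  if any(w in m.lower() for w in words)]
        # Archival memory is included only when its key is one of the query words.
        lines += [f"[archival] {doc}" for key, doc in self.archival.items()
                  if key in words]
        return "\n".join(lines)


mem = TieredMemory(
    core={"name": "Ada"},
    recall=["We agreed to use pytest for tests.", "Lunch is at noon."],
    archival={"deploy": "Deploys go through the staging cluster first."},
)

focused = mem.build_context("pytest deploy")
unrelated = mem.build_context("hello")
```

For the query `"pytest deploy"` the context contains the core fact, one recalled message, and one archival document. For `"hello"` only the core fact is included, which is the behavior the tiering is meant to guarantee.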

Three different teams. Three different bets. One direction: agent planning, reasoning, and memory have been pulled apart from execution state.

Security & compatibility notes:

- langgraph-checkpoint RCE (CVE-2025-64439): Insecure deserialization in JsonPlusSerializer enables remote code execution. Fix: upgrade langgraph-checkpoint to v4.0.0+.

- langgraph-prebuilt v1.0.2 breaking change: ToolNode.afunc now requires a runtime parameter, and langgraph 1.0.1 does not pin the dependency. Pin langgraph-prebuilt to a known-good version in your lockfile to avoid pulling a broken combination.
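One way to act on the first note is a startup or CI check that the installed package meets the version floor. The sketch below uses the standard library's `importlib.metadata`; `parse_release`, `meets_minimum`, and `check_package` are hypothetical helper names, and the 4.0.0 floor is taken from the note above. The parser is deliberately crude (it ignores pre-release suffixes), so a real project would likely prefer a proper version library.

```python
from importlib.metadata import PackageNotFoundError, version


def parse_release(text: str) -> tuple:
    """Turn '4.0.1' into (4, 0, 1); stops at the first non-numeric part."""
    parts = []
    for piece in text.split("."):
        if not piece.isdigit():
            break
        parts.append(int(piece))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    a, b = parse_release(installed), parse_release(minimum)
    # Pad with zeros so that '4.0' compares equal to '4.0.0'.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_package(name: str, minimum: str) -> str:
    try:
        installed = version(name)
    except PackageNotFoundError:
        return f"{name}: not installed"
    status = "ok" if meets_minimum(installed, minimum) else f"upgrade to {minimum}+"
    return f"{name} {installed}: {status}"


# Version floor from the security note above.
print(check_package("langgraph-checkpoint", "4.0.0"))
```

The second note is a lockfile concern rather than a floor: pin whichever langgraph-prebuilt version you have actually tested against, since the known-good version depends on your own stack.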

Who Moves Up

The teams winning this transition share one trait: they stopped treating memory as a feature and started treating it as infrastructure.

LangChain. By shipping a stable checkpointer that other vendors integrate against, LangChain made itself the substrate. Mem0’s LangGraph BaseStore adapter drops a memory engine into a graph node without restructuring agent logic (n1n.ai). That’s a leverage position.

Mem0 and Letta. Both are open source under Apache 2.0, both ship managed cloud tiers, and both have framework integrations across the popular stacks. Mem0 alone reports integrations with twenty-one frameworks, nineteen vector stores, and thirteen agent frameworks (Mem0 Blog). Their bet — that memory deserves its own product, not a paragraph in someone else’s docs — is now the consensus.

Application teams who already split the layers. Anyone who already has a thread-state layer separate from a user-knowledge layer can swap engines without rewriting the agent. They’re months ahead of teams that haven’t.
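What "swap engines without rewriting the agent" looks like in code is a narrow interface that the agent depends on, with engines plugged in behind it. The sketch below is an assumption-laden illustration: `MemoryEngine`, `KeywordMemory`, `RecencyMemory`, and `build_prompt` are hypothetical names, and neither class reflects how Mem0 or Letta actually retrieve memories.

```python
from typing import Protocol


class MemoryEngine(Protocol):
    """Hypothetical interface the agent codes against."""
    def add(self, user_id: str, fact: str) -> None: ...
    def search(self, user_id: str, query: str) -> list: ...


class KeywordMemory:
    """Stand-in engine A: case-insensitive substring match."""
    def __init__(self) -> None:
        self._facts: dict = {}

    def add(self, user_id: str, fact: str) -> None:
        self._facts.setdefault(user_id, []).append(fact)

    def search(self, user_id: str, query: str) -> list:
        return [f for f in self._facts.get(user_id, []) if query.lower() in f.lower()]


class RecencyMemory:
    """Stand-in engine B: ignores the query and returns the two newest facts."""
    def __init__(self) -> None:
        self._facts: dict = {}

    def add(self, user_id: str, fact: str) -> None:
        self._facts.setdefault(user_id, []).append(fact)

    def search(self, user_id: str, query: str) -> list:
        return list(reversed(self._facts.get(user_id, [])))[:2]


def build_prompt(engine: MemoryEngine, user_id: str, topic: str, message: str) -> str:
    # The agent only knows the interface, so the engine can change underneath it.
    recalled = engine.search(user_id, topic)
    memory_block = "; ".join(recalled) or "none"
    return f"Memory: {memory_block}\nUser: {message}"


prompts = {}
for engine in (KeywordMemory(), RecencyMemory()):
    engine.add("user-42", "Prefers the Vim editor")
    engine.add("user-42", "Works in UTC+1")
    prompts[type(engine).__name__] = build_prompt(engine, "user-42", "editor", "Open my editor")
```

`build_prompt` never changes between the two runs. Only the recalled content does, which is exactly the property that lets a team trial a different memory engine without touching agent logic.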

That’s not a niche advantage. That’s the difference between iterating and rebuilding.

Who Gets Left Behind

Teams still treating memory as one block of code are running last quarter’s playbook in a market that moved on.

If your agent writes everything to a single vector store, you’re paying retrieval cost on data that should never have been retrieved — and missing the data that actually matters because it’s drowning in transcript noise.

If your error recovery and your user knowledge live in the same persistence layer, you’re either over-saving conversation state or under-saving user identity. Pick one. Both are wrong.

If your agent framework comparison still treats checkpointing and memory as the same checkbox, you’re choosing tools on the wrong axis.

The pattern is clear: the laggards are still optimizing the unified layer. The leaders already have two.

You’re either splitting the stack or you’re explaining to your CFO why latency tripled.

What Happens Next

Base case (most likely): The two-layer pattern becomes the production default by year-end. Most agent frameworks ship a checkpointer-plus-memory adapter pattern, and the question shifts from “do I need memory?” to “which memory engine for which workload?” Signal to watch: Major framework releases adopting cross-vendor memory adapters as a standard interface, similar to how vector store adapters became standard in 2024. Timeline: Six to nine months.

Bull case: A standard memory protocol — something like MCP for state — emerges and lets teams swap memory engines the way they currently swap LLM providers. Mem0’s OpenMemory MCP work is already pointing this direction (Mem0’s GitHub repository). Signal: Two or more memory vendors agreeing on a shared schema for storage and retrieval. Timeline: Twelve to eighteen months.

Bear case: Vendors fragment the memory layer faster than teams can integrate it. Each framework ships its own incompatible memory primitive, and integration debt slows agent rollouts. Signal: Major frameworks announcing proprietary memory layers that don’t expose standard adapter interfaces. Timeline: Already happening at the edges.

Frequently Asked Questions

Q: How are companies using agent state management in production in 2026? A: Production teams pair two layers: LangGraph’s PostgresSaver for thread-scoped checkpointing and replay, and a memory layer like Mem0 or Letta for facts that survive across sessions. The checkpointer handles fault tolerance; the memory layer holds user knowledge.

Q: What is the future of agent state management and persistent memory? A: The two-layer pattern is consolidating into the production default. Expect cross-vendor memory adapters to standardize the way vector store adapters did. The next battle is which memory engine — graph, OS-tiered, or temporal — wins each workload.

The Bottom Line

The agent state stack split in 2026 because trying to solve thread state and user state with one component never actually worked. LangGraph owns the bottom layer. Mem0, Letta, and their peers are racing for the top one. If your team is still building agents on a single persistence layer, the rebuild has already started somewhere else.


—Stay ahead, Dan.

AI-assisted content, human-reviewed. Images AI-generated.