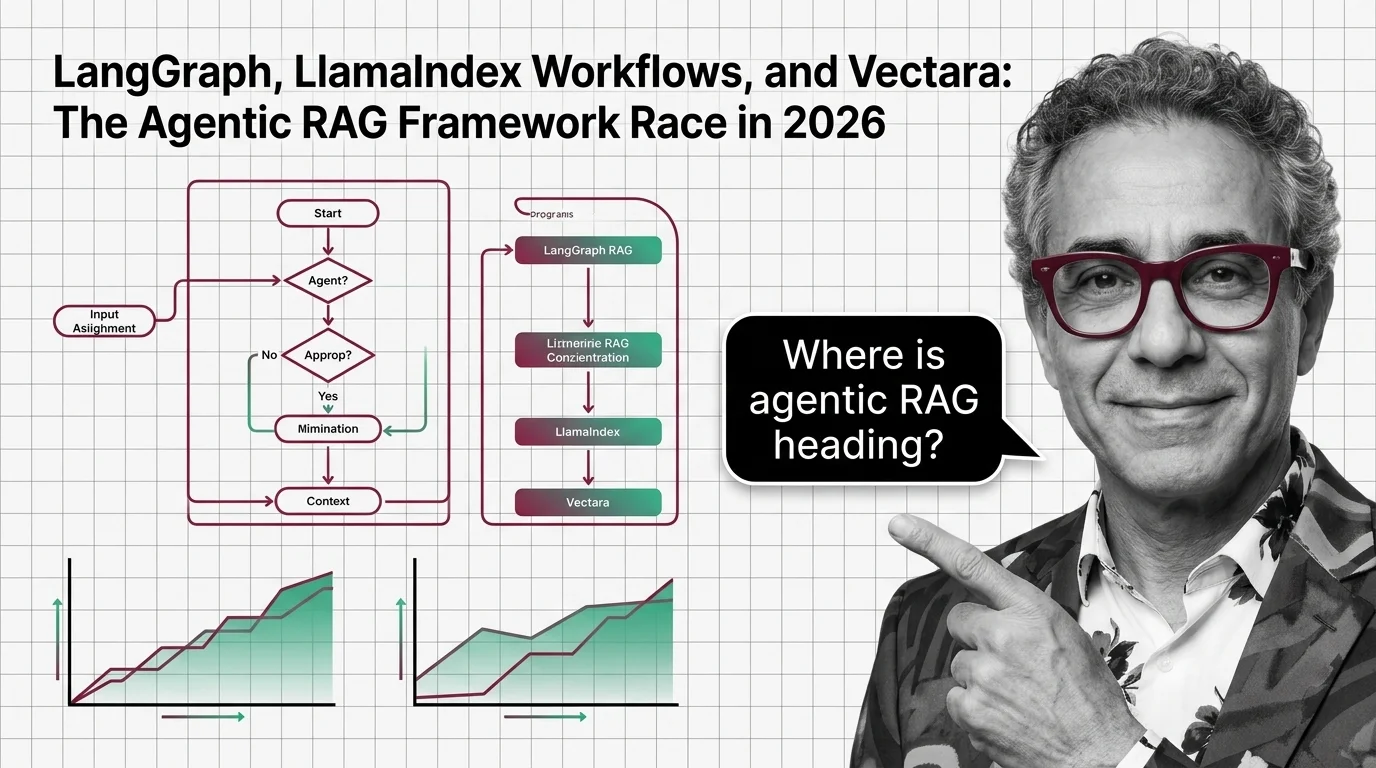

LangGraph, LlamaIndex Workflows, and Vectara: The Agentic RAG Framework Race in 2026


TL;DR

- The shift: Agentic RAG is no longer one stack — it has split into three competing layers, each owned by a different framework.

- Why it matters: Where you build (orchestrator, retriever, or managed platform) now decides what you can change later without a rewrite.

- What’s next: The next twelve months reward teams that pick a layer deliberately and bolt on evaluation infrastructure before scale, not after.

A year ago, “agentic RAG” was a slide. By mid-2026 it’s a procurement decision. Three frameworks have shipped 1.0 milestones in the last twelve months, and they aren’t competing for the same job. They’re staking out different layers of the same stack — and the layer you pick now is the one you’ll be stuck with at scale.

The Agentic RAG Stack Just Split Into Three Lanes

Thesis: Retrieval Augmented Generation is being absorbed into agent toolchains, and the agentic RAG market has split into three structural bets — orchestration, retrieval, and managed governance — each owned by a different framework.

LangGraph plays orchestrator. LlamaIndex Workflows plays retrieval router. Vectara plays managed platform. None of them are pretending to be the others anymore.

That’s the real signal of 2026. The “one framework to rule them all” pitch is gone, replaced by a stack where each layer ships its own contract. The agentic RAG survey on arXiv (2501.09136), revised in April 2026, classifies these systems along four axes: agent cardinality, control structure, autonomy, and knowledge representation. Each of the three frameworks prioritizes a different one of those axes, a different bet on what matters most in production.

Pick the wrong layer and you don’t lose features. You lose optionality.

Three Frameworks, Three Bets

LangGraph reached 1.0 on October 22, 2025, with a “no breaking changes until 2.0” stability commitment (LangChain Blog). The repo describes itself as a “low-level orchestration framework for building stateful agents” and now sits at 31.1k stars on GitHub. Production users disclosed by LangChain include Uber, LinkedIn, Klarna, JP Morgan, BlackRock, Cisco, Replit, and Elastic.

The bet: durable state, built-in checkpointers, human-in-the-loop, and cyclic graphs with conditional branching are what production agents actually need. Retrieval is just one node in the graph.
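To make the orchestrator bet concrete, here is a framework-free Python sketch of the pattern described above. Retrieval is one node in a cyclic graph. A conditional edge loops back through a query rewrite when retrieval comes back empty, and a checkpoint is recorded after every step. This is not LangGraph's API. The node names, the toy corpus, and the dict-based checkpoint store are hypothetical stand-ins.

```python
# Framework-free sketch: a cyclic retrieval graph with a checkpoint per step.
# All names (State, CORPUS, NODES, EDGES, run) are hypothetical; NOT LangGraph's API.
from dataclasses import dataclass, field, replace
from typing import Callable, Dict, List


@dataclass(frozen=True)
class State:
    question: str
    query: str
    docs: List[str] = field(default_factory=list)
    attempts: int = 0
    answer: str = ""


# Toy corpus standing in for a real retriever (assumption).
CORPUS = {
    "checkpointer": "A checkpointer persists graph state after every step.",
    "interrupt": "Human-in-the-loop pauses the graph for approval.",
}


def retrieve(s: State) -> State:
    hits = [text for key, text in CORPUS.items() if key in s.query.lower()]
    return replace(s, docs=hits, attempts=s.attempts + 1)


def rewrite(s: State) -> State:
    # Naive query transformation: fall back to a broader keyword.
    return replace(s, query="checkpointer")


def generate(s: State) -> State:
    return replace(s, answer=" ".join(s.docs))


def route_after_retrieve(s: State) -> str:
    # Conditional edge: loop back through rewrite while retrieval is empty,
    # capped at three attempts so the cycle always terminates.
    return "generate" if s.docs or s.attempts >= 3 else "rewrite"


NODES: Dict[str, Callable[[State], State]] = {
    "retrieve": retrieve, "rewrite": rewrite, "generate": generate,
}
EDGES: Dict[str, Callable[[State], str]] = {
    "retrieve": route_after_retrieve,
    "rewrite": lambda s: "retrieve",  # the cycle back into retrieval
    "generate": lambda s: "END",
}


def run(state: State, thread_id: str, checkpoints: Dict[str, list]) -> State:
    node = "retrieve"
    while node != "END":
        state = NODES[node](state)
        # Durable-state stand-in: append (node, state) after every step.
        checkpoints.setdefault(thread_id, []).append((node, state))
        node = EDGES[node](state)
    return state


store: Dict[str, list] = {}
final = run(State(question="How is state saved?", query="state saving"), "t1", store)
print([node for node, _ in store["t1"]])  # ['retrieve', 'rewrite', 'retrieve', 'generate']
print(final.answer)
```

LangGraph has equivalent pieces: nodes, conditional edges, and a checkpointer passed in when the graph is compiled. Keeping per-thread state after each step is what makes it possible to pause a run for human approval and resume it later.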

LlamaIndex Workflows 1.0 shipped earlier, on June 30, 2025, as an async-first, event-driven framework with typed state in both Python and TypeScript (LlamaIndex Blog). Crucially, Workflows split out as an independent package (llama-index-workflows, @llamaindex/workflow-core) that can run without the rest of LlamaIndex.
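The event-driven style can be sketched without the library. Each step is an async function keyed on the typed event it consumes. The runner dispatches whatever event a step emits until a stop event appears. This mini-runner is hypothetical and written for illustration only; it is not the llama-index-workflows API, although the StartEvent/StopEvent naming echoes that library's conventions.

```python
# Hypothetical async, event-driven runner with typed events.
# NOT the llama-index-workflows API; names are illustrative only.
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, List


@dataclass
class StartEvent:
    query: str


@dataclass
class RetrievedEvent:
    query: str
    nodes: List[str]


@dataclass
class StopEvent:
    result: str


Handler = Callable[[object], Awaitable[object]]


class MiniWorkflow:
    def __init__(self) -> None:
        self.handlers: Dict[type, Handler] = {}

    def step(self, event_type: type) -> Callable[[Handler], Handler]:
        # Register a step under the event type it consumes.
        def register(fn: Handler) -> Handler:
            self.handlers[event_type] = fn
            return fn
        return register

    async def run(self, event: object) -> str:
        # Route each emitted event to the step that accepts its type.
        while not isinstance(event, StopEvent):
            event = await self.handlers[type(event)](event)
        return event.result


wf = MiniWorkflow()


@wf.step(StartEvent)
async def retrieve(ev: StartEvent) -> RetrievedEvent:
    await asyncio.sleep(0)  # stand-in for an async retriever call
    return RetrievedEvent(ev.query, [f"doc about {ev.query}"])


@wf.step(RetrievedEvent)
async def synthesize(ev: RetrievedEvent) -> StopEvent:
    return StopEvent(f"{len(ev.nodes)} node(s) for '{ev.query}'")


print(asyncio.run(wf.run(StartEvent("hybrid search"))))  # 1 node(s) for 'hybrid search'
```

Steps connect through event types rather than explicitly declared edges. Adding a new stage means declaring a new event type and a step that consumes it.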

The bet: orchestration is table stakes; the moat is retrieval. Workflows ships an auto_routed mode and a Composite Retrieval API that routes queries across multiple indexes, turning query transformation, hybrid search, and reranking into composable steps inside one event-driven graph.
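A toy version of this pipeline shows how the pieces compose. There is a query transformation step, routing to only the indexes whose keywords match, and hybrid ranking merged with reciprocal rank fusion. A final filter sits where a real reranker would go. Everything here is hypothetical: the two in-memory "indexes", the keyword routing table, and the character-trigram Jaccard score standing in for embedding similarity. It is not LlamaIndex's Composite Retrieval API.

```python
# Composite retrieval sketch: transform -> route -> hybrid rank (RRF) -> rerank stand-in.
# All names are hypothetical; NOT LlamaIndex's Composite Retrieval API.
from typing import Dict, List, Set

INDEXES: Dict[str, List[str]] = {
    "contracts": ["termination clause for vendor contracts",
                  "renewal terms and notice periods"],
    "tickets": ["login error after password reset",
                "vendor portal login timeout"],
}
ROUTING_KEYWORDS = {"contracts": {"contract", "clause", "renewal", "vendor"},
                    "tickets": {"login", "error", "timeout", "portal"}}
SYNONYMS = {"sign-in": "login"}


def transform(query: str) -> List[str]:
    # Query transformation: lowercase, split, and map known synonyms.
    return [SYNONYMS.get(t, t) for t in query.lower().split()]


def route(terms: List[str]) -> List[str]:
    # Send the query only to indexes whose routing keywords overlap it.
    return [name for name, kws in ROUTING_KEYWORDS.items() if kws & set(terms)]


def keyword_score(terms: List[str], doc: str) -> float:
    return float(len(set(terms) & set(doc.split())))


def trigrams(text: str) -> Set[str]:
    return {text[i:i + 3] for i in range(len(text) - 2)}


def dense_score(terms: List[str], doc: str) -> float:
    # Stand-in for embedding similarity: Jaccard overlap of character trigrams.
    q, d = trigrams(" ".join(terms)), trigrams(doc)
    return len(q & d) / len(q | d) if q | d else 0.0


def rrf(rankings: List[List[str]], k: int = 60) -> Dict[str, float]:
    # Reciprocal rank fusion: sum 1 / (k + rank) over every ranking.
    fused: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            fused[doc] = fused.get(doc, 0.0) + 1.0 / (k + rank)
    return fused


def retrieve(query: str, top_k: int = 2) -> List[str]:
    terms = transform(query)
    docs = [d for name in route(terms) for d in INDEXES[name]]
    by_kw = sorted(docs, key=lambda d: keyword_score(terms, d), reverse=True)
    by_dense = sorted(docs, key=lambda d: dense_score(terms, d), reverse=True)
    fused = rrf([by_kw, by_dense])
    # A cross-encoder reranker would sit here; this stand-in drops candidates
    # with no exact term match, then orders the rest by fused score.
    candidates = [d for d in docs if keyword_score(terms, d) > 0]
    return sorted(candidates, key=lambda d: fused[d], reverse=True)[:top_k]


print(retrieve("vendor sign-in timeout"))
```

Reciprocal rank fusion, shown here with the commonly used k = 60, needs only each document's rank in each list, not comparable scores. That is why it is a convenient way to merge keyword and vector rankings whose raw scores live on different scales.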

Vectara took the third path: own the whole pipeline. The platform now markets itself as “The Unified Context Layer for AI Agents” and pairs HHEM (the Hughes Hallucination Evaluation Model) with Mockingbird, its RAG-specialized LLM tuned for structured output (Vectara). The vectara-agentic Python library wraps it all behind a ReAct or OpenAI agent type.

The bet: enterprises don’t want to assemble Contextual Retrieval from open-source parts — they want hallucination scoring, governance, and on-prem deployment in one contract.

That’s three releases, three architectures, one direction. The market just told you it doesn’t believe in a single winner.

Who Wins the Split

Production teams who pick a layer deliberately.

The LangGraph user list isn’t a marketing page; it’s a hiring pattern. Uber, JP Morgan, BlackRock, and Klarna run regulated workflows. They needed durable state and human-in-the-loop before they needed cleverer retrieval. They picked the orchestrator and bolted retrieval on as a tool.

Retrieval-heavy teams who never wanted to write a graph DSL win with LlamaIndex Workflows. Event-driven, typed, splittable from the parent package — it lets you hold onto LlamaIndex’s retrieval primitives without buying into a monolith.

Compliance-bound enterprises win with Vectara. HHEM scoring, Mockingbird, and air-gapped deployment options answer the questions banks and hospitals ask before the procurement form. Self-RAG-style verification cuts hallucination rates to 5.8% versus 12–14% for standard agentic pipelines, according to Data Nucleus — the kind of delta that justifies a managed bill.
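At its simplest, the verification idea is a gate. Each draft answer is scored against the retrieved sources, and only a draft that clears a threshold ships; otherwise the system abstains. This sketch is a simplified verify-then-retry loop. It is not the Self-RAG method itself, which trains the model to emit reflection tokens, and it is not HHEM. `support_score` is a crude token-overlap stand-in for a real factual-consistency model, and the threshold and retry budget are arbitrary assumptions. It does not reproduce the cited hallucination figures.

```python
# Verify-then-retry gate in the spirit of Self-RAG-style verification.
# support_score is a token-overlap stand-in for a real factual-consistency model;
# all names, the threshold, and the retry budget are hypothetical.
from typing import Iterable, List, Optional


def support_score(answer: str, sources: Iterable[str]) -> float:
    # Fraction of answer tokens that appear somewhere in the retrieved sources.
    source_tokens = {t for s in sources for t in s.lower().split()}
    tokens = answer.lower().split()
    return sum(t in source_tokens for t in tokens) / len(tokens) if tokens else 0.0


def answer_with_verification(drafts: Iterable[str], sources: List[str],
                             threshold: float = 0.8,
                             max_tries: int = 3) -> Optional[str]:
    # Accept the first draft whose support clears the threshold.
    for attempt, draft in enumerate(drafts):
        if attempt >= max_tries:
            break
        if support_score(draft, sources) >= threshold:
            return draft
    return None  # abstain rather than ship an unsupported answer


sources = ["the refund window is 30 days from delivery"]
drafts = ["the refund window is 90 days and includes shipping",
          "the refund window is 30 days from delivery"]
print(answer_with_verification(drafts, sources))  # the refund window is 30 days from delivery
```

In this sketch, the first draft scores 5/9 and is rejected. The grounded second draft passes. In a real pipeline, the drafts would come from regenerating with a rewritten query or extra context, not from a fixed list.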

The winners share one trait. They picked their layer before they shipped.

Who Gets Squeezed

The “RAG is dead” crowd was loud through 2025. They’re getting quiet now.

RAG didn’t die. It got absorbed. Anyone who killed their retrieval roadmap on the assumption agents would solve it for free is now writing retrieval inside their agent toolchain — just without the libraries that made it cheap.

Single-stack DIY teams are squeezed too. Building your own state machine, your own checkpointer, your own evaluation harness was defensible in 2024. In 2026 you’re competing with three frameworks that already shipped those primitives. Your engineering hours go to plumbing instead of differentiation.

And teams treating any of these frameworks as a drop-in are walking into a security bill they didn’t budget for.

Security & compatibility notes:

- LangGraph Checkpoint RCE (CVE-2026-27794): Pickle deserialization in the caching layer enables remote code execution. Fix: upgrade to 4.0.0+, which disables the pickle fallback by default (breaking change). Source: SentinelOne.

- LangChain prompt-loading path traversal (CVE-2026-34070, CVSS 7.5): Allows access to arbitrary files via the prompt-loading API (March 2026). Source: The Hacker News.

- LangGraph SQLite checkpoint SQL injection (CVE-2025-67644, CVSS 7.3): Metadata filter keys are exploitable. Patch and audit checkpoint queries.

- LangChain Core secret exposure (CVE-2025-68664): Serialization injection can leak secrets (December 2025). Source: NVD.
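The checkpoint bugs above follow two familiar patterns: unsafe deserialization and untrusted input spliced into SQL. A common mitigation covers both. This hypothetical in-memory store (not langgraph-checkpoint-sqlite) serializes state as JSON, so loading a checkpoint cannot execute code. It also allowlists metadata filter keys and passes every key and value as a bound SQL parameter instead of splicing it into the query string.

```python
# Sketch of a checkpoint store hardened against pickle RCE and SQL injection.
# Hypothetical schema and names; NOT langgraph-checkpoint-sqlite.
import json
import sqlite3
from typing import Any, Dict, List

ALLOWED_FILTER_KEYS = {"source", "step", "user"}


class SafeCheckpointStore:
    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE checkpoints (id INTEGER PRIMARY KEY, state TEXT)")
        self.db.execute("CREATE TABLE meta (cp_id INTEGER, key TEXT, value TEXT)")

    def put(self, state: Dict[str, Any], metadata: Dict[str, str]) -> int:
        # JSON only: json.loads can never import or call arbitrary objects.
        cur = self.db.execute("INSERT INTO checkpoints (state) VALUES (?)",
                              (json.dumps(state),))
        cp_id = cur.lastrowid
        self.db.executemany("INSERT INTO meta VALUES (?, ?, ?)",
                            [(cp_id, k, str(v)) for k, v in metadata.items()])
        return cp_id

    def search(self, filters: Dict[str, str]) -> List[Dict[str, Any]]:
        bad = set(filters) - ALLOWED_FILTER_KEYS
        if bad:
            raise ValueError(f"unsupported filter keys: {sorted(bad)}")
        sql = "SELECT id, state FROM checkpoints"
        params: List[str] = []
        for key, value in filters.items():
            # Only fixed SQL fragments are concatenated; key and value are bound.
            clause = " WHERE" if not params else " AND"
            sql += clause + " id IN (SELECT cp_id FROM meta WHERE key = ? AND value = ?)"
            params += [key, str(value)]
        rows = self.db.execute(sql + " ORDER BY id", params).fetchall()
        return [json.loads(state) for _, state in rows]


store = SafeCheckpointStore()
store.put({"messages": ["hi"]}, {"source": "loop", "step": "1"})
store.put({"messages": ["hi", "there"]}, {"source": "loop", "step": "2"})
print(store.search({"step": "2"}))  # [{'messages': ['hi', 'there']}]
try:
    store.search({"step') OR 1=1 --": "x"})  # injection-shaped key is rejected
except ValueError as err:
    print(err)
```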

If your stack runs on these frameworks, an upgrade and a deserialization audit are not optional this quarter.
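For the deserialization audit, Python's standard library can inspect pickle bytes without loading them. `pickletools.genops` walks the opcode stream. Opcodes such as `GLOBAL` and `STACK_GLOBAL` import objects, and `REDUCE` calls them, which together let a pickle run code on load. This sketch assumes you can export stored checkpoint blobs as raw bytes. A hit means "review this", not "exploit", because ordinary objects such as `datetime` also pickle with these opcodes.

```python
# Audit sketch: flag pickle blobs whose opcode stream imports or calls objects.
# pickletools.genops only parses opcodes; nothing is unpickled or executed.
import pickle
import pickletools
from typing import Iterable, List, Tuple

# Opcodes that resolve globals or invoke callables during unpickling.
RISKY_OPCODES = {"GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ",
                 "NEWOBJ", "NEWOBJ_EX", "BUILD"}


def risky_ops(blob: bytes) -> List[str]:
    return sorted({op.name for op, _, _ in pickletools.genops(blob)} & RISKY_OPCODES)


def audit(blobs: Iterable[Tuple[str, bytes]]) -> List[Tuple[str, List[str]]]:
    # Report every blob that could execute code if passed to pickle.loads.
    findings = []
    for name, blob in blobs:
        ops = risky_ops(blob)
        if ops:
            findings.append((name, ops))
    return findings


class Exploit:
    def __reduce__(self):
        return (print, ("pwned",))  # harmless stand-in for os.system


blobs = [("plain", pickle.dumps({"messages": ["hi"]})),
         ("malicious", pickle.dumps(Exploit()))]
print(audit(blobs))  # only "malicious" is flagged; its exact opcodes vary by protocol
```

Scanning is only triage. The durable fix is the one the advisories point to: stop unpickling checkpoint data at all.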

What Happens Next

Base case (most likely): The three-layer split hardens. LangGraph owns orchestration in regulated enterprises. LlamaIndex Workflows wins the retrieval-router lane. Vectara wins compliance-first procurement. Signal to watch: A second managed agentic RAG vendor reaching named-customer parity with Vectara on enterprise deals. Timeline: Twelve to eighteen months.

Bull case: Workflows-style event-driven orchestration becomes the default outside LangChain’s ecosystem, pulling routing standards toward open packages. Signal: A non-LlamaIndex framework adopting llama-index-workflows as its default orchestrator. Timeline: Six to twelve months.

Bear case: Continued CVE drumbeat against LangChain/LangGraph pushes regulated buyers toward managed platforms faster than open-source teams can patch and harden. Signal: A second BREAKING-tier vulnerability in the LangChain stack within two quarters. Timeline: Three to nine months.

Frequently Asked Questions

Q: Which agentic RAG frameworks are leading production deployments in 2026? A: No single leader. LangGraph dominates orchestrator-led deployments at firms like Uber, LinkedIn, Klarna, and JP Morgan (LangChain Blog). LlamaIndex Workflows leads retrieval-router use cases. Vectara leads compliance-bound enterprise deals. Pick by layer, not brand.

Q: How are companies using LangGraph and LlamaIndex for agentic retrieval in 2026? A: LangGraph teams treat retrieval as one node in a stateful graph with checkpoints and human-in-the-loop. LlamaIndex Workflows teams build event-driven retrieval pipelines using auto_routed mode and the Composite Retrieval API to route queries across multiple indexes.

Q: Where is agentic RAG heading after multi-step retrieval and self-correction? A: Toward layer specialization. Agents own orchestration, retrieval becomes a typed tool, and verification moves left into the pipeline. Self-RAG-style verification has cut hallucination rates substantially in recent benchmarks, per Data Nucleus — pushing evaluation infrastructure from optional to default.

The Bottom Line

The agentic RAG market split into three layers, and the framework you pick is now an architectural commitment, not a library choice. Production teams that decide deliberately — orchestrator, retriever, or managed platform — win the next eighteen months. Everyone else writes the retrieval code they thought agents would skip.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.