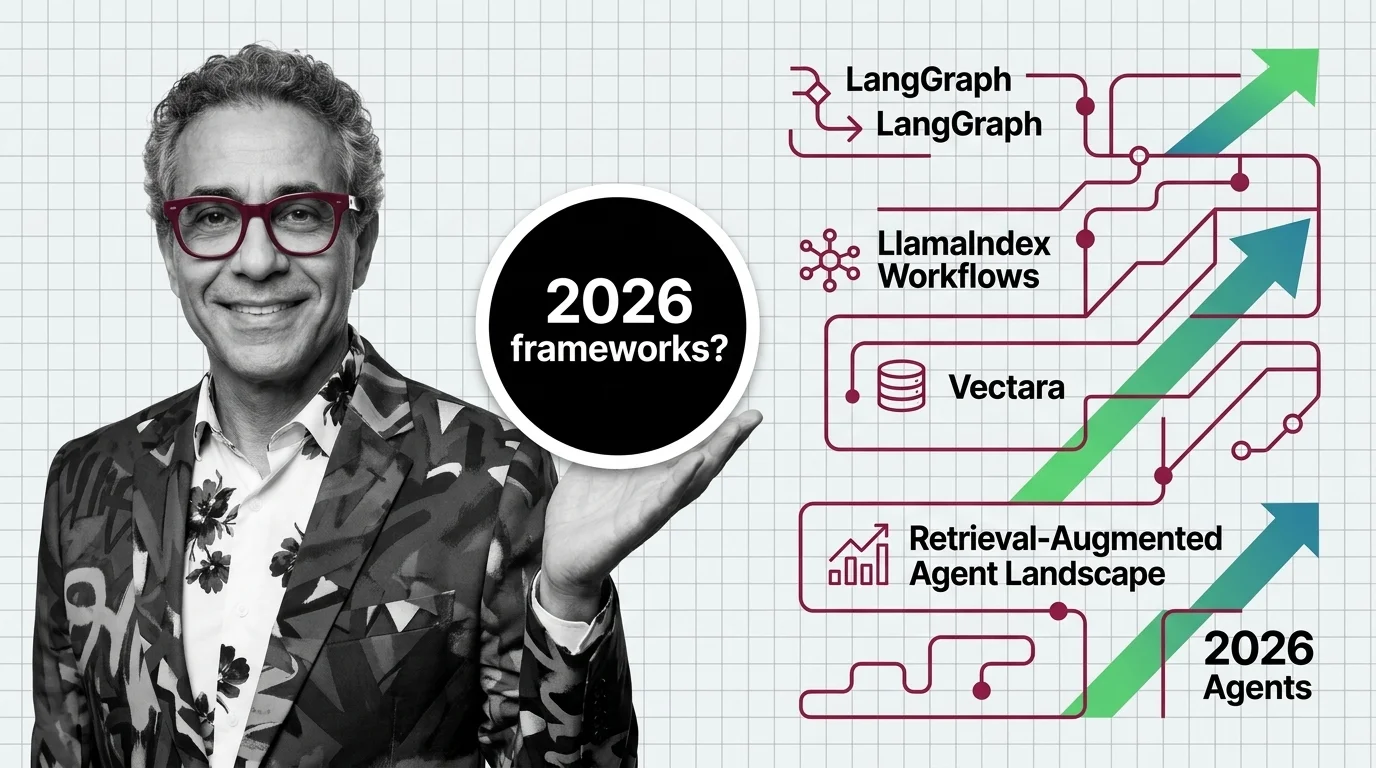

LangGraph, LlamaIndex Workflows, and Vectara: The 2026 Retrieval-Augmented Agent Landscape

TL;DR

- The shift: Retrieval Augmented Agents just stratified into three layers — graph orchestration, event-driven retrieval workflows, and managed grounding — each with a different leader and a different buying decision.

- Why it matters: Picking a “RAG framework” is not the right question anymore. Picking which layer of the stack you own is.

- What’s next: MCP becomes the connective tissue between the layers. Teams without an evaluation discipline lose the next twelve months to silent failures.

For two years, retrieval-augmented generation was a Lego pile. You snapped together a vector store, a retrieval chain, a model, and called it production.

That era ended in the last twelve months — quietly, while everyone’s attention was on agents. The retrieval-augmented stack stratified, and the new shape rewards different bets than the old one did.

The Stack Just Stratified

Thesis: Retrieval-augmented agents are no longer a single product category. They are three layers, and the three barely compete with each other anymore.

Layer one is the orchestration runtime — the graph that decides which tool runs, what state persists, when a human gets pulled in. Layer two is the retrieval-first workflow engine — async, event-driven, data-aware by default. Layer three is the managed grounding service — the part of the stack that takes hallucination off your build list.
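To make the three-layer split concrete, here is a minimal sketch of the layers as separate interfaces. Everything in it is hypothetical: `RetrievalWorkflow`, `GroundingService`, `orchestrate`, and the toy keyword retriever and token-overlap grounder are illustrative stand-ins, not the API of LangGraph, LlamaIndex, or Vectara. The point is only that each layer can be swapped independently.

```python
from dataclasses import dataclass
from typing import List, Protocol


@dataclass
class Passage:
    text: str
    source: str


class RetrievalWorkflow(Protocol):
    # Layer two (hypothetical interface): fetch and parse data for a query.
    def retrieve(self, query: str) -> List[Passage]: ...


class GroundingService(Protocol):
    # Layer three (hypothetical interface): score answer support in [0.0, 1.0].
    def score(self, answer: str, passages: List[Passage]) -> float: ...


class KeywordRetriever:
    """Toy retrieval layer: keyword match over an in-memory corpus."""

    def __init__(self, corpus: List[Passage]) -> None:
        self.corpus = corpus

    def retrieve(self, query: str) -> List[Passage]:
        terms = query.lower().split()
        return [p for p in self.corpus if any(t in p.text.lower() for t in terms)]


class OverlapGrounder:
    """Toy grounding layer: fraction of answer tokens found in the evidence."""

    def score(self, answer: str, passages: List[Passage]) -> float:
        tokens = answer.lower().split()
        if not tokens:
            return 0.0
        evidence = " ".join(p.text.lower() for p in passages)
        return sum(t in evidence for t in tokens) / len(tokens)


def orchestrate(query: str, draft: str, retriever: RetrievalWorkflow,
                grounder: GroundingService, threshold: float = 0.8) -> str:
    # Layer one: decide what happens next; return the draft or pull in a human.
    passages = retriever.retrieve(query)
    if grounder.score(draft, passages) >= threshold:
        return draft
    return "ESCALATE_TO_HUMAN"
```

Because `orchestrate` depends only on the two protocols, replacing the toy retriever with a real retrieval engine, or the toy grounder with a managed grounding service, does not touch the orchestration code.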

A year ago, all three lived inside the same monolithic framework. Today they ship as separate, stable products with separate strategic bets. That is not a feature update. That is the category splitting into specialized markets.

You are either picking a layer to own or you are paying three teams to integrate three layers badly.

Three Releases, Three Strategic Bets

The evidence is not anecdotal. Three major releases in eleven months mapped the new shape of the market.

LangGraph 1.0 went generally available on October 22, 2025 as the first stable major release with full backward compatibility (LangChain Blog). It ships durable state, built-in checkpoints, human-in-the-loop primitives, and time-travel debugging — the production-grade plumbing that classic chain abstractions never gave you (LangGraph Docs). Production references include Uber, LinkedIn, and Klarna.
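What "durable state, checkpoints, human-in-the-loop, and time travel" buy you is easiest to see in miniature. The sketch below is a hypothetical, stdlib-only runner (`CheckpointedGraph`, `run`, `state_after`, and the three node functions are invented for illustration); LangGraph's real API, built around `StateGraph` and pluggable checkpointers, differs. It snapshots state after every node, can pause before a node for human approval, resumes from that node, and lets you inspect any earlier snapshot.

```python
import copy
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, object]
Node = Callable[[State], State]


class CheckpointedGraph:
    """Hypothetical linear graph runner that snapshots state after every node."""

    def __init__(self, nodes: List[Tuple[str, Node]]) -> None:
        self.nodes = nodes
        self.checkpoints: List[Tuple[str, State]] = []  # (node name, state after it ran)

    def run(self, state: State, interrupt_before: Optional[str] = None,
            start_at: Optional[str] = None) -> Tuple[State, Optional[str]]:
        names = [name for name, _ in self.nodes]
        begin = names.index(start_at) if start_at else 0
        for name, fn in self.nodes[begin:]:
            if name == interrupt_before:
                # Human-in-the-loop pause: report which node to resume from.
                return state, name
            state = fn(copy.deepcopy(state))
            # Snapshot kept in memory here; a real runtime would persist it to storage.
            self.checkpoints.append((name, copy.deepcopy(state)))
        return state, None

    def state_after(self, index: int) -> State:
        # "Time travel": inspect (or fork from) the state saved after step `index`.
        return copy.deepcopy(self.checkpoints[index][1])


def retrieve(state: State) -> State:
    state["docs"] = ["doc-a", "doc-b"]
    return state


def draft(state: State) -> State:
    state["answer"] = f"based on {len(state['docs'])} docs"
    return state


def send(state: State) -> State:
    state["sent"] = True
    return state


graph = CheckpointedGraph([("retrieve", retrieve), ("draft", draft), ("send", send)])
state, paused = graph.run({"query": "q"}, interrupt_before="send")
state["approved"] = True  # a human reviews the draft, then resumes the run
final, _ = graph.run(state, start_at="send")
```

The design choice worth noticing is that the side-effecting `send` step only runs after an explicit resume, and every completed step leaves a snapshot you can replay from.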

LlamaIndex Workflows 1.0 shipped on June 30, 2025 as a standalone, lightweight package, published as llama-index-workflows in Python and @llamaindex/workflow-core in TypeScript (LlamaIndex Blog). The bet is different: event-driven, async-first, typed workflow state, resource injection, and OpenTelemetry observability baked in. It is decoupled from the broader LlamaIndex monorepo and aimed at teams that want workflow orchestration for AI with retrieval-native DNA.
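The event-driven model is the core difference from a graph runtime: steps do not call each other, they consume one typed event and emit the next. The sketch below shows that dispatch pattern with plain `asyncio`. It is an assumption-laden illustration, not LlamaIndex code: `EventWorkflow`, `on`, and `RetrievedEvent` are hypothetical names, and the real package (steps decorated with `@step`, `StartEvent`/`StopEvent` classes) differs in the details.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, Type


@dataclass
class Event:
    pass


@dataclass
class StartEvent(Event):
    query: str


@dataclass
class RetrievedEvent(Event):
    query: str
    passages: list


@dataclass
class StopEvent(Event):
    result: str


Handler = Callable[[Event], Awaitable[Event]]


class EventWorkflow:
    """Hypothetical runner: each step handles one event type and emits the next event."""

    def __init__(self) -> None:
        self.handlers: Dict[Type[Event], Handler] = {}

    def on(self, event_type: Type[Event]):
        def register(fn: Handler) -> Handler:
            self.handlers[event_type] = fn
            return fn
        return register

    async def run(self, start: StartEvent) -> str:
        queue: asyncio.Queue = asyncio.Queue()
        await queue.put(start)
        while True:
            event = await queue.get()
            if isinstance(event, StopEvent):
                return event.result
            # Dispatch on the event's concrete type; the emitted event is queued.
            await queue.put(await self.handlers[type(event)](event))


wf = EventWorkflow()


@wf.on(StartEvent)
async def retrieve(ev: StartEvent) -> Event:
    await asyncio.sleep(0)  # stands in for an async vector-store or API call
    return RetrievedEvent(query=ev.query, passages=[f"passage about {ev.query}"])


@wf.on(RetrievedEvent)
async def synthesize(ev: RetrievedEvent) -> Event:
    return StopEvent(result=f"{len(ev.passages)} passage(s) for '{ev.query}'")


print(asyncio.run(wf.run(StartEvent(query="workflows"))))  # prints: 1 passage(s) for 'workflows'
```

Because steps are wired by event type rather than by explicit edges, adding a parsing or reranking step means defining a new event and a new handler, without editing the existing ones.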

Vectara took the third path. Its Mockingbird-2 grounded-generation model posts a 0.9% hallucination rate on the HHEM leaderboard when paired with the Hallucination Correction Model, all in under 10B parameters (Vectara Blog). The pitch is not “build your own pipeline.” It is “stop paying the hallucination tax.”

Three releases. Three layers. One direction.

Who Just Moved Up the Stack

The winners are not the loudest brands. They are the teams that picked a layer and shipped a real moat.

LangChain repositioned LangGraph as the orchestration default and pushed classic chain agents into maintenance mode (LangChain Blog). That is a hard pivot most framework vendors fumble — and they executed it cleanly.

Stateful agents that need durability, recovery, and human approval steps now have a credible production runtime, not a notebook demo.

LlamaIndex stopped trying to be everything and made Workflows a standalone product. The retrieval-first, async-first design is honest about who it is for: teams building data-heavy agents that fetch, parse, and ground every step. The split from the core monorepo is the strategic tell.

Vectara sells what every enterprise procurement team actually wants: managed retrieval with measurable grounding, deployable as SaaS, customer-managed VPC, or air-gapped on-prem (Vectara). Its vectara-agentic library plugs into LlamaIndex’s agent framework instead of fighting it — a smart concession that turns a competitor into a distribution channel.

MCP is the dark-horse winner. Donated to the Linux Foundation in December 2025 with OpenAI, Google, and Microsoft as co-sponsors (MCP Blog), the protocol is now the default tool-calling layer in LangChain, LangGraph, LlamaIndex, and CrewAI. One industry tracker reports that the vast majority of new agent frameworks released in 2025 through early 2026 ship with built-in MCP support (Digital Applied) — directional, not audited, but the direction is unmistakable.
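Part of why MCP works as connective tissue is that the wire format is plain JSON-RPC 2.0. The sketch below builds a `tools/call` request and answers it with a toy dispatcher. It shows only the message shape: the real protocol also requires an initialize handshake and capability negotiation, the tool name `search_docs` is hypothetical, the error codes reflect our reading of the specification and should be checked against it, and in practice you would use an official MCP SDK rather than hand-rolled JSON.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical server-side registry; "search_docs" is an illustrative tool name.
TOOLS: Dict[str, Callable[..., str]] = {
    "search_docs": lambda query: f"top hit for {query!r}",
}


def make_tool_call(request_id: int, name: str, arguments: Dict[str, Any]) -> str:
    """Client side: a JSON-RPC 2.0 request using MCP's tools/call method."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def handle(raw: str) -> str:
    """Toy server side: dispatch one tools/call request to a local function."""
    req = json.loads(raw)
    reply: Dict[str, Any] = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        reply["error"] = {"code": -32601, "message": "Method not found"}
        return json.dumps(reply)
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        # Unknown tool: reported as a JSON-RPC protocol error.
        reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        return json.dumps(reply)
    try:
        text = tool(**params.get("arguments", {}))
        reply["result"] = {"content": [{"type": "text", "text": text}], "isError": False}
    except Exception as exc:
        # Failures inside the tool come back as a result flagged with isError.
        reply["result"] = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return json.dumps(reply)
```

Any framework that can emit and parse these messages can call any server that implements them, which is what lets orchestration, retrieval, and grounding layers from different vendors share tools.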

The orchestration layer, the retrieval layer, and the connective protocol all just got their adult versions.

Who’s Running Last Year’s Playbook

The losers are equally visible. They share a posture: optimizing for a stack shape that no longer exists.

Teams still hand-rolling retrieval chains with classic LangChain agent abstractions are now on a deprioritized path. LangChain’s v1 messaging is explicit — the classic agent API has been effectively superseded by LangGraph for production-facing work (LangChain Blog).

The migration is not optional. The only question is whether it happens on your timeline or under an incident.

Anyone who built on the pre-1.0 workflows module inside the LlamaIndex monorepo now owns a code path that the maintainers have moved past. The standalone llama-index-workflows package is the supported surface (LlamaIndex Blog). Pinning to the old import is a slow-motion technical debt event.

Frameworks without an evaluation and grounding story are the most exposed. Code-execution agents amplify the cost of a wrong retrieval: a hallucinated fact at the top of the trace becomes a wrong tool call, a wrong commit, a wrong invoice. Stacks without HHEM-style grounding scores or equivalent telemetry are flying without instruments.
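"Instruments" can start small: score every answer against its evidence before a side-effecting tool runs, and keep the scores so you can report a hallucination rate over time. The sketch below assumes a pluggable scorer with the hypothetical signature `(answer, evidence) -> float in [0, 1]`; in production that would be a grounding model such as an HHEM-style classifier, but here it is simply injected. `GroundingMonitor` and `guarded_tool_call` are illustrative names, not a vendor API.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical scorer: (answer, evidence) -> consistency score in [0, 1].
Scorer = Callable[[str, str], float]


@dataclass
class GroundingMonitor:
    scorer: Scorer
    threshold: float = 0.5
    scores: List[float] = field(default_factory=list)

    def check(self, answer: str, evidence: str) -> bool:
        """Record a score and report whether the answer is grounded enough to act on."""
        s = self.scorer(answer, evidence)
        self.scores.append(s)
        return s >= self.threshold

    def hallucination_rate(self) -> float:
        """Share of checked answers that fell below the threshold."""
        if not self.scores:
            return 0.0
        return sum(s < self.threshold for s in self.scores) / len(self.scores)


def guarded_tool_call(monitor: GroundingMonitor, answer: str, evidence: str,
                      tool: Callable[[str], str]) -> str:
    # Refuse the side-effecting call (commit, invoice, ...) when grounding fails.
    if not monitor.check(answer, evidence):
        return "BLOCKED: ungrounded"
    return tool(answer)
```

The gate stops a bad retrieval from turning into a bad action, and the recorded scores give you a trend line instead of discovering failures from an incident.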

And the broader category of “LLM frameworks” itself is under pressure. Industry commentary argues that some of what frameworks used to own is now absorbed into native Agent SDKs from OpenAI and Anthropic plus MCP (MindStudio).

Treat that as context, not an obituary. The frameworks that ship orchestration depth, evaluation, and governance — not just nice wrappers — keep their seat.

If your 2026 AI roadmap still says “we will pick a RAG framework,” you are answering the wrong question.

What Happens Next

Base case (most likely): The three-layer stack consolidates. LangGraph wins orchestration mindshare for stateful agents. LlamaIndex Workflows owns retrieval-heavy and document-agent use cases. Vectara and a small set of managed grounding services win the enterprise buyers who do not want to operate a vector database. Signal to watch: Major enterprises publishing reference architectures that name the layers separately rather than naming a single framework. Timeline: Through the end of 2026.

Bull case: MCP becomes a true commodity layer and the three stacks interoperate cleanly. Teams mix LangGraph orchestration, LlamaIndex retrieval workflows, and Vectara grounding without writing glue code. The market grows because integration cost collapses. Signal: First-class MCP servers shipped by all three vendors, with documented interop reference apps. Timeline: Within nine months.

Bear case: Native Agent SDKs from frontier labs absorb enough orchestration and retrieval that mid-tier framework vendors get squeezed. Vectara survives because grounding is a real engineering moat. Smaller orchestration competitors do not. Signal: Frontier-lab SDKs shipping persistence, HITL, and managed retrieval primitives equivalent to LangGraph’s v1 feature set. Timeline: Twelve to eighteen months.

Frequently Asked Questions

Q: Which retrieval-augmented agent frameworks are leading in 2026? A: Three leaders by layer: LangGraph 1.0 for stateful orchestration with durable state and HITL, LlamaIndex Workflows 1.0 for event-driven retrieval-heavy agents, and Vectara for managed grounding with HHEM-scored hallucination rates. They are complementary more than directly competing.

Q: Where are retrieval-augmented agents heading in 2026 and beyond? A: Toward a three-layer stack — orchestration, retrieval workflows, managed grounding — connected by MCP as the standard tool-calling protocol. Expect mix-and-match deployments to replace monolithic framework choices, with evaluation infrastructure becoming non-negotiable.

The Bottom Line

The retrieval-augmented agent market is not one race. It is three, and the smart teams already picked a layer. Watch which buyers stop asking “which framework” and start asking “which orchestration runtime, which retrieval engine, which grounding service” — that is the leading indicator that the split is priced in.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.