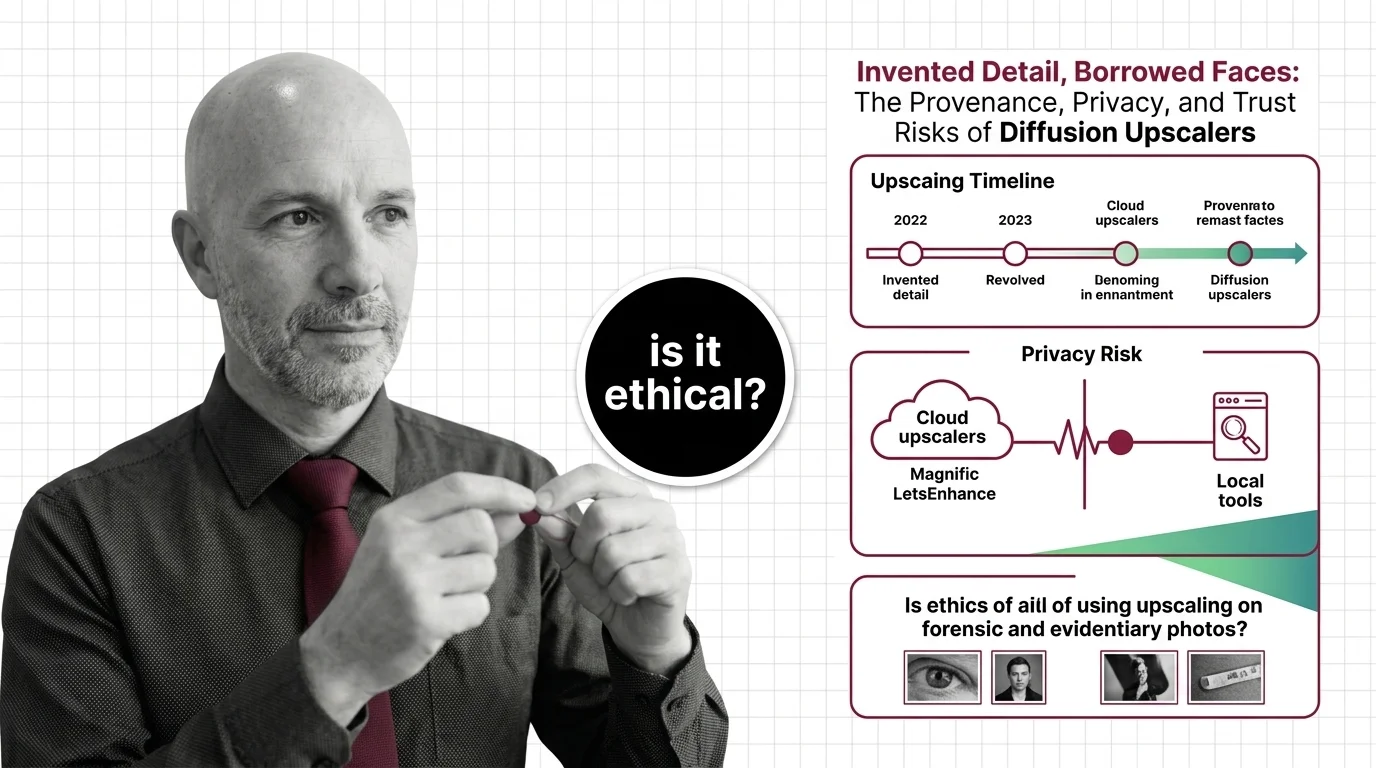

Invented Detail, Borrowed Faces: Diffusion Upscaler Risks

The Hard Truth

A blurry photograph travels through a courtroom, a newsroom, and a family album. After one click in a cloud upscaler, three different sharp photographs walk back. Each one looks real. None is a recovery — each is a negotiation between your image and a model trained on someone else’s face. Whose photo are we looking at now?

When you drag a low-resolution image into Magnific and the slider asks how much “creativity” you want, the interface is being unusually honest. The number you choose is the volume on a hallucination dial. The output is not your photo restored; it is your photo plus a confident guess from a diffusion-model prior trained on faces and surfaces that mostly belong to other people.

What We Are Calling Restoration, And What We Are Calling Invention

Most of us learned the word “enhance” from forty years of police procedurals. The detective leans into the screen, a few keys click, and the pixelated reflection in a window resolves into a license plate. The act felt magical, but it was framed as recovery: the detail was assumed to have been there all along, hiding in noise. Image upscaling carries that same moral weight in the public imagination: press the button, get the truth back.

Modern diffusion upscalers do something philosophically different. They do not recover detail; they synthesize plausible detail consistent with everything the model has ever seen. Magnific’s product copy describes its Creativity slider as governing “the level of hallucinations (and therefore the new details) that you want the AI to generate” (Magnific Docs). Invented detail is the product, not a bug. The marketing word is “enhance.” The honest word is closer to “co-author.” That distinction sounds academic until the photograph is evidence, journalism, or someone’s grandmother.
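The difference between recovery and synthesis can be made concrete with a toy example. The sketch below is an illustration only, not a model of any vendor's pipeline: it assumes a simple box-average downsampler and uses made-up pixel values to show that two visibly different high-resolution patches can collapse to the same low-resolution image. Once that has happened, nothing in the low-resolution pixels says which patch was the original, so any upscaler has to choose.

```python
def box_downsample(img, factor):
    """Average each factor x factor block of a 2-D list of pixel values."""
    height, width = len(img), len(img[0])
    out = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            block = [img[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

# Two different 4x4 "high-resolution" patches (hypothetical values).
smooth = [[100] * 4 for _ in range(4)]      # flat grey
textured = [                                # checkerboard texture
    [50, 150, 50, 150],
    [150, 50, 150, 50],
    [50, 150, 50, 150],
    [150, 50, 150, 50],
]

low_a = box_downsample(smooth, 2)
low_b = box_downsample(textured, 2)

# Both patches reduce to the same 2x2 image, so the low-resolution
# data alone cannot tell an upscaler which one it started from.
print(low_a == low_b)
```

Real cameras and codecs lose information in more complicated ways, but the many-to-one structure is the same: the missing detail is supplied by the model's prior, not by the photograph.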

The Case For Letting Pixels Become Pictures Again

It would be unfair to pretend the case for these tools is weak. A grainy mid-century portrait can be made beautiful again. Reviewers have documented striking results from Topaz Gigapixel, from Magnific’s Precision mode, and from open-source ESRGAN variants anyone can run on a modest GPU. Power users compose tile-by-tile pipelines through tiled upscaling in ComfyUI, sometimes layering a domain-specific image-generation LoRA to bias the invention toward a known style. Researchers have published models like SUPIR for anyone to experiment with, though SUPIR’s license restricts commercial use without written permission from the lead author, a constraint many product teams discover only after the demo is built (SUPIR’s GitHub repository).

For a hobbyist restoring family photos in private, the ethics are unremarkable. The question is what happens when the same act is performed for the public, on someone else’s face, or as part of a record that something actually happened.

The Word “Upscaling” Is Doing Concealment Work

The verb “upscale” inherits the moral framing of “restore.” It implies the operation is conservative — that we are returning to a state that already existed, not authoring a new one. That framing has consequences.

In State v. Puloka in King County, Washington, a defense team tried to introduce AI-enhanced video processed through Topaz Video AI. The court excluded it. The judge held that the technique “did not show with integrity what actually happened, but instead used opaque methods to represent what the AI model thought should be shown” — methods that “have not been peer-reviewed by the forensic video analysis community, are not reproducible by that community, and are not generally accepted,” failing the Frye standard for novel scientific evidence (American Bar Association; Greenberg Traurig). One trial-court ruling is not Supreme Court precedent, but it is the leading published U.S. exclusion of AI-enhanced footage on these grounds. The Maryland Judiciary went further in 2026, launching a pilot that provides court-appointed expert testimony on the authenticity of electronic evidence “that a court determines may have been created or altered by Artificial Intelligence” (Carey Law Office).

The forensic-imaging community has been quieter but no less direct. The SWGIT framework flags regenerative AI as categorically different from traditional enhancement, because the system “is not merely enhancing; it is adding / complementing details that were never captured” (Crime Scene Investigator). When the answer to “was the suspect wearing glasses?” is supplied by a model rather than the original sensor, the evidentiary chain has been broken in a way no enhancement log can repair.

Borrowing From the Library of Faces

The medium of photography has always been less innocent than its users assumed: darkroom dodging, retouched portraits, staged Civil War battlefields. There has never been a moment when “the photograph” was a neutral record of light. In the analog era, though, every intervention required a craftsperson, left physical traces, and was bounded by what one human could do in an afternoon.

Generative upscaling collapses that bound. A model can finish a face in milliseconds, drawing on a statistical memory of every other face it has ever seen. We have known since 2020 that this memory is not neutral. The PULSE incident, in which a self-supervised face upscaler turned a pixelated Obama into a recognizably white face, was not a one-off scandal. It was a structural symptom: the canonical face super-resolution benchmark, CelebA HQ, is roughly 90% white (DeepLearning.AI). A peer-reviewed CVPR study formalized what users were already discovering — that face super-resolution quality varies sharply by ethnicity and pose because of training-set imbalance (CVF Open Access). The bias is not a bug to patch. It is what the dataset means.
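A back-of-the-envelope calculation shows why an imbalanced dataset matters most when the input is most degraded. The sketch below is a deliberately simplified two-group Bayesian toy, not a description of how PULSE or any diffusion model works internally. The 0.9 prior is an assumption that mirrors the rough 90% figure cited above, and the likelihood values are hypothetical.

```python
def posterior(prior_a, likelihood_a, likelihood_b):
    """P(group A | evidence) for two groups, via Bayes' rule."""
    prior_b = 1.0 - prior_a
    weighted_a = prior_a * likelihood_a
    weighted_b = prior_b * likelihood_b
    return weighted_a / (weighted_a + weighted_b)

# Assumption: group A makes up 90% of the training data.
PRIOR_MAJORITY = 0.9

# Heavily blurred input: the pixels fit both groups equally well,
# so the answer is simply the dataset split (about 0.9).
uninformative = posterior(PRIOR_MAJORITY, 0.5, 0.5)

# The pixels favour the minority group 3:1, yet the majority
# group still wins (about 0.75).
weak_evidence = posterior(PRIOR_MAJORITY, 0.25, 0.75)

# The pixels must favour the minority group 9:1 just to reach
# even odds (about 0.5).
break_even = posterior(PRIOR_MAJORITY, 0.1, 0.9)

print(round(uninformative, 3), round(weak_evidence, 3), round(break_even, 3))
```

In this toy, the blurrier the photograph, the closer the likelihoods sit to each other and the more the result is decided by who was in the training set.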

When such a model finishes the face in your blurred photograph, it is not recovering your face. It is borrowing one from the people the dataset over-represents. Whose face have we just attached to your name?

When Reconstruction Becomes Authorship

Thesis: a diffusion upscaler is not a tool that recovers what an image was — it is a co-author that proposes what the image could be, and the asymmetry between those two acts is where the ethical weight of this category lives.

The thesis cuts toward provenance, privacy, and the press at once. Article 50 of the European Union’s AI Act becomes enforceable in August 2026 and directly addresses systems that transform input content: providers must “preserve marks and other intrinsic provenance signals on AI-generated or manipulated content… where such content is used as input and subsequently transformed by their AI system into a new output” (Herbert Smith Freehills Kramer). Upscalers are the textbook case, and most vendors are still operating under terms written before the rule existed.
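What “preserving provenance signals” through a transformation might look like can be sketched in a few lines. Everything here is hypothetical: the record fields, the `transform_with_provenance` helper, and the `fake_upscale` placeholder are illustrative names, not part of any real standard, vendor API, or compliance recipe. The only idea shown is that existing marks are carried forward untouched and a new record, bound to the input and output by their hashes, is appended.

```python
import hashlib
from datetime import datetime, timezone

def sha256_hex(data: bytes) -> str:
    """Hex digest that ties a record to exact file contents."""
    return hashlib.sha256(data).hexdigest()

def transform_with_provenance(input_bytes, input_marks, transform, tool_name):
    """Run `transform`, keep prior marks, and append a record of this step."""
    output_bytes = transform(input_bytes)
    record = {
        "tool": tool_name,                     # hypothetical field names
        "ai_generated_or_manipulated": True,
        "input_sha256": sha256_hex(input_bytes),
        "output_sha256": sha256_hex(output_bytes),
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
    }
    # Copy rather than mutate, so the caller's original marks stay intact.
    return output_bytes, list(input_marks) + [record]

def fake_upscale(data: bytes) -> bytes:
    """Placeholder standing in for a real upscaler."""
    return data + data

original = b"low-res pixels"
marks_in = [{"tool": "camera", "ai_generated_or_manipulated": False}]

upscaled, marks_out = transform_with_provenance(
    original, marks_in, fake_upscale, "hypothetical-upscaler"
)

for mark in marks_out:
    print(mark["tool"], mark["ai_generated_or_manipulated"])
```

A record like this does not make the invented detail any more truthful. It only lets a later reader see that a generative step happened and which exact file it was applied to.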

What privacy and IP risks come with cloud upscalers like Magnific and LetsEnhance versus local tools?

The bargain is not symmetric. Magnific is fully cloud-based; uploads are required, and processing happens on its servers. Its terms grant users “full copyright” of outputs while granting Magnific an “indefinite, worldwide, unlimited, non-exclusive, sublicensable, free license to use, host, store, scan, classify, index… reproduce… and transform” the uploads (Magnific’s legal page). The company says it “will not sell or advertise or make any commercial use” of asset contents, but the granted license is broad enough that a careful reader can argue the policy is silent on training rather than explicitly protective. LetsEnhance is similarly silent: its privacy policy lists “product development and improvement” as a permitted automated use of uploaded media (LetsEnhance’s privacy policy). Topaz Gigapixel processes locally by default and its iOS app runs offline, yet its formal privacy policy gives no image-specific commitments, a quiet gap between marketing posture and legal text (Topaz Labs’ privacy policy).

The Associated Press, in guidance from 2023 still in force, prohibits generative AI from being used “to create publishable content and images for the news service” (Reuters Institute). A 2025 AP-led survey found that around 70% of newsroom staff have used generative AI on the job (Poynter). That is not a policy gap. That is the field outrunning its own conscience.

What We Owe the Image, the Subject, and the Reader

The question is not whether to use diffusion upscalers; they are already woven into AI image-editing pipelines from family photos to advertising mood boards. The question is what we owe the image itself, the person inside it, and the audience who will see the result.

Is it ethical to use AI upscaling on forensic, journalistic, or evidentiary photographs?

Ethics here depends on labelling and purpose. As a private aesthetic act on your own photographs, it is mostly your business. As an act presented to a court, a jury, a reader, or a relative who believes they are seeing a recovered memory rather than a new composition, the duty to disclose is acute, and it is a duty that institutions, not individual users, have to underwrite.

Cloud services concentrate three risks that local tools do not: they take possession of the original image, they grant themselves licenses broad enough to cover model improvement, and they sit downstream of jurisdictions where the rules are still being written. Local options trade speed for the simple advantage that the photograph never leaves your machine. That is the difference between a private editorial decision and a contribution to someone else’s training corpus.

Where This Argument Is Weakest

This argument would need significant revision if two things turned out to be true. First, if the leading cloud vendors published clear, audited training-data exclusions and provenance signals well before August 2026 — and made them legally binding rather than aspirational — the privacy critique would soften considerably. Second, if forensic-imaging communities developed widely accepted standards for AI-enhanced evidence, with reproducibility and peer review, the categorical concern about evidentiary use would have to give way to a procedural one. Neither has happened yet. Both are possible.

The Question That Remains

Photography taught the twentieth century to trust the image. Generative upscaling is asking the twenty-first to trust the model that finishes it. We have not yet decided what that trust is supposed to look like — whether it is contractual, regulatory, journalistic, or personal — and the tools are scaling faster than the conscience that should govern them. What do we owe the people whose faces we will quietly borrow to finish someone else’s portrait?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.