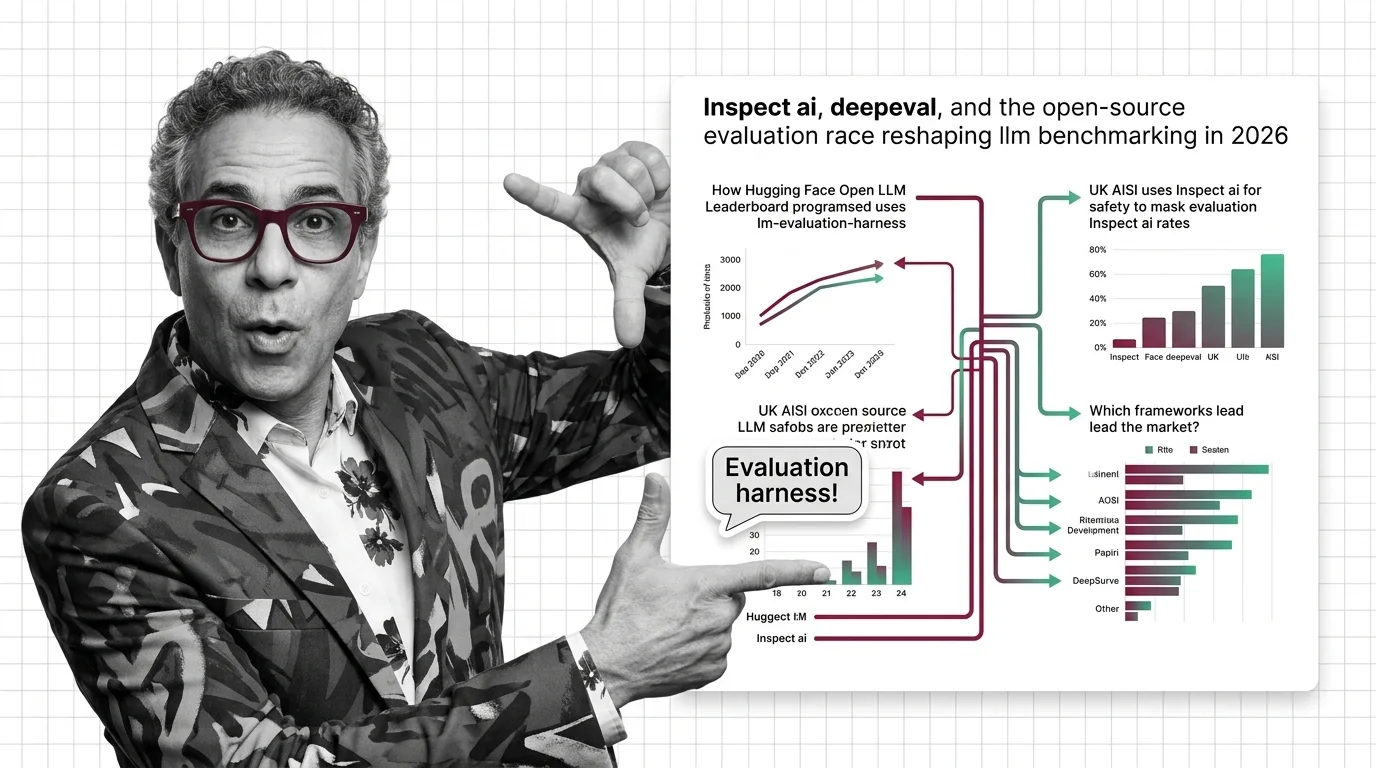

Inspect AI, DeepEval, and the Open-Source Evaluation Race Reshaping LLM Benchmarking in 2026

TL;DR

- The shift: LLM evaluation has fractured into three lanes — government safety mandates, enterprise CI/CD testing, and academic benchmarking — each with its own dominant framework.

- Why it matters: The framework you adopt now locks you into a compliance, quality, or research trajectory that gets harder to switch later.

- What’s next: Government-mandated evaluation standards will spread beyond the UK, forcing the industry to standardize or fragment further.

Just over a year ago, one leaderboard defined how the industry measured model quality. The Open LLM Leaderboard retired in March 2025 after evaluating over 13,000 models (Hugging Face). What replaced it isn’t a single successor. It’s three parallel evaluation ecosystems built for different stakeholders, optimized for different outcomes, answering to different incentives.

The unified scoreboard era is over. The question is which lane you’re running on.

The Evaluation Market Split Into Three Lanes

Thesis: The single-framework era for LLM evaluation is over — government regulation, enterprise quality assurance, and academic benchmarking now require purpose-built toolchains, and those toolchains are diverging fast.

Lane one: government safety. The UK AI Security Institute built Inspect AI and then mandated it. All autonomous system evaluations submitted to AISI must use the Inspect framework (UK AISI). That’s not a recommendation. That’s compliance infrastructure.

Lane two: enterprise CI/CD. DeepEval, with 14.5k GitHub stars, built the evaluation-as-testing pipeline production teams actually want: fifty-plus LLM-evaluated metrics, pytest integration, and a commercial platform that handles regression testing, red-teaming, and monitoring (DeepEval Docs).

Lane three: academic and community benchmarking. EleutherAI’s Evaluation Harness (lm-evaluation-harness) anchors the research ecosystem with 12k stars and 60-plus standard benchmarks (EleutherAI GitHub). OpenCompass covers 100-plus datasets and 400,000-plus questions across the Chinese AI ecosystem. Stanford’s HELM benchmark continues pushing multi-metric holistic evaluation.

These aren’t competing products. They’re different answers to different questions. That’s the structural shift.

The Data Behind the Split

The clearest signal: the UK moved from publishing a toolkit to mandating one. When AISI released its autonomous evaluation standard requiring Inspect AI, it turned an open-source project with 1.9k GitHub stars into regulatory infrastructure. Inspect ships with 100-plus pre-built evaluations spanning coding, math, cybersecurity, reasoning, and safety (UK AISI Docs).

The safety use case is already producing data that matters. Frontier models jumped from 1.7 to 9.8 completed steps on a 32-step cyberattack scenario between August 2024 and February 2026, per a Resultsense analysis of AISI evaluation results. Traditional model evaluation tools, such as a confusion matrix for classification or BLEU scores for generation, don’t capture multi-step capability gains at this scale. That gap is exactly why government-backed frameworks exist.
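To put the step counts above in proportion, the short calculation below works out the growth factor and the share of the 32-step scenario completed at each date. It uses only the three figures quoted in the paragraph; the function name is illustrative.

```python
# Figures as quoted above (Resultsense analysis of AISI evaluation results).
TOTAL_STEPS = 32
steps_aug_2024 = 1.7
steps_feb_2026 = 9.8


def share_of_scenario(completed: float, total: int = TOTAL_STEPS) -> float:
    """Return the fraction of the scenario's steps that were completed."""
    return completed / total


growth_factor = steps_feb_2026 / steps_aug_2024

print(f"Growth factor: {growth_factor:.1f}x")                                   # 5.8x
print(f"Share completed, Aug 2024: {share_of_scenario(steps_aug_2024):.1%}")    # 5.3%
print(f"Share completed, Feb 2026: {share_of_scenario(steps_feb_2026):.1%}")    # 30.6%
```

A single-step accuracy metric has no way to express this kind of movement, since the quantity of interest is how far along a chained task the model gets.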

On the enterprise side, DeepEval hit v3.9.5 by March 2026. Confident AI wraps the open-source metrics engine in an enterprise platform with built-in red-teaming and monitoring. The play is straightforward: evaluation that ships inside your CI pipeline, not next to it.
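A minimal sketch of what "evaluation inside the CI pipeline" can look like, written as a plain pytest-style test. This is not DeepEval's API: the `EvalCase` class, the `term_coverage` metric, the threshold, and the sample cases are all hypothetical stand-ins, and the model outputs are hard-coded where a real pipeline would call the model under test.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    prompt: str
    output: str                 # model response (hard-coded here for illustration)
    required_terms: list[str]   # terms a correct answer is expected to mention


def term_coverage(case: EvalCase) -> float:
    """Toy metric: fraction of required terms that appear in the output."""
    if not case.required_terms:
        return 1.0
    text = case.output.lower()
    hits = sum(term.lower() in text for term in case.required_terms)
    return hits / len(case.required_terms)


THRESHOLD = 0.75  # hypothetical quality bar; a real team would tune this

CASES = [
    EvalCase(
        prompt="What is the refund window?",
        output="Refunds are accepted within 30 days of purchase.",
        required_terms=["refund", "30 days"],
    ),
    EvalCase(
        prompt="Which plans include SSO?",
        output="SSO is included in the Enterprise plan.",
        required_terms=["sso", "enterprise"],
    ),
]


def test_outputs_meet_threshold():
    """Fails the build if any case scores below the threshold."""
    failures = [
        (case.prompt, term_coverage(case))
        for case in CASES
        if term_coverage(case) < THRESHOLD
    ]
    assert not failures, f"Cases below threshold: {failures}"
```

Because the check is an ordinary test function, a regression in model output fails the same CI job that a broken unit test would. Frameworks in this lane replace the toy metric with LLM-evaluated ones.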

Meanwhile, Hugging Face replaced its unified leaderboard with an OpenEvals organization hosting 200-plus community leaderboards — each measuring different capabilities for different audiences.

The monolith broke. Nobody is rebuilding it.

Who Moves Up

UK AISI. No other national body has both built an evaluation framework and mandated its use. That advantage compounds — every lab submitting evaluations to AISI trains its team on Inspect. Mindshare follows mandates.

Confident AI. They read the market right. Production teams don’t want research benchmarks. They want eval metrics that run alongside unit tests. DeepEval is the most-starred evaluation framework on GitHub, and the commercial platform gives them a revenue model academic projects lack.

Specialized leaderboard operators. The 200-plus community leaderboards that replaced the Open LLM Leaderboard aren’t fragmentation — they’re specialization. Teams building task-specific evaluations for medical reasoning, code generation, or multilingual performance now have purpose-built infrastructure.

One-size-fits-all benchmarking is dead. Specialization is the new competitive edge.

Who Gets Lapped

Anyone waiting for a unified standard is betting against the trajectory. The lanes are diverging, not merging.

Research-only frameworks without a production story face the sharpest pressure. lm-evaluation-harness remains foundational, but its last tagged release is v0.4.11 from February 2025 — and a CLI refactor in that cycle changed how teams install and configure the tool. Production teams are choosing frameworks with faster release cadences and native CI/CD integration.

Teams ignoring benchmark contamination face a different kind of loss. More benchmarks mean more surface area for training-data leakage, and fewer eyes per benchmark to catch it. The teams treating contamination as someone else’s problem will produce the least trustworthy results.
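One simple way to start auditing for leakage is an n-gram overlap check between benchmark items and a sample of training text. The sketch below is a rough heuristic under stated assumptions, not the method used by any framework named here: the function names, the choice of n, and the example strings are all illustrative.

```python
import re


def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Lowercase, tokenize on alphanumerics, and return the set of word n-grams."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_sample: str, n: int = 8) -> float:
    """Fraction of the benchmark item's n-grams that also occur in the corpus sample."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0  # item shorter than n tokens: nothing to compare
    return len(item_grams & ngrams(corpus_sample, n)) / len(item_grams)


item = "What is the capital of France and when was the Eiffel Tower completed?"
leaked = "Quiz dump: what is the capital of France and when was the Eiffel Tower completed? Answer: Paris, 1889."
clean = "The Eiffel Tower is a landmark in Paris."

print(overlap_ratio(item, leaked, n=5))  # 1.0: every 5-gram of the item appears verbatim
print(overlap_ratio(item, clean, n=5))   # 0.0: no shared 5-grams
```

A high ratio flags an item for manual review. It does not prove contamination, and paraphrased leakage will slip past an exact-match check like this one.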

You’re either auditing your evaluation pipeline or you’re trusting numbers you can’t verify.

What Happens Next

Base case (most likely): Government-mandated evaluation spreads. The EU and additional national safety bodies adopt Inspect-style frameworks within the next year. Enterprise teams standardize on CI/CD-native tools. Academic benchmarking fragments further into specialized community leaderboards. Signal to watch: A second national AI safety body mandating a specific evaluation framework. Timeline: 6-12 months.

Bull case: Inspect AI becomes the de facto international safety evaluation standard. Interoperability layers emerge that let teams run evaluations across frameworks. Fragmentation becomes manageable. Signal: Major cloud providers integrating Inspect AI into model deployment pipelines. Timeline: 12-18 months.

Bear case: Fragmentation deepens without interoperability. Evaluation results become incomparable. Benchmark shopping accelerates — labs pick the framework that flatters their model. Public trust in evaluations erodes. Signal: Multiple high-profile contradictory evaluation results for the same model. Timeline: Already underway.

Frequently Asked Questions

Q: How did the Hugging Face Open LLM Leaderboard use lm-evaluation-harness to rank models?

A: The Open LLM Leaderboard used EleutherAI’s lm-evaluation-harness as its backend, running standardized benchmarks across submitted models. It retired in March 2025 after evaluating over 13,000 models and has been succeeded by 200-plus community leaderboards under OpenEvals.

Q: How does UK AISI use Inspect AI to evaluate frontier model safety in 2026?

A: AISI requires the Inspect framework for all autonomous system evaluations submitted to it. Inspect ships with 100-plus pre-built evaluations spanning coding, math, cybersecurity, reasoning, and safety, giving AISI a standardized lens on frontier model capabilities and risks.

Q: What evaluation harness frameworks are leading the LLM benchmarking market in 2026?

A: DeepEval leads by GitHub adoption with 14.5k stars and an enterprise CI/CD focus. lm-evaluation-harness, at 12k stars, remains the academic standard. OpenCompass dominates the Chinese ecosystem at 6.8k stars. Inspect AI holds the government safety lane at 1.9k stars.

The Bottom Line

The evaluation market split into three lanes — safety mandates, enterprise testing, and academic research — and each is building infrastructure without waiting for consensus. The frameworks that survive won’t be the ones with the most benchmarks. They’ll be the ones embedded in workflows that can’t be switched off.

You’re either choosing your lane now or letting someone choose it for you.

AI-assisted content, human-reviewed. Images AI-generated.