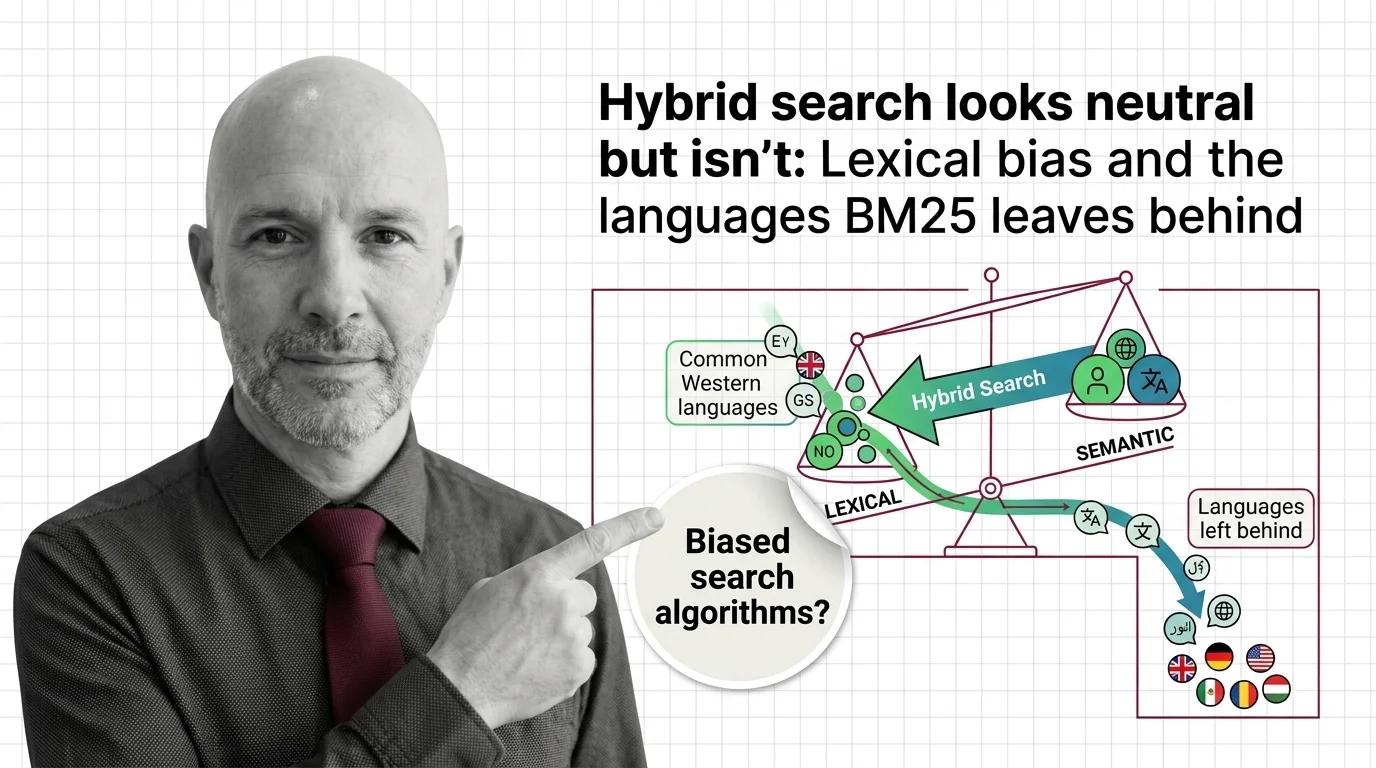

Hybrid Search Looks Neutral but Isn't: Lexical Bias and the Languages BM25 Leaves Behind

The Hard Truth

What if the most consequential decision in your retrieval pipeline was made decades ago, in a language you happen to speak, and inherited by every document you index in a language you do not? Hybrid search is sold as the best of both worlds. Whose world?

A user types a question in Yoruba into a hybrid search system and receives mostly English results. The same question in English returns confident, well-ranked answers — and never surfaces the Yoruba document at all. Nothing in the system has crashed. The dashboard is green. By the metrics that matter to engineers, the search worked. By any metric that matters to a person whose language is the one being filtered out, something quieter has gone wrong.

Who Decides Which Words Count as Words?

The vocabulary we use for retrieval is curiously sanitized. We talk about “tokenization” as if it were a neutral preprocessing step, a mechanical decomposition of strings into searchable units. But tokenization is where a system decides what counts as a word: what is signal and what is noise, what gets indexed and what gets discarded as malformed input. The inverted index at the heart of a BM25 implementation is not a passive structure. It is a list of permitted vocabulary, built from whatever the tokenizer was willing to recognize.

When the default tokenizer was tuned for English, the implicit definition of “word” became English-shaped. Spaces between tokens. Latin script. A finite set of suffixes a Porter stemmer can collapse. Languages that violate any of those assumptions (agglutinative morphology in Finnish or Turkish, complex case systems in Slavic languages, the absence of spaces between words in Chinese and Japanese, scripts the standard library was never tested against) quietly slip out of reach: their documents are still indexed, but as tokens that queries rarely match. Not as an outage. As a drop in recall that nobody is required to measure.
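To make the failure concrete, here is a minimal sketch of an English-shaped analyzer feeding a toy inverted index. Everything in it is hypothetical and deliberately crude: text is split on whitespace and punctuation, lowercased, and stripped of a few English suffixes (“ing”, “ed”, “s”) as a stand-in for a real stemmer such as Porter’s. The Turkish word “evlerimizden” (“from our houses”) and the unsegmented Chinese sentence “我喜欢搜索引擎” (“I like search engines”) are illustrative inputs, not benchmark data.

```python
import re
from collections import defaultdict

# Hypothetical English-shaped analyzer: whitespace/punctuation splitting,
# lowercasing, and crude English suffix stripping (a stand-in for a stemmer).
ENGLISH_SUFFIXES = ("ing", "ed", "s")


def english_shaped_tokens(text: str) -> list[str]:
    tokens = []
    for raw in re.split(r"[\s.,!?;:]+", text.lower()):
        if not raw:
            continue
        for suffix in ENGLISH_SUFFIXES:
            # Only strip when a reasonable stem remains ("houses" -> "house").
            if raw.endswith(suffix) and len(raw) > len(suffix) + 2:
                raw = raw[: -len(suffix)]
                break
        tokens.append(raw)
    return tokens


def build_index(docs: dict[str, str]) -> dict[str, set[str]]:
    # Inverted index: token -> ids of the documents containing that token.
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for token in english_shaped_tokens(text):
            index[token].add(doc_id)
    return index


def lookup(index: dict[str, set[str]], query: str) -> set[str]:
    # Boolean OR over query tokens: a document is a hit if it shares any token.
    hits: set[str] = set()
    for token in english_shaped_tokens(query):
        hits |= index.get(token, set())
    return hits


docs = {
    "en": "Two houses near the river",
    "tr": "evlerimizden",  # Turkish: "from our houses" (ev + ler + imiz + den)
    "zh": "我喜欢搜索引擎",  # Chinese: "I like search engines", no spaces
}
index = build_index(docs)

print(lookup(index, "house"))  # -> {'en'}  the English plural is collapsed
print(lookup(index, "ev"))     # -> set()   the Turkish stem finds nothing
print(lookup(index, "搜索"))    # -> set()   "search" is buried in one long token
```

Nothing here raises an error or logs a warning. The Turkish and Chinese documents are in the index; the analyzer simply never produced the units a query would need to hit them.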

The Case for Hybrid as Fairness Insurance

The conventional wisdom around hybrid search is reasonable, and it deserves to be presented at full strength. The argument runs like this: dense embeddings learn semantic similarity, which transcends the surface form of words. BM25 catches exact-keyword matches and rare technical terms that embeddings often dilute. By combining the two, hybrid systems hedge against the failure modes of either approach alone. And there is empirical weight behind the claim. On the MIRACL benchmark, which covers 18 languages with hundreds of thousands of native-speaker relevance judgments, zero-shot hybrid retrieval combining BM25 and multilingual dense passage retrieval (mDPR) beats both BM25 alone and in-language fine-tuned dense retrieval in most languages, per the MIRACL paper (TACL 2023).

That finding matters. It says that for many of the world’s languages, including several with limited training data, layering lexical and semantic retrieval produces measurably better recall than either method alone. Practitioners who build on hybrid search are not chasing a fad. They are responding to evidence. Retrieval-augmented generation (RAG) systems lean on hybrid retrieval because the gains are real, and agentic RAG pipelines extend that logic across multi-step reasoning. The case for hybrid is not that it is perfect. The case is that it is, on average, the best zero-shot baseline we currently have.

But “on average” is doing a lot of work in that sentence.

What the Default Tokenizer Quietly Decides

The hidden assumption inside the conventional wisdom is that hybrid search’s two halves treat languages symmetrically: that the BM25 channel and the dense channel both reach across linguistic boundaries with comparable competence. The evidence suggests otherwise. Yang et al. (EMNLP MRL 2024) found that BM25 exhibits larger language bias than neural retrievers like mDPR and XLM-R: semantically equivalent queries in different languages produce divergent rankings on the same multilingual corpus. The root cause is not subtle. BM25 only retrieves documents that contain the query’s keywords, so the language of the results closely tracks the language of the query. The bias is not a flaw in BM25. It is a property of how the algorithm was designed.
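The keyword-overlap mechanism is easy to see in a bare-bones Okapi BM25 scorer. The sketch below is a minimal, hypothetical implementation (whitespace tokenization, the commonly used defaults k1 = 1.2 and b = 0.75, and a Lucene-style non-negative IDF), run over a toy corpus in which one document is a Spanish rendering of an English one. Spanish simply stands in for any language the query is not written in.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    return text.lower().split()


def bm25_scores(query: str, docs: dict[str, str],
                k1: float = 1.2, b: float = 0.75) -> dict[str, float]:
    tokenized = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized.values()) / n_docs
    # Document frequency: how many documents contain each term.
    df = Counter(term for toks in tokenized.values() for term in set(toks))

    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if tf[term] == 0:
                continue  # no shared surface form, no contribution at all
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores[doc_id] = score
    return scores


docs = {
    "en_filter": "how to clean a water filter",
    "es_filter": "cómo limpiar un filtro de agua",  # same meaning, in Spanish
    "en_bread": "how to bake bread at home",
}

print(bm25_scores("clean water filter", docs))
print(bm25_scores("limpiar filtro de agua", docs))
```

The English query gives the Spanish document a score of exactly zero, and the Spanish query does the same to the English one. Nothing in the formula can reward a translation, which is why the language of the results follows the language of the query.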

The vendor stacks we use every day inherit this property without alarm. Weaviate’s BM25 implementation defaults to a word tokenizer that is English-oriented; Chinese and Japanese require a separate GSE tokenizer, Korean requires kagome_kr, and accent folding for Latin-script languages is an opt-in setting in Weaviate 1.x rather than a default, per the Weaviate Docs. Qdrant’s BM25 inference defaults to English stemming and stopword removal, with non-English usage requiring explicit model configuration, according to the Qdrant Docs (Hybrid Search).

The research literature points the same way. In multilingual retrieval, higher-resource languages tend to see greater language fairness, while lower-resource languages (Yoruba, Swahili, Telugu in the MIRACL set) face a compounding disadvantage that no fusion algorithm corrects after the fact (Yang et al.). And morphologically rich languages need language-specific stemming and lemmatization to maintain recall at all, a finding documented by the ACL 2025 cross-lingual IR survey.
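What “configuring the tokenizer” means can be sketched without touching any vendor API. The code below is a hypothetical per-language analyzer registry, not Weaviate’s or Qdrant’s interface: Chinese and Japanese text is split into overlapping character bigrams (a long-standing workaround for unsegmented scripts, used for example by Lucene’s CJK bigram filter), and any language without an explicit entry triggers a warning instead of silently falling back to the default.

```python
import warnings
from typing import Callable


def whitespace_lower(text: str) -> list[str]:
    # The "default" analyzer: adequate for English, blind to unsegmented scripts.
    return text.lower().split()


def cjk_bigrams(text: str) -> list[str]:
    # Overlapping character bigrams within each whitespace-separated run,
    # so a two-character word can match inside an unsegmented sentence.
    grams: list[str] = []
    for run in text.split():
        if len(run) == 1:
            grams.append(run)
        grams.extend(run[i : i + 2] for i in range(len(run) - 1))
    return grams


# Hypothetical registry: language code -> analyzer. Every entry is a decision
# someone made; every missing entry is a decision nobody made.
ANALYZERS: dict[str, Callable[[str], list[str]]] = {
    "en": whitespace_lower,
    "zh": cjk_bigrams,
    "ja": cjk_bigrams,
}


def analyze(text: str, lang: str) -> list[str]:
    analyzer = ANALYZERS.get(lang)
    if analyzer is None:
        # Make the fallback visible instead of silently using the default.
        warnings.warn(
            f"no analyzer configured for {lang!r}; using whitespace_lower",
            stacklevel=2,
        )
        analyzer = whitespace_lower
    return analyzer(text)


print(analyze("我喜欢搜索引擎", "zh"))
# -> ['我喜', '喜欢', '欢搜', '搜索', '索引', '引擎']
analyze("omi", "yo")  # no "yo" entry: emits a UserWarning, then falls back
```

Korean is left out of the registry on purpose. Whether it needs bigrams, a morphological analyzer, or something else is exactly the kind of call that should be made by someone who reads the language, and the warning makes its absence impossible to miss.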

So who is configuring the Korean tokenizer in your retrieval stack? And who is checking whether the configuration was right?

Cartography, Not Mathematics

It helps to stop thinking of BM25 as mathematics and start thinking of it as cartography. A map is not a neutral object. The choice of what to label, what to abbreviate, what to leave blank — these are decisions about whose world the map represents. Nineteenth-century European cartographers drew Africa as an interior of mostly empty space because their tradition could not represent what it had not catalogued. The territory was full. The map was sparse. The sparseness was not an absence in the world; it was an absence in the map’s vocabulary.

The default inverted index in a hybrid retrieval pipeline is a map of permitted vocabulary. When that vocabulary is shaped by an English-first tokenizer, the territory of multilingual content remains full while the index becomes sparse for everything outside the assumed language. The system does not refuse to index Yoruba documents. It simply tokenizes them in a way that makes their words harder to retrieve, then weights them in a fusion stage as if the lexical channel had been an equal partner. That is not a bug report. It is a description of what the architecture is.

The Quiet Asymmetry of “Neutral” Retrieval

When the lexical half of a hybrid retrieval system speaks one language fluently by default and stutters in dozens of others, hybrid search does not eliminate language bias; it launders it through fusion algorithms that present asymmetric inputs as equal contributions.

The fusion stage compounds the problem with a kind of false equality. Reciprocal Rank Fusion (RRF), introduced by Cormack et al. at SIGIR 2009, combines the rankings of independent retrievers using only rank positions, so their raw scores never need to be normalized, and that simplicity is part of why it became a common default in mainstream vector databases. But Bruch et al. (ACM TOIS 2023) found RRF to be sensitive to its parameters and to underperform a convex combination of normalized scores in both in-domain and out-of-domain evaluations. When the BM25 ranking that enters fusion has already been filtered through a tokenizer that misses inflected forms in your language, RRF does not detect that asymmetry. It treats a degraded ranking as one half of a balanced pair. The architecture of fusion presumes both inputs have done comparable work. In multilingual settings, they often have not.
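The fusion step itself is only a few lines. Below is a minimal sketch of Reciprocal Rank Fusion with the constant k = 60 used by Cormack et al., next to a convex combination of min-max normalized scores in the spirit of the methods Bruch et al. compare. The rankings, scores, and document ids are invented for illustration: the lexical list stands for a BM25 run that missed a relevant Yoruba document (the hypothetical “yo_doc”), while the dense list ranks it first.

```python
def rrf(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Reciprocal Rank Fusion: each list adds 1 / (k + rank) per document.
    # Only rank positions are used, and every input list gets the same say.
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] = scores.get(doc, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))


def min_max(scores: dict[str, float]) -> dict[str, float]:
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {d: 0.0 for d in scores}
    return {d: (s - lo) / (hi - lo) for d, s in scores.items()}


def convex(lexical: dict[str, float], semantic: dict[str, float],
           lexical_weight: float = 0.5) -> list[str]:
    # Convex combination of normalized scores; a document absent from a
    # channel is treated as that channel's minimum (0 after scaling).
    lex, sem = min_max(lexical), min_max(semantic)
    fused = {
        d: lexical_weight * lex.get(d, 0.0) + (1 - lexical_weight) * sem.get(d, 0.0)
        for d in set(lex) | set(sem)
    }
    return sorted(fused, key=lambda d: (-fused[d], d))


# Hypothetical Yoruba query: the lexical channel returned only English documents.
lexical_ranking = ["en_a", "en_b", "en_c"]
dense_ranking = ["yo_doc", "en_a", "en_b"]
lexical_scores = {"en_a": 3.1, "en_b": 2.9, "en_c": 2.7}
dense_scores = {"yo_doc": 0.82, "en_a": 0.55, "en_b": 0.50}

print(rrf([lexical_ranking, dense_ranking]))
# -> ['en_a', 'en_b', 'yo_doc', 'en_c']
print(convex(lexical_scores, dense_scores, lexical_weight=0.5))
# -> ['en_a', 'yo_doc', 'en_b', 'en_c']
print(convex(lexical_scores, dense_scores, lexical_weight=0.3))
# -> ['yo_doc', 'en_a', 'en_b', 'en_c']
```

In this toy case RRF lets the degraded lexical list push the dense channel’s top hit down to third place. The convex combination does no better at equal weight; it only exposes a knob (lexical_weight here) that someone could choose to tune per language. Neither method notices on its own that one of its inputs was degraded.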

This is the quiet asymmetry. Each component is defensible in isolation. BM25 is a respected algorithm. RRF is a clean fusion method. Weaviate and Qdrant are honest about their tokenizer options in the documentation. But the pipeline’s emergent behavior — the fact that querying in a low-resource language produces systematically weaker retrieval than querying the same idea in English — is not visible in any single component. It lives in the assembly. And the assembly was built without a multilingual fairness budget that anyone is required to defend.

What We Owe the Languages We Index

So what do we do? Probably not the satisfying thing — the proclamation, the ban, the regulatory hammer. Probably the slower thing: treat language coverage as a first-class quality metric in retrieval evaluations, alongside latency and recall. Surface tokenizer choices in product documentation as design decisions, not implementation details. Audit hybrid pipelines against multilingual benchmarks like MIRACL before approving them for general release, and report per-language recall openly. The Yang et al. paper proposes LaKDA, a language-aware loss for neural rankers that enforces ranking consistency across semantically equivalent multilingual queries — an example of the kind of structural correction that lives at the model layer, not as a fusion afterthought.
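Reporting per-language recall does not require new infrastructure. The following is a minimal sketch, assuming MIRACL-style relevance judgments (each query id mapped to the set of relevant document ids) and one retrieval run per language (each query id mapped to a ranked list). The function names, the toy data, and the 0.1 gap threshold are all hypothetical choices, not a standard.

```python
def recall_at_k(run: dict[str, list[str]], qrels: dict[str, set[str]],
                k: int = 10) -> float:
    # Mean over judged queries of |relevant & top-k| / |relevant|.
    per_query = []
    for qid, relevant in qrels.items():
        if not relevant:
            continue
        top_k = set(run.get(qid, [])[:k])
        per_query.append(len(top_k & relevant) / len(relevant))
    return sum(per_query) / len(per_query) if per_query else 0.0


def per_language_report(runs_by_lang: dict[str, dict[str, list[str]]],
                        qrels_by_lang: dict[str, dict[str, set[str]]],
                        k: int = 10, max_gap: float = 0.1):
    recalls = {
        lang: recall_at_k(runs_by_lang.get(lang, {}), qrels, k)
        for lang, qrels in qrels_by_lang.items()
    }
    best = max(recalls.values())
    # Flag every language trailing the best-served one by more than max_gap.
    flagged = sorted(lang for lang, r in recalls.items() if best - r > max_gap)
    return recalls, flagged


qrels_by_lang = {
    "en": {"q1": {"d1", "d2"}},
    "yo": {"q1": {"d9", "d10"}},
}
runs_by_lang = {
    "en": {"q1": ["d1", "d2", "d3"]},
    "yo": {"q1": ["d3", "d9", "d4"]},
}
recalls, flagged = per_language_report(runs_by_lang, qrels_by_lang, k=3)
print(recalls)  # -> {'en': 1.0, 'yo': 0.5}
print(flagged)  # -> ['yo']
```

The specific metric and cutoff matter less than computing them for every language the index serves and publishing the flagged list alongside the averages.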

The harder questions are institutional. Whose name is on the configuration file when a Korean user gets worse results than an English user from the same hybrid system? Whose responsibility is it to budget engineering time for tokenizer audits in languages the team does not speak? Whose accountability is engaged when “the defaults shipped that way” is offered as an answer? These are not technical questions awaiting a clever fix. They are governance questions about what we owe the people whose words we have agreed to index.

Where This Argument Could Fail

If a future hybrid retrieval stack ships with truly language-agnostic tokenization by default — multilingual subword segmentation across both channels, with documented per-language recall parity in the evaluation suite — much of this critique loses its bite. If neural retrievers continue to close the gap on rare-term recall such that the case for the BM25 channel itself weakens, the architecture-level concern about lexical bias becomes a transitional problem rather than a structural one. And if regulators or buyers begin demanding multilingual fairness reports the way they demand security audits, the institutional mechanism this essay calls for will exist without further argument. None of those futures are guaranteed. None of them are impossible.

The Question That Remains

We have built retrieval systems that promise to find what is most relevant, then quietly defined relevance through a tokenizer tuned for English-like text, in a world of thousands of languages. The honest question is not whether hybrid search works. It is who counts as a user when “neutral defaults” are not neutral, and which silences in the index we have agreed to live with because measuring them would be inconvenient. Whose languages, in the end, do we owe a working search?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

Ethically, Alan.

AI-assisted content, human-reviewed. Images AI-generated.