How to Build a Retrieval-Augmented Agent with LangGraph, LlamaIndex, and CrewAI in 2026


TL;DR

- A retrieval-augmented agent is four contracts: when to retrieve, what to retrieve, what to do with the result, and how the agents are orchestrated. Specify each one in writing before you pick a framework.

- LangGraph, LlamaIndex, and CrewAI solve different parts of the same problem. Pick by the part you need, not by the logo on the README.

- Most “the agent hallucinated” incidents are missing-spec incidents. The agent retrieved when it shouldn’t have, or returned text when it should have called a tool.

A team ships an Agentic RAG prototype. It works on the demo. Two weeks in, the support inbox fills with “the bot ignored the manual” tickets. The retriever ran on every turn — including ones where the user asked what time it was. Cost tripled, latency doubled, and the answers got worse, not better.

That is not a model failure. That is a missing spec. Specifically, the spec for when retrieval is even allowed to fire.

This guide walks you through the four contracts a retrieval-augmented agent needs before you let any framework generate code. Then it tells you which of the three current production frameworks — LangGraph, LlamaIndex, or CrewAI — actually matches each contract.

Before You Start

You’ll need:

- An AI coding tool — Cursor, Claude Code, or Codex

- A working knowledge of vanilla RAG (indexing, embedding, retrieval, generation)

- A specific use case in mind — “enterprise knowledge search,” “compliance Q&A over policy PDFs,” or “internal API helpdesk.” Generic “build me an agent” prompts produce generic agents.

This guide teaches you: how to decompose a retrieval-augmented agent into four contracts — retrieval gate, retrieval scope, response policy, and orchestration shape — then specify each one to your AI coding tool so the generated code matches the framework’s idioms instead of fighting them.

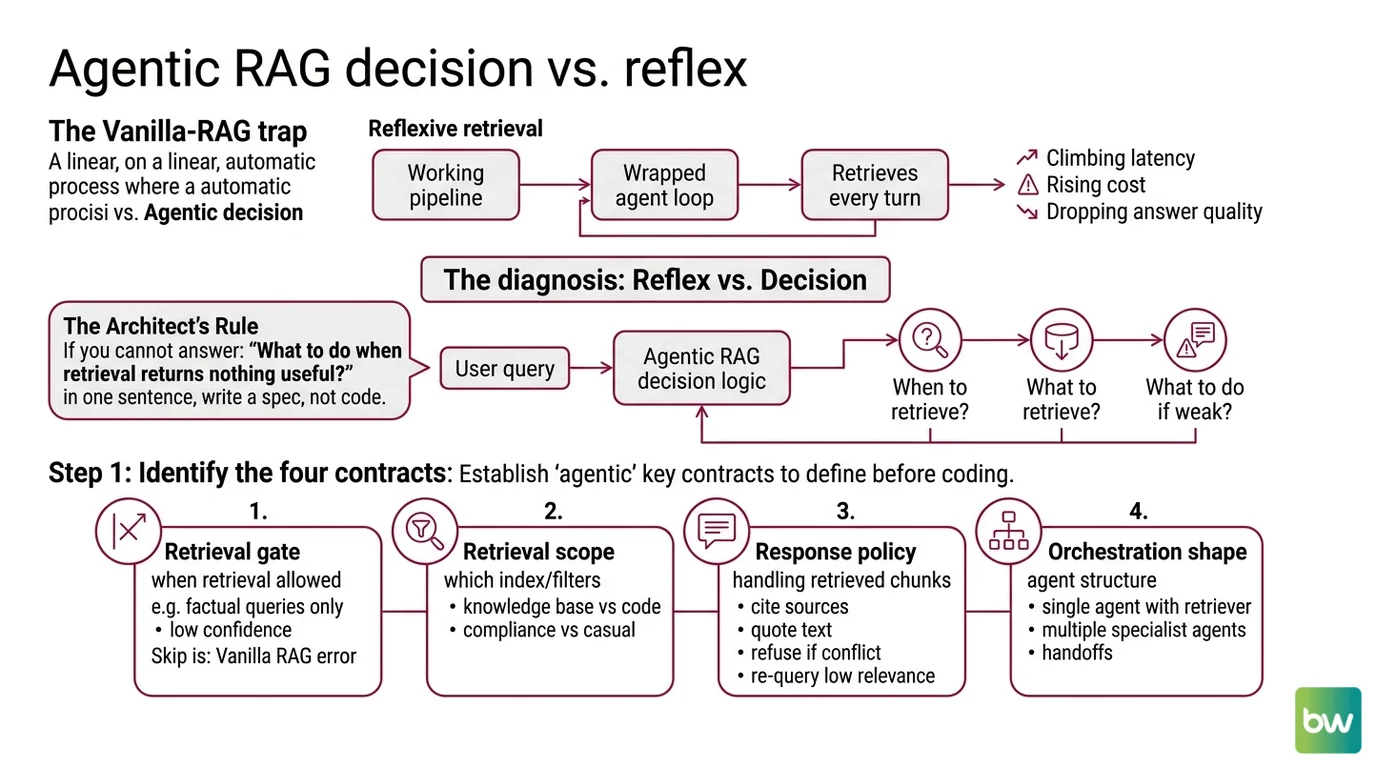

The Vanilla-RAG Trap

The bug pattern repeats. A team takes a working vanilla RAG pipeline, wraps it in an agent loop, and calls it “agentic.” The agent now retrieves on every single turn because the loop never specified an alternative. Latency climbs. Cost climbs. Answer quality drops because the retrieval is firing on conversational chit-chat that has no relevant documents.

It worked on Friday’s demo. On Monday, the same prompt produces three irrelevant citations because the user asked a follow-up the retriever can’t ground.

The diagnosis: the agent has retrieval as a reflex, not a decision. Vanilla RAG retrieves, period. Agentic RAG decides whether to retrieve, what to retrieve, and what to do if the retrieval is weak. That decision logic is the entire reason for the word “agentic.” Skip it and you’ve just added latency to vanilla RAG.

Step 1: Identify the Four Contracts

Before you let any framework generate a single line, write down four contracts. The contracts are framework-independent, but each of the three frameworks below handles a different one best.

Your retrieval-augmented agent has four contracts:

- Retrieval Gate — when retrieval is allowed to fire. Always? Only on factual queries? Only when confidence is low? This is the contract vanilla RAG skips entirely.

- Retrieval Scope — which index, which filters, which top-k. A knowledge-base query is not a code-search query. A compliance question is not a casual one.

- Response Policy — what the agent does with retrieved chunks. Cite them? Quote them? Refuse to answer if they conflict? Re-query if relevance is low?

- Orchestration Shape — single agent with one retriever, or multiple specialist agents handing off to each other.

The Architect’s Rule: If you cannot answer “what should the agent do when retrieval returns nothing useful?” in one sentence, you are not ready to write code. You are ready to write a spec.
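A minimal sketch of writing the four contracts down as data, so that a spec which cannot answer the Architect's Rule fails at startup instead of in production. AgentSpec, RetrievalScope, and ResponsePolicy are hypothetical names, not part of LangGraph, LlamaIndex, or CrewAI; the index name, filters, and thresholds are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Literal


@dataclass(frozen=True)
class RetrievalScope:
    index: str                                          # hypothetical index name
    filters: dict[str, str] = field(default_factory=dict)
    top_k: int = 5


@dataclass(frozen=True)
class ResponsePolicy:
    strong_threshold: float   # top score at or above this: answer with citations
    weak_threshold: float     # between weak and strong: re-query or escalate
    empty_response: str       # what the agent says when retrieval returns nothing useful


@dataclass(frozen=True)
class AgentSpec:
    gate_rule: str            # plain-language rule for when retrieval may fire
    scope: RetrievalScope
    policy: ResponsePolicy
    orchestration: Literal["single", "multi"] = "single"

    def __post_init__(self) -> None:
        # Thresholds must carve out three distinct states: strong, weak, empty.
        if not 0.0 <= self.policy.weak_threshold < self.policy.strong_threshold <= 1.0:
            raise ValueError("need 0 <= weak_threshold < strong_threshold <= 1")
        # The Architect's Rule: the empty-retrieval behavior must be written down.
        if not self.policy.empty_response.strip():
            raise ValueError("spec must say what happens when retrieval is empty")


spec = AgentSpec(
    gate_rule="retrieve only for factual questions about the policy corpus",
    scope=RetrievalScope(index="policy-pdfs", filters={"region": "EU"}, top_k=5),
    policy=ResponsePolicy(
        strong_threshold=0.75,
        weak_threshold=0.4,
        empty_response="I could not find this in the indexed policies.",
    ),
)
```

Freezing the dataclasses keeps the spec from drifting at runtime, and the checks in __post_init__ turn "we never decided what happens on empty retrieval" into an error before any agent code runs.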

The retrieval gate is where most teams go wrong. Vanilla RAG has no gate — it retrieves on every turn. An agentic system needs an explicit decision node. LangChain’s official agentic-RAG pattern names this node generate_query_or_respond — the LLM decides whether to call the retriever tool or answer directly, per LangChain Docs. That decision is the contract.
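In LangChain's pattern the LLM makes this decision by choosing whether to call the retriever tool. To test the gate contract in isolation, a deterministic stand-in with the same shape (query in, "retrieve" or "respond" out) is useful. The sketch below is plain Python, not LangGraph API; the small-talk pattern and the domain_terms set are illustrative assumptions.

```python
import re
from typing import Literal

Decision = Literal["retrieve", "respond"]

# Illustrative small-talk patterns that should never trigger retrieval.
SMALL_TALK = re.compile(r"^\s*(hi|hello|hey|thanks|thank you|ok|okay)[\s!.?]*$", re.IGNORECASE)


def retrieval_gate(query: str, domain_terms: set[str]) -> Decision:
    """Deterministic stand-in for the LLM's retrieve-or-respond decision."""
    if SMALL_TALK.match(query):
        return "respond"
    words = set(re.findall(r"[a-z0-9]+", query.lower()))
    # Retrieve only when the query mentions something the corpus actually covers.
    return "retrieve" if words & domain_terms else "respond"


DOMAIN = {"refund", "warranty", "invoice"}
print(retrieval_gate("hi!", DOMAIN))                         # respond
print(retrieval_gate("What is the refund window?", DOMAIN))  # retrieve
print(retrieval_gate("What time is it?", DOMAIN))            # respond
```

In a graph, the returned string would select the next node: the retriever on "retrieve", the answer node on "respond".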

Step 2: Lock Down the Framework Contract

Once the four contracts are written, the framework choice gets easier. Each of the three production frameworks specializes in one part of the four-contract system. Pick by which part you need most.

Context checklist for your AI coding tool:

- Target framework named explicitly with current version

- Retrieval gate logic stated as a precondition, not implied

- Retrieval scope (which index, filters, top-k) defined per query type

- Response policy stated for three cases: strong retrieval, weak retrieval, no retrieval

- Orchestration shape: single agent or multi-agent, and why

- Error handling pattern: what happens if the retriever times out, the index is empty, or the LLM produces an off-spec response

The framework split in 2026 is cleaner than it was a year ago. LangChain Docs describes LangGraph as the stateful workflow layer where you write the gate logic as an explicit graph node, with full control over retries and error handlers. The March 10, 2026 release added type-safe streaming and per-node timeouts, per LangChain’s changelog. That matters because retrieval timeouts are a common failure mode in production agentic RAG: a slow vector store can stall the entire agent loop.

LlamaIndex Docs frames AgentWorkflow as the event-driven primitive for document-grounded agents. The architecture LlamaIndex Blog recommends is per-document agents with embedding search plus summarization, with a top-level agent over the document agents performing tool retrieval and Chain-of-Thought reasoning. That maps directly to retrieval scope: each document agent owns its own retrieval contract.
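The per-document layout can be sketched without the framework to make the scope contract concrete: each document agent owns a scoped retriever, and a top-level step routes the query to one of them or to none. This is plain Python, not LlamaIndex's AgentWorkflow API; keyword overlap stands in for the top-level agent's tool retrieval, and the agent names and chunks are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

Chunk = tuple[str, float]  # (chunk text, relevance score)


@dataclass(frozen=True)
class DocumentAgent:
    name: str
    keywords: frozenset[str]                # hypothetical routing hint for this document
    retrieve: Callable[[str], list[Chunk]]  # this agent's own scoped retriever


def route(query: str, agents: list[DocumentAgent]) -> Optional[DocumentAgent]:
    """Top-level step: pick the document agent whose keywords overlap the query most."""
    words = set(re.findall(r"[a-z0-9]+", query.lower()))
    scored = [(len(words & agent.keywords), agent) for agent in agents]
    scored = [pair for pair in scored if pair[0] > 0]
    if not scored:
        return None  # no document agent owns this query
    return max(scored, key=lambda pair: pair[0])[1]


def retrieve_in_scope(query: str, agents: list[DocumentAgent]) -> list[Chunk]:
    agent = route(query, agents)
    return agent.retrieve(query) if agent else []


hr = DocumentAgent("hr-handbook", frozenset({"leave", "vacation", "benefits"}),
                   lambda q: [("Employees accrue two days of leave per month.", 0.82)])
security = DocumentAgent("security-policy", frozenset({"password", "mfa", "vpn"}),
                         lambda q: [("MFA is required for VPN access.", 0.91)])
agents = [hr, security]
```

Returning None when no agent matches is the scope contract's version of the gate: an unowned query gets no retrieval instead of a best-effort search across every document.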

CrewAI Docs positions Crews as role-based multi-agent teams. CrewAI v1.14.0, released April 7, 2026, added path and URL validation on RAG tools — a direct response to prompt-injection vectors in agentic retrieval, per CrewAI’s changelog. Crews handle the orchestration shape contract well when you want to model retrieval as one role and synthesis as another.
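The following is a generic standard-library sketch of that kind of input check, not CrewAI's implementation: resolve a requested path and reject anything outside the corpus root, and accept only HTTPS URLs on an allowlisted host. ALLOWED_ROOT and ALLOWED_HOSTS are hypothetical values.

```python
from pathlib import Path
from urllib.parse import urlparse

ALLOWED_ROOT = Path("/srv/knowledge-base").resolve()  # hypothetical corpus root
ALLOWED_HOSTS = {"docs.example.com"}                  # hypothetical URL allowlist


def validate_path(requested: str) -> Path:
    """Resolve a tool-supplied path and refuse anything outside the corpus root."""
    candidate = (ALLOWED_ROOT / requested).resolve()
    # resolve() collapses "..", and an absolute input replaces the root entirely,
    # so both traversal styles end up outside ALLOWED_ROOT and are rejected here.
    if not candidate.is_relative_to(ALLOWED_ROOT):  # Python 3.9+
        raise ValueError(f"path escapes corpus root: {requested!r}")
    return candidate


def validate_url(requested: str) -> str:
    """Allow only HTTPS URLs whose parsed hostname is on the allowlist."""
    parsed = urlparse(requested)
    if parsed.scheme != "https" or parsed.hostname not in ALLOWED_HOSTS:
        raise ValueError(f"URL not allowed: {requested!r}")
    return requested
```

The check uses parsed.hostname rather than a prefix match on the raw string because of the userinfo trick: in "https://docs.example.com@evil.example.net/", urlparse reports evil.example.net as the host.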

The Spec Test: If your context says “use LangGraph” but never names which node owns the retrieval gate, the AI will produce a graph that retrieves on every turn. The framework didn’t fail. The spec failed.

Step 3: Wire the Components in the Right Order

The build order matters because every other component depends on the retriever’s interface. Build retrieval last, and you will rewire half the agent when that interface doesn’t match what the gate node expected.

Build order:

- Retrieval layer first — index, embeddings, retriever interface. No agent yet. Just the contract: query in, ranked chunks out. Verify it works against a fixed test set before any LLM touches it.

- Gate node second — the decision logic. In LangGraph, that’s a graph node returning either a tool call or a direct response. In LlamaIndex, it’s a workflow step. In CrewAI, it’s the agent’s role description plus tool descriptions.

- Response policy third — the logic that consumes retrieved chunks. Strong retrieval: answer with citations. Weak retrieval: re-query or escalate. No retrieval: refuse or fall back to general knowledge with an explicit warning.

- Orchestration shape last — single agent or multi-agent. Defer this decision until the first three layers are stable. Premature multi-agent orchestration is the second-most-common failure mode after the missing retrieval gate.
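The first layer can be verified with nothing but the standard library. The sketch below uses keyword overlap as a stand-in for embedding similarity (an assumption: your real retriever would sit behind the same retrieve method) and measures hit rate on a fixed test set of (query, expected chunk) pairs before any LLM is involved. The corpus and chunk IDs are made up for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KeywordRetriever:
    """Toy retriever with the layer-one contract: query in, ranked (chunk_id, score) out."""

    def __init__(self, chunks: dict[str, str]) -> None:
        self._tokens = {cid: tokenize(text) for cid, text in chunks.items()}

    def retrieve(self, query: str, top_k: int = 5) -> list[tuple[str, float]]:
        q = tokenize(query)
        scored = []
        for cid, tokens in self._tokens.items():
            overlap = len(q & tokens)
            if overlap:
                scored.append((cid, overlap / len(q)))  # fraction of query terms matched
        scored.sort(key=lambda pair: (-pair[1], pair[0]))  # best score first, ties by ID
        return scored[:top_k]


def hit_rate(retriever: KeywordRetriever, test_set: list[tuple[str, str]], top_k: int = 5) -> float:
    """Fraction of (query, expected_chunk_id) pairs whose expected chunk is in the top k."""
    hits = sum(
        expected in [cid for cid, _ in retriever.retrieve(query, top_k)]
        for query, expected in test_set
    )
    return hits / len(test_set)


corpus = {
    "leave-1": "Employees accrue two days of paid leave per month.",
    "vpn-1": "Multi-factor authentication is required for VPN access.",
    "expense-1": "Submit expense reports within 30 days of purchase.",
}
tests = [
    ("How much paid leave do employees get?", "leave-1"),
    ("Is VPN access protected by multi-factor authentication?", "vpn-1"),
]
retriever = KeywordRetriever(corpus)
print(hit_rate(retriever, tests, top_k=1))  # 1.0
```

Keeping this test set fixed means any later change to chunking, embeddings, or filters shows up as a number before it shows up as a support ticket.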

For each component, your context must specify:

- What it receives — query string, chat history, retrieval scope hint

- What it returns — chunks with scores, decisions with reasoning, responses with citations

- What it must NOT do — silently fail, hallucinate citations, retrieve when the gate says no

- How to handle failure — timeout returns “retrieval unavailable,” empty index returns “no documents indexed for this scope”
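A minimal sketch of the failure-handling item using only the standard library: a single retrieval attempt bounded by a timeout, explicit error values instead of silent failure, and the two messages from the list above. guarded_retrieve, RetrievalResult, and the indexed_count parameter are hypothetical names; inside LangGraph you would more likely rely on the per-node timeouts described in Step 2 than on a thread pool.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from dataclasses import dataclass, field
from typing import Callable, Optional

RETRIEVAL_UNAVAILABLE = "retrieval unavailable"
NO_DOCUMENTS = "no documents indexed for this scope"

_pool = ThreadPoolExecutor(max_workers=4)


@dataclass
class RetrievalResult:
    chunks: list[str] = field(default_factory=list)
    error: Optional[str] = None  # set explicitly instead of failing silently


def guarded_retrieve(retrieve: Callable[[str], list[str]], query: str,
                     indexed_count: int, timeout_s: float = 2.0) -> RetrievalResult:
    """One attempt, bounded by a timeout; deliberately no retry loop."""
    if indexed_count == 0:
        return RetrievalResult(error=NO_DOCUMENTS)
    future = _pool.submit(retrieve, query)
    try:
        return RetrievalResult(chunks=future.result(timeout=timeout_s))
    except FutureTimeout:
        # The worker thread keeps running until the call returns; we only stop waiting.
        return RetrievalResult(error=RETRIEVAL_UNAVAILABLE)
    except Exception as exc:  # surface retriever errors explicitly
        return RetrievalResult(error=f"retrieval failed: {exc}")


def slow_retriever(query: str) -> list[str]:
    time.sleep(0.5)  # simulates a stalled vector DB
    return ["never returned in time"]


print(guarded_retrieve(slow_retriever, "refund policy", indexed_count=10, timeout_s=0.1).error)
print(guarded_retrieve(lambda q: ["chunk"], "refund policy", indexed_count=0).error)
```

The first call prints "retrieval unavailable" and the second prints "no documents indexed for this scope"; the response-policy layer can branch on result.error without ever seeing an exception.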

The pattern LangChain Docs describes — nodes for retrieval, document grading, query rewriting, and response generation — is not a coincidence. It is the four-contract system rendered as a graph. If you specify the contracts first, the graph almost writes itself.
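The same four nodes can be written as plain functions to show the control flow the graph encodes: retrieve, grade the top score against the response-policy thresholds, rewrite the query within a fixed budget, then generate or refuse. This is a sketch, not LangGraph code; the thresholds, the rewrite budget, and the callables passed in are assumptions you would replace with your spec's values and real components.

```python
from typing import Callable

Chunk = tuple[str, float]  # (chunk text, relevance score)


def run_agentic_rag(
    question: str,
    retrieve: Callable[[str], list[Chunk]],
    rewrite: Callable[[str], str],
    generate: Callable[[str, list[Chunk]], str],
    strong: float = 0.75,
    weak: float = 0.4,
    max_rewrites: int = 1,
    refusal: str = "I could not find this in the indexed documents.",
) -> str:
    query = question
    for attempt in range(max_rewrites + 1):
        chunks = retrieve(query)                                 # retrieval node
        top = max((score for _, score in chunks), default=0.0)   # grading node
        if top >= strong:
            # Strong retrieval: generate only from chunks that clear the weak bar.
            return generate(question, [c for c in chunks if c[1] >= weak])
        if top < weak or attempt == max_rewrites:
            break  # empty retrieval, or weak retrieval with the rewrite budget spent
        query = rewrite(query)                                   # query-rewrite node
    return refusal


results = {
    "leave policy": [("Leave accrues monthly.", 0.5)],
    "paid leave accrual policy": [("Leave accrues at two days per month.", 0.9)],
}
answer = run_agentic_rag(
    "leave policy",
    retrieve=lambda q: results.get(q, []),
    rewrite=lambda q: "paid leave accrual policy",
    generate=lambda q, chunks: f"{chunks[0][0]} [1]",
)
print(answer)  # Leave accrues at two days per month. [1]
```

Bounding the loop with max_rewrites is what keeps weak retrieval from turning into an unbounded re-query loop; when the budget runs out, the agent refuses instead of citing the weak chunk.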

Step 4: Validate Against the Failure Modes

The validation criteria for an agentic RAG system are not “does it answer questions correctly.” They are “does it fail correctly when it should.” A system that always answers will fabricate an answer whenever the index has nothing relevant.

Validation checklist:

- Retrieval gate fires on factual queries, stays silent on conversational ones — failure looks like: every turn retrieves, including “hi” and “thanks.”

- Weak retrieval triggers a re-query or refusal — failure looks like: low-relevance chunks get cited as if they were strong matches.

- Citations point to retrieved chunks, not invented ones — failure looks like: the citation text doesn’t appear in the retrieved context.

- The agent refuses to answer when retrieval is empty and the question is out-of-scope — failure looks like: confident fabrication when the index has nothing.

- Timeouts produce a graceful error, not a stuck loop — failure looks like: the agent retries the retriever indefinitely when the vector DB is down.
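The citation check is the easiest of the five to automate. A minimal sketch, assuming citations are quoted passages and that "grounded" means the passage appears in some retrieved chunk after case and whitespace normalization; paraphrased citations would need a fuzzier comparison than this substring test.

```python
def _normalize(text: str) -> str:
    # Case- and whitespace-insensitive comparison; punctuation is kept as-is.
    return " ".join(text.lower().split())


def ungrounded_citations(citations: list[str], retrieved_chunks: list[str]) -> list[str]:
    """Return cited passages that do not appear in any retrieved chunk."""
    haystacks = [_normalize(chunk) for chunk in retrieved_chunks]
    return [c for c in citations if not any(_normalize(c) in h for h in haystacks)]


retrieved = ["Employees accrue  two days of paid leave per month."]
cited = ["accrue two days of paid leave", "Contractors receive ten days of leave."]
print(ungrounded_citations(cited, retrieved))  # ['Contractors receive ten days of leave.']
```

A non-empty return value is a failing test case: the answer cited text the retriever never produced.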

Security & compatibility notes:

- LangGraph RCE (CVE-2026-27794): Remote code execution in checkpoint deserialization. Fix: upgrade `langgraph-checkpoint` to 4.0.0; affected versions are below 3.0, per NVD.

- LangGraph SQL injection (CVE-2025-67644): The SQLite checkpoint implementation accepts metadata filter keys without sanitization, per The Hacker News. Patched in current builds; pin versions explicitly.

- LangGraph ToolNode breaking change: `langgraph-prebuilt` 1.0.2 added a required `runtime` parameter to `ToolNode.afunc`, breaking code that overrides `afunc`. Pin to a known-good version and update overrides.

- LangChain Core secrets exposure (CVE-2025-68664): An unsafe JSON serialization fallback exposed secrets via serialization injection. Removed in current versions, per NVD; verify your `langchain-core` pin.

- LlamaIndex deprecation: Pre-2025 tutorials using `OpenAIAgent` and `ReActAgent` are superseded by `AgentWorkflow` and `FunctionAgent`. Use Workflows 1.0 patterns or your generated code will reference removed APIs, per LlamaIndex Blog.

These notes are not optional. Three of the five are CVEs, and the two warnings will produce import errors or silent behavior changes if your AI tool generates code against old patterns. Include them in the context file you hand to your coding agent.
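One way to make the pins enforceable is a startup check that reads installed versions with importlib.metadata and compares them to the minimums from these notes. The comparison below is deliberately naive: it compares numeric release segments only and ignores pre-release suffixes, so "4.0.0rc1" would pass as 4.0.0. In practice packaging.version.Version is the safer comparator. Only the langgraph-checkpoint floor comes from the notes; add other floors as you confirm them.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Only floor stated in the security notes; extend as you confirm other pins.
MINIMUMS = {"langgraph-checkpoint": "4.0.0"}


def release_tuple(v: str) -> tuple[int, ...]:
    """Naive parse of leading numeric segments: '4.1.0rc1' -> (4, 1, 0)."""
    nums: list[int] = []
    for piece in v.split("."):
        m = re.match(r"\d+", piece)
        if not m:
            break
        nums.append(int(m.group()))
        if m.end() != len(piece):
            break  # suffix such as 'rc1' or 'post1': stop comparing here
    return tuple(nums)


def at_least(installed: str, minimum: str) -> bool:
    a, b = release_tuple(installed), release_tuple(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so '4' compares equal to '4.0.0'.
    return a + (0,) * (width - len(a)) >= b + (0,) * (width - len(b))


def check_pins(minimums: dict[str, str]) -> dict[str, str]:
    report = {}
    for package, minimum in minimums.items():
        try:
            installed = version(package)
        except PackageNotFoundError:
            report[package] = "not installed"
            continue
        report[package] = "ok" if at_least(installed, minimum) else f"{installed} < {minimum}: upgrade"
    return report


print(check_pins(MINIMUMS))
```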

Common Pitfalls

| What You Did | Why AI Failed | The Fix |
|---|---|---|
| Said “build an agentic RAG system” with no gate spec | AI defaulted to retrieve-every-turn, the vanilla pattern | Specify the retrieval gate as the first node. State when it fires and when it doesn’t. |
| Named the framework but not the version | AI generated code against pre-Workflows-1.0 LlamaIndex or pre-1.x CrewAI APIs | Pin the version explicitly: langgraph==1.1.10, llama-index-core on the 0.14.x line, crewai==1.14.0 |
| Asked for multi-agent without specifying handoff | AI invented a hierarchy that retrieves the same documents three times | Define the handoff contract first. Single agent until the second agent has a distinct role and a distinct retrieval scope. |
| Skipped the response policy for weak retrieval | AI generated code that cites low-score chunks as if they were authoritative | Spec all three retrieval states: strong, weak, empty. Each gets a different response branch. |
| No timeout on the retriever | AI generated happy-path code that hangs when the vector DB is slow | Use LangGraph’s per-node timeouts (March 2026 release) or equivalent. State the timeout as a constraint in the spec. |

Pro Tip

Frameworks are not religions. The 2026 production pattern, per Knowlee Blog, is composition — teams use LangGraph for control flow plus LlamaIndex for retrieval plus CrewAI or another layer for role abstractions. No monoliths. If your spec assumes you must pick one, your spec is the problem.

The mental shift: each framework is a specialization. LangGraph specializes in stateful graphs with explicit control. LlamaIndex specializes in document-grounded retrieval. CrewAI specializes in role-based multi-agent prototyping. A single retrieval-augmented agent might use all three — LangGraph as the outer loop, LlamaIndex as the retriever inside one node, CrewAI for the workflow that built the index in the first place. The contracts are stable. The implementations compose.
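Composition stays cheap when each boundary is a narrow interface. A sketch under that assumption: the outer loop depends only on a Retriever protocol, and the retrieval node takes a state dict and returns a partial state update, which is the node shape LangGraph uses. No framework is imported here; StaticRetriever and make_retrieval_node are hypothetical names, and putting a real LlamaIndex retriever behind the protocol would take a small adapter.

```python
from typing import Protocol, TypedDict


class AgentState(TypedDict, total=False):
    question: str
    chunks: list[tuple[str, float]]


class Retriever(Protocol):
    """The only thing the outer loop needs to know about retrieval."""

    def retrieve(self, query: str, top_k: int) -> list[tuple[str, float]]: ...


class StaticRetriever:
    """Hypothetical adapter; a LlamaIndex-backed retriever could sit behind the same method."""

    def __init__(self, chunks: list[tuple[str, float]]) -> None:
        self._chunks = chunks

    def retrieve(self, query: str, top_k: int) -> list[tuple[str, float]]:
        # Ignores the query; returns the highest-scoring chunks for illustration.
        return sorted(self._chunks, key=lambda c: c[1], reverse=True)[:top_k]


def make_retrieval_node(retriever: Retriever, top_k: int = 5):
    """Build a node that reads the question from state and returns a partial state update."""

    def retrieval_node(state: AgentState) -> AgentState:
        return {"chunks": retriever.retrieve(state["question"], top_k)}

    return retrieval_node


node = make_retrieval_node(StaticRetriever([("a", 0.2), ("b", 0.9), ("c", 0.5)]), top_k=2)
print(node({"question": "What is the leave policy?"}))  # {'chunks': [('b', 0.9), ('c', 0.5)]}
```

Swapping the retrieval implementation then means writing a new class with a retrieve method; the graph, the gate, and the response policy stay untouched.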

Frequently Asked Questions

Q: How do you build a retrieval-augmented agent step by step in 2026? A: Write the four contracts first: retrieval gate, scope, response policy, orchestration. Then pick the framework that matches your dominant contract: LangGraph for control, LlamaIndex for document grounding, CrewAI for roles. Build retrieval before agents, because premature multi-agent designs hide the gate.

Q: How do you use retrieval-augmented agents for enterprise knowledge search? A: Use per-document agents with their own retrieval scope, then a top-level agent that routes queries to the right one, per LlamaIndex Blog. Watch out for per-document agents that all index the same source. Deduplicate at the corpus level or you pay for the same retrieval three times.
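Corpus-level deduplication can be as simple as hashing whitespace-normalized chunk text before it reaches any per-document index. A minimal sketch, assuming chunks are already split and that only exact duplicates matter; near-duplicates such as lightly edited copies need a fuzzier technique than a content hash. The agent names are hypothetical.

```python
import hashlib


def content_key(text: str) -> str:
    # Whitespace-normalized SHA-256, so re-wrapped copies of a chunk collide.
    return hashlib.sha256(" ".join(text.split()).encode("utf-8")).hexdigest()


def dedupe_across_agents(sources: dict[str, list[str]]) -> dict[str, list[str]]:
    """Keep each distinct chunk once across all per-document agents; first owner wins."""
    seen: set[str] = set()
    deduped: dict[str, list[str]] = {}
    for agent_name, chunks in sources.items():
        kept = []
        for chunk in chunks:
            key = content_key(chunk)
            if key not in seen:
                seen.add(key)
                kept.append(chunk)
        deduped[agent_name] = kept
    return deduped


sources = {
    "hr-handbook": ["Leave accrues monthly.", "Benefits start on day one."],
    "policy-archive": ["Leave  accrues\nmonthly.", "Expense reports are due in 30 days."],
}
print(dedupe_across_agents(sources))
```

Here the re-wrapped leave chunk in policy-archive is dropped because hr-handbook already owns it, so only one agent pays to embed and retrieve it.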

Q: When should you use an agentic RAG framework instead of vanilla RAG? A: When the answer to “should I retrieve right now?” is sometimes no. Vanilla RAG is correct when every query needs retrieval. Agentic RAG fits when retrieval is conditional, scope shifts per query, or weak retrieval needs to trigger a re-query or refusal.

Your Spec Artifact

By the end of this guide, you should have:

- A four-contract document — retrieval gate, retrieval scope, response policy, orchestration shape — written before any code

- A framework selection rationale that names which contract each chosen framework owns, with version pins

- A validation checklist of the five failure modes your system must handle correctly before you ship it

Your Implementation Prompt

Paste this into Claude Code, Cursor, or Codex when you’re ready to generate the first scaffold. Fill the bracketed values from your four-contract document. The placeholders follow the Step 2 checklist, so every bracket is a decision you have already made.

Generate a retrieval-augmented agent in Python using [framework: langgraph==1.1.10 | llama-index-core==0.14.21 | crewai==1.14.0].

Four contracts (the spec — do not deviate):

1. Retrieval gate: retrieve only when [condition, e.g., "the user query contains a factual claim or named entity from the corpus domain"]. Skip retrieval when [condition, e.g., "the query is conversational, a greeting, or a clarification of the previous response"].

2. Retrieval scope:

- Index: [name + embedding model]

- Filters: [metadata filters per query type]

- Top-k: [number, default 5]

3. Response policy:

- Strong retrieval (top score > [threshold]): answer with inline citations to retrieved chunks

- Weak retrieval (top score between [low] and [threshold]): re-query with [rewrite strategy] or escalate

- Empty retrieval (no chunks returned, or top score below [low]): respond with [refusal text] and do not invent citations

4. Orchestration shape: [single agent | multi-agent with handoff from {role A} to {role B}]

Error handling:

- Retriever timeout after [N] seconds returns "retrieval unavailable" — no retry loop

- Empty index returns "no documents indexed for this scope"

- LLM off-spec response triggers [validation step]

Security constraints:

- Pin langgraph-checkpoint to 4.0.0+ (CVE-2026-27794)

- Validate retrieval tool inputs (path/URL injection)

- Do not use deprecated LlamaIndex OpenAIAgent or ReActAgent — use AgentWorkflow

Validation: produce a test script that exercises all five failure modes from the spec's validation checklist.

Ship It

You now have a four-contract mental model that turns “build an agentic RAG system” into a decomposition the AI can actually generate. The framework choice stops being a religion and starts being a question of which contract you care about most. Once the contracts are written, the version pins and security caveats are the boring-but-critical layer that keeps the system shippable instead of CVE-shaped.

AI-assisted content, human-reviewed. Images AI-generated.