Build a Hybrid Search Pipeline: BM25, SPLADE-v3 + RRF in 2026

TL;DR

- Sparse Retrieval catches the exact tokens dense vectors miss — error codes, SKUs, version strings, surnames.

- SPLADE-v3 learns sparse expansions, so the lexical lane gets paraphrase coverage without losing token-level precision.

- Reciprocal Rank Fusion merges BM25, SPLADE-v3, and a dense ranker on ranks alone — no score normalization, one configurable constant.

A user types “ERR_OOM_42 reproduction” into your support search. Your dense embedding model returns three articles about “memory issues” and zero matches for the literal error code. The model didn’t fail. Your retrieval architecture did. It only had one lane.

Before You Start

You’ll need:

- Pyserini installed in a Python 3.12+ environment (the April 2026 release dropped 3.11 support, per Pyserini PyPI)

- A working understanding of term frequency and TF-IDF

- A passing familiarity with dense retrieval and how it differs from lexical search

- A corpus you control — chunked, ID’d, and ready to index three different ways

This guide teaches you how to decompose a retrieval system into independent lanes, specify the contract between them, and fuse their rankings into a single result list that beats any lane alone.

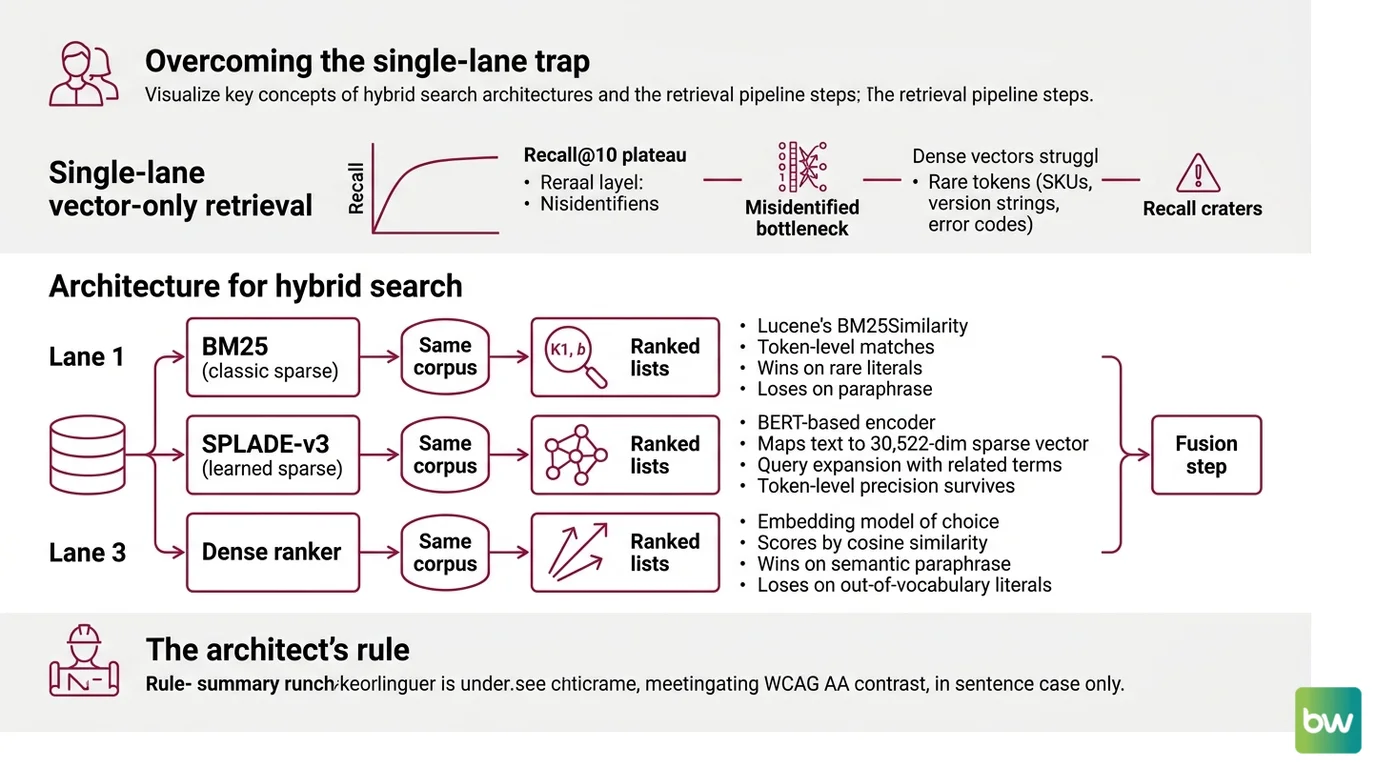

The Single-Lane Trap That Costs You Recall

Teams ship a vector-only retriever, watch recall@10 plateau in evals, and conclude the embedding model is the bottleneck. It usually isn’t. The bottleneck is that one lane can’t catch every kind of query at once. Dense vectors handle paraphrase well. They lose on rare tokens — SKUs, library names, version strings, surnames, error codes. Sparse retrieval catches those, but loses on synonym queries.

That’s the case for Hybrid Search — treat it as an architecture choice with a known failure-mode mapping, not a hedge against picking the “wrong” retriever.

The vector-only retriever worked on Friday. On Monday, the team added a product catalog with model numbers, and recall on “DK-2200 v3 firmware” cratered because the embedding model had never seen that token.

Step 1: Identify the Three Retrieval Lanes

You’re not building one retriever. You’re building three, then combining them. Each lane has a different failure mode and a different recovery zone. The job of decomposition is to keep them independent enough that one lane’s weakness never silently corrupts another lane’s strength.

Your pipeline has these parts:

- BM25 (classic sparse): Lucene’s BM25Similarity with default `k1=1.2, b=0.75`, per the Pyserini Docs. Token-level matches on the surface form. Wins on rare literals. Loses on paraphrase.

- SPLADE-v3 (learned sparse): Naver’s BERT-based encoder that maps text into a 30,522-dim sparse vector over the WordPiece vocabulary, per Hugging Face. Each query gets expanded with related terms, but the index is still inverted, so token-level precision survives.

- Dense ranker: your embedding model of choice, scoring by cosine similarity in a learned vector space. Wins on semantic paraphrase. Loses on out-of-vocabulary literals.

Each lane runs against the same corpus, returns its own top-k ranked list, and hands those lists to a fusion step. None of them know about the others.

The Architect’s Rule: If you can’t draw the three lanes on a whiteboard with the same input arrow and three separate output arrows, you don’t have a hybrid pipeline. You have one ranker with a few extra opinions.
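One way to keep the lanes independent in code is to give them a shared interface and nothing else. The sketch below is illustrative only: `Lane`, `StaticLane`, and `run_lanes` are hypothetical names, and the toy lane returns a fixed ordering where a real lane would query its own index.

```python
from typing import Protocol

# (doc_id, rank) pairs; rank starts at 1.
RankedList = list[tuple[str, int]]


class Lane(Protocol):
    """Anything that turns a raw query string into a ranked list."""

    name: str

    def retrieve(self, query: str, k: int) -> RankedList: ...


class StaticLane:
    """Toy lane backed by a fixed ordering; stands in for a real index."""

    def __init__(self, name: str, ordering: list[str]) -> None:
        self.name = name
        self._ordering = ordering

    def retrieve(self, query: str, k: int) -> RankedList:
        # The toy ignores the query; a real lane would search its own index here.
        top = self._ordering[:k]
        return [(doc_id, rank) for rank, doc_id in enumerate(top, start=1)]


def run_lanes(lanes: list[Lane], query: str, k: int) -> dict[str, RankedList]:
    """Same input for every lane, one output per lane, no shared state."""
    return {lane.name: lane.retrieve(query, k) for lane in lanes}
```

Calling `run_lanes([bm25, splade, dense], query, k=100)` with three objects of this shape yields one ranked list per lane name, which is the whiteboard picture in code form: one input arrow, three output arrows.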

Step 2: Lock Down the Contract Between Retrievers

The fusion step needs uniform inputs. Specify the contract before you write any indexing code, or you’ll spend a week debugging score scales that should never have been compared in the first place.

Context checklist:

- Document IDs are stable strings, identical across all three indexes

- Each lane returns top-k as `(doc_id, rank)` pairs, with ranks starting at 1, not 0

- Top-k value is the same for every lane (typical: 100 or 1000)

- Tokenizer for BM25 is documented (Lucene’s standard analyzer or your custom one)

- SPLADE-v3 query encoding uses `naver/splade-v3` with no fine-tuning unless your eval justifies it

- Dense lane uses one fixed embedding model and one fixed similarity metric

The Spec Test: Ranks-only is non-negotiable. If you can’t answer “what does each lane return?” in one sentence per lane, your fusion step will silently default to whichever lane has the largest raw scores. Reciprocal rank fusion only works if the contract is ranks-only — feed it raw scores and it stops being RRF.
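The contract is easier to enforce when it is executable. The following is a minimal sketch of a check you might run on each lane's output before fusion; `check_lane_output` is a hypothetical helper, and the specific rules (string IDs, ranks counting up from 1, no duplicates, at most k results) are one reading of the checklist above.

```python
def check_lane_output(ranked: list[tuple[str, int]], k: int) -> None:
    """Raise ValueError if a lane's output breaks the ranks-only contract."""
    if len(ranked) > k:
        raise ValueError(f"lane returned {len(ranked)} results, more than k={k}")

    seen: set[str] = set()
    for position, (doc_id, rank) in enumerate(ranked, start=1):
        if not isinstance(doc_id, str):
            raise ValueError(f"doc_id {doc_id!r} is not a string")
        # Ranks must be 1, 2, 3, ... in list order; a 0-based list fails here.
        if rank != position:
            raise ValueError(f"expected rank {position}, got {rank} for {doc_id!r}")
        if doc_id in seen:
            raise ValueError(f"duplicate doc_id {doc_id!r}")
        seen.add(doc_id)
```

Running this on every lane in your eval harness catches off-by-one ranks and mismatched ID types before they show up as unexplained fusion results.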

Step 3: Wire BM25, SPLADE-v3, and RRF in the Right Order

Build order matters because each lane has different setup cost and different debug surface. Get BM25 working end-to-end before you touch a learned encoder. The rare-token failures you’ll see in BM25 are the ones SPLADE-v3 has to recover, and you can’t tune the recovery if you can’t see the original gap.

Build order:

- BM25 baseline first: Index the corpus with Pyserini, then query it through `LuceneSearcher`. Run your eval set. Record recall@10 and your RAG evaluation scores per query. This is your floor.

- SPLADE-v3 second: Encode the corpus with `naver/splade-v3`, then query through Pyserini’s `LuceneImpactSearcher`, the API designed for learned sparse models per the Pyserini Docs. Run the same eval set. Compare per-query, not just aggregate.

- Dense lane third: Add your embedding model with whatever dense index you already trust. Run the same eval set again.

- RRF fusion last: Take ranked lists from all three lanes and combine them with the formula from the original Cormack, Clarke, and Büttcher paper: `score(d) = Σ over rankers r of 1/(k + rank_r(d))`, with `k=60`. Re-rank by combined score.

For each lane, your context must specify:

- What it receives: raw query string (no preprocessing tricks one lane doesn’t share)

- What it returns: ordered list of `(doc_id, rank)` tuples, length k

- What it must NOT do: normalize scores, drop ties silently, or deduplicate against another lane

- How to handle failure: if a lane returns fewer than k results, fusion still runs — missing docs simply don’t appear in that lane’s contribution
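The two sparse lanes could be adapted to that contract roughly as follows. This is a sketch under stated assumptions: `hits_to_ranked`, `bm25_lane`, and `splade_lane` are hypothetical helper names, the index directories are yours to supply, and passing the `naver/splade-v3` model name straight to `LuceneImpactSearcher` as the query encoder is an assumption to verify against the Pyserini docs for your installed version.

```python
from typing import Iterable


def hits_to_ranked(hits: Iterable, k: int) -> list[tuple[str, int]]:
    """Convert Pyserini-style hits (objects with a .docid) to (doc_id, rank) pairs.

    Scores are dropped on purpose. A short list is passed through unpadded,
    so fusion still runs when a lane returns fewer than k results.
    """
    ranked = []
    for rank, hit in enumerate(hits, start=1):
        if rank > k:
            break
        ranked.append((str(hit.docid), rank))
    return ranked


def bm25_lane(index_dir: str, query: str, k: int = 100) -> list[tuple[str, int]]:
    # Imported lazily so hits_to_ranked works without Pyserini installed.
    from pyserini.search.lucene import LuceneSearcher

    searcher = LuceneSearcher(index_dir)
    # Set the parameters explicitly rather than relying on library defaults.
    searcher.set_bm25(1.2, 0.75)
    return hits_to_ranked(searcher.search(query, k=k), k)


def splade_lane(index_dir: str, query: str, k: int = 100) -> list[tuple[str, int]]:
    from pyserini.search.lucene import LuceneImpactSearcher

    # Assumption: the model name is accepted as the query encoder here.
    searcher = LuceneImpactSearcher(index_dir, "naver/splade-v3")
    return hits_to_ranked(searcher.search(query, k=k), k)
```

In a real service you would construct each searcher once and reuse it across queries; building it per call here only keeps the sketch short.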

Step 4: Prove the Hybrid Beats Each Lane Alone

A hybrid pipeline is only worth the operational cost if the fused list outperforms the best single lane on your queries. Measure that. Don’t assume it.

Validation checklist:

- Per-query delta. Failure looks like: hybrid wins on aggregate but loses on a specific query class (e.g., regresses on exact-match SKU queries because dense outvotes BM25 on rank 1)

- Lane ablation. Failure looks like: removing one lane changes nothing, meaning that lane is contributing noise rather than complementary signal

- BEIR Benchmark sanity check. Failure looks like: your hybrid underperforms SPLADE-v3 alone on the BEIR datasets the SPLADE-v3 paper reports, which suggests your fusion or your top-k is misconfigured

- RRF `k` sensitivity. Failure looks like: small changes to `k` (say, 30 vs. 60 vs. 100) flip your ranking order on most queries, meaning one lane dominates and the others are noise
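The per-query delta check can be sketched in a few lines. The code below assumes you already have, for each query ID, the hybrid's ranked doc IDs, each lane's ranked doc IDs, and a set of relevant doc IDs. The function names and the data layout are illustrative assumptions, not a fixed format.

```python
def recall_at_k(ranked_ids: list[str], relevant: set[str], k: int = 10) -> float:
    """Fraction of relevant docs that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(ranked_ids[:k]) & relevant) / len(relevant)


def per_query_delta(
    hybrid: dict[str, list[str]],
    lanes: dict[str, dict[str, list[str]]],
    qrels: dict[str, set[str]],
    k: int = 10,
) -> dict[str, float]:
    """For each query: hybrid recall@k minus the best single-lane recall@k."""
    deltas = {}
    for qid, relevant in qrels.items():
        best_lane = max(
            (recall_at_k(run.get(qid, []), relevant, k) for run in lanes.values()),
            default=0.0,
        )
        deltas[qid] = recall_at_k(hybrid.get(qid, []), relevant, k) - best_lane
    return deltas


def regressions(deltas: dict[str, float]) -> list[str]:
    """Query IDs where the hybrid did worse than the best single lane."""
    return sorted(qid for qid, delta in deltas.items() if delta < 0)
```

A positive aggregate can hide a cluster of negative deltas, so it is worth reading the `regressions` list by query class (SKU lookups, error codes, paraphrases) rather than only averaging the deltas.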

Compatibility & versioning notes:

- Pyserini (April 2026 release): Requires Python 3.12+ per Pyserini PyPI. Older pipelines on 3.11 must upgrade the interpreter before installing.

- ELSER versioning: If you swap SPLADE-v3 for ELSER, standardize on ELSER v2 (GA since Elastic 8.11, per Elastic Docs). ELSER v1 is still labeled technical preview — do not put it in production.

- SPLADE-v2 docs: The `experiments-spladev2.md` paths in the Pyserini repo reference older checkpoints. For new builds, use the SPLADE-v3 or SPLADE++ EnsembleDistil prebuilt indexes instead.

Common Pitfalls

| What You Did | Why It Failed | The Fix |
|---|---|---|
| Compared raw BM25 scores to dense cosine similarities | The two scales aren’t commensurable; max-score normalization hides that | Use reciprocal rank fusion on ranks only; the original paper reported that it outperformed the score-based CombMNZ baseline in its experiments |
| Indexed for SPLADE-v3 with the wrong searcher class | LuceneSearcher is for BM25; learned sparse needs LuceneImpactSearcher, per the Pyserini Docs | Switch to the impact searcher and re-encode the corpus once |
| Left top-k different per lane (e.g., k=10 BM25, k=100 dense) | Lanes contribute unequal coverage to fusion, biasing toward whichever lane has the longer list | Pin top-k for every lane, starting at 100 |
| Recommended ELSER v1 in production | v1 is still in technical preview, per Elastic Docs | Use ELSER v2 (GA since Elastic 8.11), or pick SPLADE-v3 if you don’t run on Elastic |

Pro Tip

One ranking signal, three error patterns. When fusion underperforms, never tune the fusion step first. Look at per-query rankings from each lane. Find a query where lane A ranks the right doc at position 1 and lane B ranks it at position 80. That gap is a spec problem in lane B — wrong analyzer, missing field, untokenized literal — not a fusion problem. RRF can’t recover signal that isn’t in any lane.

Frequently Asked Questions

Q: How to implement sparse retrieval with SPLADE and Pyserini in 2026?

A: Encode your corpus with naver/splade-v3 from Hugging Face, then query through Pyserini’s LuceneImpactSearcher — the API designed for learned sparse representations, per the Pyserini Docs. The April 2026 Pyserini release requires Python 3.12+, so plan an interpreter upgrade before you pip install. Avoid the older experiments-spladev2.md paths — those checkpoints are deprecated.

Q: When should you use sparse retrieval instead of dense embeddings?

A: Use sparse retrieval as a complement, not a replacement, when your queries contain rare literals (error codes, SKUs, surnames, version strings) that no embedding model has seen. Pure dense retrievers lose on out-of-vocabulary tokens. The honest answer: don’t pick one. Run both lanes and let RRF handle the disagreement.

Q: How to combine BM25 and dense retrieval with RRF for hybrid search?

A: Run BM25 and your dense ranker independently, take the top-k ranked lists from each, and fuse with the Cormack et al. formula: score(d) = Σ 1/(k + rank_r(d)) with k=60. The trick is that RRF uses ranks only — never raw scores. Pin top-k to the same value for both lanes, or one will drown out the other.
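A small worked example may help show what "ranks only" does in practice. The ranks here are made up for illustration: document A sits at rank 1 in BM25 and is absent from the dense list, while document B sits at rank 3 in both lanes, with k = 60.

```latex
\begin{aligned}
\text{score}(A) &= \frac{1}{60 + 1} = \frac{1}{61} \approx 0.0164 \\
\text{score}(B) &= \frac{1}{60 + 3} + \frac{1}{60 + 3} = \frac{2}{63} \approx 0.0317
\end{aligned}
```

B outranks A because two lanes agree on it, even though neither lane put it first. This is the behavior to watch for on exact-match queries, where a single lane's rank-1 hit may be the only correct answer.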

Your Spec Artifact

By the end of this guide, you should have:

- A three-lane decomposition diagram for your retrieval pipeline (BM25, SPLADE-v3, dense)

- A contract document specifying input format, output format, and top-k for every lane

- A validation checklist that compares hybrid output against each single-lane baseline on your own eval set

Your Implementation Prompt

Drop this into Claude Code, Cursor, or your AI coding tool of choice once you have the three-lane decomposition on paper. The placeholders force you to commit to specifications before any code gets generated.

Build a hybrid retrieval pipeline with three independent lanes and a fusion step.

LANE 1 — BM25 (classic sparse):

- Index: [Pyserini LuceneSearcher / your existing inverted index]

- Tokenizer: [Lucene standard analyzer / custom analyzer name]

- BM25 parameters: k1=[1.2], b=[0.75]

- Top-k: [100]

LANE 2 — SPLADE-v3 (learned sparse):

- Encoder: naver/splade-v3 (Hugging Face)

- Searcher: Pyserini LuceneImpactSearcher

- Index path: [your impact index path]

- Top-k: [100, must match Lane 1]

LANE 3 — Dense:

- Embedding model: [your model name and version]

- Vector index: [FAISS / your index]

- Similarity: [cosine / dot product]

- Top-k: [100, must match Lanes 1 and 2]

CONTRACT (all lanes):

- Input: raw query string, no per-lane preprocessing

- Output: list of (doc_id, rank) tuples, ranks start at 1, length = k

- Doc IDs are identical strings across all three indexes

- Lanes do NOT normalize scores or deduplicate against each other

FUSION:

- Algorithm: Reciprocal Rank Fusion, score(d) = sum over r of 1/(k + rank_r(d))

- Constant k: 60 (Cormack et al. 2009 default)

- Re-rank by combined score, return top-[10]

VALIDATION:

- Eval set: [path to your labeled query-document pairs]

- Metrics: recall@10, [your domain-specific metric]

- Required check: per-query delta vs. each single-lane baseline

- Required check: lane-ablation test — removing any lane must change ranking on >[20]% of queries

Ship It

You now have a pipeline that doesn’t pick a winner between sparse and dense retrieval — it lets each lane do what it does well and merges the rankings on ranks alone. The next time recall plateaus, you’ll know whether to fix a lane, retune k, or add a fourth signal — because each piece is independently observable.

AI-assisted content, human-reviewed. Images AI-generated.