Graph vs Conversation vs Crew: LangGraph, AutoGen, CrewAI Patterns

ELI5

Three frameworks disagree about how AI agents should coordinate. LangGraph models them as a state machine, AutoGen as actors trading messages, CrewAI as a crew passing task outputs. The pattern you pick shapes every failure you later debug.

A multi-agent system project fails the way it always fails: one agent hangs, another silently truncates its tool output, a third writes a confident summary on top of stale state. The post-mortem turns into archaeology. The cause has less to do with the LLM than with the coordination pattern your framework chose for you. An honest comparison of agent frameworks is therefore about coordination primitives, not features.

The Myth That Frameworks Are Three Flavors of the Same Thing

The treat-them-as-equivalent reading goes: LangGraph, AutoGen, and CrewAI all give you multiple agents talking to each other, so the choice is mostly a taste question — DAG vs. chat vs. team metaphor. Pick the API you like, write your prompts, move on.

That reading collapses on first contact with a real bug. Each framework encodes a different theory of where coordination lives — in shared state, in messages, or in task outputs. The theory is the architecture. The architecture decides what fails, what is debuggable, and what you can resume after a crash.

Not three flavors of the same thing. Three different bets.

Three Architectures, Three Different Theories of Coordination

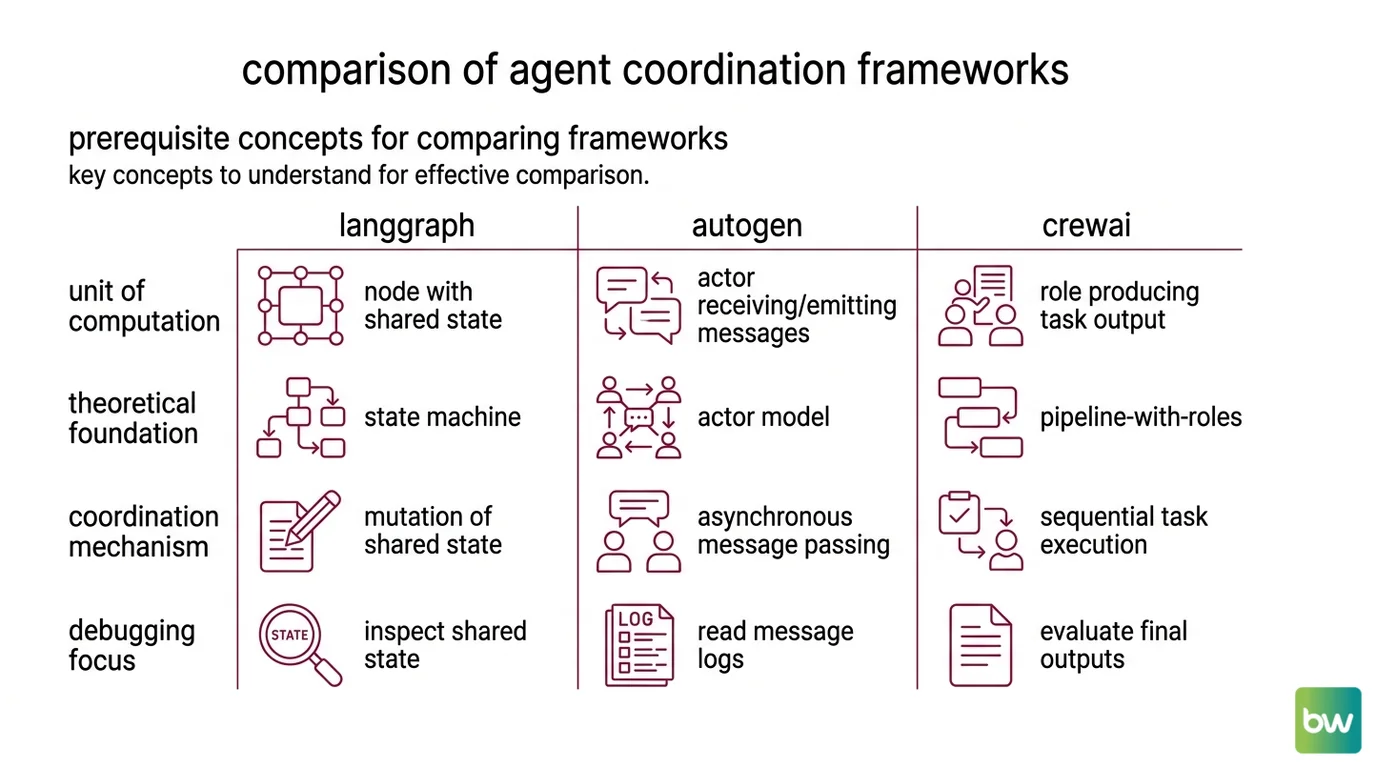

Before comparing star counts and tutorial quality, it helps to look at what each framework treats as the unit of computation. LangGraph treats it as a node operating on shared state. AutoGen treats it as an actor receiving and emitting messages. CrewAI treats it as a role producing a task output that another role consumes. Those three primitives map cleanly onto three older patterns from distributed systems — state machine, actor model, and pipeline-with-roles — a mapping made explicit in DataCamp’s framework comparison.

That mapping is the only honest way to compare them.

What do you need to know before comparing agent frameworks (state machines, message passing, tool use)?

Three concepts let you read the rest of the comparison without getting lost.

The first is the state machine. A state machine is a graph of nodes connected by edges, where each node reads from and writes to a shared state object.

LangGraph’s core abstraction is exactly this: a StateGraph whose nodes are functions or LLM calls and whose edges encode control flow over a typed state dictionary, per LangChain Docs. Coordination is mutation: an agent does not “talk” to another agent; it modifies the shared state, and the next node reads what it needs. Debugging means inspecting the state at each step.
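The state-machine idea can be sketched in plain Python. This is a minimal illustration of "nodes read and write shared state, edges decide what runs next", not LangGraph's API; the node names, the `State` fields, and the `run` helper are all hypothetical.

```python
from typing import Callable, Dict, Optional, TypedDict


class State(TypedDict):
    question: str
    notes: list[str]
    answer: Optional[str]


# Hypothetical nodes: each reads the shared state and returns a partial update.
def research(state: State) -> dict:
    return {"notes": state["notes"] + [f"looked up: {state['question']}"]}


def write(state: State) -> dict:
    return {"answer": f"summary of {len(state['notes'])} note(s)"}


NODES: Dict[str, Callable[[State], dict]] = {"research": research, "write": write}
# Edges encode control flow; None marks the end of the run.
EDGES: Dict[str, Optional[str]] = {"research": "write", "write": None}


def run(state: State, start: str = "research") -> State:
    current: Optional[str] = start
    while current is not None:
        update = NODES[current](state)
        state = {**state, **update}  # coordination happens through shared state
        current = EDGES[current]
    return state


final = run({"question": "what failed?", "notes": [], "answer": None})
print(final["answer"])
```

Note that `write` never receives anything from `research` directly; it only sees what `research` left in the state.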

The second is message passing. In the actor model, each agent is an independent actor with a private mailbox. Agents coordinate by sending and receiving asynchronous messages, never by reading each other’s internals. AutoGen’s v0.4 architecture is built on this primitive: a Core layer with async event-driven message passing, with the higher-level AgentChat API and Extensions stacked on top. Coordination is conversation. Debugging means reading the message log.
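A rough sketch of the actor idea, using only `asyncio` from the standard library. It does not use AutoGen's Core or AgentChat APIs; the `Actor` class, its `send` and `receive` methods, and the log format are assumptions made for illustration.

```python
import asyncio


class Actor:
    """Hypothetical actor: private state plus a mailbox; no shared memory."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.mailbox: asyncio.Queue = asyncio.Queue()
        self.seen: list[str] = []  # private state, never read by other actors

    async def send(self, other: "Actor", text: str) -> None:
        await other.mailbox.put((self.name, text))

    async def receive(self) -> tuple[str, str]:
        sender, text = await self.mailbox.get()
        self.seen.append(text)
        return sender, text


async def main() -> list[str]:
    planner, worker = Actor("planner"), Actor("worker")
    log: list[str] = []  # the message log is the debugging surface

    await planner.send(worker, "fetch report")
    sender, text = await worker.receive()
    log.append(f"{sender} -> worker: {text}")

    await worker.send(planner, f"done: {text}")
    sender, text = await planner.receive()
    log.append(f"{sender} -> planner: {text}")
    return log


message_log = asyncio.run(main())
print("\n".join(message_log))
```

Neither actor can inspect the other's `seen` list through the protocol; everything either one learns arrives as a message.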

The third is tool use, together with agent planning and reasoning. Both depend on a structure that lives outside the framework: prompts that tell the model when to call a tool and how to plan multi-step actions. Frameworks differ in how aggressively they constrain that structure. LangGraph lets the graph constrain it. AutoGen lets the conversation constrain it. CrewAI lets the role-and-task contract constrain it.

Once those three primitives come into focus, the rest of the comparison stops being a feature checklist and starts being a question about which kind of failure you are prepared to debug.

How Each Framework Stores State and Routes Communication

The architectural primitives only matter once you can see how they show up in code and in failure modes. Here the frameworks split sharply.

LangGraph keeps a single typed state object that nodes mutate.

Agent memory for long-running runs is first-class: the framework’s checkpointer saves a snapshot at every super-step plus per-task pending writes, with backends including in-memory, SQLite, Postgres, and Redis (LangChain Docs). Threads with a thread_id group those checkpoints, which means time-travel debugging (replaying the run, editing the state at any step, branching off to test a fix) is a built-in primitive, not a side project. The strong claim is that state is the source of truth, and the graph is just the schedule for mutating it.
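To make the checkpoint-and-branch idea concrete, here is a small stdlib-only sketch. It is not LangGraph's checkpointer; the step functions, the in-memory `history` list, and the `start` parameter are hypothetical stand-ins for snapshots taken at each step.

```python
import copy
from typing import Callable

State = dict
Step = Callable[[State], State]


def draft(state: State) -> State:
    return {**state, "draft": f"draft for {state['topic']}"}


def review(state: State) -> State:
    return {**state, "approved": "draft" in state and "TODO" not in state["draft"]}


STEPS: list[Step] = [draft, review]


def run(state: State, checkpoints: list[State], start: int = 0) -> State:
    """Run steps from `start`, saving a snapshot after each one."""
    for step in STEPS[start:]:
        state = step(state)
        checkpoints.append(copy.deepcopy(state))
    return state


history: list[State] = []
first = run({"topic": "outage"}, history)

# "Time travel": take the snapshot after step 0, edit it, and branch from there.
branch_state = copy.deepcopy(history[0])
branch_state["draft"] = "TODO: rewrite"
branch_history: list[State] = []
second = run(branch_state, branch_history, start=1)
```

Resuming after a crash and rewinding to test a fix are the same call here: load a snapshot and run from the next step.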

AutoGen treats state as private to each actor. Coordination flows through messages, with patterns like GroupChat selecting which agent speaks next. The strength is composability: you can drop an actor into a group, and the group’s selector decides routing. The cost is that no actor has the full picture — debugging means reconstructing causality from message logs across mailboxes. AutoGen is now in maintenance mode (AutoGen GitHub). Microsoft is directing new users to the Microsoft Agent Framework, which unifies AutoGen’s actor primitives with Semantic Kernel (Microsoft Learn). For new projects, the actor pattern is still useful to understand; the specific package is no longer where the future is being written.

CrewAI sits between the two. Its primitives are Crew (a team), Agent (a role with a goal, backstory, and tools), and Task — orchestrated by Flows for event-driven control with explicit state, per CrewAI Docs. Agents do not message each other directly; they pass task outputs forward, and Flows can themselves orchestrate Crews. The architectural duality is the headline: Crews give you autonomous role-based collaboration, Flows give you granular event-driven control, and either can wrap the other. CrewAI is now independent of LangChain primitives (CrewAI Docs) — a recent change worth knowing if you are reading older tutorials that assumed the legacy dependency.
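The handoff model can be sketched without the library. The following is an illustration only, not CrewAI's `Crew`, `Agent`, or `Task` classes; `Role`, `Task`, and `run_crew` are hypothetical, and the lambdas stand in for LLM calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Role:
    """Hypothetical role: a name plus a function standing in for an LLM call."""
    name: str
    work: Callable[[str], str]


@dataclass
class Task:
    description: str
    role: Role


def run_crew(tasks: list[Task]) -> list[tuple[str, str]]:
    """Run tasks in order; each task sees only the previous task's output."""
    lineage: list[tuple[str, str]] = []
    previous_output = ""
    for task in tasks:
        prompt = f"{task.description}\n{previous_output}".strip()
        previous_output = task.role.work(prompt)
        lineage.append((task.role.name, previous_output))
    return lineage


researcher = Role("researcher", lambda p: "finding: cache miss storm")
writer = Role("writer", lambda p: f"report based on [{p.splitlines()[-1]}]")

lineage = run_crew([
    Task("Investigate the outage", researcher),
    Task("Write the summary", writer),
])
```

The `lineage` list records which role produced which output, which is the trail you follow when a handoff goes wrong.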

| Framework | Coordination unit | Where state lives | Debug primitive |
|---|---|---|---|
| LangGraph | Node operating on state | Shared, typed State | Checkpoints + thread time-travel |
| AutoGen | Actor with mailbox | Private to each actor | Message log across actors |
| CrewAI | Role producing task output | Flow state + task outputs | Flow execution log |

The table looks symmetric. The implications are not.

What the Patterns Predict When Agents Fail

Most of each framework’s strengths and weaknesses can be derived from its primitive without reading another doc.

If coordination is shared state, then the typical failure mode is state corruption and silent staleness — but recovery is cheap because every super-step has a checkpoint. If coordination is messages, the typical failure mode is missed or out-of-order messages, and recovery is hard because no actor has the full timeline. If coordination is task outputs, the failure mode is bad handoffs — a previous role produced something the next role cannot use — and recovery means re-running the upstream task with better instructions.
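The bad-handoff case and its recovery path can be sketched as follows. This assumes the upstream role is asked for JSON and that the downstream role needs certain fields; `parse_handoff`, `run_with_retry`, and `fake_upstream` are hypothetical names, and none of this is a framework API.

```python
import json
from typing import Callable


def parse_handoff(raw: str, required: set[str]) -> dict:
    """Return the parsed output, or raise ValueError if the next role cannot use it."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = required - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data


def run_with_retry(upstream: Callable[[str], str], instructions: str,
                   required: set[str], max_attempts: int = 2) -> dict:
    for _ in range(max_attempts):
        raw = upstream(instructions)
        try:
            return parse_handoff(raw, required)
        except ValueError as err:
            # Re-run the upstream task with the failure folded into the instructions.
            instructions = f"{instructions}\nPrevious output was rejected: {err}"
    raise RuntimeError("upstream task never produced a usable handoff")


# Stand-in for an LLM-backed role: complies only once told what was wrong.
def fake_upstream(instructions: str) -> str:
    if "rejected" in instructions:
        return json.dumps({"root_cause": "cache miss storm", "severity": "high"})
    return json.dumps({"root_cause": "cache miss storm"})


handoff = run_with_retry(fake_upstream, "Summarize the incident as JSON.",
                         {"root_cause", "severity"})
```

Checking the output at the boundary turns a silent bad handoff into an explicit, retryable error.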

Those three failure modes are not equally observable.

What are the technical limits of current agent frameworks: state durability, debugging, and observability gaps?

State durability is the easy axis. LangGraph’s checkpointer model treats every super-step as a transaction worth persisting, which makes “resume after a crash” and “rewind to step seven and try a different prompt” the same operation. AutoGen’s actor model does not give you that for free — each actor’s state is private, and durability is something you build into specific actors when you need it. CrewAI’s Flows expose state as a first-class object, but the model is closer to “workflow checkpoint” than to “every-step time travel.”

Debugging gaps come from where the truth lives. With shared state, you read the truth from one place. With actor messages, the truth is the union of every actor’s view, and you need a tracing layer that correlates events across mailboxes. CrewAI Flows give you an execution log, but the agent-level reasoning inside a Crew is still the model’s chain-of-thought, which the framework does not own.
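One way to picture the tracing layer that actor messages need: stamp every event with a correlation id and a sequence number, then merge the per-actor logs. The `Event` fields and the `timeline` helper below are assumptions for illustration, not part of any of the three frameworks.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    trace_id: str   # hypothetical correlation id stamped on every message
    seq: int        # hypothetical per-trace sequence number
    actor: str
    text: str


def timeline(*actor_logs: list[Event]) -> dict[str, list[Event]]:
    """Merge per-actor logs into one ordered timeline per trace."""
    by_trace: dict[str, list[Event]] = defaultdict(list)
    for log in actor_logs:
        for event in log:
            by_trace[event.trace_id].append(event)
    return {tid: sorted(evts, key=lambda e: e.seq) for tid, evts in by_trace.items()}


planner_log = [Event("t1", 0, "planner", "plan"), Event("t1", 2, "planner", "finish")]
worker_log = [Event("t1", 1, "worker", "tool call"), Event("t2", 0, "worker", "other run")]

merged = timeline(planner_log, worker_log)
```

Neither log alone shows that the worker's tool call happened between the planner's two events; only the merged view does.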

Observability is the gap that all three share. According to LangChain’s State of Agent Engineering report — read with the caveat that LangChain ships an observability product itself — 89% of organizations claim some form of agent observability, but only 62% have step-level tracing. An accompanying analysis flags a deeper structural gap (LangChain Blog): most LLM observability tools were designed for single request/response pairs, so they miss the issue lifecycle, fail to correlate parallel-branch traces, and let silent tool failures hide behind a confident final answer. The observability layer is starting to converge — LangSmith and OpenTelemetry-compatible tracers now work across LangGraph, AutoGen, CrewAI, and other agent SDKs — even where the underlying architectures have not.

The pattern is simple: debuggability follows from the primitive. A graph framework with checkpoints gives you replay for free. An actor framework gives you message logs. A crew framework gives you task lineage. None of them give you the others without extra work.

Rule of thumb: Pick the framework whose failure mode you would rather debug. If the answer is “I want to rewind state,” LangGraph. If “I want clean role contracts with explicit handoffs,” CrewAI. If “I am comfortable reasoning over message logs,” the actor pattern — though for new code that pattern now lives in Microsoft Agent Framework, not in AutoGen.

When it breaks: All three frameworks share a structural ceiling: they coordinate agents but do not coordinate the non-determinism inside each agent. A planning step that sometimes returns a tool call and sometimes a string will break any of them — graph, conversation, or crew — and the only fix is at the prompt or schema layer the framework does not own.
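A schema-layer fix for that kind of non-determinism might look like the sketch below, which coerces every raw planner reply into one of two known shapes before any framework sees it. The `ToolCall` and `FinalText` types and the `"tool"` / `"arguments"` keys are hypothetical; a real model's output format will differ.

```python
import json
from dataclasses import dataclass
from typing import Union


@dataclass
class ToolCall:
    name: str
    arguments: dict


@dataclass
class FinalText:
    text: str


PlanStep = Union[ToolCall, FinalText]


def normalize(raw: str) -> PlanStep:
    """Coerce a raw model reply into exactly one of two known shapes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return FinalText(raw.strip())
    if isinstance(data, dict) and isinstance(data.get("tool"), str):
        args = data.get("arguments")
        return ToolCall(data["tool"], args if isinstance(args, dict) else {})
    # Valid JSON that is not a recognizable tool call is treated as plain text.
    return FinalText(raw.strip())


steps = [
    normalize('{"tool": "search", "arguments": {"q": "outage"}}'),
    normalize("I think the cache failed."),
    normalize('{"unexpected": true}'),
]
```

Downstream code can then branch on `isinstance` instead of guessing at the reply's shape, whichever coordination pattern sits on top.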

Framework status notes:

- AutoGen (Microsoft): In maintenance mode as of 2026 (community-managed, security and bug fixes only); latest is python-v0.7.5 (September 30, 2025). New work is happening in Microsoft Agent Framework v1.0 (released April 2026), which unifies AutoGen’s actor model with Semantic Kernel.
- CrewAI: Actively developed; current release is crewai 1.14.4 (April 30, 2026). The “independent of LangChain” claim is recent; older tutorials may still assume the legacy LangChain dependency.
- LangGraph: Actively developed across langgraph, langgraph-sdk (0.3.14, May 5, 2026), and langgraph-checkpoint. Cite a specific version if you depend on a feature; otherwise treat it as actively maintained.

The Data Says

The three frameworks are not three flavors of the same idea. They are three different theories of where agent coordination lives — in shared state, in messages, or in task outputs — and the theory you adopt decides which class of failure you are committing to debug. Pick the architecture before you pick the API.

AI-assisted content, human-reviewed. Images AI-generated.