Garbage In, Garbage Out: The Ethical Cost of RAG Parsing Errors

The Hard Truth

Imagine a clinician reads a confident AI summary of a patient’s chart and acts on it. The model did not hallucinate. It read exactly what the parser handed it — a malformed table, a footnote spliced into the wrong paragraph, a redaction misread as a number. Whose mistake is the patient living with?

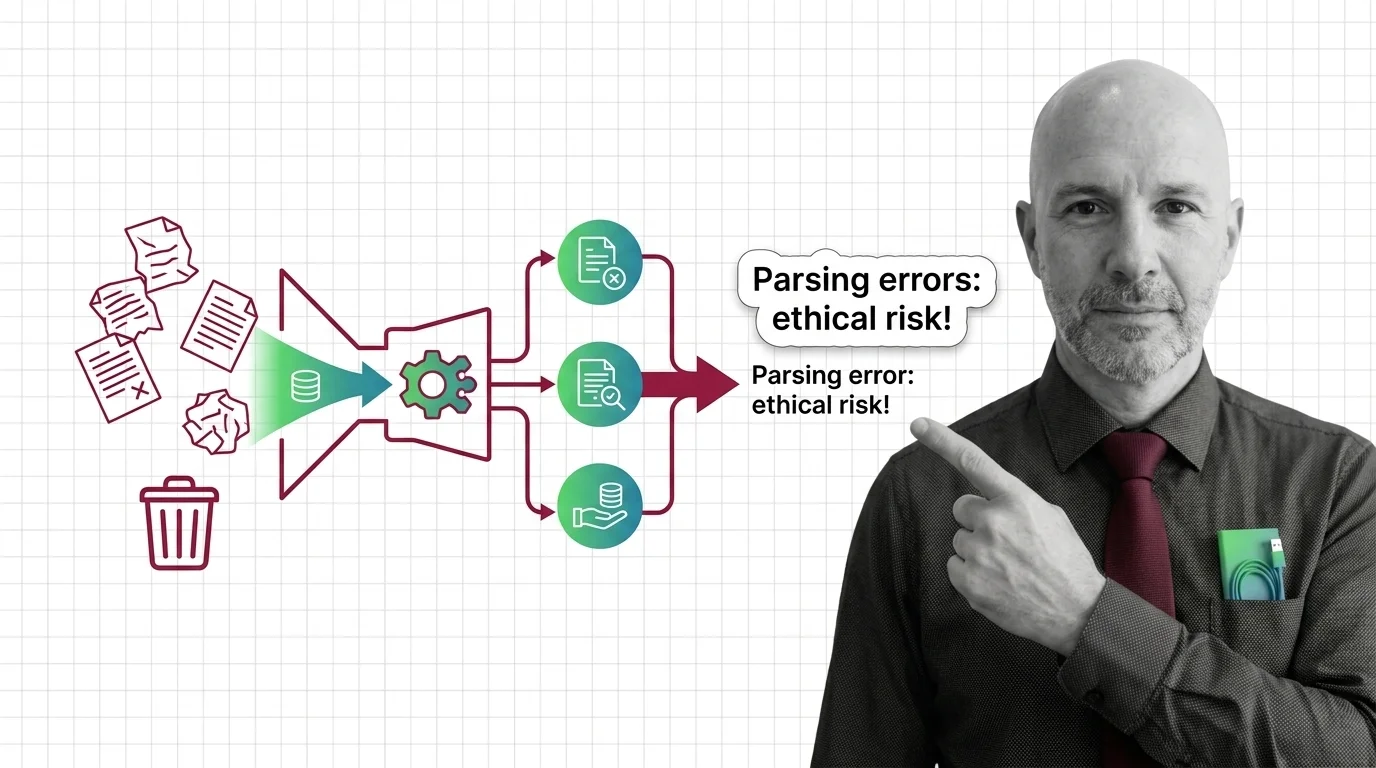

Most of the public conversation about knowledge graphs for RAG and other retrieval systems treats the document parsing and extraction step as mere plumbing: a preprocessing chore that libraries solve and everyone forgets. That framing is convenient, and it is also where the ethical risk hides. Long before a model writes a sentence, a parser has already decided what counts as a sentence at all.

We Have Made Reading Itself a Hidden Variable

The whole field has been arguing about hallucinations for two years, as if the danger lived inside the model. But the danger of high-stakes RAG begins earlier: the moment a PDF, a scan, a contract, or a clinical note is converted into tokens. That conversion is not neutral transcription. It is interpretation. Tables collapse into prose, footnotes attach to the wrong paragraph, redactions become numbers, multi-column layouts shuffle into nonsense.
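
To make the column problem concrete, here is a minimal Python sketch. It assumes a parser that emits text blocks with left and top coordinates, and a page with exactly two columns split at the midpoint. Real layout detection cannot make that assumption. The `Block` shape, the coordinates, and the sample text are all invented for illustration. Sorting naively by vertical position interleaves the columns. Sorting within each column first keeps them apart.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """A text block as a hypothetical layout-aware parser might emit it."""
    text: str
    x0: float  # left edge, in page units
    y0: float  # top edge; assumed to grow downward (larger = lower on page)


def naive_order(blocks):
    # Sort purely top-to-bottom, then left-to-right: this interleaves columns.
    return [b.text for b in sorted(blocks, key=lambda b: (b.y0, b.x0))]


def column_aware_order(blocks, page_width):
    # Assumes a simple two-column page split at the midpoint; real documents
    # need actual column detection.
    mid = page_width / 2
    left = sorted((b for b in blocks if b.x0 < mid), key=lambda b: b.y0)
    right = sorted((b for b in blocks if b.x0 >= mid), key=lambda b: b.y0)
    return [b.text for b in left + right]


# Invented two-column snippet from a medication sheet.
page = [
    Block("Dosage: 5 mg", 50, 100),
    Block("Contraindication: renal failure", 320, 100),
    Block("twice daily.", 50, 120),
    Block("Do not combine with NSAIDs.", 320, 120),
]

print(" ".join(naive_order(page)))
print(" ".join(column_aware_order(page, page_width=600)))
```

In the naive order, the contraindication line runs straight into "twice daily.", producing a sentence no clinician wrote. Nothing in the output signals that the reading order was wrong.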

In legal, medical, and financial contexts, those quiet distortions are the upstream cause of decisions that hurt people. And almost nobody outside the engineering team sees them happen. The user sees a confident answer, not the raw extraction. The patient or defendant or retail investor sees nothing at all.

So the ethical question is not “does this AI hallucinate?” but something harder to govern: who is accountable when the machine reads correctly, but the document was read wrong before the machine ever saw it?

The Story We Tell Ourselves About Parsers

The conventional wisdom is reasonable. Document parsing has improved dramatically. Modern parsers can carve structure out of difficult layouts that would have defeated tools from a few years ago. Vendor benchmarks tell an encouraging story: Docling reports table-extraction accuracy in the high nineties, and Unstructured publishes leading scores on its own SCORE-Bench. Comparisons such as LlamaIndex's ParseBench round out the picture.

Engineers therefore treat parsing as a solved class of problem — pick a tool, configure chunking, move on to the more interesting work of retrievers and re-rankers. Lawyers and clinicians, who do not see the pipeline, inherit this confidence at a remove. They are told the system is “trained on legal documents” or “evaluated for clinical use,” and they reasonably assume the document layer behaves like a faithful scribe.

This story is not wrong. It is just incomplete in a specific, dangerous way. A 95% accurate parser is impressive in aggregate. In a single life-changing document, the 5% is where someone’s freedom or treatment lives.
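
The gap between aggregate and per-document accuracy is easy to underestimate. Here is a back-of-the-envelope sketch. It assumes, for illustration only, that "95% accurate" means each critical field in a document is read correctly with probability 0.95. It also assumes that fields fail independently, a simplification the next section complicates.

```python
def prob_document_fully_correct(field_accuracy: float, n_fields: int) -> float:
    """Chance that all n_fields in one document are read correctly.

    Assumes every field is read correctly with the same probability and that
    field errors are independent; both are simplifications.
    """
    return field_accuracy ** n_fields


# Illustrative only: how a "95% accurate" per-field score compounds.
for n in (1, 5, 20, 50):
    p = prob_document_fully_correct(0.95, n)
    print(f"{n:>2} critical fields: {p:.1%} chance every field is right")
```

Under these assumptions, a document with 20 critical fields has only about a 36% chance of coming through with every field intact. Real errors tend to cluster rather than scatter, so the true figure could be higher or lower. The sketch only shows why a per-field score says little about whether any particular document was read correctly.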

The Assumption Underneath the Pipeline

The hidden assumption is that parser accuracy is a uniform property — that if a tool scores well on a benchmark, it behaves reasonably on your documents. The data does not support this. The Applied AI PDF Benchmark found parser accuracy varying by more than 55 points across document types, with legal contracts landing around 95% while academic papers drop into the 40–60% range. The same tool can be excellent on one corpus and unusable on another.
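
One practical consequence is to evaluate and gate per document type rather than on a single blended score. The sketch below uses invented accuracies and an invented corpus mix. None of these numbers are benchmark results. It shows how a respectable average can hide a document type the parser handles badly.

```python
# Hypothetical per-document-type accuracies for one parser on one corpus.
# Invented for illustration, loosely echoing the spread described above.
per_type_accuracy = {
    "contract": 0.95,
    "invoice": 0.90,
    "academic_paper": 0.50,
}

# Hypothetical corpus mix: how many documents of each type are ingested.
corpus_mix = {"contract": 800, "invoice": 150, "academic_paper": 50}


def weighted_accuracy(acc, mix):
    """Blended accuracy, weighted by how often each type appears."""
    total = sum(mix.values())
    return sum(acc[t] * n for t, n in mix.items()) / total


def failing_types(acc, threshold):
    """Document types whose own accuracy falls below the threshold."""
    return sorted(t for t, a in acc.items() if a < threshold)


print(f"blended: {weighted_accuracy(per_type_accuracy, corpus_mix):.1%}")
print("below 0.90:", failing_types(per_type_accuracy, 0.90))
```

Here the blended figure is 92%, which would pass most casual reviews, while academic papers sit at 50%. When the stakes are high, gating on the worst type rather than the mean is the more conservative choice.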

Worse, errors are not independently distributed. Industry analyses of PDF parsers describe the cascade plainly: a single layout-detection mistake near the top of a document can corrupt entire downstream sections, because chunking and retrieval both inherit the broken structure. The pipeline does not gracefully degrade. It silently propagates.
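
The cascade is easy to reproduce with a common chunking heuristic: attach each paragraph to the most recent heading. In this minimal sketch, the element labels and the drug-label text are invented. A layout detector that misreads a single heading as body text silently files every later paragraph under the wrong section.

```python
from typing import List, Tuple

# Each element is (kind, text), as a hypothetical layout detector might label it.
Element = Tuple[str, str]


def chunk_by_heading(elements: List[Element]) -> List[Tuple[str, str]]:
    """Attach every non-heading element to the most recent heading."""
    section = "(none)"
    chunks = []
    for kind, text in elements:
        if kind == "heading":
            section = text
        else:
            chunks.append((section, text))
    return chunks


correct = [
    ("heading", "Indications"),
    ("para", "Approved for adults."),
    ("heading", "Contraindications"),
    ("para", "Not for patients with renal failure."),
    ("para", "Not for use in pregnancy."),
]

# Same document, but the detector misreads one heading as body text.
misdetected = [("para", t) if t == "Contraindications" else (k, t) for k, t in correct]

for section, text in chunk_by_heading(misdetected):
    print(f"[{section}] {text}")
```

Every chunk from the misdetected version comes out labeled Indications, including both contraindications. A retriever that filters or ranks by section could now serve warnings as indications, and no step in the pipeline raises an error.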

Now layer in the clinical evidence. A 2026 medRxiv review of real-world clinical RAG concluded that most suboptimal responses are attributable to faulty source-document retrieval, not to model hallucination. That is the field telling itself, quietly, that the dominant failure mode is upstream of the model — and that we have spent two years aiming our safety effort at the wrong layer.

The Stanford RegLab study of leading legal AI tools found end-to-end hallucination rates between 17% and 33%, with Lexis+ AI accurate on 65% of queries and Westlaw AI-Assisted Research accurate on only 42% (Stanford HAI). Parsing is one root cause among several — chunking, retriever, generator all contribute — but in a domain where one wrong citation can sink a case, end-to-end accuracy this brittle is not a routine engineering shortfall. It is a governance crisis dressed in benchmark language.

What the Archivists Already Knew

There is an older profession that has thought about this for centuries — archivists, paleographers, medical records librarians, court reporters. They never assumed transcription was neutral. They built professional ethics around the act of rendering a source faithfully: provenance chains, version control, redundant transcription, certified copies. When stakes were high, they did not trust a single reader. They triangulated.
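
The archivists' habit of triangulation has a direct software analogue: read the same document with two independent parsers and route every disagreement to a human. Here is a minimal sketch. It assumes each parser's output has already been mapped to the same field names. The field names and values below are invented.

```python
def triangulate(reading_a: dict, reading_b: dict) -> dict:
    """Compare two independent readings field by field.

    Returns the values both readings agree on and the fields that need a
    human reader (disagreement, or missing from either reading).
    """
    agreed, disputed = {}, []
    for name in sorted(set(reading_a) | set(reading_b)):
        a, b = reading_a.get(name), reading_b.get(name)
        if a is not None and a == b:
            agreed[name] = a
        else:
            disputed.append(name)
    return {"agreed": agreed, "disputed": disputed}


# Hypothetical outputs from two different parsers on the same lab report.
parser_a = {"patient_id": "A-1042", "potassium_mmol_l": "5.1", "redacted_block": "[REDACTED]"}
parser_b = {"patient_id": "A-1042", "potassium_mmol_l": "3.1", "redacted_block": "8888"}

result = triangulate(parser_a, parser_b)
print(result["disputed"])
```

Here the potassium value and the redacted block are disputed, so neither reaches the model unreviewed. Agreement is weaker evidence than it looks. Two parsers built on the same layout model can make the same mistake, so this only helps to the extent the readers are genuinely independent.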

Software has mostly forgotten this lineage. We treat ingest as a pipeline stage rather than as a custodial act. But the moral structure is the same. To convert a document into machine-readable form is to take custody of someone’s record — their contract, their diagnosis, their prospectus — and become responsible for what survives the conversion. Document parsing is not an engineering problem with ethical side effects. It is an ethics problem that happens to require engineering. We just outsourced the ethics by calling it a library.

Thesis: Parsing Is Triage

Put plainly: in any high-stakes RAG system, document parsing is the moral choke point, the place where what the system can and cannot truthfully say is decided long before the model speaks.

Once you accept that, several uncomfortable things follow. The question “is the model accurate?” is the wrong unit of evaluation. The right question is whether the document, as the system actually saw it, still carries the meaning the source intended. Almost no commercial pipeline reports this. Users see polished answers; nobody shows them the messy intermediate representation.

Regulatory frameworks already point this direction without naming it. NIST's Generative AI Profile (NIST AI 600-1, July 2024), a companion to the AI RMF, names information integrity and information security, including data poisoning, among the risks specific to generative AI. The OWASP Top 10 for LLM Applications added “Vector and Embedding Weaknesses” as a distinct RAG-specific category in its 2025 update. FINRA’s 2026 Annual Regulatory Oversight Report identifies summarization and information extraction from unstructured documents as the top GenAI use case at member firms. The EU AI Act, with its high-risk obligations now phased to December 2, 2027 for Annex III standalone systems and August 2, 2028 for medical devices (European Commission), will make these expectations enforceable rather than aspirational.

The early case law of AI in law was not really about parsing. In Mata v. Avianca (Justia, S.D.N.Y., June 22, 2023), the court sanctioned the attorneys $5,000 for citing fabricated cases that ChatGPT had generated directly, with no RAG in sight. The next generation of cases will be subtler: systems that parsed real documents, retrieved real passages, and still produced wrong answers because the document layer quietly deformed the source. As far as the public record shows, no enforcement action yet hangs harm specifically on a document-parsing error. The absence is not reassurance. It is a clock.

What This Costs Us in Courtrooms, Clinics, and Trading Floors

What follows from accepting parsing as a moral choke point is not a checklist. It is a different posture toward what these systems are.

It means asking, before a single retrieval call, whether the documents you are ingesting can be parsed reliably enough for the stakes of the use case — and refusing the project if the answer is no. It means surfacing the parsing layer to the people who bear the consequences: the clinician should be able to see how the chart was extracted, the lawyer how the contract was segmented, the compliance officer which fields the system could not confidently read. It means treating “the parser failed silently” as a reportable incident, not a backlog ticket.
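
Surfacing what the parser could not confidently read, and logging silent failures as incidents, can be sketched as a gate between extraction and retrieval. This assumes the parser reports a per-field confidence score, which many tools do not. The field names, the 0.9 threshold, and the incident record format are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ExtractedField:
    name: str
    value: Optional[str]  # None means the parser returned nothing
    confidence: float     # 0..1, as reported by a hypothetical parser


@dataclass
class ReviewPacket:
    usable: List[ExtractedField] = field(default_factory=list)
    needs_review: List[ExtractedField] = field(default_factory=list)
    incidents: List[dict] = field(default_factory=list)


def gate_extraction(doc_id: str, fields: List[ExtractedField],
                    min_conf: float = 0.9) -> ReviewPacket:
    """Split fields into usable vs. needs-review; log empty fields as incidents."""
    packet = ReviewPacket()
    for f in fields:
        if f.value is None:
            # An empty field is recorded as an incident, not silently dropped.
            packet.incidents.append({
                "doc_id": doc_id,
                "field": f.name,
                "kind": "silent_parse_failure",
                "at": datetime.now(timezone.utc).isoformat(),
            })
            packet.needs_review.append(f)
        elif f.confidence < min_conf:
            packet.needs_review.append(f)
        else:
            packet.usable.append(f)
    return packet


packet = gate_extraction("contract-17", [
    ExtractedField("termination_date", "2025-03-01", 0.97),
    ExtractedField("liability_cap", "1,000,000", 0.62),
    ExtractedField("governing_law", None, 0.0),
])
print([f.name for f in packet.needs_review])
print(len(packet.incidents))
```

In this example, `liability_cap` goes to review because of low confidence. `governing_law` goes to review because nothing came back, and it also produces one incident record. A gate like this only catches failures the parser admits to. A confident misreading still passes, which is why it complements independent verification rather than replacing it.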

It also means the harder cultural shift: accepting that the ethics of high-stakes RAG cannot be delegated to model providers, retrieval libraries, or the optimism of vendor benchmarks. The custodial responsibility belongs to whoever puts the system in front of a person whose life it affects — and answers when the parser was wrong, the answer was confident, and the decision has already been made.

Where This Argument Could Break

This argument leans on the claim that parsing errors dominate the upstream causes of high-stakes RAG failure. That claim is directionally supported by clinical research and benchmark variance, but a clean global statistic does not yet exist publicly — vendor and community benchmarks differ in methodology and corpus. If future independent evaluation showed that, in mature pipelines, parser errors fall well below retriever and generator errors, the moral weight I am placing on the parsing layer would need to redistribute. The custodial duty would not vanish, but its center of gravity would move.

The Question That Remains

We built systems that read for us at industrial scale and called the reading “preprocessing.” If the most consequential mistakes happen in the layer we treat as plumbing, the question is no longer technical. It is: who is willing to be the named human accountable for what the parser did to the document — before the model ever saw it?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

Ethically, Alan

AI-assisted content, human-reviewed. Images AI-generated.