From RAG to Agentic RAG: Prerequisites and Technical Limits of Retrieval-Augmented Agents

ELI5

A retrieval-augmented agent is a RAG pipeline an LLM can steer in a loop — deciding when to retrieve, when to rewrite the query, when to stop. The price for that autonomy is compounded latency, cost, and unreliability.

You build a classic RAG pipeline. The first dozen queries return good answers, the demo lands, and the team agrees to wrap it in an agent loop so the model can decide for itself when to search. Two weeks later, your failure rate is climbing past 20%. Nothing broke (every individual stage still works), but reliability does not add. It multiplies. That arithmetic is where retrieval-augmented agents begin, and it is also where most teams discover they skipped the prerequisites.

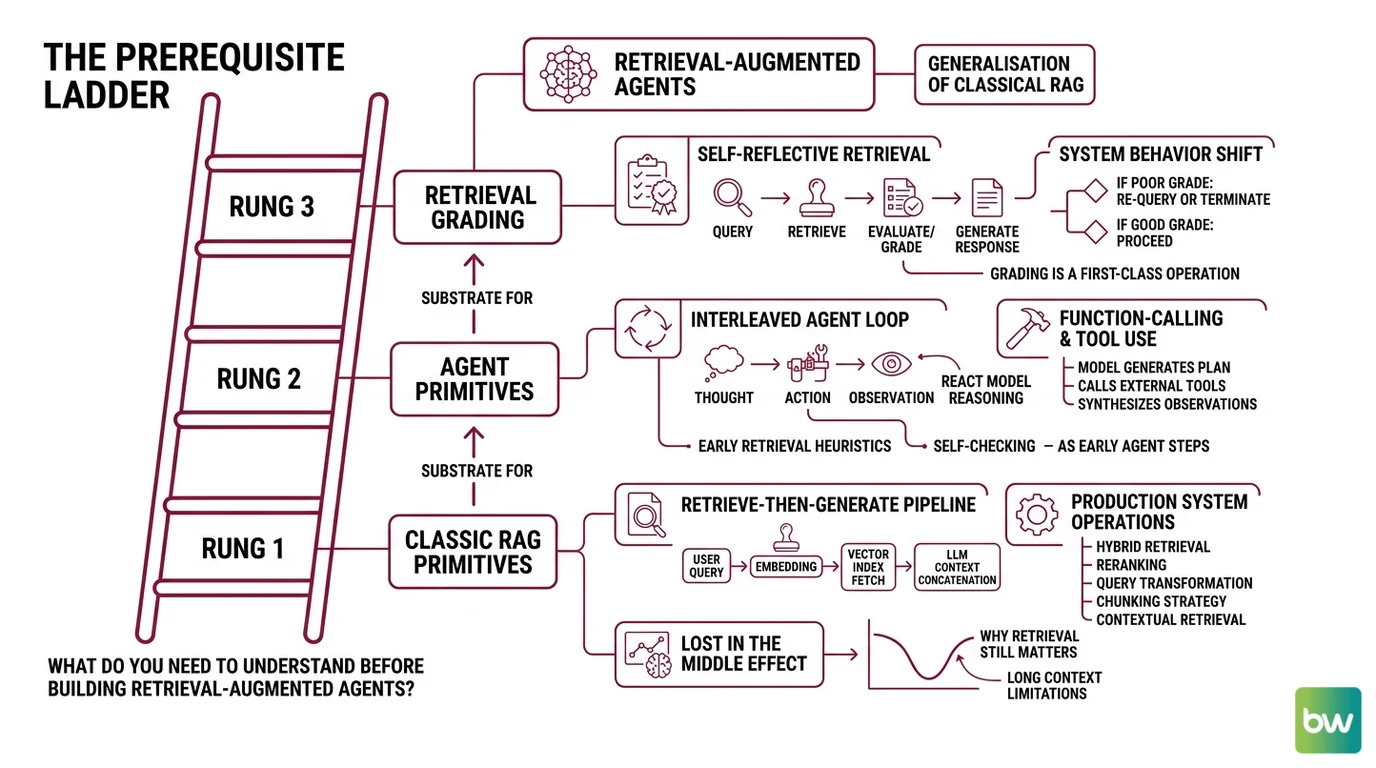

The Prerequisite Ladder

Before retrieval-augmented agents make sense as an architecture, the reader needs a working mental model of three earlier layers. The Singh et al. (2025) survey treats the agentic loop as a generalisation of classical RAG, not a replacement — and the path between the two runs through a small set of papers that promoted retrieval grading from a heuristic into a first-class operation. Skip a rung and the system above it becomes mysterious.

What do you need to understand before building retrieval-augmented agents?

Three rungs, in order. Each one is the substrate the next one stands on.

Rung 1: classic RAG primitives. The retrieve-then-generate pipeline introduced by Lewis et al. (2020) is still the engine inside every agent: a query becomes an embedding, the embedding fetches the top-k passages from a vector index, and the passages are concatenated into the LLM’s context. The Naive → Advanced → Modular progression catalogued in the Gao et al. (2023) survey adds the operations every production system inherits: hybrid retrieval (dense plus lexical), reranking, query transformation, chunking strategy, and contextual retrieval. None of this is optional knowledge. If the reader cannot say why a reranker improves precision but worsens latency, the agent loop above will look like magic.
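A minimal sketch of the retrieve-then-generate flow described above, using only the standard library. The bag-of-words `embed` function, the three-passage `CORPUS`, and the `build_prompt` helper are illustrative assumptions standing in for a real embedding model, vector index, and LLM call; none of them come from Lewis et al. or any particular library.

```python
import math
from collections import Counter

# Toy corpus standing in for a chunked document store (hypothetical data).
CORPUS = [
    "Rerankers rescore candidate passages with a cross-encoder.",
    "Hybrid retrieval combines dense vectors with lexical BM25 scores.",
    "Chunking strategy controls how documents are split before indexing.",
]

def embed(text: str) -> Counter:
    """Bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(text.lower().replace(".", "").split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, k: int = 2) -> list[str]:
    """Return the top-k passages by similarity to the query."""
    q = embed(query)
    ranked = sorted(CORPUS, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, passages: list[str]) -> str:
    """Concatenate retrieved passages into the context the LLM would see."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return f"Context:\n{context}\n\nQuestion: {query}"

top = retrieve("how does hybrid retrieval combine dense and lexical scores")
prompt = build_prompt("how does hybrid retrieval work", top)
```

Everything an agent later adds (grading, rewriting, routing) wraps around these two functions rather than replacing them.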

There is a reason retrieval still matters even when context windows reach hundreds of thousands of tokens. Liu et al. (2023) showed that language models drop roughly 30% in accuracy when the relevant passage sits in the middle of a long context rather than at the edges — the so-called “lost in the middle” effect. Stuffing the whole corpus into the window does not save you. You still need to put the right thing in the right place.
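One common way to act on "the right thing in the right place" is to reorder ranked passages so the strongest ones sit at the edges of the context. The sketch below is an assumption about how that could be done, not a method taken from Liu et al.; `order_for_edges` is a hypothetical helper and expects its input sorted best-first.

```python
def order_for_edges(ranked: list[str]) -> list[str]:
    """Place the best-ranked passages at the start and end of the context.

    Items are dealt alternately to the front and the back, so the weakest
    passages end up in the middle, where models attend to them least.
    """
    front: list[str] = []
    back: list[str] = []
    for i, passage in enumerate(ranked):
        (front if i % 2 == 0 else back).append(passage)
    return front + back[::-1]

# p1 is the most relevant passage, p5 the least.
ordered = order_for_edges(["p1", "p2", "p3", "p4", "p5"])
```

With five passages, the two best land first and last and the weakest lands in the centre.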

Rung 2: agent primitives. The interleaved Thought → Action → Observation loop introduced by Yao et al. (2022) is the substrate every retrieval-augmented agent inherits. ReAct lets a model reason in natural language, emit a tool call, observe the result, and re-plan. The Anthropic engineering team catalogued the six composable patterns these agents actually use in production (prompt chaining, routing, parallelisation, orchestrator-workers, evaluator-optimizer, and the fully autonomous agent) in their December 2024 “Building Effective AI Agents” essay. The workflow orchestration layer is what threads these patterns together. Without it, the loop has no place to live.
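The Thought → Action → Observation loop can be sketched in a few lines. This is an illustration under stated assumptions: `fake_model` is a hard-coded stand-in for an LLM, the `search` tool returns a canned string, and `max_steps` is a hypothetical step budget.

```python
from typing import Callable

def fake_model(history: list[str]) -> dict:
    """Stand-in for an LLM: search once, then answer from the observation."""
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return {"thought": "I need evidence.", "action": "search",
                "input": "capital of France"}
    return {"thought": "I have enough.", "action": "finish",
            "input": observations[-1].split(": ", 1)[1]}

# Hypothetical tool registry; a real agent would call a retriever here.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda q: "Paris is the capital of France.",
}

def react(question: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Run Thought -> Action -> Observation until the model finishes."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = fake_model(history)
        history.append(f"Thought: {step['thought']}")
        if step["action"] == "finish":
            return step["input"], history
        history.append(f"Action: {step['action']}[{step['input']}]")
        observation = TOOLS[step["action"]](step["input"])
        history.append(f"Observation: {observation}")
    return "step budget exhausted", history

answer, trace = react("What is the capital of France?")
```

The step budget is the only thing standing between a confused model and an unbounded loop, which is why it appears even in a toy version.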

The tool layer matters as much as the reasoning layer. Many production retrieval-augmented agents now extend beyond retrieval into code execution agents that can run a Python snippet against the retrieved chunks, parse a PDF, or hit a calculator before returning. The four-part Weaviate reference model captures this cleanly: an LLM, plus memory (short-term and long-term), plus planning (reflection, self-critique, query routing), plus tools. Each component is a place where the system can succeed or fail independently.

Rung 3: the bridge papers. Two architectures connect classical RAG to the full agentic loop, and missing either of them is the single most common reason developers misunderstand what Agentic RAG actually adds. Self-RAG (Asai et al. 2023) fine-tunes the generator to emit reflection tokens that decide whether to retrieve and whether the generated answer is grounded in the retrieved passages. Corrective RAG, or CRAG (Yan et al. 2024), inserts a lightweight retrieval evaluator between retrieval and generation; that evaluator scores the retrieved documents and routes the trajectory into one of three corrective actions: use the (refined) documents when they are judged correct, fall back to a web search when they are judged incorrect, or combine both when the verdict is ambiguous.
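A hedged sketch of CRAG-style three-way routing. The keyword-overlap scorer, the `upper` and `lower` thresholds, and the verdict labels are assumptions chosen for illustration; the paper's evaluator is a trained model, not a term counter.

```python
def keyword_overlap(query: str, doc: str) -> float:
    """Toy relevance score in [0, 1]: share of query terms found in the doc."""
    q_terms = set(query.lower().split())
    d_terms = set(doc.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0

def route(query: str, docs: list[str],
          upper: float = 0.6, lower: float = 0.2) -> str:
    """Map the best document score to one of three verdicts."""
    best = max((keyword_overlap(query, d) for d in docs), default=0.0)
    if best >= upper:
        return "correct"    # at least one document is good: keep retrieval
    if best <= lower:
        return "incorrect"  # every document is poor: fall back to web search
    return "ambiguous"      # in between: combine retrieval with web results

verdict_hit = route("reranker latency cost", ["a reranker adds latency and cost"])
verdict_mid = route("reranker latency cost", ["reranker basics"])
verdict_miss = route("reranker latency cost", ["bananas are yellow"])
```

The two thresholds are the calibration surface: move them and the same documents produce a different trajectory.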

Neither Self-RAG nor CRAG is a multi-agent system. They are the moment retrieval grading became a decision the model — or a small evaluator alongside it — could make on its own. Once you accept that grading is a runtime decision rather than a config flag, the LangGraph framing snaps into place: as the LangChain documentation puts it, “an LLM makes a decision about whether to retrieve context from a vectorstore or respond to the user directly,” and the canonical reference graph (generate_query_or_respond → retriever_tool → grade_documents → rewrite_question → generate_answer) is just that decision wired into a cycle.
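The node sequence above can be walked in plain Python without any framework. This sketch borrows only the node names from the reference graph; the exact-key lookup, the lowercase "rewrite", and the `max_rewrites` budget are stubs and assumptions, and the control flow is simplified relative to the LangGraph tutorial.

```python
def run_cycle(question: str, index: dict[str, str],
              max_rewrites: int = 2) -> dict:
    """Walk the retrieve -> grade -> rewrite cycle with stubbed node logic."""
    state = {"question": question, "docs": [],
             "path": ["generate_query_or_respond"]}
    for attempt in range(max_rewrites + 1):
        # retriever_tool: exact-key lookup stands in for vector search.
        state["path"].append("retriever_tool")
        hit = index.get(state["question"])
        state["docs"] = [hit] if hit else []
        # grade_documents: here "relevant" simply means "something came back".
        state["path"].append("grade_documents")
        if state["docs"]:
            break
        if attempt < max_rewrites:
            # rewrite_question: a real node would ask the LLM to rephrase.
            state["path"].append("rewrite_question")
            state["question"] = state["question"].lower().rstrip("?")
    state["path"].append("generate_answer")
    state["answer"] = (state["docs"][0] if state["docs"]
                       else "I could not find supporting documents.")
    return state

INDEX = {"what is crag": "CRAG adds a retrieval evaluator."}  # hypothetical
result = run_cycle("What is CRAG?", INDEX)
```

The first lookup misses, the rewrite normalises the question, and the second lookup succeeds, so the recorded path contains exactly one trip around the cycle.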

Why the order is non-negotiable

The rungs are not interchangeable. An engineer who jumps from “I built a chatbot over our PDFs” straight to a multi-agent orchestrator will inherit every failure mode of rung one (bad chunking, lost-in-the-middle, miscalibrated similarity) and multiply them by every failure mode of rung two (loop divergence, infinite tool calls, planning collapse). The bridge papers exist precisely because the community discovered, around 2023–2024, that you cannot reason your way out of bad retrieval. If the documents are wrong, more thinking produces a more confident wrong answer.

Agentic RAG is not naive RAG with a router on top. It is a regime where retrieval, grading, and generation are three independent failure surfaces the agent is asked to coordinate.

The Four Physics of Stacked Failures

The technical limits of retrieval-augmented agents are not bugs that a better vendor will patch. They are architectural ceilings imposed by the fact that you are now chaining several stochastic components, each with its own latency, cost, and error distribution. The Redis production write-ups frame these as five operational pressures — latency, cost, reliability, complexity, and observability overhead — but four of them collapse into a small set of compounding mathematical facts.

What are the technical limitations of retrieval-augmented agents in production?

Four physics, each with its own shape.

Latency stacks linearly. A naive RAG call is roughly two model invocations: an embedding pass and a generation pass. A retrieval-augmented agent typically performs a planning call, one or more retrieve-then-grade cycles, an optional rewrite, and a final synthesis. Each step is itself a network round-trip to a model endpoint. The user-facing latency is the sum of every step in the trajectory, plus retrieval, plus reranking. There is no architectural trick that hides this — only caching of intermediate results, which itself adds complexity to invalidate correctly.
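To make the summation concrete, here is a small latency budget. Every millisecond figure is a hypothetical placeholder rather than a measurement; only the structure (one planning call, repeated retrieve-then-grade cycles, a rewrite between cycles, one synthesis call) follows the description above.

```python
# Hypothetical per-call latencies in milliseconds; placeholders, not measurements.
EMBED_MS, SEARCH_MS, RERANK_MS = 50, 30, 120
PLAN_MS, GRADE_MS, REWRITE_MS, GENERATE_MS = 700, 400, 500, 900

def naive_rag_latency_ms() -> int:
    """Embedding pass + vector search + one generation pass."""
    return EMBED_MS + SEARCH_MS + GENERATE_MS

def agent_latency_ms(cycles: int) -> int:
    """One planning call, `cycles` retrieve-then-grade passes, a rewrite
    before every pass after the first, and a final synthesis call."""
    if cycles < 1:
        raise ValueError("a trajectory needs at least one retrieval cycle")
    per_cycle = EMBED_MS + SEARCH_MS + RERANK_MS + GRADE_MS
    return (PLAN_MS + cycles * per_cycle
            + (cycles - 1) * REWRITE_MS + GENERATE_MS)

naive = naive_rag_latency_ms()
one_cycle = agent_latency_ms(1)
three_cycles = agent_latency_ms(3)
```

Under these assumed numbers a single-cycle agent already costs more than twice the naive call, and each extra cycle adds a fixed increment on top.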

Cost climbs several-fold per layer, and by one to two orders of magnitude end to end. The Lushbinary RAG Production Guide offers a useful rule of thumb (not a measured benchmark): naive RAG runs around $0.001 per query, hybrid retrieval with reranking around $0.005 per query, and a full retrieval-augmented agent lands somewhere between $0.02 and $0.10 per query depending on trajectory length. Treat these numbers as orders of magnitude, not price quotes. The shape matters more than the digits. Every additional grading step, every rewrite, every tool call adds a model invocation that the user is implicitly paying for.
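Scaling the rule-of-thumb figures to a monthly volume shows why the shape matters. The per-query costs below are the ones quoted above; the traffic of 10,000 queries per day and the 30-day month are assumptions for illustration.

```python
# (low, high) cost per query in USD, taken from the rule of thumb above.
COST_PER_QUERY_USD = {
    "naive_rag": (0.001, 0.001),
    "hybrid_rerank": (0.005, 0.005),
    "agentic": (0.02, 0.10),
}

def monthly_cost(tier: str, queries_per_day: int,
                 days: int = 30) -> tuple[float, float]:
    """Return the (low, high) monthly spend in USD for a given tier."""
    low, high = COST_PER_QUERY_USD[tier]
    total_queries = queries_per_day * days
    return round(low * total_queries, 2), round(high * total_queries, 2)

naive_month = monthly_cost("naive_rag", 10_000)
hybrid_month = monthly_cost("hybrid_rerank", 10_000)
agentic_month = monthly_cost("agentic", 10_000)
```

At that assumed volume the same workload moves from hundreds of dollars a month to thousands or tens of thousands, purely from the number of model invocations per query.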

Reliability multiplies; it does not add. This is the failure mode most teams underestimate. Suppose retrieval succeeds 95% of the time, reranking succeeds 95% of the time, and generation succeeds 95% of the time, and that the stages fail independently. The end-to-end success rate is not 95%: it is 0.95 × 0.95 × 0.95 ≈ 0.86. Roughly one in seven trajectories fails through no single component’s fault, and a fourth 95% stage pushes that to nearly one in five (0.95⁴ ≈ 0.81). The same arithmetic applies at every additional agent step: the system’s reliability follows 0.95ⁿ, not 0.95. This is illustrative arithmetic, not an empirical measurement (per the Lushbinary guide), but the shape is real. Adding a corrective step does not improve reliability unless the evaluator that triggers the correction is itself more accurate than the components it is correcting.
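The compounding is easy to tabulate. This sketch assumes every stage has the same success probability and that stages fail independently, which is the simplification the illustrative arithmetic relies on; `end_to_end_success` is a hypothetical helper name.

```python
def end_to_end_success(per_stage: float, stages: int) -> float:
    """Probability that every one of `stages` independent stages succeeds."""
    if not 0.0 <= per_stage <= 1.0:
        raise ValueError("per_stage must be a probability")
    return per_stage ** stages

# Tabulate 0.95^n for a few trajectory lengths.
table = {n: round(end_to_end_success(0.95, n), 3) for n in (1, 3, 4, 6, 10)}
for n, p in table.items():
    print(f"{n:>2} stages -> {p:.3f}")
```

Reading the table row by row is a quick way to decide how many steps a trajectory can afford before the end-to-end rate drops below what the product can tolerate.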

Evaluator fragility cascades errors rather than fixing them. Every corrective architecture — Self-RAG, CRAG, the grader node in a LangGraph cycle — depends on a critic model that decides whether to retrieve again, rewrite the query, or stop and answer. The Meilisearch production write-up makes the uncomfortable point that the same grader making the “retrieve again” call also makes the “stop and answer” call, so its calibration shapes the entire trajectory. A miscalibrated evaluator does not correct errors; it amplifies them. If the critic systematically over-trusts retrieved documents, the loop terminates early with a confident wrong answer. If it under-trusts them, the agent enters an infinite refinement spiral until a step budget cuts it off.
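A toy probability model shows when a grader helps and when it hurts. The model is an assumption made for illustration: a stage succeeds with probability `p`, the grader flags a bad result with probability `detect` and wrongly flags a good result with probability `false_alarm`, and a flagged result is retried once with the retry accepted unconditionally.

```python
def success_with_one_retry(p: float, detect: float, false_alarm: float) -> float:
    """End success rate of a stage wrapped in a single graded retry.

    p:           probability the stage output is good
    detect:      P(grader flags | output is bad)
    false_alarm: P(grader flags | output is good)
    """
    kept_good = p * (1 - false_alarm)                 # good and left alone
    flagged = p * false_alarm + (1 - p) * detect      # anything sent to retry
    return kept_good + flagged * p                    # retry succeeds with p

baseline = 0.8
calibrated = success_with_one_retry(baseline, detect=0.9, false_alarm=0.05)
miscalibrated = success_with_one_retry(baseline, detect=0.2, false_alarm=0.6)
```

Algebraically this model reduces to p + p(1 − p)(detect − false_alarm), so under these assumptions the corrective step only helps when the grader catches bad outputs more often than it rejects good ones.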

Where the limits hit hardest

Async, long-horizon tasks survive these physics better than synchronous chat does. The NVIDIA Developer Blog draws the distinction cleanly: classical RAG is a linear “query → retrieve → generate” pipeline that is fast, cheap, and static — exactly what you want behind a typing indicator. Retrieval-augmented agents that “query, refine, use RAG as a tool, manage context over time” are suited to research, summarisation, and code correction — tasks where the user has already accepted that the answer will take time. Forcing an agentic loop into a real-time chat surface is where the latency and cost ceilings become product problems.

Not a critique of agentic RAG. A statement about which workloads it earns its complexity on.

Security & compatibility notes:

- LangChain Core serialization injection (CVE-2025-68664, “LangGrinch”): Critical vulnerability allowing secret extraction via crafted serialized objects. Affects every retrieval-augmented-agent stack built on LangChain. Patched in 1.2.5 / 0.3.81 with breaking changes: `load()`/`loads()` now enforce an allowlist, default `secrets_from_env=False`, and block Jinja2.

- LangChain Core path traversal (CVE-2026-34070): High-severity issue in legacy `load_prompt()`/`load_prompt_from_config()`; both are deprecated and scheduled for removal in 2.0.0.

- LangGraph 2.0 (Feb 2026): Breaking change to `StateGraph` initialization. Pre-2.0 tutorials are still indexed in search results, so verify the version before copying graph definitions. Migration: `langchain migrate langgraph`.

- LangGraph prebuilt deprecated: `langgraph.prebuilt` functionality has moved to `langchain.agents`.

- LlamaIndex Python 3.9 dropped and `llama-index-workflows` 2.0 breaking (2026-03-16): Query Pipelines are deprecated in favour of event-driven Workflows.
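Checking an installed version against the patched releases can be scripted with the standard library. The thresholds below are copied from the serialization note above and are not independently verified; `is_patched` and `installed_version` are hypothetical helpers, and the parser assumes plain `X.Y.Z` version strings without pre-release suffixes.

```python
from importlib import metadata
from typing import Optional

# Patched releases per release line, as quoted in the note above.
PATCHED_MINIMUMS = [(0, 3, 81), (1, 2, 5)]

def parse(version: str) -> tuple:
    """Parse 'X.Y.Z' into a tuple of ints; suffixes like 'rc1' are not handled."""
    return tuple(int(part) for part in version.split(".")[:3])

def is_patched(installed: str) -> bool:
    """True if `installed` is at or above the patched release on its major line."""
    ver = parse(installed)
    for minimum in PATCHED_MINIMUMS:
        if ver[0] == minimum[0]:
            return ver >= minimum
    # Majors newer than every listed line are assumed to include the fix.
    return ver[0] > max(m[0] for m in PATCHED_MINIMUMS)

def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None

found = installed_version("langchain-core")
status = "not installed" if found is None else (
    "patched" if is_patched(found) else "needs upgrade")
```

Running a check like this in CI is cheaper than discovering the mismatch from a copied tutorial that targets a different major version.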

What the Physics Predicts

The mechanism turns into active understanding the moment you can predict, in advance, where the system will hurt. The arithmetic of stacked components produces a small number of if-then statements that hold regardless of vendor or framework.

- If you add a corrective step without measuring the calibration of its evaluator, you will degrade the system you were trying to improve.

- If your per-stage reliability is 95% and your trajectory length grows from three steps to six, expect end-to-end success to fall from roughly 86% to roughly 74%, not stay flat.

- If you place a retrieval-augmented agent behind a synchronous chat box, latency will become the product complaint long before answer quality does.

- If your retrieval is wrong, additional reasoning steps will produce a more confident wrong answer, not a corrected one. Reasoning is not a substitute for relevance.

Rule of thumb: add an agent layer only when the workload tolerates seconds of latency, when the per-stage components already perform reliably in isolation, and when the evaluator that grades retrieval has been measured against ground truth — not just trusted.

When it breaks: the dominant failure mode in retrieval-augmented agents is not a crashed component but a confidently wrong trajectory produced by a miscalibrated grader on top of merely-acceptable retrieval. Without per-stage observability, that failure looks like “the model hallucinated” — when in fact the model faithfully reflected a broken chain.

The Data Says

Retrieval-augmented agents are a generalisation of classic RAG, not a replacement for it — and the same arithmetic that makes them powerful (composable steps, runtime decisions, reflection) is what makes them fragile (compounding latency, cost, and 0.95ⁿ reliability). The prerequisite chain runs through naive RAG, the agent primitives of ReAct, and the bridge papers Self-RAG and CRAG before the full agentic loop earns its complexity.

AI-assisted content, human-reviewed. Images AI-generated.