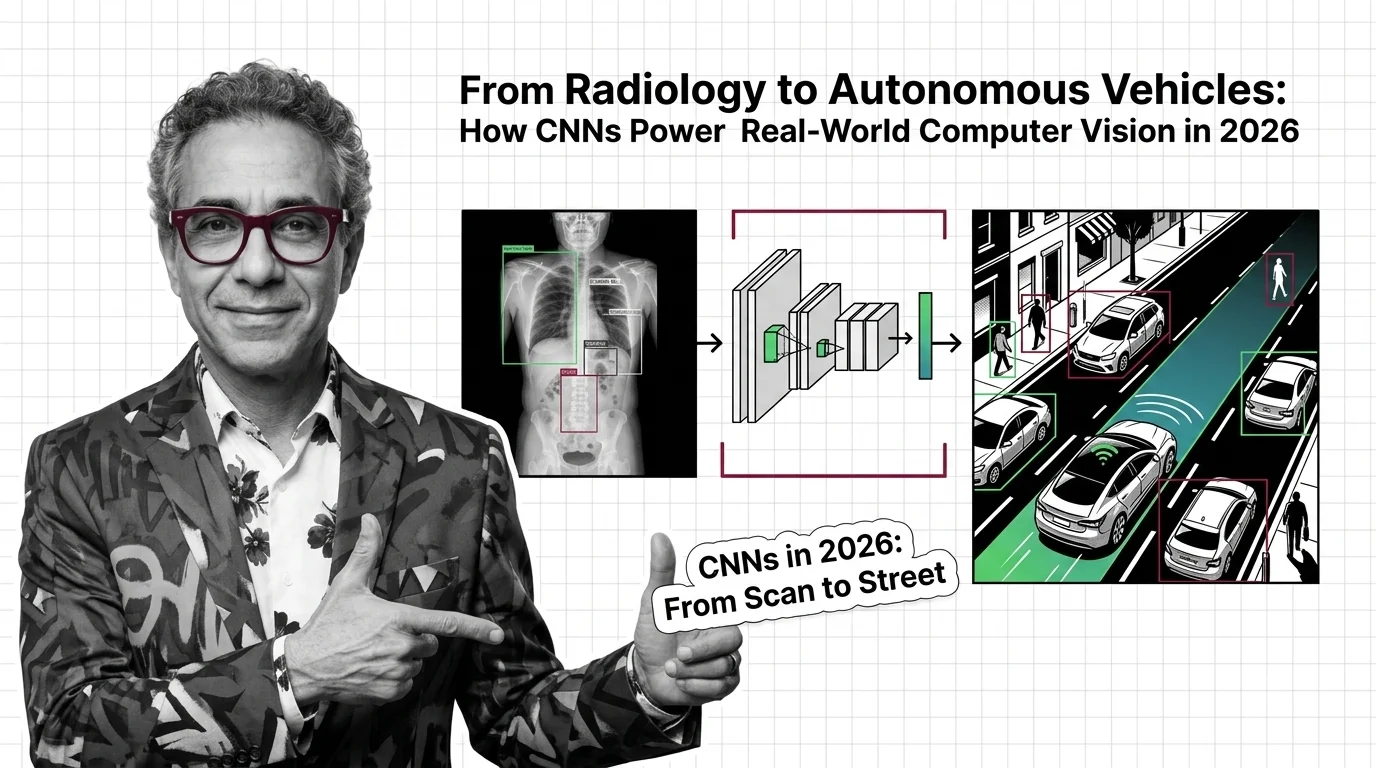

From Radiology to Autonomous Vehicles: How CNNs Power Real-World Computer Vision in 2026

TL;DR

- The shift: CNNs and vision transformers are converging into hybrid architectures that dominate production deployments across medical imaging, autonomous driving, and real-time detection.

- Why it matters: The “CNN vs. transformer” debate is settled — production chose “both,” and teams still running pure architectures are falling behind.

- What’s next: Hybrid CNN-ViT models will define the next wave of FDA-cleared devices and autonomous driving stacks.

The convolutional neural network was supposed to be last decade’s architecture. Vision transformers were the future. Then 2026 happened, and the production numbers told a different story. Radiology AI passed 1,100 FDA authorizations. Waymo crossed half a million paid rides a week. YOLO26 shipped an NMS-free design with faster CPU inference. Every one of these systems runs on convolutional layers: the same neural network basics that power modern LLMs, applied to pixels instead of tokens.

The architecture that was supposed to be replaced became the backbone of the most consequential computer vision deployments on earth.

The Pure-Architecture Era Just Ended

Thesis: Hybrid CNN-transformer models are winning every production race that matters, and the organizations still debating “CNN vs. transformer” are asking the wrong question.

The academic debate spent three years on this. Production teams didn’t wait. They shipped both.

A survey of hybrid CNN-ViT architectures confirms the convergence: CNNs extract local feature-map patterns while transformers handle global dependencies (Yunusa et al.). Neither architecture alone matches the hybrid.
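To make the local-versus-global split concrete, here is a minimal NumPy sketch of a hybrid forward pass. It is an illustration only, not the architecture of any system named in this article: the function names, the 8x8 input, the random kernels, and the single attention head with identity projections are all assumptions chosen to keep the example short.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide a small kernel over the image; each output value sees only a local patch."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def self_attention(tokens):
    """Single-head attention with identity projections: every token attends to every token."""
    d = tokens.shape[1]
    scores = tokens @ tokens.T / np.sqrt(d)
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return weights @ tokens, weights

def hybrid_forward(image, kernels):
    # Stage 1 (CNN): one feature map per kernel, followed by ReLU.
    feature_maps = np.stack(
        [np.maximum(conv2d_valid(image, k), 0.0) for k in kernels], axis=-1
    )
    # Stage 2 (transformer): flatten spatial positions into tokens of length C.
    h, w, c = feature_maps.shape
    tokens = feature_maps.reshape(h * w, c)
    mixed, weights = self_attention(tokens)
    return mixed.reshape(h, w, c), weights

rng = np.random.default_rng(0)
image = rng.random((8, 8))                # hypothetical grayscale input
kernels = rng.standard_normal((4, 3, 3))  # four random 3x3 filters
output, attn = hybrid_forward(image, kernels)
print(output.shape, attn.shape)  # (6, 6, 4) (36, 36)
```

The 36x36 attention matrix is the point: after the convolution has summarized each 3x3 neighborhood, every spatial position can weigh every other position, which a single small kernel cannot do.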

ConvNeXt V2 proved the point from the CNN side — eight model variants, with the Huge variant reaching 88.9% ImageNet top-1 accuracy (Meta Research GitHub). That’s a pure CNN from 2023, still competitive with leading transformer designs, with no successor announced. A three-year-old architecture holding its ground tells you the replacement narrative was always wrong.

But the real signal isn’t benchmark competition. It’s production convergence.

From Tumor Detection to Robotaxis

Medical imaging is the clearest proof. The FDA has authorized 1,451 AI-enabled medical devices since 1995, with 1,104 in radiology — 76% of the total (The Imaging Wire). GE HealthCare leads with 120 authorizations, Siemens Healthineers at 89, Philips at 50.
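The percentages behind those counts are easy to check. The short sketch below recomputes the radiology share from the figures quoted above. Expressing the vendor counts as a share of all 1,451 authorizations is an assumption made for illustration, since the source figures do not say which denominator applies to them.

```python
TOTAL_DEVICES = 1451       # all AI-enabled device authorizations quoted above
RADIOLOGY_DEVICES = 1104   # radiology subset quoted above
VENDOR_COUNTS = {
    "GE HealthCare": 120,
    "Siemens Healthineers": 89,
    "Philips": 50,
}

def share(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole

radiology_share = share(RADIOLOGY_DEVICES, TOTAL_DEVICES)
print(f"Radiology: {radiology_share:.1f}% of all authorizations")

# Assumption: vendor counts are measured against the overall total.
for vendor, count in VENDOR_COUNTS.items():
    print(f"{vendor}: {share(count, TOTAL_DEVICES):.1f}% of all authorizations")
```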

In February 2025, Aidoc received the first FDA clearance for a foundation-model-based AI device: a rib fracture triage system built on CARE1 (The Imaging Wire). That clearance brings foundation models into FDA-cleared clinical radiology.

Cancer detection follows the same trajectory. CNN-TumorNet achieved 99% accuracy on brain MRI classification in a research setting (Frontiers in Oncology). Hybrid models integrating CNNs with improved Swin Transformers are advancing lung cancer CT classification. Note: these are research-dataset results — clinical deployment is a separate hurdle.

Autonomous driving tells a parallel story from a different sensor stack.

Waymo operates across 10 US cities with over 500,000 paid rides per week and roughly 3,000 vehicles, per industry reporting (HumAI Blog). Its EMMA architecture fuses cameras, LiDAR, and radars through an end-to-end multimodal model. CNN layers process raw sensor data. Transformers handle scene-level reasoning.

Tesla took a different path — camera-only, no LiDAR. FSD v12 replaced 300,000 lines of C++ with end-to-end neural networks (FredPope.com). Unsupervised driving launched in Austin in January 2026 with a reported 30-40 vehicles (HumAI Blog). The first Cybercab production unit followed in February 2026.

Two competing philosophies. Both running CNN-based feature extraction at the perception layer.

Who Moves Up

Teams already running hybrid CNN-transformer stacks are aligned with the production trend — no migration needed. Medical device companies with FDA experience own a regulatory moat that deepens with every new clearance cycle.

And the real-time detection community just got another upgrade. YOLO26 dropped in January 2026 with 57.5 mAP on COCO and 43% faster CPU inference, using an NMS-free end-to-end design with MuSGD optimizer (Ultralytics Docs). The toolchain is accelerating, not stalling.
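For readers unfamiliar with what "NMS-free" removes, the sketch below shows classical greedy non-maximum suppression, the post-processing step that conventional detectors run to discard duplicate boxes. This is not YOLO26 code. The function names, the box format `(x1, y1, x2, y2)`, and the 0.5 threshold are assumptions for illustration.

```python
def iou(a, b):
    """Intersection over union for two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Keep the highest-scoring box, drop any box that overlaps a kept box too much."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep

# Two near-duplicate detections of one object, plus one separate object.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = greedy_nms(boxes, scores)
print(kept)  # [0, 2]
```

An end-to-end design aims to have the network emit one box per object directly, so this sorting-and-filtering pass, with its hand-tuned threshold, drops out of the inference path.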

Security & compatibility notes:

- Ultralytics PyPI compromise (Dec 2024): Versions 8.3.41 and 8.3.42 were hit by a supply chain attack injecting a cryptominer. Fixed in 8.3.43+. YOLO26 is unaffected, but pin your dependencies.
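One way to act on that note is a startup check that refuses the two affected releases. The sketch below is an assumption-laden example rather than an official tool: `is_compromised` and `check_installed` are hypothetical helper names, and the only version numbers it knows are the two listed above.

```python
from importlib import metadata

# The two releases named in the note above.
COMPROMISED_ULTRALYTICS = {"8.3.41", "8.3.42"}

def is_compromised(version):
    """Return True if the version string is one of the known-compromised releases."""
    return version.strip() in COMPROMISED_ULTRALYTICS

def check_installed(package="ultralytics"):
    """Report on the installed package without importing it."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package} is not installed"
    if is_compromised(version):
        return f"{package}=={version} is a compromised release; upgrade to 8.3.43 or later"
    return f"{package}=={version} is not one of the known-compromised releases"

print(check_installed())
```

A check like this complements pinning an exact version in your requirements file; it does not replace it.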

Who Gets Left Behind

Pure-transformer maximalists. The bet that vision transformers would make CNNs obsolete is not paying off in production. The hybrid is the equilibrium.

Teams running legacy CNN pipelines without residual connections or current batch normalization practices. Architectures that missed the ConvNeXt-era structural improvements are competing on deprecated baselines.
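As a reminder of what those two building blocks do, here is a minimal NumPy sketch of a residual block with batch normalization. It is a simplification under stated assumptions: a dense layer stands in for a convolution, normalization uses training-mode batch statistics only, and the names `batch_norm` and `residual_block` are illustrative rather than taken from any library.

```python
import numpy as np

def batch_norm(x, gamma, beta, eps=1e-5):
    """Normalize each feature over the batch axis, then scale and shift."""
    mean = x.mean(axis=0, keepdims=True)
    var = x.var(axis=0, keepdims=True)
    return gamma * (x - mean) / np.sqrt(var + eps) + beta

def residual_block(x, weight, gamma, beta):
    """Compute y = x + ReLU(BN(x @ W)); the skip path carries x through unchanged."""
    transformed = np.maximum(batch_norm(x @ weight, gamma, beta), 0.0)
    return x + transformed

rng = np.random.default_rng(0)
x = rng.standard_normal((16, 8))            # batch of 16 samples, 8 features
weight = rng.standard_normal((8, 8)) * 0.1  # hypothetical layer weights
gamma, beta = np.ones(8), np.zeros(8)

y = residual_block(x, weight, gamma, beta)
normalized = batch_norm(x @ weight, gamma, beta)
print(y.shape)  # (16, 8)
```

Because the block adds its transformation to the input instead of replacing it, a layer that learns nothing useful degrades to the identity, which is what lets very deep stacks train at all.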

Autonomous driving startups without a coherent sensor-fusion strategy. Whether you pick Waymo’s multi-sensor approach or Tesla’s camera-only bet, you need an architecture argument. You’re either picking a lane or getting run off the road.

What Happens Next

Base case (most likely): Hybrid CNN-ViT architectures become the default for production computer vision across medical, automotive, and industrial verticals. Signal to watch: Major cloud providers ship hybrid-first vision APIs. Timeline: 12-18 months.

Bull case: Foundation models on hybrid architectures break through FDA clearance for primary diagnosis — not just triage — creating a new category of AI-native medical devices. Signal: A foundation-model device cleared for primary diagnostic use. Timeline: 18-24 months.

Bear case: Regulatory friction slows FDA clearances, and a major autonomous driving incident freezes deployment expansion. Signal: FDA clearance pace drops below 2025 levels; a high-profile robotaxi safety event. Timeline: 6-12 months.

Frequently Asked Questions

Q: How are convolutional neural networks used in medical imaging and cancer detection? A: CNNs drive the majority of FDA-cleared radiology AI — 1,104 authorized devices and counting. Models like CNN-TumorNet achieve high accuracy on brain tumor classification, while hybrid CNN-transformer architectures push lung cancer CT detection forward.

Q: How do self-driving cars use CNNs for real-time object detection and scene understanding? A: Waymo and Tesla both use CNN layers for raw sensor processing at the perception layer. Transformers handle higher-level scene reasoning, but CNNs extract the spatial features that make real-time detection possible.

Q: ConvNeXt and hybrid architectures vs pure vision transformers: where is CNN research heading in 2026? A: Toward convergence. ConvNeXt V2 matches transformer-level accuracy with a pure CNN design. Research surveys confirm hybrid CNN-ViT models outperform either architecture alone. The production trend is hybrid-first.

The Bottom Line

CNNs are not being replaced. They’re being promoted — into the hybrid architectures powering every major production vision system shipping in 2026.

You’re either building on hybrid architectures or you’re building on assumptions.

AI-assisted content, human-reviewed. Images AI-generated.