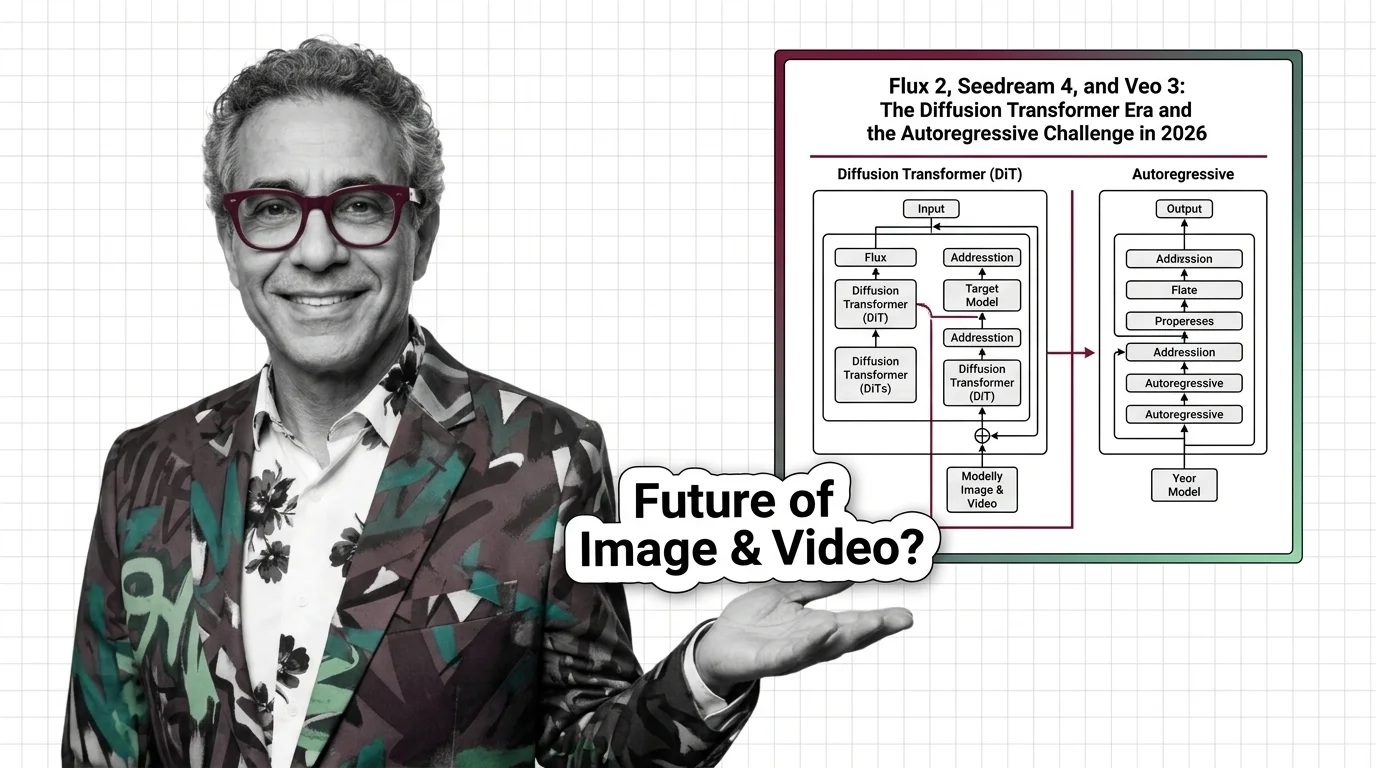

FLUX.2, Seedance, Nano Banana: Diffusion vs. Autoregressive in 2026

TL;DR

- The shift: Image and video generation has locked into two architectures — rectified-flow diffusion transformers on one side, autoregressive token models on the other.

- Why it matters: The architecture you build on decides your cost, your latency, your text-rendering quality, and how tightly your model couples to an LLM’s world knowledge.

- What’s next: The autoregressive camp is closing the quality gap. The diffusion camp owns the high-fidelity ceiling. Both scale. Only one will win each use case.

Two architecture camps just drew their lines. Every serious image and video lab now ships some flavor of Rectified Flow on a Diffusion Transformer backbone. On the other side, OpenAI and Google are treating images like tokens — letting an LLM emit pixels the way it emits words. The war nobody called a war is already being fought in production.

The Architecture Race Just Split in Two

Thesis: The 2026 image and video frontier has settled on two stacks: rectified-flow diffusion transformers on one side, autoregressive token models on the other. Almost every frontier release now lives on one of those two tracks.

Diffusion Models used to mean one thing. A U-Net backbone, a long Noise Schedule, Classifier-Free Guidance for steering, and a DDIM sampler to keep inference tolerable. That stack produced every flagship model from 2022 through 2024.
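To make the steering step concrete, here is a minimal sketch of how classifier-free guidance blends two noise predictions at each sampling step. The function name `cfg_combine`, the sample vectors, and the guidance scale of 7.5 are illustrative assumptions, not taken from any particular model's code.

```python
def cfg_combine(uncond, cond, scale):
    """Classifier-free guidance: push the prediction away from the
    unconditional estimate, toward the prompt-conditioned one."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


# Hypothetical per-element noise predictions from the same network,
# run once without the prompt and once with it.
eps_uncond = [0.10, -0.20, 0.05]
eps_cond = [0.30, -0.10, 0.00]

# scale = 0 ignores the prompt, scale = 1 is plain conditional sampling,
# larger values exaggerate the difference between the two predictions.
guided = cfg_combine(eps_uncond, eps_cond, scale=7.5)
```

The cost is visible in the sketch: guidance needs two network evaluations per step, which is part of why the old stack leaned on fast samplers to keep inference tolerable.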

It is not the frontier stack anymore.

The frontier stack swapped the U-Net for a transformer and collapsed the noise schedule into a straight-line Flow Matching objective. The 2024 rectified flow + MMDiT paper is the blueprint (Esser et al. 2024). Every major diffusion-based image and video release since late 2025 has shipped some variant of it.
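A rough sketch of what "straight-line objective" means in practice: a training example is a point on the line between a data sample and a noise sample, and the regression target is the constant velocity along that line. The convention below (t = 0 is data, t = 1 is noise) and all names are assumptions for illustration; production code works on latent tensors and often samples t non-uniformly.

```python
import random


def rf_training_pair(x0, rng):
    """Build one rectified-flow training example from a data sample x0."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    t = rng.random()  # timestep in [0, 1)
    # Straight-line interpolation between data (t=0) and noise (t=1).
    x_t = [(1.0 - t) * d + t * n for d, n in zip(x0, noise)]
    # Velocity along that line is constant: noise minus data.
    target_v = [n - d for d, n in zip(x0, noise)]
    return t, x_t, target_v


def mse(pred, target):
    """Mean squared error, the regression loss on the predicted velocity."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)


rng = random.Random(0)
x0 = [0.5, -1.0, 2.0]  # stand-in for an image latent
t, x_t, target_v = rf_training_pair(x0, rng)

# A model that predicted the velocity perfectly would have zero loss.
loss_perfect = mse(target_v, target_v)
```

Because the target does not depend on t, there is no separate noise schedule to tune in this formulation; the network only has to learn which direction is "toward the data".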

OpenAI and Google placed the counter-bet. Their next-generation image models are not diffusion — they are autoregressive, generating images token-by-token from a multimodal LLM. Two roads, both live, both in production.
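For contrast, token-by-token generation can be sketched as a loop that samples each image token conditioned on everything emitted so far. The internals of the production models are not described here, so `toy_next_token_probs` is a purely hypothetical stand-in for a multimodal LLM head, and the 4×4 grid and 8-entry codebook are arbitrary.

```python
import random


def toy_next_token_probs(prefix, vocab_size):
    """Hypothetical stand-in for an LLM head: mildly favors repeating
    the previous token, otherwise uniform."""
    weights = [1.0] * vocab_size
    if prefix:
        weights[prefix[-1]] += 3.0
    total = sum(weights)
    return [w / total for w in weights]


def generate_image_tokens(height, width, vocab_size, rng):
    """Sample a grid of discrete image tokens in raster order."""
    tokens = []
    for _ in range(height * width):
        probs = toy_next_token_probs(tokens, vocab_size)
        next_token = rng.choices(range(vocab_size), weights=probs, k=1)[0]
        tokens.append(next_token)
    # Reshape the flat sequence into rows; a real system would pass these
    # codes through a learned decoder to obtain pixels.
    return [tokens[r * width:(r + 1) * width] for r in range(height)]


grid = generate_image_tokens(height=4, width=4, vocab_size=8, rng=random.Random(0))
```

The loop makes the trade-off visible: the number of sequential steps grows with the number of tokens, whereas a diffusion sampler runs a fixed number of steps over the whole image at once.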

The clean, single-architecture era of generative media is over.

Three Labs, One Stack — and Two Defectors

The evidence doesn’t organize by timeline. It organizes by what it proves.

Signal 1 — Diffusion transformers converged across every frontier image lab. Black Forest Labs shipped FLUX.2 on November 25, 2025 — a 32-billion-parameter open-weight model coupling a Mistral-3 24B vision-language encoder to a hybrid MMDiT backbone (BFL Blog). ByteDance’s Seedream 4.5 followed in December 2025 with native 2048×2048 output (WaveSpeedAI). Stable Diffusion 3, FLUX.2, and Seedream all trace back to the same 2024 MMDiT paper.

Signal 2 — Video diffusion is where the Elo race actually lives. On the Artificial Analysis text-to-video leaderboard (April 2026), Alibaba’s HappyHorse-1.0 leads with an Elo of 1,361. ByteDance’s Seedance 2.0 sits at 1,273 with synchronized 15-second audio+video. Google’s Veo 3 family hovers near 1,217 (Artificial Analysis). All three are diffusion transformers. Kling 3.0 from Kuaishou, launched February 5, 2026, uses a DiT paired with a 3D VAE and native multilingual audio (Kuaishou IR). The architecture is not in dispute.
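To put those rating gaps in perspective, the sketch below converts an Elo difference into an expected head-to-head preference rate. It assumes the standard logistic Elo formula with a 400-point scale; the leaderboard's exact methodology may differ, so treat the numbers as a rough reading rather than a reported statistic.

```python
def elo_expected_score(rating_a, rating_b):
    """Expected share of pairwise comparisons won by A over B under the
    standard logistic Elo model (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Ratings quoted in the paragraph above.
happyhorse, seedance, veo = 1361, 1273, 1217

p_vs_seedance = elo_expected_score(happyhorse, seedance)  # about 0.62
p_vs_veo = elo_expected_score(happyhorse, veo)            # about 0.70
```

Under that assumption, an 88-point lead means the top model would be preferred in roughly five of every eight blind comparisons: a clear edge, not a blowout.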

Signal 3 — OpenAI and Google are hedging with autoregressive. Google launched Nano Banana Pro on November 20, 2025 as the Gemini 3 Pro Image model, and followed with Nano Banana 2 on February 26, 2026. Both are autoregressive-style, built directly on Gemini 3 (Google Blog). OpenAI’s “ChatGPT Images,” based on GPT Image 1.5, shipped December 16, 2025 — also autoregressive. Meanwhile Sora 2, OpenAI’s diffusion transformer for video, is being sunset: the app goes dark April 26, 2026; the API follows September 24, 2026 (Sora Wikipedia). The company that popularized diffusion at scale is quietly walking away from it on the image side.

The market didn’t pick one architecture. It picked two, and assigned them different jobs.

Who Owns the New Stack

Three groups are winning this split.

Black Forest Labs and ByteDance own the high-fidelity ceiling. FLUX.2 [pro] sells for roughly $0.03 per megapixel and ships with multi-reference support for up to 10 input images at 4-megapixel output (BFL Blog, VentureBeat). Seedream 4.5 holds the Chinese-market flagship position and anchors ByteDance’s consumer funnel into Dreamina and CapCut. Both are betting the rectified-flow DiT stack holds its quality lead for the next two cycles.
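As a back-of-the-envelope check on that pricing, the sketch below estimates cost from output resolution alone. It assumes a flat $0.03 per output megapixel, as quoted above, and ignores any charges for input or reference images, rounding rules, or volume tiers, all of which a real invoice may include.

```python
PRICE_PER_MEGAPIXEL_USD = 0.03  # figure quoted above; treat as approximate


def estimate_cost(width, height, n_images=1, price_per_mp=PRICE_PER_MEGAPIXEL_USD):
    """Rough cost estimate based on output megapixels only."""
    megapixels = width * height / 1_000_000
    return megapixels * price_per_mp * n_images


cost_one_image = estimate_cost(2048, 2048)             # about $0.126
cost_batch = estimate_cost(2048, 2048, n_images=1000)  # about $126
```

At this rate a thousand 2048×2048 renders cost on the order of a hundred dollars, which is the scale at which per-workload architecture choices start to show up in a budget.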

Google is the only lab playing both sides. Veo 3.1 and the March 31, 2026 release of Veo 3.1 Lite keep Google in the video-diffusion game (Google Blog). Nano Banana Pro and Nano Banana 2 keep it in the autoregressive game. Whichever architecture wins a given workload, Google ships it. That optionality is the most undervalued strategic position in generative media right now.

Hardware vendors are scaling for transformer-shaped inference. The diffusion transformer is architecturally closer to an LLM than the old U-Net was. That means much of the NVIDIA Blackwell optimization work, serving-framework engineering, and quantization research built for text-token inference carries over to generative image and video with limited retooling. The wall between LLM infrastructure and media infrastructure just collapsed.

You’re either building on transformers for media or explaining to your board why you aren’t.

Who’s Running Last Year’s Playbook

The losers share a pattern: they bet on the old architecture and misread the calendar.

OpenAI’s Sora 2. A frontier diffusion transformer for video, launched with enormous fanfare, now mid-sunset. Any pipeline that hardcoded the Sora API is on a deadline. The app shutdown arrives April 26, 2026 (Sora Wikipedia).

Pure U-Net, pure CFG legacy stacks. U-Net is still in production in Stable Diffusion XL and plenty of open-source forks, but it no longer ships at the frontier. Every 2025–2026 diffusion flagship (FLUX.2, Seedream 4.5, Kling 3.0, Sora 2, Veo 3.x) uses a DiT. Teams still tuning U-Net kernels are optimizing the last generation’s bottleneck.

Anyone assuming diffusion can’t be beaten on text rendering and LLM integration. Nano Banana Pro blends up to 14 reference images with five-person consistency and renders readable text inside generated images (Google DeepMind). Historically, readable in-image text has been diffusion’s weakest seam. The autoregressive camp turned that seam into a selling point.

U.S. teams planning around Seedance 2.0. ByteDance received cease-and-desist letters from Disney, Paramount, Warner Bros. Discovery, Netflix, Sony, and the MPA in February 2026 (Variety). As of April 2026, Seedance 2.0 remains excluded from U.S. rollout. If your production plan depended on it, your production plan needs a replacement.

What Happens Next

Base case (most likely): Rectified-flow DiT stays the default for high-fidelity image and video generation. Autoregressive image models win workloads where text rendering, LLM reasoning, and multi-image composition matter more than photorealism. Signal to watch: Whether FLUX.3 and Seedream 5.0 ship on schedule and whether Google moves Veo onto a unified Gemini backbone. Timeline: Through end of 2026.

Bull case: Flow-matching training objectives, combined with distilled few-step samplers, collapse inference latency enough that diffusion transformers match autoregressive speed while keeping the fidelity lead. One architecture absorbs both use cases. Signal: Sub-second 2K generation in open-weight DiT releases. Timeline: Late 2026 to mid-2027.
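The link between straight paths and few-step sampling can be illustrated with a toy Euler integrator. The two velocity fields below are hand-written idealizations, not learned models: one moves along a perfectly straight line, the other reaches the same endpoint along a curved trajectory. All names and values are assumptions for illustration.

```python
def euler_sample(velocity_fn, x_start, num_steps):
    """Integrate dx/dt = v(x, t) from t=1 (noise) down to t=0 (data)."""
    x = list(x_start)
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = 1.0 - i * dt
        v = velocity_fn(x, t)
        x = [xi - dt * vi for xi, vi in zip(x, v)]
    return x


data = [0.5, -1.0, 2.0]    # where the trajectory should end
noise = [1.2, 0.3, -0.7]   # where sampling starts


def straight_velocity(x, t):
    """Ideal rectified flow: constant velocity along a straight line."""
    return [n - d for n, d in zip(noise, data)]


def curved_velocity(x, t):
    """Same endpoints, but speed varies with t, so the path is curved in time."""
    return [2.0 * t * (n - d) for n, d in zip(noise, data)]


def max_error(sample):
    return max(abs(s - d) for s, d in zip(sample, data))


err_straight_1 = max_error(euler_sample(straight_velocity, noise, 1))
err_curved_1 = max_error(euler_sample(curved_velocity, noise, 1))
err_curved_50 = max_error(euler_sample(curved_velocity, noise, 50))
```

In this toy setup a single step lands on the data when the path is straight, while the curved field needs many steps to get close. Learned flows are never perfectly straight, which is why distillation remains part of the latency story.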

Bear case: Autoregressive image models close the fidelity gap fast enough that the diffusion camp loses its one durable advantage. The rectified-flow stack survives only in video, where temporal coherence remains hard for token-by-token generation. Signal: An autoregressive model beating FLUX.2 [pro] in blind image-quality evaluations. Timeline: Possible within twelve months if Google or OpenAI scales aggressively.

Frequently Asked Questions

Q: What are the best diffusion image and video models in 2026? A: For images, FLUX.2 (open-weight, Nov 2025) and Seedream 4.5 (Dec 2025) lead the rectified-flow DiT camp. For video, HappyHorse-1.0 tops the Artificial Analysis leaderboard, with Seedance 2.0 and Veo 3.1 close behind as of April 2026.

Q: How are video diffusion models like Sora, Kling 3, and Seedance 2 changing film and advertising? A: They now ship with synchronized audio and multi-second clip coherence, which collapses pre-viz and ad-iteration cycles. Hollywood pushback against Seedance 2.0 shows the economics have already shifted — studios are defending IP, not debating feasibility.

Q: Will autoregressive models like GPT Image and Nano Banana replace diffusion in 2026? A: Not universally. Autoregressive models are overtaking diffusion where text rendering, LLM reasoning, and multi-image composition matter most. Diffusion transformers still hold the photorealism and video ceiling. Expect a split, not a replacement.

Q: What is the future of diffusion as rectified flow, MMDiT, and flow matching mature? A: Rectified flow and flow matching have already replaced DDPM-style training at the frontier. The next wave is distillation — turning many-step samplers into few-step or single-step generators — to close the latency gap with autoregressive models without sacrificing fidelity.

The Bottom Line

The single-architecture era of generative media just ended. You are either shipping on a rectified-flow diffusion transformer, or you are shipping on an autoregressive image model — and your product strategy has to pick a side per workload, not per year. The decisions made in the next two quarters will set generative media’s default stack for the rest of the decade.

AI-assisted content, human-reviewed. Images AI-generated.