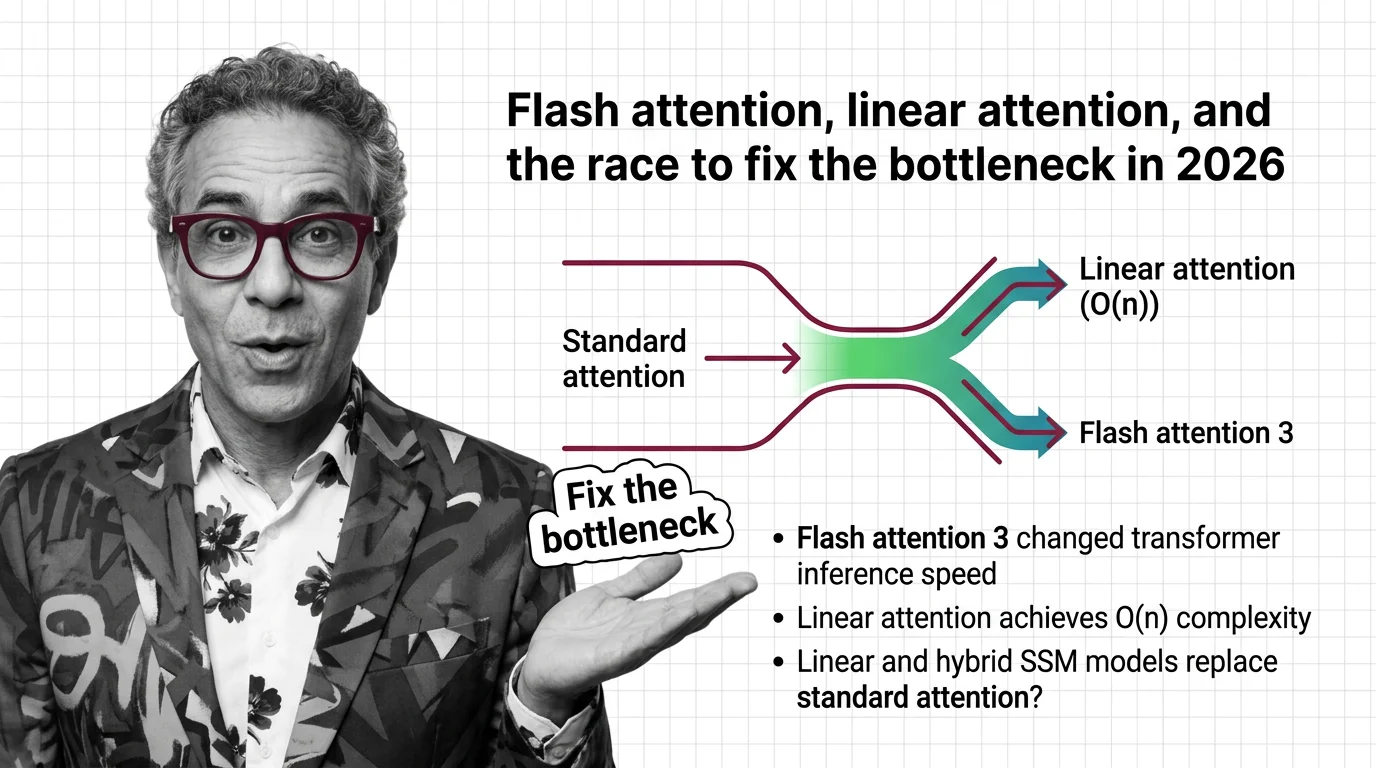

Flash Attention, Linear Attention, and the Race to Fix the Bottleneck in 2026


TL;DR

- What happened: FlashAttention-4 launched for Hopper and Blackwell GPUs while linear attention libraries hit production readiness — two competing solutions to the same quadratic scaling wall.

- Why it matters: Inference cost and context length are now the primary competitive moats, and the fix you choose locks you into a hardware and architecture path.

- What’s next: Hybrid models mixing standard and linear attention are emerging as the pragmatic middle ground, but the winner is far from decided.

What Happened

FlashAttention-4, a CuTeDSL implementation targeting NVIDIA Hopper and Blackwell GPUs, launched as a beta on March 5, 2025 (Dao-AILab GitHub) and reached production integration via cuDNN 9.14 in January 2026 (PyTorch Blog, ASCII News). Meanwhile, the open-source flash-linear-attention library has been steadily expanding the set of architectures it supports (fla-org GitHub).

Two radically different bets on the same problem, arriving at the same time. One makes exact softmax attention faster on existing hardware. The other replaces it with a computation that avoids the quadratic cost entirely.

The backstory matters. FlashAttention-3, published in July 2024 by Tri Dao and collaborators from Together AI, Meta, NVIDIA, and Princeton, pushed H100 utilization to 75% on FP16 — roughly 740 TFLOPs/s (FA3 arXiv Paper). It proved that smarter memory access patterns could extract dramatically more from existing hardware. But FA3 never shipped as a standalone package. The versioning jumped from v2.8.x straight to v4.0.0 beta (Dao-AILab GitHub). FA3 was the research. FA4 is the product.
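To make "smarter memory access patterns" concrete, here is a minimal NumPy sketch of the tiling and online-softmax idea that FlashAttention builds on. It is an illustration under simplifying assumptions, not FA3 or FA4 code: it tiles only over keys, does not model on-chip SRAM, asynchrony, or FP8, and the function names are hypothetical. What it does show is that processing keys block by block, while rescaling running sums, reproduces exact softmax attention without ever materializing the full score matrix.

```python
import numpy as np


def naive_attention(q, k, v):
    """Reference softmax attention that materializes the full (n_q, n_k) score matrix."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


def tiled_attention(q, k, v, block=16):
    """Exact softmax attention computed one key/value block at a time (online softmax)."""
    scale = 1.0 / np.sqrt(q.shape[-1])
    out = np.zeros((q.shape[0], v.shape[-1]))
    row_max = np.full((q.shape[0], 1), -np.inf)  # running max score per query
    row_sum = np.zeros((q.shape[0], 1))          # running softmax denominator
    for start in range(0, k.shape[0], block):
        kb, vb = k[start:start + block], v[start:start + block]
        s = q @ kb.T * scale                                   # scores for this block only
        new_max = np.maximum(row_max, s.max(axis=-1, keepdims=True))
        correction = np.exp(row_max - new_max)                 # rescale earlier partial sums
        p = np.exp(s - new_max)
        row_sum = row_sum * correction + p.sum(axis=-1, keepdims=True)
        out = out * correction + p @ vb
        row_max = new_max
    return out / row_sum


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((64, 32)) for _ in range(3))
print(np.allclose(naive_attention(q, k, v), tiled_attention(q, k, v)))  # True
```

Because the rescaling is exact, the blocked result matches the reference to floating-point precision; the real-hardware speedup comes from keeping each tile in fast on-chip memory, which this sketch does not attempt to model.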

Read This Twice

Thesis: The quadratic bottleneck of the attention mechanism now has two competing escape routes, and organizations that pick the wrong one will pay a compounding migration cost within eighteen months.

FA4 delivers real numbers. On Blackwell GPUs, it reaches 1,605 TFLOPs/s, up to 2.4 times faster than Triton implementations and roughly 1.3 times faster than cuDNN attention kernels (ASCII News). On Hopper in FP8, the FA3 research had already reached close to 1.2 PFLOPs/s, with 2.6 times lower numerical error than baseline FP8 attention (FA3 arXiv Paper).

But FA4 currently supports only the forward pass, in BF16. Backward-pass support is planned but has not shipped (PyTorch Blog). That means training workloads, the most expensive compute on any AI team's budget, still can't use it. Inference gets faster. Training stays expensive.

Meanwhile, linear attention takes a different approach. Instead of computing exact softmax attention over query-key dot products more efficiently through better memory tiling, it restructures the computation itself so that cost grows as O(n) in sequence length rather than O(n²). Models like Stanford's BASED have explored this approach, targeting faster prefill while working to match standard attention on recall benchmarks (Hazy Research Blog).
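The O(n) restructuring can be sketched in a few lines of NumPy. This assumes the elu(x) + 1 feature map from Katharopoulos et al. (2020, "Transformers are RNNs") purely for illustration; BASED uses a different, Taylor-series feature map, and the function names here are hypothetical. Reordering the matrix products lets φ(K)ᵀV be formed once as a small d × d_v summary, and the causal version carries that summary forward as a fixed-size running state instead of a growing KV cache.

```python
import numpy as np


def feature_map(x):
    """elu(x) + 1, a positive feature map (assumed here for illustration)."""
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))


def linear_attention(q, k, v):
    """Non-causal linear attention: O(n * d * d_v) instead of O(n^2 * d)."""
    fq, fk = feature_map(q), feature_map(k)
    kv = fk.T @ v            # (d, d_v) summary, size independent of sequence length
    z = fk.sum(axis=0)       # (d,) normalizer summary
    return (fq @ kv) / (fq @ z)[:, None]


def linear_attention_quadratic(q, k, v):
    """The same function computed the O(n^2) way, for comparison."""
    w = feature_map(q) @ feature_map(k).T    # (n, n) non-negative weights
    return (w @ v) / w.sum(axis=-1, keepdims=True)


def causal_linear_attention(q, k, v):
    """Causal form: a fixed-size running state stands in for the KV cache."""
    fq, fk = feature_map(q), feature_map(k)
    state = np.zeros((fk.shape[1], v.shape[1]))  # running sum of phi(k_j) v_j^T
    norm = np.zeros(fk.shape[1])                 # running sum of phi(k_j)
    out = np.empty((q.shape[0], v.shape[1]))
    for t in range(q.shape[0]):
        state += np.outer(fk[t], v[t])
        norm += fk[t]
        out[t] = fq[t] @ state / (fq[t] @ norm)
    return out


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((128, 16)) for _ in range(3))
print(np.allclose(linear_attention(q, k, v), linear_attention_quadratic(q, k, v)))  # True
```

The two non-causal functions compute the same output; only the order of multiplication differs, which is where the quadratic-to-linear saving comes from.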

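The trade-off is real. FlashAttention computes exact softmax attention and only changes how memory is accessed; linear attention gains speed by approximating that result with a different function. How much recall quality this costs relative to standard attention remains an active research question with no settled consensus.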
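One intuition for the recall gap, shown in a deliberately tiny toy rather than a measurement of any real model: with 16 orthogonal keys and a query that matches exactly one of them, softmax can concentrate nearly all of its weight on the match as the query gets sharper, while an elu(x) + 1 feature map (again an assumption, not BASED's map) spreads weight across every key. The setup and function names are hypothetical.

```python
import numpy as np


def softmax_weights(query, keys):
    """Softmax attention weights of one query over all keys."""
    scores = keys @ query / np.sqrt(query.shape[0])
    e = np.exp(scores - scores.max())
    return e / e.sum()


def linear_weights(query, keys):
    """Linear-attention weights with an assumed elu(x) + 1 feature map."""
    def phi(x):
        return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))
    w = phi(keys) @ phi(query)
    return w / w.sum()


keys = np.eye(16)           # 16 orthogonal keys, one per stored "fact"
target = 3
query = 8.0 * keys[target]  # a query that matches exactly one key

print(softmax_weights(query, keys)[target])  # ~0.33
print(linear_weights(query, keys)[target])   # ~0.08
```

In this setup the linear weight on the matching key works out to (2s + 17) / (17s + 272) for a query scale s, which never exceeds 2/17 (about 0.12) however large s becomes, while the softmax weight approaches 1.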

Security & compatibility notes:

- FlashAttention-4 beta: FA4 v4.0.0 beta introduces breaking API changes with a CuTeDSL backend. New install path: `pip install flash-attn-4`. Existing FA2 code will not work without migration.

The Winners

Teams running inference-heavy workloads on Hopper or Blackwell hardware get an immediate win from FA4. The speedups are concrete, the integration path through PyTorch is clean, and NVIDIA’s hardware roadmap is locked in.

Hybrid architecture builders are the dark horse. AI21's Jamba model, a Transformer-Mamba-MoE hybrid using a 1:7 attention-to-Mamba ratio with 256K context support, proved the concept (AI21 Blog). Microsoft's Phi-4-mini-flash took it further: a 3.8B-parameter hybrid using Mamba, sliding window attention, and a single full-attention layer that delivered 10x higher throughput and 2-3x lower latency compared to its pure-attention counterpart (Microsoft Azure Blog). The hybrid approach sidesteps the binary choice entirely: use full attention where recall matters and linear alternatives where speed matters.
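A back-of-the-envelope sketch of why a 1:7 ratio matters for long contexts: only full-attention layers keep a KV cache that grows with sequence length. The model dimensions below (32 layers, 8 KV heads, head dimension 128, BF16) are hypothetical and are not Jamba's actual configuration; where the attention layer sits inside each block is an arbitrary choice, and the Mamba layers' fixed-size state and any MoE parameters are ignored.

```python
def hybrid_layer_plan(num_layers, attn_every):
    """Hypothetical schedule: one full-attention layer per `attn_every` layers."""
    return ["attention" if i % attn_every == attn_every - 1 else "mamba"
            for i in range(num_layers)]


def kv_cache_bytes(num_attn_layers, seq_len, num_kv_heads, head_dim, bytes_per_elem=2):
    """K and V caches for the attention layers only; SSM state is not counted."""
    return 2 * num_attn_layers * seq_len * num_kv_heads * head_dim * bytes_per_elem


plan = hybrid_layer_plan(32, attn_every=8)   # 1:7 attention-to-Mamba ratio
n_attn = plan.count("attention")             # 4 of 32 layers
ctx = 256 * 1024                             # 256K-token context
full = kv_cache_bytes(32, ctx, num_kv_heads=8, head_dim=128)
hybrid = kv_cache_bytes(n_attn, ctx, num_kv_heads=8, head_dim=128)
print(full / 2**30, hybrid / 2**30)          # 32.0 4.0  (GiB in BF16)
```

Under these assumptions the KV cache shrinks by exactly the ratio of attention layers, 8x, which is much of what makes 256K-token contexts practical on a single accelerator.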

Open-source teams building on flash-linear-attention now have a production-grade library covering a growing catalog of architectures. That’s an ecosystem, not a prototype.

The Losers

Anyone locked into a single attention paradigm without a migration plan. If you built your entire stack around FA2 and assumed the API would stay stable, the FA4 beta just handed you a rewrite.

Organizations running long-context workloads on older GPU generations. FA4 targets Hopper and Blackwell. If your fleet is A100s, the performance ceiling hasn’t moved.

Teams betting purely on linear attention for production deployment. The recall quality gap is narrowing but not closed. Deploying linear-only models for tasks requiring precise retrieval is still a gamble.

What Happens Next

Base case (most likely): Hybrid architectures become the default for new large-scale models by late 2026. Full attention handles precision-critical layers, linear or SSM variants handle the bulk of sequence processing. Signal to watch: A top-five lab releasing a flagship model with a hybrid architecture. Timeline: Q3-Q4 2026.

Bull case: FA4 ships backward pass support and FP8 training, making pure transformer architectures fast enough that linear attention loses its primary selling point. Signal: FA4 backward pass announcement with benchmark numbers matching or exceeding FA3 training throughput. Timeline: Early 2027.

Bear case: Neither approach solves the cost curve for million-token contexts. Inference costs remain prohibitive for most production use cases, and only the largest labs can afford to run long-context models. Signal: Cloud inference pricing for million-token contexts shows no meaningful decline through 2026. Timeline: Ongoing through 2027.

Frequently Asked Questions

Q: How did FlashAttention-3 change transformer inference speed in 2026? A: FA3 itself was a July 2024 research paper, not a shipped product. Its techniques, asynchronous execution that overlaps data movement, matrix multiplication, and softmax, plus FP8 low-precision kernels, pushed FP16 utilization on H100 to 75% and fed directly into FlashAttention-4, which reached production integration in January 2026 through cuDNN 9.14.

Q: What is linear attention and how does it achieve O(n) complexity? A: Linear attention replaces the softmax-normalized dot product with a kernel feature map φ, so each attention weight becomes φ(q)·φ(k). Because matrix multiplication is associative, φ(K)ᵀV can be computed once as a fixed-size summary, so cost scales linearly with sequence length instead of quadratically. The trade-off is reduced recall precision on some retrieval-heavy tasks.
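A rough FLOP count makes the scaling difference concrete. This counts only the two large matrix multiplications per head (2 FLOPs per multiply-add) and ignores softmax, normalization, and memory traffic; the head dimension of 128 is an assumed example, and `feature_dim` is a hypothetical knob standing in for feature maps that expand the dimension.

```python
def softmax_attention_flops(n, d):
    """Q K^T plus weights @ V: two (n x n x d) matmuls."""
    return 2 * (2 * n * n * d)


def linear_attention_flops(n, d, feature_dim=None):
    """phi(K)^T V plus phi(Q) @ (phi(K)^T V): two (n x f x d) matmuls."""
    f = d if feature_dim is None else feature_dim
    return 2 * (2 * n * f * d)


for n in (2_048, 131_072, 1_048_576):
    ratio = softmax_attention_flops(n, 128) / linear_attention_flops(n, 128)
    print(n, ratio)  # ratio is n / 128: 16.0, 1024.0, 8192.0
```

The ratio is simply n divided by the feature dimension, so linear attention only wins once the sequence is longer than that dimension, and a larger expanded feature map pushes the crossover further out.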

Q: Will linear attention and hybrid SSM models replace standard attention by 2027? A: Full replacement is unlikely. Hybrid architectures — mixing standard attention layers with linear or SSM layers — are the more probable outcome. The recall quality gap in pure linear models remains an open research problem as of early 2026.

The Bottom Line

The quadratic bottleneck has two exits now. FlashAttention-4 makes the existing path faster. Linear attention builds a different road entirely. The smart money is on hybrids — and the window to pick your architecture before the ecosystem locks in is closing fast.


AI-assisted content, human-reviewed. Images AI-generated.