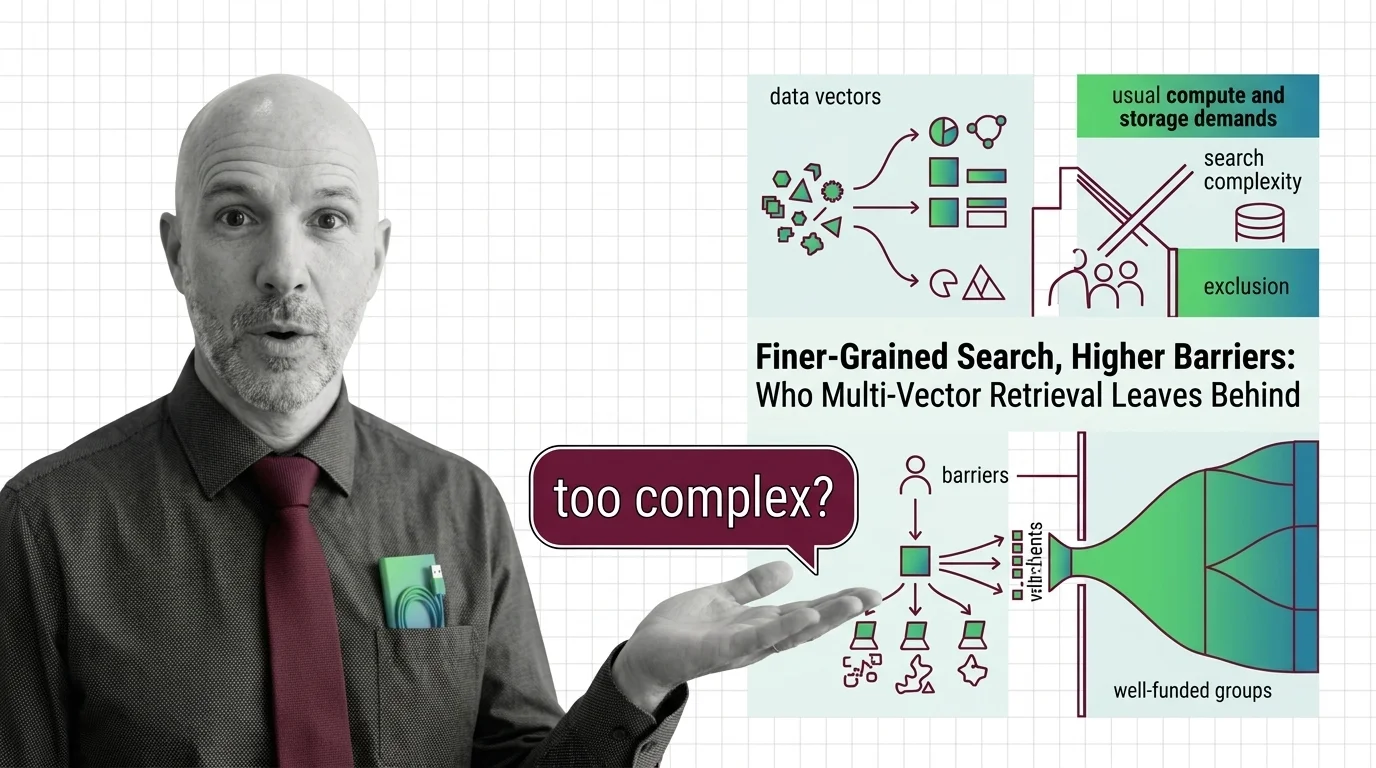

Finer-Grained Search, Higher Barriers: Who Multi-Vector Retrieval Leaves Behind

The Hard Truth

What if the most significant leap in search quality we have achieved this decade is, by its very architecture, designed to serve only those who can already afford the best answers?

Every advance in information retrieval carries the same implicit promise: better answers, for everyone, eventually. Multi-vector retrieval is the latest chapter in that promise, and it delivers something genuinely new: search that preserves the internal structure of meaning by storing many embeddings per document instead of one. The trouble is that “richer” and “heavier” turn out to be the same word when you are the one paying the infrastructure bill.

The Promise Nobody Questions

Traditional similarity search algorithms compress an entire document into a single vector, a point in high-dimensional space that represents the document’s overall meaning. Multi-vector approaches like ColBERT invert this logic. They store one embedding per token, preserving which words modify which, where emphasis falls, and what context shifts a sentence from neutral to ironic (Weaviate Blog). The improvement in retrieval quality is real and measurable. ColPali extends this principle to visual documents, processing up to 1024 image patches per page to handle layouts, tables, and figures that single-vector methods flatten into noise (ColPali Paper).
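To make the contrast concrete, here is a minimal sketch of the two scoring styles in plain Python. The multi-vector function follows the late-interaction idea used by ColBERT-style models: each query token is matched to its best document token, and those best matches are summed. The function names and the toy two-dimensional embeddings are invented for illustration; real systems use learned embeddings with far more dimensions and optimized kernels.

```python
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def single_vector_score(query: Vector, doc: Vector) -> float:
    """One vector per query and per document: a single similarity value."""
    return dot(query, doc)


def maxsim_score(query_tokens: List[Vector], doc_tokens: List[Vector]) -> float:
    """Late interaction: for each query token, keep its best-matching
    document token, then sum those maxima over all query tokens."""
    return sum(max(dot(q, d) for d in doc_tokens) for q in query_tokens)


# Toy embeddings (made up for illustration, not produced by any model).
query_tokens = [[1.0, 0.0], [0.0, 1.0]]
doc_tokens = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]

# The first query token matches best at 0.9, the second at 0.8,
# so the late-interaction score is about 1.7.
score = maxsim_score(query_tokens, doc_tokens)
print(round(score, 3))
```

The cost implication is visible in the loop structure: scoring touches every stored document token, so both storage and compute grow with the number of embeddings kept per document.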

None of this is hype. The granularity captures semantic nuance that coarser methods genuinely miss. And that is precisely what makes the next question uncomfortable — because the technology works, the question of who gets to use it matters more, not less.

The Case That Compression Will Save Us

The conventional defense is compelling and, in its core logic, correct. Compression research has made dramatic progress. ColBERTv2 reduced index sizes from 154 GB to between 16 and 25 GB through residual quantization, a six-to-tenfold reduction (Santhanam et al.). MUVERA, a Google research project, demonstrated a 90% latency reduction and 32x memory compression while actually improving recall (Google Research Blog). Open-source libraries like RAGatouille make ColBERT-based retrieval available to individual developers. ConstBERT proposes fixed-size document vectors to further reduce variable-length storage overhead.
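The "six-to-tenfold" figure can be checked directly from the index sizes quoted above. The short sketch below only does that arithmetic; the helper name is hypothetical, and the inputs are the 154 GB, 25 GB, and 16 GB figures cited in this section.

```python
def compression_ratio(original_gb: float, compressed_gb: float) -> float:
    """How many times smaller the compressed index is than the original."""
    if compressed_gb <= 0:
        raise ValueError("compressed size must be positive")
    return original_gb / compressed_gb


ORIGINAL_GB = 154.0  # uncompressed index size quoted in the text

# The quoted compressed range is 16 to 25 GB.
low_ratio = compression_ratio(ORIGINAL_GB, 25.0)   # larger index, smaller ratio
high_ratio = compression_ratio(ORIGINAL_GB, 16.0)  # smaller index, larger ratio

print(f"reduction between {low_ratio:.1f}x and {high_ratio:.1f}x")
```

Both ends of the range land between six and ten, which is consistent with the claim as stated.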

The argument, stated simply: the cost curve is falling. Give it time. The benefits will diffuse downward. Multi-vector retrieval, the logic goes, should be no different from any prior generation of expensive-then-cheap technology.

The Assumption Hidden Inside Patience

The hidden premise is temporal — it treats the diffusion of technology as automatic rather than conditional. Costs do fall. But they fall unevenly, and the years between “available to well-funded labs” and “available to everyone” are not a neutral waiting room. While compression narrows the gap between single-vector and multi-vector storage, the fundamental architecture still demands more — more embeddings per document, more memory, more specialized hardware. Managed vector database costs scale with embedding count, and running multi-vector systems at scale typically requires GPU infrastructure (SJSU Library).
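A rough back-of-the-envelope sketch shows why the embedding count dominates the bill. Every number below is an assumption chosen for illustration (one million documents, 200 tokens per document, 128 dimensions, 2 bytes per value), and the dimension is held equal for both designs so that only the vector count differs. None of these figures come from a measured deployment.

```python
def index_bytes(num_docs: int, vectors_per_doc: int, dim: int, bytes_per_value: int) -> int:
    """Raw, uncompressed storage for the embeddings alone (no index overhead)."""
    return num_docs * vectors_per_doc * dim * bytes_per_value


# Hypothetical corpus and embedding settings, for illustration only.
NUM_DOCS = 1_000_000
TOKENS_PER_DOC = 200   # assumed average document length in tokens
DIM = 128              # assumed embedding dimension
BYTES_PER_VALUE = 2    # assumed 16-bit floats

single = index_bytes(NUM_DOCS, 1, DIM, BYTES_PER_VALUE)
multi = index_bytes(NUM_DOCS, TOKENS_PER_DOC, DIM, BYTES_PER_VALUE)

print(f"single-vector: {single / 1e9:.3f} GB")
print(f"multi-vector:  {multi / 1e9:.1f} GB")
print(f"ratio: {multi // single}x")
```

Under these assumptions the multiplier is simply the number of vectors kept per document. Compression shrinks the constant in front of that multiplier, but it does not remove the multiplier itself.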

The parallel is instructive. Broadband internet also became cheaper over time. But the communities that waited longest for access — rural regions, lower-income areas, developing nations — did not simply experience a delay. They experienced a compounding disadvantage. The services, platforms, and economic ecosystems built during the broadband era assumed broadband. When connectivity finally arrived, the architecture of opportunity had already been constructed around those who had it first.

The question is not whether multi-vector retrieval will become affordable. The question is what happens during the years when it is not — and whose problems receive better answers first.

When Precision Becomes a Gate

Consider what multi-vector retrieval actually optimizes. It excels at the kind of search that rewards nuance — academic research, legal discovery, medical literature review, complex multi-modal document analysis. These are precisely the domains where search quality carries the highest social stakes. Better legal retrieval produces better legal outcomes. Better medical literature search accelerates clinical insight. Better academic search shortens the distance between a question and its answer.

If the organizations with the infrastructure to run multi-vector indexing are primarily well-resourced institutions (large law firms, funded hospitals, corporate research labs), then the technology does not merely create a performance gap. It creates a knowledge-access gap that mirrors existing inequalities.

A single video processed through multi-vector retrieval can require roughly ten megabytes of index storage; at platform-wide scale, the storage requirements become enormous, reaching into petabyte territory for large video libraries, according to one engineering analysis (DasRoot). The architecture does not discriminate intentionally. But its resource requirements function as a filter — and filters, however neutral in design, always have a distributional consequence.
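The jump from megabytes to petabytes is plain multiplication. The sketch below uses the roughly ten megabytes per video quoted above and decimal units (1 MB = 10^6 bytes, 1 PB = 10^15 bytes). The library sizes are hypothetical examples, not figures from the cited analysis.

```python
MB = 10**6   # decimal megabyte, in bytes
PB = 10**15  # decimal petabyte, in bytes


def library_index_bytes(num_videos: int, mb_per_video: float = 10.0) -> float:
    """Total index storage, assuming a flat per-video cost."""
    return num_videos * mb_per_video * MB


# Hypothetical library sizes, chosen only to show the scaling.
for num_videos in (10_000, 1_000_000, 100_000_000):
    total = library_index_bytes(num_videos)
    print(f"{num_videos:>11,} videos -> {total / PB:.4f} PB")

# How many videos fit in one petabyte at 10 MB each.
videos_per_petabyte = PB // (10 * MB)
print(f"{videos_per_petabyte:,} videos per PB")
```

At ten megabytes per video, one petabyte corresponds to one hundred million videos, which is why the petabyte range only appears at platform scale while smaller collections stay in the gigabyte or terabyte range.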

What Performance Benchmarks Do Not Measure

Multi-vector retrieval, by coupling search quality to infrastructure investment, risks converting a technical advance into an access privilege, not through intention but through the economics of its architecture.

This is not a rejection of the technology. ColBERT, ColPali, and their successors represent genuine scientific progress in how machines model the structure of meaning. The problem is not that these systems exist. The problem is that the discourse around them treats performance benchmarks as sufficient — as though a system that works brilliantly for those who can run it owes nothing to those who cannot. We measure recall, latency, and compression ratios. We do not measure who the improved recall serves.

The Questions We Owe This Field

There are no clean prescriptions here — this is a structural tension, not a software defect. But several directions deserve more attention than they currently receive. Should compression research be evaluated not only by how much it reduces cost, but by whether it reduces cost below the threshold where smaller organizations and public institutions can participate? Should benchmark papers include an infrastructure accessibility section alongside their accuracy tables? Should open-source retrieval wrappers be treated not as convenience layers but as critical infrastructure for equitable access — and funded accordingly?

These are not rhetorical flourishes. They are design decisions with ethical consequences that the field has been comfortable leaving unexamined.

Where This Argument Is Most Fragile

The most honest objection is also the simplest: the cost curve may fall faster than this essay assumes. If approaches like MUVERA reduce multi-vector retrieval to single-vector costs within a few years, the access gap narrows before it compounds. The second vulnerability is empirical — no peer-reviewed study currently quantifies the actual cost differential between organizations of different sizes running multi-vector systems. The structural argument is sound, but the magnitude of the problem remains an open question.

If cost parity arrives through normal research progress, this essay becomes a historical footnote about a temporary friction. That would be the best possible outcome.

The Question That Remains

Multi-vector retrieval makes search genuinely better — richer, more faithful to the structure of meaning, more capable of handling the complexity of real documents. The question it leaves unanswered is whether “better for those who can afford it” is a form of progress or a form of sorting. And whether the distance between those two depends entirely on how long we wait before asking.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.