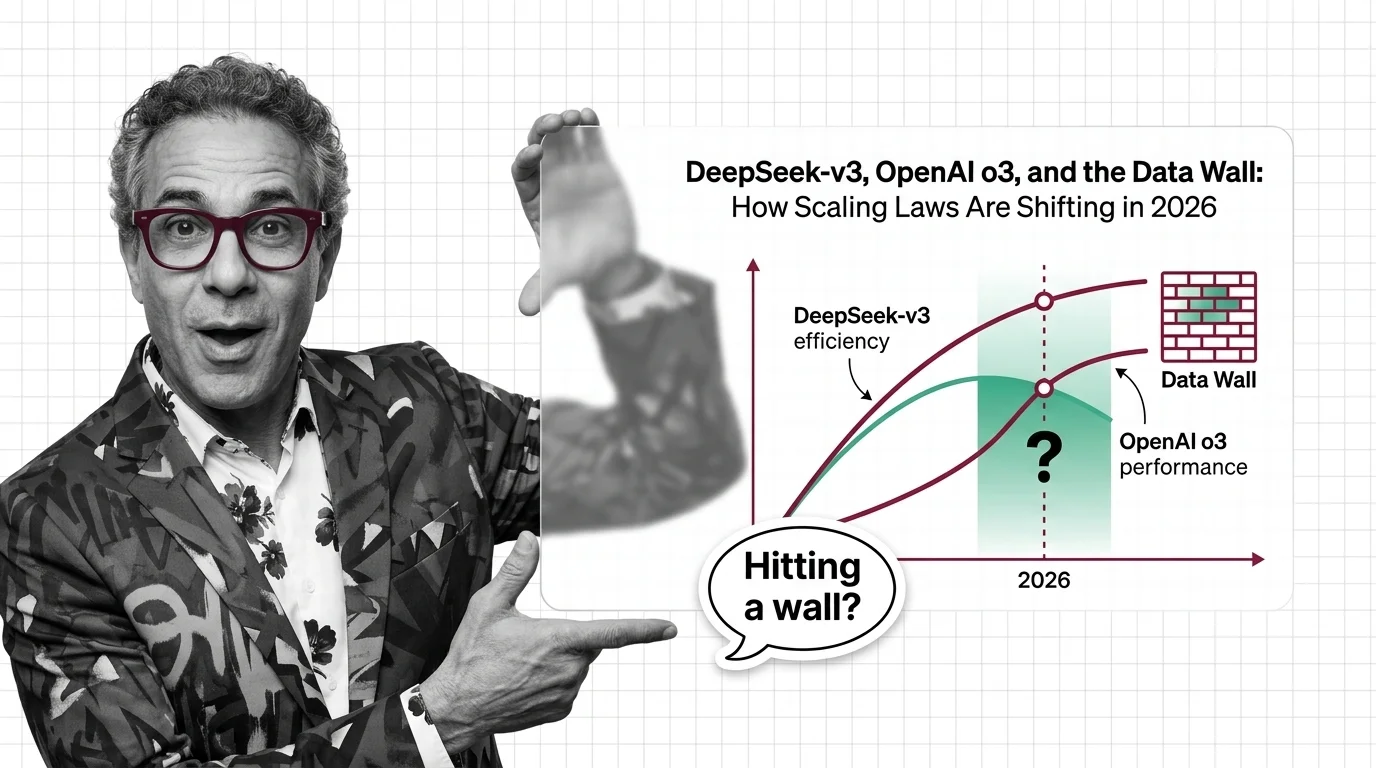

DeepSeek-V3, OpenAI o3, and the Data Wall: How Scaling Laws Are Shifting in 2026

TL;DR

- The shift: Scaling laws fractured into three competing dimensions — training efficiency, inference-time compute, and post-training optimization — ending the era of one-formula scaling.

- Why it matters: The “more data, bigger model” playbook no longer defines the frontier. Teams choosing the wrong scaling dimension burn capital for diminishing returns.

- What’s next: Hybrid scaling becomes the default architecture strategy by end of 2026 as the data wall forces every lab to diversify.

For three years, the playbook was simple. More parameters. More data. Better model. That playbook just expired, not because it stopped working, but because three different teams proved there are faster roads to the same destination. The race is no longer about who spends the most on pre-training. It’s about who scales the right dimension.

The Scaling Playbook Just Split in Three

Thesis: Scaling laws haven’t hit a wall. They’ve fragmented into competing paradigms, and the winners will be those who scale the right axis.

The original Chinchilla scaling insight was straightforward: for a given compute budget, there’s an optimal ratio of model size to training data, roughly 20 tokens per parameter (Hoffmann et al.). The power-law relationship between compute and training loss seemed settled. Industry treated it as the operating manual for frontier AI.
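To make the 20:1 rule concrete, here is a minimal sketch of how a fixed compute budget splits between parameters and tokens. It assumes the common approximation that training cost is about 6 FLOPs per parameter per token (C ≈ 6·N·D); that constant, the function name, and the example budget are illustrative assumptions, not figures from the article.

```python
import math

TOKENS_PER_PARAM = 20.0       # rule of thumb quoted above (Hoffmann et al.)
FLOPS_PER_PARAM_TOKEN = 6.0   # assumption: the common C ~ 6 * N * D approximation


def compute_optimal_split(compute_flops: float) -> tuple[float, float]:
    """Return (params, tokens) that spend the budget at 20 tokens per parameter."""
    # C = 6 * N * D and D = 20 * N  =>  N = sqrt(C / (6 * 20))
    params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens


budget = 1e24  # hypothetical training budget in FLOPs
n_params, n_tokens = compute_optimal_split(budget)
print(f"params ~ {n_params:.3e}, tokens ~ {n_tokens:.3e}")
```

Under these assumptions, both parameters and tokens grow with the square root of compute, which is why the old playbook scaled model size and data together.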

Then two things happened simultaneously.

DeepSeek proved you could match frontier performance at a fraction of the compute. OpenAI proved you could boost capability by spending compute at inference time instead of training time. Both were right. Neither invalidated the original laws. They demonstrated that the curve has more axes than anyone was optimizing for.

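The third shift is quieter but equally significant. Post-training methods such as RLHF (reinforcement learning from human feedback), GRPO (Group Relative Policy Optimization), and RLVR (reinforcement learning with verifiable rewards) are now the primary capability drivers. Pre-training provides the foundation. Post-training and inference-time scaling deliver the performance leap.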

That’s a paradigm fracture.
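For readers unfamiliar with GRPO, the core idea can be sketched in a few lines: sample several answers to the same prompt, score each one, and rank every answer against its own group instead of against a learned value model. The snippet below is a simplified illustration of that group-relative advantage step only. The reward values are hypothetical, the use of the population standard deviation is an assumption (implementations differ), and nothing here reproduces any lab’s actual training code.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against the mean and spread of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; some implementations use sample std
    # eps keeps the division finite when every answer in the group scores the same
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical group of four sampled answers scored by a verifiable check (1 = pass, 0 = fail)
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Answers that beat their group average get a positive advantage and are reinforced. Answers below it are pushed down. When the reward comes from an automatic check such as a unit test or a math answer key, this is the setting usually described as RLVR.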

Three Bets, One Direction

DeepSeek-V3 shipped in December 2024 with 671 billion parameters but only 37 billion active at any time — a Mixture-of-Experts architecture processing tokens at roughly 250 GFLOPs each, compared to Llama 3.1 405B at 2,448 GFLOPs (Weights & Biases).

Roughly ten times fewer FLOPs per token. Same league of capability.
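A quick back-of-envelope check on those numbers, assuming the rule of thumb of about 6 training FLOPs per active parameter per token. That rule is an assumption brought in for illustration, and it ignores attention cost, which may be why it lands slightly below the quoted figures.

```python
def approx_train_gflops_per_token(active_params_billion: float) -> float:
    """Estimate training GFLOPs per token from active parameters (in billions)."""
    # assumption: ~6 FLOPs per active parameter per token (forward plus backward pass)
    return 6.0 * active_params_billion


deepseek_est = approx_train_gflops_per_token(37)   # only the active experts count
llama_est = approx_train_gflops_per_token(405)     # dense model: every parameter is active

reported_ratio = 2448 / 250   # GFLOPs per token figures quoted above
active_fraction = 37 / 671    # share of DeepSeek-V3 parameters used per token

print(f"estimated GFLOPs/token: DeepSeek-V3 ~ {deepseek_est:.0f}, Llama 3.1 405B ~ {llama_est:.0f}")
print(f"reported ratio ~ {reported_ratio:.1f}x, active fraction ~ {active_fraction:.1%}")
```

The point of the sketch is that per-token cost tracks active parameters, not total parameters, so a sparse model can carry far more total capacity than it pays for on each token.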

The headline training cost of $5.6 million made waves — but that figure covers the final training run only. Research, ablations, and the hardware infrastructure behind 2,048 H800 GPUs push the real investment far higher (Interconnects). The signal isn’t the dollar amount. It’s the compute-per-capability ratio.

The successors proved it wasn’t a fluke. V3.1 added hybrid reasoning in 2025. V3.2 shipped in December 2025 with GRPO refinements and sparse attention, reaching GPT-5-level benchmark scores. Efficiency compounds.

OpenAI pushed in the opposite direction.

o3 scored 87.7% on GPQA Diamond, 71.7% on SWE-bench Verified, and reached 2727 Elo on Codeforces. But the ARC-AGI results tell the real story: 75.7% at roughly $26 per task on high-efficiency settings, jumping to 87.5% at roughly $4,560 per task, a 172x compute gap between configurations (ARC Prize). For context, humans solve the same tasks at around $5 each. The capability is real. The economics are not there yet.
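The economics are easier to see as arithmetic. The sketch below uses only the rounded per-task figures quoted above, so the dollar ratio it produces is approximate and will not exactly match the 172x compute multiple.

```python
# Rounded figures quoted above; variable names are illustrative
high_efficiency = {"score": 75.7, "usd_per_task": 26.0}
low_efficiency = {"score": 87.5, "usd_per_task": 4560.0}
human_usd_per_task = 5.0

cost_ratio = low_efficiency["usd_per_task"] / high_efficiency["usd_per_task"]
score_gain = low_efficiency["score"] - high_efficiency["score"]
extra_usd_per_point = (
    low_efficiency["usd_per_task"] - high_efficiency["usd_per_task"]
) / score_gain
vs_human = low_efficiency["usd_per_task"] / human_usd_per_task

print(f"cost ratio between settings ~ {cost_ratio:.0f}x")
print(f"score gain: {score_gain:.1f} points")
print(f"extra cost per added point ~ ${extra_usd_per_point:.0f} per task")
print(f"top setting vs. human cost ~ {vs_human:.0f}x")
```

On these figures, the last dozen points of accuracy cost hundreds of dollars per task for each point gained.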

As François Chollet put it: “You couldn’t throw more compute at GPT-4 and get these results.” o3 didn’t just scale better. It scaled differently, producing what appeared to be emergent abilities through inference-time search rather than parameter expansion.
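Why would more inference compute help at all? A deliberately simplified model: if each sampled attempt solves a task with some fixed probability, and a perfect checker can pick out a correct attempt, then drawing more samples raises the solve rate. This is a toy illustration under those two assumptions (independent samples, perfect verification). It is not a description of how o3 works internally.

```python
def solve_rate_with_samples(p_single: float, n_samples: int) -> float:
    """Probability that at least one of n independent attempts is correct."""
    # assumption: attempts are independent and a perfect verifier selects any correct one
    return 1.0 - (1.0 - p_single) ** n_samples


p = 0.05  # hypothetical single-attempt success rate
for n in (1, 4, 16, 64, 256):
    print(f"{n:>3} samples -> solve rate {solve_rate_with_samples(p, n):.3f}")
```

The curve rises quickly and then flattens, while cost grows linearly with the number of samples. That shape is the same trade-off the ARC-AGI cost figures show.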

By March 2026, OpenAI has moved past o3 to the GPT-5.x series. o3 proved the thesis that scaling at inference time works. The frontier has already moved on. But the proof of concept stands.

Meanwhile, the data wall is accelerating everything. The stock of high-quality text data is projected to run out somewhere between 2026 and 2032, a window with wide confidence intervals that was revised upward from earlier estimates (Epoch AI). Industry already overtrains well beyond the compute-optimal ratio. Llama 3 8B trained at roughly 1,875 tokens per parameter, nearly 100x the original 20:1 baseline.

The wall isn’t theoretical. It’s the reason everyone is looking for alternative scaling dimensions.
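The overtraining figure above can be reproduced with a few lines of arithmetic. The token and parameter counts below are assumptions chosen because they yield the quoted 1,875 ratio (roughly 15 trillion tokens for an 8-billion-parameter Llama 3 model); the function name is illustrative.

```python
CHINCHILLA_TOKENS_PER_PARAM = 20.0  # baseline ratio from Hoffmann et al.


def overtraining_factor(train_tokens: float, params: float) -> float:
    """How many times past the 20:1 baseline a training run goes."""
    return (train_tokens / params) / CHINCHILLA_TOKENS_PER_PARAM


# assumption: ~15e12 training tokens and 8e9 parameters
tokens_per_param = 15e12 / 8e9
factor = overtraining_factor(15e12, 8e9)

print(f"tokens per parameter: {tokens_per_param:.0f}")
print(f"multiple of the 20:1 baseline: {factor:.2f}x")
```

Small models trained this far past the baseline consume data much faster than the compute-optimal recipe predicts, which pulls the exhaustion date closer.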

Who Gains From the Split

Labs with architecture innovation. DeepSeek’s MoE approach and Multi-Head Latent Attention proved that smarter architecture beats brute-force parameter scaling. Their early 2026 research into Manifold-Constrained Hyper-Connections signals the next round.

Teams investing in post-training infrastructure. The shift from pre-training dominance to post-training plus inference-time scaling means the winning stack isn’t the biggest GPU cluster. It’s the best fine-tuning and reinforcement learning pipeline.

Inference providers. Inference demand is projected to exceed training demand by a factor of 118 by 2026, claiming an estimated 75% of total AI compute by 2030 (Emerge Haus). The business model is migrating from selling training runs to selling inference at scale.

Who Gets Caught in the Middle

Teams still scaling the old way. If your strategy is “buy more GPUs and train a bigger model,” you’re optimizing one axis in a three-axis race. Diminishing returns are already visible.

Organizations waiting for clarity. The clarity already arrived. DeepSeek, OpenAI, and the post-training community each proved a different thesis across 2024 and 2025. The question isn’t which approach wins. It’s which combination your use case demands.

You’re either picking your scaling dimension or you’re burning compute on the wrong one.

What Happens Next

Base case (most likely): Hybrid scaling becomes the default. Labs combine efficient pre-training with MoE architectures, targeted post-training via GRPO and RLVR, and adaptive inference-time compute. Pure pre-training scaling continues but yields diminishing relative returns. Signal to watch: Frontier labs publishing cost-per-capability benchmarks instead of parameter counts. Timeline: Already underway. Dominant by end of 2026.

Bull case: Synthetic data and curriculum learning break through the data wall, restoring pre-training scaling curves at new efficiency points. A lab achieves frontier-class performance at dramatically lower total training cost. Signal: A sub-100B parameter model matching frontier performance on reasoning and coding benchmarks. Timeline: Late 2026 to mid-2027.

Bear case: The three scaling dimensions compete for the same finite compute pool. No clear winner emerges, and costs rise as teams hedge across all three axes simultaneously. Signal: Major labs increasing compute budgets without proportional capability gains for two consecutive quarters. Timeline: Mid-2026 if data wall estimates prove conservative.

Frequently Asked Questions

Q: How did DeepSeek-V3 and OpenAI o3 change the scaling laws debate in opposite directions? A: DeepSeek proved frontier capability is reachable at a fraction of the compute through architecture efficiency. o3 proved capability gains can come from scaling compute at inference time. Both expanded the definition of scaling. Neither killed it.

Q: Is pretraining scaling hitting a wall in 2026 and what paradigm comes after Chinchilla-optimal training? A: Pure pretraining faces diminishing returns as quality data grows scarce. Three paradigms emerged as successors: efficient architecture design, post-training optimization through reinforcement learning, and inference-time compute scaling. The replacement is a portfolio, not a single method.

Q: What real experiments in 2025-2026 showed scaling laws breaking down or holding at extreme scale? A: DeepSeek-V3 matched frontier performance at roughly one-tenth the per-token compute of comparable dense models. o3 scored 87.5% on ARC-AGI, where previous models scored single digits, by scaling inference compute, not parameters. Both showed scaling laws holding along new axes.

The Bottom Line

Scaling laws didn’t break. They multiplied. The era of one-dimensional scaling — more data, more parameters, better model — gave way to a three-front race across training efficiency, inference-time compute, and post-training optimization. The teams that recognized the split early aren’t just ahead. They’re playing a different game entirely.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.