DAGs vs. State Machines, Retry Logic, and the Hard Technical Limits of AI Workflow Orchestration



Workflow orchestration for AI is the runtime layer that decides when each step of an LLM pipeline runs, what happens when a step fails, and where its state lives. Two architectures dominate: DAGs and state machines.

A team wires their first LLM agent into Apache Airflow. The agent calls a tool, the tool times out, the retry policy fires. The agent calls the tool again — and again, and again. Three retries later the OpenAI bill has tripled, and nobody can explain why the DAG keeps mutating between runs. The orchestrator did exactly what it was designed to do. The pipeline was designed for the wrong physics.

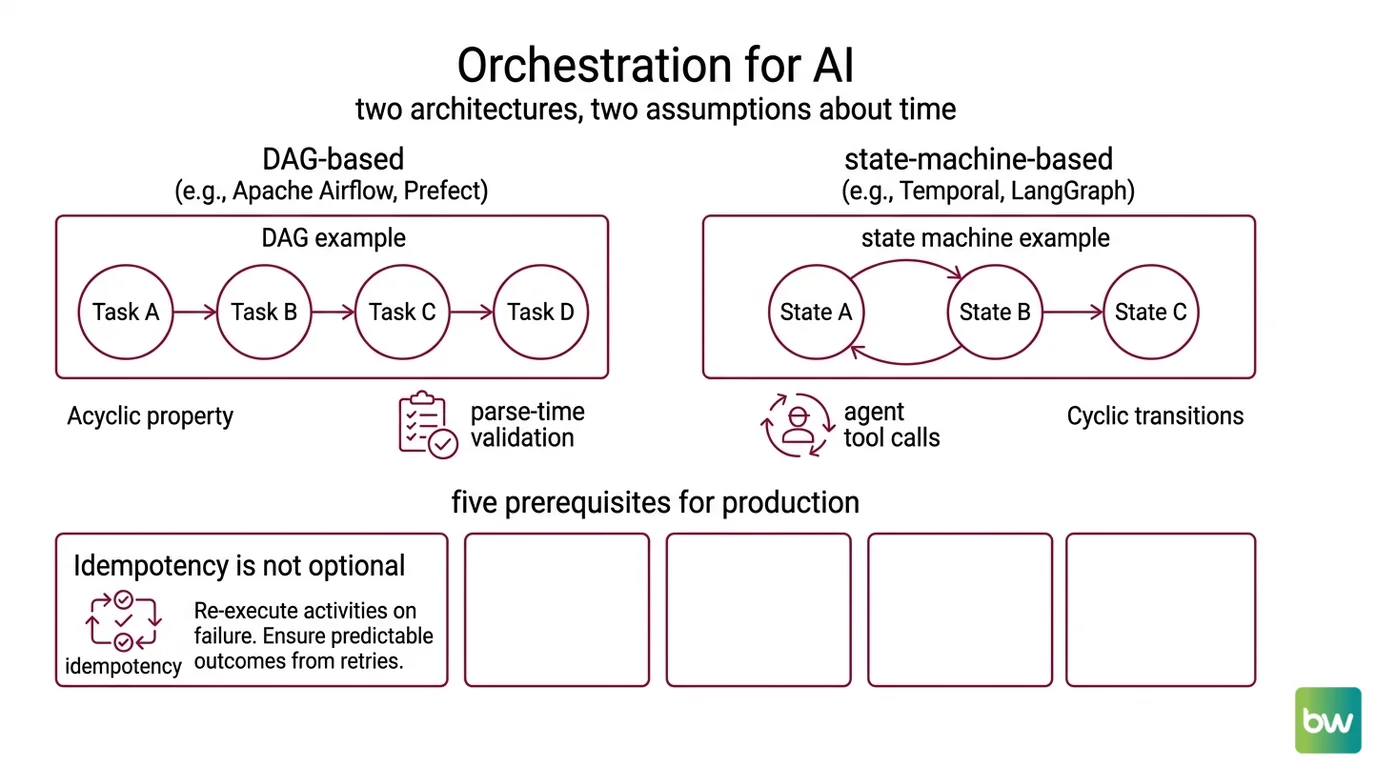

Two architectures, two assumptions about time

Every workflow orchestration system for AI encodes a hypothesis about how steps relate to each other. DAGs assume a one-way arrow: A finishes, then B starts, then C. State machines allow cycles: A may return to itself, transition to B, then back to A, then forward again. The choice of orchestrator is the choice of hypothesis, and AI workflows quietly violate the DAG one most of the time.

What do you need to know before adopting workflow orchestration for AI?

A DAG-based orchestrator like Apache Airflow models a pipeline as a directed acyclic graph: each task is a node, dependencies are edges, and the scheduler walks the graph from sources to sinks. The acyclic property is the load-bearing constraint. It is what makes retries deterministic, parse-time validation possible, and the failure surface tractable. Airflow 3.2.1 is the current stable release, and Airflow 2 reaches end of life in April 2026 (Airflow Docs). Prefect 3 sits in the same family but builds its graph at runtime from arbitrary Python control flow, with an event-driven engine open-sourced from Prefect Cloud (Prefect Docs).
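To make the acyclic constraint concrete, here is a minimal sketch of parse-time validation using Python's standard-library `graphlib`. It is not how Airflow implements its scheduler; the task names and the `execution_order` helper are hypothetical, chosen only to show that a fixed pipeline yields a run order while an agent-style loop is rejected before anything executes.

```python
from graphlib import CycleError, TopologicalSorter


def execution_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return a valid run order, or raise CycleError if the graph loops."""
    return list(TopologicalSorter(dependencies).static_order())


# Hypothetical pipeline: each key maps to the set of tasks it depends on.
pipeline = {"retrieve": set(), "summarize": {"retrieve"}, "publish": {"summarize"}}
order = execution_order(pipeline)

# An agent loop, where the tool's output feeds back into the agent, is a cycle.
agent_loop = {"agent": {"tool"}, "tool": {"agent"}}
try:
    execution_order(agent_loop)
    loop_rejected = False
except CycleError:
    loop_rejected = True
```

The rejection happens before any task runs, which is the property that makes a DAG's failure surface tractable and also what makes it a poor fit for a reasoning loop.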

A state-machine framework like Temporal or LangGraph models the same pipeline as a graph of states and transitions, including transitions back to states already visited. LangGraph’s StateGraph defines nodes as functions and edges as transitions over a typed state object — usually a TypedDict or Pydantic model — and the graph must be compiled before invocation (LangChain Docs). The cycle is not a bug. It is the whole point: an agent that calls a tool, examines the result, and decides whether to call the same tool with different arguments is, structurally, a loop.
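The loop described above can be sketched in plain Python. This is deliberately not LangGraph's API: `AgentState`, `call_tool`, `route`, and `run` are hypothetical names, and the stand-in tool simply succeeds on its third attempt. The point is only the shape: a typed state object, a node function, and a routing function that may send control back to a state already visited.

```python
from typing import Callable, Optional, TypedDict


class AgentState(TypedDict):
    attempts: int
    answer: Optional[str]


def call_tool(state: AgentState) -> AgentState:
    # Stand-in tool: returns an answer only on the third attempt.
    attempts = state["attempts"] + 1
    answer = "ok" if attempts >= 3 else None
    return {"attempts": attempts, "answer": answer}


def route(state: AgentState) -> str:
    # Transition back to the same node until the tool produces an answer.
    return "done" if state["answer"] is not None else "call_tool"


def run(state: AgentState, max_steps: int = 10) -> AgentState:
    nodes: dict[str, Callable[[AgentState], AgentState]] = {"call_tool": call_tool}
    current = "call_tool"
    for _ in range(max_steps):  # bound the loop so a stuck agent cannot spin forever
        state = nodes[current](state)
        current = route(state)
        if current == "done":
            return state
    raise RuntimeError("step budget exhausted")


final_state = run({"attempts": 0, "answer": None})
```

The explicit `max_steps` bound is a design choice in this sketch, not something the cycle requires; without it, a model that never produces a terminal answer loops indefinitely.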

This split surfaces five concrete prerequisites before any AI workflow goes into production.

First, idempotency is not optional. State-machine engines like Temporal re-execute activities on failure as part of their durability model. Temporal’s docs are explicit — activities must be idempotent because the engine will, by design, replay them (Temporal Docs). The same constraint applies to DAGs the moment retries are turned on, but DAG users often discover it only after a charged-twice incident.
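One common way to make a replayed activity safe is an idempotency key derived from stable inputs. The sketch below assumes a hypothetical `PaymentAPI` that deduplicates by key; real services differ in how they accept and expire such keys, so treat the names and behavior here as illustrative only.

```python
import hashlib
import json


class PaymentAPI:
    """Hypothetical side-effecting service that deduplicates by idempotency key."""

    def __init__(self) -> None:
        self.charges: dict[str, dict] = {}

    def charge(self, key: str, amount_cents: int) -> dict:
        # A replayed request with the same key returns the original result.
        if key not in self.charges:
            self.charges[key] = {
                "amount_cents": amount_cents,
                "charge_no": len(self.charges) + 1,
            }
        return self.charges[key]


def idempotency_key(workflow_id: str, step: str, args: dict) -> str:
    # Derive the key from stable inputs only, never from the attempt number or a timestamp.
    payload = json.dumps({"wf": workflow_id, "step": step, "args": args}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


api = PaymentAPI()
key = idempotency_key("wf-42", "charge_card", {"amount_cents": 500})
first = api.charge(key, 500)
retry = api.charge(key, 500)  # simulated orchestrator retry of the same step
```

Because the key excludes the attempt number, the retry maps to the same record and the customer is charged once.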

Second, state lives somewhere durable, or it doesn’t survive. The orchestrator’s checkpointing scope determines what gets reconstructed after a crash. LangGraph’s checkpointers save state between nodes, not inside a node — durability inside a long-running node is deferred to LangSmith or an external runtime (LangChain Docs). Temporal persists every workflow event to a database. AWS Step Functions stores execution history as discrete events. Each one draws the durable boundary in a different place.
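The "between nodes, not inside a node" boundary can be illustrated with a small sketch. This is a toy runner under stated assumptions, not LangGraph's checkpointer: `run_with_checkpoints`, the in-memory `store`, and the `plan`/`act` nodes are all hypothetical. State is saved only after a node returns, so a crash inside a node loses that node's partial work and nothing else.

```python
import json
from typing import Callable

State = dict
Node = Callable[[State], State]


def run_with_checkpoints(nodes: list[tuple[str, Node]], state: State, store: dict) -> State:
    """Run nodes in order, persisting state after each node completes."""
    done = store.get("completed", [])
    if done:
        state = json.loads(store["state"])  # resume from the last saved boundary
    for name, node in nodes:
        if name in done:
            continue  # finished before the crash; do not re-run
        state = node(state)  # a crash in here loses this node's partial work
        done = done + [name]
        store["state"] = json.dumps(state)
        store["completed"] = done
    return state


calls = {"plan": 0, "act": 0}


def plan(s: State) -> State:
    calls["plan"] += 1
    return {**s, "plan": "search"}


def act(s: State) -> State:
    calls["act"] += 1
    if calls["act"] == 1:
        raise RuntimeError("worker died mid-node")
    return {**s, "result": "found"}


store: dict = {}
steps = [("plan", plan), ("act", act)]
try:
    run_with_checkpoints(steps, {}, store)
except RuntimeError:
    pass  # simulated crash; the checkpoint written after "plan" survives
final = run_with_checkpoints(steps, {}, store)
```

On the second run `plan` is skipped and `act` starts over from the top, which is exactly why the node itself must be idempotent.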

Third, the retry policy is part of the failure model, not a decoration. Temporal’s default policy uses an initial interval of 1 second, a backoff coefficient of 2.0, a maximum interval of 100 seconds, and an unlimited number of attempts (Temporal Docs). The “unlimited” part is real but rarely safe in practice — teams that adopt Temporal almost always override maximum_attempts to a bounded value. The default is a starting point, not a recommendation.
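The defaults quoted above translate into a concrete wait schedule. The helper below is a plain-Python sketch of capped exponential backoff using those numbers; `retry_delays` is a hypothetical function, not part of any Temporal SDK, and it ignores jitter and non-retryable errors.

```python
def retry_delays(
    attempts: int,
    initial: float = 1.0,
    coefficient: float = 2.0,
    maximum: float = 100.0,
) -> list[float]:
    """Delay in seconds before each retry, using capped exponential backoff."""
    return [min(initial * coefficient**n, maximum) for n in range(attempts)]


delays = retry_delays(9)
total_wait = sum(delays)
```

With these values the first seven waits double from 1 to 64 seconds, and from the eighth retry on every wait is 100 seconds. With unlimited attempts, a permanently failing LLM call therefore keeps firing every 100 seconds until someone intervenes, which is the practical argument for bounding `maximum_attempts`.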

Fourth, structured tracing is a precondition for debugging, not a nice-to-have. The 2026 Guide to Agentic Workflow Architectures lists checkpointing, idempotency keys, retry policies, durable state storage, and structured traces as the five non-negotiable building blocks for production AI workflows (StackAI 2026 Guide). Skip any one and the system is technically running but operationally opaque.

Fifth, dynamic graph shape is the dividing line. If the number of branches in a pipeline is known at design time, a DAG fits. If the number of branches depends on what the LLM returned — three tool calls or thirty — the orchestrator needs to handle runtime expansion. Airflow added dynamic task mapping in 2.3, which makes the “Airflow can’t do dynamic branching” objection out of date; the real limitation is where the expansion happens and how high the ceiling sits.

Where the abstractions hit the floor

Every orchestrator publishes hard limits in its quota documentation. AI workloads tend to find them faster than batch workloads because LLM outputs are unpredictable in size and the cyclical patterns of agents pile up history quickly.

What are the technical limitations of DAG-based and state-machine orchestrators for LLM workflows?

Airflow’s dynamic task mapping caps at 1,024 tasks per mapped operator by default (max_map_length = 1024), and the expansion happens at scheduler time rather than mid-execution — mapped task groups also disallow further mapping, which makes nested fan-out impossible (Airflow Docs). For a batch retrieval job over a million documents, 1,024 mapped tasks is enough only if each task processes a thousand documents. For an agent that decides at runtime to spawn a tool call per retrieved chunk, the ceiling is reached during a single planning turn.
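The arithmetic behind "a thousand documents per task" is worth writing down. The sketch below is ordinary Python rather than Airflow code; `batch_size_for` and `make_batches` are hypothetical helpers that pick the smallest batch size keeping the fan-out within the default cap.

```python
import math

MAX_MAP_LENGTH = 1024  # Airflow's default cap, as quoted in the text above


def batch_size_for(total_items: int, max_tasks: int = MAX_MAP_LENGTH) -> int:
    """Smallest per-task batch size that keeps the fan-out within max_tasks."""
    return math.ceil(total_items / max_tasks)


def make_batches(items: list, size: int) -> list[list]:
    """Split items into consecutive batches of at most `size` elements."""
    return [items[i : i + size] for i in range(0, len(items), size)]


size = batch_size_for(1_000_000)
batches = make_batches(list(range(1_000_000)), size)
```

For a million documents this gives 977 documents per task and exactly 1,024 batches. The calculation only works when the total is known before expansion, which is the case for batch jobs and not for an agent deciding its fan-out mid-run.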

AWS Step Functions Standard executions halt at 25,000 events — a hard quota that cannot be raised (AWS Step Functions Docs). Every state entry, exit, task start, task end, and Lambda invocation counts toward it. An agent that loops fifty times — tool call, observation, tool call, observation — burns through that ceiling in a single execution if the inner activities are themselves multi-step. The Express variant lifts the event ceiling but caps total duration at five minutes, which is usually shorter than a multi-step agent run; Standard runs for up to a year per execution but pays for that durability in event accounting.
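A rough event budget makes the ceiling easier to reason about. The per-iteration event counts below are assumptions for illustration, not measured values; actual counts depend on the state types and integrations in the state machine.

```python
EVENT_QUOTA = 25_000  # Step Functions Standard hard limit, as quoted in the text above


def max_iterations(events_per_iteration: int, quota: int = EVENT_QUOTA) -> int:
    """How many loop iterations fit before the execution history is exhausted."""
    return quota // events_per_iteration


def fits(iterations: int, events_per_iteration: int, quota: int = EVENT_QUOTA) -> bool:
    return iterations * events_per_iteration <= quota


# Hypothetical figures: a shallow loop vs. one whose inner activities are multi-step.
shallow_ok = fits(iterations=50, events_per_iteration=10)
deep_ok = fits(iterations=50, events_per_iteration=600)
deep_limit = max_iterations(600)
```

For a fifty-iteration agent the break-even point is 500 events per iteration. Under the assumed 600 events per iteration, the execution would halt after 41 iterations.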

State payload size is capped at 256 KiB per task input or output in Step Functions (AWS Step Functions Docs). A long-context conversation can run to megabytes of text once serialized; passing a full conversation history through an orchestrator that caps each state transition at 256 KiB requires explicit chunking or external storage. The orchestrator does not warn you as the message history approaches the cap; the execution simply fails at the boundary.
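The external-storage approach is often called the claim-check pattern: pass a small reference through the orchestrator and keep the large body elsewhere. The sketch below uses an in-memory dict as a stand-in for an object store; `to_payload`, `from_payload`, and the `state/...` key format are hypothetical.

```python
import json
import uuid

PAYLOAD_LIMIT_BYTES = 256 * 1024  # the 256 KiB cap quoted in the text above


def to_payload(state: dict, blob_store: dict) -> dict:
    """Inline small states; offload large ones and pass only a reference."""
    envelope = {"inline": state}
    if len(json.dumps(envelope).encode("utf-8")) <= PAYLOAD_LIMIT_BYTES:
        return envelope
    ref = f"state/{uuid.uuid4()}"
    blob_store[ref] = json.dumps(state).encode("utf-8")
    return {"ref": ref}


def from_payload(payload: dict, blob_store: dict) -> dict:
    """Recover the full state from either an inline payload or a reference."""
    if "inline" in payload:
        return payload["inline"]
    return json.loads(blob_store[payload["ref"]])


blob_store: dict = {}
small = {"messages": ["hi"]}
big = {"messages": ["x" * 1000] * 400}  # roughly 400 KB once serialized

small_payload = to_payload(small, blob_store)
big_payload = to_payload(big, blob_store)
```

Checking the size of the whole envelope rather than the bare state leaves no room for the wrapper to push a borderline payload over the limit.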

LangGraph’s durability is between nodes, not inside them. If a node executes a long-running LLM call and the worker dies mid-stream, LangGraph can resume from the previous checkpoint, but the call itself does not replay token-by-token. The framework’s docs are clear about this scope; for replay-grade durable execution inside long activities, the recommended pattern is to delegate to a durable runtime layer (LangChain Docs).

The trade-offs that remain are qualitative because no public benchmark compares DAG and state-machine orchestrators on LLM-specific reliability metrics — tail latency under retries, recovery cost after worker death, cost-per-successful-completion. Vendor claims fill the gap, and they should be read as marketing until independent measurement appears.

Not a finished science. A live engineering question.

Airflow version notes:

- Airflow 3 XCom pickling disabled by default: Code that XCom’d arbitrary Python objects — including LangChain message objects between tasks — breaks on upgrade. Fix: serialize state explicitly as JSON or Pydantic before crossing task boundaries (Airflow Docs).

- Airflow 2 end of life April 2026: New AI projects should target Airflow 3.x; tasks no longer have direct DB access in Airflow 3, and BashOperator/PythonOperator moved to apache-airflow-providers-standard (Airflow Docs).
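For the XCom note above, explicit serialization can be as simple as converting message objects to JSON before they cross a task boundary. The `Message` dataclass below is a hypothetical stand-in for a framework message type; a real migration would map each framework class to its own plain-data form.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Message:
    """Hypothetical stand-in for a framework message object."""

    role: str
    content: str


def dump_messages(messages: list[Message]) -> str:
    # Convert to plain dicts so the value is JSON-safe at the task boundary.
    return json.dumps([asdict(m) for m in messages])


def load_messages(raw: str) -> list[Message]:
    # Rebuild typed objects on the receiving side.
    return [Message(**item) for item in json.loads(raw)]


history = [Message("user", "find the report"), Message("assistant", "calling search")]
raw = dump_messages(history)
restored = load_messages(raw)
```

The upstream task returns `raw` and the downstream task calls `load_messages`, so nothing depends on pickling being enabled.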

What the architecture predicts

The choice between DAG and state machine is not a preference — it is a forecast. If the pipeline shape is fixed and the failure mode is “rerun the last task,” a DAG is the right hypothesis. If the shape is unknown until the model speaks and the failure mode is “rebuild state from history,” a state machine is the right hypothesis. AI workflows usually fall in the second category, which is why the production pattern that emerged in 2025–2026 is hybrid: Temporal for macro durability — retries, persistence, recovery — and LangGraph for the micro reasoning loop inside an agent (Temporal Blog).

The architecture predicts specific behaviors before you observe them:

- If you wire an LLM agent into a pure DAG with retries enabled and non-idempotent tool calls, you should observe duplicate side effects — duplicate API charges, duplicate database writes, duplicate emails sent. The orchestrator is doing its job; the abstraction is doing the wrong job.

- If you store full conversation history in Step Functions state, you should observe execution failures at the 256 KiB boundary even though the underlying logic is sound. The orchestrator’s event log will show the cause; the application code will not.

- If you let an agentic loop run on Step Functions Standard with deep inner activities, you should observe abrupt termination around the 25,000-event mark, usually with no clear failure cause inside any single state.

Rule of thumb: Pick the orchestrator whose failure model matches the failure model of the workflow. Retry semantics, state scope, and dynamic shape are the three knobs that have to fit before framework familiarity or vendor preference matters.

When it breaks: The dominant failure mode is non-idempotent side effects under retry — an LLM tool call that hits a non-idempotent API replays on retry and charges twice, sends twice, or writes twice. The orchestrator cannot detect this; the application has to enforce idempotency keys before the orchestrator’s retry policy is safe to enable.

The Data Says

Workflow orchestration for AI is the choice between two hypotheses about time. DAGs assume forward-only progression and cap dynamic fan-out at hard limits the marketing pages rarely mention. State machines accept cycles but charge for them in event history, idempotency requirements, and durability scope that stops at the node boundary. The production answer in 2026 is hybrid — durable engine outside, reasoning loop inside — and it works because each layer is operating inside its own physics.

AI-assisted content, human-reviewed. Images AI-generated.