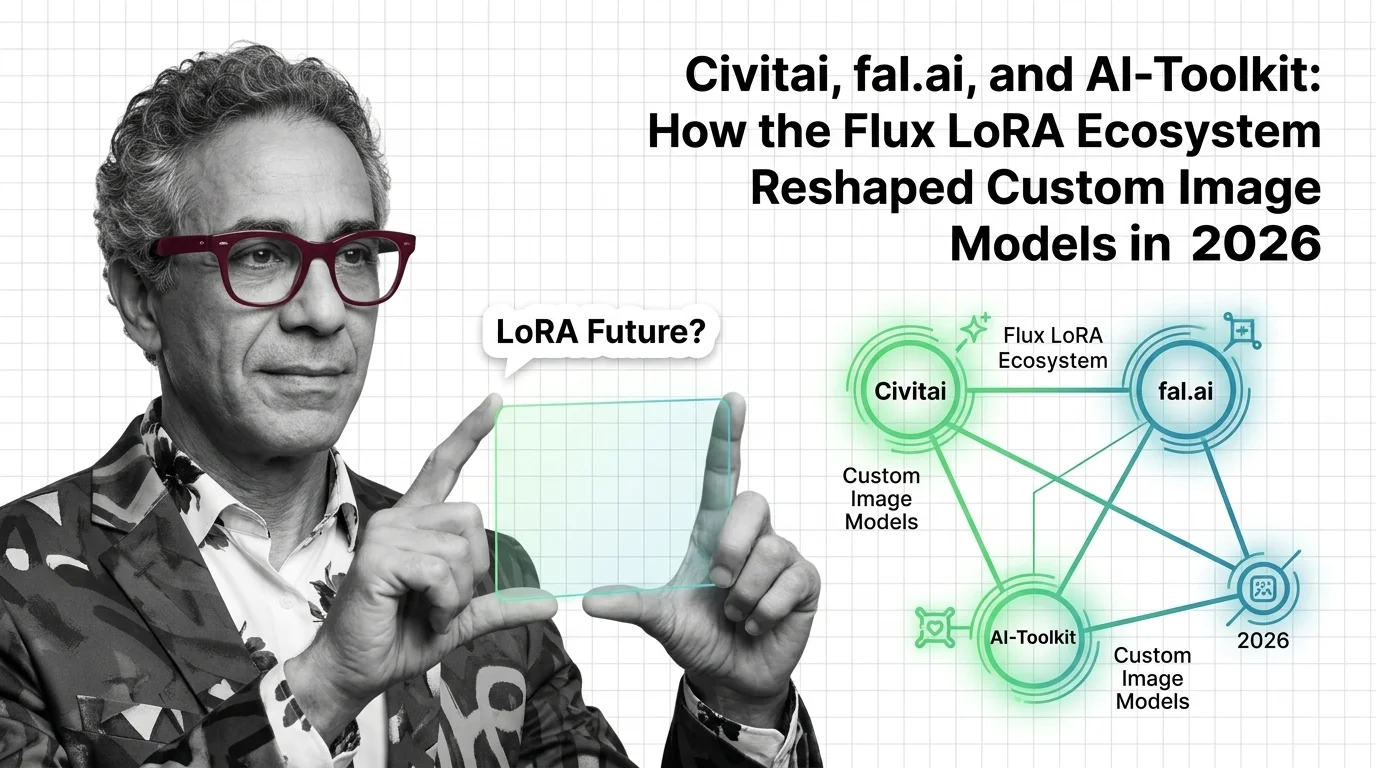

Civitai, fal.ai, AI-Toolkit: The 2026 Flux LoRA Ecosystem

TL;DR

- The shift: The market for image-generation LoRAs reorganized into three layers (marketplace, serverless API, and open-source trainer), all betting on Flux as the base.

- Why it matters: Closed frontier image models like GPT Image block fine-tuning, so every team that needs a custom style or product likeness now picks a layer in the open stack.

- What’s next: Flux.2 reset the trainer race. The platforms that shipped support within months are pulling ahead. The rest are running last year’s playbook.

The image generation market quietly split this year. Frontier models keep getting better at one-shot prompts, but the leaders all closed the door on fine-tuning. Teams that need a brand-locked style or a product photo that actually looks like their product are shopping in a parallel ecosystem — and that ecosystem just restructured itself around Flux.

The Flux LoRA Stack Just Split Into Three Layers

Thesis: The 2026 custom image model market is no longer one ecosystem. It is three vertically stacked layers, each competing on different axes, all betting on the same open-weight base.

The split happened in plain sight. Marketplace and no-code training is Civitai’s lane. Serverless API training — pay-per-step, no GPU babysitting — belongs to fal.ai, Replicate, and Astria. Open-source local training is ostris’s AI-Toolkit, Kohya SS, and OneTrainer.

Three layers, one substrate. That’s the structural fact. The reason all three converged on the Flux family — with SDXL still underneath — matters more than the split itself.

The Closed Models Forced This

GPT Image 1.5, Nano Banana 2, and Nano Banana Pro currently sit at the top of the artificialanalysis.ai text-to-image arena. None of them expose a LoRA surface. None of them allow fine-tuning at all. If you need brand consistency or a custom subject, the frontier is a wall, not a workshop.

So the entire customization economy migrated to open-weight bases. Flux and SDXL became the substrate of choice in 2025. Then Flux.2 landed on November 25, 2025, and reset the training stack again, per the Black Forest Labs Blog.

Flux.2 also changed the rules. The Dev variant supports LoRA. The Pro, Flex, and Max variants do not, per fal.ai. That single design choice splits the LoRA economy into who can train on the new base and who is stuck retraining everything they already built.

Because here is the catch: Flux.1-dev LoRAs are not directly portable to Flux.2-dev. The architecture changed. Catalogs need to be rebuilt.
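For teams auditing an existing library, the two rules above (only Flux.2 Dev accepts LoRAs, and Flux.1-dev LoRAs do not carry over) can be sketched as a small catalog check. This is a minimal illustration, not any platform's API: the `LoraEntry` type, the base-model tag strings, and the catalog entries are hypothetical, and treating every base mismatch as "retrain" is a simplifying assumption that goes beyond the one case the article confirms.

```python
from dataclasses import dataclass

# Per the article: among Flux.2 variants, Dev supports LoRA; Pro, Flex, and Max do not.
FLUX2_LORA_SUPPORT = {"dev": True, "pro": False, "flex": False, "max": False}


@dataclass(frozen=True)
class LoraEntry:
    name: str        # hypothetical catalog label
    base_model: str  # hypothetical tag, e.g. "flux.1-dev" or "flux.2-dev"


def can_train_lora(variant: str) -> bool:
    """Return whether a Flux.2 variant accepts LoRAs, for the variants listed above."""
    key = variant.lower()
    if key not in FLUX2_LORA_SUPPORT:
        raise ValueError(f"no LoRA support information for variant {variant!r}")
    return FLUX2_LORA_SUPPORT[key]


def needs_retraining(entry: LoraEntry, target_base: str) -> bool:
    """Assumption: a LoRA is only usable on the exact base it was trained on."""
    return entry.base_model.lower() != target_base.lower()


# Hypothetical catalog used only for illustration.
catalog = [
    LoraEntry("brand-style-v3", "flux.1-dev"),
    LoraEntry("product-shot-v1", "flux.2-dev"),
]
to_retrain = [e.name for e in catalog if needs_retraining(e, "flux.2-dev")]
print(to_retrain)  # ['brand-style-v3']
```

Running a check like this over a real catalog gives a first count of how many LoRAs sit on the old base before anyone commits to a migration.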

Compatibility & policy notes:

- FLUX.1-dev → FLUX.2-dev LoRA portability: LoRAs trained on FLUX.1-dev must be retrained for FLUX.2-dev (architecture changed).

- Civitai Stability AI removal: Stability AI Core Models removed from the Civitai Generator on July 31, 2025. SDXL and SD 1.5 unaffected.

- Kohya SS Flux training: --split_mode deprecated in favor of --blocks_to_swap. Older tutorials may fail without an update.
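The Kohya SS flag change in the last note is the kind of thing that breaks commands copied from older tutorials. A rough sketch of a helper that rewrites such an argument list is shown below. It assumes `--split_mode` is a bare flag and `--blocks_to_swap` takes an integer; the helper name, the example arguments, and the swap count of 16 are hypothetical, and the right count depends on the GPU and model.

```python
def migrate_kohya_args(args: list[str], blocks_to_swap: int) -> list[str]:
    """Replace the deprecated --split_mode flag with --blocks_to_swap N.

    Handles only the space-separated form ("--flag value"), not "--flag=value".
    """
    if "--blocks_to_swap" in args:
        # Already migrated: just drop any leftover deprecated flag.
        return [a for a in args if a != "--split_mode"]
    migrated: list[str] = []
    for arg in args:
        if arg == "--split_mode":
            migrated += ["--blocks_to_swap", str(blocks_to_swap)]
        else:
            migrated.append(arg)
    return migrated


# Stand-in for arguments copied from an older tutorial (illustrative only).
old_args = ["--network_dim", "16", "--split_mode"]
print(migrate_kohya_args(old_args, blocks_to_swap=16))
# ['--network_dim', '16', '--blocks_to_swap', '16']
```

The helper leaves every other argument untouched, so it is worth checking the current Kohya SS documentation for any further flags that changed alongside this one.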

The Winners Already Separated From the Pack

Three names own the top of the new stack.

ostris’s AI-Toolkit is the quiet kingmaker. It is the open-source training engine Replicate wraps as a Cog for its own Flux fine-tuner (Replicate’s GitHub repository). AI-Toolkit ships under MIT and supports Flux.1, Flux.2 Dev, Flux.2 Klein, SDXL, and Qwen Image among others (ostris’s GitHub repository). The 24 GB VRAM example configs make it accessible to a single workstation.

fal.ai moved fastest on Flux.2. Its Flux.2 [dev] trainer prices at $0.008 per step — a 1000-step run lands at $8 (fal.ai). Serverless H100 starts at $1.89/hr (fal.ai’s pricing page). For teams that want pay-per-use without managing infrastructure, fal.ai is the default choice in 2026.
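The per-step arithmetic is easy to reproduce. The sketch below uses only the two prices quoted above and compares budgets, not training speed: the article does not say how many steps an H100 hour completes, so the hourly figure is shown purely as what the same money would rent. The function and constant names are made up for this example.

```python
FAL_FLUX2_DEV_PRICE_PER_STEP = 0.008  # USD per step, as quoted in the article
FAL_H100_PRICE_PER_HOUR = 1.89        # USD per hour, starting price quoted in the article


def training_cost(steps: int, price_per_step: float = FAL_FLUX2_DEV_PRICE_PER_STEP) -> float:
    """Cost in USD of a per-step-priced training run, rounded to cents."""
    return round(steps * price_per_step, 2)


def equivalent_h100_hours(budget_usd: float, hourly: float = FAL_H100_PRICE_PER_HOUR) -> float:
    """Hours of serverless H100 time the same budget would rent."""
    return budget_usd / hourly


cost = training_cost(1000)
print(f"1000 steps: ${cost:.2f}")                                   # 1000 steps: $8.00
print(f"Same budget on H100: {equivalent_h100_hours(cost):.1f} h")  # Same budget on H100: 4.2 h
```

Whether per-step or hourly pricing works out cheaper for a given job depends on throughput, which would have to be measured rather than assumed.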

Civitai keeps the marketplace crown despite a rougher year. The on-site trainer covers most SDXL and SD 1.5 jobs at 500 Buzz, with a 5-minute Flux Rapid Training tier at 4000 Buzz per 100 images (Civitai Education). The library is still the largest publicly browsable LoRA catalog in the open ecosystem.

That’s the leaderboard for the people who actually train.

The Losers Are Equally Visible

Civitai’s hosting model is under pressure. Visa and Mastercard forced the platform to tighten its rules on celebrity-likeness and extreme-content LoRAs in 2025, a move that drew around 950 negative user reactions (Unite.AI). Then Stability AI Core Models were pulled from the Civitai Generator effective July 31, 2025 (Civitai). The marketplace is still dominant. Its content moat is shrinking.

Stability AI itself is the bigger casualty. Losing distribution on the largest open-model marketplace is a strategic re-rating, not a flesh wound.

Anyone whose LoRA library is locked to Flux.1-dev is on borrowed time. Flux.2 is not a drop-in upgrade — the architecture changed enough that LoRAs need to be retrained, per a Hugging Face discussion on the release. Teams that built deep catalogs in 2024 and 2025 face a re-training bill or slow obsolescence.

Trainers that still don’t ship Flux.2 support are getting passed over. The window for catching up is short.

What Happens Next

Base case (most likely): The stack consolidates. fal.ai and Replicate split serverless. Civitai keeps the marketplace lead but loses share at the high-craft end. AI-Toolkit becomes the de facto training engine wrapped by everyone else. Signal to watch: Another major platform switches its trainer engine to ostris’s AI-Toolkit. Timeline: Six to nine months.

Bull case: Flux.2 Klein ships full LoRA training on consumer GPUs and pushes the entry point down again. Signal: A widely-used open trainer ships a Klein 4B LoRA recipe with sub-12 GB VRAM. Timeline: Late 2026.

Bear case: Frontier closed models — GPT Image, Nano Banana — finally open a managed fine-tuning surface and absorb mid-market customization demand. Signal: OpenAI or Google ships an image-model fine-tuning API with brand-output guarantees. Timeline: Twelve to eighteen months.

Frequently Asked Questions

Q: Which LoRA training platforms and marketplaces are leading in 2026? A: Civitai owns the marketplace and no-code training. fal.ai leads serverless API training, especially for Flux.2. ostris’s AI-Toolkit is the dominant open-source trainer and powers Replicate’s Cog. Kohya SS still anchors local training at the technical end.

Q: Where is LoRA for image generation heading as Flux.2 dominates and closed models like GPT Image block fine-tuning? A: Toward an open-weight monoculture for customization. As long as frontier models stay closed, every brand, character, and product LoRA flows through the Flux and SDXL stack. The open ecosystem absorbs the entire fine-tuning market by default — not by winning quality, but by being the only door open.

The Bottom Line

The custom image market is now an open-stack market. Closed leaders captured raw quality; open leaders captured customization. AI image editing workflows that depend on brand or character consistency live on diffusion models you can actually fine-tune. You’re either picking the layer that fits your team (marketplace, API, or local) or you’re paying someone else to do it for you next quarter.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

Stay ahead, DAN.

AI-assisted content, human-reviewed. Images AI-generated.