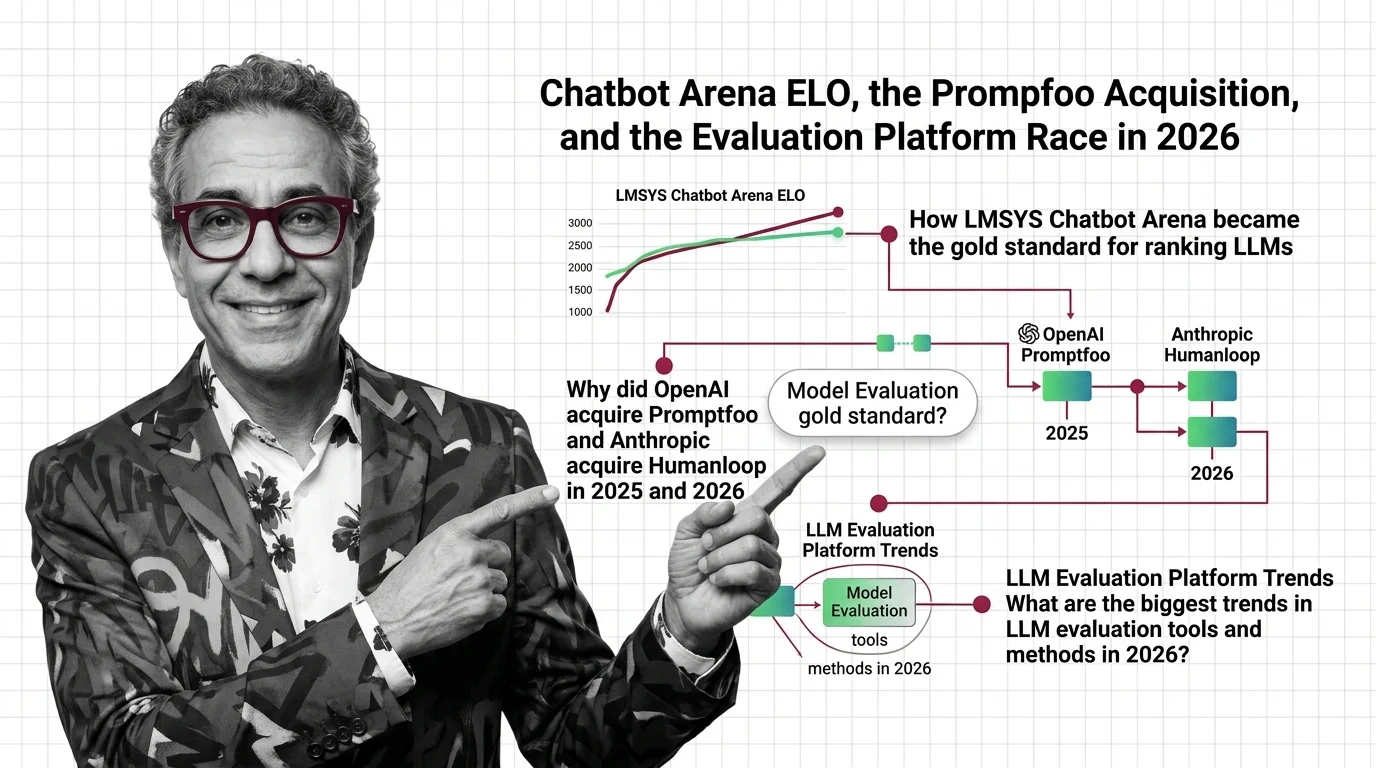

Chatbot Arena Elo, the Promptfoo Acquisition, and the Evaluation Platform Race in 2026

TL;DR

- The shift: Frontier labs are acquiring evaluation startups because controlling how models are scored is now as strategic as building the models themselves.

- Why it matters: Independent evaluation tools are being absorbed into proprietary platforms, concentrating the scoring infrastructure inside the labs being scored.

- What’s next: Arena’s $1.7B valuation prices in its independence. The acquisition wave suggests that independence has an expiration date.

Six months ago, evaluation was infrastructure. Something teams bolted on after shipping. Now OpenAI, Anthropic, and Google are spending real capital to own it.

That’s not a tooling trend. That’s a power grab.

The Evaluation Layer Is Now a Strategic Weapon

Thesis: The frontier labs stopped treating model evaluation as a neutral utility and started treating it as a competitive moat, and the acquisition wave proves it.

For years, evaluation lived in the background. Teams picked a benchmark or metric (HumanEval, SWE-bench, BLEU), ran their scores, and shipped. The evaluation layer was a commodity. Nobody was acquiring it.

That changed in two moves.

OpenAI acquired Promptfoo on March 9, 2026 (TechCrunch). Promptfoo was founded in 2024, raised $23M, carried an $86M valuation, and was used by more than a quarter of the Fortune 500. The open-source CLI stays MIT-licensed for now, but the core technology is being folded into OpenAI’s Frontier platform (Promptfoo Blog).

Seven months earlier, Anthropic acqui-hired Humanloop’s team — cofounders Raza Habib, Peter Hayes, Jordan Burgess, plus roughly twelve engineers (TechCrunch). Not a full acquisition. An acqui-hire: the team, not the IP or assets. Humanloop as a standalone product no longer exists.

The pace alone tells the story. OpenAI completed six acquisitions in Q1 2026, nearly matching its eight total for all of 2025 (Crunchbase News). That is three times last year's average of two per quarter.

The evaluation stack is being absorbed into the platforms it was built to judge.

That is not an accident. It is a strategy.

Three Signals, One Direction

Arena, the platform that launched as Chatbot Arena and rebranded from LMArena in January 2026, raised $150M at a valuation of roughly $1.7B (Wikipedia). That is nearly triple its $600M seed valuation of just eight months prior. Founded at UC Berkeley in April 2023, Arena went from research project to the industry’s most-cited ranking system in under three years.

As of March 2026, the platform carries 5.6M votes across 333 models, processing 4M head-to-head comparisons monthly (Arena Leaderboard). The Elo rating system it runs, built on crowdsourced, double-blind pairwise comparisons, has become the closest thing to a neutral benchmark the industry has.
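For readers who have not seen how pairwise votes turn into a ranking, here is a minimal sketch of the classic Elo update. It is the textbook formula, not necessarily what Arena runs in production. The model names, the starting rating of 1000, the K-factor of 32, and the 400-point scale are all illustrative assumptions.

```python
def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A beats B under the classic Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def update(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return new (A, B) ratings after one comparison.

    score_a is 1.0 if A won the vote, 0.0 if A lost, 0.5 for a tie.
    k (assumed 32 here) controls how far a single vote moves the ratings.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so total rating points are conserved.
    return rating_a + delta, rating_b - delta


# Hypothetical models and votes, for illustration only.
ratings = {"model_x": 1000.0, "model_y": 1000.0}
votes = [
    ("model_x", "model_y", 1.0),  # voter preferred model_x
    ("model_x", "model_y", 1.0),  # voter preferred model_x again
    ("model_y", "model_x", 1.0),  # voter preferred model_y
]

for first, second, score_first in votes:
    ratings[first], ratings[second] = update(
        ratings[first], ratings[second], score_first
    )

for name, rating in sorted(ratings.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {rating:.1f}")
```

An upset moves ratings more than an expected result, which is why the third vote costs model_x more than either of its wins earned it. A leaderboard built this way is only as trustworthy as the set of matchups that feed it.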

But neutral is a loaded word.

A 2025 paper from Cohere, Stanford, MIT, and AI2 documented what they called the “Leaderboard Illusion”: top labs selectively testing models in private, publishing only the highest scorer (TechCrunch). Meta’s Llama 4 incident made it concrete — twenty-seven model variants tested behind the curtain, only the winning result shown to the public (Simon Willison). Arena updated its policies after the backlash.
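Why does private best-of-N testing matter? A rough simulation makes the selection effect visible. The sketch below assumes every variant has the same true rating and that each measured score carries Gaussian noise. The true rating of 1300, the noise level of 10 points, and the function names are hypothetical choices for illustration, not figures from the paper or from Arena.

```python
import random
import statistics


def published_score(true_rating, noise_sd, n_variants, rng):
    """Measure n_variants equally skilled variants, publish only the best one."""
    measured = [rng.gauss(true_rating, noise_sd) for _ in range(n_variants)]
    return max(measured)


def average_published(n_variants, trials=2000, true_rating=1300.0,
                      noise_sd=10.0, seed=0):
    """Average published score over many simulated launches (seeded for repeatability)."""
    rng = random.Random(seed)
    return statistics.fmean(
        published_score(true_rating, noise_sd, n_variants, rng)
        for _ in range(trials)
    )


single = average_published(1)        # honest case: one variant tested, one published
best_of_27 = average_published(27)   # 27 variants tested, only the top score published
inflation = best_of_27 - single

print(f"one variant:  {single:.1f}")
print(f"best of 27:   {best_of_27:.1f}")
print(f"inflation:    {inflation:.1f} points from selection alone")
```

Under these assumptions none of the 27 variants is actually better than the others, yet the published number rises simply because the maximum of many noisy measurements sits above the mean. The size of the gap depends entirely on the assumed noise level.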

The gold standard has cracks. It is still the gold standard.

That tension defines this entire market.

Who Owns the Score Now

The labs that buy evaluation infrastructure win twice. They get internal tooling. And they reduce the number of independent referees.

OpenAI now has Promptfoo’s red-teaming and evaluation suite embedded in its agent platform. Every enterprise customer testing OpenAI agents will run those tests on OpenAI-owned infrastructure.

Anthropic absorbed the team that built one of the strongest LLM-as-a-judge platforms in the market. The engineers who understood human-in-the-loop evaluation at scale are now building Anthropic’s internal systems.

Arena stands alone — the only high-profile evaluation platform that has not been acquired. Its valuation prices in that independence.

Whether it keeps it is another question.

The Independence Problem

Independent evaluation startups just lost their leverage. Two of the strongest teams in the space are now inside frontier labs. The talent pool for independent evaluation just got thinner.

Any remaining startup building evaluation tools faces a new calculus: grow fast enough to matter, or become the next acqui-hire. The window between “interesting product” and “talent acquisition” keeps shrinking.

Static benchmarks, such as perplexity-based scoring and fixed test suites, were already losing relevance. Benchmark contamination made them unreliable. Now the dynamic alternatives are consolidating inside the very companies they were designed to evaluate.

You’re either building evaluation infrastructure the labs need to buy — or you’re building features they’ll replicate in a quarter.

What Happens Next

Base case (most likely): Evaluation splits into two tiers. Lab-owned tools dominate enterprise adoption through platform bundling. Arena and a handful of independents hold credibility for public rankings. The tension between proprietary and independent scoring becomes a permanent market feature. Signal to watch: Whether Arena accepts strategic investment from a frontier lab. Timeline: By end of 2026.

Bull case: Arena’s independence becomes its moat. Regulators or enterprise buyers demand third-party evaluation, making neutral platforms more valuable. Signal: EU AI Act enforcement requiring independent model evaluation. Timeline: 2027.

Bear case: Arena’s credibility erodes further. Gaming incidents multiply. Labs build internal benchmarks that enterprise customers trust more. The concept of a neutral public leaderboard fades. Signal: A major lab withdraws from Arena or launches a competing public benchmark. Timeline: Late 2026 to mid-2027.

Frequently Asked Questions

Q: How did LMSYS Chatbot Arena become the gold standard for ranking LLMs?

A: Arena pioneered crowdsourced, double-blind pairwise comparisons using an Elo-based rating system. With millions of votes across hundreds of models, its scale and methodology made it the default ranking reference, though its neutrality has been challenged by selective private testing from top labs.

Q: Why did OpenAI acquire Promptfoo and Anthropic acqui-hire Humanloop’s team in 2025 and 2026?

A: Both moves bring evaluation expertise in-house. OpenAI folded Promptfoo’s red-teaming tools into its Frontier platform. Anthropic acqui-hired Humanloop’s team for their human-in-the-loop evaluation experience. Controlling the evaluation stack is now a competitive priority.

Q: What are the biggest trends in LLM evaluation tools and methods in 2026?

A: Three forces are reshaping evaluation: consolidation of independent tools into frontier lab platforms, growing credibility challenges for public leaderboards, and the shift from static benchmarks to dynamic human-preference-based scoring systems.

The Bottom Line

The evaluation layer is no longer neutral ground. The labs are buying the referees. Arena’s independence is the last firewall — and it has a price tag.

The question is not whether evaluation matters. It is who gets to define what “better” means.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated.