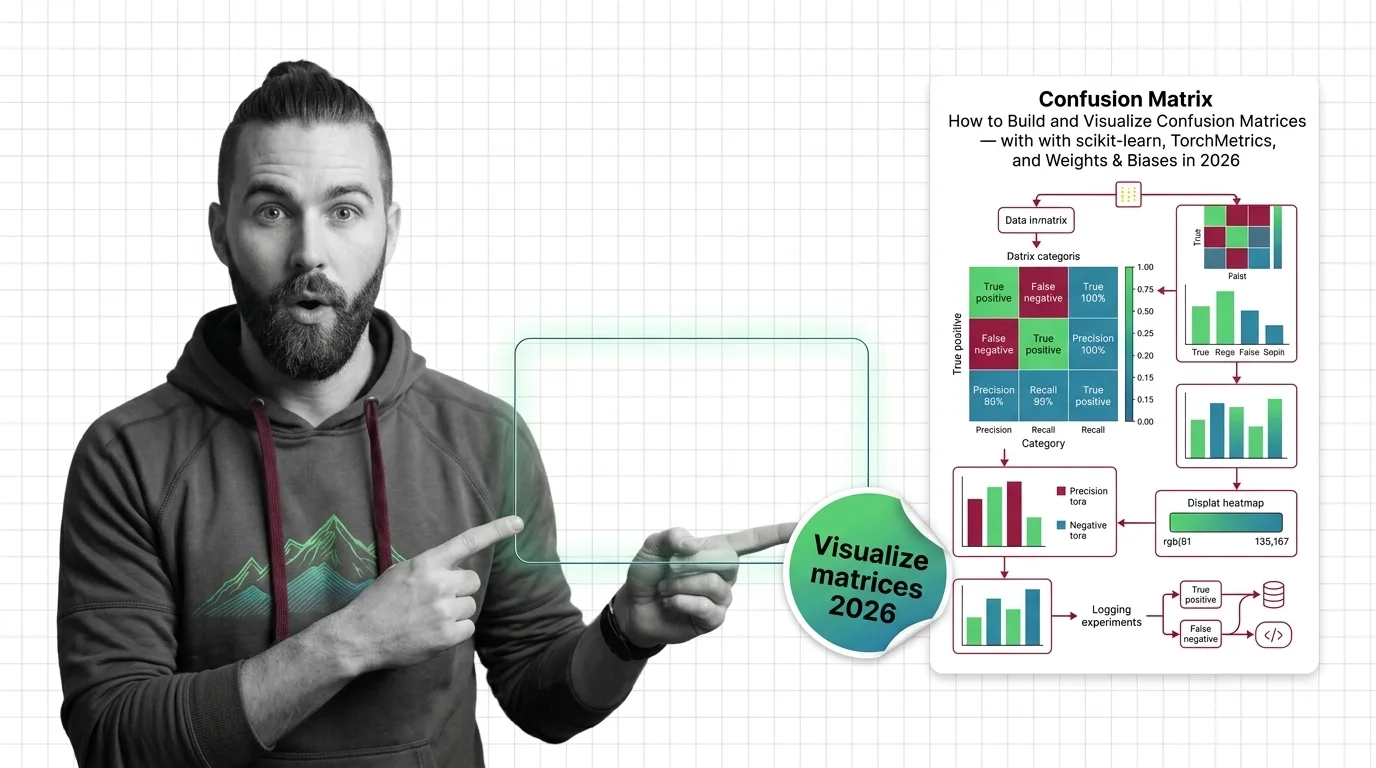

Confusion Matrices: scikit-learn, TorchMetrics & W&B (2026)

Specify, build, and validate confusion matrix pipelines with scikit-learn 1.8, TorchMetrics 1.9, and Weights & Biases …

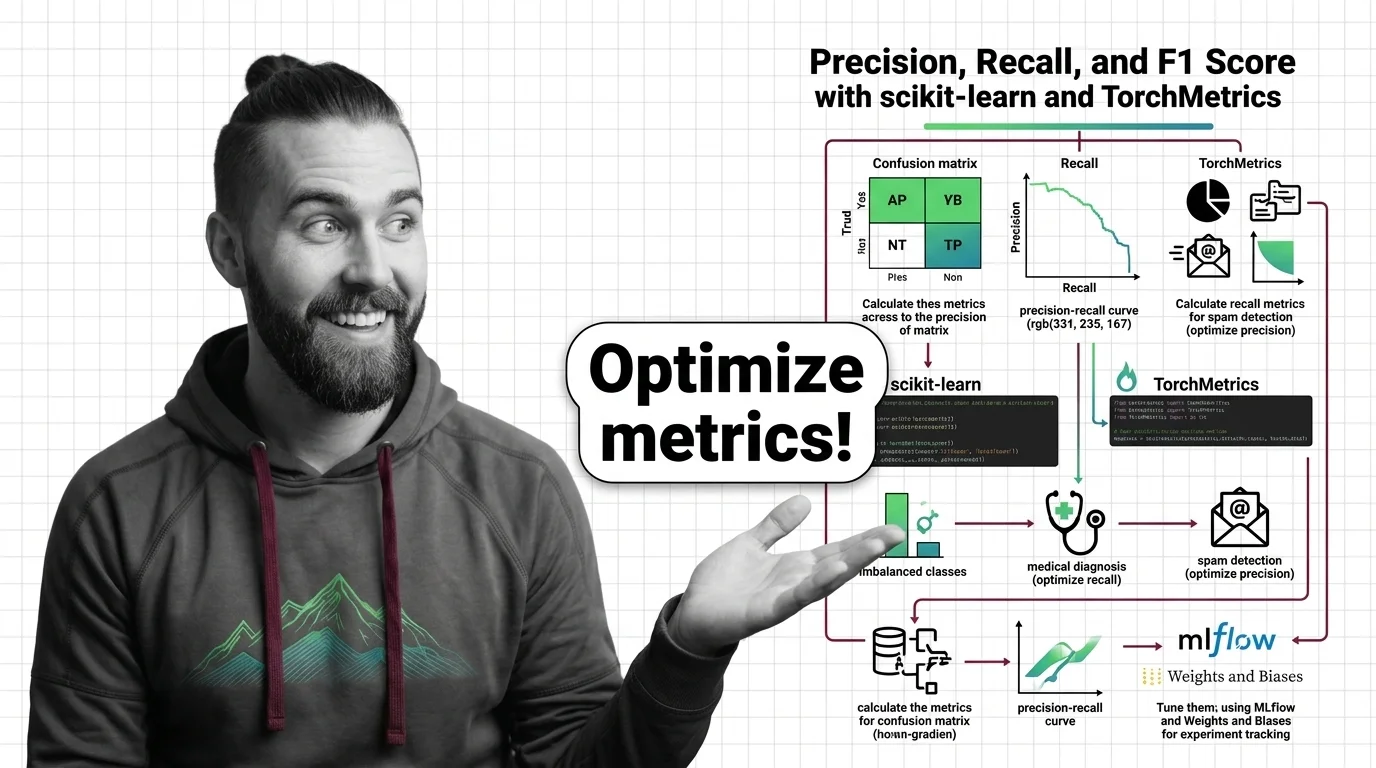

How to Calculate and Tune Precision, Recall, and F1 Score with scikit-learn and TorchMetrics in 2026

Specify precision, recall, and F1 score evaluation in scikit-learn 1.8 and TorchMetrics 1.9. A framework to prevent …

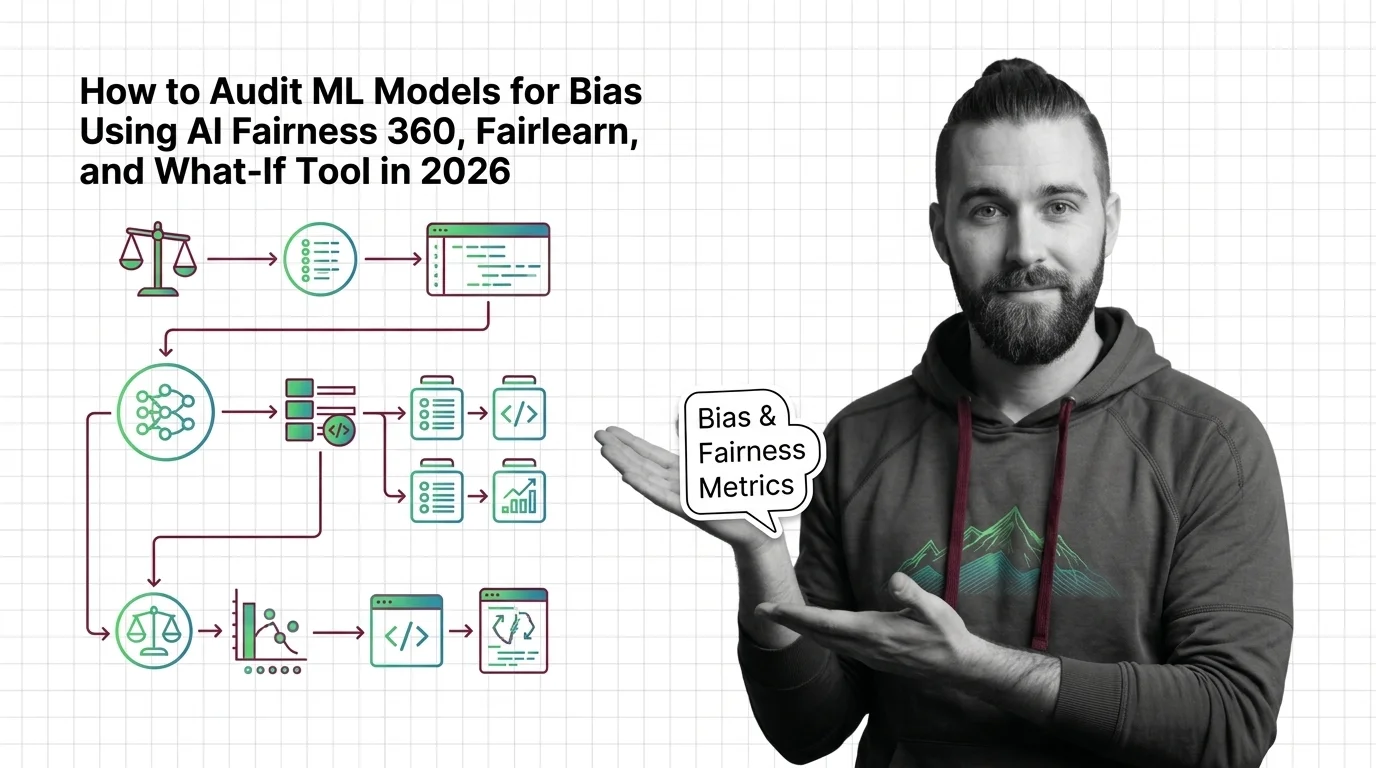

How to Audit ML Models for Bias Using AI Fairness 360, Fairlearn, and What-If Tool in 2026

Audit ML models for bias with AI Fairness 360, Fairlearn, and What-If Tool. Specification framework for fairness …

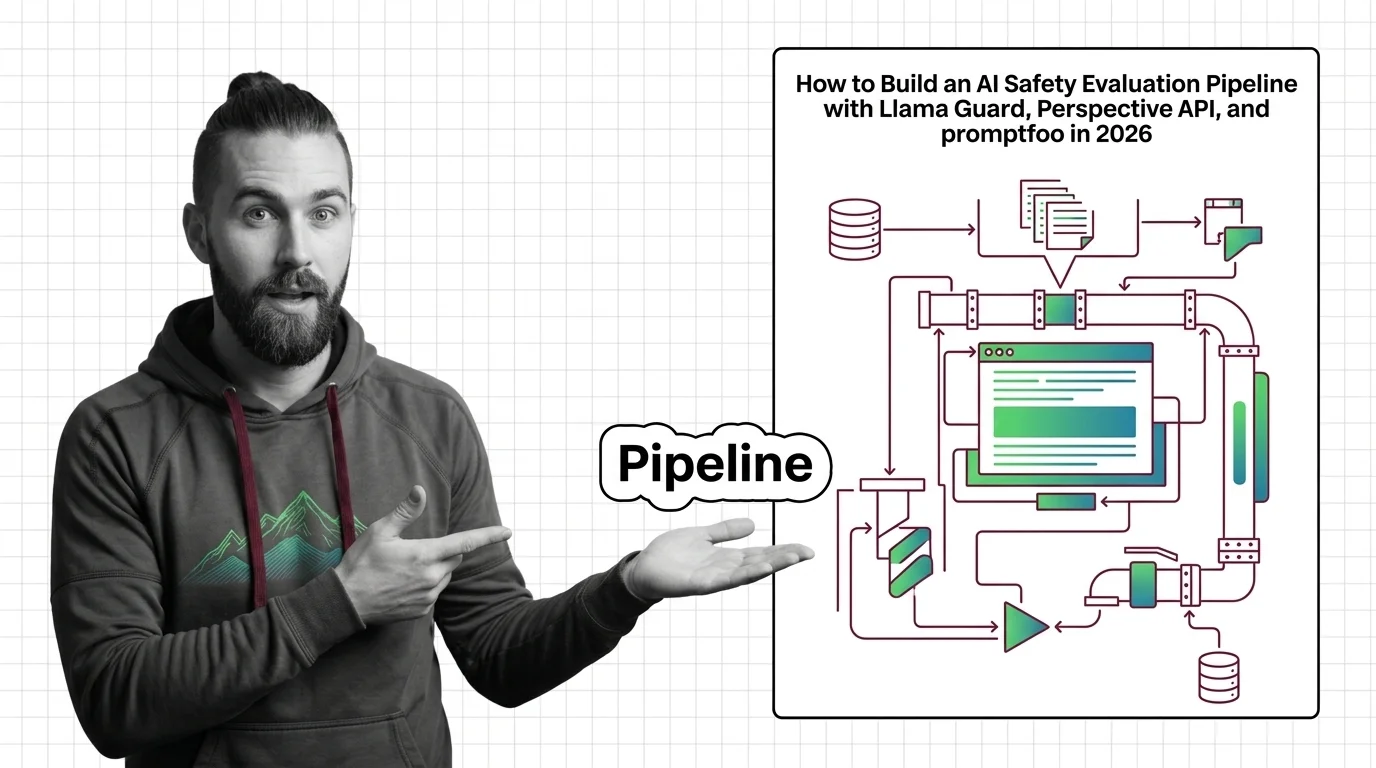

AI Safety Evaluation: Llama Guard, Perspective API, promptfoo 2026

Production AI safety pipeline with Llama Guard 4, ShieldGemma, and promptfoo. Covers taxonomy design, model evaluation, …

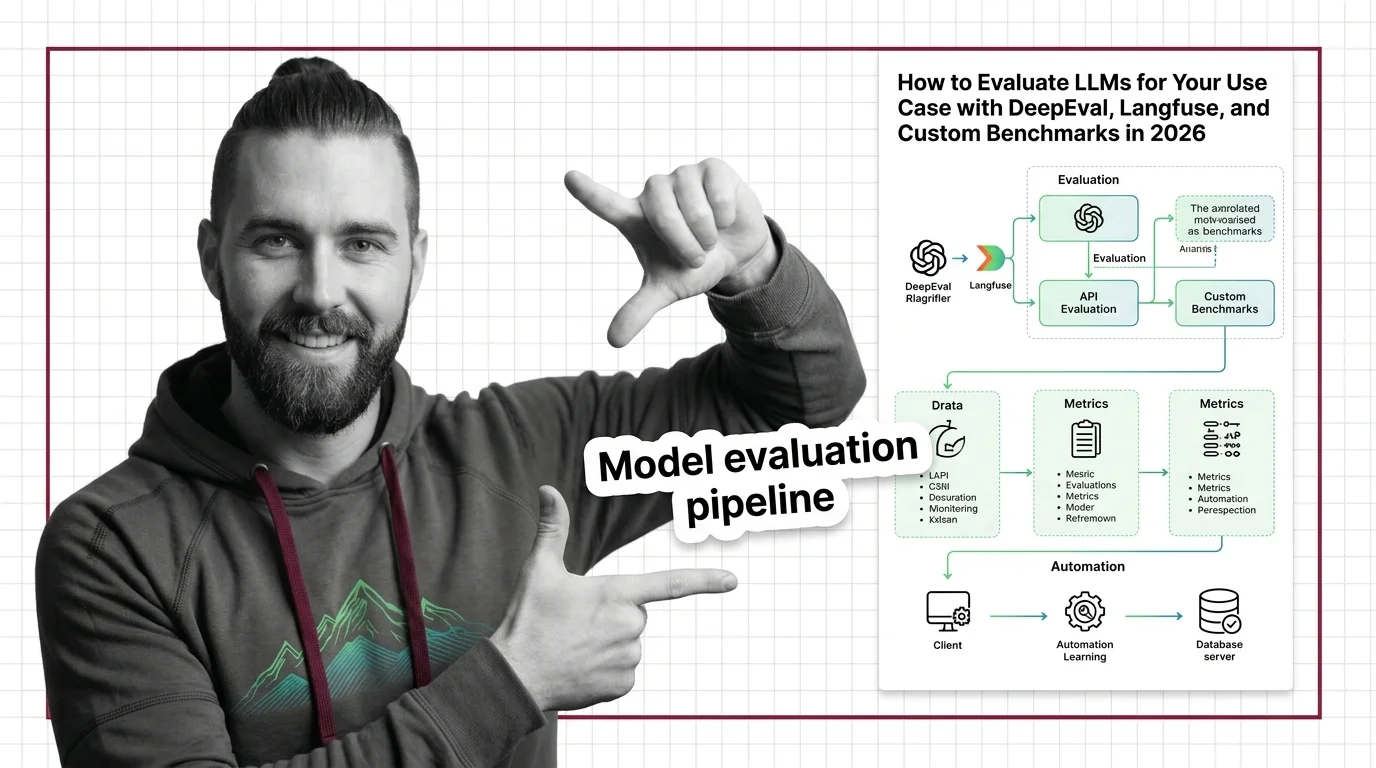

How to Evaluate LLMs for Your Use Case with DeepEval, Langfuse, and Custom Benchmarks in 2026

Build an LLM evaluation pipeline with DeepEval, Langfuse, and Promptfoo. Covers metrics selection, production tracing, …

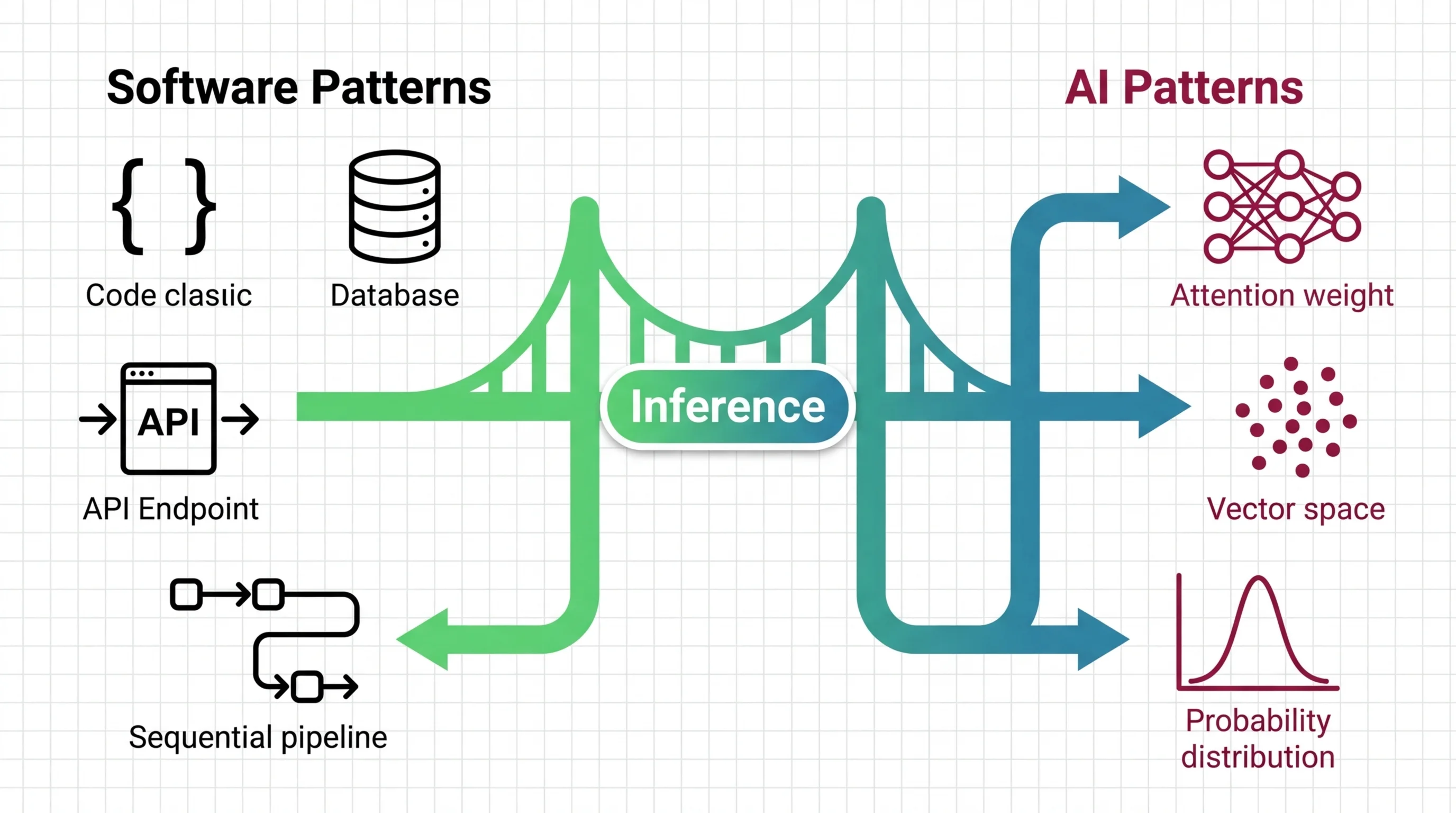

Inference Optimization for Developers: What Transfers and What Breaks

LLM inference breaks your cost model, scaling instincts, and test expectations. Learn what transfers from backend …

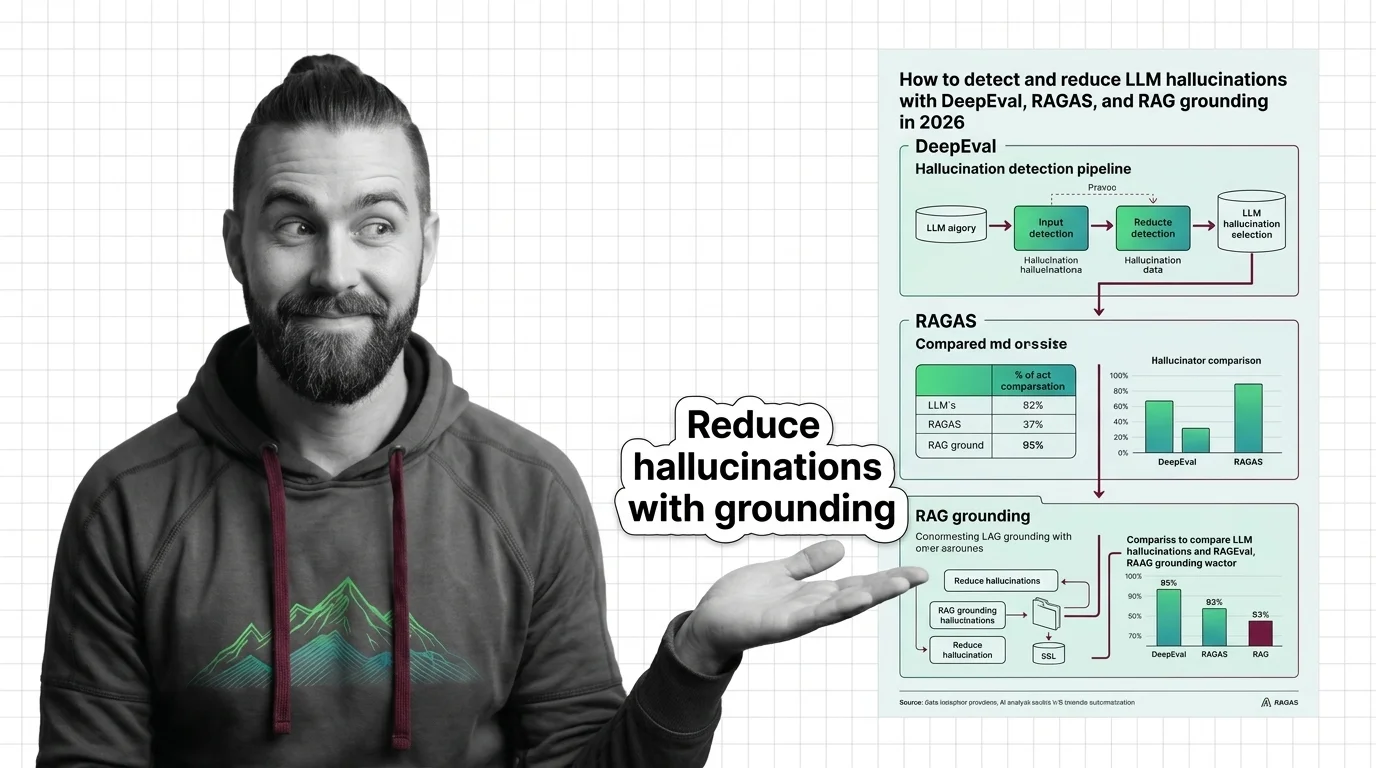

How to Detect and Reduce LLM Hallucinations with DeepEval, RAGAS, and RAG Grounding in 2026

Build a hallucination detection pipeline with DeepEval, RAGAS, and RAG grounding checks. Step-by-step framework for …

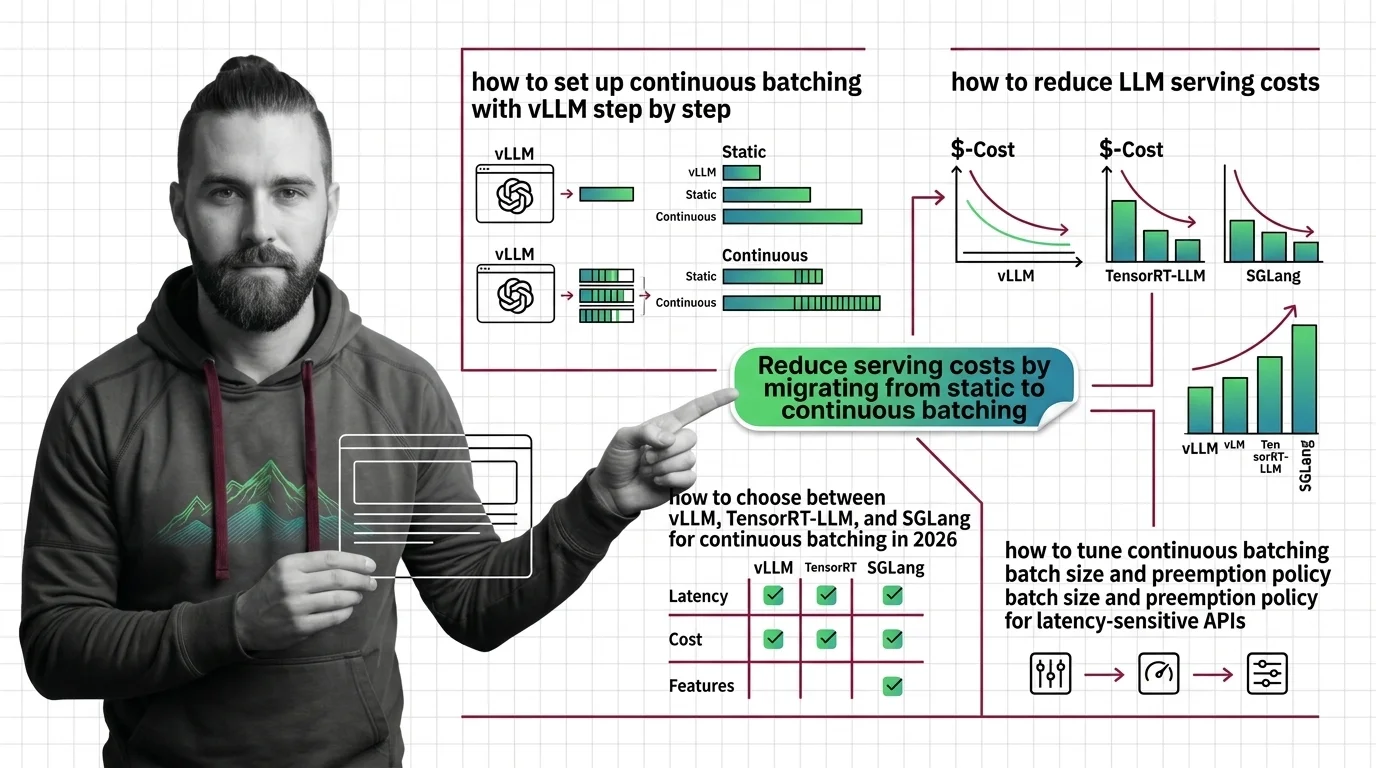

How to Deploy Continuous Batching with vLLM, TensorRT-LLM, and SGLang in 2026

Deploy continuous batching with vLLM, TensorRT-LLM, or SGLang using a parameter-by-parameter framework. Covers engine …

How to Choose and Configure Temperature, Top-P, and Min-P for Every LLM Use Case in 2026

Configure temperature, top-p, and min-p for code generation, creative writing, and RAG pipelines across OpenAI, …

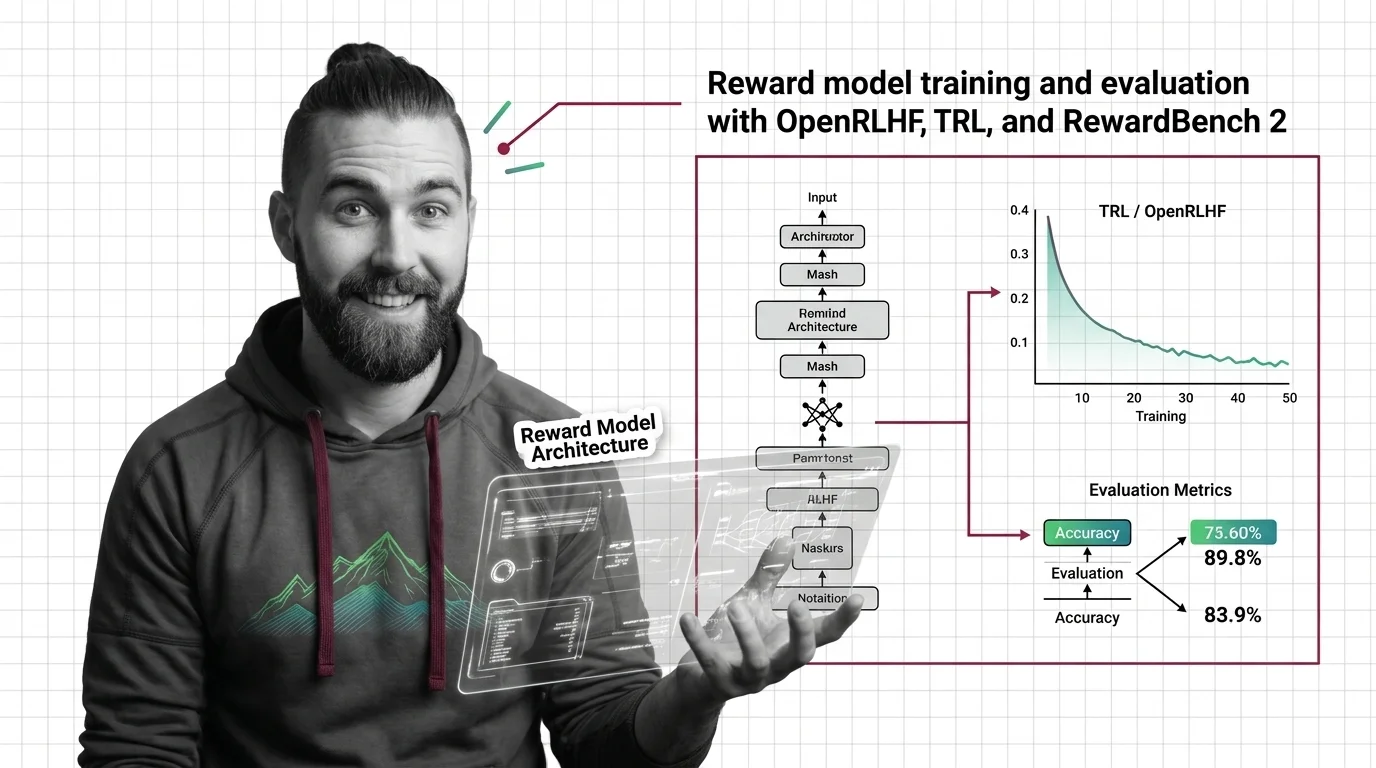

How to Train and Evaluate a Reward Model with OpenRLHF, TRL, and RewardBench 2 in 2026

Train a reward model using TRL or OpenRLHF, then evaluate with RewardBench 2. Spec-first guide covering architecture, …

How to Red Team an LLM with Promptfoo, PyRIT, and Garak in 2026

Build an LLM red teaming pipeline with Promptfoo, PyRIT, and Garak. Map attack surfaces, run multi-turn tests, and score …

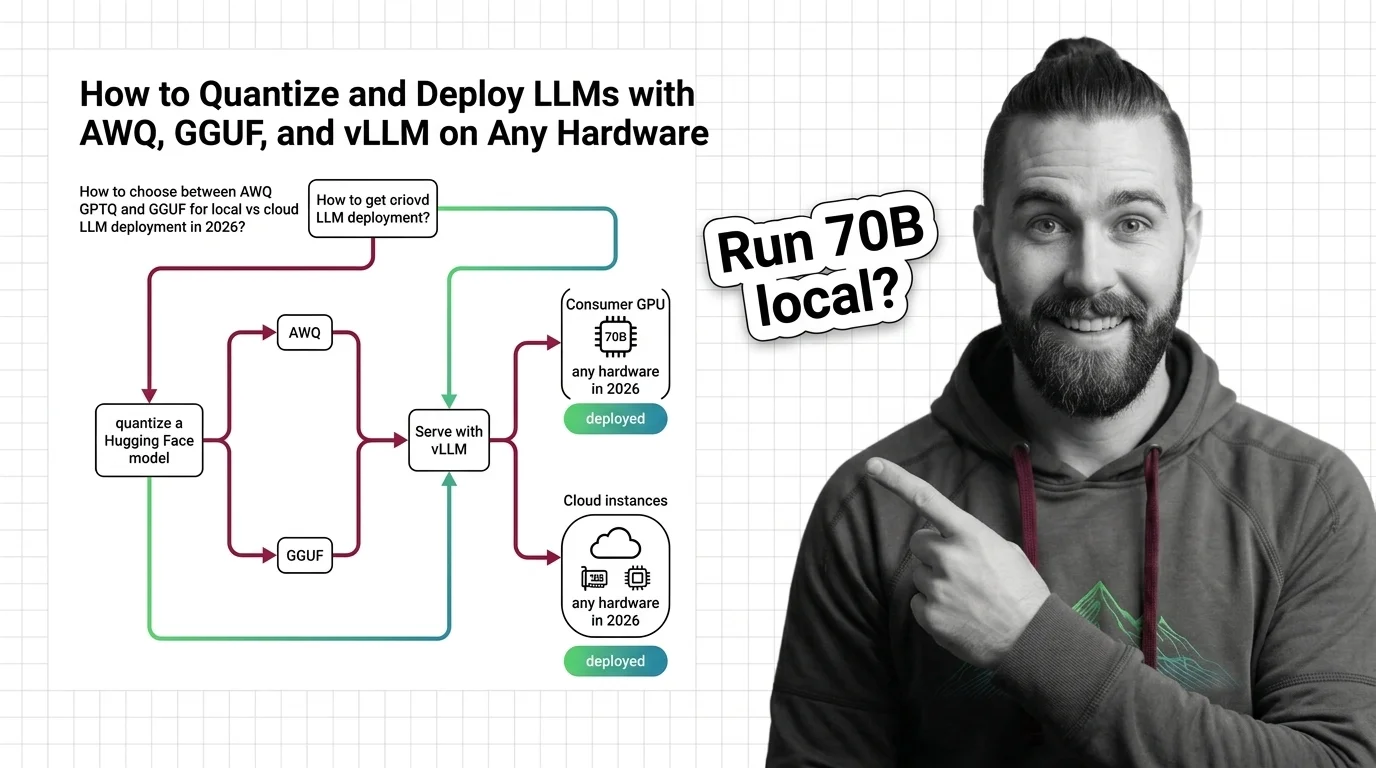

How to Quantize and Deploy LLMs with AWQ, GGUF, and vLLM on Any Hardware in 2026

Choose the right LLM quantization format for your hardware. AWQ, GPTQ, and GGUF compared, plus current vLLM and …

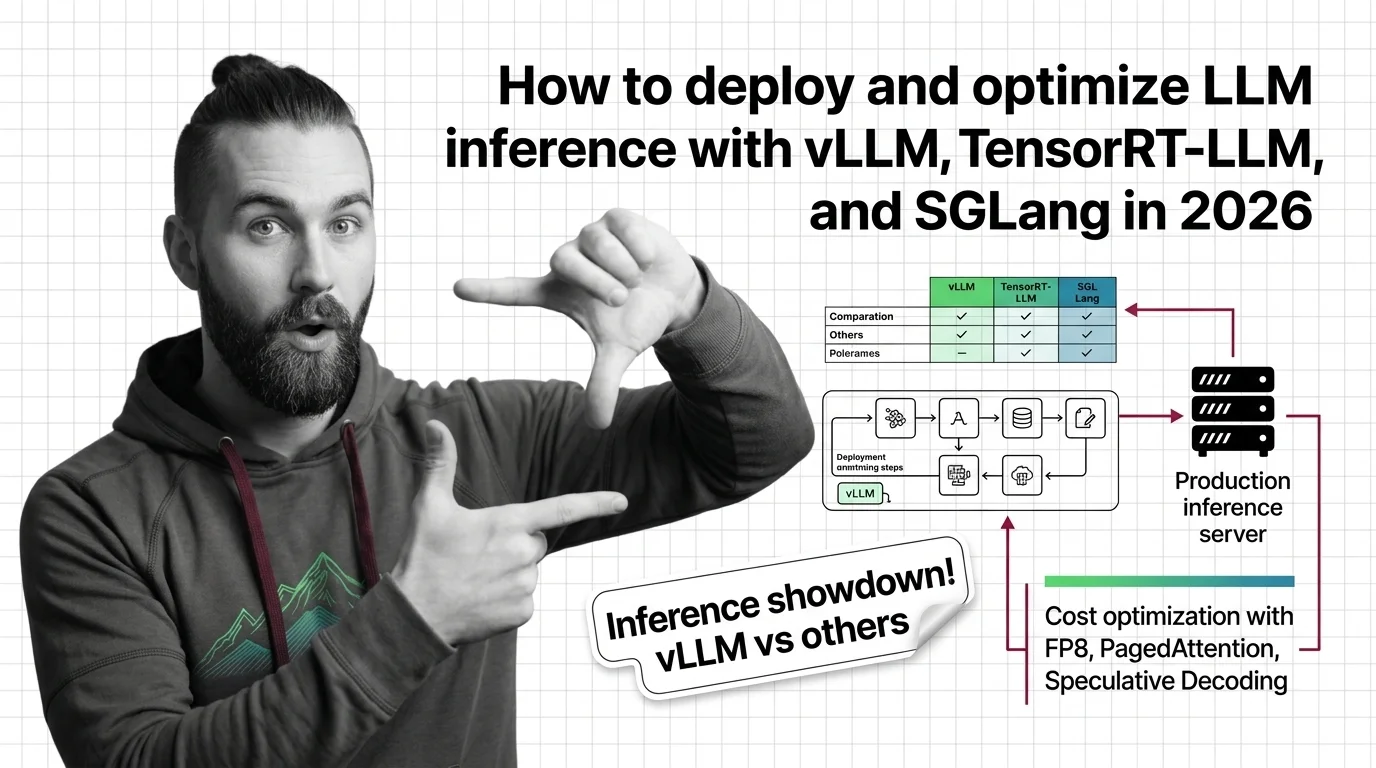

How to Deploy and Optimize LLM Inference with vLLM, TensorRT-LLM, and SGLang in 2026

Deploy production LLM inference with vLLM, TensorRT-LLM, or SGLang. Covers workload profiling, engine selection, FP8 …

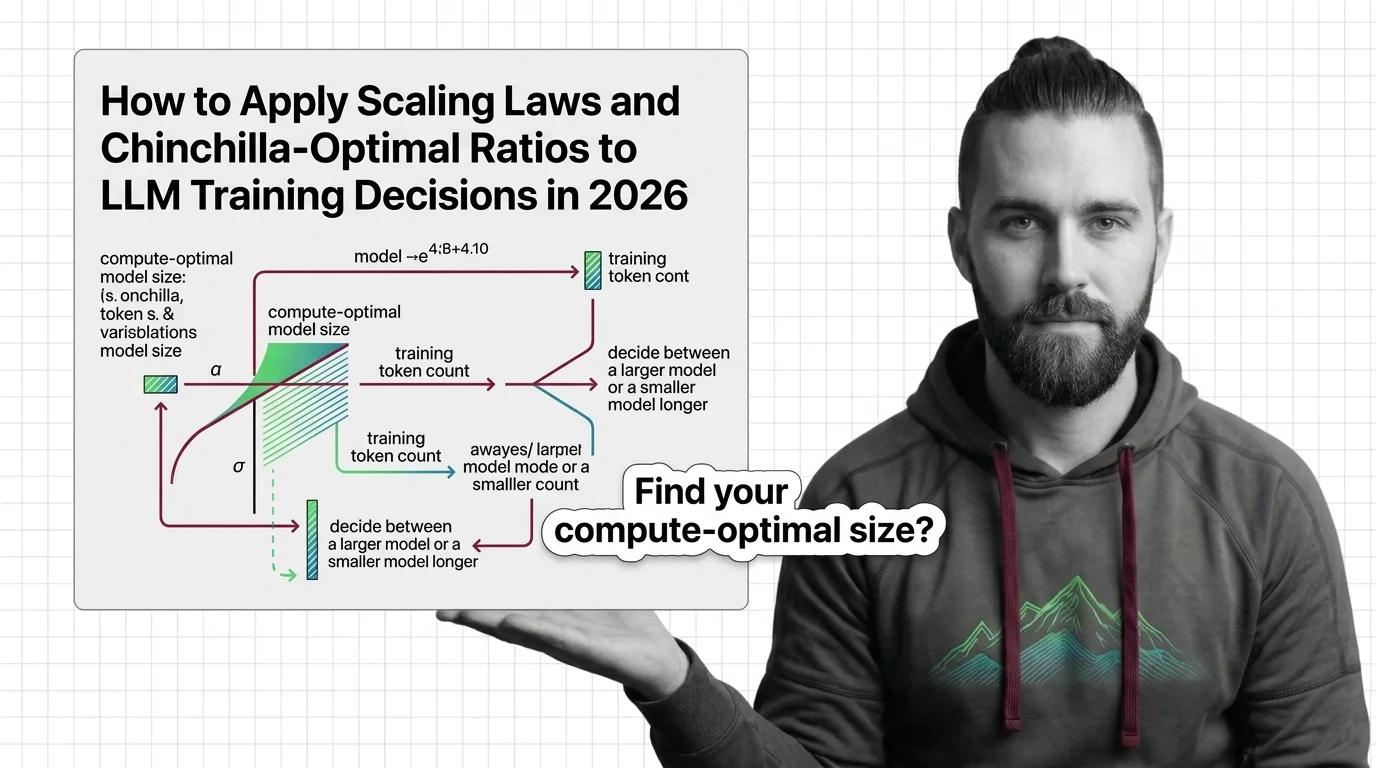

How to Apply Scaling Laws and Chinchilla-Optimal Ratios to LLM Training Decisions in 2026

Apply scaling laws and Chinchilla-optimal ratios to real LLM training decisions. Compute budgeting, model sizing, and …

How to Train a Language Model with RLHF Using OpenRLHF and TRL in 2026

Decompose, specify, and validate a full RLHF training pipeline with OpenRLHF and TRL in 2026. Covers SFT, reward …

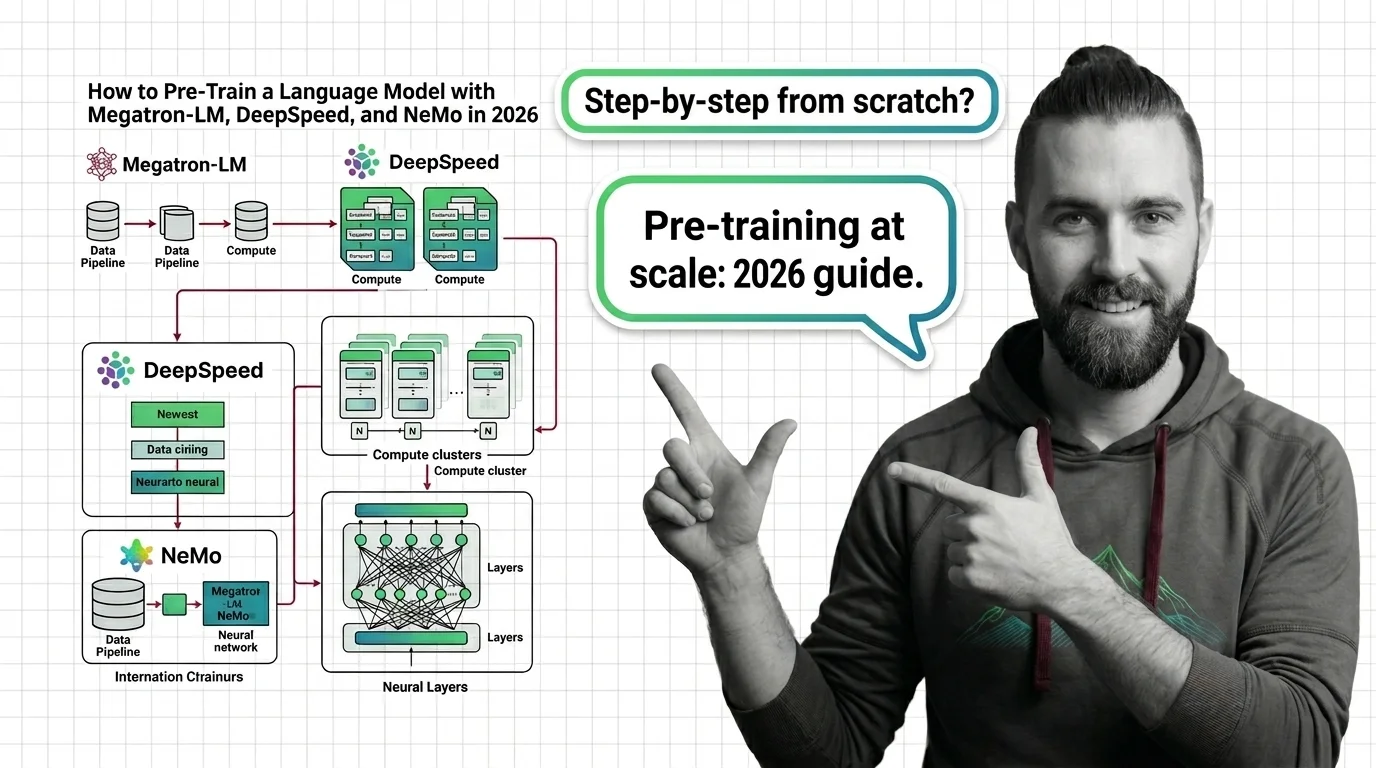

How to Pre-Train a Language Model with Megatron-LM, DeepSpeed, and NeMo in 2026

Pre-train a language model using Megatron-LM, DeepSpeed, and Megatron Bridge in 2026. Specification-first guide to …

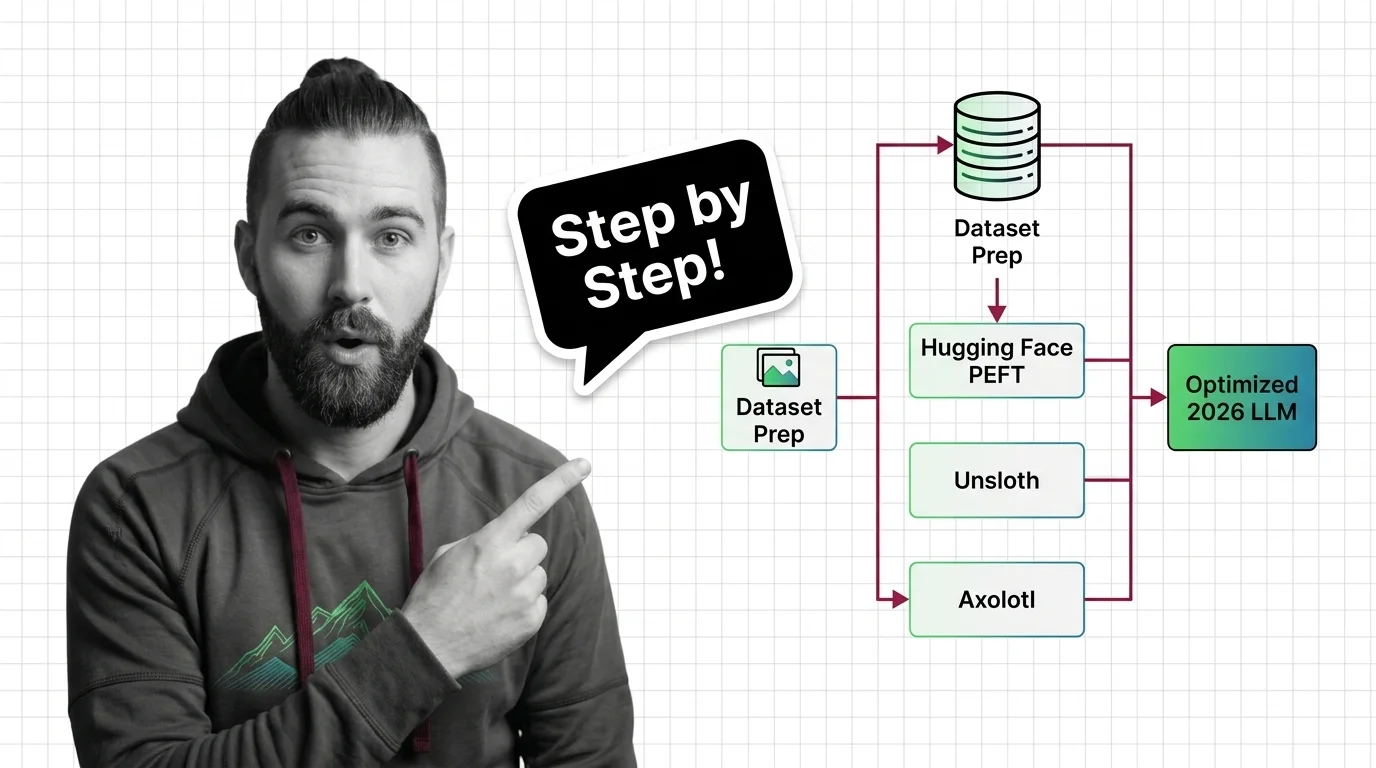

How to Fine-Tune an Open-Source LLM with Hugging Face PEFT, Unsloth, and Axolotl in 2026

Fine-tune open-source LLMs with PEFT, Unsloth, and Axolotl using a specification-first framework. Dataset prep, LoRA …

How to Fine-Tune and Deploy Sentence Transformers for Semantic Search and Clustering in 2026

Fine-tune Sentence Transformers v5.3 for semantic search and clustering. Covers MultipleNegativesRankingLoss, Matryoshka …

How to Build a Multi-Vector Retrieval Pipeline with RAGatouille, ColBERTv2, and Qdrant in 2026

Build a production multi-vector retrieval pipeline with ColBERTv2, RAGatouille, and Qdrant. Specification-first …

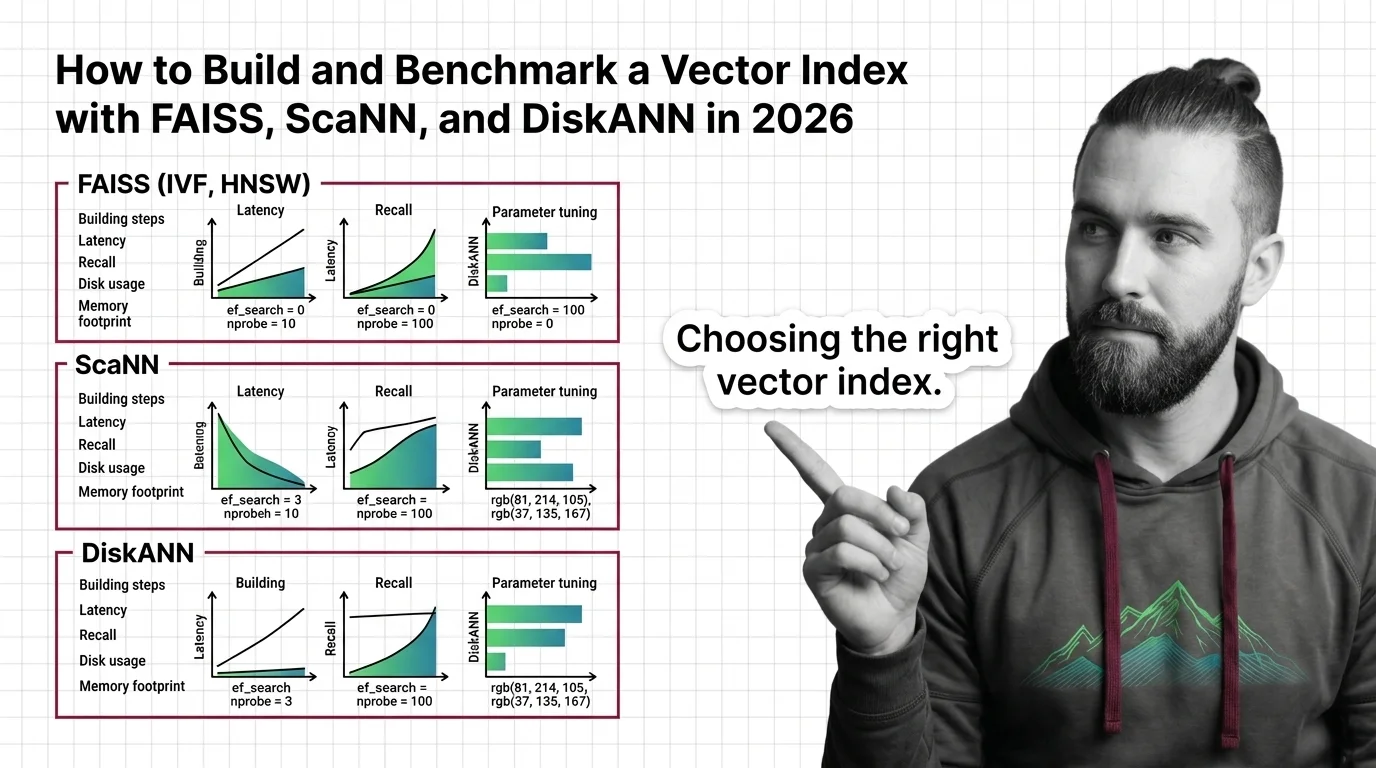

How to Build and Benchmark a Vector Index with FAISS, ScaNN, and DiskANN in 2026

Build and benchmark vector indexes with FAISS, ScaNN, and DiskANN. Choose index types by dataset size, tune parameters …

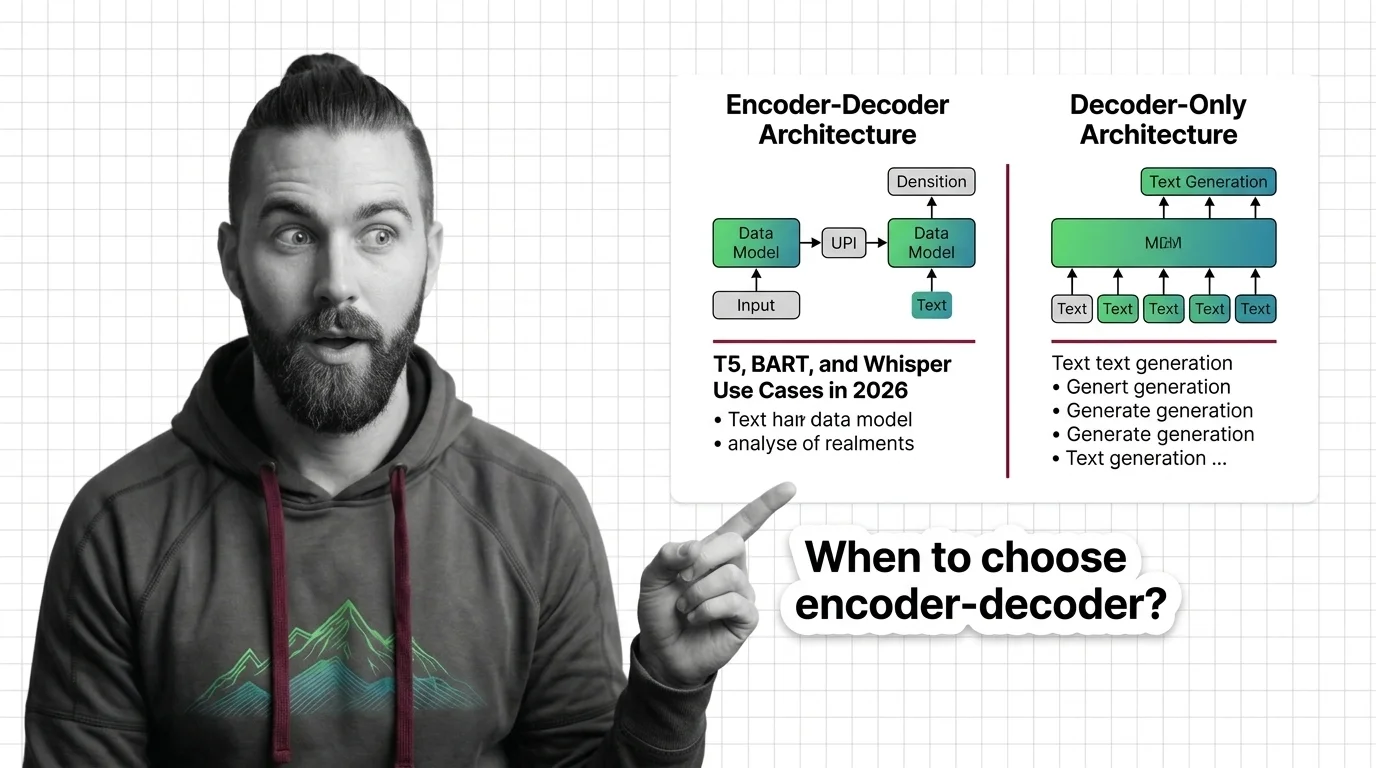

When to Choose Encoder-Decoder Over Decoder-Only: T5, BART, and Whisper Use Cases in 2026

Learn when encoder-decoder models like T5, BART, and Whisper outperform decoder-only alternatives. A spec framework for …

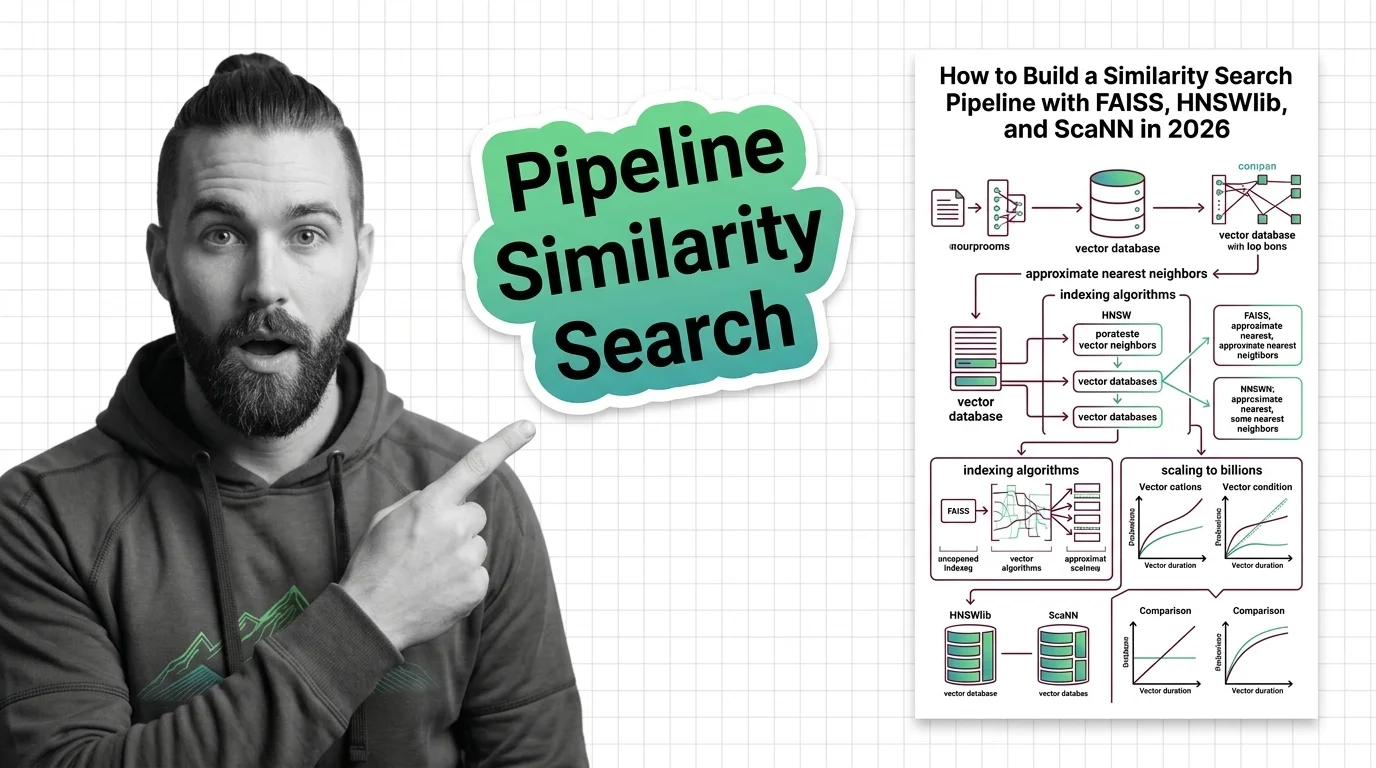

Similarity Search Pipeline: FAISS, HNSWlib, ScaNN (2026)

Select between FAISS, HNSWlib, and ScaNN for production vector search. Specification-first approach covering index …

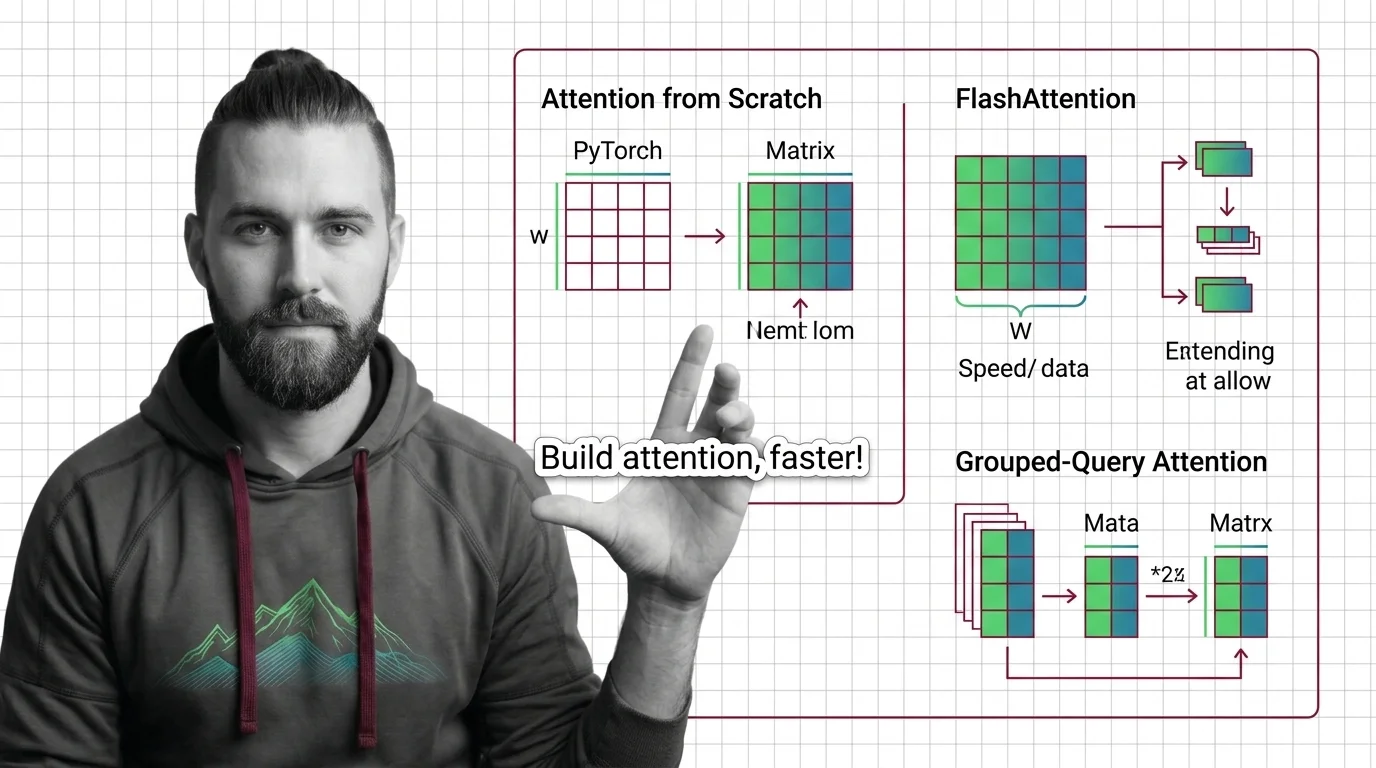

Implementing Attention from Scratch: PyTorch, FlashAttention, and Grouped-Query Optimization

Spec your attention implementation before writing code. Learn to decompose QKV projections, configure FlashAttention …

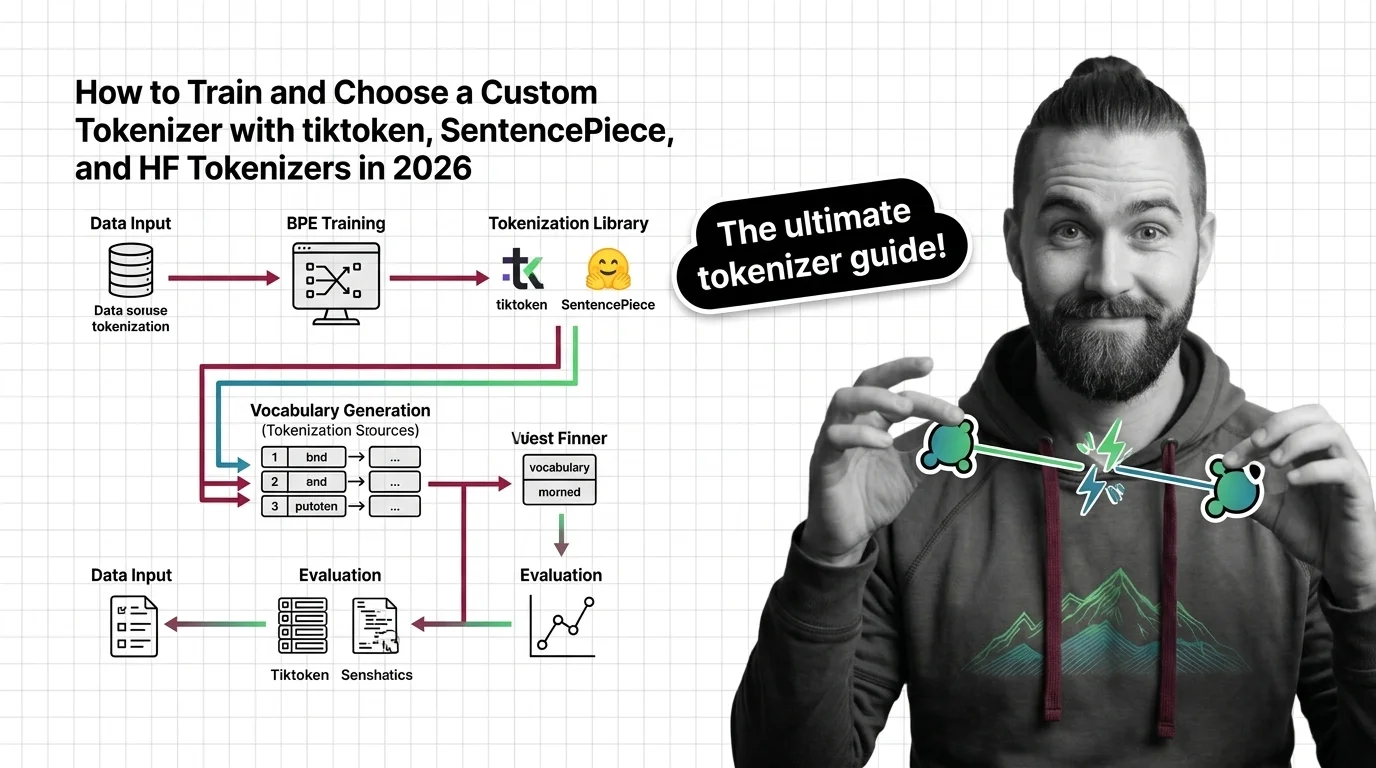

How to Train and Choose a Custom Tokenizer with tiktoken, SentencePiece, and HF Tokenizers in 2026

Learn how to choose, train, and validate a custom tokenizer using tiktoken, SentencePiece, and HF Tokenizers with a …

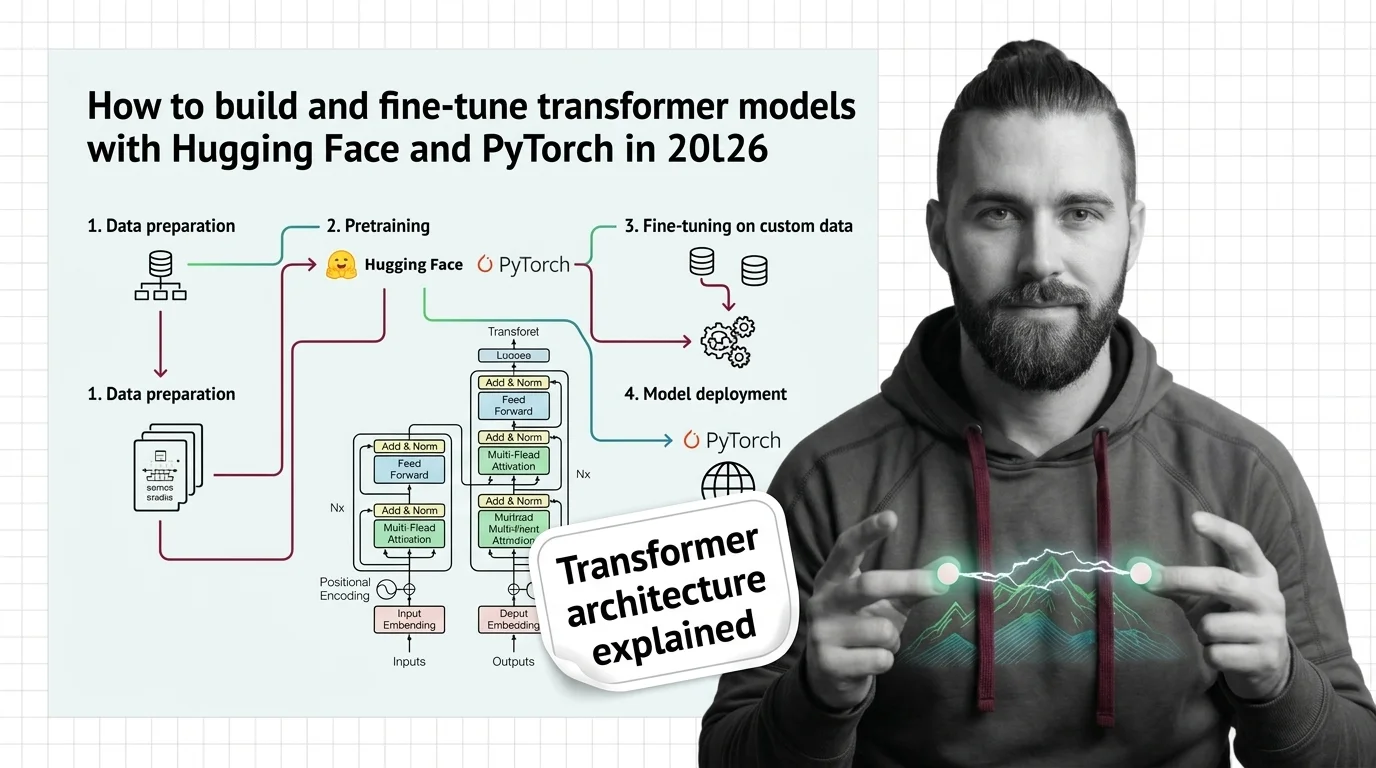

How to Build and Fine-Tune Transformer Models with Hugging Face and PyTorch in 2026

Build and fine-tune transformer models the specification-first way. PyTorch 2.10, Hugging Face Transformers v5, and the …

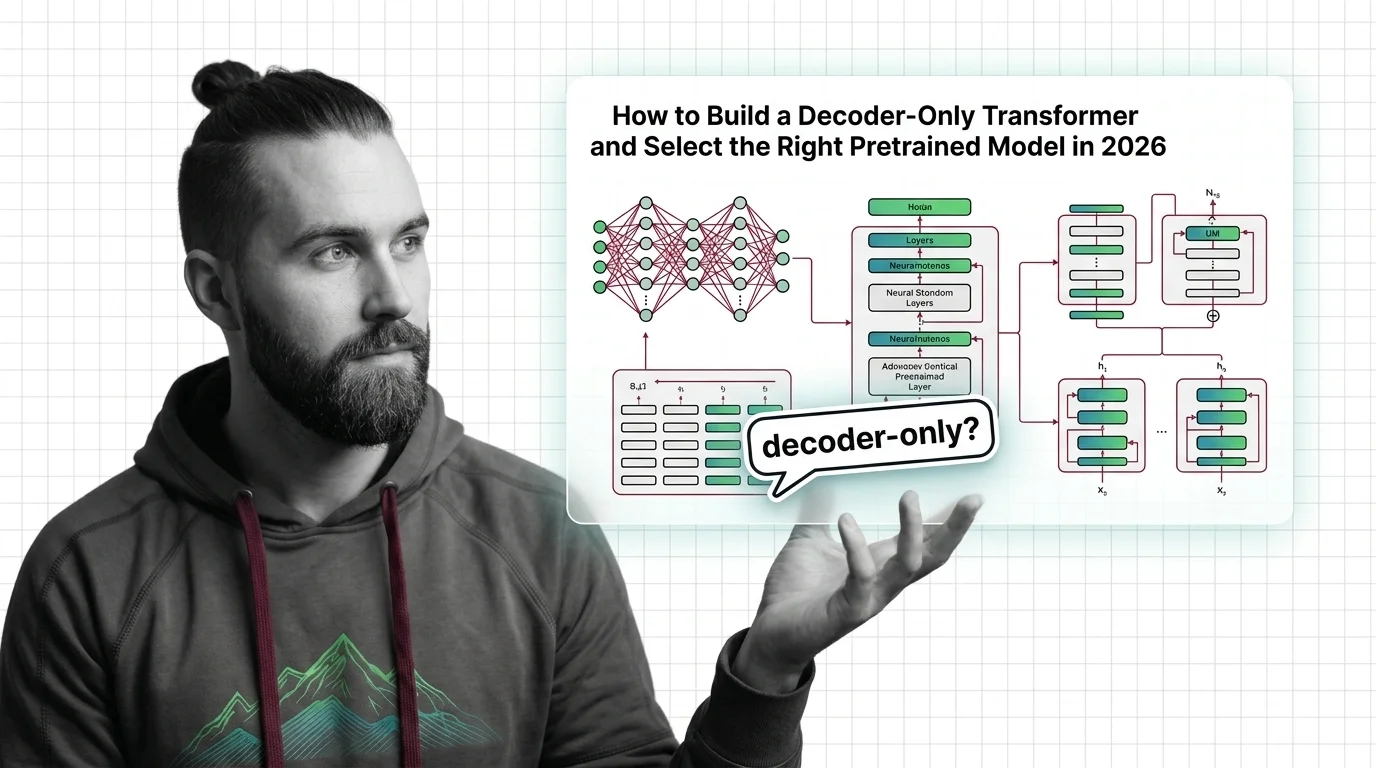

How to Build a Decoder-Only Transformer and Select the Right Pretrained Model in 2026

Build a decoder-only transformer with correct causal masking in PyTorch, then pick between GPT-5, LLaMA 4, and DeepSeek …

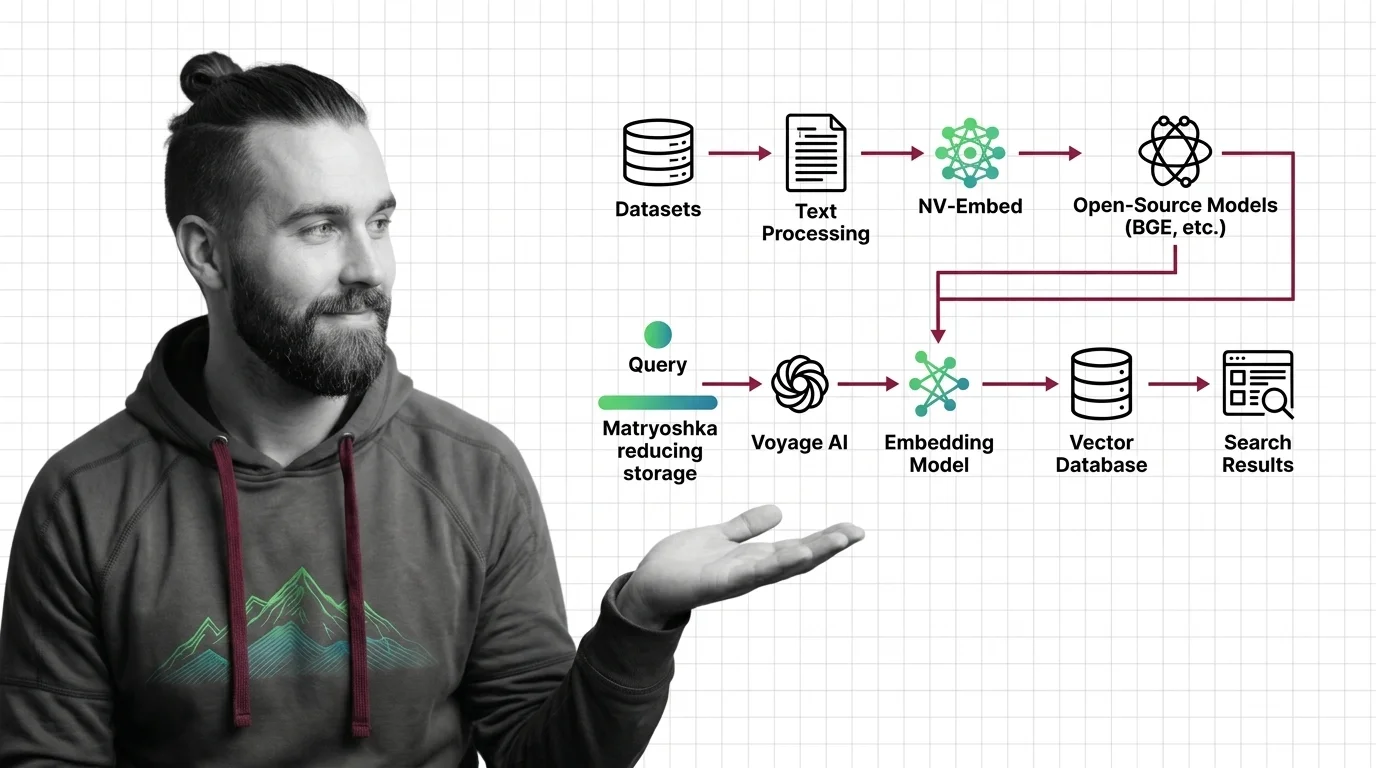

Embedding Models: Voyage 4 vs NV-Embed-v2 vs BGE-M3 2026

Choose between Voyage 4, NV-Embed-v2, and BGE-M3. Includes Matryoshka embeddings and cost optimization strategies for …

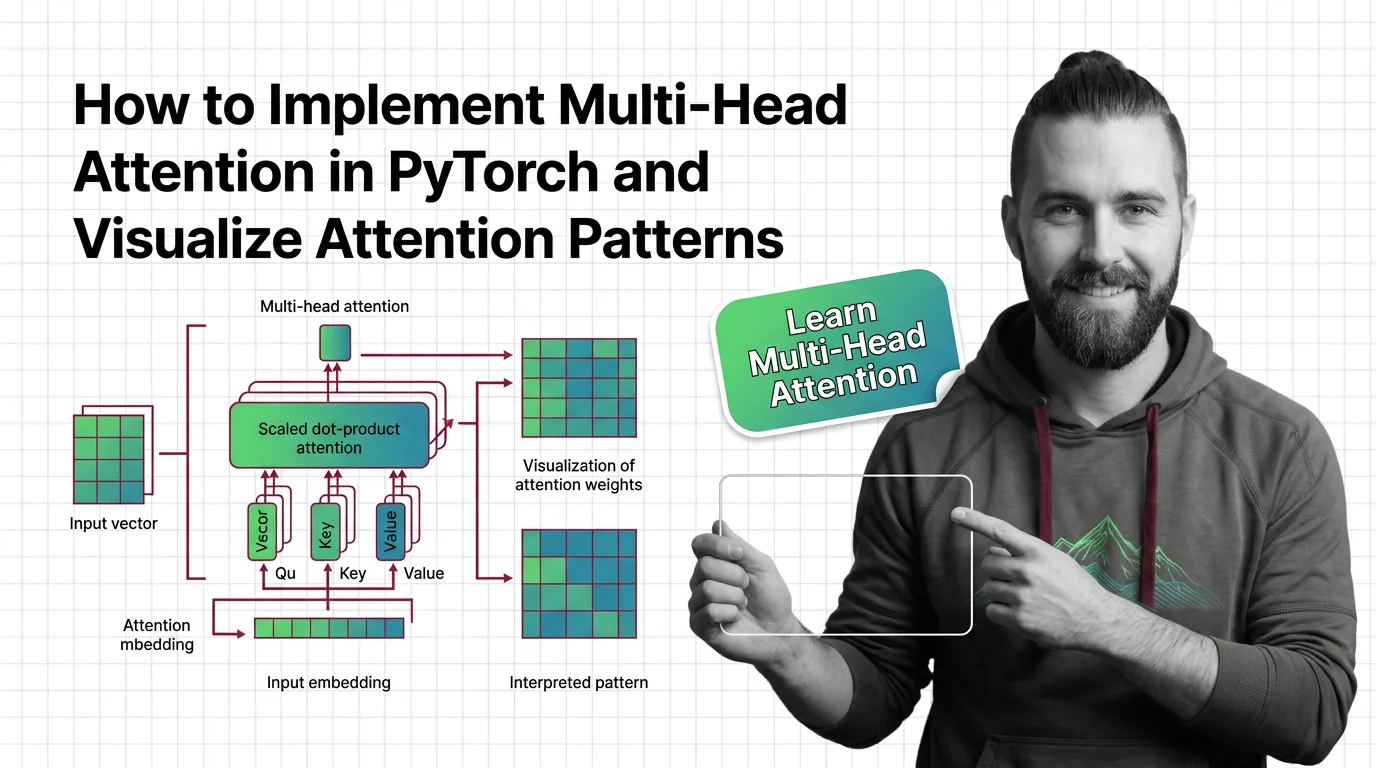

How to Implement Multi-Head Attention in PyTorch and Visualize Attention Patterns

Specify multi-head attention for AI-assisted PyTorch builds. Decompose QKV projections, constrain SDPA kernels, and …

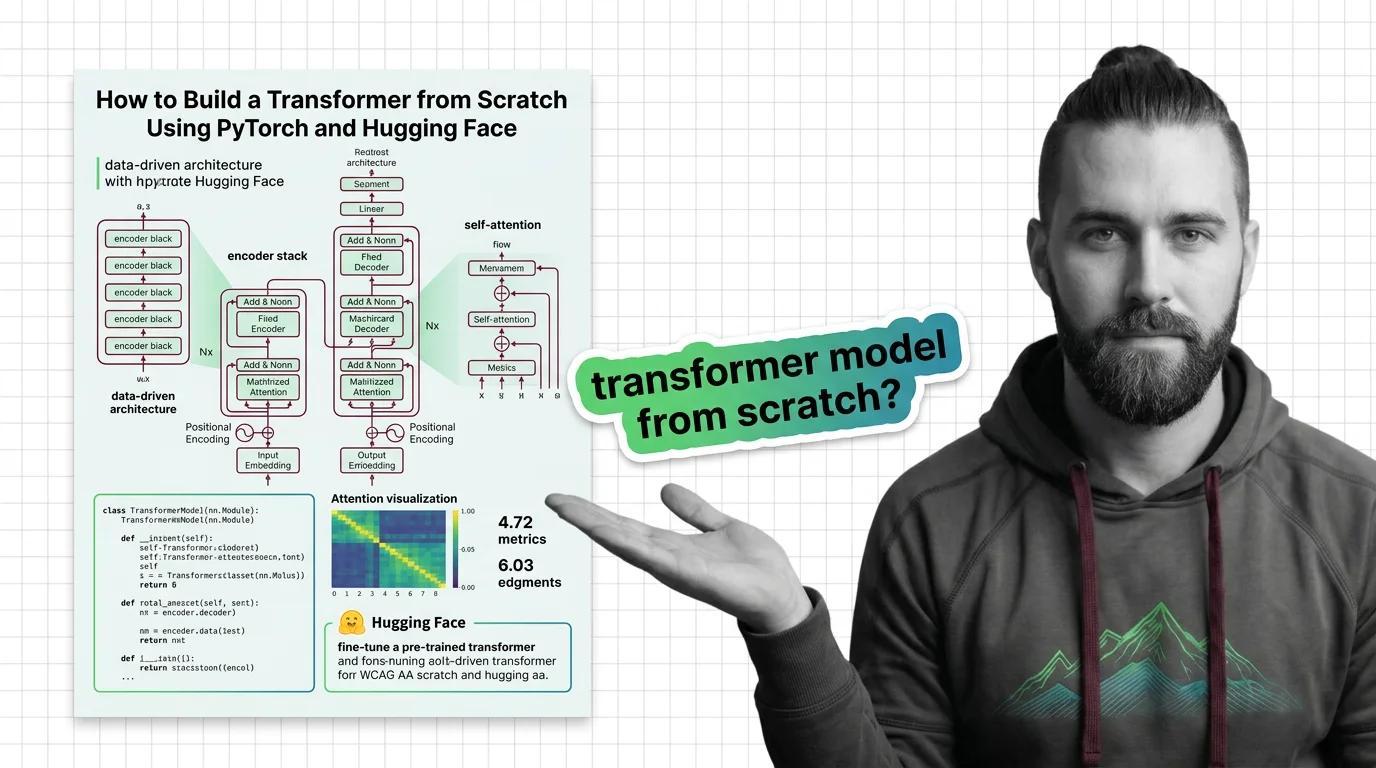

How to Build a Transformer from Scratch Using PyTorch and Hugging Face

Specify a transformer from scratch in PyTorch and Hugging Face. Decompose attention, embeddings, and training loops into …