How to Fine-Tune and Deploy Sentence Transformers for Semantic Search and Clustering in 2026

Fine-tune Sentence Transformers v5.3 for semantic search and clustering. Covers MultipleNegativesRankingLoss, Matryoshka …

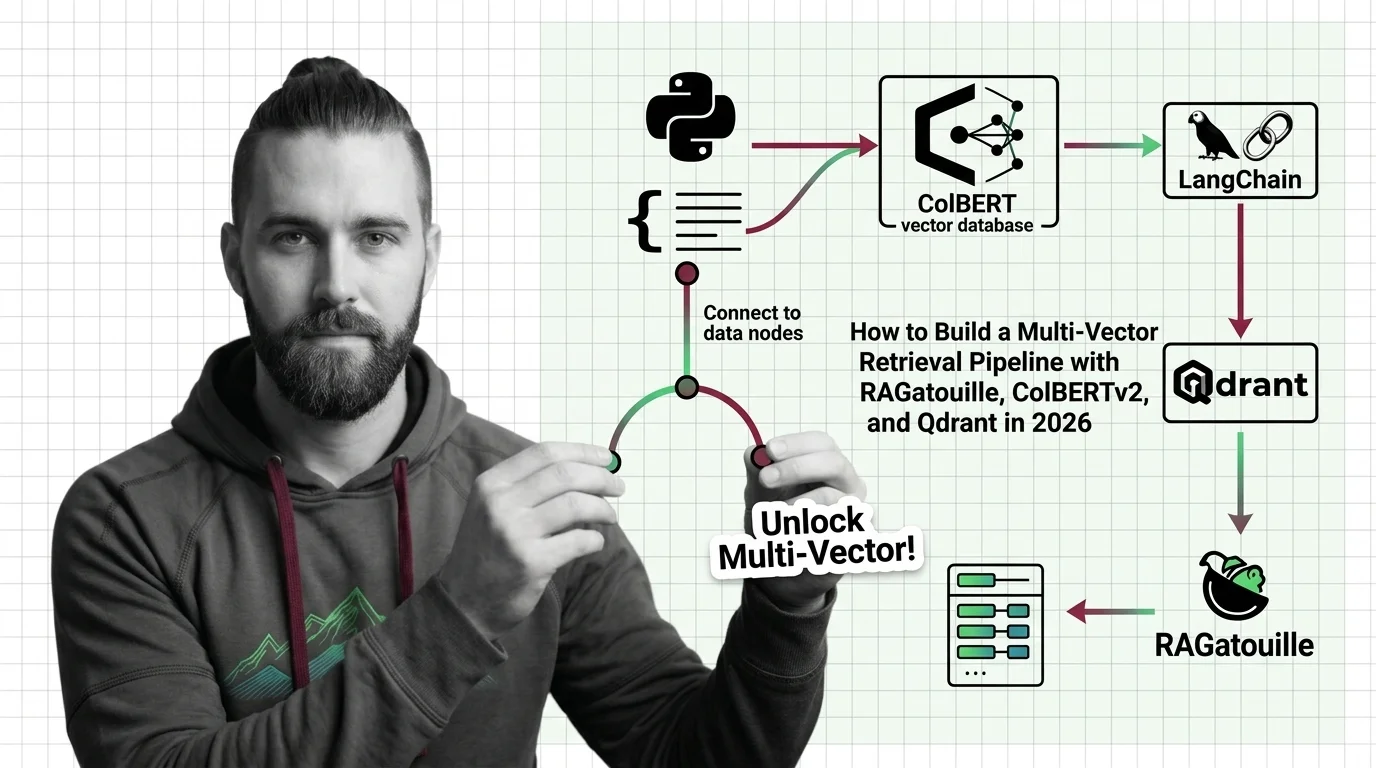

How to Build a Multi-Vector Retrieval Pipeline with RAGatouille, ColBERTv2, and Qdrant in 2026

Build a production multi-vector retrieval pipeline with ColBERTv2, RAGatouille, and Qdrant. Specification-first …

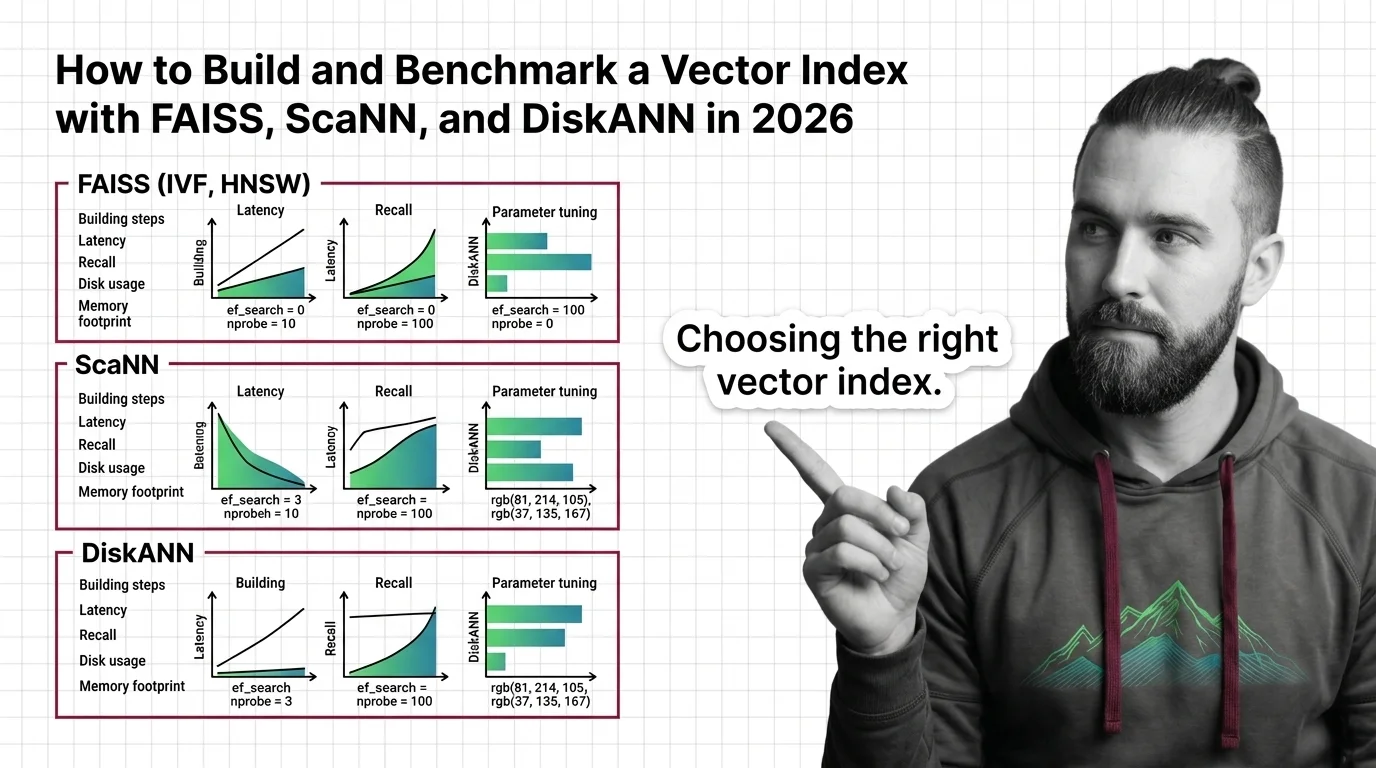

How to Build and Benchmark a Vector Index with FAISS, ScaNN, and DiskANN in 2026

Build and benchmark vector indexes with FAISS, ScaNN, and DiskANN. Choose index types by dataset size, tune parameters …

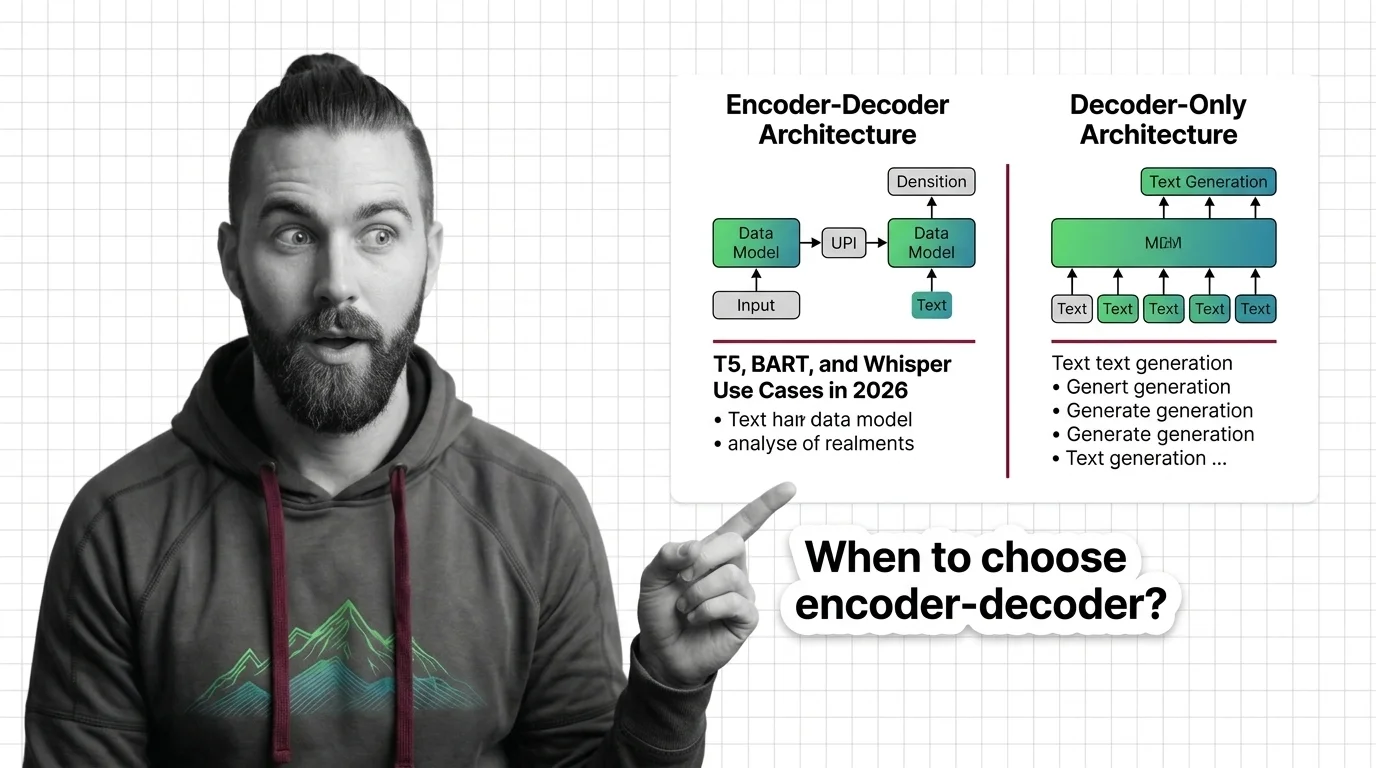

When to Choose Encoder-Decoder Over Decoder-Only: T5, BART, and Whisper Use Cases in 2026

Learn when encoder-decoder models like T5, BART, and Whisper outperform decoder-only alternatives. A spec framework for …

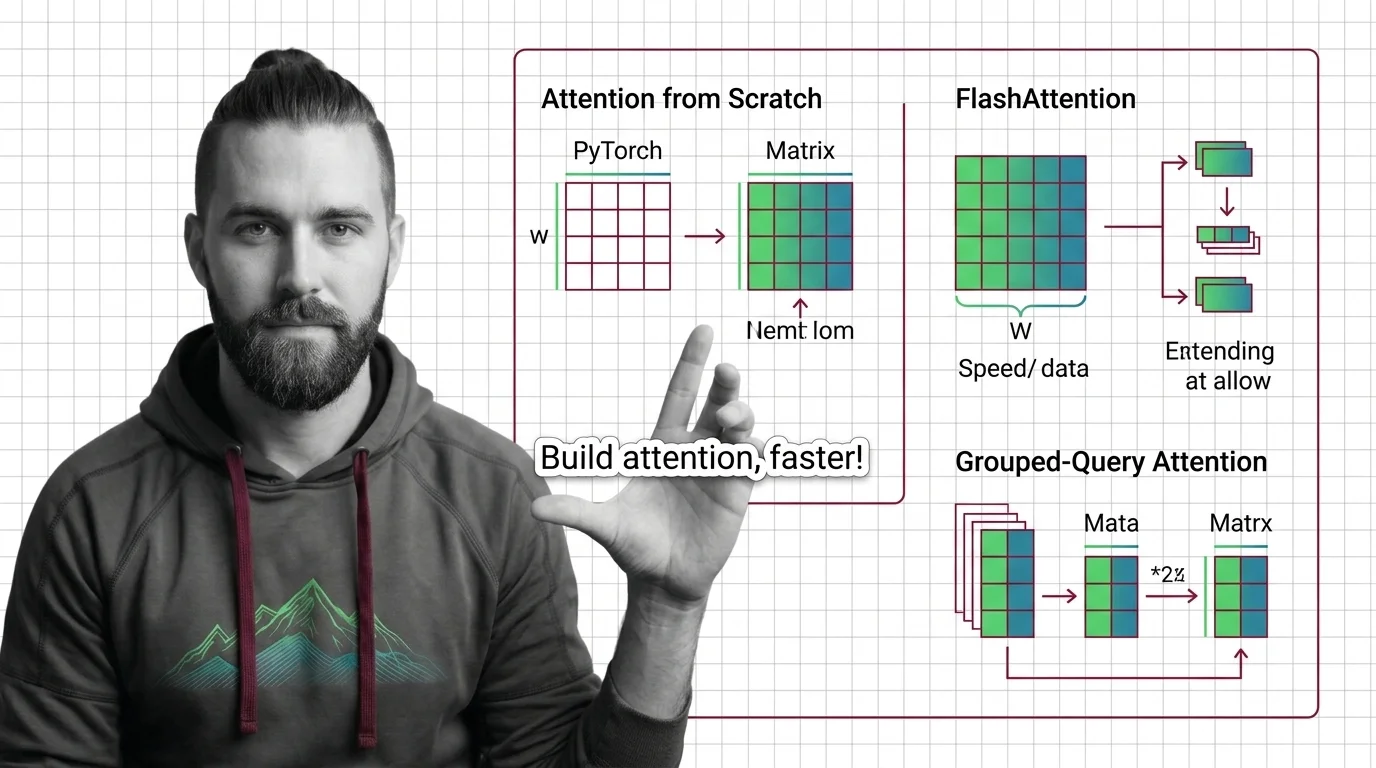

Implementing Attention from Scratch: PyTorch, FlashAttention, and Grouped-Query Optimization

Spec your attention implementation before writing code. Learn to decompose QKV projections, configure FlashAttention …

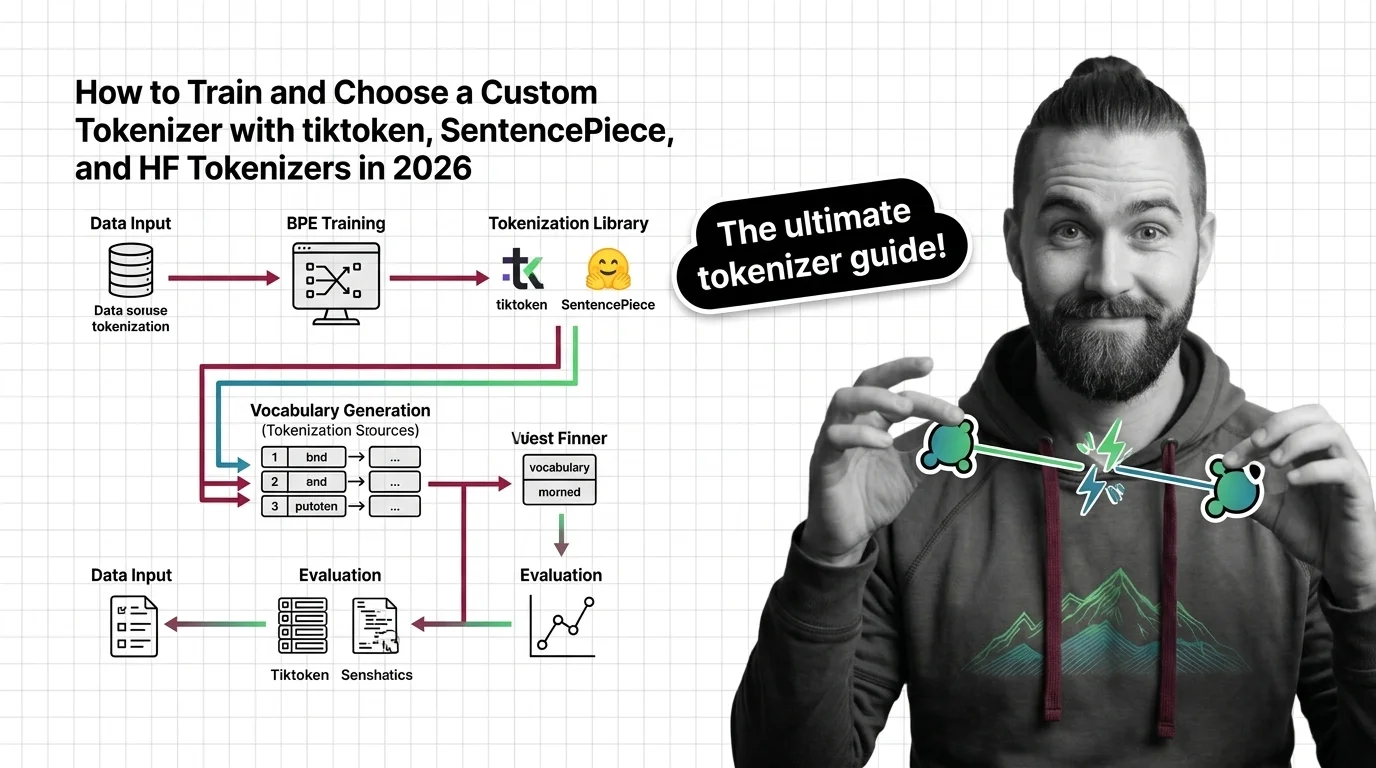

How to Train and Choose a Custom Tokenizer with tiktoken, SentencePiece, and HF Tokenizers in 2026

Learn how to choose, train, and validate a custom tokenizer using tiktoken, SentencePiece, and HF Tokenizers with a …

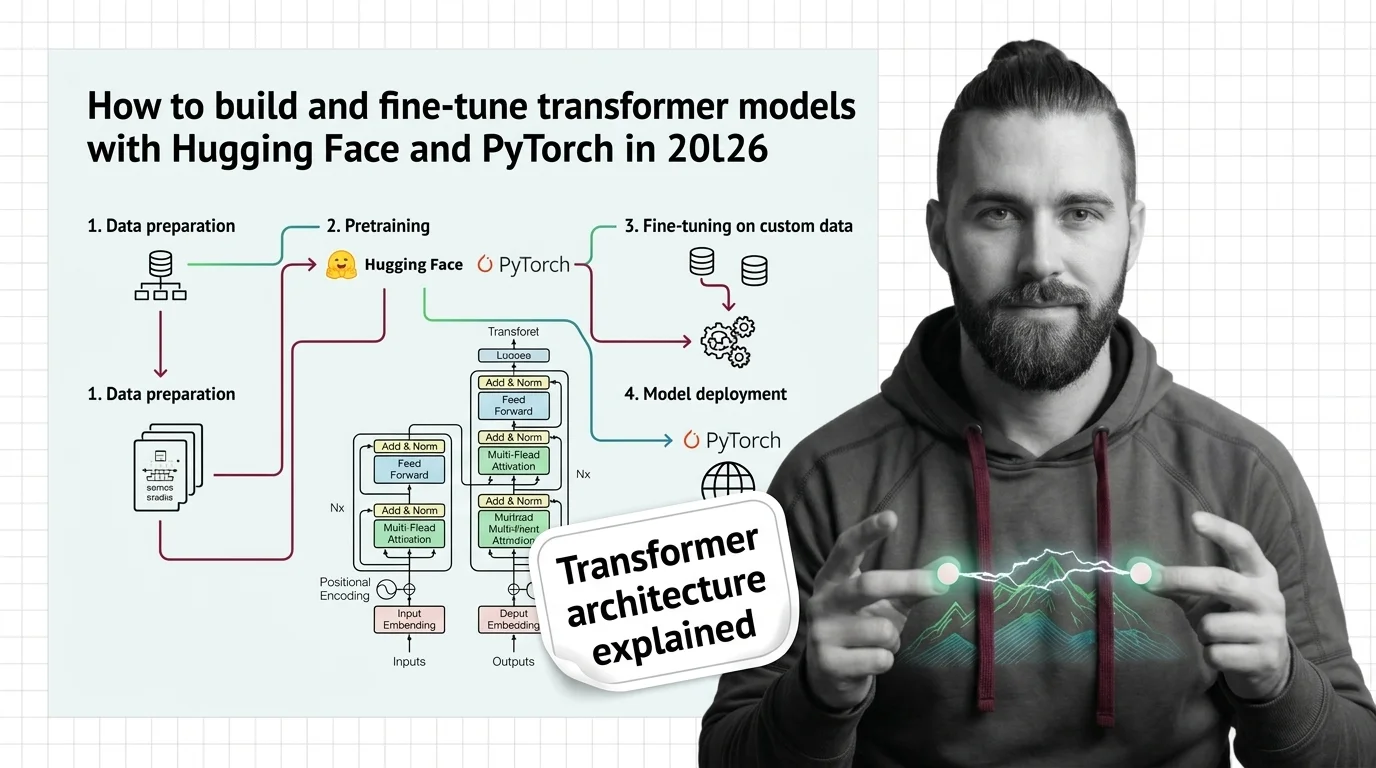

How to Build and Fine-Tune Transformer Models with Hugging Face and PyTorch in 2026

Build and fine-tune transformer models the specification-first way. PyTorch 2.10, Hugging Face Transformers v5, and the …

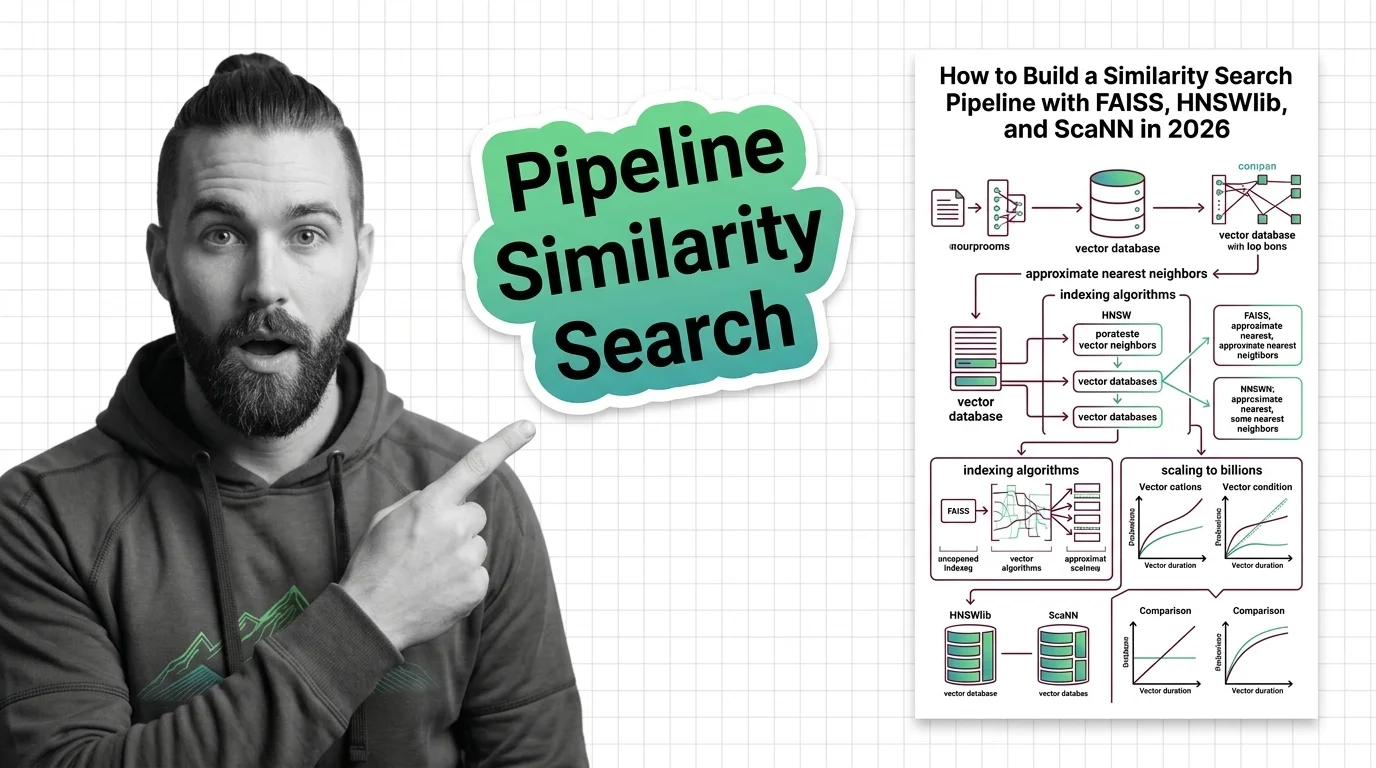

How to Build a Similarity Search Pipeline with FAISS, HNSWlib, and ScaNN in 2026

Build a similarity search pipeline with FAISS, HNSWlib, or ScaNN using a specification-first approach. Covers index …

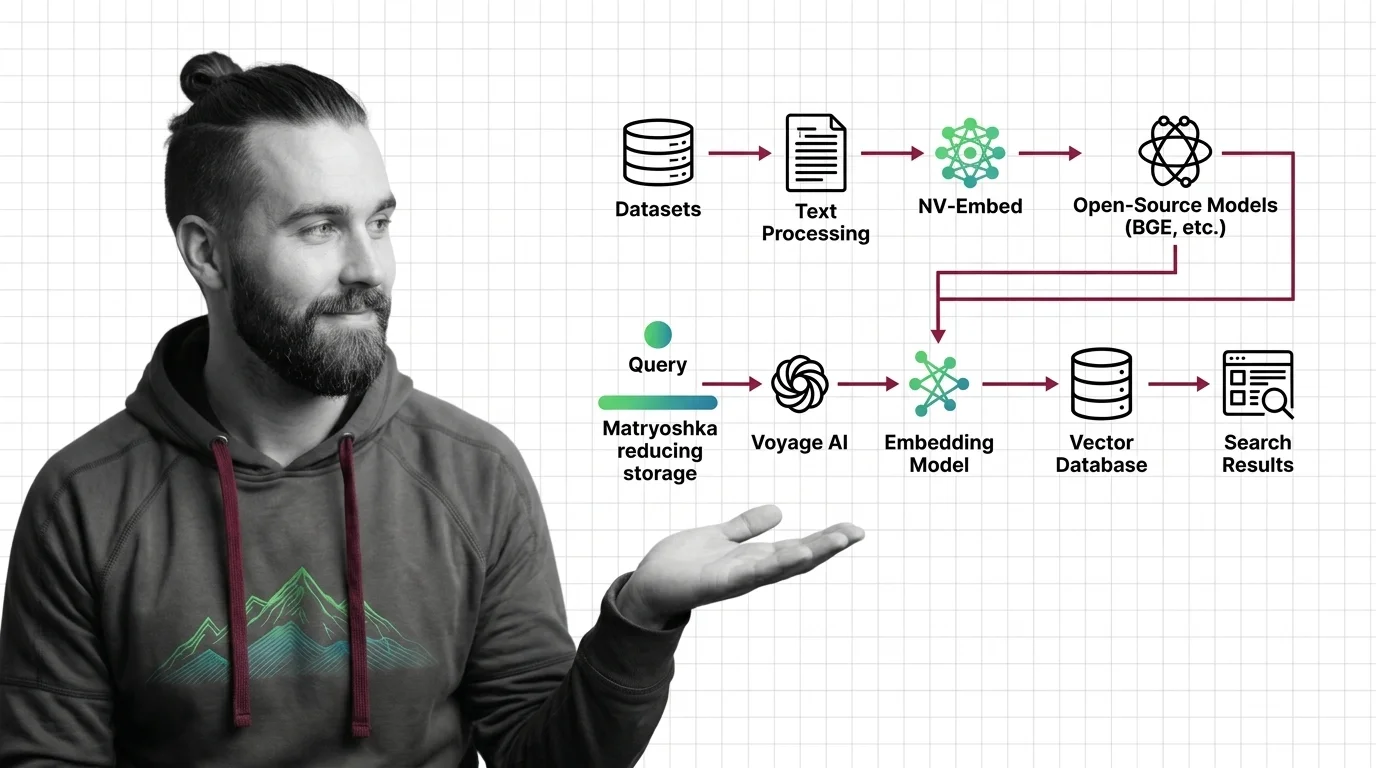

How to Build a Semantic Search Pipeline with Voyage AI, NV-Embed, and Open-Source Models in 2026

Specification-first framework for building semantic search in 2026. Choose between Voyage 4, NV-Embed-v2, and BGE-M3 …

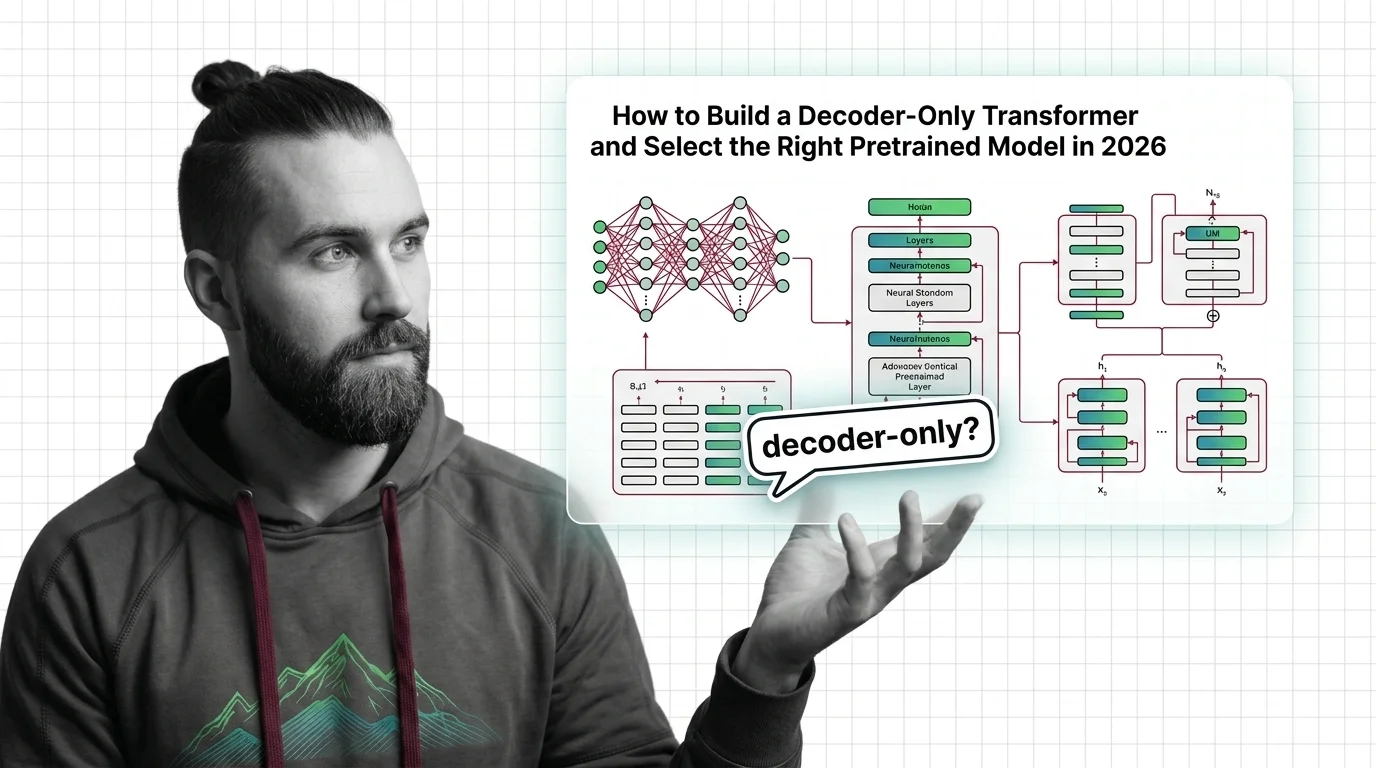

How to Build a Decoder-Only Transformer and Select the Right Pretrained Model in 2026

Build a decoder-only transformer with correct causal masking in PyTorch, then pick between GPT-5, LLaMA 4, and DeepSeek …

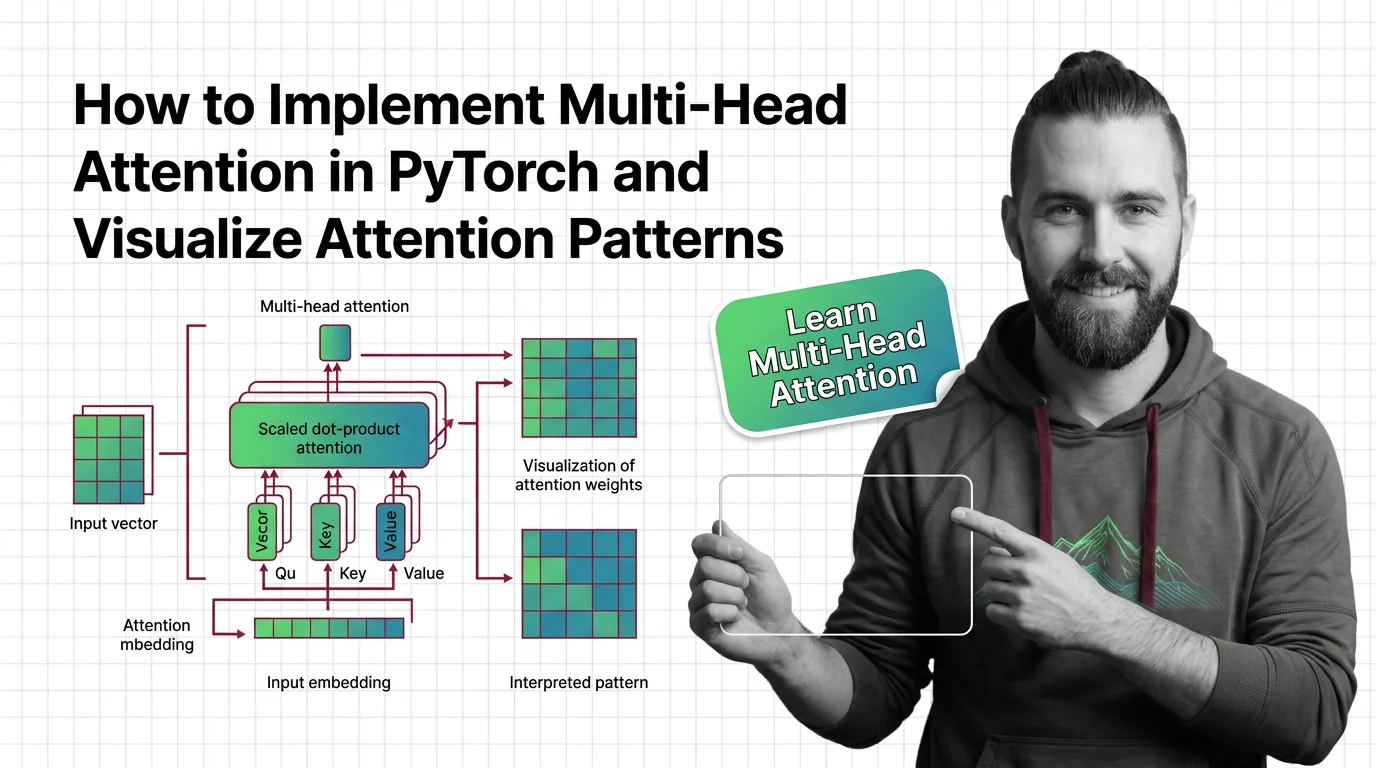

How to Implement Multi-Head Attention in PyTorch and Visualize Attention Patterns

Specify multi-head attention for AI-assisted PyTorch builds. Decompose QKV projections, constrain SDPA kernels, and …

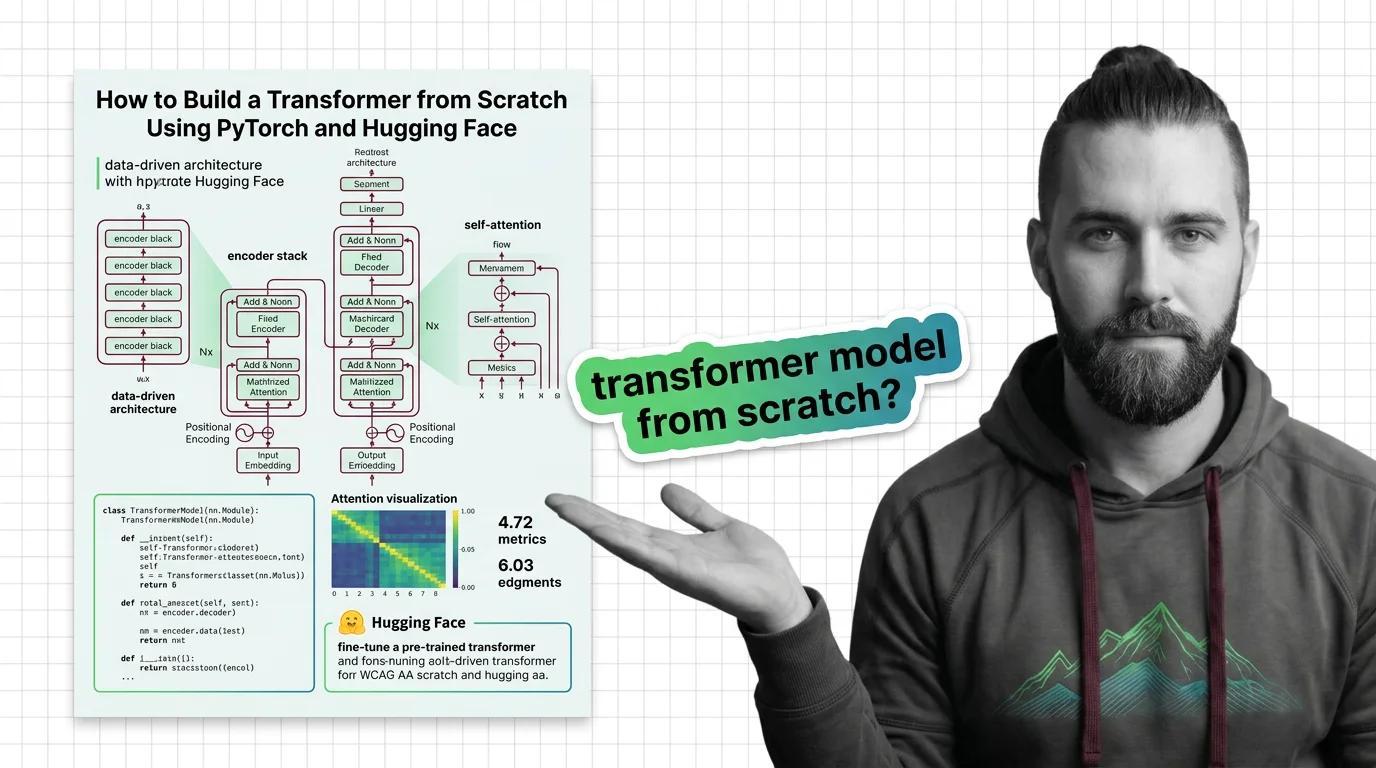

How to Build a Transformer from Scratch Using PyTorch and Hugging Face

Specify a transformer from scratch in PyTorch and Hugging Face. Decompose attention, embeddings, and training loops into …