LLM Foundations

Core mechanics of large language models — training, inference, tokenization, and the mathematics of next-token prediction.

What Are Agent Guardrails? How Permission Systems Constrain AI

Agent guardrails enforce permission boundaries on autonomous AI. Learn how Claude SDK, NeMo, and Llama Guard constrain …

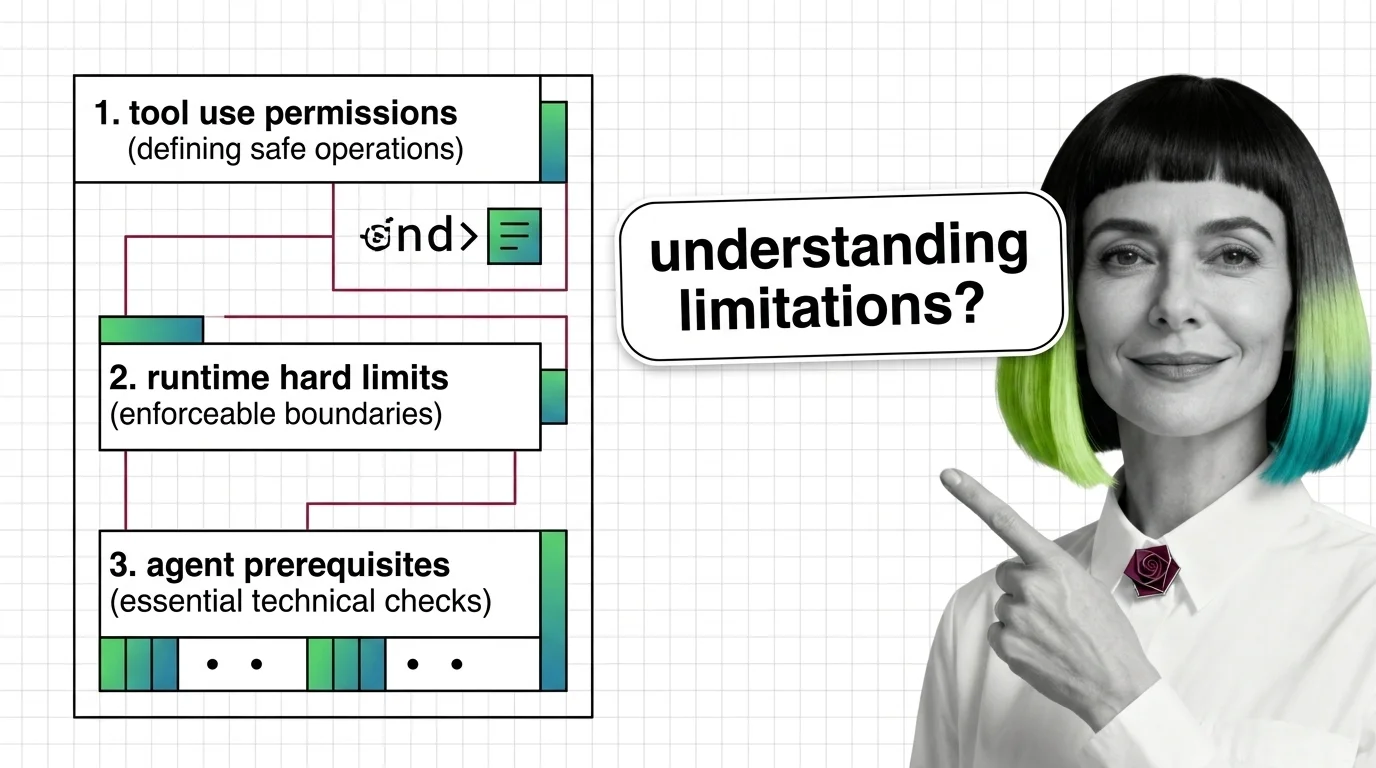

Prerequisites for Agent Guardrails: Tool Use and Runtime Limits

Agent guardrails are runtime classifiers wrapped around tool-use loops — useful, partial, and demonstrably evadable. …

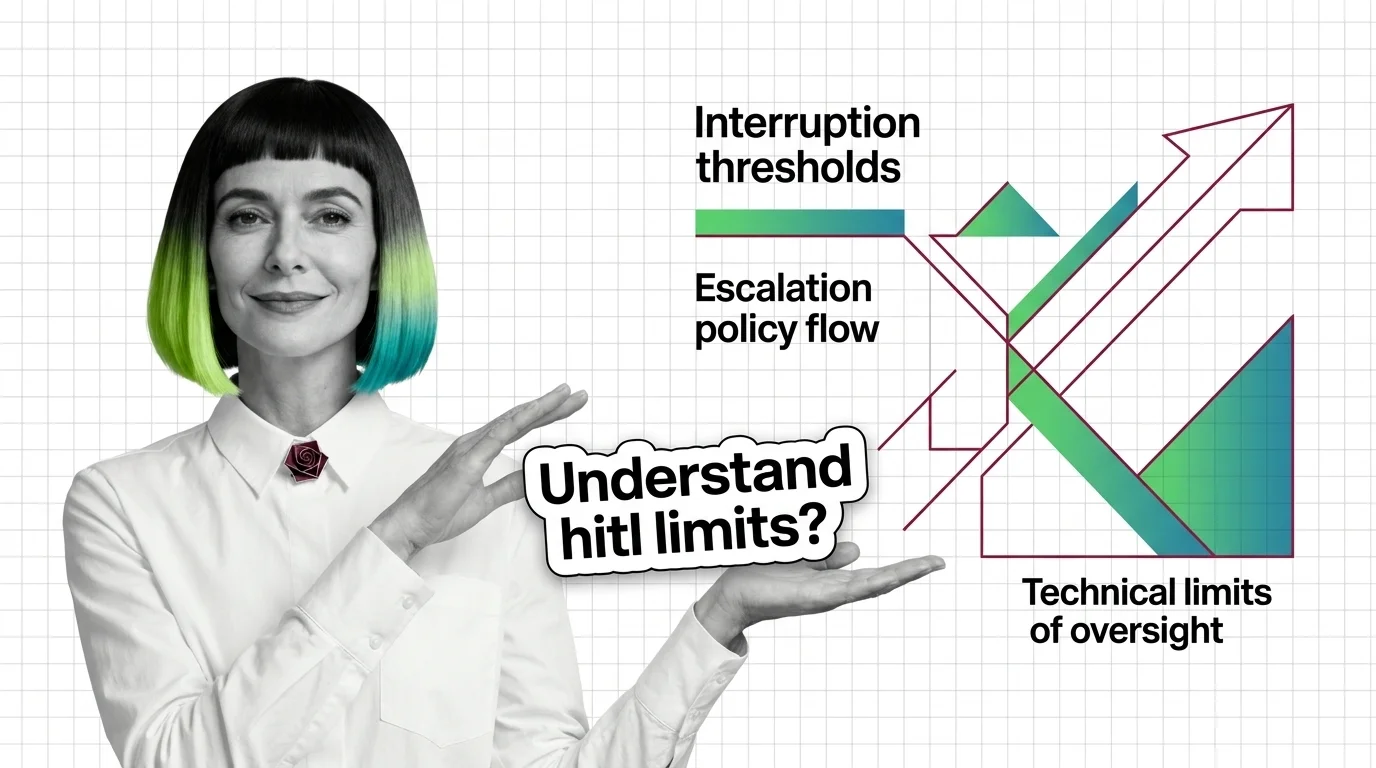

Prerequisites and Technical Limits of HITL for AI Agents

HITL for agents is easy to start and hard to scale. Learn the prerequisites — durable state, idempotency, escalation — …

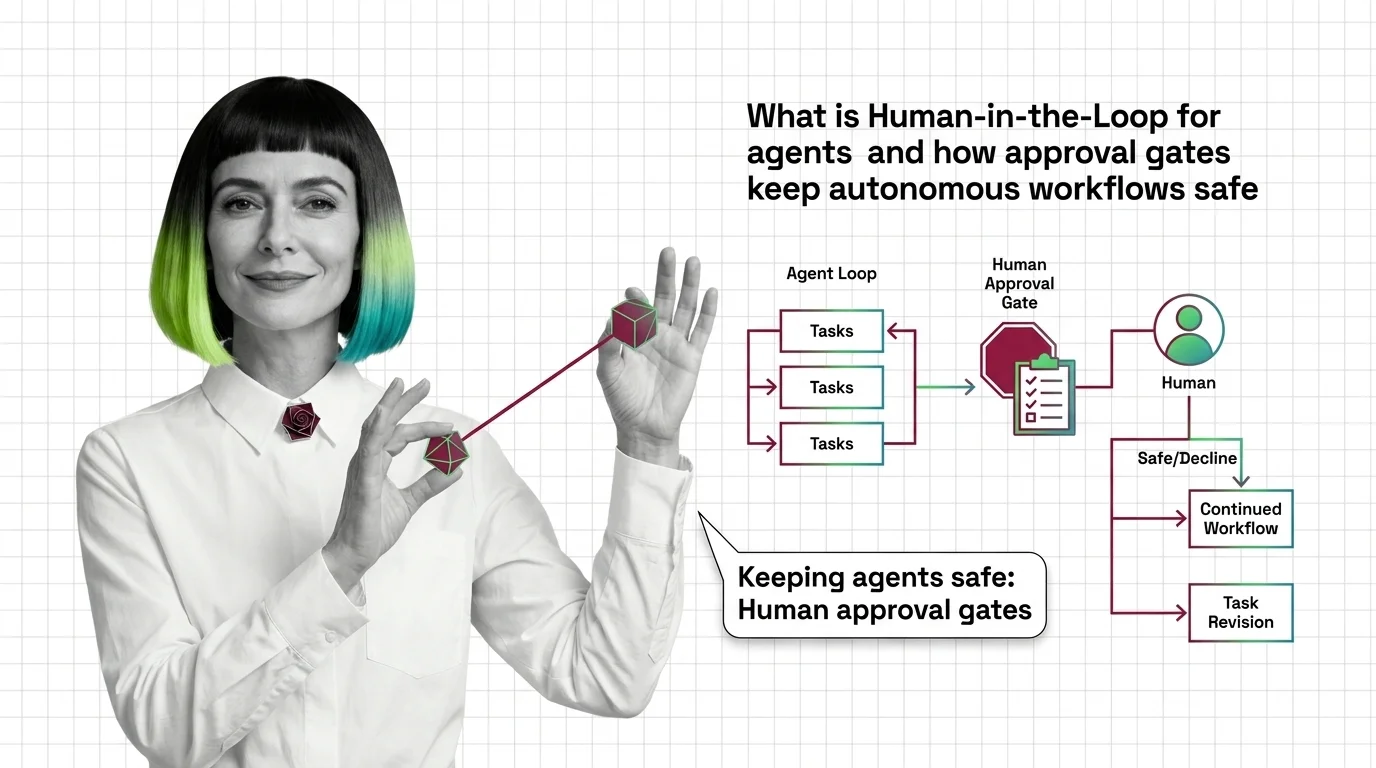

Human-in-the-Loop for AI Agents: How Approval Gates Work

Human-in-the-loop for AI agents pauses autonomous workflows at risky steps and routes them to a human gate. Here's how …

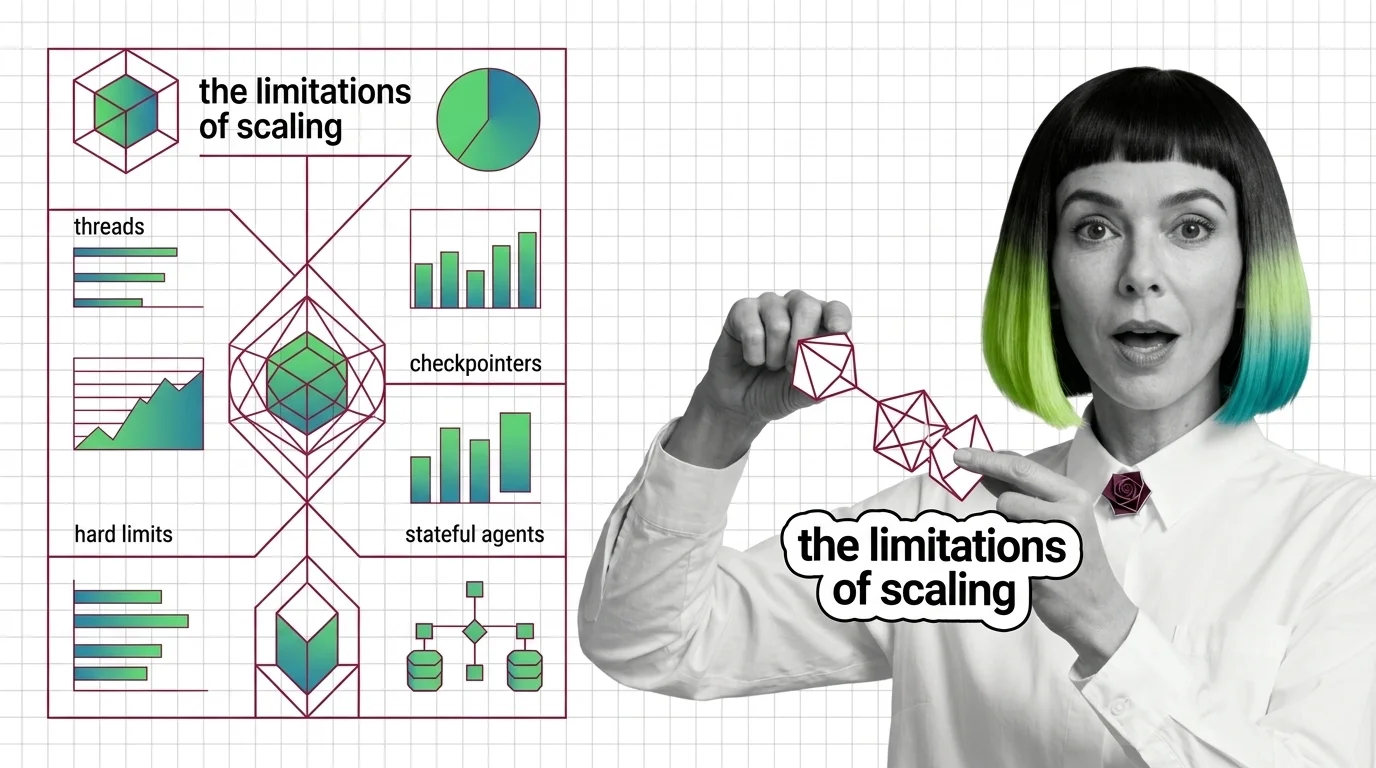

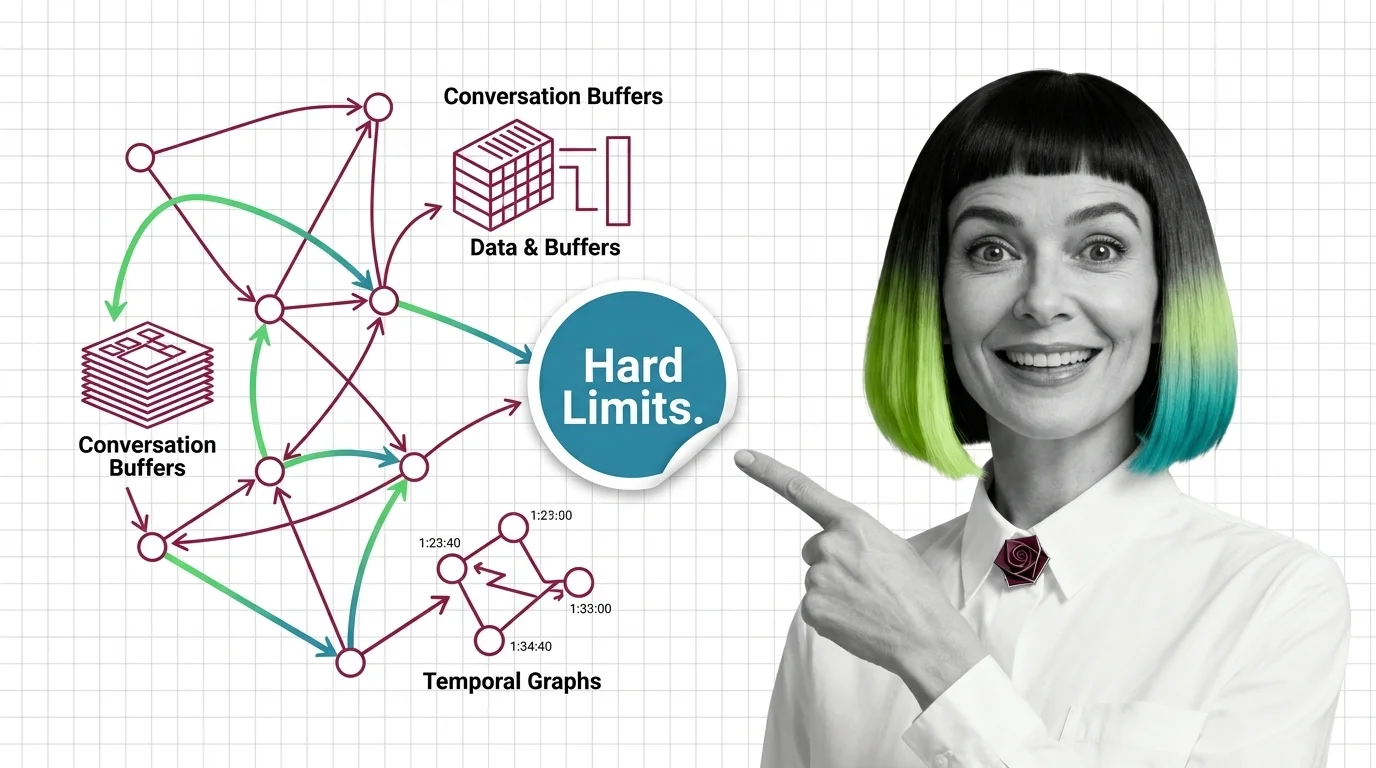

Agent State Management: Threads, Checkpointers, Hard Limits

Agent state is not memory — it is plumbing that replays snapshots between steps. Mona explains threads, checkpointers, …

Agent State Management: How Checkpointing Persists Memory Across Turns

Agent state management decides whether your agent remembers. See how LangGraph checkpointers, threads, and reducers …

Agent Evaluation: How Trajectory Analysis Measures AI Agents

Agent evaluation grades the path, not just the final answer. Learn how trajectory analysis exposes silent reasoning …

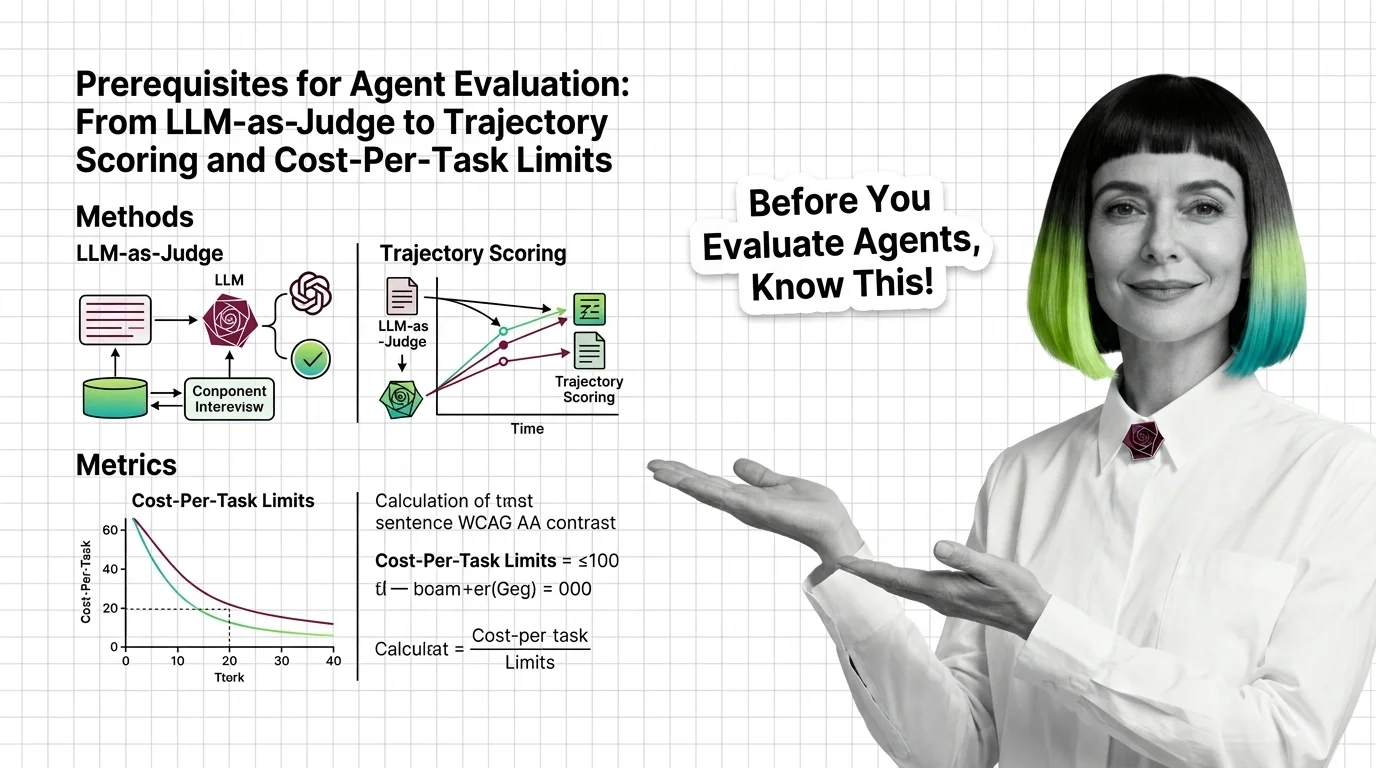

Agent Evaluation Prerequisites: LLM-as-Judge to Cost-Per-Task

Agent evaluation needs three signals: outcome, trajectory, cost. Learn why LLM-as-judge has known biases and where major …

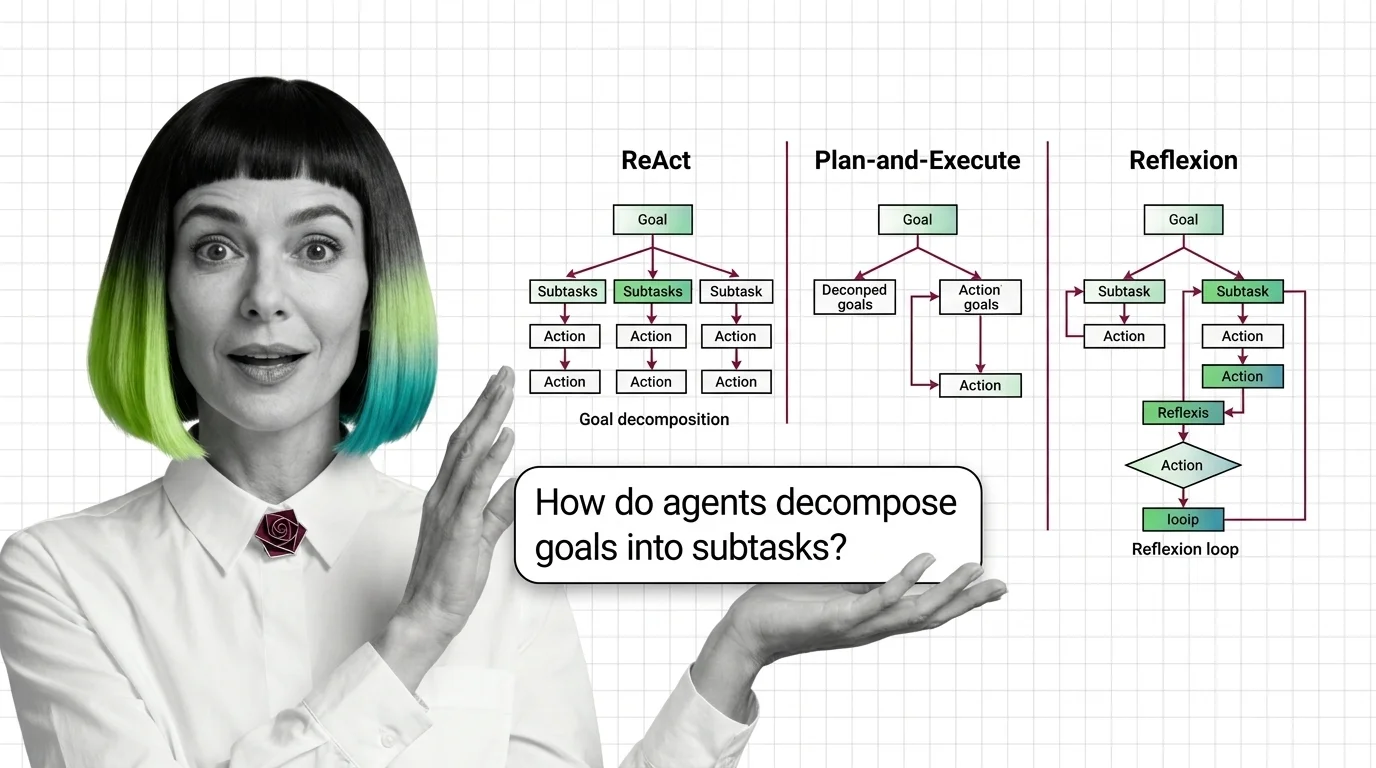

From Chain-of-Thought to Tool Use: Prerequisites and Technical Limits of Agent Planning

Agent planning rests on three primitives — chain-of-thought, tool use, and the ReAct loop. Learn the prerequisites and …

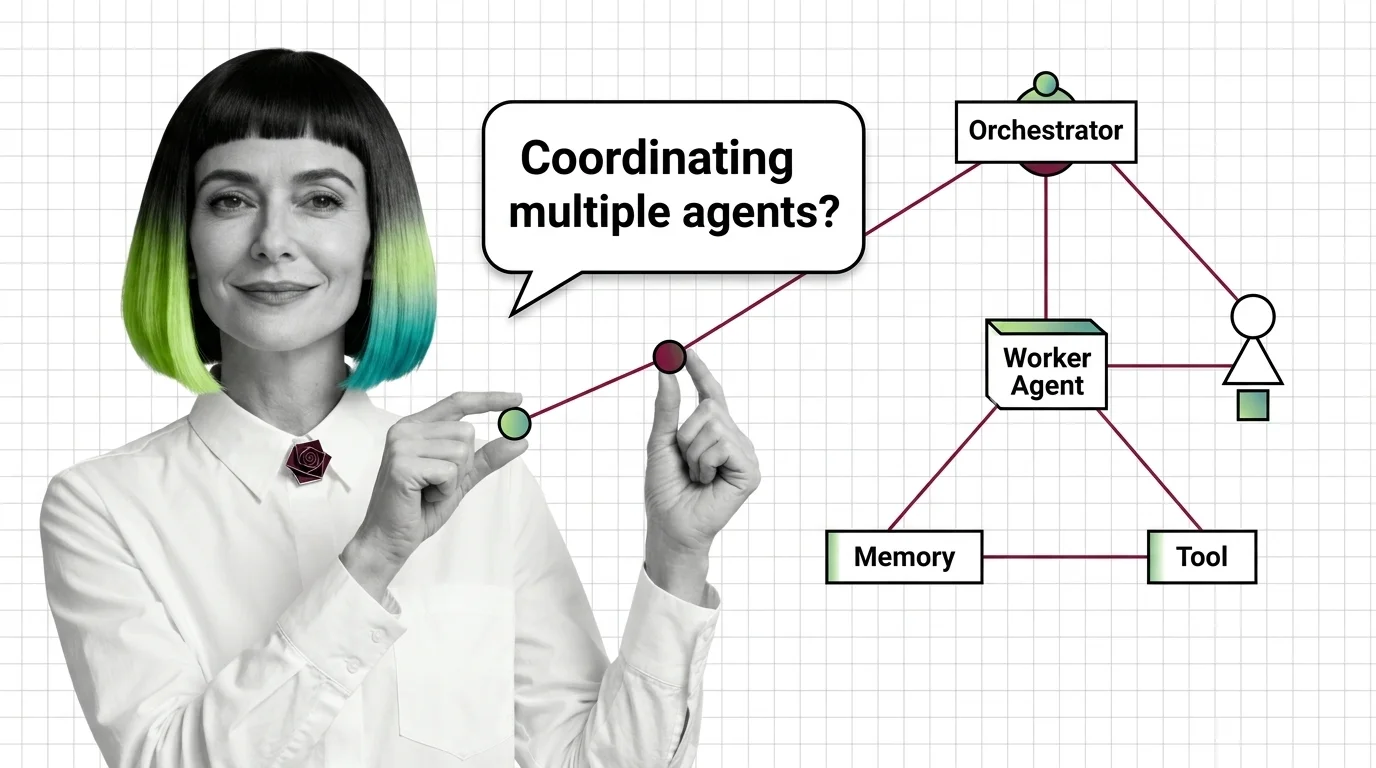

Multi-Agent Systems: Supervisor, Debate, and Swarm Patterns

Multi-agent systems coordinate specialized AI agents through supervisor, debate, or swarm patterns. Here is how each …

Multi-Agent Systems: Prerequisites and Hard Technical Limits

Before multi-agent systems, master tool use, the ReAct loop, and memory. Then face the limits: context blow-up, error …

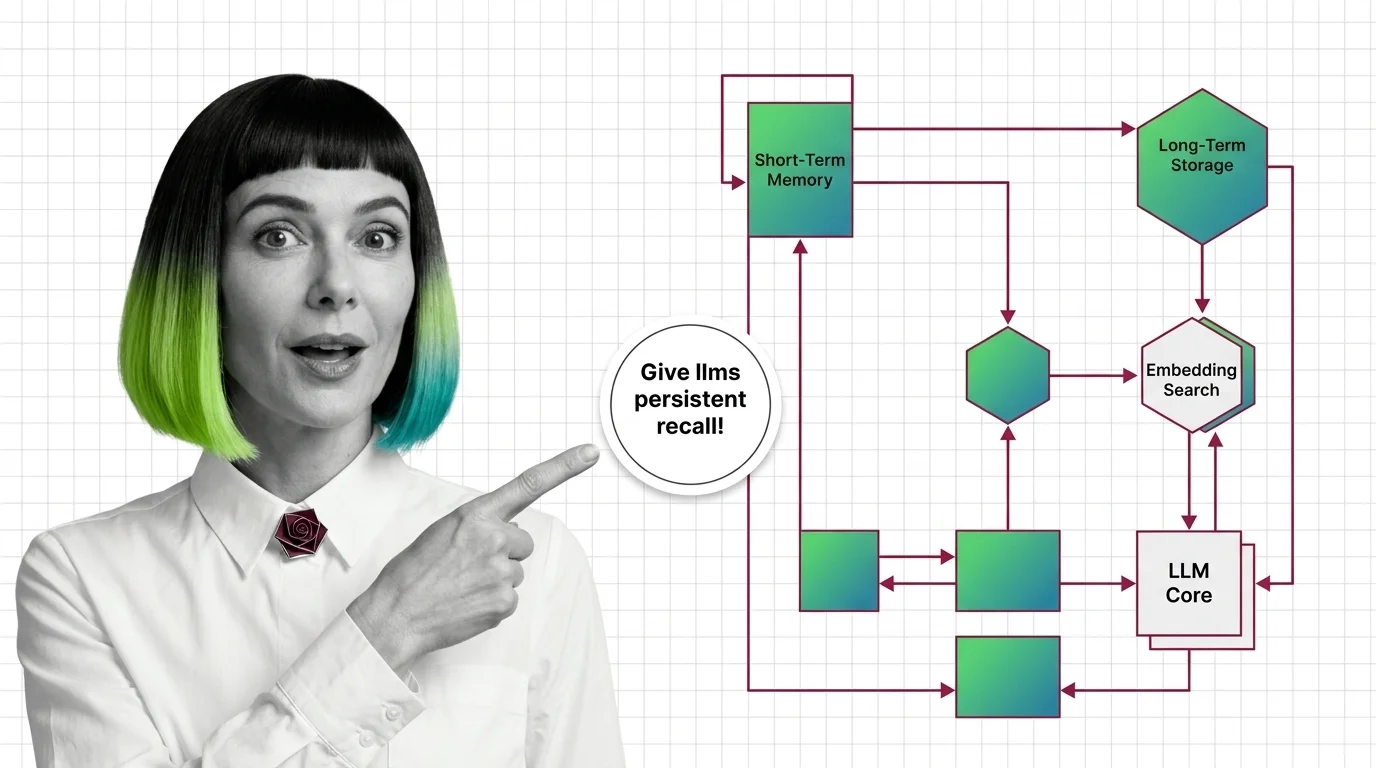

Agent Memory Systems: How LLMs Get Persistent Recall Across Sessions

Agent memory systems give LLMs persistent recall across sessions. Inside the architectures: temporal graphs, …

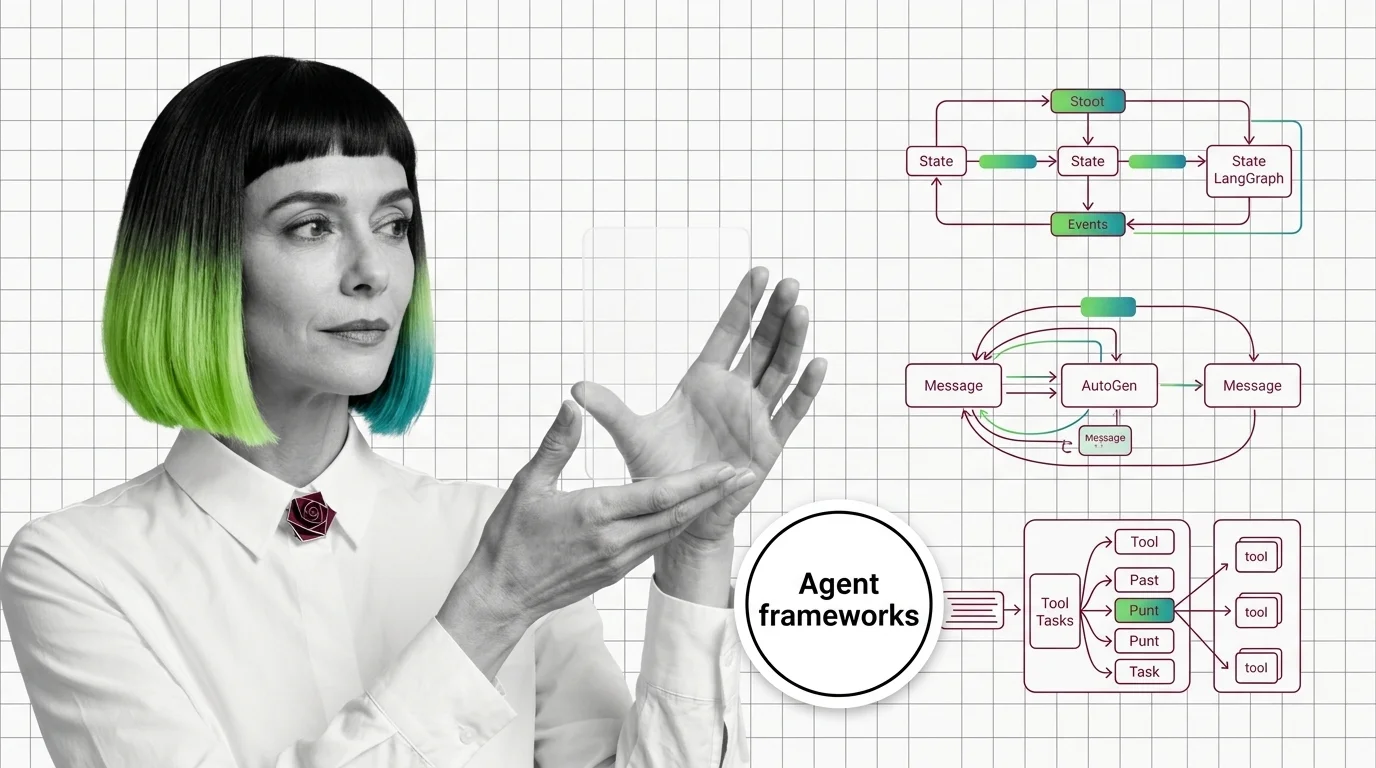

Graph vs Conversation vs Crew: LangGraph, AutoGen, CrewAI Patterns

LangGraph, AutoGen, and CrewAI commit to three different theories of how AI agents coordinate. The pattern you pick …

Agent Planning and Reasoning: ReAct, Plan-and-Execute, Reflexion

Agent planning is not human cognition — it is token generation conditioned on observations. How ReAct, Plan-and-Execute, …

Agent Memory Architectures: Prerequisites and Hard Limits

Agent memory isn't a bigger context window. Learn the prerequisites for designing agent memory systems and the hard …

Agent Frameworks: How LangGraph, CrewAI, and AutoGen Orchestrate LLMs

Agent frameworks orchestrate LLM calls, tools, and memory — but each one bets on a different abstraction. Learn what …

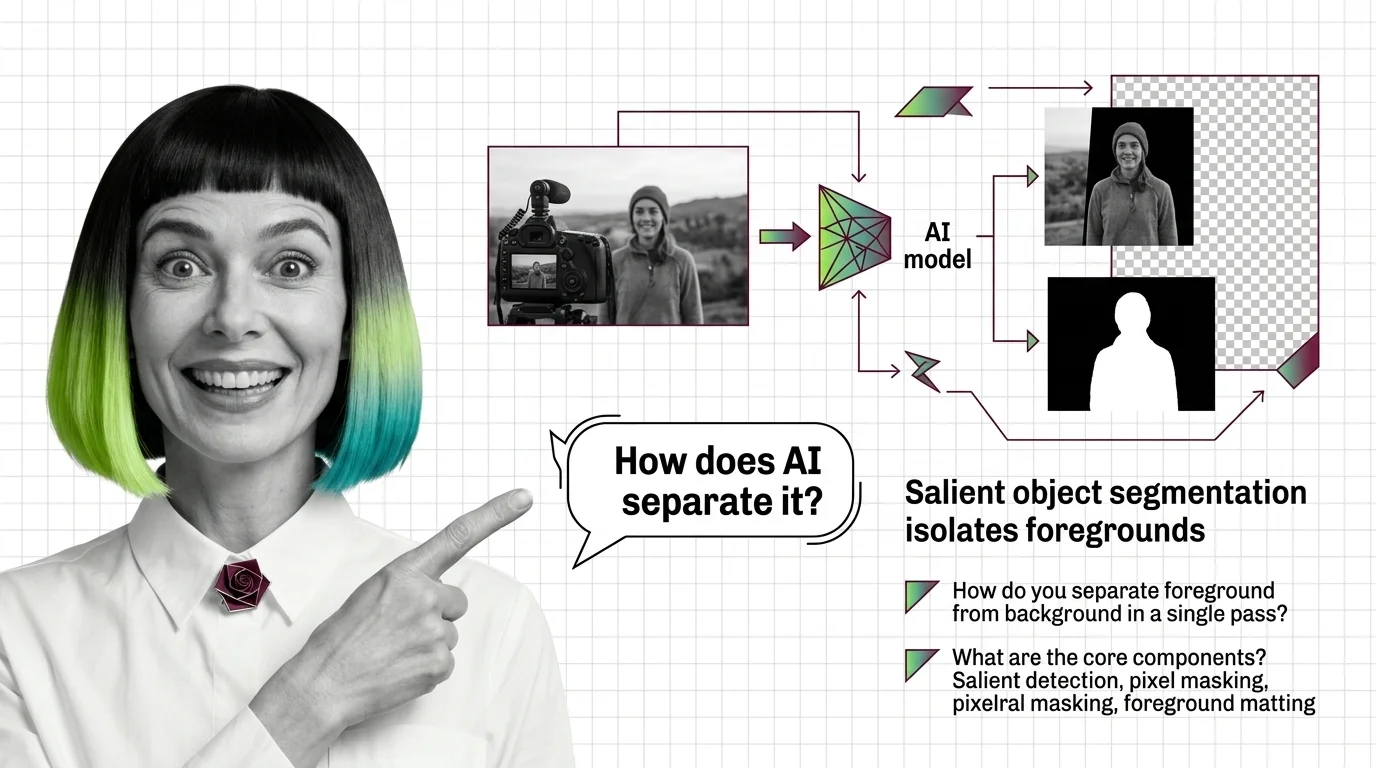

What Is AI Background Removal? How Salient Object Segmentation Works

AI background removal is not one model — it's salient object detection plus alpha matting. See how U2-Net, BiRefNet, and …

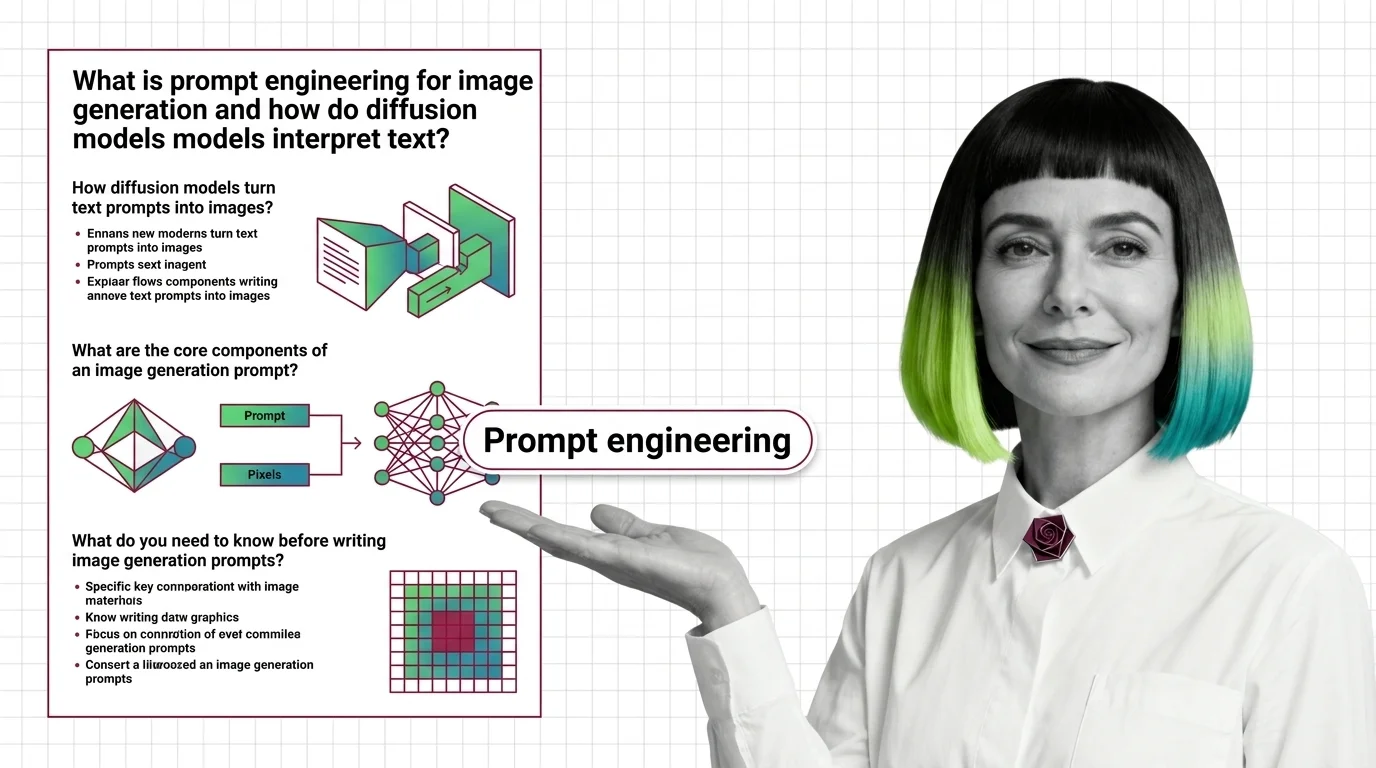

Prompt Engineering for Image Generation: How Diffusion Models Read Text

Image prompts steer probability, not pixels. Learn how diffusion models, cross-attention, and CFG turn text into images …

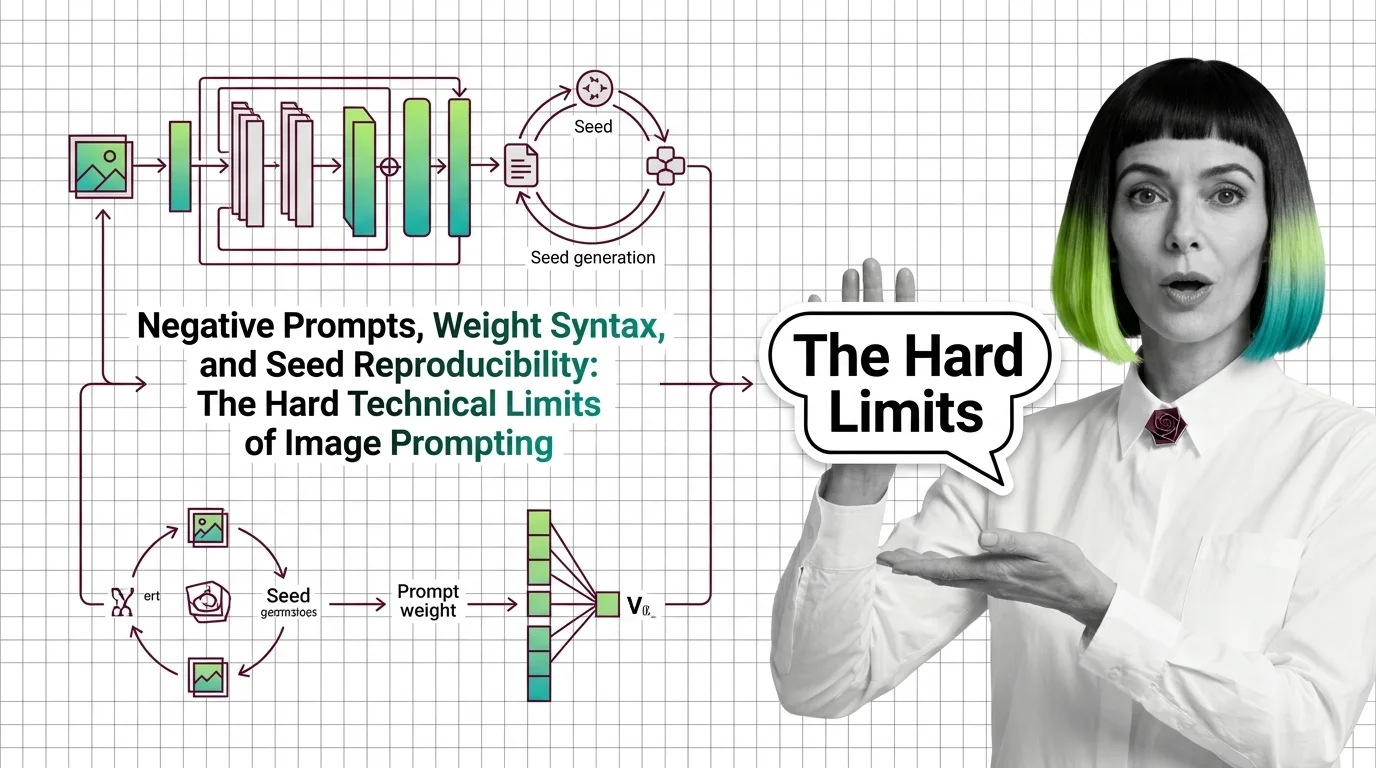

Negative Prompts, Weights, Seeds: Image Prompting Limits 2026

Negative prompts and weight syntax aren't universal — and seed reproducibility breaks across model versions. Inside the …

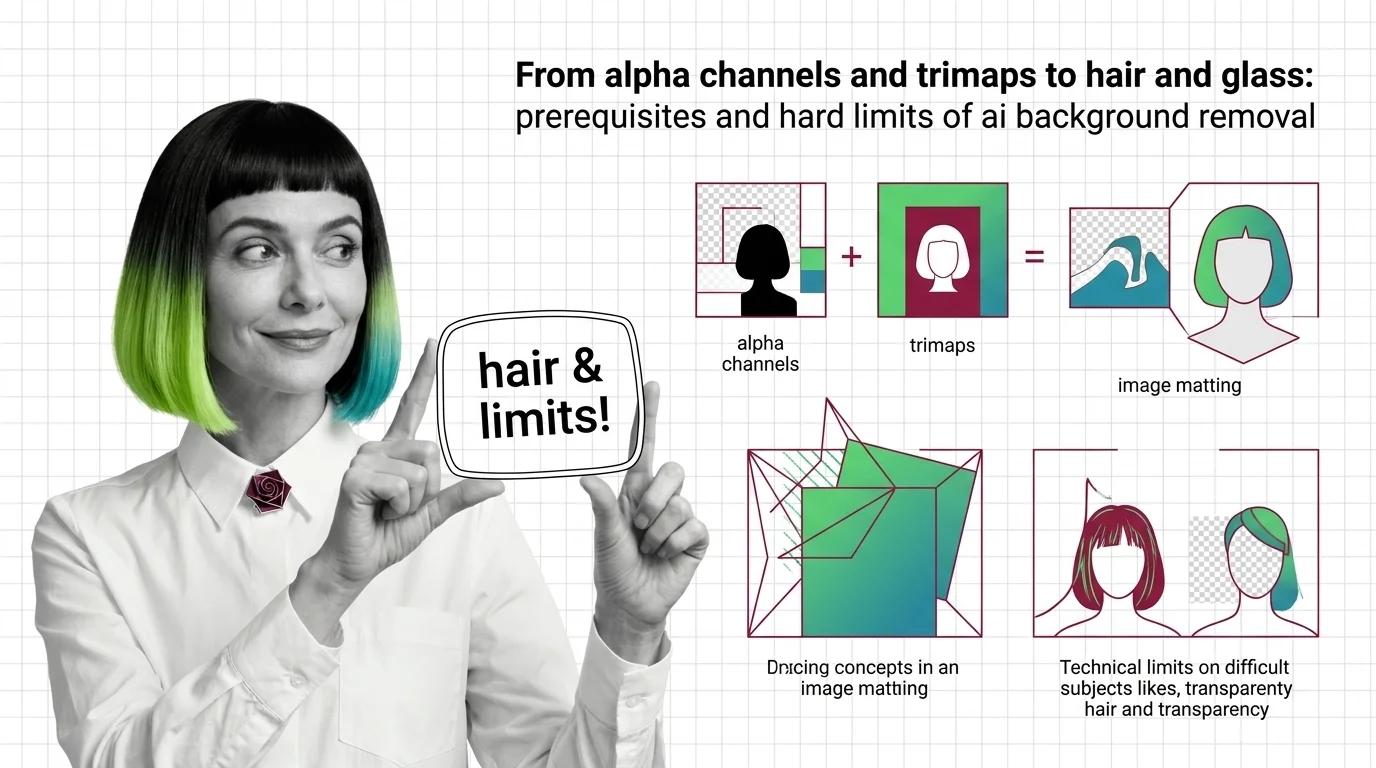

Alpha Channels, Trimaps, and the Hard Limits of AI Background Removal

Background removal is alpha estimation, not subject detection. Learn how trimaps and matting work, and why hair, glass, …

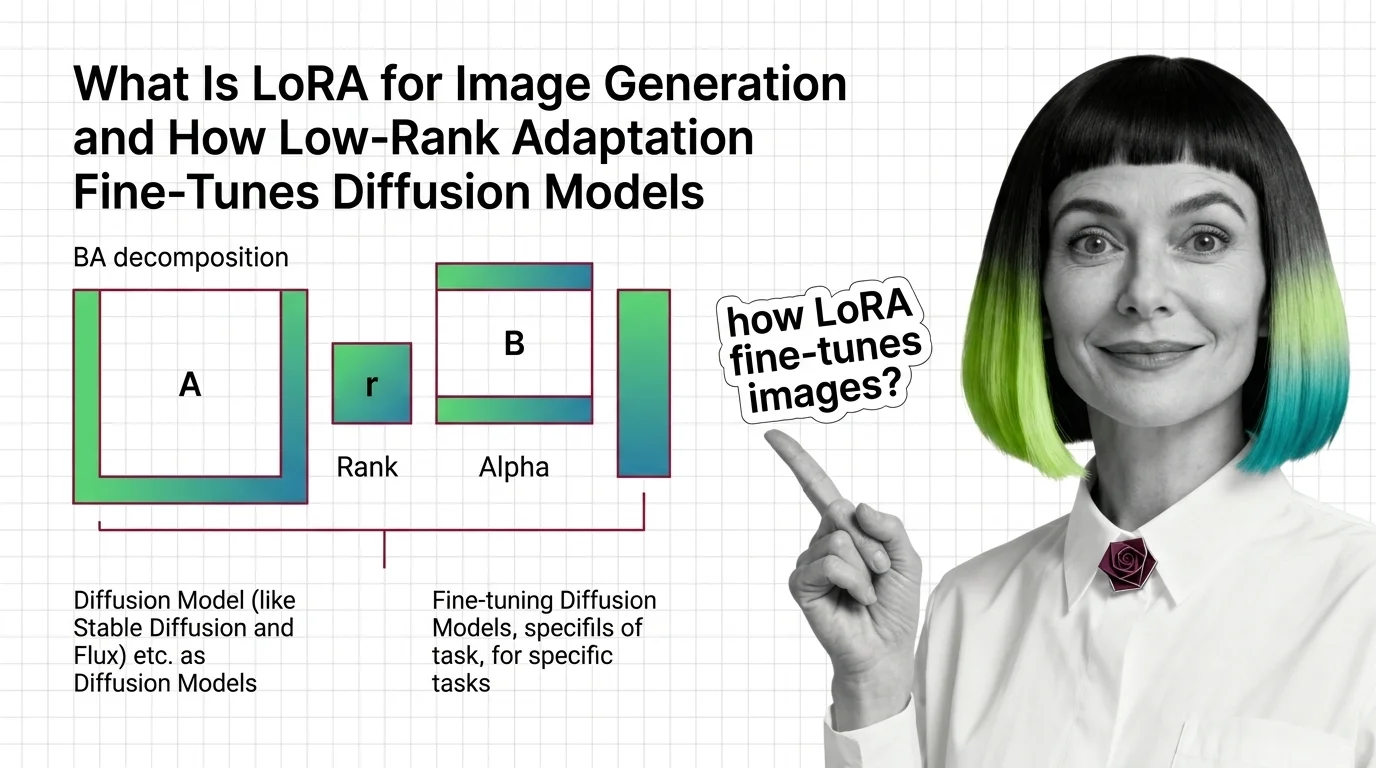

How LoRA Fine-Tunes Diffusion Models for Image Generation

LoRA fine-tunes Stable Diffusion and FLUX without retraining. Learn how rank, alpha, and the BA decomposition turn a …

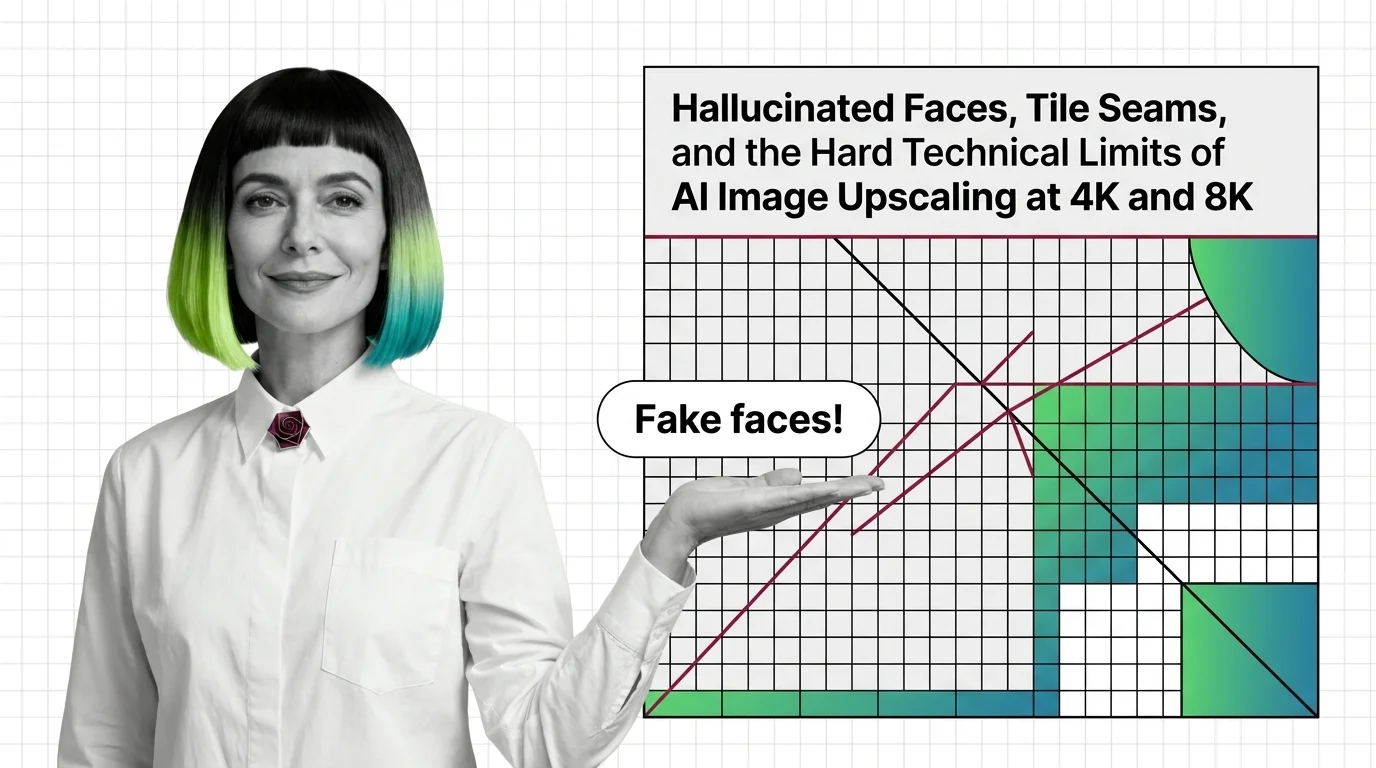

Why AI Upscalers Hallucinate Faces and Tile Seams at 4K and 8K

AI upscalers don't break at 4K and 8K because of weak hardware. The failures are structural — rooted in diffusion priors …

What Is Image Upscaling and How AI Super-Resolution Reconstructs Detail Beyond the Original Pixels

AI image upscaling doesn't enlarge what was captured — it generates plausible pixels from a learned prior. Learn how GAN …

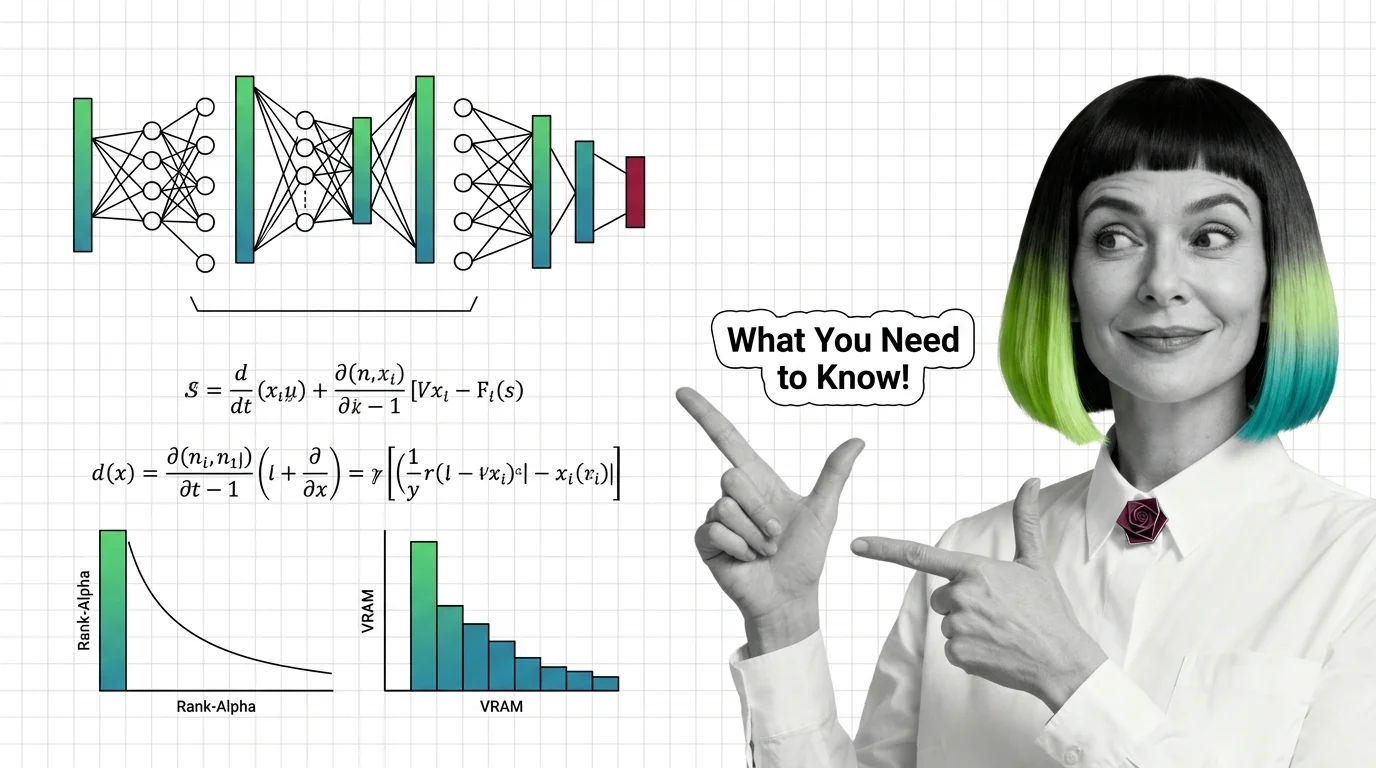

Training Image LoRAs: Diffusion Math, Rank-Alpha, and VRAM Limits

Image LoRAs retarget diffusion models with small adapter files. Learn the rank-alpha math, VRAM ranges from SD 1.5 to …

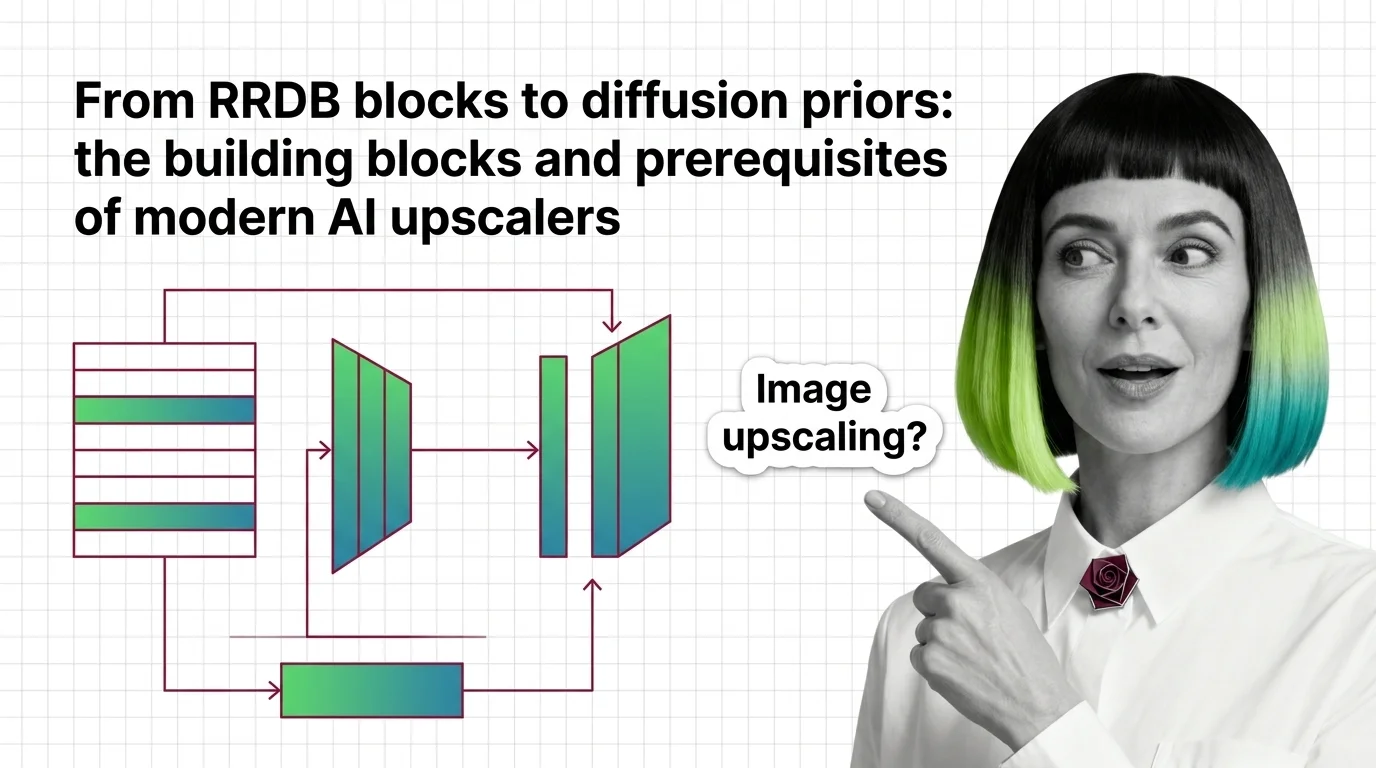

From RRDB Blocks to Diffusion Priors: Inside Modern AI Upscalers

How modern AI upscalers are built — from ESRGAN's RRDB blocks and Real-ESRGAN to SUPIR's diffusion prior, plus the …

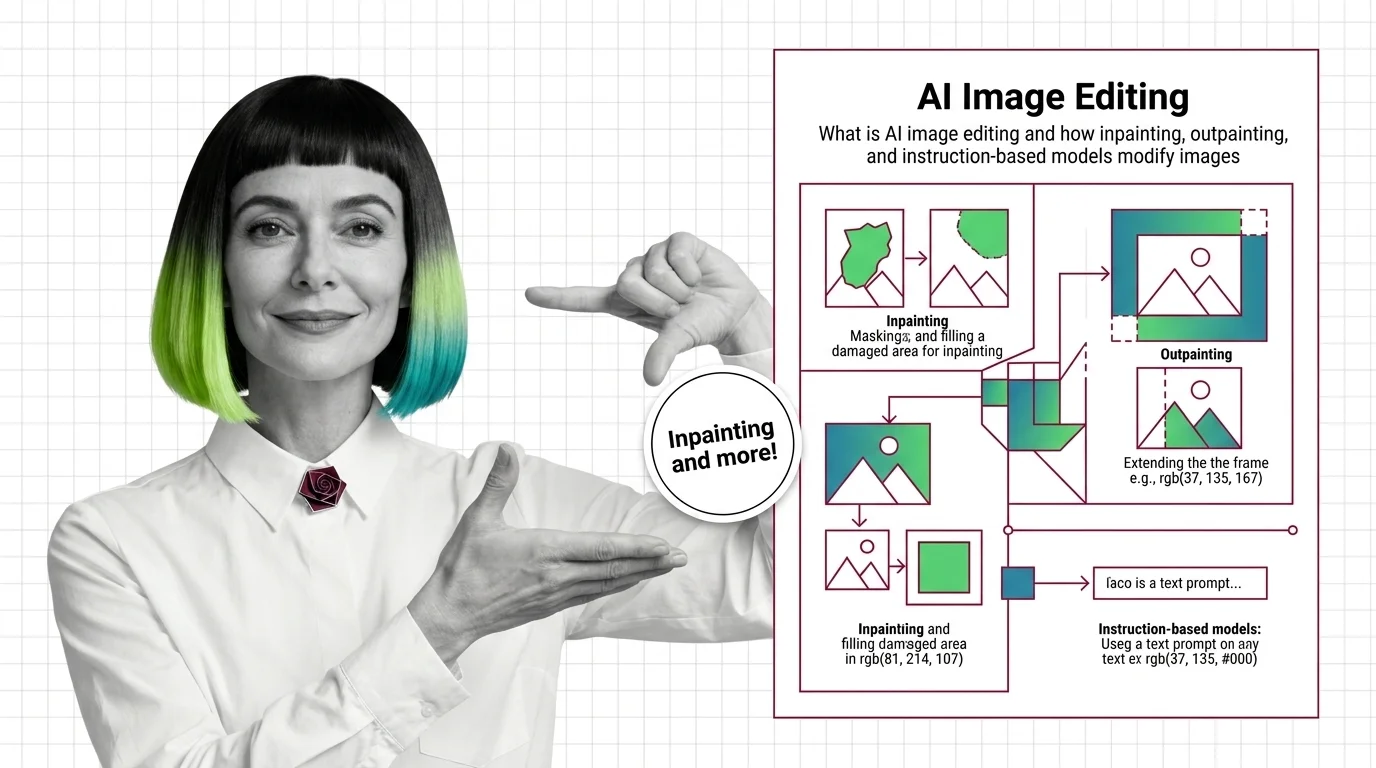

What Is AI Image Editing? Inpainting, Outpainting, Edit Models

AI image editing uses diffusion to modify pixels under a mask or follow text instructions. Learn how inpainting, …

From Diffusion to InstructPix2Pix: AI Image Editing Prerequisites

Before using GPT Image or FLUX, understand diffusion, classifier-free guidance, and why InstructPix2Pix made …

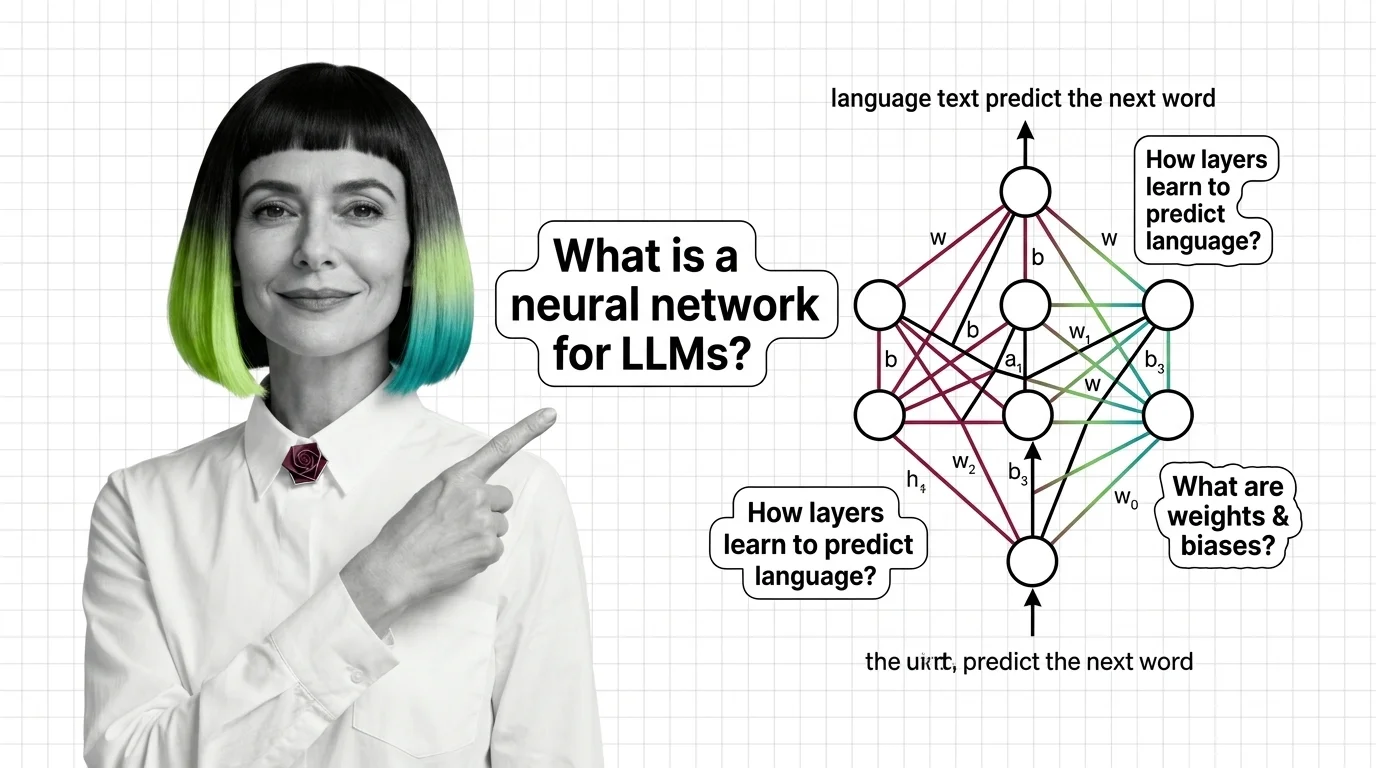

What Is a Neural Network and How It Learns to Generate Language

Neural networks learn language by adjusting millions of weights through backpropagation. Learn how layers, gradients, …

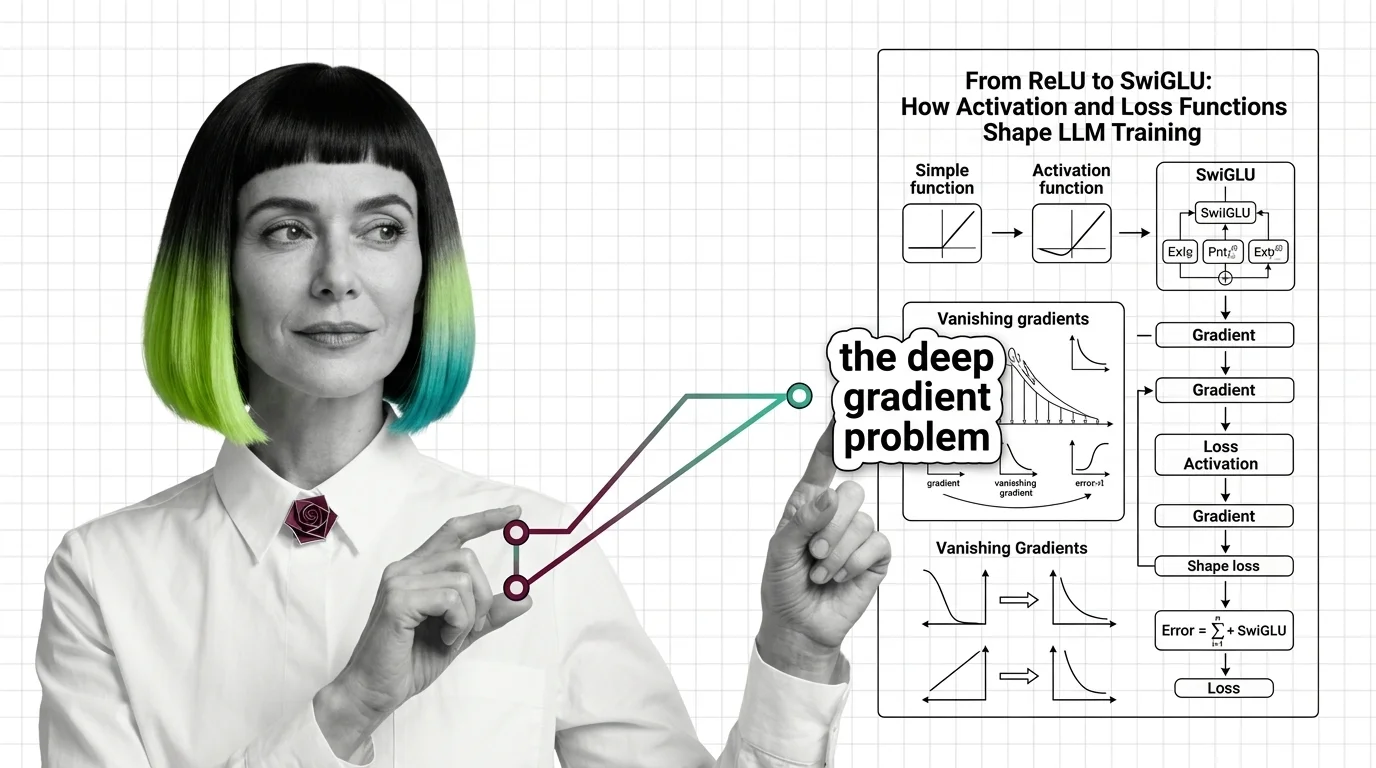

From ReLU to SwiGLU: How Activation and Loss Functions Shape LLM Training

Trace the path from ReLU to SwiGLU and understand how activation functions, cross-entropy loss, and gradient dynamics …

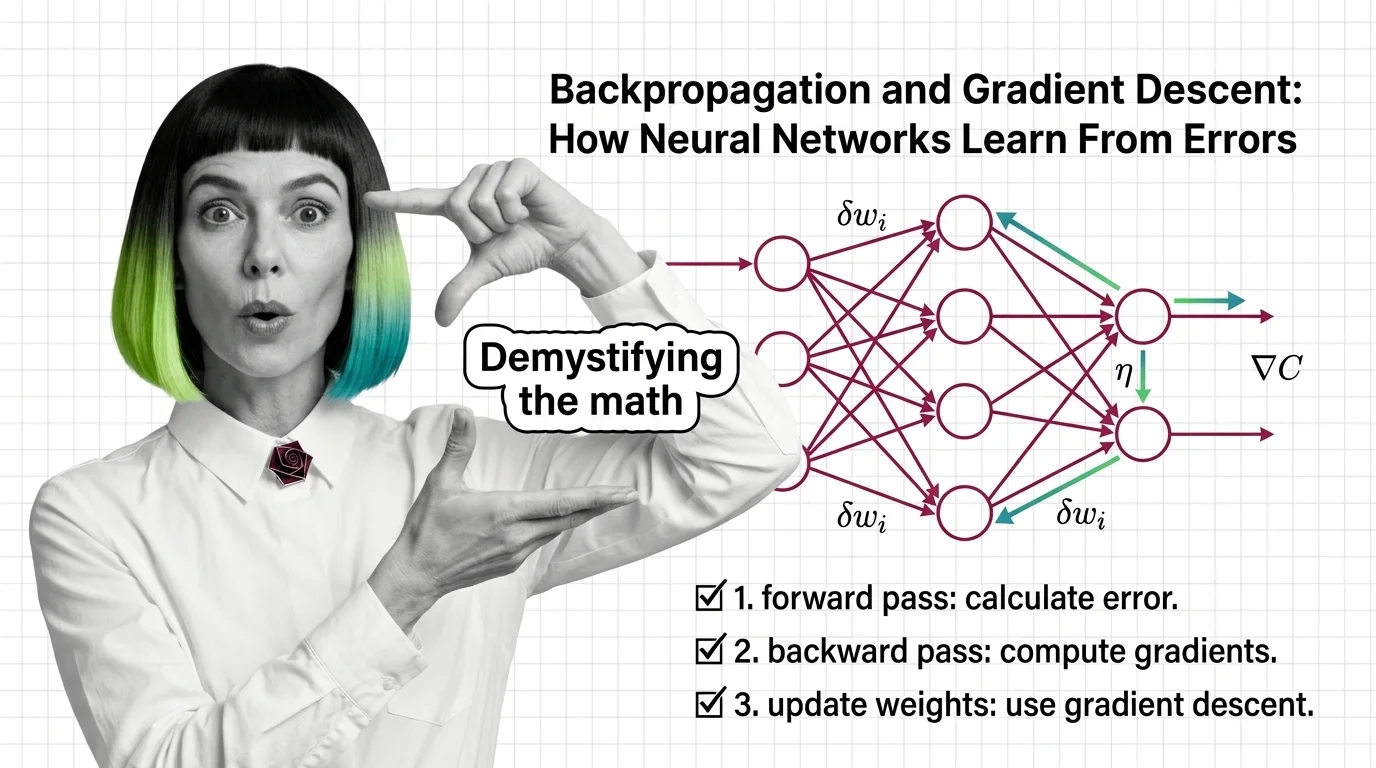

Backpropagation and Gradient Descent: How Neural Networks Learn From Errors

Learn how backpropagation and gradient descent train neural networks by propagating error signals backward through …