AI Trends

Where AI is heading — model launches, market shifts, and enterprise adoption patterns. DAN analyzes the signals that matter for leaders navigating disruption.

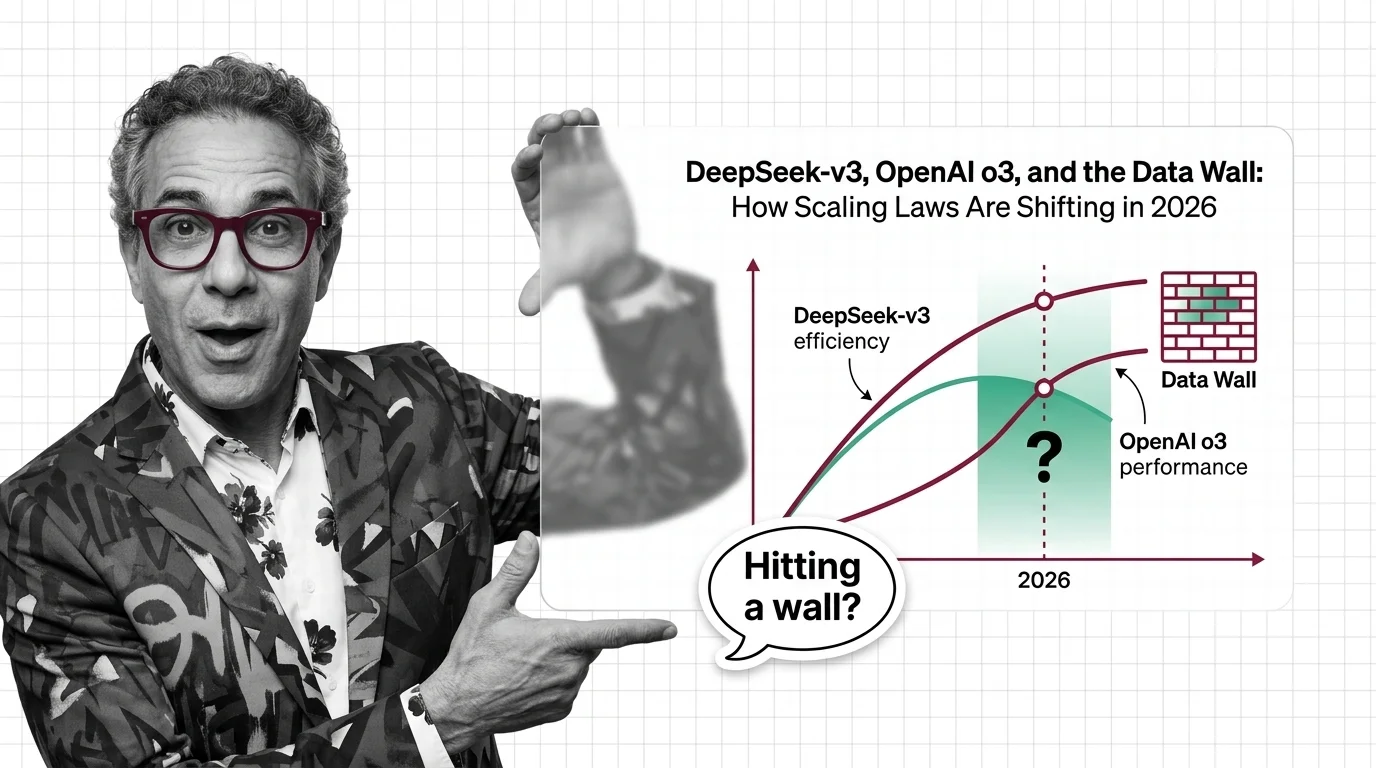

DeepSeek-V3, OpenAI o3, and the Data Wall: How Scaling Laws Are Shifting in 2026

Scaling laws split in 2025 along three axes. DeepSeek proved efficiency, o3 proved inference-time compute, and the data …

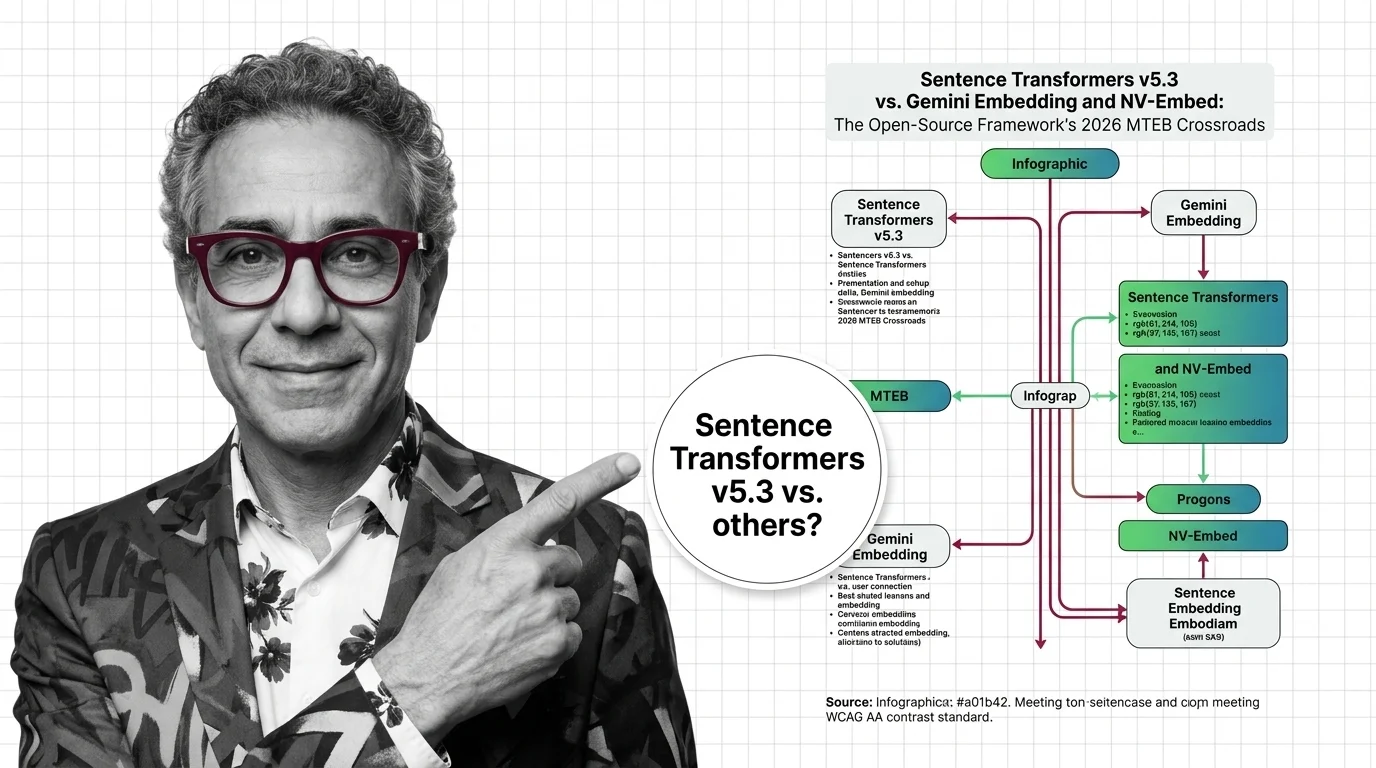

Sentence Transformers v5.3 vs Gemini & NV-Embed: MTEB 2026

v5.3 introduces new contrastive losses as Gemini Embedding claims MTEB #1. Why framework innovation matters more than …

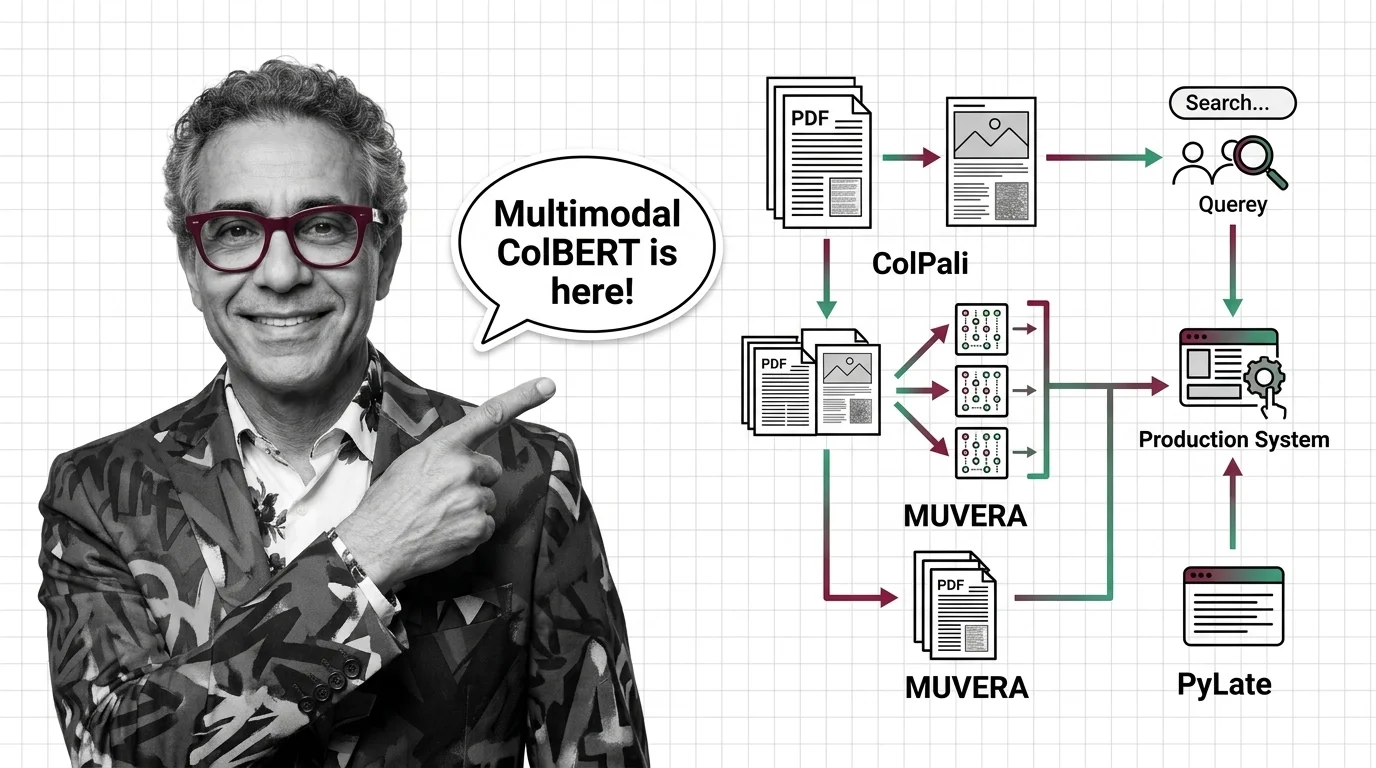

ColPali, MUVERA, and PyLate: How Multi-Vector Retrieval Went Multimodal in 2026

ColPali, MUVERA, and PyLate converged to make multi-vector retrieval multimodal and production-ready. Here's what the …

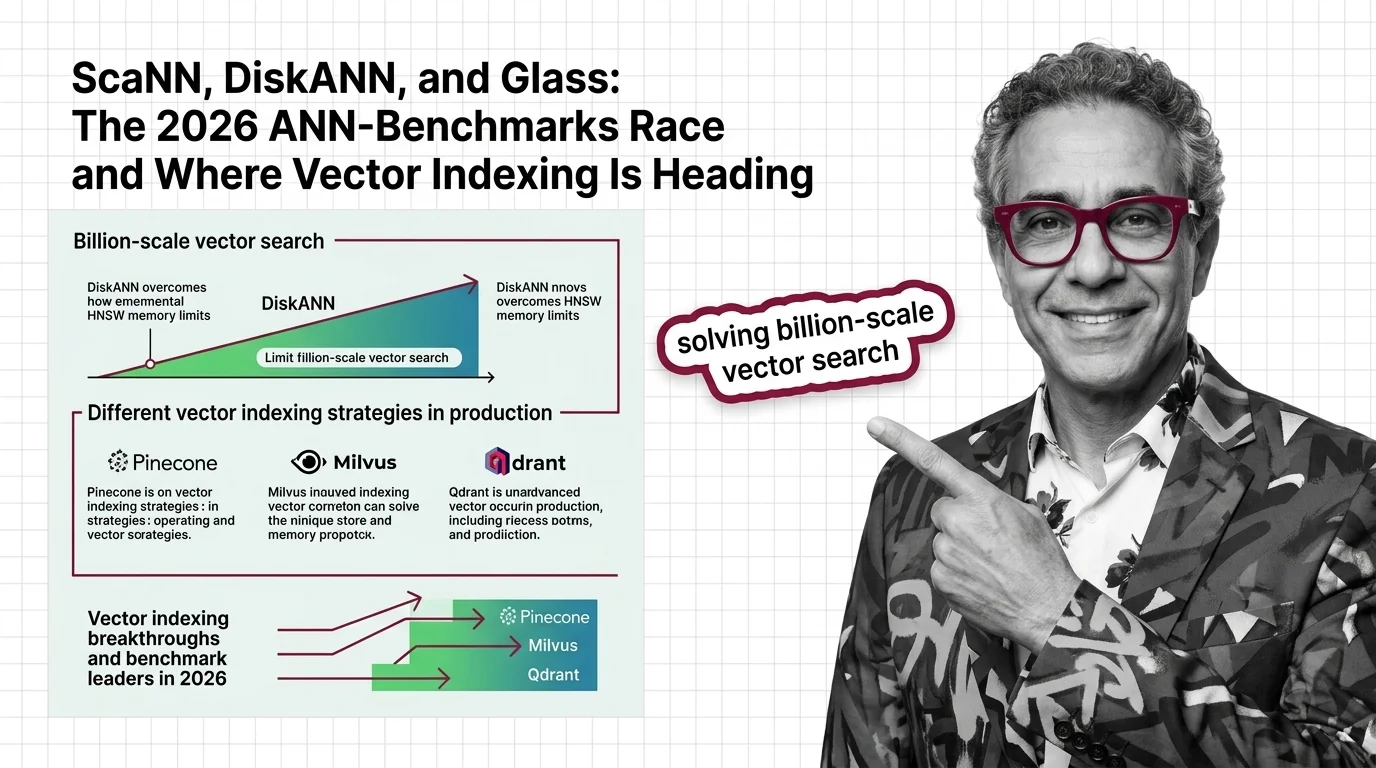

ScaNN, DiskANN, and Glass: The 2026 ANN-Benchmarks Race and Where Vector Indexing Is Heading

SymphonyQG, Glass, and ScaNN are rewriting ANN benchmark rankings. Learn which vector indexing strategies win at scale …

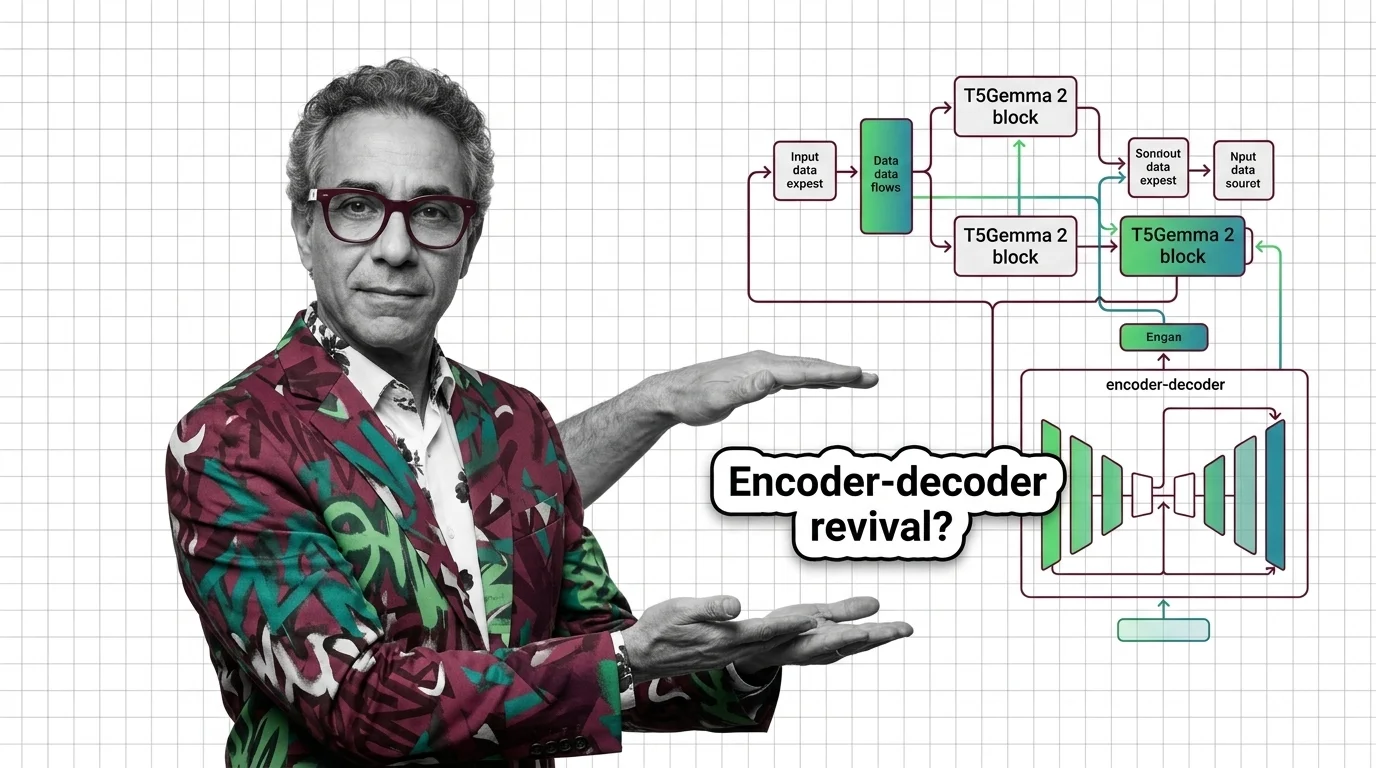

Why Google's T5Gemma 2 Bets on Encoder-Decoder Architecture

T5Gemma 2 brings 128K context and multimodal input via encoder-decoder, defying the decoder-only trend. Learn why Google …

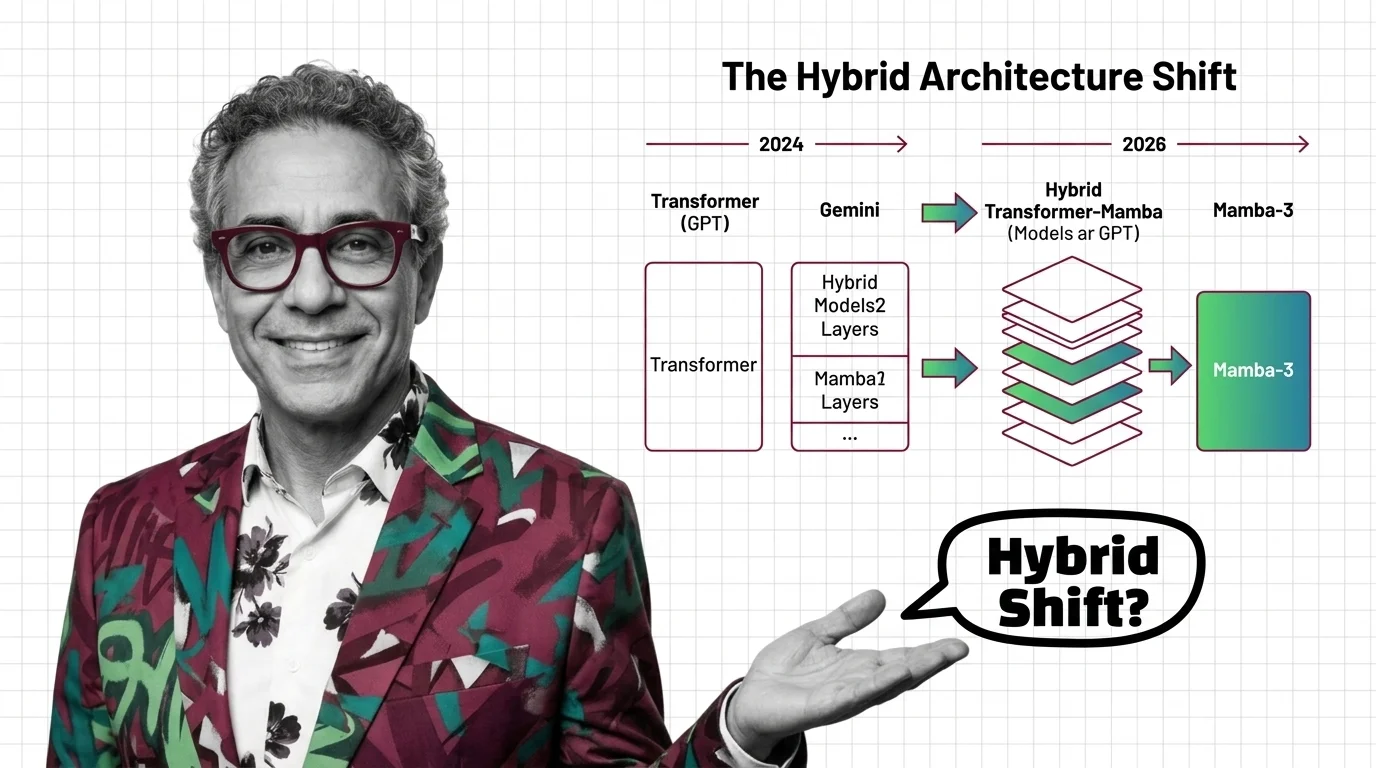

Transformers in 2026: GPT to Gemini, Mamba-3, and the Hybrid Architecture Shift

Mamba-3 and NVIDIA Nemotron signal the hybrid architecture era. See which AI models still run pure transformers, who is …

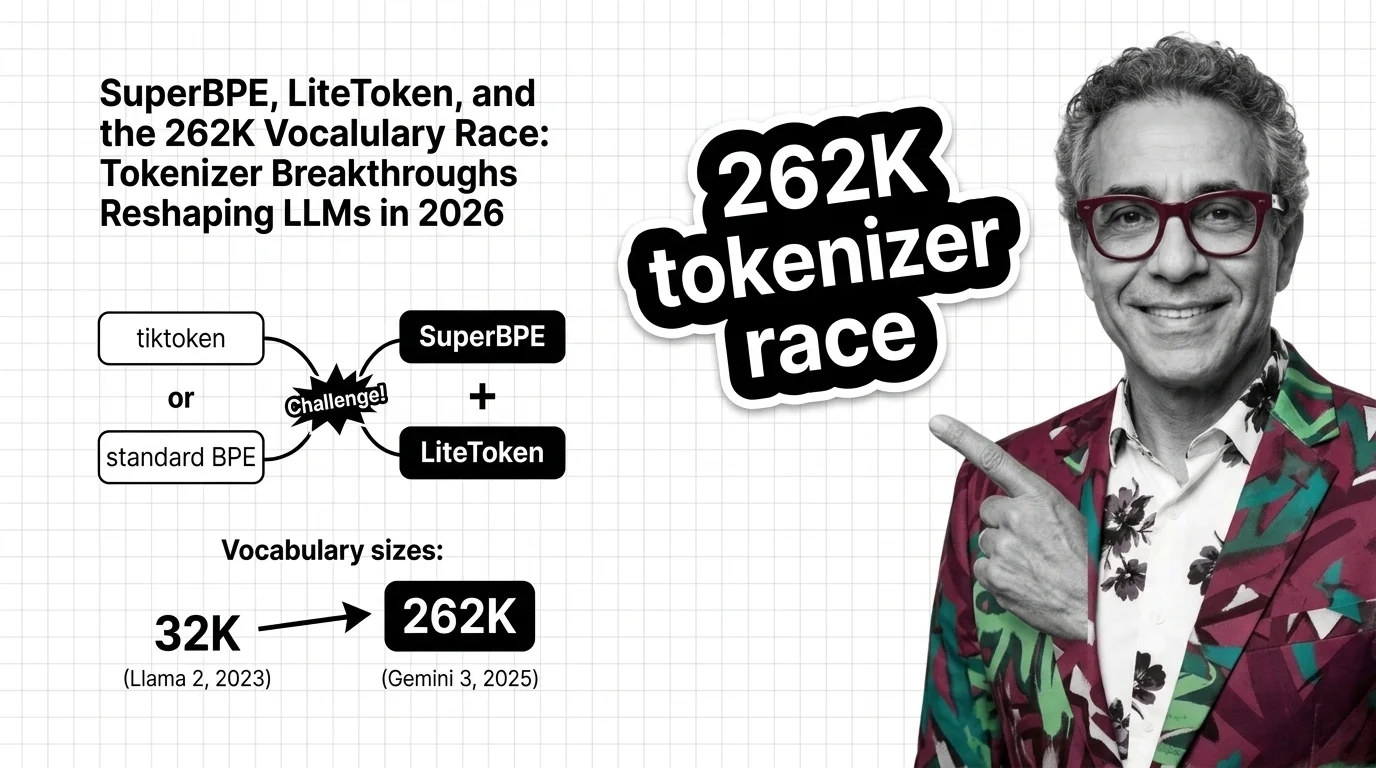

SuperBPE, LiteToken, 262K Vocab: 2026 Tokenizer Breakthrough

Tokenization is the overlooked frontier. SuperBPE and LiteToken expose 262K vocabulary gains in inference costs, …

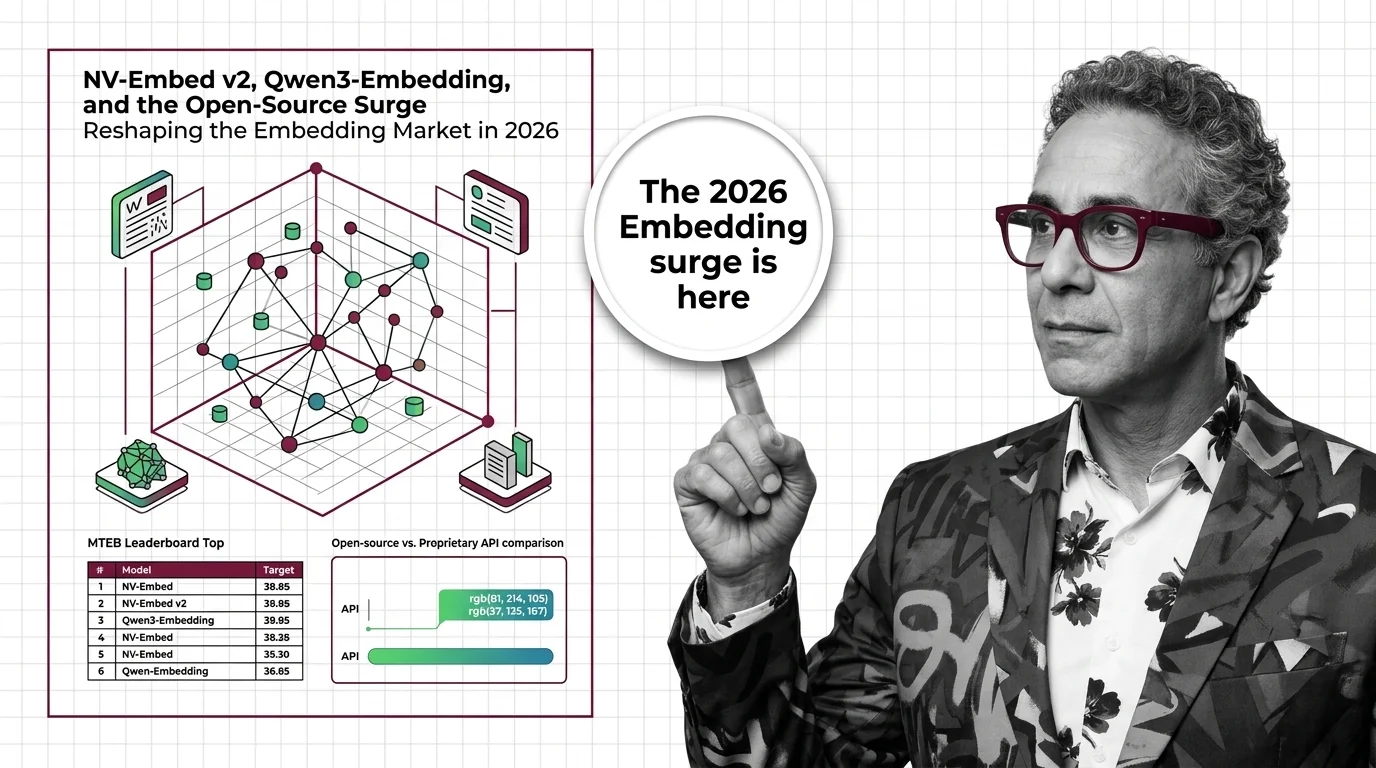

NV-Embed v2, Qwen3-Embedding, and the Open-Source Surge Reshaping the Embedding Market in 2026

Open-weight embedding models now match proprietary APIs on benchmarks at a fraction of the cost. What the 2026 market …

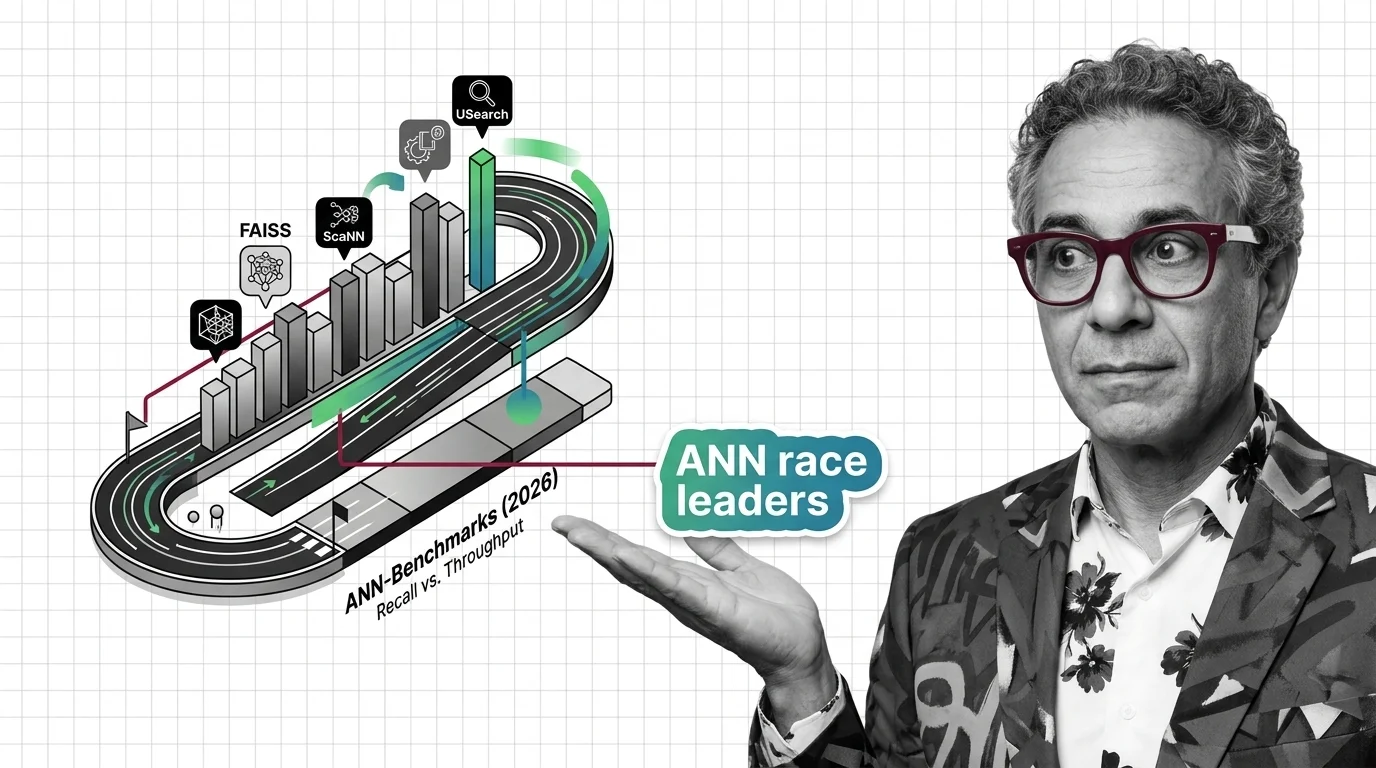

FAISS vs. ScaNN vs. USearch on ANN-Benchmarks: The Similarity Search Library Race in 2026

The ANN library race split into GPU-first and disk-first lanes. See which similarity search libraries lead in 2026 and …

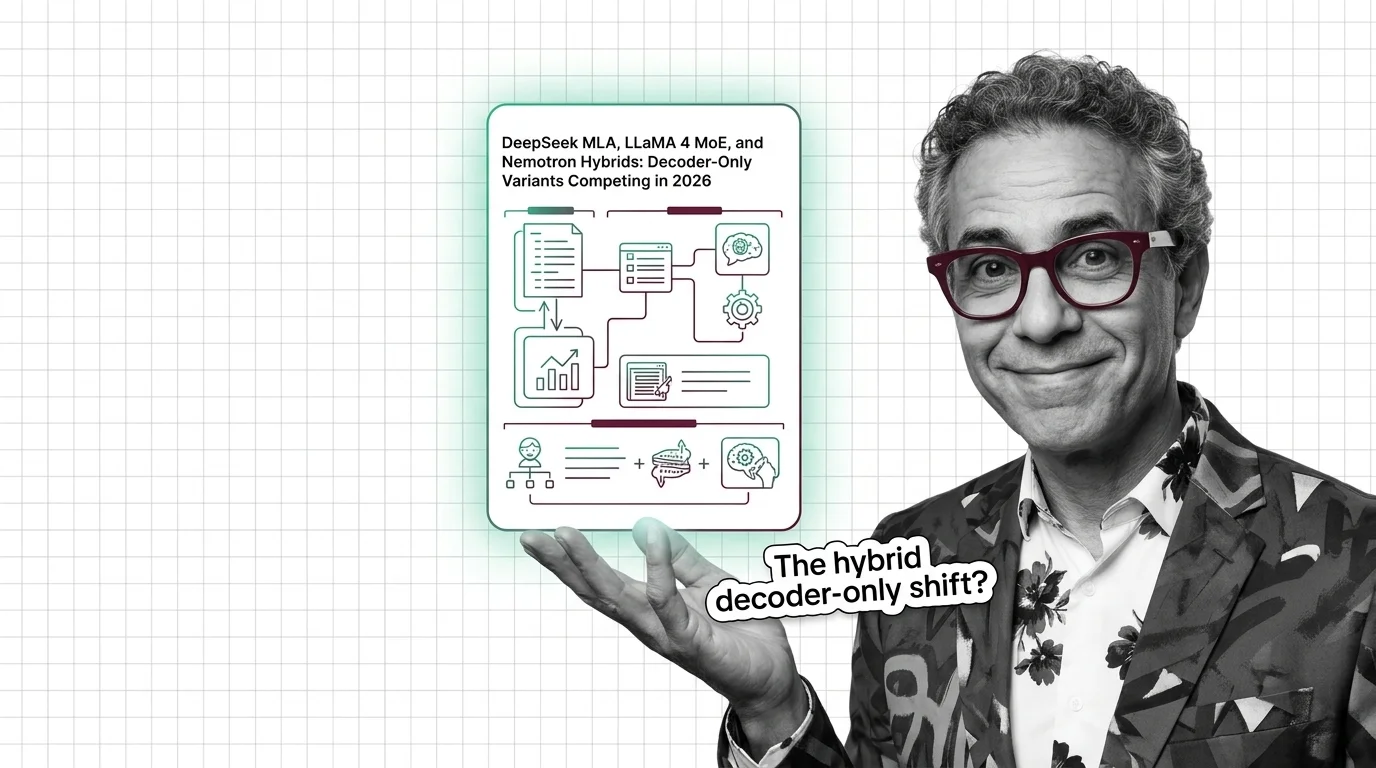

DeepSeek MLA, LLaMA 4 MoE, and Nemotron Hybrids: Decoder-Only Variants Competing in 2026

The decoder-only paradigm fractured. DeepSeek MLA, LLaMA 4 MoE, and NVIDIA Nemotron hybrids compete on inference cost — …

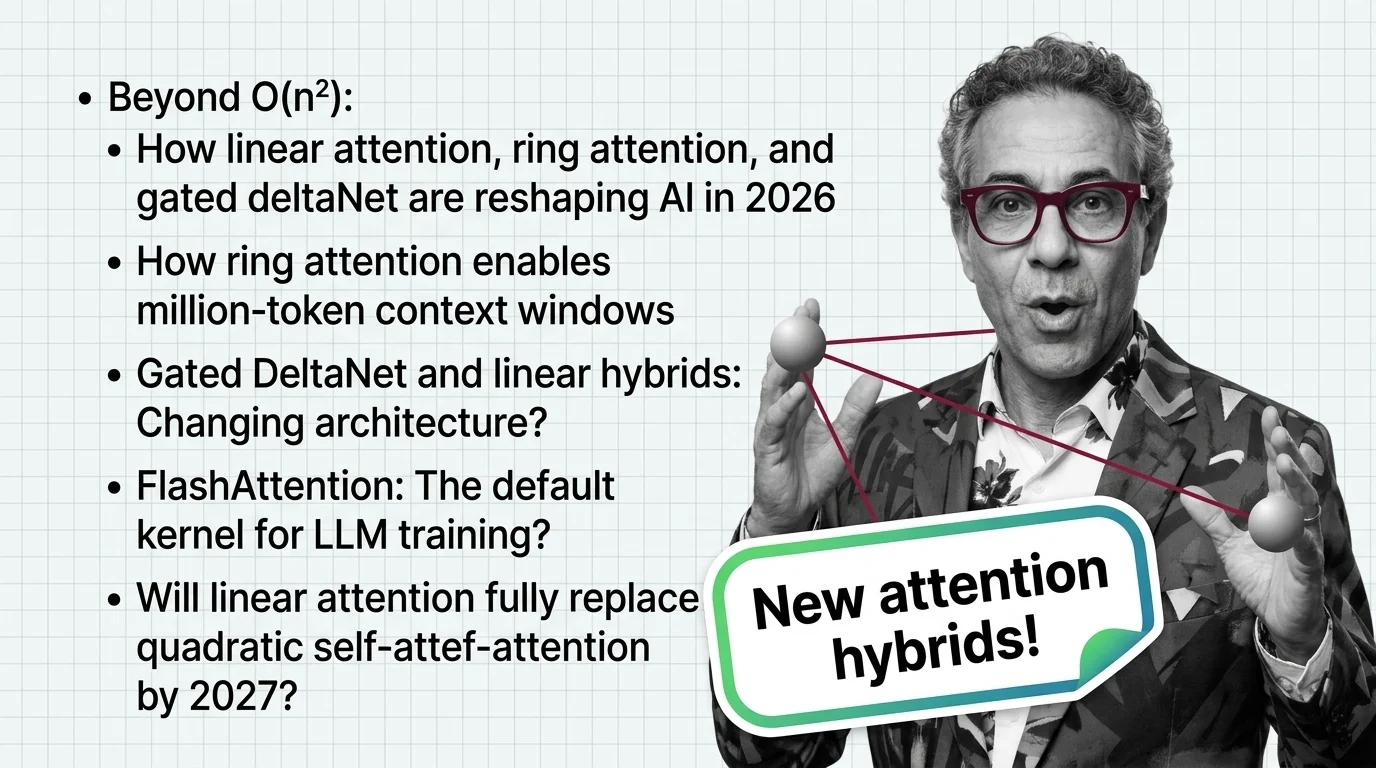

Beyond O(n²): How Linear Attention, Ring Attention, and Gated DeltaNet Are Reshaping AI in 2026

Linear attention hybrids with a 3:1 ratio are replacing pure quadratic self-attention. See which labs lead, who fell …

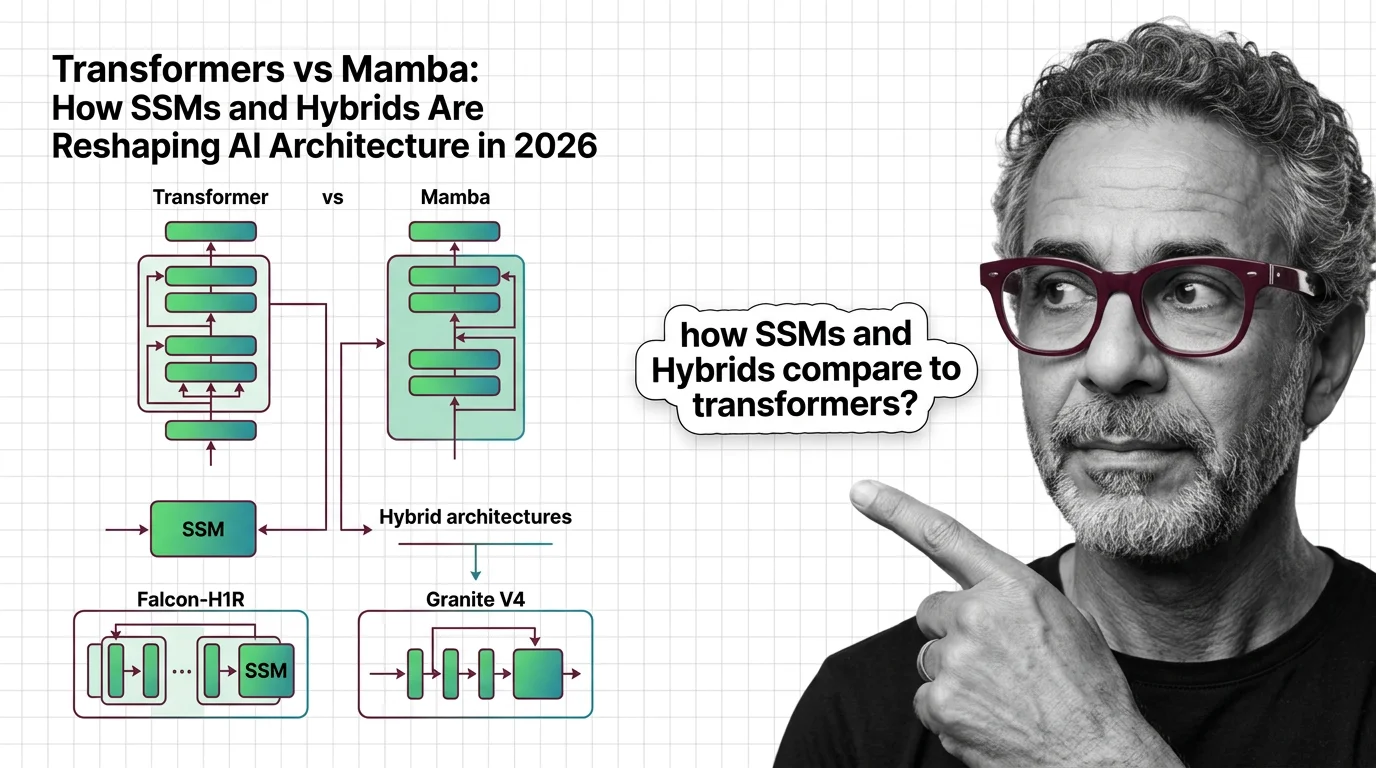

Transformers vs Mamba: How SSMs and Hybrids Are Reshaping AI Architecture in 2026

Hybrid SSM-transformer models from Falcon, IBM, and AI21 are outperforming pure transformers at a fraction of the cost. …

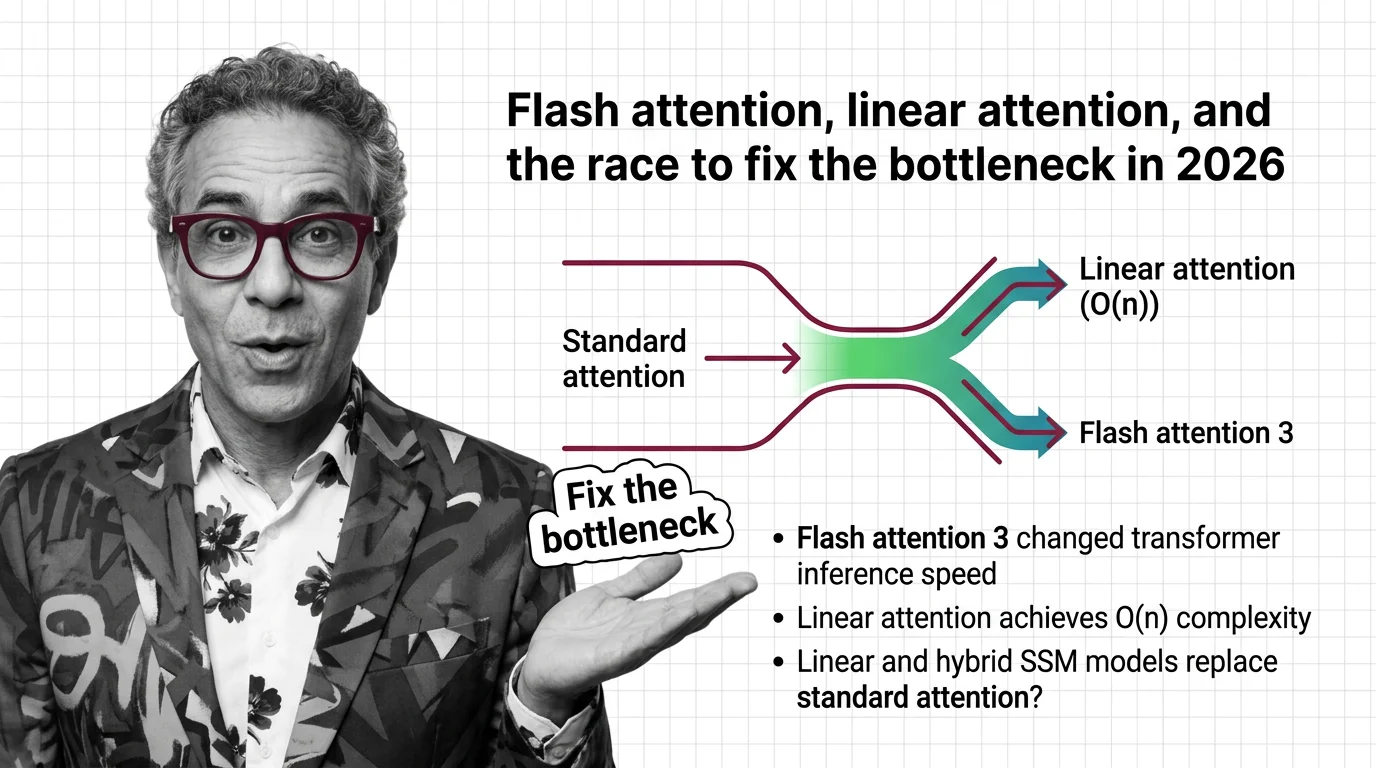

Flash Attention, Linear Attention, and the Race to Fix the Bottleneck in 2026

FlashAttention-4 and linear attention models are racing to solve the quadratic bottleneck in transformers. Here's who …