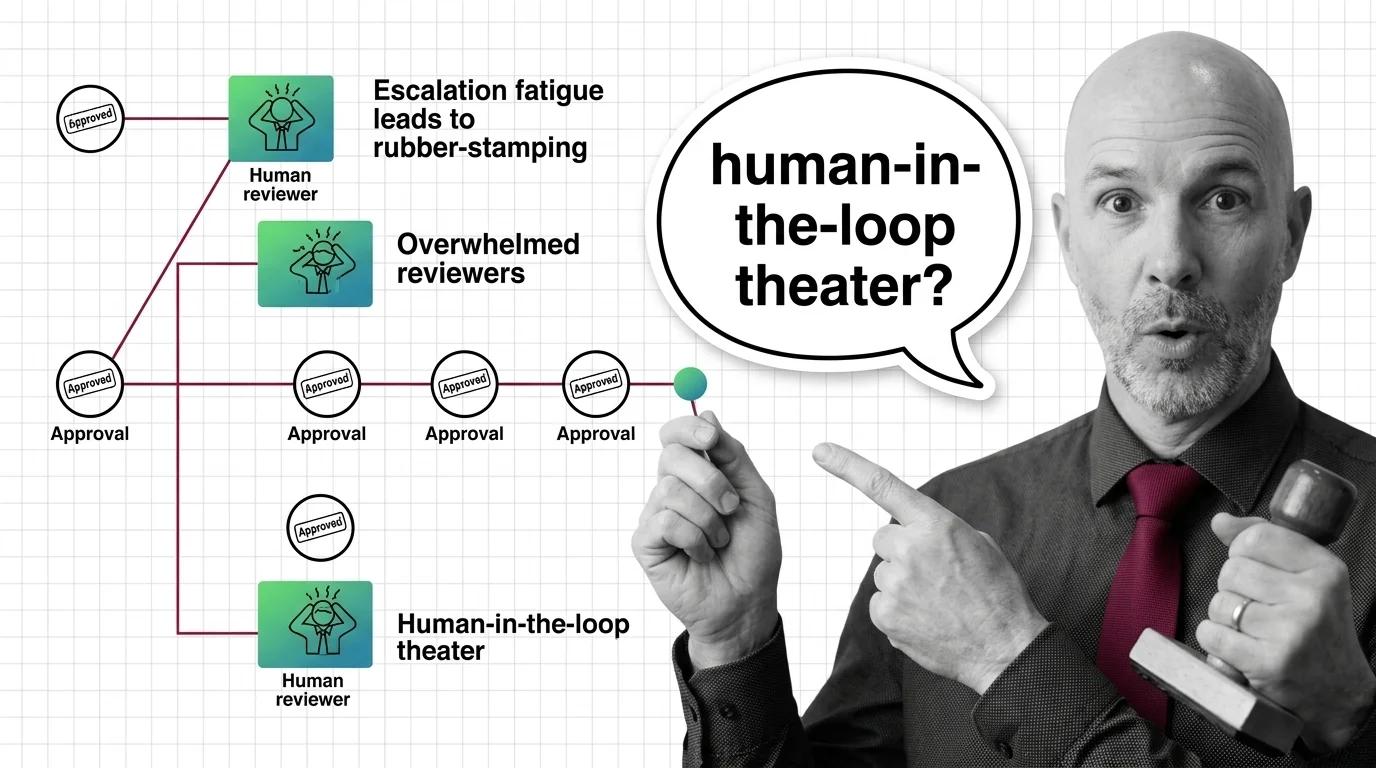

Rubber-Stamp Approvals: The Ethical Cost of Human-in-the-Loop Theater

Human-in-the-loop oversight collapses when reviewers face an approval volume they cannot keep up with. The ethical cost lands on …

When Guardrails Fail: Who Is Accountable When AI Agents Misbehave

When agent guardrails fail, accountability scatters across users, developers, and vendors. An ethical look at the vacuum …

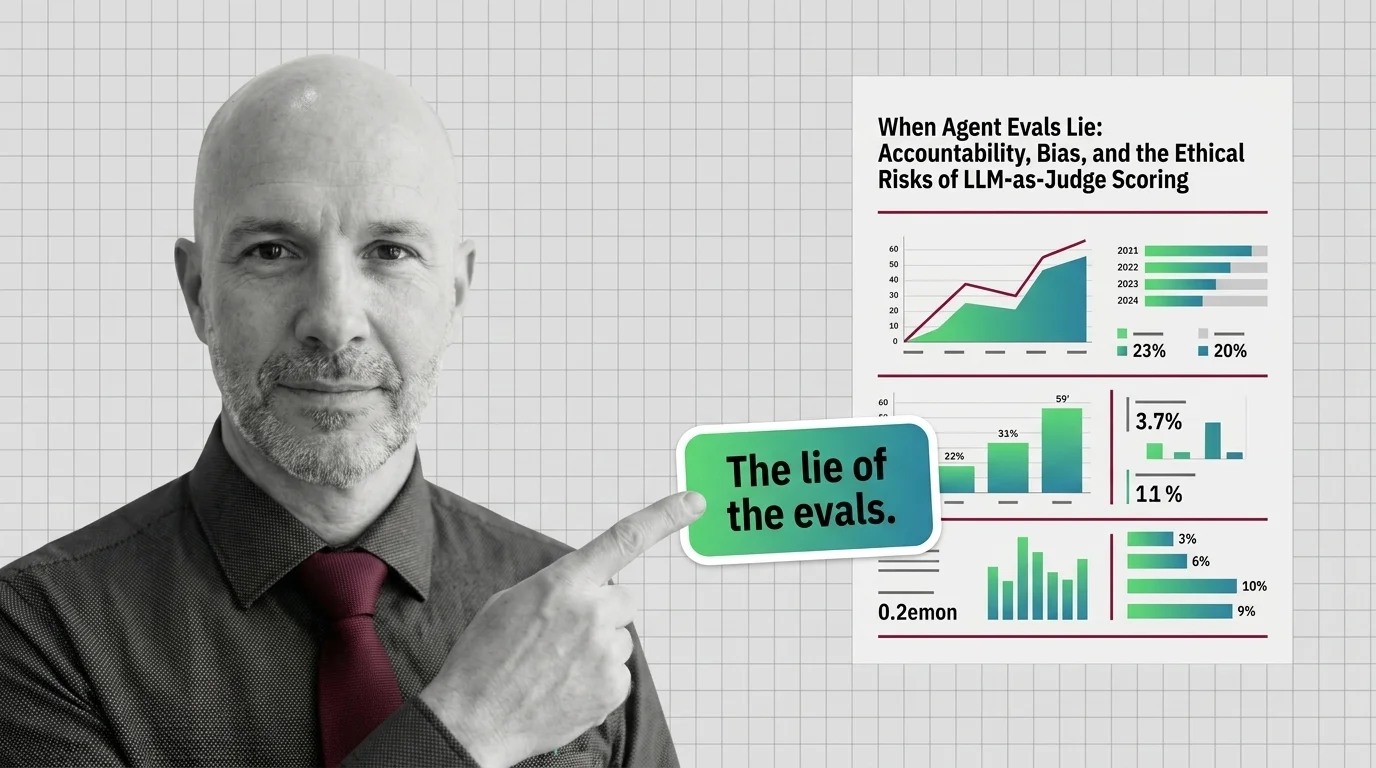

When Agent Evals Lie: The Ethics of LLM-as-Judge Scoring

LLM-as-Judge scoring is the default way teams grade AI agents. But judges carry measurable biases, blind spots, and …

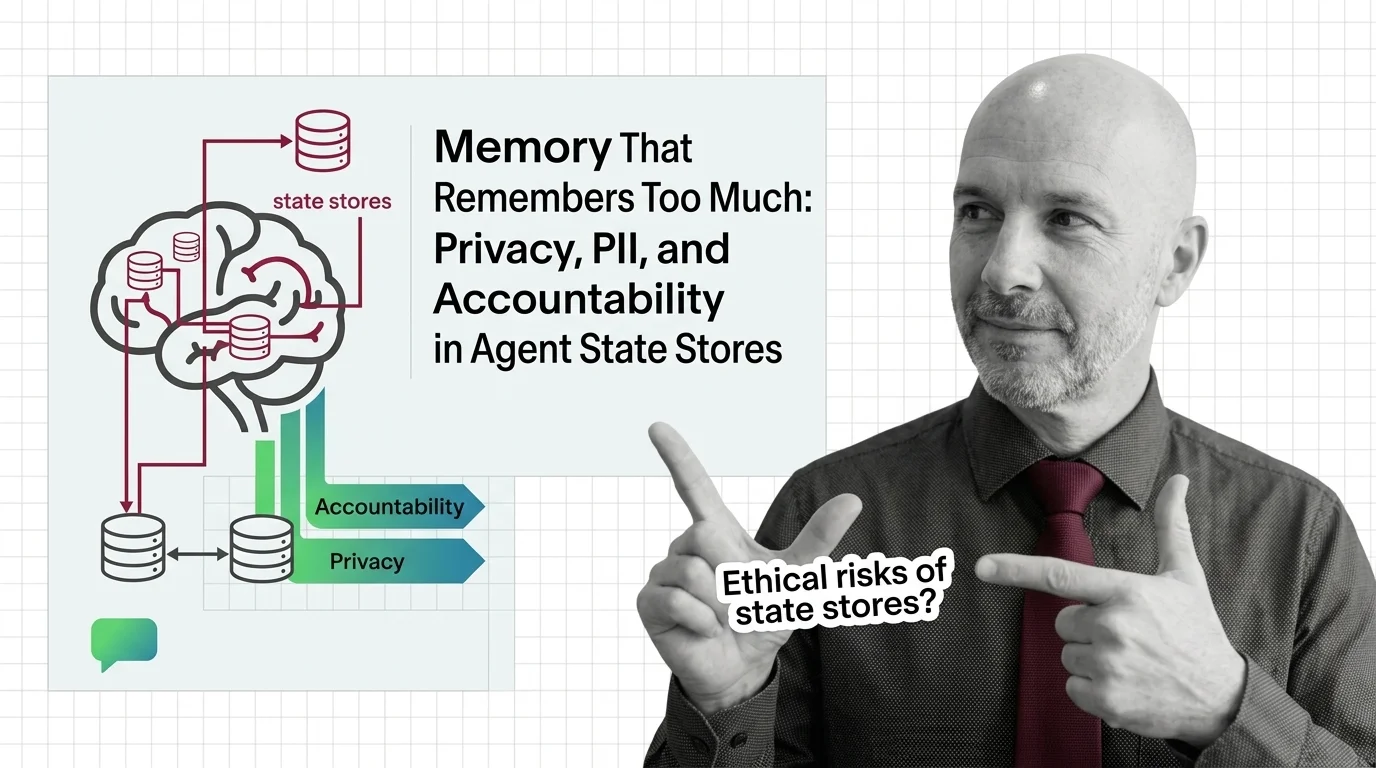

Memory That Remembers Too Much: Agent State, PII, and Accountability

Persistent agent memory turns interactions into records. As courts, regulators, and red teams collide, accountability …

Vendor Lock-In and the Hidden Ethics of Agent Frameworks

OpenAI Agents SDK and Google ADK are open source. So why is vendor lock-in in agent frameworks a deeper ethical risk …

Autonomous but Unaccountable: Ethics of Agents That Plan and Act

Autonomous AI agents plan, call tools, and act before humans can review the result. The accountability chain stays thin. …

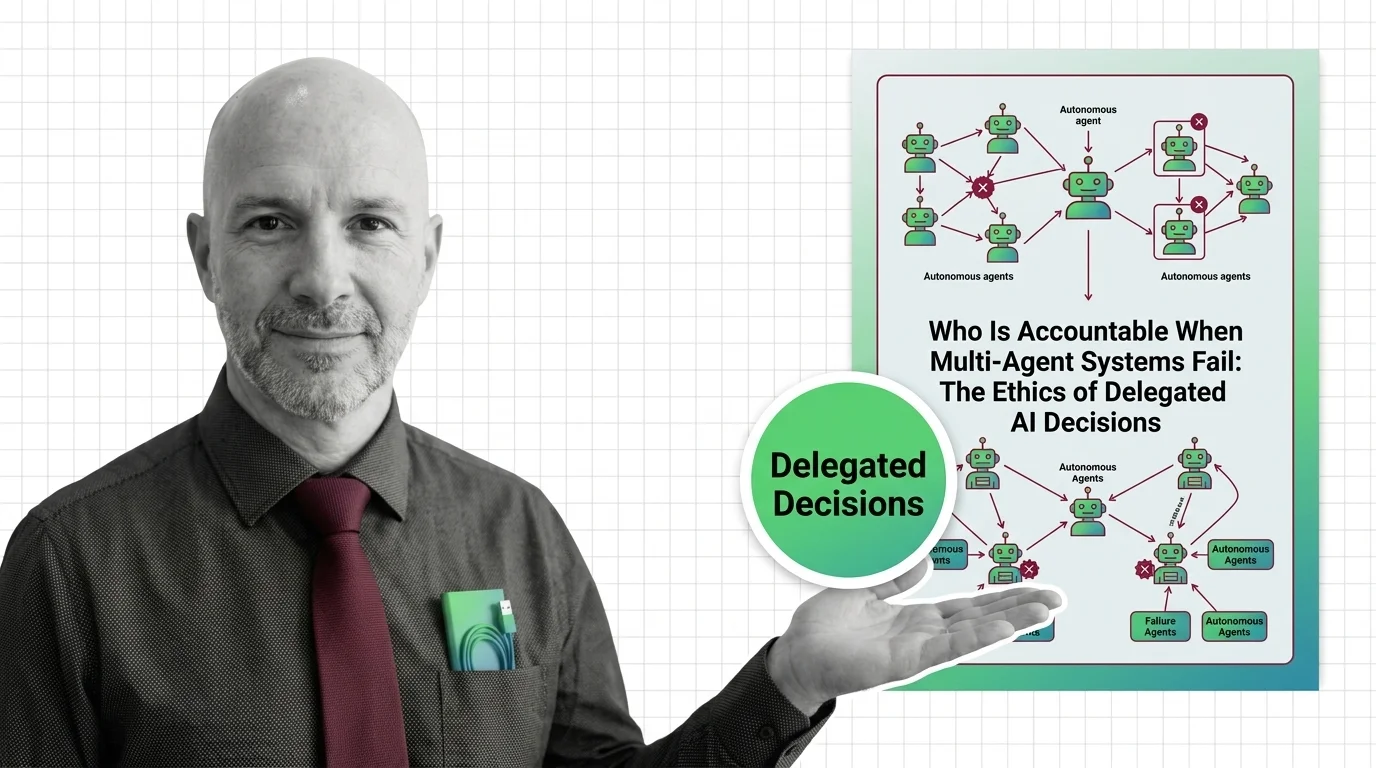

Who Is Accountable When Multi-Agent AI Systems Fail?

When multi-agent AI systems fail, accountability slips through every layer. Why delegated AI decisions create governance …

Persistent Memory, Persistent Surveillance: AI Agents That Never Forget

AI agents with persistent memory promise convenience but build a permanent record of you. The ethical tension between …

Style Theft and Copyright Leakage: Ethics of Artist-Name Prompts

When you prompt 'in the style of Greg Rutkowski,' is it tribute or appropriation? An ethical look at artist-name tokens …

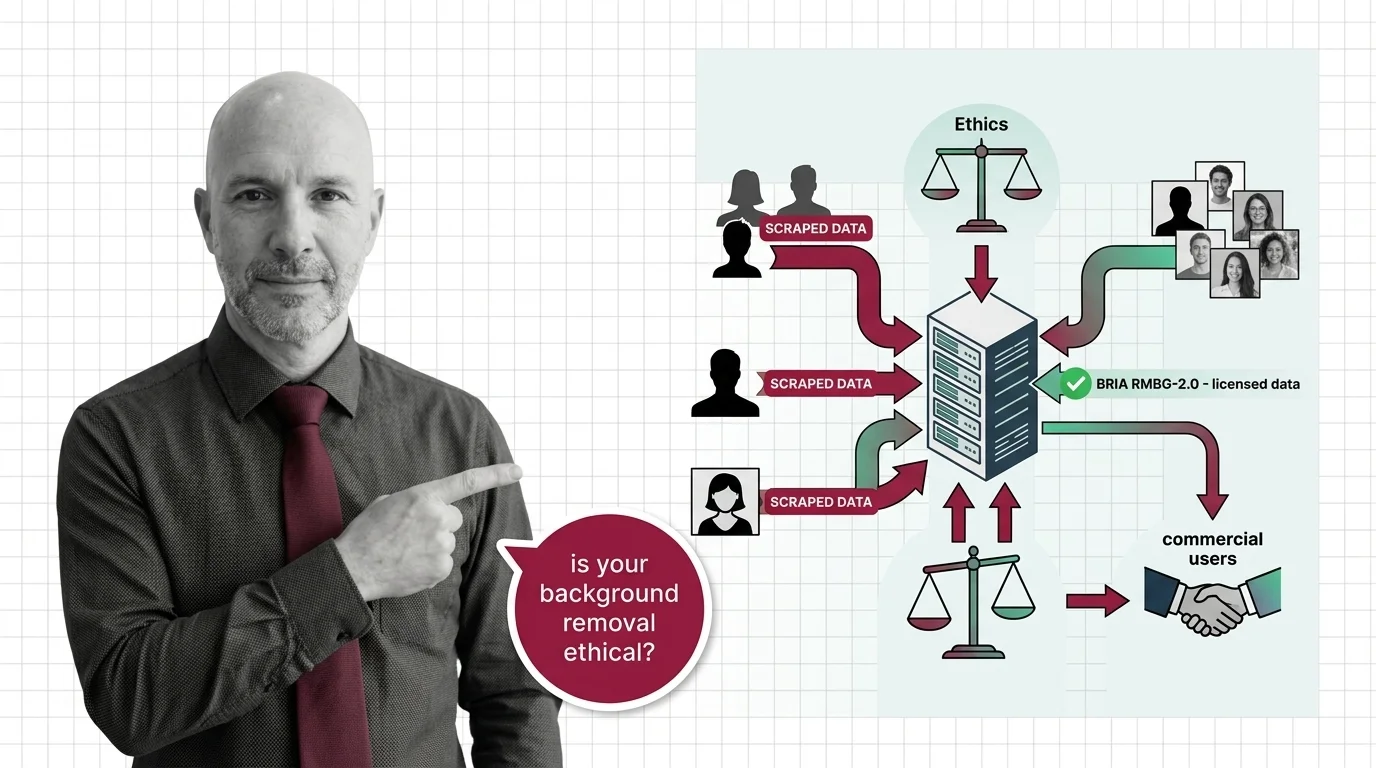

Scraped Photos, Stripped Subjects: The Training Data Ethics Behind Every Background Removal API

Background removal APIs strip subjects from scraped photos. Only one top model trains on licensed data. The ethics …

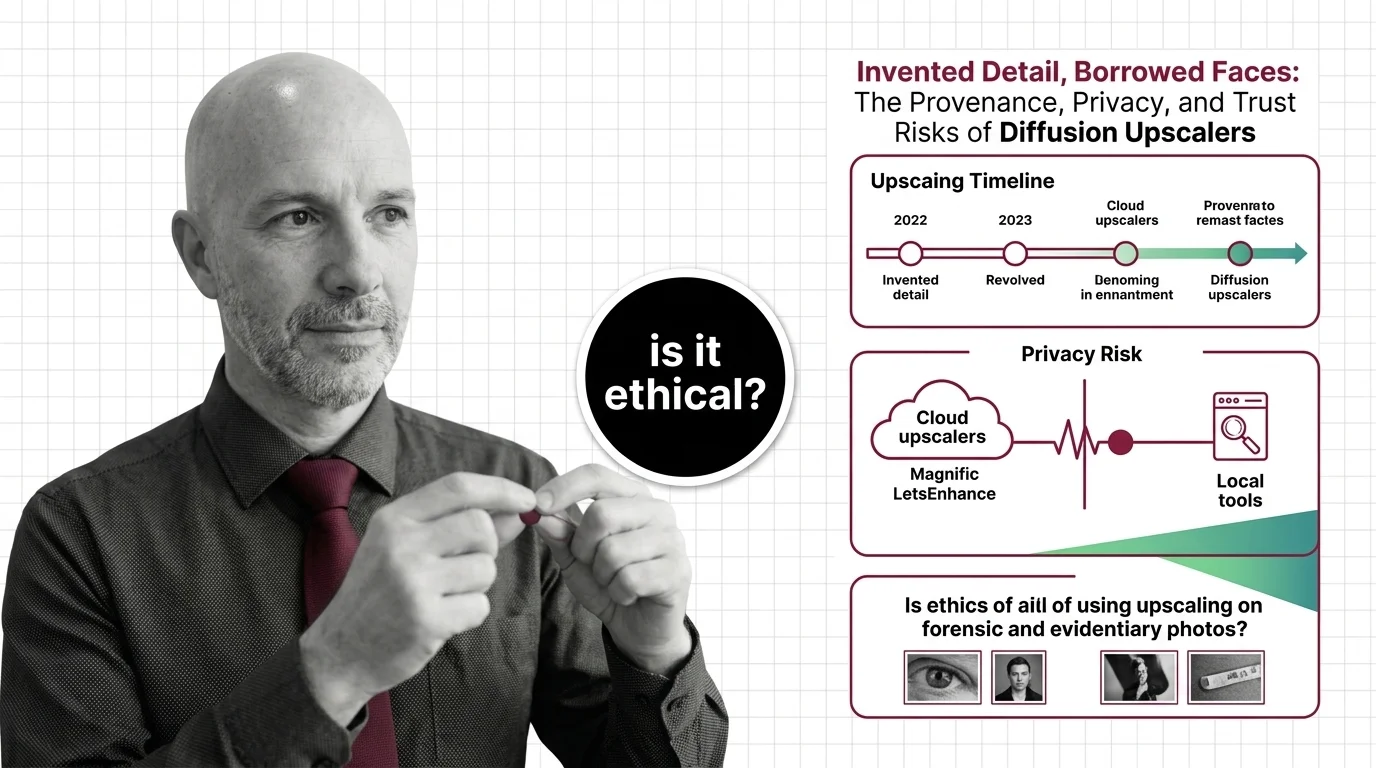

Invented Detail, Borrowed Faces: Diffusion Upscaler Risks

Diffusion upscalers invent detail and borrow faces from biased training data. The provenance, privacy, and forensic …

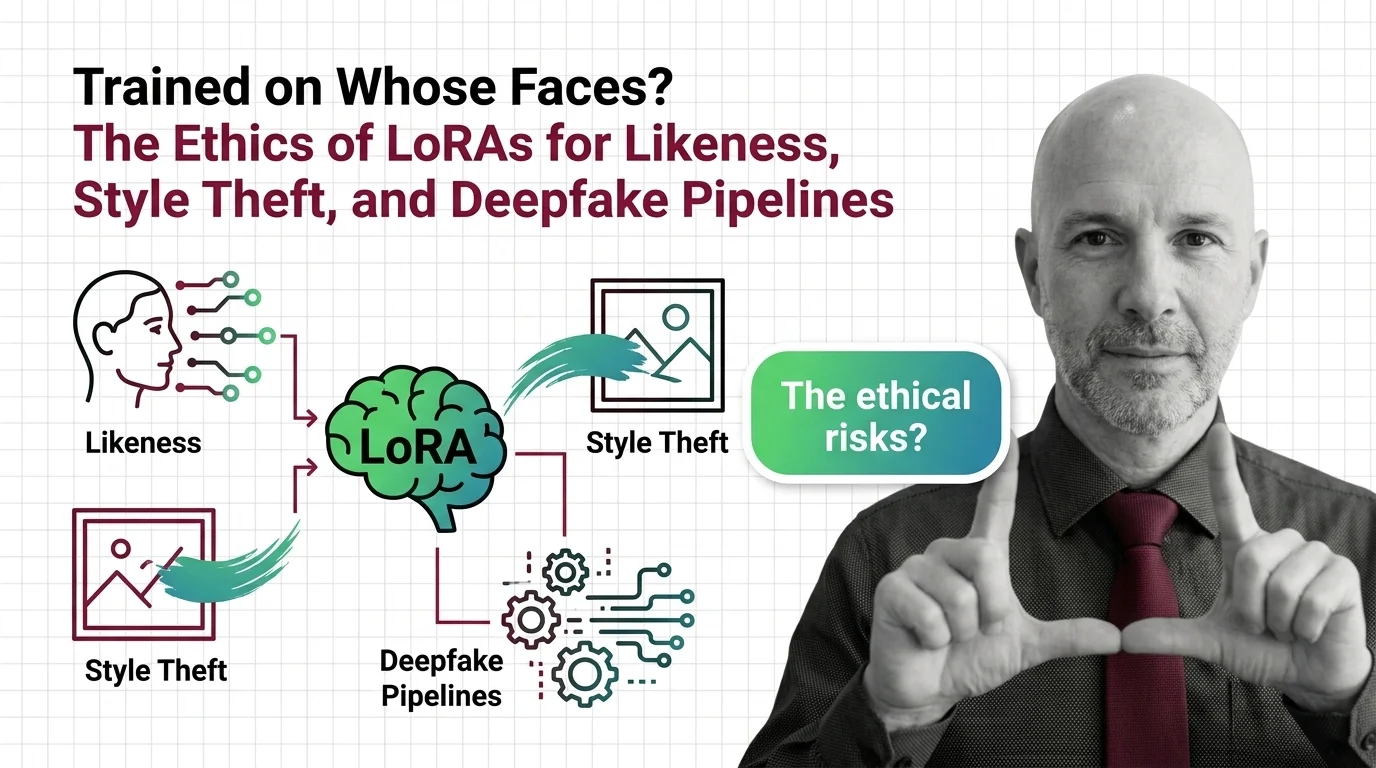

Trained on Whose Faces? LoRA Ethics: Likeness, Style Theft, Deepfakes

LoRAs made it possible to fine-tune a model on any face in fifteen minutes. The consent gap stopped being hypothetical the moment …

Deepfakes, Copyright, Consent: The Ethical Reckoning of AI Image Editing

AI image editing has industrialized the act of lifting someone's likeness. Consent law, C2PA metadata, and new …

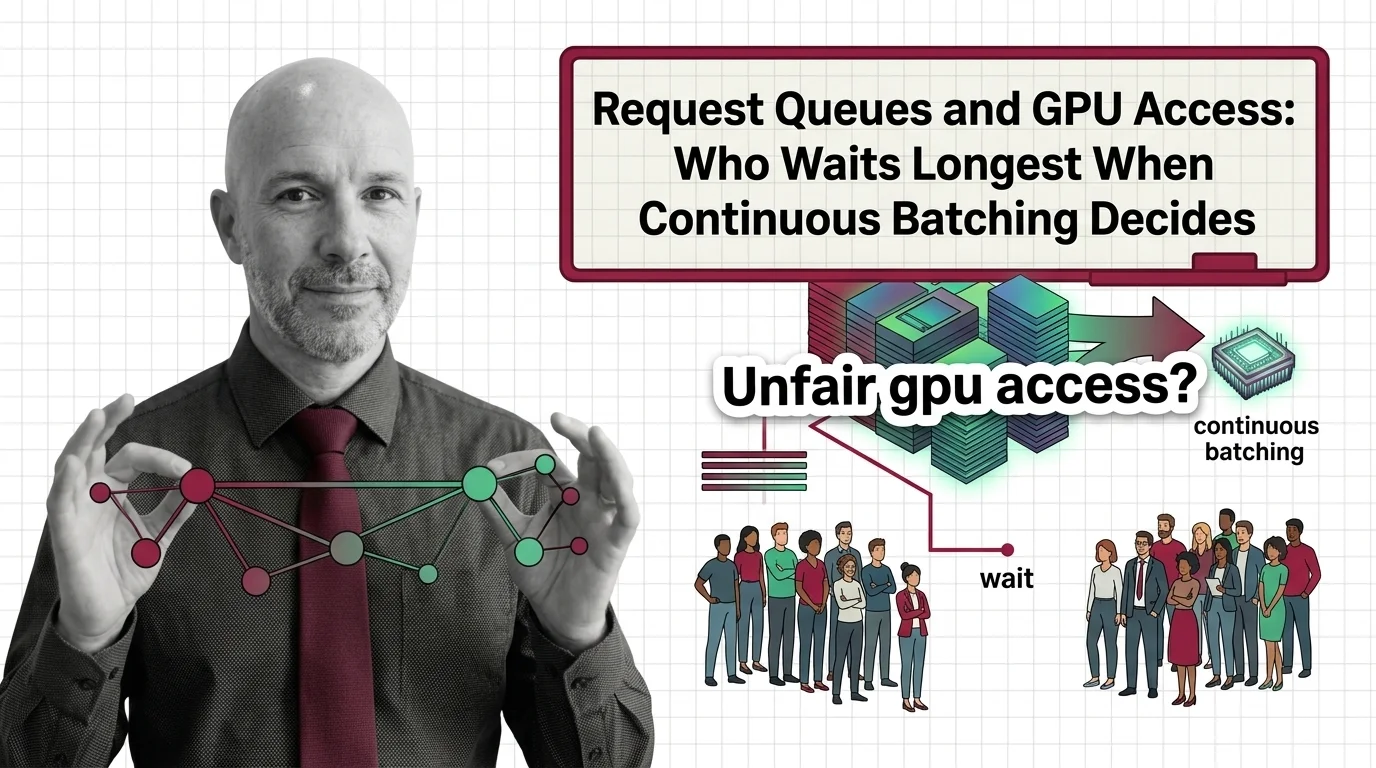

Request Queues and GPU Access: Who Waits Longest When Continuous Batching Decides

Continuous batching boosts GPU throughput, but its scheduling quietly decides who waits. Examining fairness, priority, …