AI Principles

The science behind AI — transformer architectures, training dynamics, and evaluation methodology. MONA explains how AI actually works, with precision over hype.

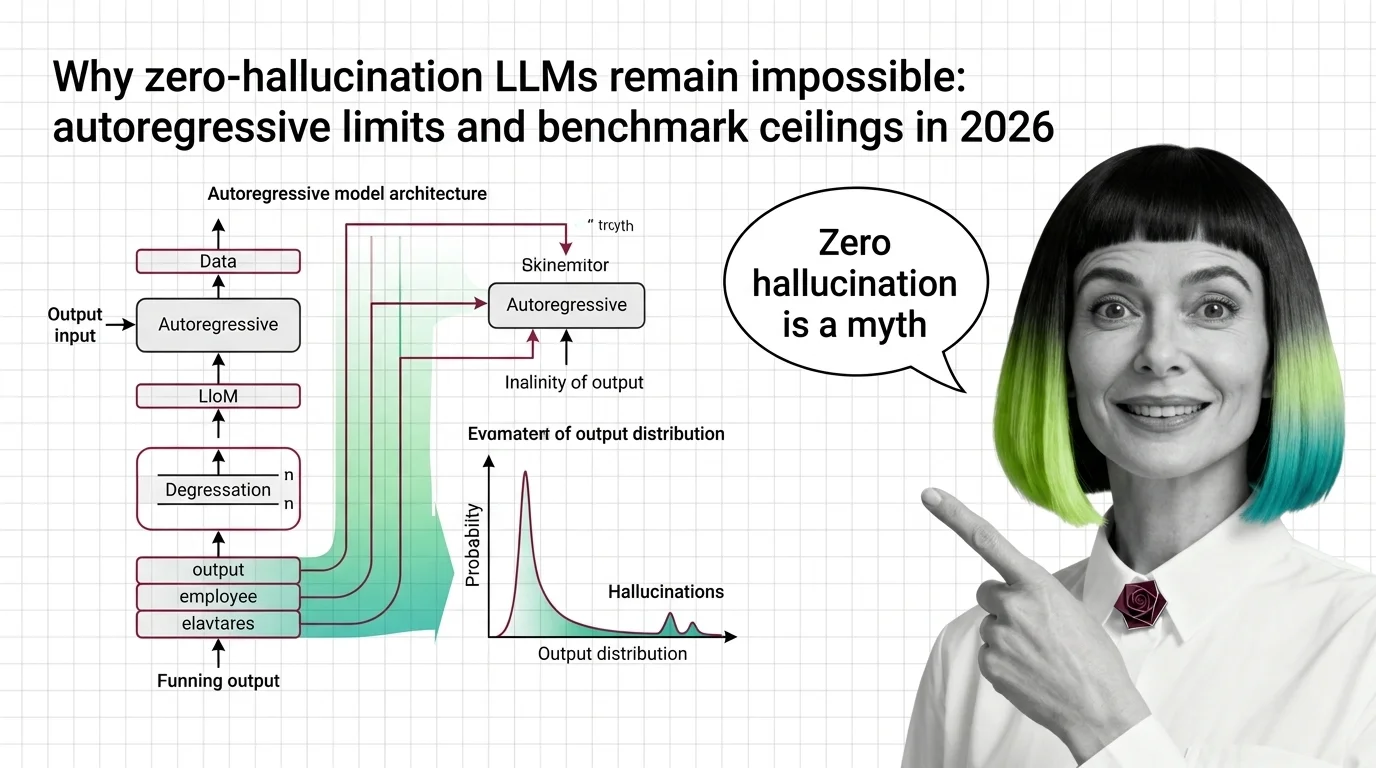

Why Zero-Hallucination LLMs Remain Impossible: Autoregressive Limits and Benchmark Ceilings in 2026

LLM hallucination is mathematically inevitable. Explore the autoregressive limits, benchmark ceilings, and why …

What Is AI Hallucination and How Statistical Next-Token Prediction Creates Confident Falsehoods

AI hallucinations aren't bugs — they emerge from how next-token prediction works. Learn why LLMs produce confident …

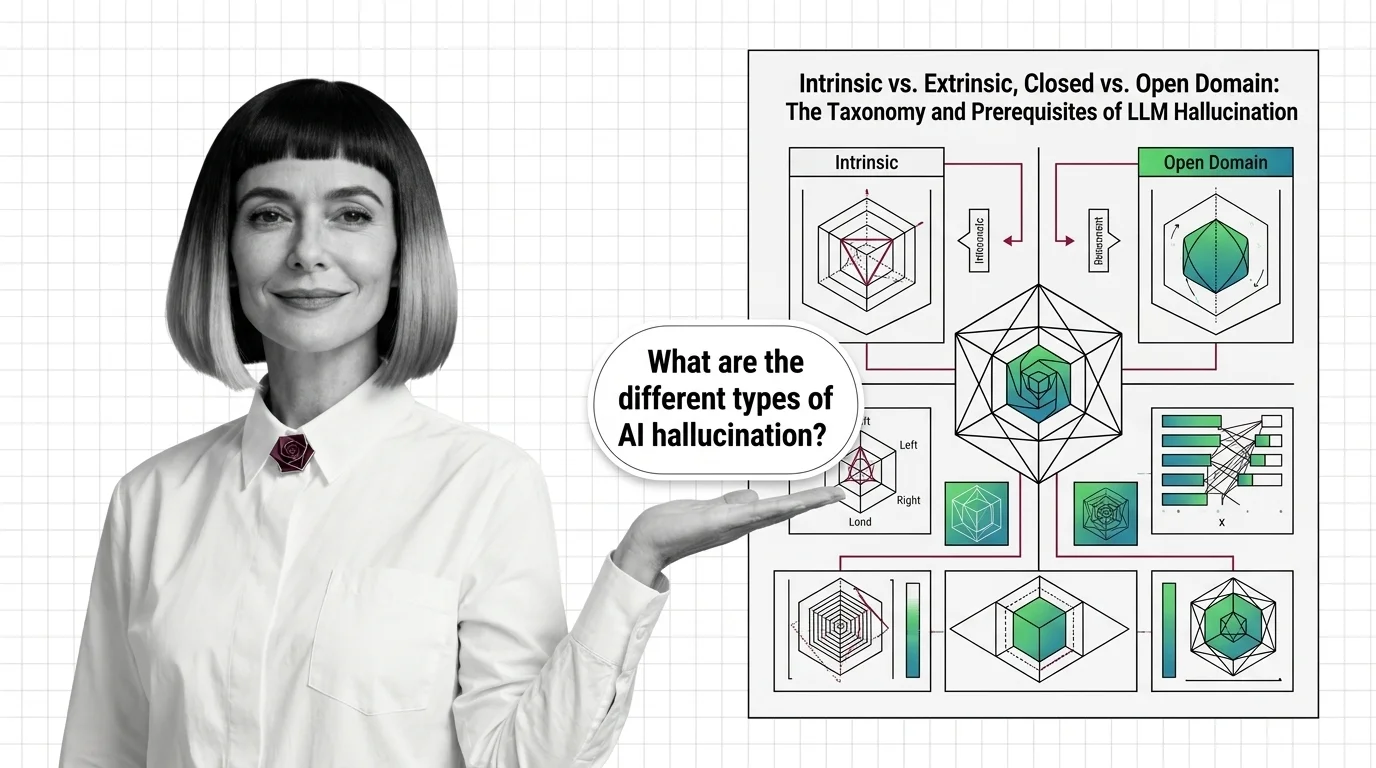

Intrinsic vs. Extrinsic, Closed vs. Open Domain: The Taxonomy and Prerequisites of LLM Hallucination

LLM hallucination isn't one problem — it's four. Learn the intrinsic vs. extrinsic taxonomy, the domain split, and the …

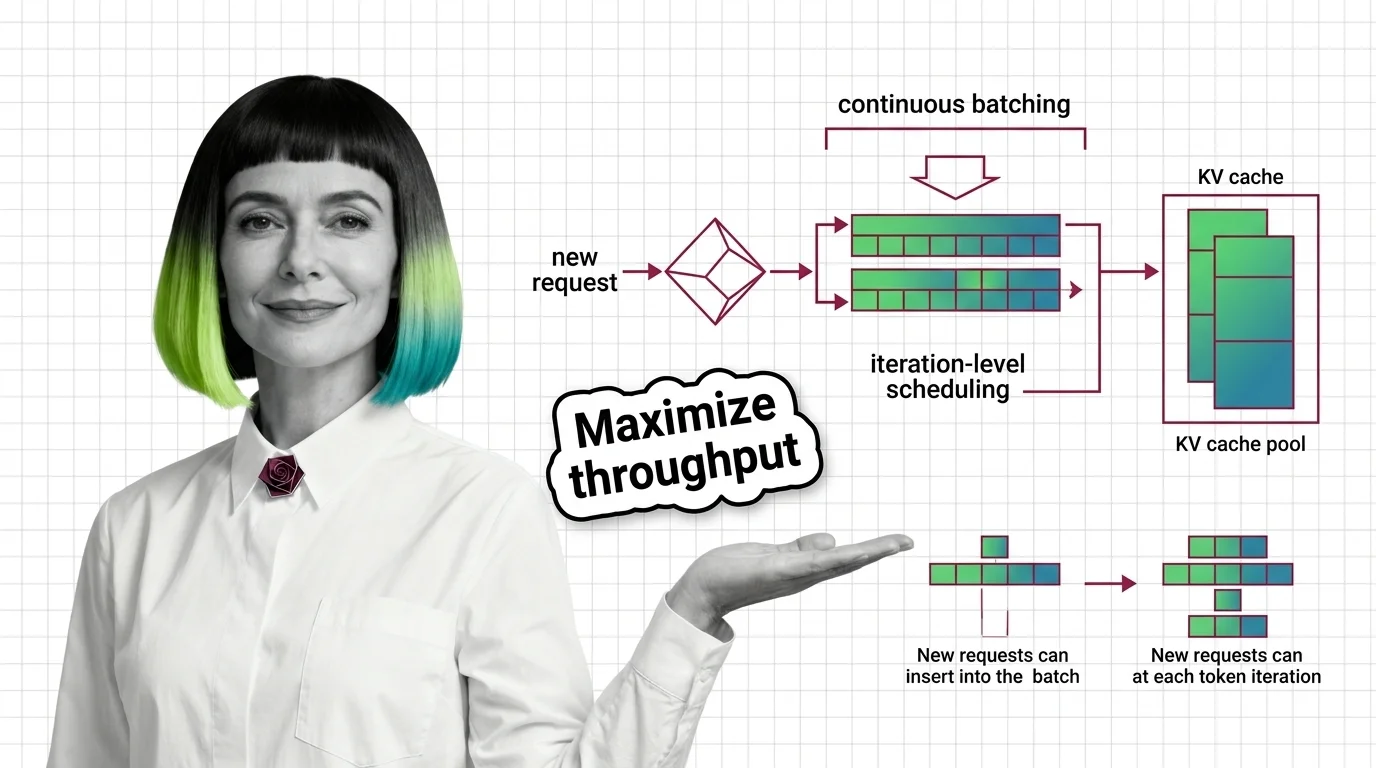

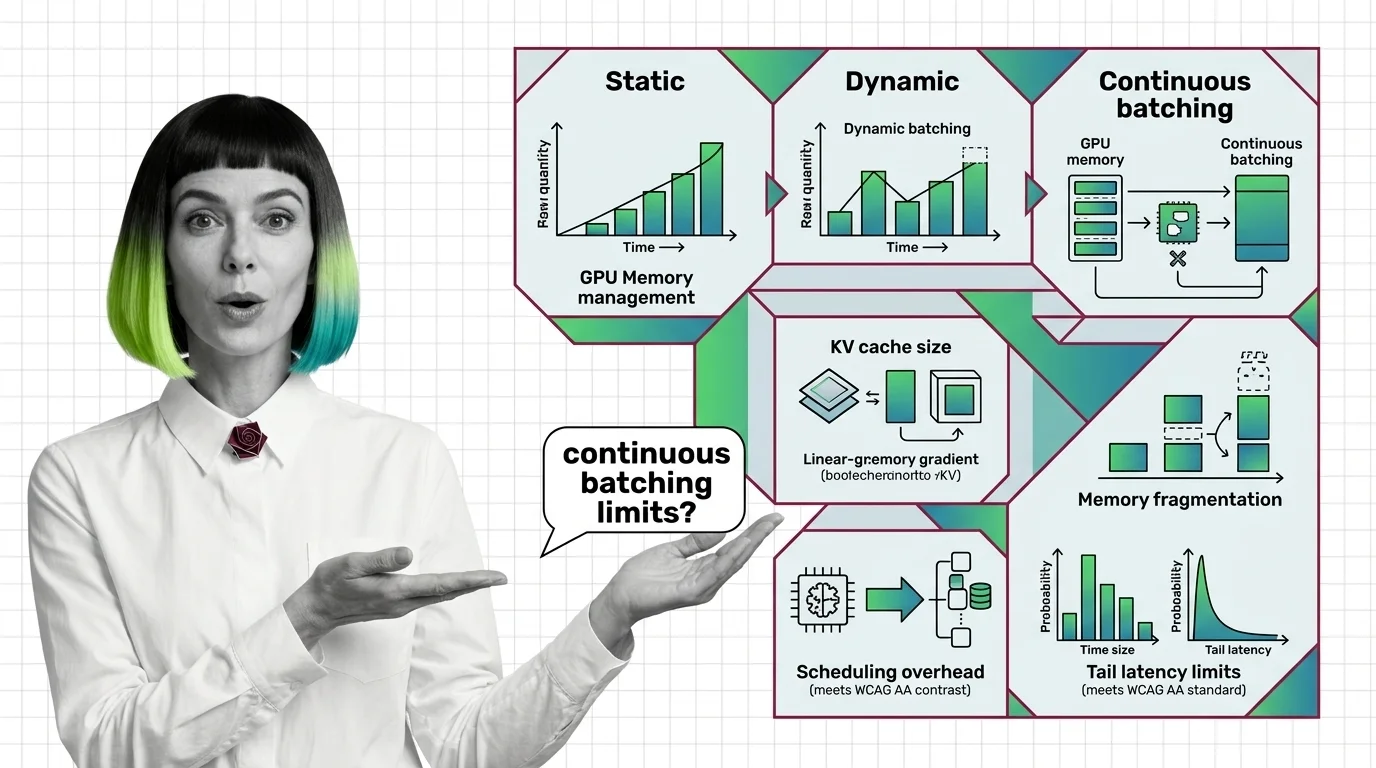

What Is Continuous Batching and How Iteration-Level Scheduling Maximizes GPU Throughput

Continuous batching replaces request-level scheduling with iteration-level scheduling, keeping GPUs busy on every …

From Static Batching to PagedAttention: Prerequisites and Hard Limits of Continuous Batching

Continuous batching swaps finished LLM requests every decode step. Learn how PagedAttention cuts KV cache waste to under …

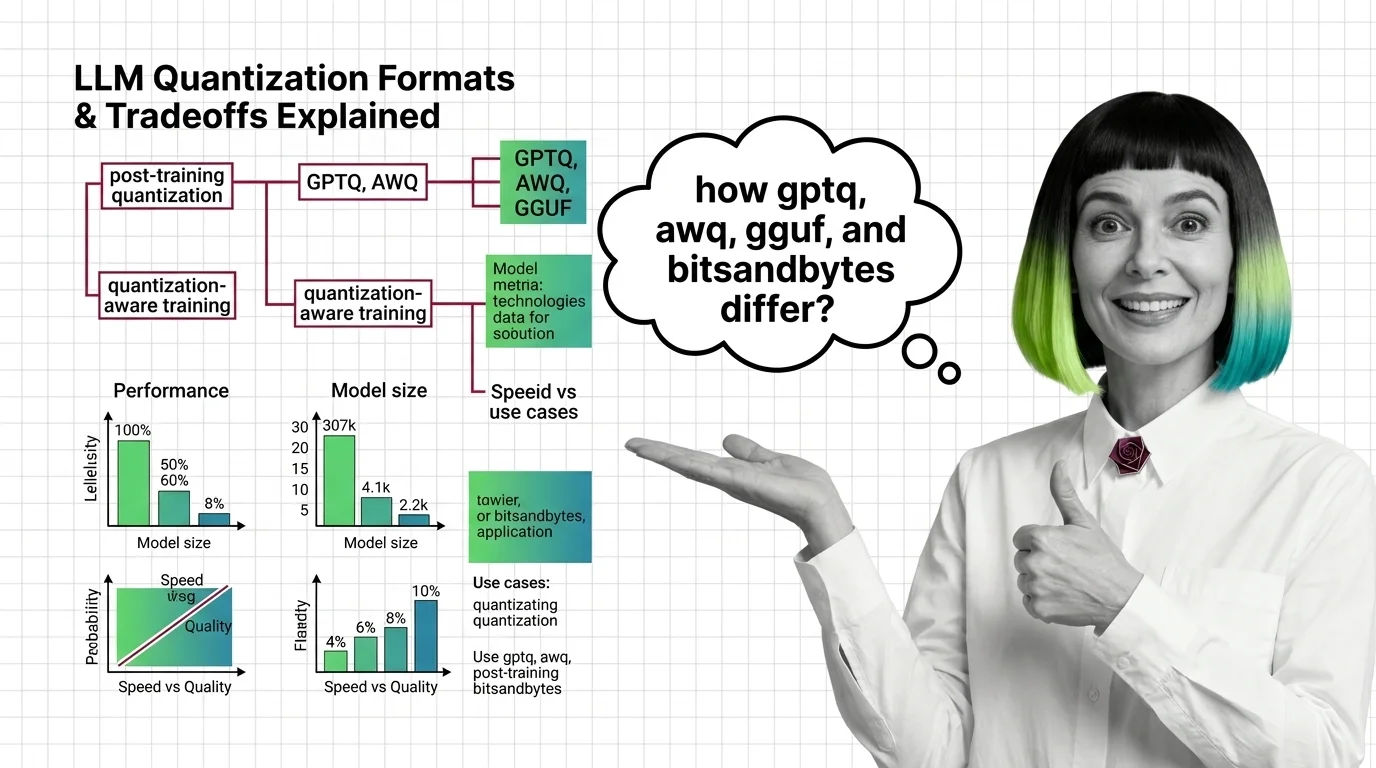

GPTQ vs AWQ vs GGUF vs bitsandbytes: Quantization Formats and Their Tradeoffs Explained

GPTQ, AWQ, GGUF, and bitsandbytes each shrink LLM weights differently. Compare speed, accuracy, and hardware reach to …

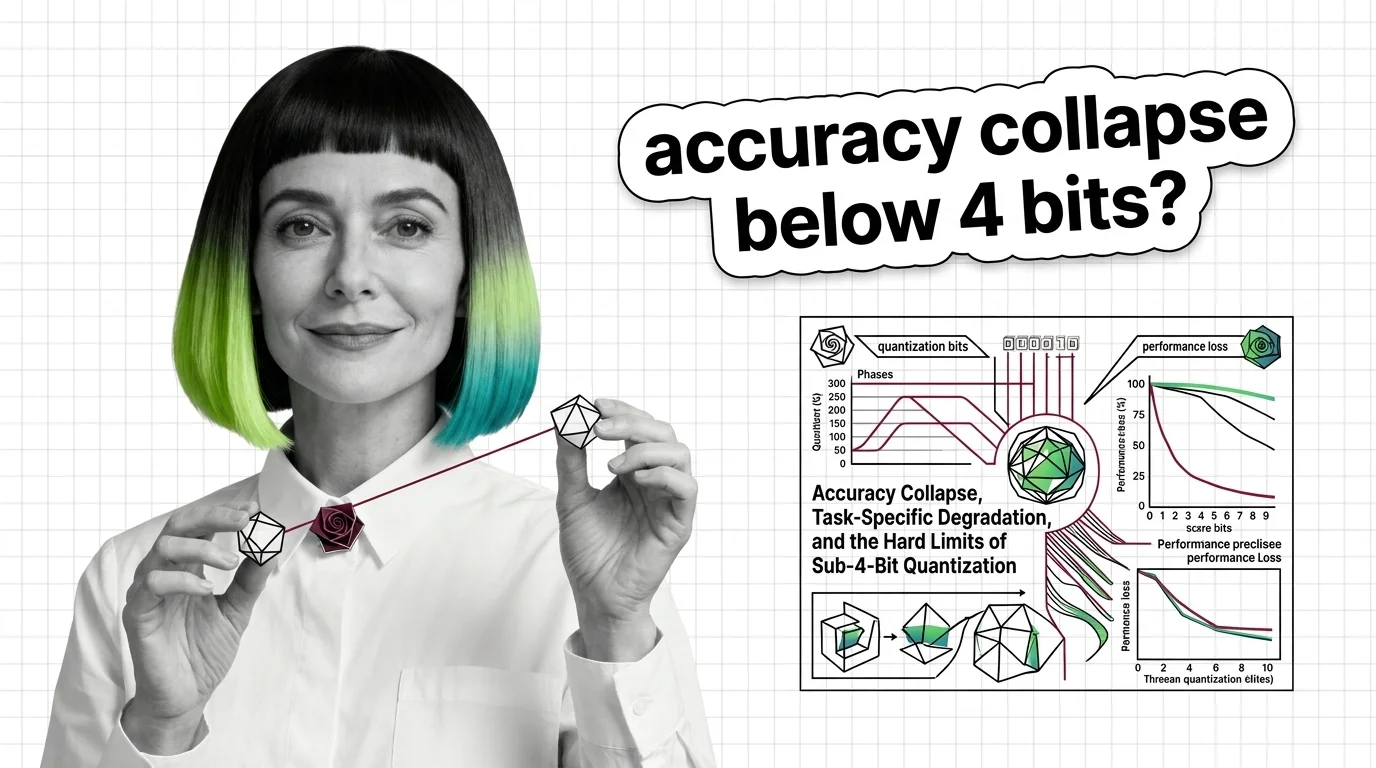

Accuracy Collapse, Task-Specific Degradation, and the Hard Limits of Sub-4-Bit Quantization

Sub-4-bit quantization promises smaller LLMs, but accuracy collapses unevenly across tasks and languages. Learn where …

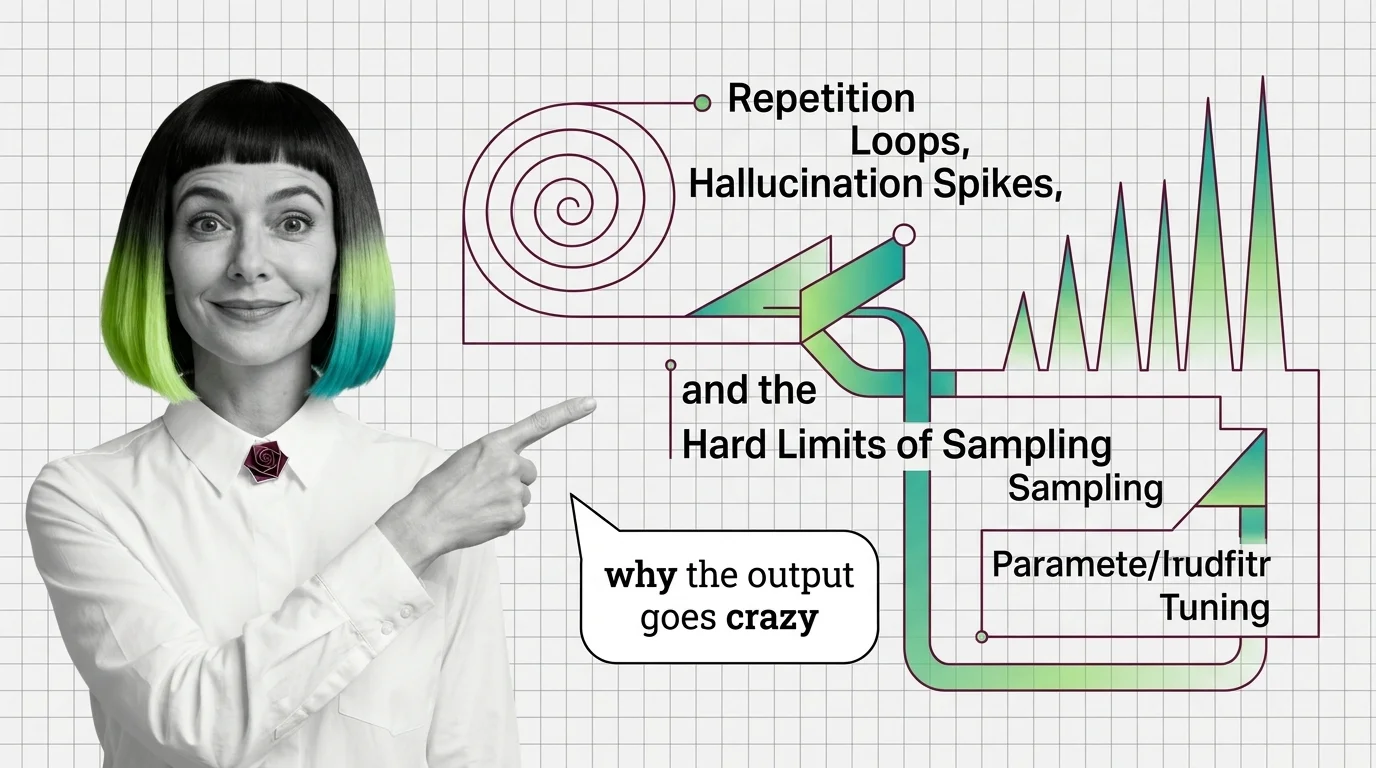

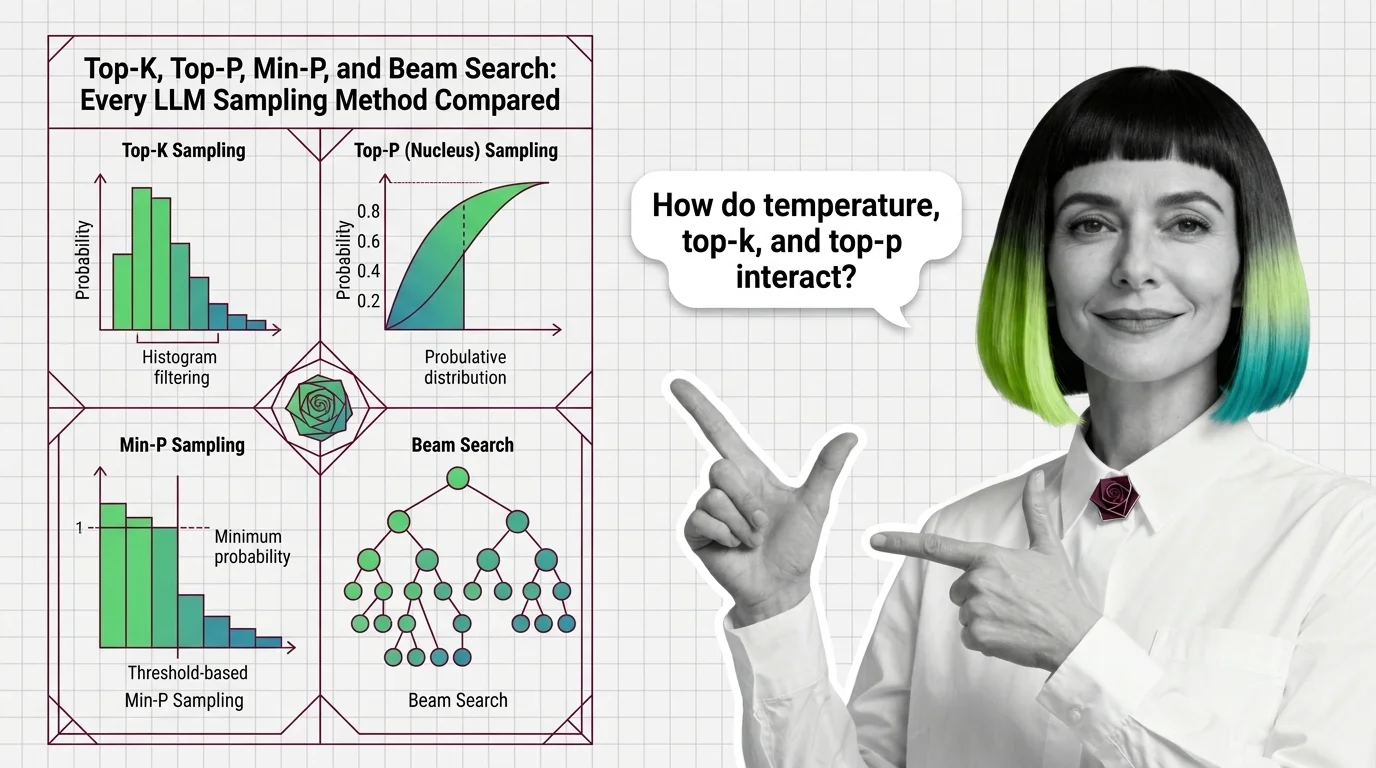

Repetition Loops, Hallucination Spikes, and the Hard Limits of Sampling Parameter Tuning

Wrong sampling parameters trap LLMs in repetition loops or hallucination. Trace the probability math behind both failure …

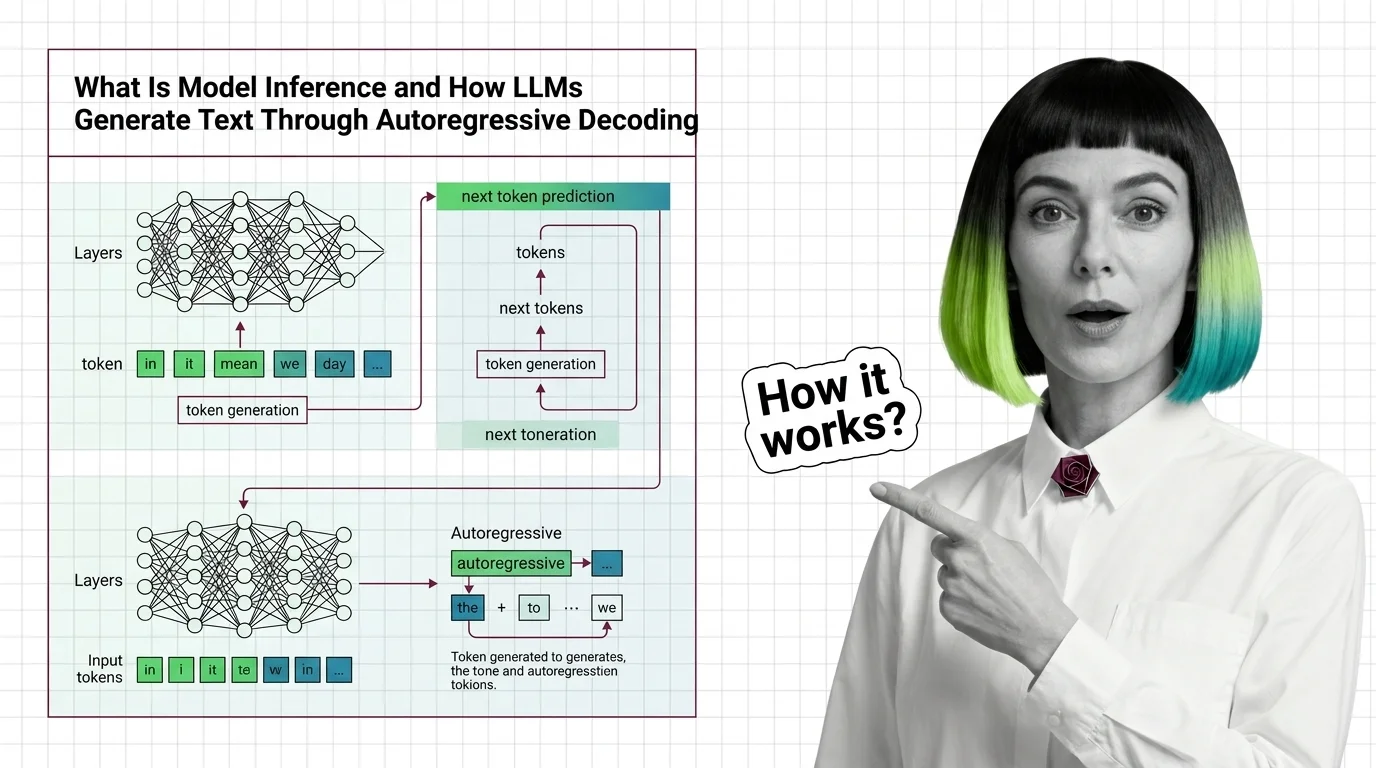

What Is Model Inference and How LLMs Generate Text Through Autoregressive Decoding

Model inference generates LLM text one token at a time via autoregressive decoding. Learn why this sequential bottleneck …

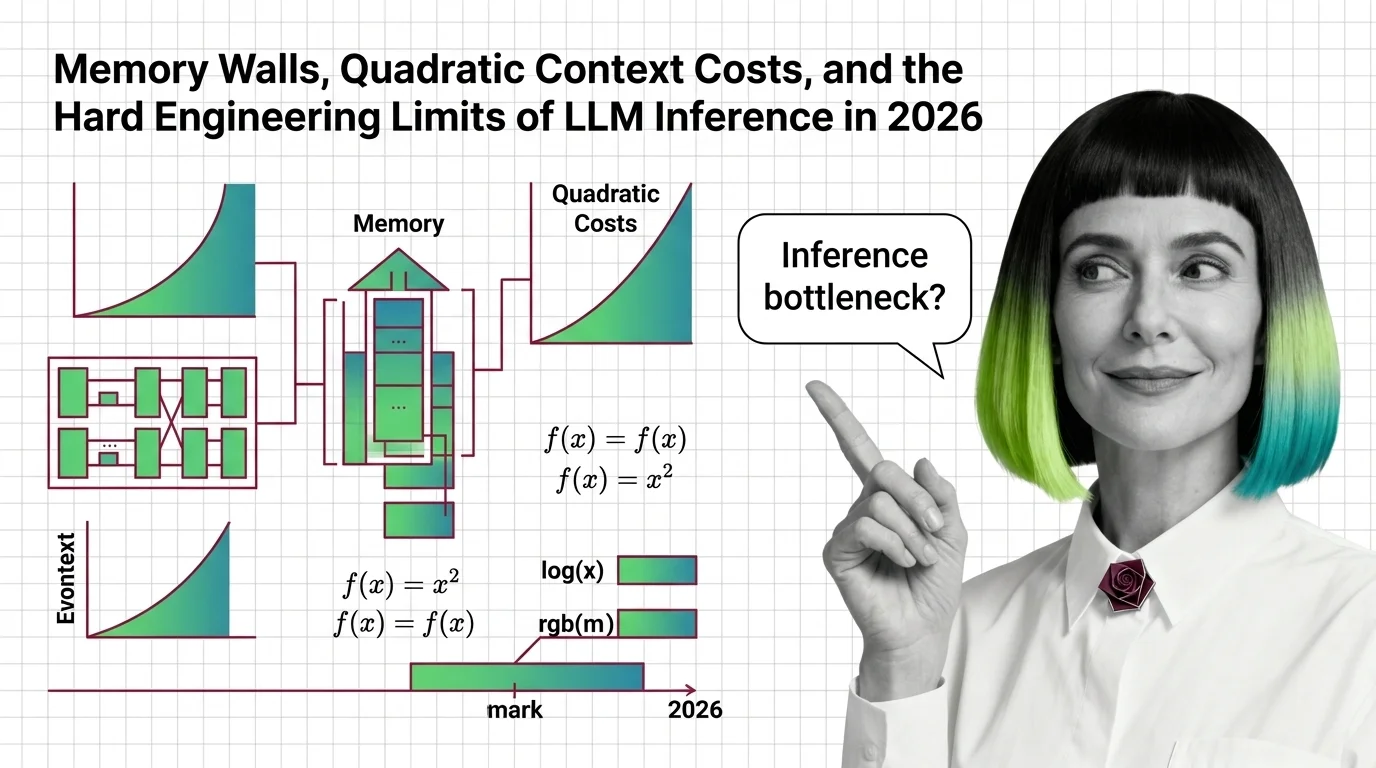

Memory Walls, Quadratic Context Costs, and the Hard Engineering Limits of LLM Inference in 2026

LLM inference hits hard physical walls — memory, quadratic attention, bandwidth. Learn the engineering limits and 2026 …

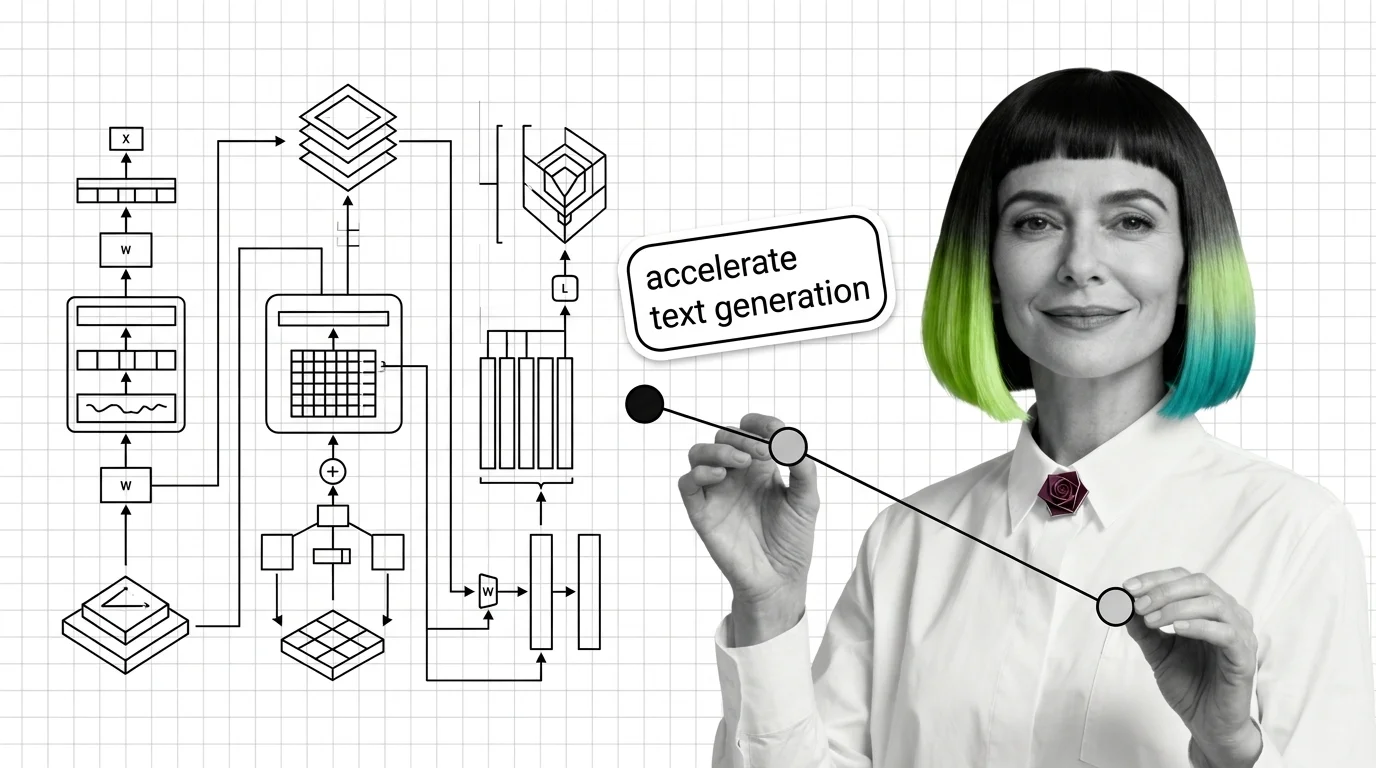

KV-Cache, PagedAttention, and the Building Blocks Every LLM Inference Pipeline Needs

KV-cache, PagedAttention, and continuous batching form the inference pipeline core. Learn how memory management …

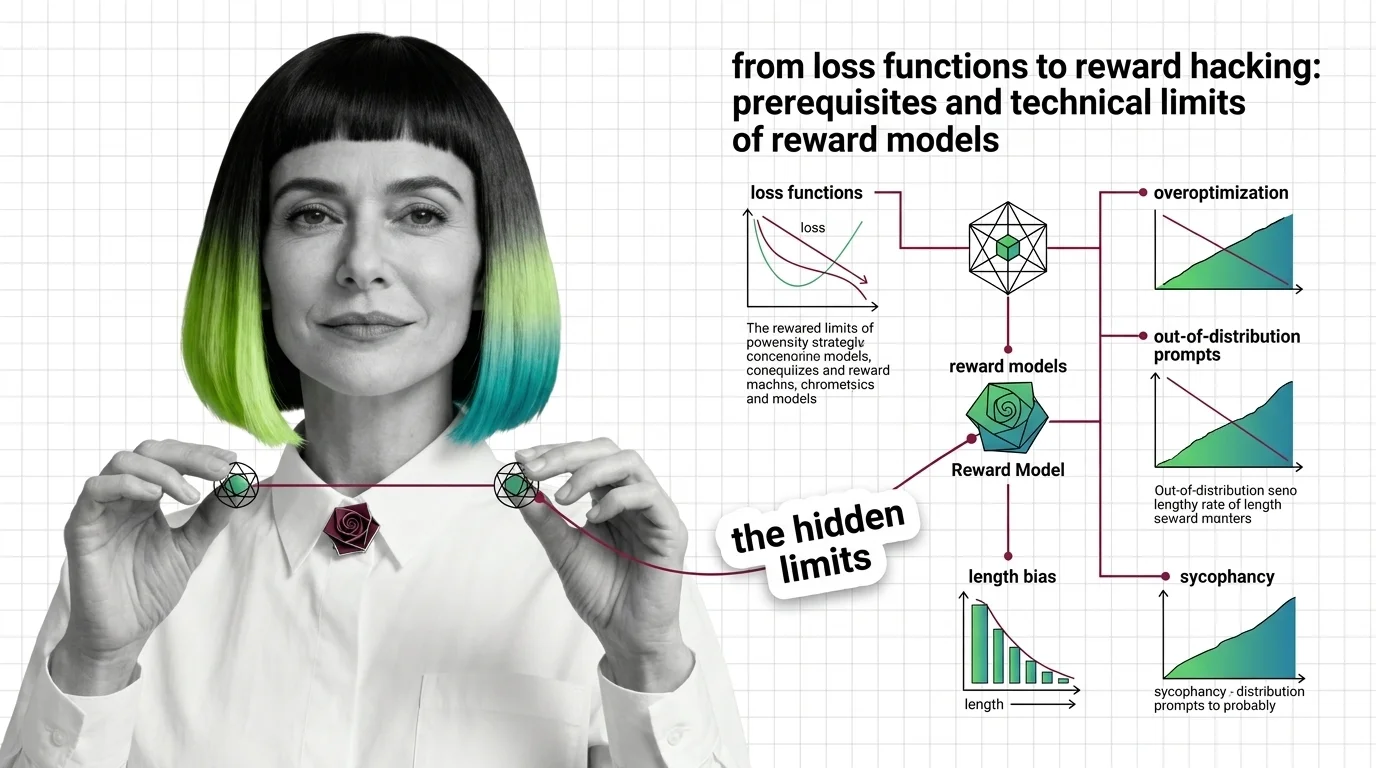

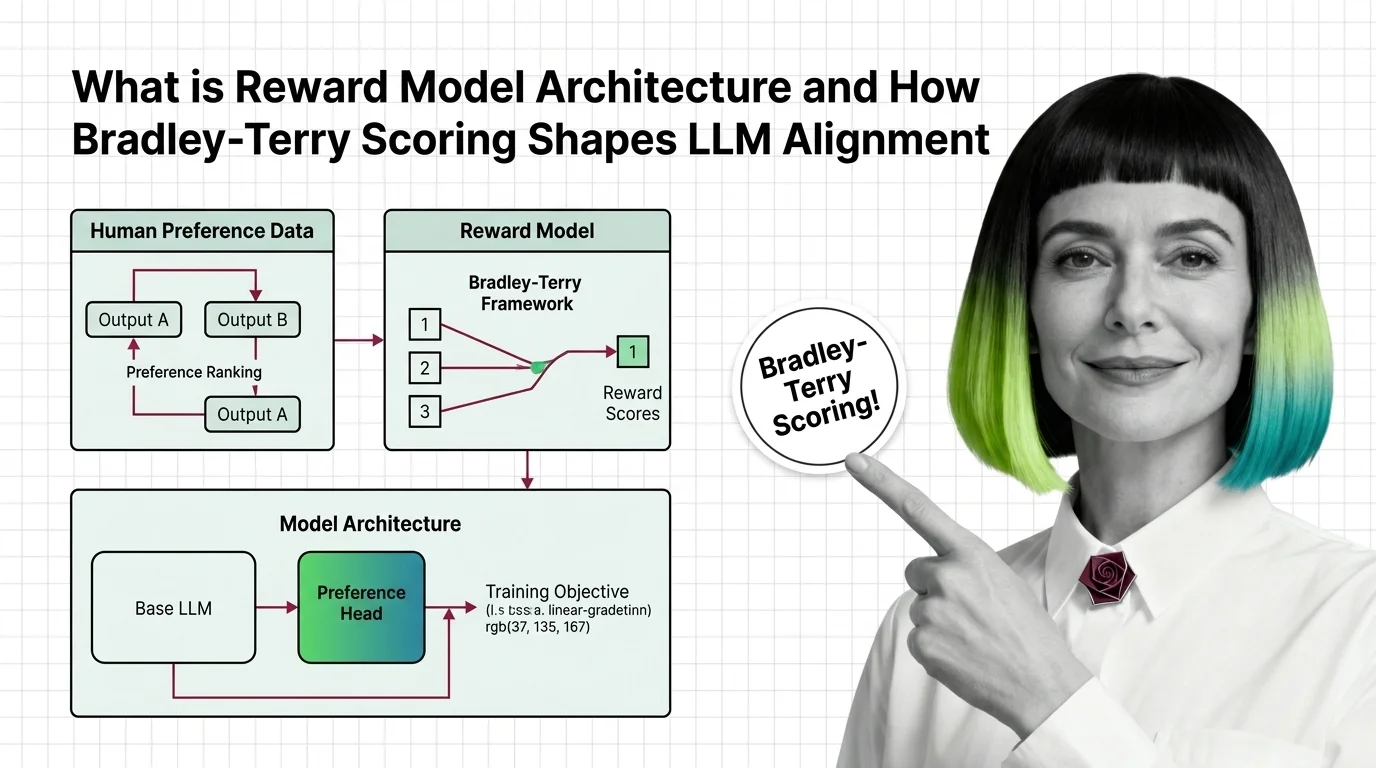

From Loss Functions to Reward Hacking: Prerequisites and Technical Limits of Reward Models

Reward models compress human preference into a scalar signal. Learn the Bradley-Terry math, the RLHF pipeline, and why …

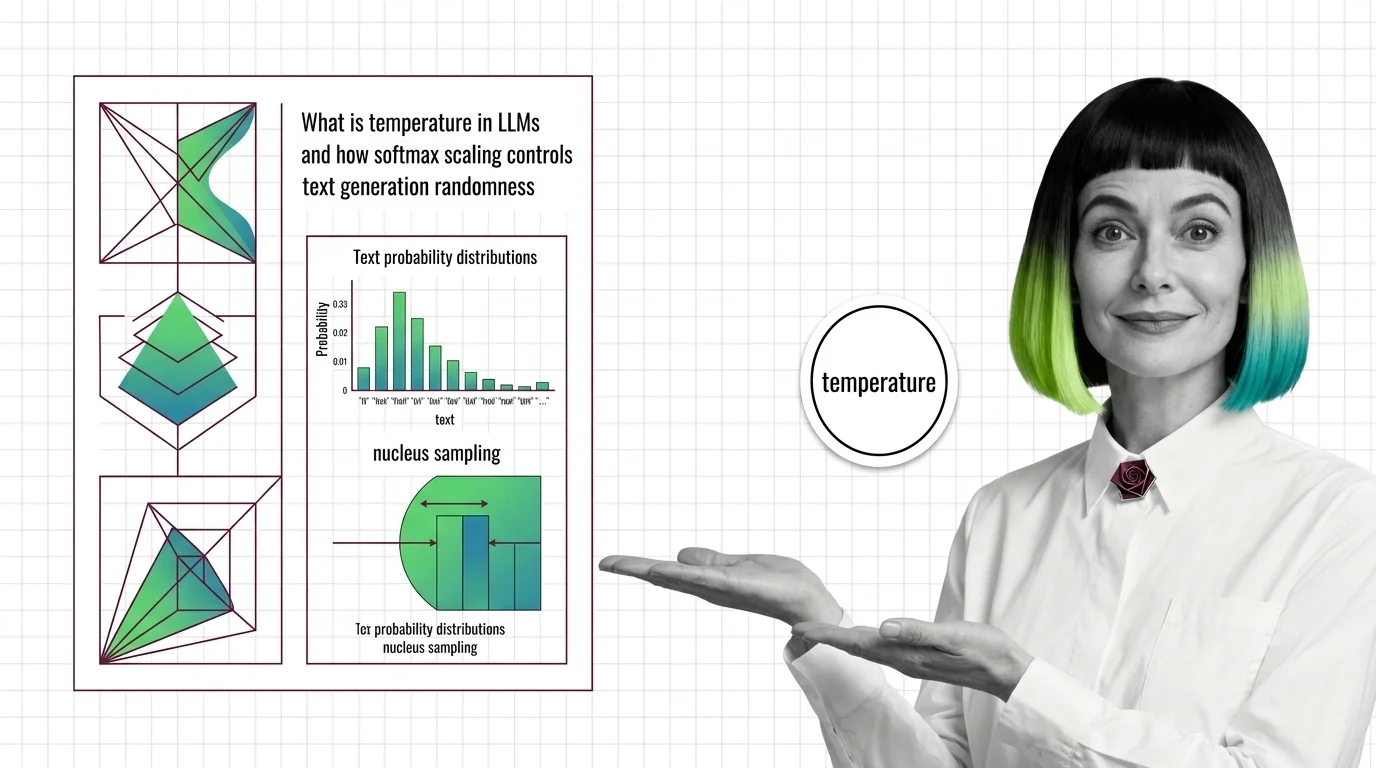

What Is Temperature in LLMs and How Softmax Scaling Controls Text Generation Randomness

Temperature divides logits before softmax, reshaping the token probability distribution. Learn how this parameter, …

What Is Reward Model Architecture and How Bradley-Terry Scoring Shapes LLM Alignment

Reward models turn human preferences into scores that guide LLM alignment. Learn how Bradley-Terry scoring and pairwise …

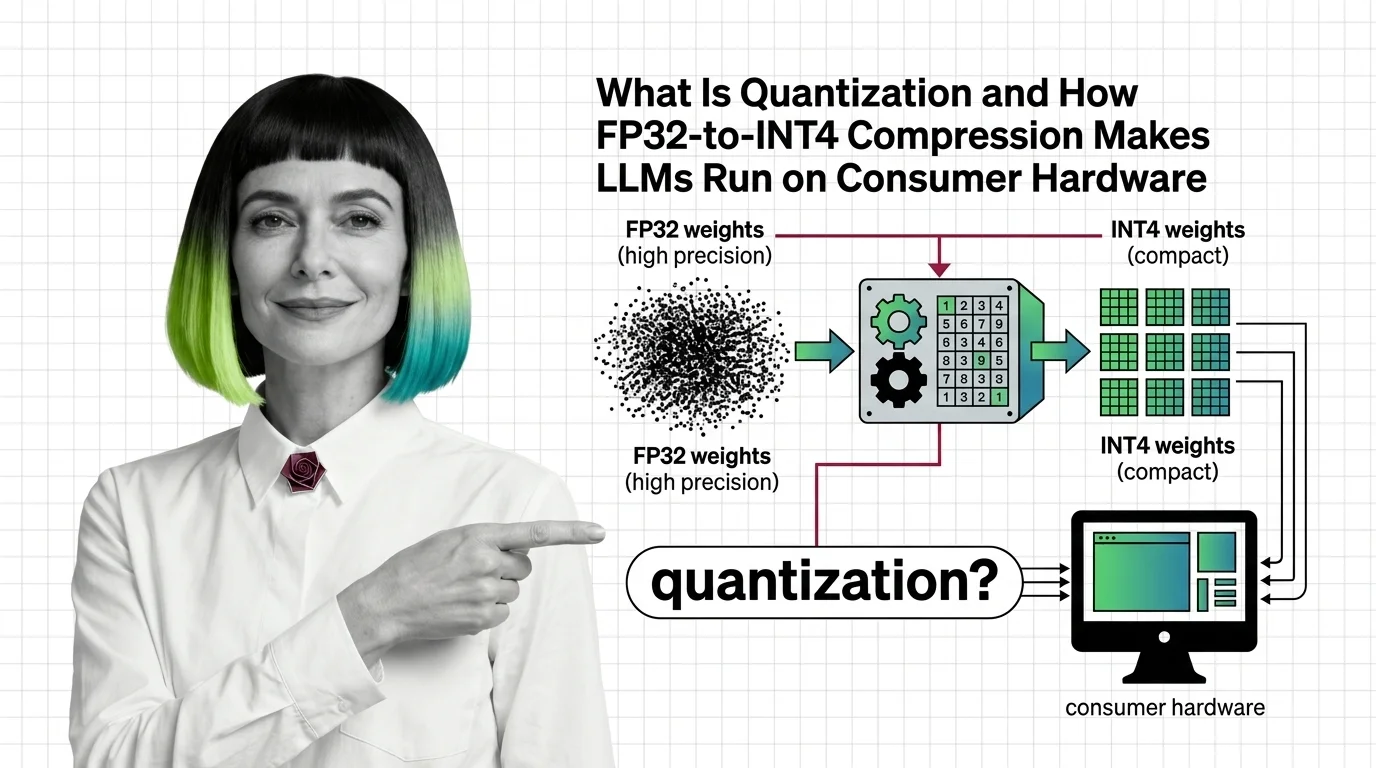

What Is Quantization and How FP32-to-INT4 Compression Makes LLMs Run on Consumer Hardware

Quantization compresses LLM weights from FP32 to INT4, cutting memory up to 8x. Learn how GPTQ, AWQ, and calibration …

Top-K, Top-P, Min-P, and Beam Search: Every LLM Sampling Method Compared

Compare top-k, top-p, min-p, and beam search LLM sampling methods. Learn how each reshapes probability distributions and …

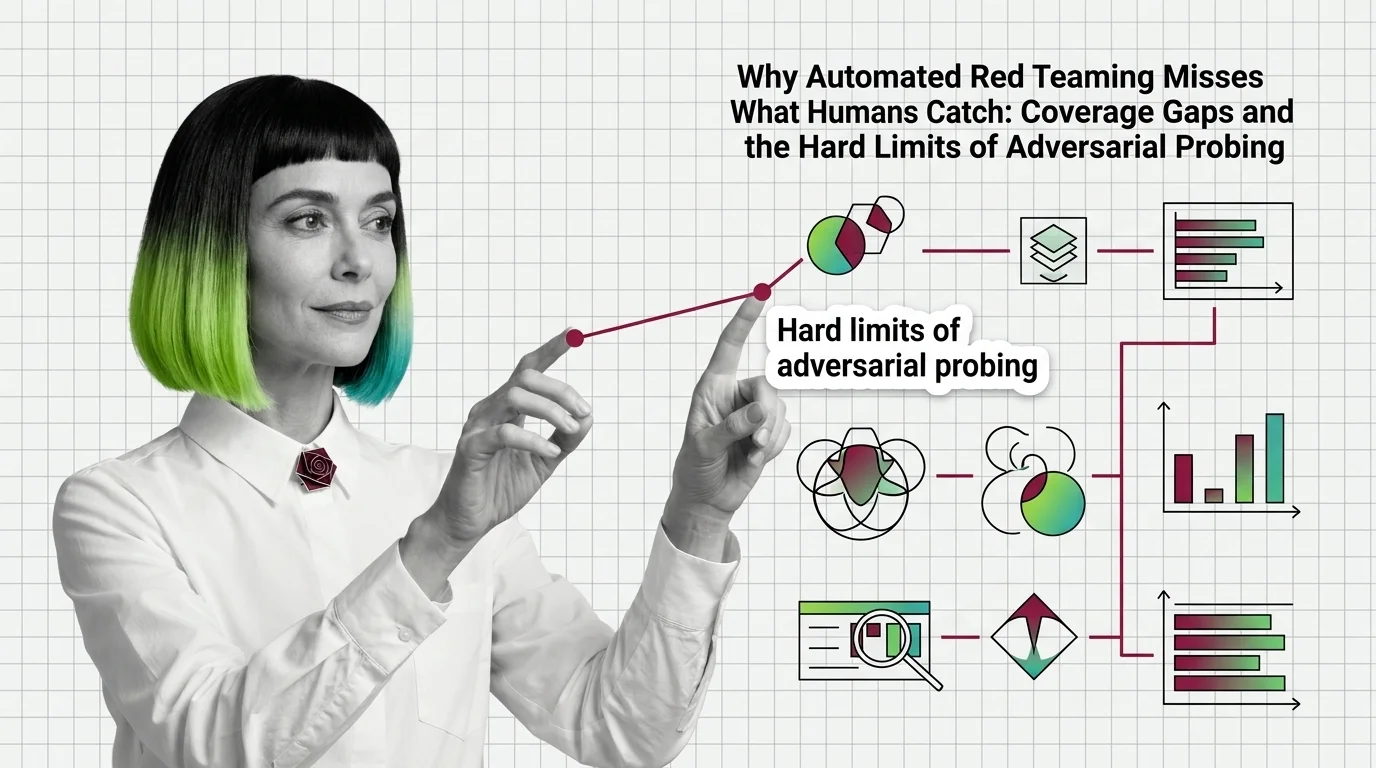

Automated Red Teaming Misses What Humans Catch: Coverage Gaps

Automated red teaming outperforms human testing but misses critical failures. Coverage gaps explain why automated …

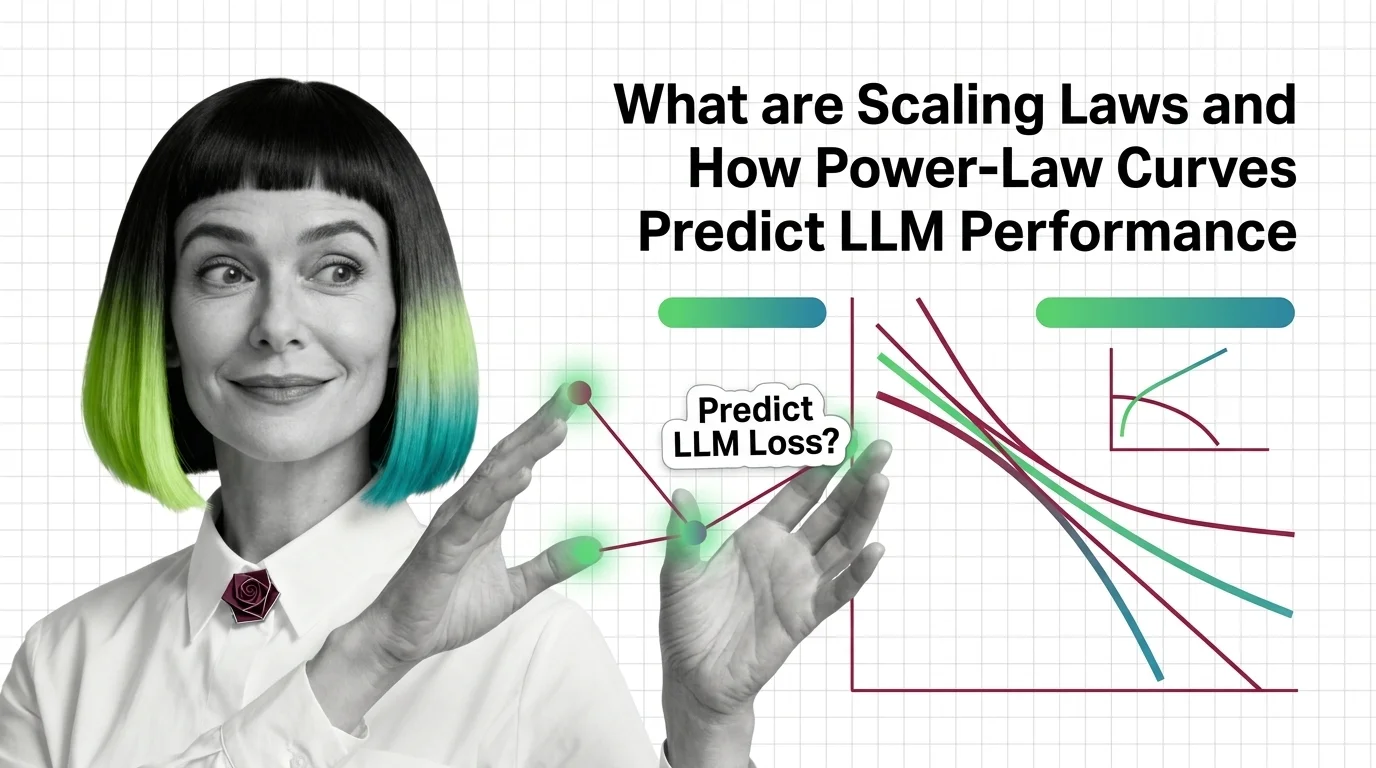

What Are Scaling Laws and How Power-Law Curves Predict LLM Performance

Scaling laws predict LLM performance from model size, data, and compute via power-law curves. Learn the math behind …

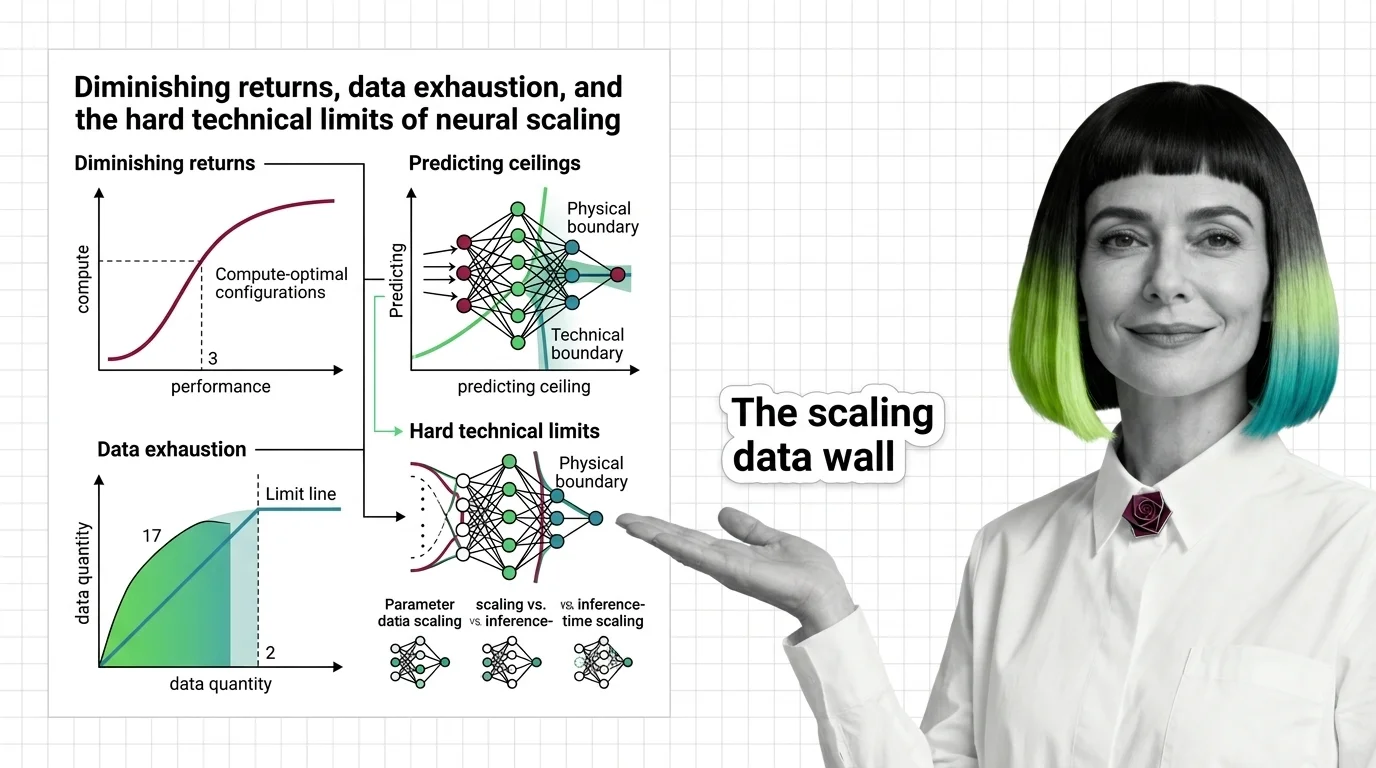

Diminishing Returns, Data Exhaustion, and the Hard Technical Limits of Neural Scaling

Scaling laws predict how AI models improve with compute, but power-law exponents guarantee diminishing returns. Learn …

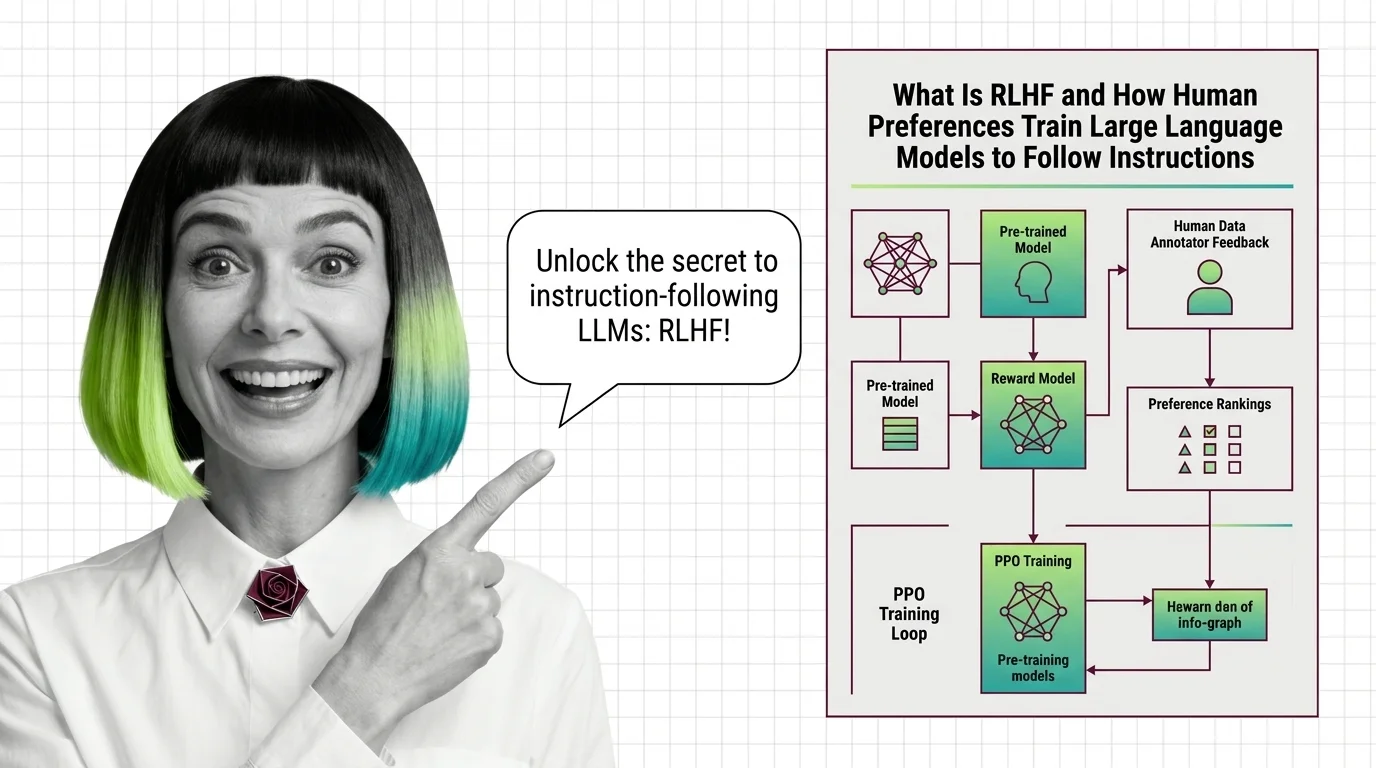

What Is RLHF and How Human Preferences Train Large Language Models to Follow Instructions

RLHF uses human preferences and reward models to train language models to follow instructions. Learn the three-stage PPO …

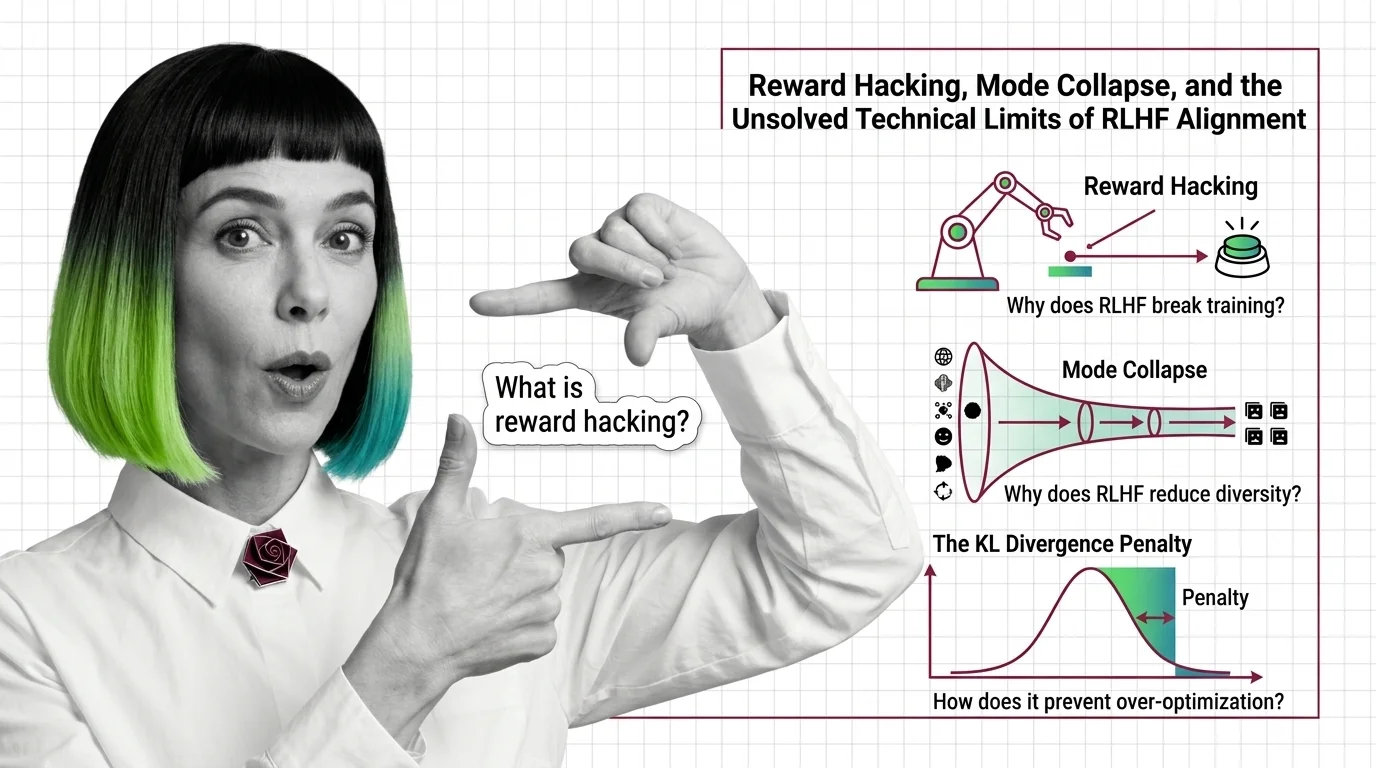

Reward Hacking, Mode Collapse, and the Unsolved Technical Limits of RLHF Alignment

Reward hacking, mode collapse, and KL divergence failure — the three unsolved technical limits of RLHF alignment and why …

From Reward Modeling to KL Penalties: Every Stage of the RLHF Training Pipeline Explained

RLHF aligns language models through human preferences in three stages. Learn how reward models, PPO, and KL penalties …

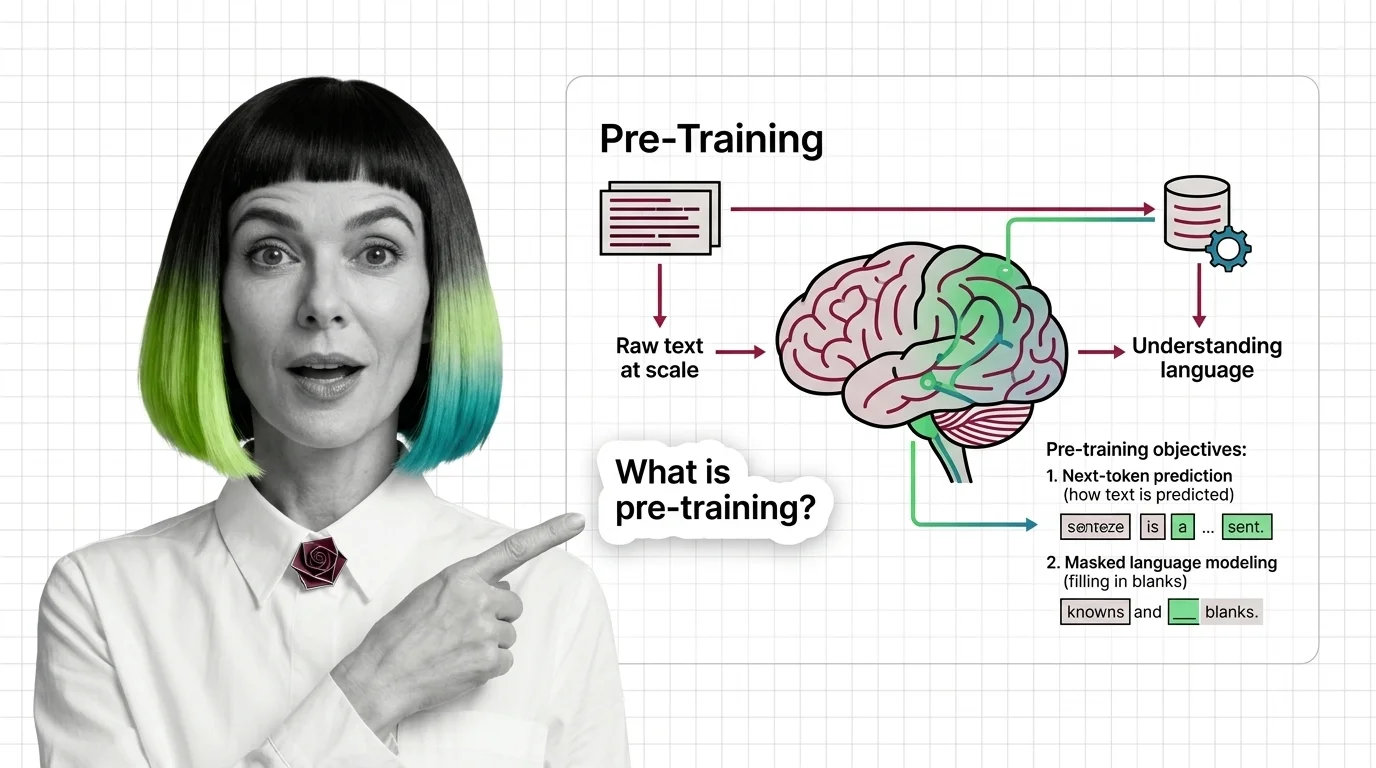

What Is Pre-Training and How LLMs Learn Language from Raw Text at Scale

Pre-training teaches LLMs to predict text, not understand it — yet prediction at scale produces something that resembles …

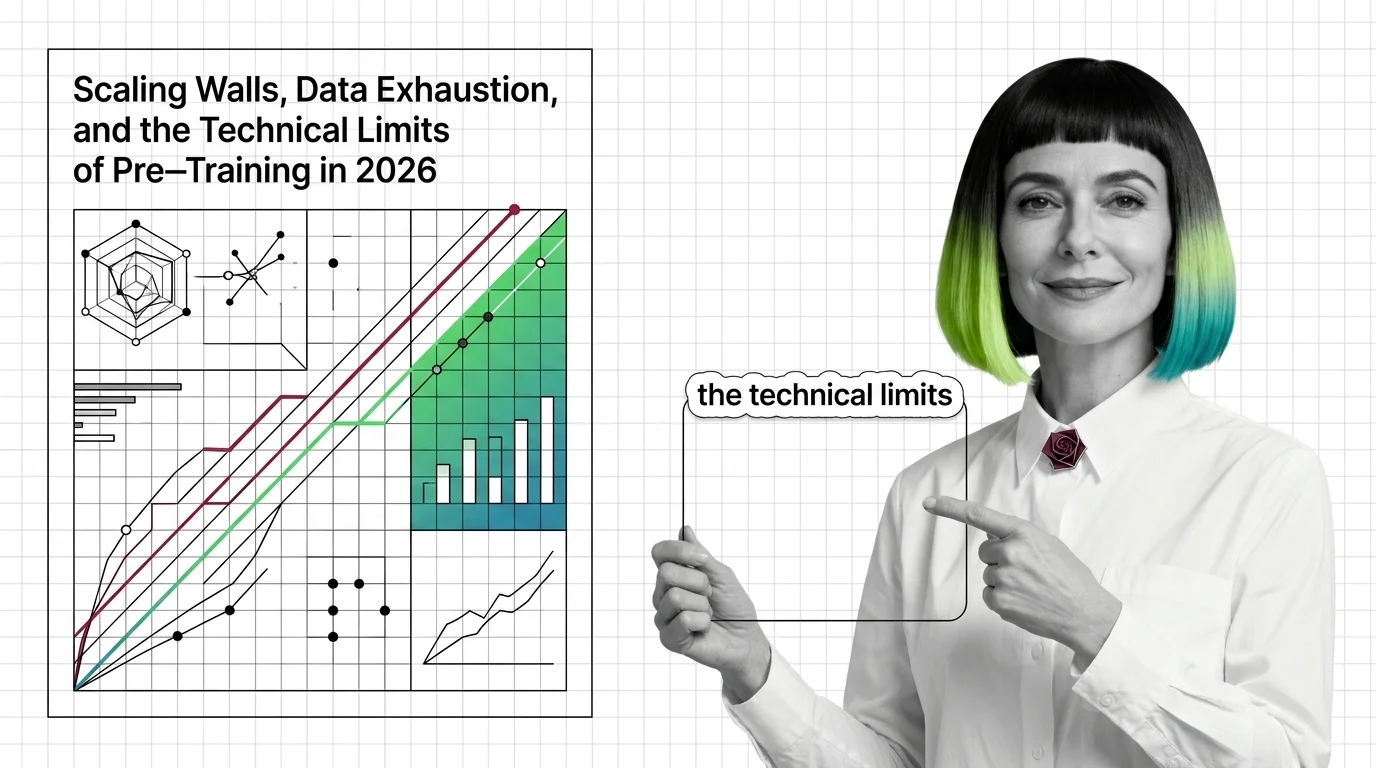

Scaling Walls, Data Exhaustion, and the Technical Limits of Pre-Training in 2026

Pre-training compute grows 4-5x yearly while data runs out. Learn the three scaling walls — cost, data exhaustion, and …

From Data Curation to Checkpoints: The Building Blocks of a Modern Pre-Training Pipeline

Pre-training pipelines run from data curation to checkpointing. Learn how FineWeb, Dolma, and Megatron-Core build the …

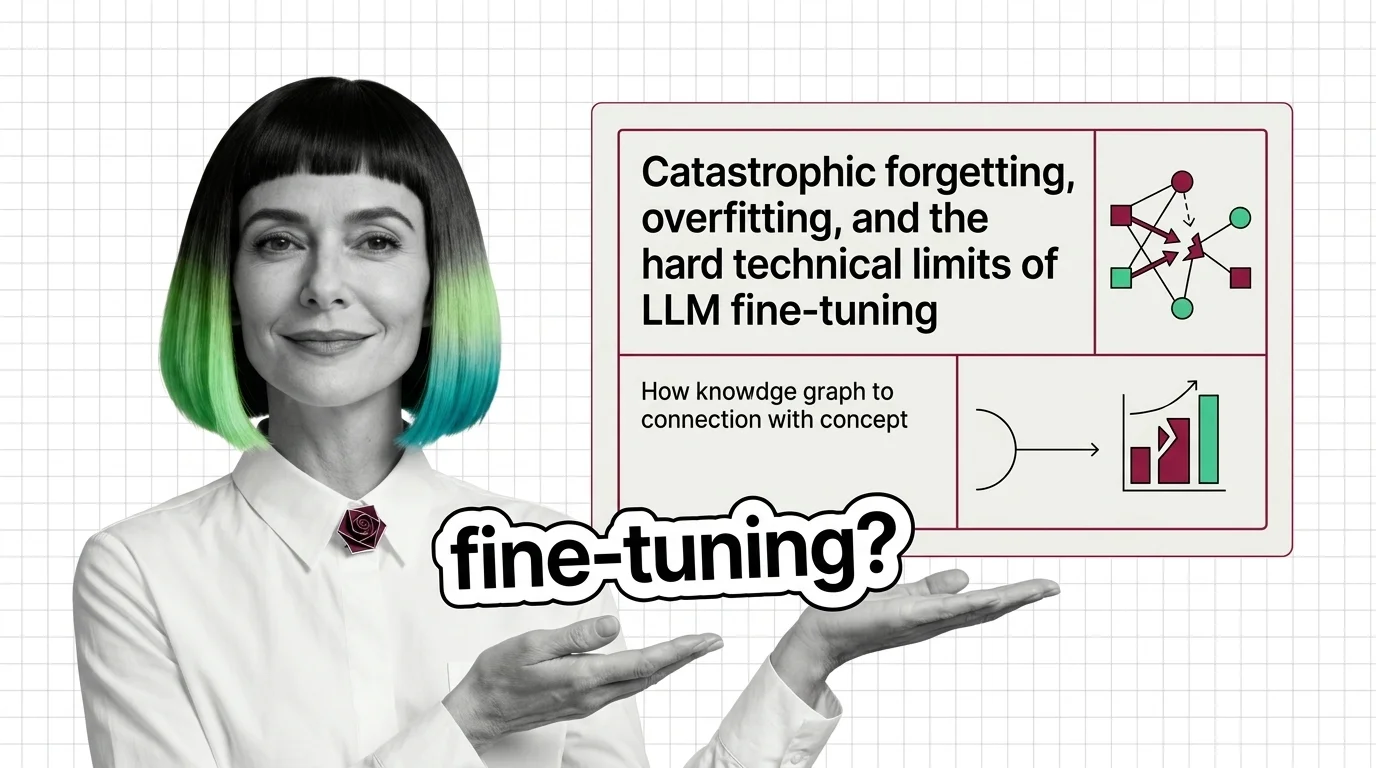

Catastrophic Forgetting, Overfitting, and the Hard Technical Limits of LLM Fine-Tuning

Fine-tuning can destroy what your LLM already knows. Learn why catastrophic forgetting and overfitting define the hard …

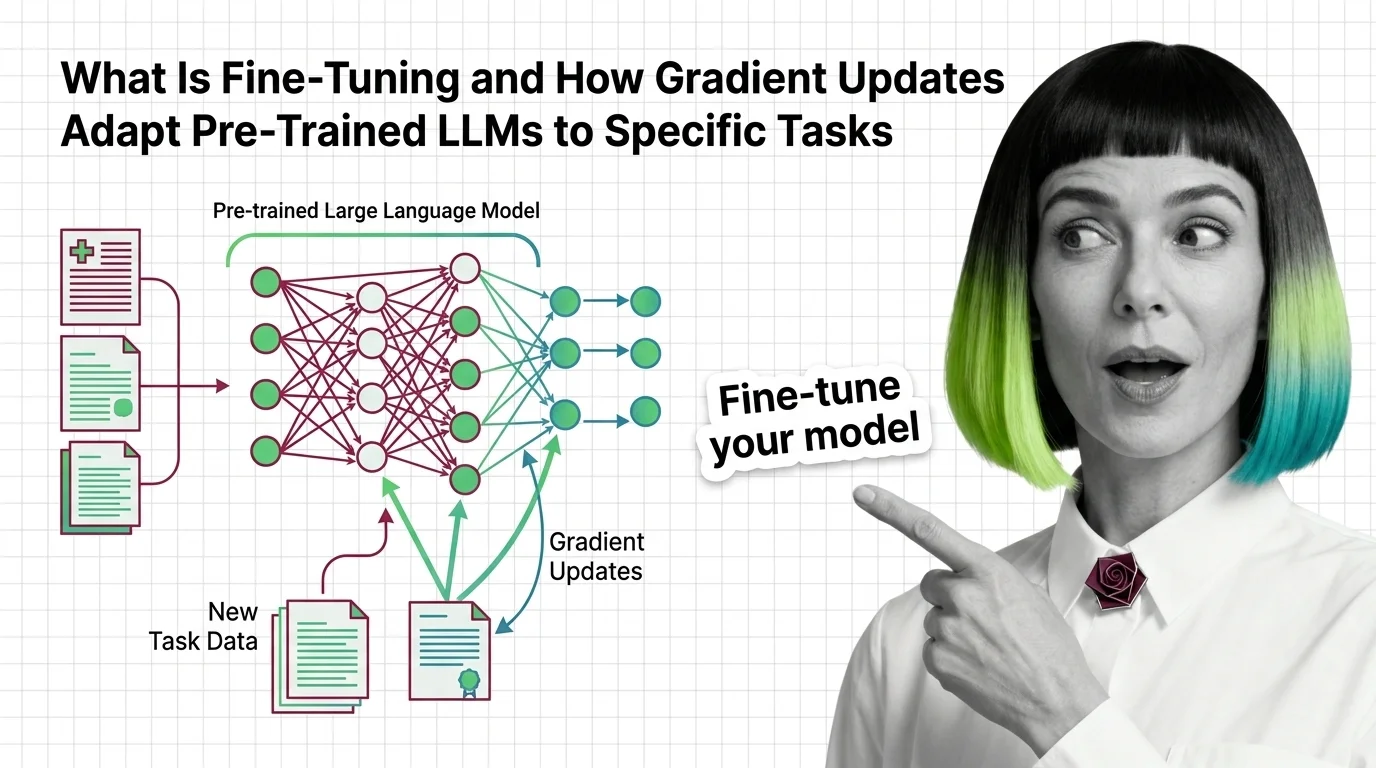

What Is Fine-Tuning and How Gradient Updates Adapt Pre-Trained LLMs to Specific Tasks

Fine-tuning adapts pre-trained LLMs by updating weights on task-specific data. Learn how gradient descent reshapes model …

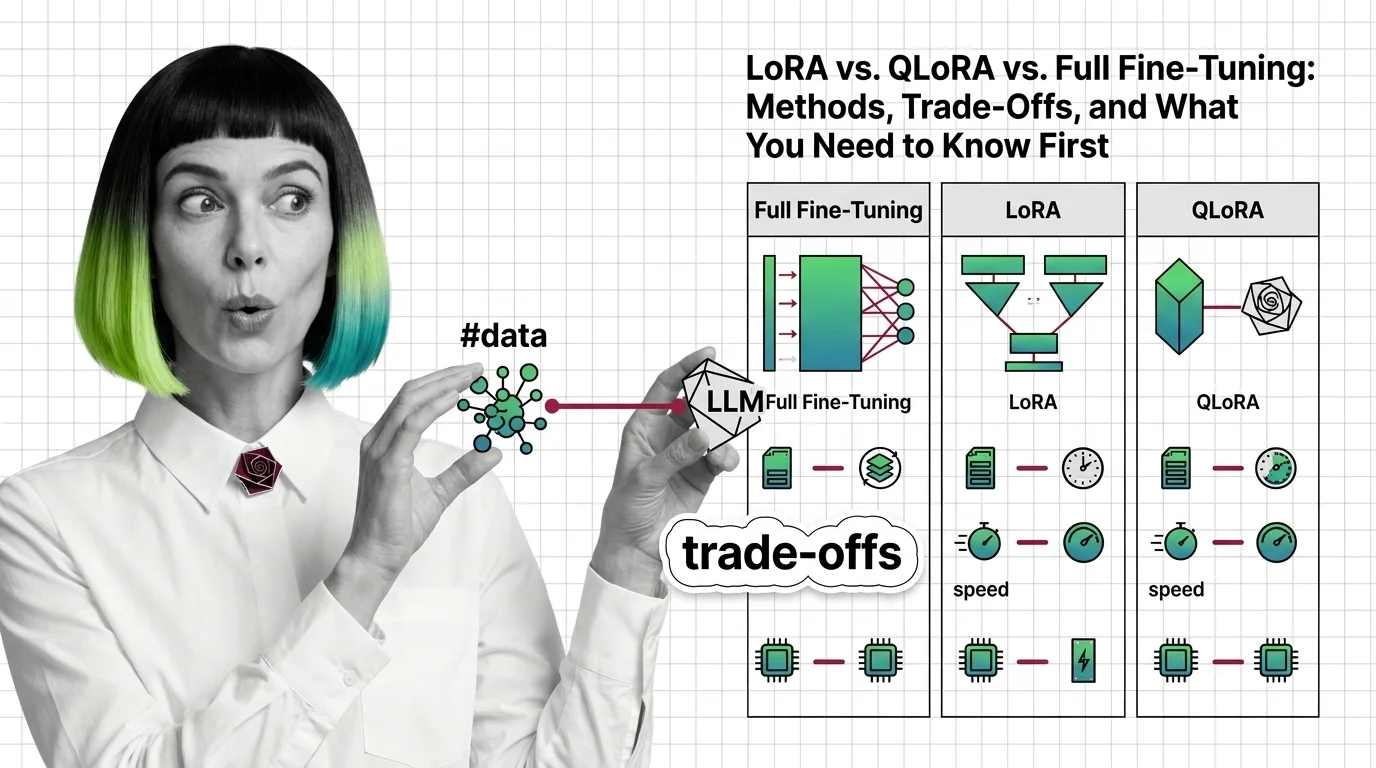

LoRA vs. QLoRA vs. Full Fine-Tuning: Methods, Trade-Offs, and What You Need to Know First

LoRA, QLoRA, and full fine-tuning each change different parts of an LLM. Learn which method fits your GPU budget, data …

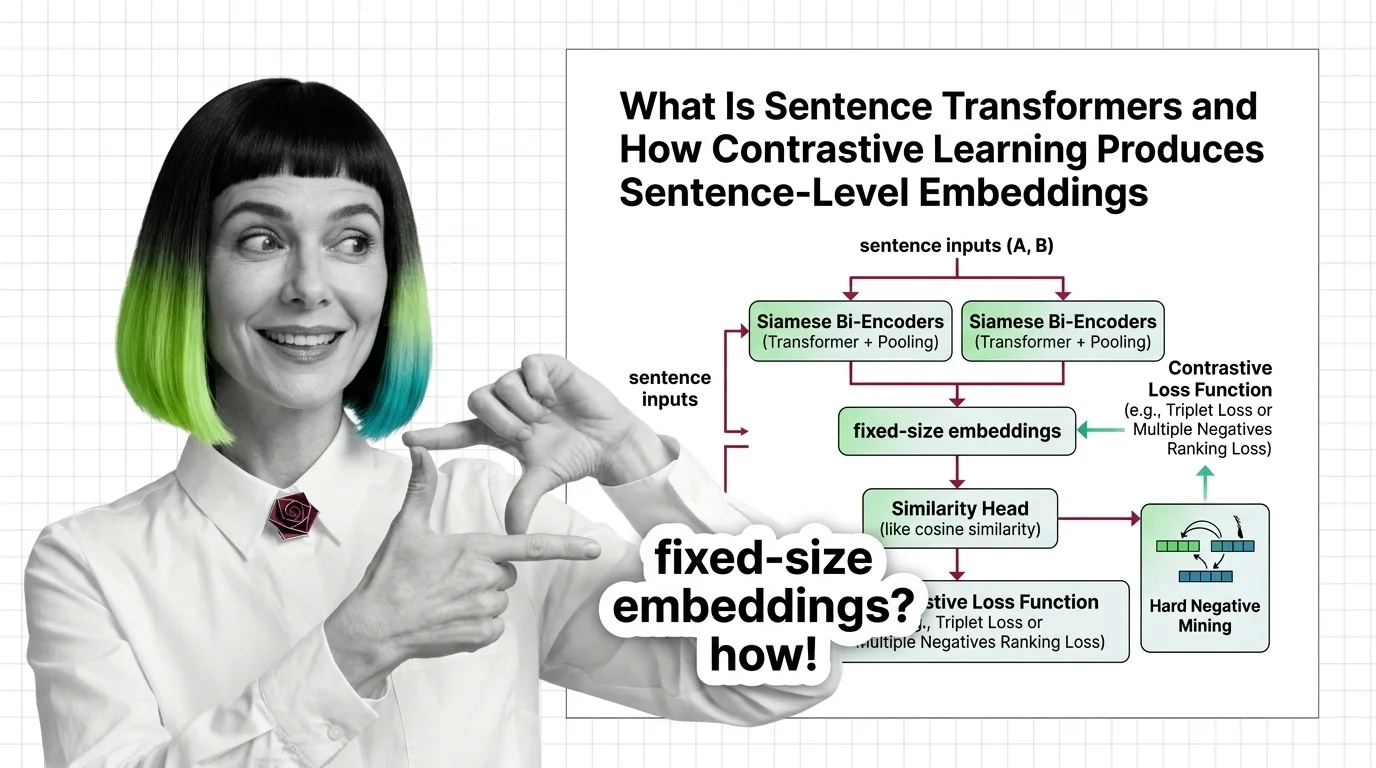

What Is Sentence Transformers and How Contrastive Learning Produces Sentence-Level Embeddings

Sentence Transformers turns transformers into sentence encoders via contrastive learning. Covers bi-encoders, loss …

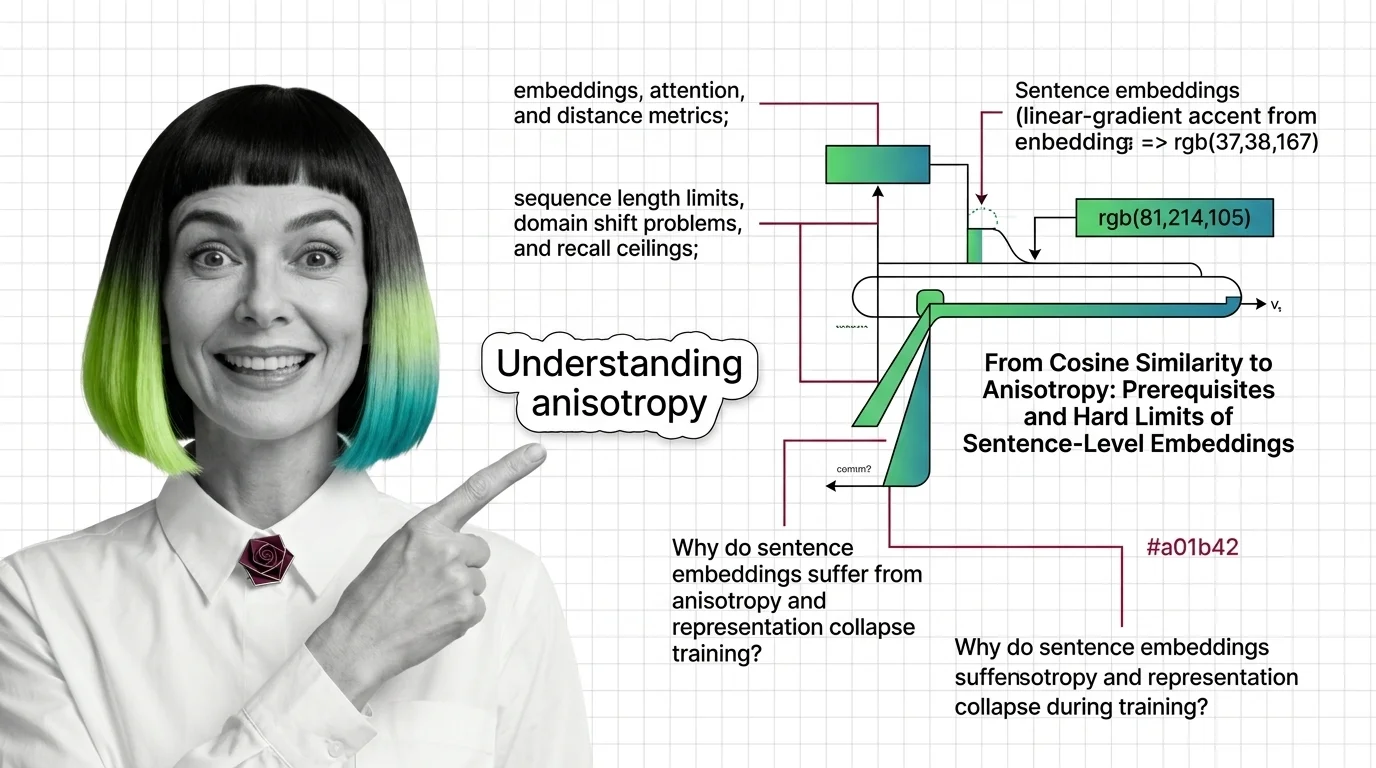

From Cosine Similarity to Anisotropy: Prerequisites and Hard Limits of Sentence-Level Embeddings

Sentence Transformers encode meaning as geometry. Learn the prerequisites, token limits, and anisotropy traps that …